[GitHub] spark issue #18544: [SPARK-21318][SQL]Improve exception message thrown by `l...

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/18544 **[Test build #96424 has started](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96424/testReport)** for PR 18544 at commit [`9f07557`](https://github.com/apache/spark/commit/9f07557a6d5356f056bfc0d5e2e6993f7602b487). --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22461: [SPARK-25453][SQL][TEST] OracleIntegrationSuite IllegalA...

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22461 Merged build finished. Test PASSed.

[GitHub] spark issue #22461: [SPARK-25453][SQL][TEST] OracleIntegrationSuite IllegalA...

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22461 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/SparkPullRequestBuilder/96414/ Test PASSed. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22461: [SPARK-25453][SQL][TEST] OracleIntegrationSuite IllegalA...

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/22461 **[Test build #96414 has finished](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96414/testReport)** for PR 22461 at commit [`100e808`](https://github.com/apache/spark/commit/100e808544bbe9d2c618bf623d94991b46adbae5). * This patch passes all tests. * This patch merges cleanly. * This patch adds no public classes. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #18544: [SPARK-21318][SQL]Improve exception message thrown by `l...

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/18544 **[Test build #96422 has started](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96422/testReport)** for PR 18544 at commit [`6f12ad6`](https://github.com/apache/spark/commit/6f12ad68cdb7ab75a25c581286be35e847a2e0bb). --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22399: [SPARK-25408] Move to mode ideomatic Java8

Github user Fokko commented on a diff in the pull request:

https://github.com/apache/spark/pull/22399#discussion_r219480345

--- Diff:

sql/hive-thriftserver/src/main/java/org/apache/hive/service/cli/CLIService.java

---

@@ -146,16 +146,11 @@ public UserGroupInformation getHttpUGI() {

public synchronized void start() {

super.start();

// Initialize and test a connection to the metastore

-IMetaStoreClient metastoreClient = null;

try {

- metastoreClient = new HiveMetaStoreClient(hiveConf);

- metastoreClient.getDatabases("default");

-} catch (Exception e) {

- throw new ServiceException("Unable to connect to MetaStore!", e);

-}

-finally {

- if (metastoreClient != null) {

-metastoreClient.close();

+ try (IMetaStoreClient metastoreClient = new HiveMetaStoreClient(hiveConf)) {

--- End diff --

Good one, thanks
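For readers following the diff above, here is a minimal sketch of what the try-with-resources form guarantees. `FakeClient` is a hypothetical stand-in used only for illustration; it is not the Hive `IMetaStoreClient` API. The sketch records the order of events to show that the resource is closed whether or not the probe call throws, which is what the removed `finally` block did by hand.

```java
import java.util.ArrayList;
import java.util.List;

// Illustrative only: FakeClient is a hypothetical stand-in, not a Hive class.
final class TryWithResourcesSketch {

    /** Records lifecycle events so the ordering can be inspected. */
    static final class FakeClient implements AutoCloseable {
        private final List<String> events;
        private final boolean failOnQuery;

        FakeClient(List<String> events, boolean failOnQuery) {
            this.events = events;
            this.failOnQuery = failOnQuery;
            events.add("open");
        }

        void getDatabases(String pattern) {
            events.add("query:" + pattern);
            if (failOnQuery) {
                throw new IllegalStateException("metastore unreachable");
            }
        }

        @Override
        public void close() {
            events.add("close");
        }
    }

    /** Same shape as the refactored code: open, probe, always close. */
    static List<String> probe(boolean failOnQuery) {
        List<String> events = new ArrayList<>();
        try (FakeClient client = new FakeClient(events, failOnQuery)) {
            client.getDatabases("default");
        } catch (Exception e) {
            // The resource has already been closed when this block runs.
            events.add("error:" + e.getMessage());
        }
        return events;
    }

    public static void main(String[] args) {
        System.out.println(probe(false));
        System.out.println(probe(true));
    }
}
```

In the failing case the `close` event is recorded before the `error` event, because Java closes the declared resources before control reaches the `catch` block.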


[GitHub] spark issue #22407: [SPARK-25416][SQL] ArrayPosition function may return inc...

Github user ueshin commented on the issue: https://github.com/apache/spark/pull/22407 LGTM, pending Jenkins.

[GitHub] spark issue #22513: [SPARK-25499][TEST]Refactor BenchmarkBase and Benchmark

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22513 Merged build finished. Test PASSed.

[GitHub] spark issue #22513: [SPARK-25499][TEST]Refactor BenchmarkBase and Benchmark

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22513 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/SparkPullRequestBuilder/96407/ Test PASSed. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22513: [SPARK-25499][TEST]Refactor BenchmarkBase and Benchmark

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/22513 **[Test build #96407 has finished](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96407/testReport)** for PR 22513 at commit [`1c3c0f6`](https://github.com/apache/spark/commit/1c3c0f692d38b361f35017df3e999f7838e28e48). * This patch passes all tests. * This patch merges cleanly. * This patch adds no public classes. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22407: [SPARK-25416][SQL] ArrayPosition function may return inc...

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22407 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/testing-k8s-prb-make-spark-distribution-unified/3340/ Test PASSed. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22407: [SPARK-25416][SQL] ArrayPosition function may return inc...

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22407 Merged build finished. Test PASSed.

[GitHub] spark issue #22407: [SPARK-25416][SQL] ArrayPosition function may return inc...

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/22407 **[Test build #96421 has started](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96421/testReport)** for PR 22407 at commit [`bb18108`](https://github.com/apache/spark/commit/bb181084b8d0130bf53fcc1417b10d518eae). --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #21596: [SPARK-24601] Update Jackson to 2.9.6

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/21596 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/SparkPullRequestBuilder/96413/ Test PASSed. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #21596: [SPARK-24601] Update Jackson to 2.9.6

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/21596 Merged build finished. Test PASSed.

[GitHub] spark issue #22407: [SPARK-25416][SQL] ArrayPosition function may return inc...

Github user ueshin commented on the issue: https://github.com/apache/spark/pull/22407 Jenkins, retest this please.

[GitHub] spark issue #22407: [SPARK-25416][SQL] ArrayPosition function may return inc...

Github user ueshin commented on the issue:

https://github.com/apache/spark/pull/22407

So, before #22448 (branch-2.4, if we don't backport #22448), we don't coerce between decimals, and after it (master, though not merged yet), we will, right? I'm okay with the behavior.

I'd retrigger the build because it's been a long time since the last build.

[GitHub] spark issue #21596: [SPARK-24601] Update Jackson to 2.9.6

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/21596 **[Test build #96413 has finished](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96413/testReport)** for PR 21596 at commit [`2bab06f`](https://github.com/apache/spark/commit/2bab06f8e73be0e2724bbd5c836360ab5f107d44). * This patch passes all tests. * This patch merges cleanly. * This patch adds no public classes. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22433: [SPARK-25442][SQL][K8S] Support STS to run in k8s...

Github user suryag10 commented on a diff in the pull request:

https://github.com/apache/spark/pull/22433#discussion_r219476048

--- Diff: docs/running-on-kubernetes.md ---

@@ -340,6 +340,39 @@ RBAC authorization and how to configure Kubernetes service accounts for pods, pl
[Using RBAC Authorization](https://kubernetes.io/docs/admin/authorization/rbac/) and
[Configure Service Accounts for Pods](https://kubernetes.io/docs/tasks/configure-pod-container/configure-service-account/).
+## Running Spark Thrift Server
+
+Thrift JDBC/ODBC Server (aka Spark Thrift Server or STS) is Spark SQL's port of Apache Hive's HiveServer2 that allows
+JDBC/ODBC clients to execute SQL queries over JDBC and ODBC protocols on Apache Spark.
+
+### Spark deploy mode of Client
+
+To start STS in client mode, excute the following command
+
+$ sbin/start-thriftserver.sh \
+--master k8s://https://:
+
+### Spark deploy mode of Cluster
+
+To start STS in cluster mode, excute the following command
+
+$ sbin/start-thriftserver.sh \
+--master k8s://https://: \
+--deploy-mode cluster
+
+The most basic workflow is to use the pod name (driver pod name incase of cluster mode and self pod name incase of client

--- End diff --

pod/container from which the STS command is executed

[GitHub] spark issue #22511: [SPARK-25422][CORE] Don't memory map blocks streamed to ...

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22511 Merged build finished. Test PASSed.

[GitHub] spark issue #22511: [SPARK-25422][CORE] Don't memory map blocks streamed to ...

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22511 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/SparkPullRequestBuilder/96408/ Test PASSed. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22512: [SPARK-25498][SQL][WIP] Fix SQLQueryTestSuite failures w...

Github user maropu commented on the issue:

https://github.com/apache/spark/pull/22512

This is a simple query to reproduce the failure:

```
$ SPARK_TESTING=1 ./bin/spark-shell
scala> sql("SET spark.sql.codegen.factoryMode=NO_CODEGEN")
scala> sql("CREATE TABLE desc_col_table (key int COMMENT 'column_comment') USING PARQUET")
scala> sql("""ANALYZE TABLE desc_col_table COMPUTE STATISTICS FOR COLUMNS key""")
org.apache.spark.SparkException: Job aborted due to stage failure: Task 0 in stage 1.0 failed 1 times, most recent failure: Lost task 0.0 in stage 1.0 (TID 0, localhost, executor driver): java.lang.UnsupportedOperationException
  at org.apache.spark.sql.catalyst.expressions.UnsafeRow.update(UnsafeRow.java:206)
  at org.apache.spark.sql.catalyst.expressions.InterpretedMutableProjection.apply(InterpretedMutableProjection.scala:67)
  at org.apache.spark.sql.catalyst.expressions.InterpretedMutableProjection.apply(InterpretedMutableProjection.scala:31)
  at org.apache.spark.sql.execution.aggregate.TungstenAggregationIterator.createNewAggregationBuffer(TungstenAggregationIterator.scala:129)
  at org.apache.spark.sql.execution.aggregate.TungstenAggregationIterator.<init>(TungstenAggregationIterator.scala:156)
  at org.apache.spark.sql.execution.aggregate.HashAggregateExec$$anonfun$doExecute$1$$anonfun$4.apply(HashAggregateExec.scala:112)
  at org.apache.spark.sql.execution.aggregate.HashAggregateExec$$anonfun$doExecute$1$$anonfun$4.apply(HashAggregateExec.scala:102)
```


[GitHub] spark issue #22511: [SPARK-25422][CORE] Don't memory map blocks streamed to ...

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/22511 **[Test build #96408 has finished](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96408/testReport)** for PR 22511 at commit [`aee82ab`](https://github.com/apache/spark/commit/aee82abe4cd9fbefa14fb280644276fe491bcf9a). * This patch passes all tests. * This patch merges cleanly. * This patch adds no public classes. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #18544: [SPARK-21318][SQL]Improve exception message throw...

Github user stanzhai commented on a diff in the pull request:

https://github.com/apache/spark/pull/18544#discussion_r219468948

--- Diff:

sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/catalog/SessionCatalogSuite.scala

---

@@ -1440,6 +1441,8 @@ abstract class SessionCatalogSuite extends AnalysisTest {

}

assert(cause.getMessage.contains("Undefined function: 'undefined_fn'"))

+// SPARK-21318: the error message should contains the current database name

--- End diff --

org.apache.spark.sql.AnalysisException: Undefined function: 'undefined_fn'.

This function is neither a registered temporary function nor a permanent

function registered in the database 'db1'.;
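As an illustration of the message shape quoted above, the sketch below assembles a comparable string from a function name and the current database name. The class and method names are hypothetical and chosen only for this example; this is not how Spark builds its `AnalysisException`, only a small model of the text a test could check.

```java
// Illustrative only: names here are hypothetical, not Spark's implementation.
final class UndefinedFunctionMessageSketch {

    /** Builds a message that names both the missing function and the database that was searched. */
    static String undefinedFunctionMessage(String functionName, String currentDatabase) {
        return String.format(
            "Undefined function: '%s'. This function is neither a registered temporary "
                + "function nor a permanent function registered in the database '%s'.",
            functionName, currentDatabase);
    }

    public static void main(String[] args) {
        System.out.println(undefinedFunctionMessage("undefined_fn", "db1"));
    }
}
```

A test along the lines of the one in the diff can then assert on two substrings: the function name, and the database name.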


[GitHub] spark issue #22515: [SPARK-19724][SQL] allowCreatingManagedTableUsingNonempt...

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22515 Merged build finished. Test PASSed.

[GitHub] spark issue #22515: [SPARK-19724][SQL] allowCreatingManagedTableUsingNonempt...

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22515 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/SparkPullRequestBuilder/96412/ Test PASSed. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22515: [SPARK-19724][SQL] allowCreatingManagedTableUsingNonempt...

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/22515 **[Test build #96412 has finished](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96412/testReport)** for PR 22515 at commit [`0f32b01`](https://github.com/apache/spark/commit/0f32b0170fe6295bfef604b5a679f9391b5ec78f). * This patch passes all tests. * This patch merges cleanly. * This patch adds no public classes. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22458: [SPARK-25459] Add viewOriginalText back to CatalogTable

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22458 Merged build finished. Test PASSed.

[GitHub] spark issue #22458: [SPARK-25459] Add viewOriginalText back to CatalogTable

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22458 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/SparkPullRequestBuilder/96411/ Test PASSed. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

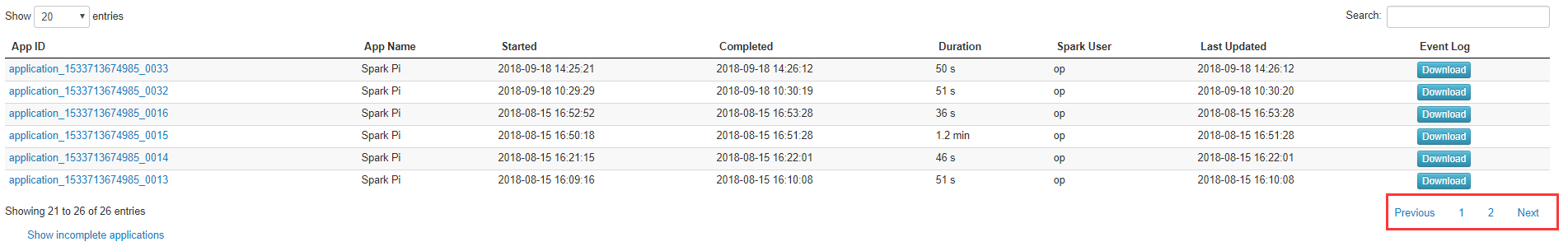

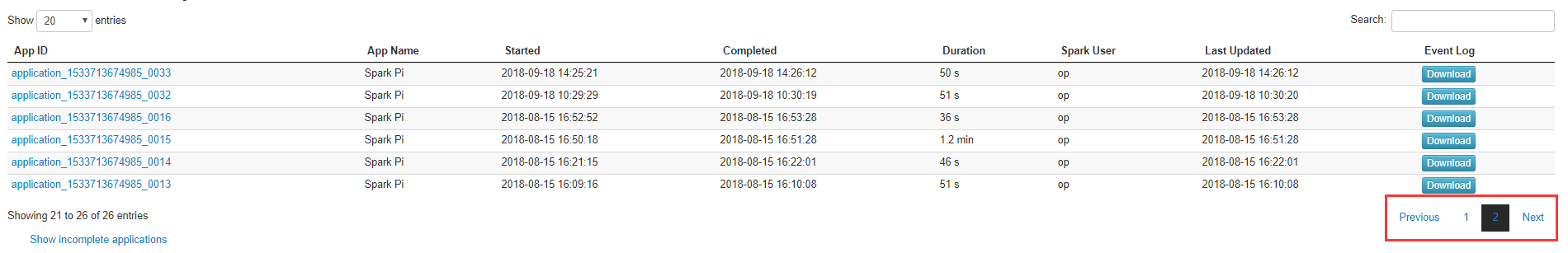

[GitHub] spark issue #22516: [SPARK-25468]Highlight current page index in the history...

Github user Adamyuanyuan commented on the issue:

https://github.com/apache/spark/pull/22516

We have the same problem; just changing the CSS file jquery.dataTables.1.10.4.min.css can solve this minor issue. Although it's a small change, I think it is really important for Spark history server users. Please give me some aesthetic advice :)

[GitHub] spark issue #22458: [SPARK-25459] Add viewOriginalText back to CatalogTable

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/22458 **[Test build #96411 has finished](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96411/testReport)** for PR 22458 at commit [`f3d3100`](https://github.com/apache/spark/commit/f3d3100399be442da9fd5e417aeefb9662903c49). * This patch passes all tests. * This patch merges cleanly. * This patch adds no public classes. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22494: [SPARK-22036][SQL][followup] DECIMAL_OPERATIONS_ALLOW_PR...

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22494 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/SparkPullRequestBuilder/96406/ Test PASSed. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22494: [SPARK-22036][SQL][followup] DECIMAL_OPERATIONS_ALLOW_PR...

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22494 Merged build finished. Test PASSed.

[GitHub] spark issue #22494: [SPARK-22036][SQL][followup] DECIMAL_OPERATIONS_ALLOW_PR...

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/22494 **[Test build #96406 has finished](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96406/testReport)** for PR 22494 at commit [`1ee9f02`](https://github.com/apache/spark/commit/1ee9f0208a3cb6de373e05366c19bf69967eecd8). * This patch passes all tests. * This patch merges cleanly. * This patch adds no public classes. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22514: [SPARK-25271][SQL] Hive ctas commands should use data so...

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22514 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/SparkPullRequestBuilder/96410/ Test PASSed. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22514: [SPARK-25271][SQL] Hive ctas commands should use data so...

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22514 Merged build finished. Test PASSed.

[GitHub] spark issue #22514: [SPARK-25271][SQL] Hive ctas commands should use data so...

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/22514 **[Test build #96410 has finished](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96410/testReport)** for PR 22514 at commit [`5debc60`](https://github.com/apache/spark/commit/5debc6096ae6e505d3386fd7eb569d154f158d55). * This patch passes all tests. * This patch merges cleanly. * This patch adds no public classes. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22516: [SPARK-25468]Highlight current page index in the history...

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22516 Can one of the admins verify this patch?

[GitHub] spark pull request #22516: [SPARK-25468]Highlight current page index in the ...

GitHub user Adamyuanyuan opened a pull request:

https://github.com/apache/spark/pull/22516

[SPARK-25468]Highlight current page index in the history server

## What changes were proposed in this pull request?

This PR highlights the current page index in the history server.

## How was this patch tested?

Manual tests for Chrome, Firefox and Safari

Before modifying:

After modifying:

You can merge this pull request into a Git repository by running:

$ git pull https://github.com/Adamyuanyuan/spark spark-adam-25468

Alternatively you can review and apply these changes as the patch at:

https://github.com/apache/spark/pull/22516.patch

To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message:

This closes #22516

commit 0a28887167e8834bb4ccf4e3988e4179b1de52b4

Author: çå°å

Date: 2018-09-21T10:41:40Z

Highlight current page index in the history server

[GitHub] spark issue #22316: [SPARK-25048][SQL] Pivoting by multiple columns in Scala...

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/22316 **[Test build #96420 has started](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96420/testReport)** for PR 22316 at commit [`382640b`](https://github.com/apache/spark/commit/382640be9bb9739929daea0bceb3093836d7f78d). --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22316: [SPARK-25048][SQL] Pivoting by multiple columns in Scala...

Github user MaxGekk commented on the issue: https://github.com/apache/spark/pull/22316 jenkins, retest this, please

[GitHub] spark issue #22495: [SPARK-25486][TEST] Refactor SortBenchmark to use main m...

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/22495 **[Test build #96419 has started](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96419/testReport)** for PR 22495 at commit [`bbdc202`](https://github.com/apache/spark/commit/bbdc2029c992803e75d04be9e5b2507d6df1779f). --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22375: [SPARK-25388][Test][SQL] Detect incorrect nullabl...

Github user mgaido91 commented on a diff in the pull request:

https://github.com/apache/spark/pull/22375#discussion_r219454650

--- Diff:

sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/expressions/ExpressionEvalHelperSuite.scala

---

@@ -35,6 +36,13 @@ class ExpressionEvalHelperSuite extends SparkFunSuite with ExpressionEvalHelper

val e = intercept[RuntimeException] {

checkEvaluation(BadCodegenExpression(), 10) }

assert(e.getMessage.contains("some_variable"))

}

+

+ test("SPARK-25388: checkEvaluation should fail if nullable in DataType is incorrect") {

+val e = intercept[RuntimeException] {

+ checkEvaluation(MapIncorrectDataTypeExpression(), Map(3 -> 7, 6 -> null))

--- End diff --

The motivations are the 2 mentioned above. Basically, I am proposing the

same suggestion @cloud-fan has just commented

[here](https://github.com/apache/spark/pull/22375#discussion_r219452615)


[GitHub] spark issue #22316: [SPARK-25048][SQL] Pivoting by multiple columns in Scala...

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22316 Merged build finished. Test FAILed.

[GitHub] spark issue #22316: [SPARK-25048][SQL] Pivoting by multiple columns in Scala...

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22316 Test FAILed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/SparkPullRequestBuilder/96409/ Test FAILed. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22494: [SPARK-22036][SQL][followup] DECIMAL_OPERATIONS_ALLOW_PR...

Github user mgaido91 commented on the issue:

https://github.com/apache/spark/pull/22494

> I'm talking about the specific query reported at https://issues.apache.org/jira/browse/SPARK-22036?focusedCommentId=16618104=com.atlassian.jira.plugin.system.issuetabpanels%3Acomment-tabpanel#comment-16618104 , which needs to turn off DECIMAL_OPERATIONS_ALLOW_PREC_LOSS.

Yes I am talking about that too, and there is no need to turn off `DECIMAL_OPERATIONS_ALLOW_PREC_LOSS` for that query. If you define another config and you switch only the other config, you'll see that the result is correct.

[GitHub] spark issue #22316: [SPARK-25048][SQL] Pivoting by multiple columns in Scala...

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/22316 **[Test build #96409 has finished](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96409/testReport)** for PR 22316 at commit [`382640b`](https://github.com/apache/spark/commit/382640be9bb9739929daea0bceb3093836d7f78d). * This patch **fails Spark unit tests**. * This patch merges cleanly. * This patch adds no public classes. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22375: [SPARK-25388][Test][SQL] Detect incorrect nullabl...

Github user cloud-fan commented on a diff in the pull request:

https://github.com/apache/spark/pull/22375#discussion_r219452615

--- Diff:

sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/expressions/ExpressionEvalHelper.scala

---

@@ -223,8 +223,16 @@ trait ExpressionEvalHelper extends GeneratorDrivenPropertyChecks with PlanTestBa

}

} else {

val lit = InternalRow(expected, expected)

--- End diff --

I think a more straightforward approach is, validate the `expected`

according to the nullability of the given expression.
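One way to read the suggestion above is sketched below: before comparing results, check that the expected value itself is allowed by the declared nullability. `SimpleMapType` and `isConsistent` are hypothetical names introduced for this illustration, and the type model is far simpler than Spark's `DataType` hierarchy; it only tracks whether map values may be null.

```java
import java.util.HashMap;
import java.util.Map;

// Illustrative only: a simplified model, not Spark's DataType or test helpers.
final class NullabilityCheckSketch {

    /** Hypothetical map type that records only whether values may be null. */
    static final class SimpleMapType {
        final boolean valueContainsNull;

        SimpleMapType(boolean valueContainsNull) {
            this.valueContainsNull = valueContainsNull;
        }
    }

    /** Returns true when the expected map is permitted by the declared nullability. */
    static boolean isConsistent(Map<?, ?> expected, SimpleMapType declaredType) {
        if (declaredType.valueContainsNull) {
            return true; // null values are allowed, so any map passes
        }
        return !expected.containsValue(null);
    }

    public static void main(String[] args) {
        Map<Integer, Integer> expected = new HashMap<>();
        expected.put(3, 7);
        expected.put(6, null);
        // The declared type forbids null values, so this expected value is rejected.
        System.out.println(isConsistent(expected, new SimpleMapType(false)));
    }
}
```

With the map from the test in this thread, `Map(3 -> 7, 6 -> null)`, the check fails for a type that declares non-null values and passes once the type admits nulls.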


[GitHub] spark pull request #22375: [SPARK-25388][Test][SQL] Detect incorrect nullabl...

Github user kiszk commented on a diff in the pull request:

https://github.com/apache/spark/pull/22375#discussion_r219448432

--- Diff:

sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/expressions/ExpressionEvalHelperSuite.scala

---

@@ -35,6 +36,13 @@ class ExpressionEvalHelperSuite extends SparkFunSuite with ExpressionEvalHelper

val e = intercept[RuntimeException] {

checkEvaluation(BadCodegenExpression(), 10) }

assert(e.getMessage.contains("some_variable"))

}

+

+ test("SPARK-25388: checkEvaluation should fail if nullable in DataType is incorrect") {

+val e = intercept[RuntimeException] {

+ checkEvaluation(MapIncorrectDataTypeExpression(), Map(3 -> 7, 6 -> null))

--- End diff --

I may not still understand your motivation correctly. What is the

motivation to introduce this assertion?


[GitHub] spark issue #20999: [SPARK-14922][SPARK-17732][SPARK-23866][SQL] Support par...

Github user mgaido91 commented on the issue:

https://github.com/apache/spark/pull/20999

Any more comments on this, @maropu, apart from https://github.com/apache/spark/pull/20999#discussion_r216525719 where we are waiting for others' feedback?

@cloud-fan @dongjoon-hyun @gatorsmile @viirya could you please take a look at this if you have time? Thanks.

[GitHub] spark issue #22494: [SPARK-22036][SQL][followup] DECIMAL_OPERATIONS_ALLOW_PR...

Github user cloud-fan commented on the issue:

https://github.com/apache/spark/pull/22494

Sorry, my mistake. I'm talking about the specific query reported at https://issues.apache.org/jira/browse/SPARK-22036?focusedCommentId=16618104=com.atlassian.jira.plugin.system.issuetabpanels%3Acomment-tabpanel#comment-16618104 , which needs to turn off `DECIMAL_OPERATIONS_ALLOW_PREC_LOSS`. SPARK-25454 is a long-standing bug and currently we can't help users work around it.

My point is: to work around [this regression](https://issues.apache.org/jira/browse/SPARK-22036?focusedCommentId=16618104=com.atlassian.jira.plugin.system.issuetabpanels%3Acomment-tabpanel#comment-16618104), users must turn off both `DECIMAL_OPERATIONS_ALLOW_PREC_LOSS` and the new config, which makes me think we should not create a new config.

> After this patch, this query would return null instead, as an overflow would happen. So this patch is "correcting" a regression from 2.2 but it is introducing another one from 2.3.0-2.3.1.

I don't agree with it. Users can turn on `DECIMAL_OPERATIONS_ALLOW_PREC_LOSS` to make the query work. We should not fix the values of some configs and then call the result a regression; that's not a regression. The reason why [this](https://issues.apache.org/jira/browse/SPARK-22036?focusedCommentId=16618104=com.atlassian.jira.plugin.system.issuetabpanels%3Acomment-tabpanel#comment-16618104) is a regression is that users have no way to get the same result as 2.3 in 2.4.

[GitHub] spark pull request #22466: [SPARK-25464][SQL]On dropping the Database it wil...

Github user sujith71955 commented on a diff in the pull request:

https://github.com/apache/spark/pull/22466#discussion_r219443041

--- Diff:

sql/hive/src/main/scala/org/apache/spark/sql/hive/client/HiveClientImpl.scala

---

@@ -321,8 +321,19 @@ private[hive] class HiveClientImpl(

override def dropDatabase(

name: String,

ignoreIfNotExists: Boolean,

- cascade: Boolean): Unit = withHiveState {

-client.dropDatabase(name, true, ignoreIfNotExists, cascade)

+ cascade: Boolean): Unit = {

+var isExternalDB = false

+try {

+ val db: CatalogDatabase = getDatabase(name)

+ isExternalDB = db.properties.getOrElse("isExternal", "false").matches("true")

+}

+catch {

--- End diff --

please pull catch to 1 level up inline with the close brace of try
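
The style nit above asks for `catch` (and, in the later comment, `finally`) to sit on the same line as the closing brace of `try`. A minimal illustration of that layout follows; `TryCatchStyle` and `parseOrDefault` are hypothetical names used only for this sketch and are not part of the PR.

```scala
object TryCatchStyle {
  // Hypothetical helper: parses an Int, falling back to a default on bad input.
  def parseOrDefault(s: String, default: Int): Int = {
    try {
      s.trim.toInt
    } catch { // `catch` shares the line with the closing brace of `try`
      case _: NumberFormatException => default
    } finally { // `finally` follows the same convention
      () // nothing to clean up in this sketch
    }
  }
}
```
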


[GitHub] spark pull request #22466: [SPARK-25464][SQL]On dropping the Database it wil...

Github user sujith71955 commented on a diff in the pull request:

https://github.com/apache/spark/pull/22466#discussion_r219442479

--- Diff:

sql/hive/src/test/scala/org/apache/spark/sql/hive/execution/HiveDDLSuite.scala

---

@@ -2348,4 +2348,41 @@ class HiveDDLSuite

}

}

}

+

+ test("SPARK-25464 Drop Database check location after dropping external Database") {

+val catalog = spark.sessionState.catalog

+val dbName = "dbwithcustomlocation"

+withTempDir { tmpDir =>

+ val path = new Path(tmpDir.getCanonicalPath)

+ val fs = path.getFileSystem(new Configuration)

+ try {

+sql(s"CREATE DATABASE $dbName Location '${ path.toUri }'")

+val db1 = catalog.getDatabaseMetadata(dbName)

+assert(db1.properties.getOrElse("isExternal","empty").equals("true"))

+val client =

+ spark.sharedState.externalCatalog.unwrapped.asInstanceOf[HiveExternalCatalog].client

+client.dropDatabase(dbName,false,true)

+assert(fs.exists(path))

+assert(!catalog.databaseExists(dbName))

+ }

+ finally {

--- End diff --

please pull finally to 1 level up inline with the close brace of try


[GitHub] spark issue #22490: [SPARK-25481][TEST] Refactor ColumnarBatchBenchmark to u...

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/22490 **[Test build #96418 has started](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96418/testReport)** for PR 22490 at commit [`cbfac03`](https://github.com/apache/spark/commit/cbfac03f4e34c32b797f9e26ec9146136a0c14be). --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22466: [SPARK-25464][SQL]On dropping the Database it wil...

Github user sujith71955 commented on a diff in the pull request:

https://github.com/apache/spark/pull/22466#discussion_r219440831

--- Diff:

sql/core/src/main/scala/org/apache/spark/sql/execution/command/ddl.scala ---

@@ -67,12 +67,15 @@ case class CreateDatabaseCommand(

override def run(sparkSession: SparkSession): Seq[Row] = {

val catalog = sparkSession.sessionState.catalog

+// if the path is specified by the user then need to consider DB as external DB

--- End diff --

nit: If the location is specified by the user then need to consider DB as

external DB,


[GitHub] spark pull request #22466: [SPARK-25464][SQL]On dropping the Database it wil...

Github user sujith71955 commented on a diff in the pull request:

https://github.com/apache/spark/pull/22466#discussion_r219440456

--- Diff:

sql/core/src/main/scala/org/apache/spark/sql/execution/command/ddl.scala ---

@@ -67,12 +67,15 @@ case class CreateDatabaseCommand(

override def run(sparkSession: SparkSession): Seq[Row] = {

val catalog = sparkSession.sessionState.catalog

+// if the path is specified by the user then need to consider DB as external DB

+// User will take care of deleting the path after DB is dropped

--- End diff --

nit: user shall take care of deleting the data from external location.


[GitHub] spark pull request #20276: [SPARK-14948][SQL] disambiguate attributes in joi...

Github user cloud-fan closed the pull request at: https://github.com/apache/spark/pull/20276 --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #20276: [SPARK-14948][SQL] disambiguate attributes in join condi...

Github user cloud-fan commented on the issue: https://github.com/apache/spark/pull/20276 I'm closing it since `AnalysisBarrier` is no longer there. We should revisit the whole self-join problem and fix it in 3.0, with breaking changes if necessary. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22471: [SPARK-25469][SQL] Eval methods of Concat, Revers...

Github user asfgit closed the pull request at: https://github.com/apache/spark/pull/22471 --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22494: [SPARK-22036][SQL][followup] DECIMAL_OPERATIONS_ALLOW_PR...

Github user mgaido91 commented on the issue:

https://github.com/apache/spark/pull/22494

> The problem is, when users hit SPARK-25454, they must turn off both the DECIMAL_OPERATIONS_ALLOW_PREC_LOSS and the new config.

If a user hits SPARK-25454, the value of `DECIMAL_OPERATIONS_ALLOW_PREC_LOSS` is not relevant.

> The only reason to turn it off is: we hit SPARK-25454

No, if we hit SPARK-25454, turning it off does not help. The only reason to turn it off is to get `null` instead of facing a precision loss when we need a precision higher than 38 and a scale higher than 6.
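
To make the "precision higher than 38 and a scale higher than 6" remark concrete, here is a rough sketch of how a result type could be squeezed back into 38 digits when precision loss is allowed. It assumes a maximum precision of 38 and a minimum retained scale of 6, as mentioned in the comment; `DecimalAdjustSketch` and `adjust` are hypothetical names, and this is a simplified model rather than Spark's actual implementation. The path taken when precision loss is disallowed is not modeled.

```scala
object DecimalAdjustSketch {
  // Assumed limits, taken from the numbers quoted in the discussion.
  val MaxPrecision = 38
  val MinimumAdjustedScale = 6

  /** Returns (precision, scale) after capping the precision at MaxPrecision. */
  def adjust(precision: Int, scale: Int): (Int, Int) = {
    require(scale >= 0 && precision >= scale, "expects 0 <= scale <= precision")
    if (precision <= MaxPrecision) {
      // The exact result type fits, so nothing is lost.
      (precision, scale)
    } else {
      // Keep the integer digits if possible and give up fractional digits,
      // but never drop below the minimum retained scale.
      val intDigits = precision - scale
      val minScale = math.min(scale, MinimumAdjustedScale)
      val adjustedScale = math.max(MaxPrecision - intDigits, minScale)
      (MaxPrecision, adjustedScale)
    }
  }
}
```

For example, under these assumptions an exact type of precision 40 and scale 10 would be reduced to precision 38 and scale 8, which is the kind of silent precision loss the config is meant to control.
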

[GitHub] spark issue #22471: [SPARK-25469][SQL] Eval methods of Concat, Reverse and E...

Github user maropu commented on the issue: https://github.com/apache/spark/pull/22471 Thanks! merging to master/2.4. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22466: [SPARK-25464][SQL]On dropping the Database it will drop ...

Github user sujith71955 commented on the issue: https://github.com/apache/spark/pull/22466 @srowen @HyukjinKwon I think this can be a risk if the location of the newly created database points to an existing one: if the user drops the new database, the data of both databases will be lost.

[GitHub] spark issue #22513: [SPARK-25499][TEST]Refactor BenchmarkBase and Benchmark

Github user cloud-fan commented on the issue: https://github.com/apache/spark/pull/22513 LGTM --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22375: [SPARK-25388][Test][SQL] Detect incorrect nullabl...

Github user mgaido91 commented on a diff in the pull request:

https://github.com/apache/spark/pull/22375#discussion_r219435279

--- Diff:

sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/expressions/ExpressionEvalHelperSuite.scala

---

@@ -35,6 +36,13 @@ class ExpressionEvalHelperSuite extends SparkFunSuite

with ExpressionEvalHelper

val e = intercept[RuntimeException] {

checkEvaluation(BadCodegenExpression(), 10) }

assert(e.getMessage.contains("some_variable"))

}

+

+ test("SPARK-25388: checkEvaluation should fail if nullable in DataType is incorrect") {

+val e = intercept[RuntimeException] {

+ checkEvaluation(MapIncorrectDataTypeExpression(), Map(3 -> 7, 6 -> null))

--- End diff --

Yes, I said that the suggestion above is wrong and needs to be rewritten in

a recursive way. Sorry for the bad suggestion, I just meant to show my idea. So

it should be something like:

```

assert(!containsNullWhereNotNullable(expected, expression.dataType))

```
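
A recursive check of this kind might look roughly like the sketch below. The `DType` hierarchy is a hypothetical, simplified stand-in for Spark's `DataType` classes so that the example is self-contained; only the name `containsNullWhereNotNullable` comes from the comment above, and its real signature in the PR may differ.

```scala
object NullabilityCheck {
  // Hypothetical, simplified stand-ins for Spark's DataType hierarchy.
  sealed trait DType
  case object IntT extends DType
  final case class ArrayT(element: DType, containsNull: Boolean) extends DType
  final case class MapT(key: DType, value: DType, valueContainsNull: Boolean) extends DType

  /** True if `value` holds a null in a position the type declares as non-nullable. */
  def containsNullWhereNotNullable(value: Any, dt: DType): Boolean = (value, dt) match {
    case (null, _) =>
      false // nullability of this position is decided by the enclosing container
    case (seq: Seq[_], ArrayT(elem, containsNull)) =>
      seq.exists(v => (v == null && !containsNull) || containsNullWhereNotNullable(v, elem))
    case (m: Map[_, _], MapT(kt, vt, valueContainsNull)) =>
      m.exists { case (k, v) =>
        containsNullWhereNotNullable(k, kt) ||
          (v == null && !valueContainsNull) ||
          containsNullWhereNotNullable(v, vt)
      }
    case _ =>
      false // leaf values have no nested positions to inspect
  }
}
```

With the map from the test under review, `Map(3 -> 7, 6 -> null)`, the check would flag a type whose `valueContainsNull` is `false` and accept one where it is `true`.
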


[GitHub] spark issue #22494: [SPARK-22036][SQL][followup] DECIMAL_OPERATIONS_ALLOW_PR...

Github user cloud-fan commented on the issue: https://github.com/apache/spark/pull/22494 I tried to add a new config, but decided to not do it. The problem is, when users hit SPARK-25454, they must turn off both the `DECIMAL_OPERATIONS_ALLOW_PREC_LOSS` and the new config. `DECIMAL_OPERATIONS_ALLOW_PREC_LOSS` is an internal config and is true by default. The only reason to turn it off is: we hit SPARK-25454. That said, I won't treat it as a regression, as a user will not turn it off to run `select 1234567891 / (1.1 * 2 * 2 * 2 * 2)`. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22450: [SPARK-25454][SQL] Avoid precision loss in division with...

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/22450 **[Test build #96417 has started](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96417/testReport)** for PR 22450 at commit [`4e240d9`](https://github.com/apache/spark/commit/4e240d9abea9ea67312f31e3af129416b8c3381a). --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22466: [SPARK-25464][SQL]When database is dropped all the data ...

Github user sujith71955 commented on the issue: https://github.com/apache/spark/pull/22466 @sandeep-katta can you please update the title? As per your description, it seems the data will be dropped if the location of the newly created database points to some existing database location.

[GitHub] spark issue #22450: [SPARK-25454][SQL] Avoid precision loss in division with...

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22450 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/testing-k8s-prb-make-spark-distribution-unified/3339/ Test PASSed. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22450: [SPARK-25454][SQL] Avoid precision loss in division with...

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22450 Merged build finished. Test PASSed. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22502: [SPARK-25474][SQL]When the "fallBackToHdfsForStatsEnable...

Github user shahidki31 commented on the issue: https://github.com/apache/spark/pull/22502 cc @cloud-fan --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22375: [SPARK-25388][Test][SQL] Detect incorrect nullabl...

Github user kiszk commented on a diff in the pull request:

https://github.com/apache/spark/pull/22375#discussion_r219432959

--- Diff:

sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/expressions/ExpressionEvalHelperSuite.scala

---

@@ -35,6 +36,13 @@ class ExpressionEvalHelperSuite extends SparkFunSuite

with ExpressionEvalHelper

val e = intercept[RuntimeException] {

checkEvaluation(BadCodegenExpression(), 10) }

assert(e.getMessage.contains("some_variable"))

}

+

+ test("SPARK-25388: checkEvaluation should fail if nullable in DataType is incorrect") {

+val e = intercept[RuntimeException] {

+ checkEvaluation(MapIncorrectDataTypeExpression(), Map(3 -> 7, 6 -> null))

--- End diff --

Even if we make the check recursive, I think that this case cannot be detected, because the mismatch occurs in a different recursive path. Would it be possible to share a case where we distinguished a wrong output from a badly written UT in other places, as you proposed?


[GitHub] spark issue #22513: [SPARK-25499][TEST]Refactor BenchmarkBase and Benchmark

Github user gengliangwang commented on the issue: https://github.com/apache/spark/pull/22513 @cloud-fan @wangyum Thanks for the suggestion. I have updated the target package and related PR description. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22513: [SPARK-25499][TEST]Refactor BenchmarkBase and Benchmark

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22513 Merged build finished. Test PASSed. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22513: [SPARK-25499][TEST]Refactor BenchmarkBase and Benchmark

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22513 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/testing-k8s-prb-make-spark-distribution-unified/3338/ Test PASSed. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22513: [SPARK-25499][TEST]Refactor BenchmarkBase and Benchmark

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/22513 **[Test build #96415 has started](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96415/testReport)** for PR 22513 at commit [`1a5e1e9`](https://github.com/apache/spark/commit/1a5e1e927072e4438ea1ce7dc579a6d5b0986835). --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22461: [SPARK-25453][SQL][TEST] OracleIntegrationSuite IllegalA...

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/22461 **[Test build #96416 has started](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96416/testReport)** for PR 22461 at commit [`9a948e5`](https://github.com/apache/spark/commit/9a948e5e59ccb6a39e9fa844273188ae331d5abd). --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22138: [SPARK-25151][SS] Apply Apache Commons Pool to KafkaData...

Github user gaborgsomogyi commented on the issue: https://github.com/apache/spark/pull/22138 One more thing that just came to mind is the documentation. The parameter documentation is a gap even for the original feature. As the feature has grown and several additional parameters have been added, it would be good to document them on the Kafka integration page.

[GitHub] spark pull request #22138: [SPARK-25151][SS] Apply Apache Commons Pool to Ka...

Github user gaborgsomogyi commented on a diff in the pull request:

https://github.com/apache/spark/pull/22138#discussion_r219418553

--- Diff:

external/kafka-0-10-sql/src/main/scala/org/apache/spark/sql/kafka010/InternalKafkaConsumerPool.scala

---

@@ -0,0 +1,241 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+

+package org.apache.spark.sql.kafka010

+

+import java.{util => ju}

+import java.util.concurrent.ConcurrentHashMap

+

+import org.apache.commons.pool2.{BaseKeyedPooledObjectFactory, PooledObject, SwallowedExceptionListener}

+import org.apache.commons.pool2.impl.{DefaultEvictionPolicy, DefaultPooledObject, GenericKeyedObjectPool, GenericKeyedObjectPoolConfig}

+

+import org.apache.spark.SparkEnv

+import org.apache.spark.internal.Logging

+import org.apache.spark.sql.kafka010.InternalKafkaConsumerPool._

+import org.apache.spark.sql.kafka010.KafkaDataConsumer.CacheKey

+

+/**

+ * Provides object pool for [[InternalKafkaConsumer]] which is grouped by [[CacheKey]].

+ *

+ * This class leverages [[GenericKeyedObjectPool]] internally, hence providing methods based on

+ * the class, and same contract applies: after using the borrowed object, you must either call

+ * returnObject() if the object is healthy to return to pool, or invalidateObject() if the object

+ * should be destroyed.

+ *

+ * The soft capacity of pool is determined by "spark.sql.kafkaConsumerCache.capacity" config value,

+ * and the pool will have reasonable default value if the value is not provided.

+ * (The instance will do its best effort to respect soft capacity but it can exceed when there's

+ * a borrowing request and there's neither free space nor idle object to clear.)

+ *

+ * This class guarantees that no caller will get pooled object once the object is borrowed and

+ * not yet returned, hence provide thread-safety usage of non-thread-safe [[InternalKafkaConsumer]]

+ * unless caller shares the object to multiple threads.

+ */

+private[kafka010] class InternalKafkaConsumerPool(

+objectFactory: ObjectFactory,

+poolConfig: PoolConfig) {

+

+ // the class is intended to have only soft capacity

+ assert(poolConfig.getMaxTotal < 0)

+

+ private lazy val pool = {

+val internalPool = new GenericKeyedObjectPool[CacheKey, InternalKafkaConsumer](

+ objectFactory, poolConfig)

+internalPool.setSwallowedExceptionListener(CustomSwallowedExceptionListener)

+internalPool

+ }

+

+ /**

+ * Borrows [[InternalKafkaConsumer]] object from the pool. If there's no idle object for the key,

+ * the pool will create the [[InternalKafkaConsumer]] object.

+ *

+ * If the pool doesn't have idle object for the key and also exceeds the soft capacity,

+ * pool will try to clear some of idle objects.

+ *

+ * Borrowed object must be returned by either calling returnObject or invalidateObject, otherwise

+ * the object will be kept in pool as active object.

+ */

+ def borrowObject(key: CacheKey, kafkaParams: ju.Map[String, Object]): InternalKafkaConsumer = {

+updateKafkaParamForKey(key, kafkaParams)

+

+if (getTotal == poolConfig.getSoftMaxTotal()) {

+ pool.clearOldest()

+}

+

+pool.borrowObject(key)

+ }

+

+ /** Returns borrowed object to the pool. */

+ def returnObject(consumer: InternalKafkaConsumer): Unit = {

+pool.returnObject(extractCacheKey(consumer), consumer)

+ }

+

+ /** Invalidates (destroy) borrowed object to the pool. */

+ def invalidateObject(consumer: InternalKafkaConsumer): Unit = {

+pool.invalidateObject(extractCacheKey(consumer), consumer)

+ }

+

+ /** Invalidates all idle consumers for the key */

+ def invalidateKey(key: CacheKey): Unit = {

+pool.clear(key)

+ }

+

+ /**

+ * Closes the keyed object pool. Once the pool is closed,

+ * borrowObject will fail with [[IllegalStateException]], but

[GitHub] spark issue #22419: [SPARK-23906][SQL] Add built-in UDF TRUNCATE(number)

Github user ueshin commented on the issue:

https://github.com/apache/spark/pull/22419

On second thoughts, I'm wondering whether we can reuse `RoundBase`?

I mean:

```scala
case class Truncate(child: Expression, scale: Expression)
  extends RoundBase(child, scale, BigDecimal.RoundingMode.DOWN, "ROUND_DOWN")
  with Serializable with ImplicitCastInputTypes {
  def this(child: Expression) = this(child, Literal(0))
}
```

If we want to round negative values towards negative infinity instead of towards zero, we should use `RoundingMode.FLOOR` instead of `DOWN`, though.

Btw, could you add test cases for negative value child as well?
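
The difference between the two rounding modes only shows up for negative inputs. A small standalone sketch using Scala's own `BigDecimal` (not Spark's `RoundBase`) illustrates it; `TruncateModes` and its method names are hypothetical.

```scala
import scala.math.BigDecimal.RoundingMode

object TruncateModes {
  // DOWN drops extra digits, which moves negative values towards zero.
  def towardsZero(x: BigDecimal, scale: Int): BigDecimal =
    x.setScale(scale, RoundingMode.DOWN)

  // FLOOR always moves towards negative infinity.
  def towardsNegativeInfinity(x: BigDecimal, scale: Int): BigDecimal =
    x.setScale(scale, RoundingMode.FLOOR)
}
```

For a positive value such as 1.57 at scale 1, both modes give 1.5. For -1.57, `DOWN` gives -1.5 while `FLOOR` gives -1.6, which is why test cases with a negative child are worth adding.
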


[GitHub] spark issue #22513: [SPARK-25499][TEST]Refactor BenchmarkBase and Benchmark

Github user cloud-fan commented on the issue: https://github.com/apache/spark/pull/22513 Please also explain in the PR description which module (core or sql?) these benchmark classes should be in.

[GitHub] spark pull request #22513: [SPARK-25499][TEST]Refactor BenchmarkBase and Ben...

Github user cloud-fan commented on a diff in the pull request: https://github.com/apache/spark/pull/22513#discussion_r219414758 --- Diff: core/src/main/scala/org/apache/spark/sql/execution/benchmark/BenchmarkBase.scala --- @@ -15,7 +15,7 @@ * limitations under the License. */ -package org.apache.spark.util +package org.apache.spark.sql.execution.benchmark --- End diff -- ditto --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22513: [SPARK-25499][TEST]Refactor BenchmarkBase and Ben...

Github user cloud-fan commented on a diff in the pull request: https://github.com/apache/spark/pull/22513#discussion_r219414641 --- Diff: core/src/main/scala/org/apache/spark/sql/execution/benchmark/Benchmark.scala --- @@ -15,7 +15,7 @@ * limitations under the License. */ -package org.apache.spark.util +package org.apache.spark.sql.execution.benchmark --- End diff -- this class is in core and we should not have `sql` in the package name --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22411: [SPARK-25421][SQL] Abstract an output path field in trai...

Github user LantaoJin commented on the issue: https://github.com/apache/spark/pull/22411 Gently ping @cloud-fan --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22461: [SPARK-25453][SQL][TEST] OracleIntegrationSuite IllegalA...

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/22461 **[Test build #96414 has started](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96414/testReport)** for PR 22461 at commit [`100e808`](https://github.com/apache/spark/commit/100e808544bbe9d2c618bf623d94991b46adbae5). --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #18544: [SPARK-21318][SQL]Improve exception message throw...

Github user cloud-fan commented on a diff in the pull request:

https://github.com/apache/spark/pull/18544#discussion_r219413664

--- Diff: sql/hive/src/test/scala/org/apache/spark/sql/hive/UDFSuite.scala

---

@@ -193,4 +193,29 @@ class UDFSuite

}

}

}

+

+ test("SPARK-21318: The correct exception message should be thrown " +

+"if a UDF/UDAF has already been registered") {

+val UDAFName = "empty"

+val UDAFClassName = classOf[org.apache.spark.sql.hive.execution.UDAFEmpty].getCanonicalName

+

+withTempDatabase { dbName =>

--- End diff --

why do we have to test it inside a database? can't the default database

work?


[GitHub] spark pull request #18544: [SPARK-21318][SQL]Improve exception message throw...

Github user cloud-fan commented on a diff in the pull request:

https://github.com/apache/spark/pull/18544#discussion_r219412088

--- Diff:

sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/catalog/SessionCatalogSuite.scala

---

@@ -1440,6 +1441,8 @@ abstract class SessionCatalogSuite extends AnalysisTest {

}

assert(cause.getMessage.contains("Undefined function: 'undefined_fn'"))

+// SPARK-21318: the error message should contains the current database name

--- End diff --

what's the full error message?


[GitHub] spark pull request #22455: [SPARK-24572][SPARKR] "eager execution" for R she...

Github user viirya commented on a diff in the pull request:

https://github.com/apache/spark/pull/22455#discussion_r219410274

--- Diff: R/pkg/R/DataFrame.R ---

@@ -226,7 +226,8 @@ setMethod("showDF",

#' show

#'

-#' Print class and type information of a Spark object.

+#' If eager evaluation is enabled and the Spark object is a SparkDataFrame, return the data of

--- End diff --

return the data of the SparkDataFrame object -> evaluate the SparkDataFrame

and print top rows of the SparkDataFrame


[GitHub] spark issue #22511: [SPARK-25422][CORE] Don't memory map blocks streamed to ...

Github user cloud-fan commented on the issue: https://github.com/apache/spark/pull/22511 is this a long-standing bug? --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22305: [SPARK-24561][SQL][Python] User-defined window aggregati...

Github user felixcheung commented on the issue: https://github.com/apache/spark/pull/22305 @gatorsmile @cloud-fan --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #21596: [SPARK-24601] Update Jackson to 2.9.6

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/21596 **[Test build #96413 has started](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96413/testReport)** for PR 21596 at commit [`2bab06f`](https://github.com/apache/spark/commit/2bab06f8e73be0e2724bbd5c836360ab5f107d44). --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22512: [SPARK-25498][SQL][WIP] Fix SQLQueryTestSuite failures w...

Github user SparkQA commented on the issue: https://github.com/apache/spark/pull/22512 **[Test build #96405 has finished](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/96405/testReport)** for PR 22512 at commit [`39c5e92`](https://github.com/apache/spark/commit/39c5e92713b86f342e756591235f9cbe25126f90). * This patch **fails Spark unit tests**. * This patch merges cleanly. * This patch adds the following public classes _(experimental)_: * ` s\"but $` * `case class Literal(value: Any, dataType: DataType) extends LeafExpression ` --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22512: [SPARK-25498][SQL][WIP] Fix SQLQueryTestSuite failures w...

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22512 Test FAILed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/SparkPullRequestBuilder/96405/ Test FAILed. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22512: [SPARK-25498][SQL][WIP] Fix SQLQueryTestSuite failures w...

Github user AmplabJenkins commented on the issue: https://github.com/apache/spark/pull/22512 Merged build finished. Test FAILed. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22375: [SPARK-25388][Test][SQL] Detect incorrect nullabl...

Github user mgaido91 commented on a diff in the pull request:

https://github.com/apache/spark/pull/22375#discussion_r219406170

--- Diff:

sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/expressions/ExpressionEvalHelperSuite.scala

---

@@ -35,6 +36,13 @@ class ExpressionEvalHelperSuite extends SparkFunSuite

with ExpressionEvalHelper

val e = intercept[RuntimeException] {

checkEvaluation(BadCodegenExpression(), 10) }

assert(e.getMessage.contains("some_variable"))

}

+

+ test("SPARK-25388: checkEvaluation should fail if nullable in DataType is incorrect") {

+val e = intercept[RuntimeException] {

+ checkEvaluation(MapIncorrectDataTypeExpression(), Map(3 -> 7, 6 -> null))

--- End diff --

Yes, you're right, my suggestion doesn't work in a case like that, sorry. We would need to make the check recursive. But I think you got the idea of what I am proposing here. Thanks.


[GitHub] spark issue #22455: [SPARK-24572][SPARKR] "eager execution" for R shell, IDE

Github user viirya commented on the issue: https://github.com/apache/spark/pull/22455 Let's also update the doc of `REPL_EAGER_EVAL_ENABLED` in `SQLConf`. After this patch, eager evaluation is not only supported in PySpark. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22511: [SPARK-25422][CORE] Don't memory map blocks streamed to ...

Github user cloud-fan commented on the issue: https://github.com/apache/spark/pull/22511 this seems like a big change, will we hit perf regression? --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22450: [SPARK-25454][SQL] Avoid precision loss in division with...

Github user mgaido91 commented on the issue: https://github.com/apache/spark/pull/22450 Thank you for your help and guidance. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22494: [SPARK-22036][SQL][followup] DECIMAL_OPERATIONS_ALLOW_PR...

Github user mgaido91 commented on the issue:

https://github.com/apache/spark/pull/22494

> If your argument is, picking a precise precision for literal is an individual feature and not related to #20023, I'm OK to create a new config for it.

Yes, this is, I think, a better option. Indeed, what I meant was this: let's imagine I am a Spark 2.3.0 user and I have `DECIMAL_OPERATIONS_ALLOW_PREC_LOSS` set to `false`. Before this patch, I can successfully run `select 1234567891 / (1.1 * 2 * 2 * 2 * 2)`. After this patch, this query would return `null` instead, as an overflow would happen. So this patch is "correcting" a regression from 2.2 but it is introducing another one from 2.3.0-2.3.1. Using another config is therefore a better workaround IMO.