Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18904

Thanks @MLnick, I will be glad if you can continue it.

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

Github user mpjlu closed the pull request at:

https://github.com/apache/spark/pull/18624

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Github user mpjlu closed the pull request at:

https://github.com/apache/spark/pull/19516

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Github user mpjlu closed the pull request at:

https://github.com/apache/spark/pull/19337

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Github user mpjlu closed the pull request at:

https://github.com/apache/spark/pull/19536

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Github user mpjlu closed the pull request at:

https://github.com/apache/spark/pull/17739

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/17739

Because I don't have the environment to continue this work, I will close

it. Thanks.

---

-

To unsubscribe, e-mail: reviews

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18624

Because I don't have the environment to continue this work, I will close

it. Thanks.

---

-

To unsubscribe, e-mail: reviews

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/19337

Because I don't have the environment to continue this work, I will close

it. Thanks.

---

-

To unsubscribe, e-mail: reviews

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/19536

Because I don't have the environment to continue this work, I will close

it. Thanks.

---

-

To unsubscribe, e-mail: reviews

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/19516

Because I don't have the environment to continue this work, I will close

it. Thanks.

---

-

To unsubscribe, e-mail: reviews

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18904

Because I don't have the environment to continue this work, I will close

it.

---

-

To unsubscribe, e-mail: reviews-unsubscr

Github user mpjlu closed the pull request at:

https://github.com/apache/spark/pull/18904

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18904

This is another case.

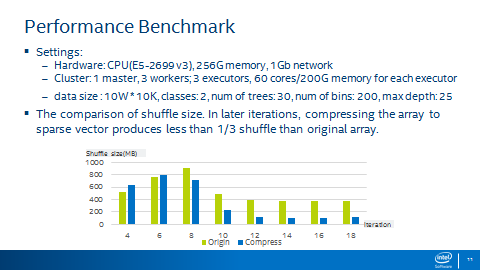

Table 1 shows the improvement of random tree algorithm with sparse

expression. We can see that when we use sparse expression, I/O can be reduced

by 61% and total run time

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18904

This is one of my test results.

Now, I am not working on Spark MLLIB, and don't

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18624

retest this please

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18624

Hi @holdenk, this is the PR we have discussed in Strata conference.

100k*100k, sparsity 0.05 | old | old | New

Github user mpjlu commented on a diff in the pull request:

https://github.com/apache/spark/pull/19536#discussion_r145735791

--- Diff: mllib/src/main/scala/org/apache/spark/ml/recommendation/ALS.scala

---

@@ -589,6 +602,9 @@ class ALS(@Since("1.4.0") override val u

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/19536

Hi @yanboliang , I reopen this PR per your suggestion, thanks.

I have tested the code, the performance result is matched with @hqzizania

's results.

Thanks @hqzizania

GitHub user mpjlu opened a pull request:

https://github.com/apache/spark/pull/19536

[SPARK-6685][ML]Use DSYRK to compute AtA in ALS

## What changes were proposed in this pull request?

This is a reopen of PR: https://github.com/apache/spark/pull/13891 with

mima fix

GitHub user mpjlu opened a pull request:

https://github.com/apache/spark/pull/19516

[SPARK-22277][ML]fix the bug of ChiSqSelector on preparing the output column

## What changes were proposed in this pull request?

To prepare the output columns when use ChiSqSelector

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/19337

For the comments about change the name of epsilon and add setter in

localLADModel, we have agreed not to change it now after some offline

discussion.

Because epsilon doesn't control model

Github user mpjlu commented on a diff in the pull request:

https://github.com/apache/spark/pull/19337#discussion_r144060858

--- Diff: mllib/src/main/scala/org/apache/spark/ml/clustering/LDA.scala ---

@@ -224,6 +224,24 @@ private[clustering] trait LDAParams extends Params

Github user mpjlu commented on a diff in the pull request:

https://github.com/apache/spark/pull/19337#discussion_r143723408

--- Diff:

mllib/src/main/scala/org/apache/spark/mllib/clustering/LDAOptimizer.scala ---

@@ -573,7 +584,8 @@ private[clustering] object OnlineLDAOptimizer

Github user mpjlu commented on a diff in the pull request:

https://github.com/apache/spark/pull/19337#discussion_r143705102

--- Diff:

mllib/src/test/scala/org/apache/spark/ml/clustering/LDASuite.scala ---

@@ -119,6 +121,8 @@ class LDASuite extends SparkFunSuite

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/19337

Thanks, @hhbyyh.

I will create a JIRA for python API

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

Github user mpjlu commented on a diff in the pull request:

https://github.com/apache/spark/pull/19337#discussion_r141272887

--- Diff: mllib/src/main/scala/org/apache/spark/ml/clustering/LDA.scala ---

@@ -224,6 +224,20 @@ private[clustering] trait LDAParams extends Params

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/19337

Thanks @mgaido91 , I will update the ML api, maybe also python and java API.

---

-

To unsubscribe, e-mail: reviews-unsubscr

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/19337

For LDA, the implementation is in mllib, ml calls mllib.

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/19337

Ok, thanks. we don't need to change the code here.

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/19337

Sorry, I got wrong.

So you think assert is better here? now we use require.

---

-

To unsubscribe, e-mail: reviews-unsubscr

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/19337

Because epsilon is Double, negative value should not cause the code run

into dead loop. All other setting in LDA using require for check or no check.

Should we use assert only for this change

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/19337

OK, I will change it to assert.

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/19337

Not check is also ok, user should know epsilon > 0

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.

Github user mpjlu commented on a diff in the pull request:

https://github.com/apache/spark/pull/19337#discussion_r140727204

--- Diff:

mllib/src/main/scala/org/apache/spark/mllib/clustering/LDAOptimizer.scala ---

@@ -573,7 +584,8 @@ private[clustering] object OnlineLDAOptimizer

GitHub user mpjlu opened a pull request:

https://github.com/apache/spark/pull/19337

[SPARK-22114][ML][MLLIB]add epsilon for LDA

## What changes were proposed in this pull request?

The current convergence condition of OnlineLDAOptimizer is:

while(meanGammaChange > 1

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18748

LGTM

---

If your project is set up for it, you can reply to this email and have your

reply appear on GitHub as well. If your project does not have this feature

enabled and wishes so

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18624

Thanks @MLnick , I think the ML ALS suite is ok, just MLLIB ALS suite is

too simple. One possible enhancement is to add the same test cases as ML ALS

suite. How do you think about it?

---

If your

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18748

Thanks @MLnick . I have double checked my test.

Since there is no recommendForUserSubset , my previous test is MLLIB

MatrixFactorizationModel::predict(RDD(Int, Int)), which predicts the rating

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18899

I have tested the performance of toSparse and toSparseWithSize separately.

There is about 35% performance improvement for this change.

---

If your project is set up for it, you can reply

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18904

retest this please

---

If your project is set up for it, you can reply to this email and have your

reply appear on GitHub as well. If your project does not have this feature

enabled and wishes so

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18899

Thanks @sethah @srowen . The comment is added.

---

If your project is set up for it, you can reply to this email and have your

reply appear on GitHub as well. If your project does not have

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18899

I did not only test this PR. Only work for PR 18904 and find this

performance difference.

---

If your project is set up for it, you can reply to this email and have your

reply appear on GitHub

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18899

Hi @srowen; how about using our first version? though duplicate some code,

but change is small.

---

If your project is set up for it, you can reply to this email and have your

reply appear on GitHub

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18899

For PR-18904, before this change, one iteration is about 58s, after this

change, one iteration is about:40s

---

If your project is set up for it, you can reply to this email and have your

reply

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18904

A gentle ping: @sethah @jkbradley

---

If your project is set up for it, you can reply to this email and have your

reply appear on GitHub as well. If your project does not have this feature

enabled

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18899

retest this please

---

If your project is set up for it, you can reply to this email and have your

reply appear on GitHub as well. If your project does not have this feature

enabled and wishes so

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18899

retest this please

---

If your project is set up for it, you can reply to this email and have your

reply appear on GitHub as well. If your project does not have this feature

enabled and wishes so

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18899

Hi @sethah , the unit test is added. Thanks

---

If your project is set up for it, you can reply to this email and have your

reply appear on GitHub as well. If your project does not have this feature

Github user mpjlu commented on a diff in the pull request:

https://github.com/apache/spark/pull/18899#discussion_r132610165

--- Diff: project/MimaExcludes.scala ---

@@ -1012,6 +1012,10 @@ object MimaExcludes {

ProblemFilters.exclude[IncompatibleResultTypeProblem

Github user mpjlu commented on a diff in the pull request:

https://github.com/apache/spark/pull/18899#discussion_r132610049

--- Diff:

mllib-local/src/main/scala/org/apache/spark/ml/linalg/Vectors.scala ---

@@ -635,8 +642,9 @@ class SparseVector @Since("2.0.0") (

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18868

Yes, that is right. Thanks.

---

If your project is set up for it, you can reply to this email and have your

reply appear on GitHub as well. If your project does not have this feature

enabled

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18899

Thanks @srowen.

I will revise the code per your suggestion.

when I wrote the code, I just concerned user call toSparse(size) and give a

very small size.

---

If your project is set up

GitHub user mpjlu opened a pull request:

https://github.com/apache/spark/pull/18904

[SPARK-21624]optimzie RF communicaiton cost

## What changes were proposed in this pull request?

The implementation of RF is bound by either the cost of statistics

computation on workers

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18899

Yes, I just concern if add toSparse(size) we should check the size in the

code, there will be no performance gain. If we don't need to check the "size"

(comparing size with numNonZero) i

GitHub user mpjlu opened a pull request:

https://github.com/apache/spark/pull/18899

[SPARK-21680][ML][MLLIB]optimzie Vector coompress

## What changes were proposed in this pull request?

When use Vector.compressed to change a Vector to SparseVector, the

performance is very

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18868

retest this please

---

If your project is set up for it, you can reply to this email and have your

reply appear on GitHub as well. If your project does not have this feature

enabled and wishes so

Github user mpjlu commented on a diff in the pull request:

https://github.com/apache/spark/pull/18868#discussion_r131605981

--- Diff:

mllib/src/main/scala/org/apache/spark/ml/tree/impl/RandomForest.scala ---

@@ -1107,9 +1108,11 @@ private[spark] object RandomForest extends Logging

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18832

Thanks @srowen , I revised the comments per Seth's suggestion: "Parent

stats need to be explicitly tracked in the DTStatsAggregator because the parent

[[Node]] object does not have Impurity

GitHub user mpjlu opened a pull request:

https://github.com/apache/spark/pull/18868

[SPARK-21638][ML]Fix RF/GBT Warning message error

## What changes were proposed in this pull request?

When train RF model, there are many warning messages like this:

> W

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18832

Thanks @sethah .

I strongly think we should update the commend or just delete the comment as

the current PR.

Another reason is: there are three kinds of feature: categorical, ordered

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18832

I agree with you. Do you think we should update the comment to help others

understand the code.

Since parantStats is updated and used in each iteration.

Thanks.

---

If your project is set

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18832

I know your point.

I am confusing the code doesn't work that way.

The code update parentStats for each iteration. Actually, we only need to

update parentStats for the first Iteration

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18832

parentStats is used in this

code:ãbinAggregates.getParentImpurityCalculator(), this is used in all

iteration.

So that comment seems very misleading.

`} else

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18832

node.stats is ImpurityStats, and parentStats is Array[Double], there are

different. Maybe this comment should be used on node.stats, but not on

parentStats. Is my understanding wrong?

---

If your

GitHub user mpjlu opened a pull request:

https://github.com/apache/spark/pull/18832

[SPARK-21623][ML]fix RF doc

## What changes were proposed in this pull request?

comments of parentStats in RF are wrong.

parentStats is not only used for the first iteration, it is used

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18748

Thanks.

This is my test setting:

3 workersï¼ each: 40 cores, 196G memory, 1 executor.

Data Size: user 480,000, item 17,000

---

If your project is set up for it, you can reply

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18748

Did you test the performance of this, I tested the performance of MLLIB

recommendForUserSubset some days ago, the performance is not good. Suppose the

time of recommendForAll is 35s, recommend for 1

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18624

Hi @srowen @MLnick @jkbradley @mengxr @yanboliang

Is this change acceptable? if it is acceptable, I will update ALS ML code

following this method. Also update Test Suite, which are too simple

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18620

Thanks @srowen , my test also said pq.poll is a little faster on some

cases.

One possible benefit here is if we provide pq.poll, user's first choice may

use pq.poll, not pq.toArray.sorted, which

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18620

I am ok to close this. Thanks @MLnick

---

If your project is set up for it, you can reply to this email and have your

reply appear on GitHub as well. If your project does not have this feature

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18620

My micro benchmark (write a program only test pq.toArray.sorted and

pq.Array.sortBy and pq.poll), not find significant performance difference. Only

in the Spark job, there is big difference. Confused

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18620

I also very confused about this. You can change

https://github.com/apache/spark/pull/18624 to sorted and test.

---

If your project is set up for it, you can reply to this email and have your

reply

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18620

Hi @MLnick , @srowen .

My test showing: pq.poll is not significantly faster than

pq.toArray.sortBy, but significantly faster than pq.toArray.sorted. Seems not

each pq.toArray.sorted

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18620

Hi @MLnick ,

pq.toArray.sorted also used in other places, like word2vector and LDA, how

about waiting for my other benchmark results. Then decide to close it or not.

Thanks.

---

If your

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18620

I have tested much about poll and toArray.sorted.

If the queue is much ordered (suppose offer 2000 times for queue size 20).

Use pq.toArray.sorted is faster.

If the queue is much disordered

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18620

Keep it or close it, both is ok for me. We have much discussion on:

https://issues.apache.org/jira/browse/SPARK-21401

---

If your project is set up for it, you can reply to this email and have

Github user mpjlu commented on a diff in the pull request:

https://github.com/apache/spark/pull/18624#discussion_r127669102

--- Diff:

mllib/src/main/scala/org/apache/spark/mllib/recommendation/MatrixFactorizationModel.scala

---

@@ -286,40 +288,120 @@ object

Github user mpjlu commented on a diff in the pull request:

https://github.com/apache/spark/pull/18624#discussion_r127641933

--- Diff:

mllib/src/main/scala/org/apache/spark/mllib/recommendation/MatrixFactorizationModel.scala

---

@@ -286,40 +288,120 @@ object

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18624

I have checked the results with the master method, the recommendation

results are right.

The master TestSuite is too simple, should be updated. I will update it.

Thanks.

---

If your

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18624

If no poll, we have to use toArray.sorted, which performance is bad.

---

If your project is set up for it, you can reply to this email and have your

reply appear on GitHub as well. If your project

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18624

We need the value is in order here.

---

If your project is set up for it, you can reply to this email and have your

reply appear on GitHub as well. If your project does not have this feature

enabled

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18624

An user block, after Cartesian, will generate many blocks(Number of Item

blocks), all these blocks should be aggregated. Thanks.

---

If your project is set up for it, you can reply to this email

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18620

retest this please

---

If your project is set up for it, you can reply to this email and have your

reply appear on GitHub as well. If your project does not have this feature

enabled and wishes so

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/17742

I have submitted PR for ALS optimization with GEMM. and it is ready for

review.

The performance is about 50% improvement comparing with the master method.

https://github.com/apache/spark/pull

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18620

Hi @srowen , I have added Test Suite for BoundedPriorityQueue. Thanks.

---

If your project is set up for it, you can reply to this email and have your

reply appear on GitHub as well. If your project

Github user mpjlu commented on a diff in the pull request:

https://github.com/apache/spark/pull/18624#discussion_r127214361

--- Diff:

mllib/src/main/scala/org/apache/spark/mllib/recommendation/MatrixFactorizationModel.scala

---

@@ -286,40 +288,124 @@ object

GitHub user mpjlu opened a pull request:

https://github.com/apache/spark/pull/18624

[SPARK-21389][ML][MLLIB] Optimize ALS recommendForAll by gemm with about

50% performance improvement

## What changes were proposed in this pull request?

In Spark 2.2, we have optimized ALS

Github user mpjlu commented on the issue:

https://github.com/apache/spark/pull/18620

Ok, thanks @srowen .

I will create a JIRA, and show the usage and performance comparing.

---

If your project is set up for it, you can reply to this email and have your

reply appear on GitHub

1 - 100 of 270 matches

Mail list logo