wahsmail opened a new issue #15752:

URL: https://github.com/apache/airflow/issues/15752

**Apache Airflow version**: 2.0.1

**Environment**:

- **OS** (e.g. from /etc/os-release): CentOS Linux 7 (Core)

- **Kernel** (e.g. `uname -a`): Linux 3.10.0-957.27.2.el7.x86_64

- **Install tools**: `conda install airflow airflow-with-ldap psycopg2 sqlalchemy=1.3`

**What happened**:

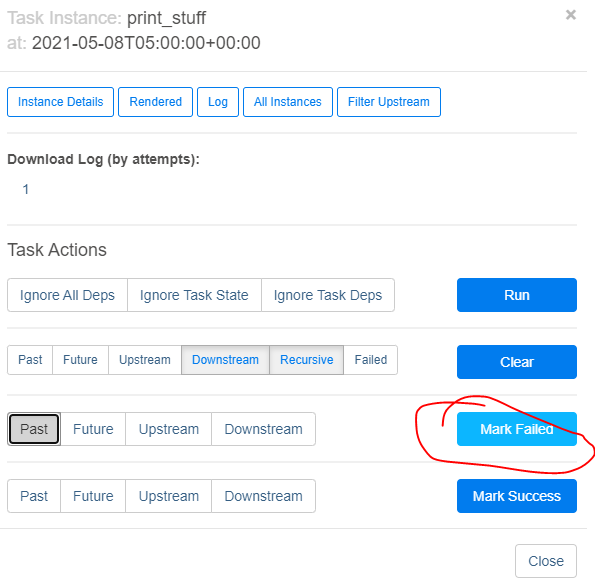

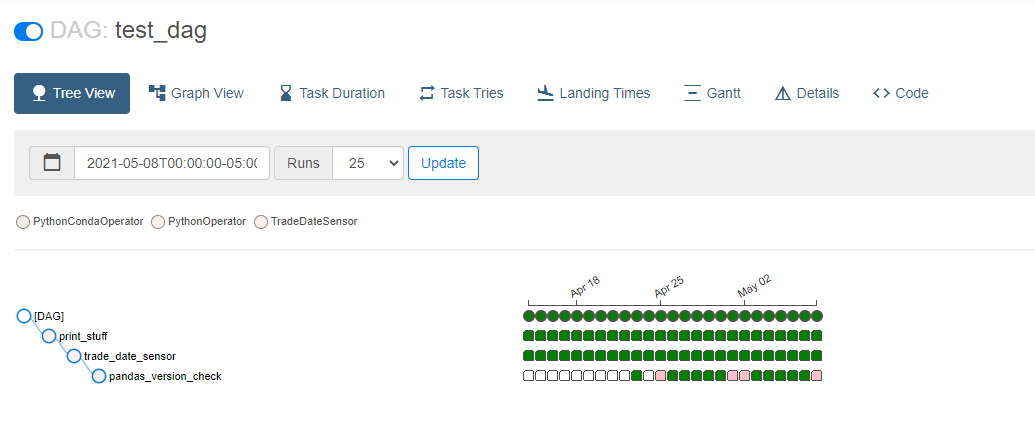

When I want to backfill tasks using only the UI, I usually pick how far back I want to backfill, mark that task as failed with the "Future" option selected, then clear it with the "Future" option selected (along with various dependency options). After upgrading our production server to 2.x, the "Wait a minute" prompt lists only the selected task instance, even when there are multiple execution dates following it.

One interesting thing to note is that the "Future" option works as expected when **clearing** tasks, just not when marking success/failure.

**What you expected to happen**:

I expected all task instances on and after the selected execution date to be affected. Instead, I have to manually fail each task-date or find another workaround, which is a problem because marking as failed and then clearing is how our non-power-users have been backfilling processes.
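To make the expectation concrete, here is a minimal sketch in plain Python. It is not Airflow's implementation; the helper names `daily_execution_dates` and `select_with_future` are hypothetical and only illustrate which execution dates I would expect "Future" to pick up for a daily schedule.

```python
from datetime import date, timedelta


def daily_execution_dates(start, end):
    """Yield one execution date per day from start through end, inclusive."""
    current = start
    while current <= end:
        yield current
        current += timedelta(days=1)


def select_with_future(execution_dates, selected):
    """Hypothetical helper: the selected date plus every later execution date."""
    return [d for d in execution_dates if d >= selected]


# One week of daily runs, with the fourth day selected in the UI.
runs = list(daily_execution_dates(date(2021, 3, 1), date(2021, 3, 7)))
affected = select_with_future(runs, date(2021, 3, 4))
print(affected)
```

With these inputs the selection covers March 4 through March 7, whereas the prompt I get after the upgrade lists only the single selected task instance.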

**How to reproduce it**:

We created a fresh conda environment with Python 3.8, ran `conda install airflow airflow-with-ldap psycopg2 sqlalchemy=1.3`, and continued the setup for the scheduler and webserver. The Python package environment is Airflow-centric, with not much else in it. We are using the default timezone "America/Chicago" and the cron schedule "0 0 * * *" to ensure our DAGs run every night at midnight local time, instead of 11pm/12am/1am depending on daylight saving time / start_date. For the DAG/task start_date I have tried passing a naive `datetime.datetime`, a `datetime.datetime` object with `tzinfo=pendulum.timezone("America/Chicago")`, a `pendulum.datetime` object with `tz="America/Chicago"`, and an `airflow.utils.timezone.datetime` object. All suffer from the same issue. Here is an example DAG suffering from this:

```python
from airflow import DAG
from airflow.operators.python_operator import PythonOperator
from airflow.utils.timezone import datetime

default_args = {
    'owner': 'wahsmail',
    'depends_on_past': False,
    'start_date': datetime(2021, 3, 1),
    'email_on_failure': False,
    'email_on_retry': False,
    'retries': 0,
}

dag = DAG('test_dag', default_args=default_args, schedule_interval='0 0 * * *', catchup=True)


def print_stuff_func(**context):
    print('---- airflow macros ----')
    print(str(context).replace(',', ',\n'))


print_stuff = PythonOperator(
    task_id='print_stuff',
    python_callable=print_stuff_func,
    dag=dag
)
```
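For context on why the schedule is tied to local midnight, here is a small standard-library sketch showing how the UTC time of midnight in America/Chicago shifts across the daylight saving change that falls inside this DAG's catchup range. It assumes Python 3.9+ for `zoneinfo` (our environment used Python 3.8 with pendulum), and `utc_hour_of_local_midnight` is a hypothetical helper, not an Airflow function.

```python
from datetime import datetime, timezone
from zoneinfo import ZoneInfo  # assumption: Python 3.9+, system tz database available

chicago = ZoneInfo("America/Chicago")


def utc_hour_of_local_midnight(year, month, day):
    """Return the UTC hour that corresponds to 00:00 local time in Chicago."""
    local_midnight = datetime(year, month, day, 0, 0, tzinfo=chicago)
    return local_midnight.astimezone(timezone.utc).hour


# US daylight saving time began on 2021-03-14.
before_dst = utc_hour_of_local_midnight(2021, 3, 1)   # Central Standard Time, UTC-6
after_dst = utc_hour_of_local_midnight(2021, 3, 15)   # Central Daylight Time, UTC-5
print(before_dst, after_dst)
```

A schedule pinned to a fixed UTC hour would therefore drift by an hour in local time across that date, which is what the timezone-aware cron expression is meant to avoid.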

**Anything else we need to know**:

Step 1:

Step 2:

Step 3:

Step 4:

Actually, in this example, not even the selected date itself was marked as failed... not sure what's going on here.
