CSammy commented on issue #19743: URL: https://github.com/apache/airflow/issues/19743#issuecomment-980078753

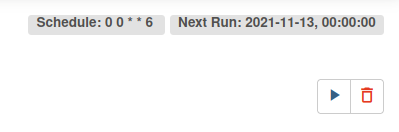

Executing `airflow info` on the `scheduler` pod throws the following error: `airflow command error: argument subcommand: invalid choice: 'info'`

I'm using the standard Helm chart in version 1.3.0, with the Docker tag pinned to `2.2.2-python3.9`. The configuration is adjusted only in minor details.

Below is the complete DAG I'm using. It results in the output shown in the UI screenshot (the screenshot includes the button I'm using to run the DAG).

DAG file:

```python
import datetime
import os

from airflow import DAG
from airflow.contrib.operators.kubernetes_pod_operator import KubernetesPodOperator

default_args = {
    "namespace": os.environ["K8S_NAMESPACE"],
    # K8s service account linked to the GCP service account
    "service_account_name": "airflow2-dag-default",
    "image_pull_policy": "Always",
    "get_logs": True,
    # -- DAG-SPECIFIC
    "image": "google/cloud-sdk:slim",
    "env_vars": {"GCP_PROJECT": os.environ["GCP_PROJECT"]},
}

with DAG(
    dag_id="debug_dag",
    # Saturday midnight
    schedule_interval="0 0 * * 6",
    start_date=datetime.datetime(2021, 11, 1),
    catchup=False,
    tags=["debug dag for catchup tests"],
    default_args=default_args,
) as dag:
    gcp_test_task = KubernetesPodOperator(
        # Task name in Airflow 2 UI
        task_id="gcp-test-task",
        # Pod name
        name="task-gcp-test-task",
        cmds=["sleep", "infinity"],
    )

    gcp_test_task
```

My impression is that the DebugExecutor is not at fault. I believe the `catchup` parameter is not effective at the moment, for some reason.

I'm happy to open a ticket for "`catchup` is always `True` no matter what you specify with the default Executor" if that matches your guidelines for tickets better, but I felt it wouldn't make much sense to open a second ticket for what I perceive to be the same issue, just with a different executor.

--
This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment.