isMrH opened a new issue, #2698:
URL: https://github.com/apache/incubator-seatunnel/issues/2698

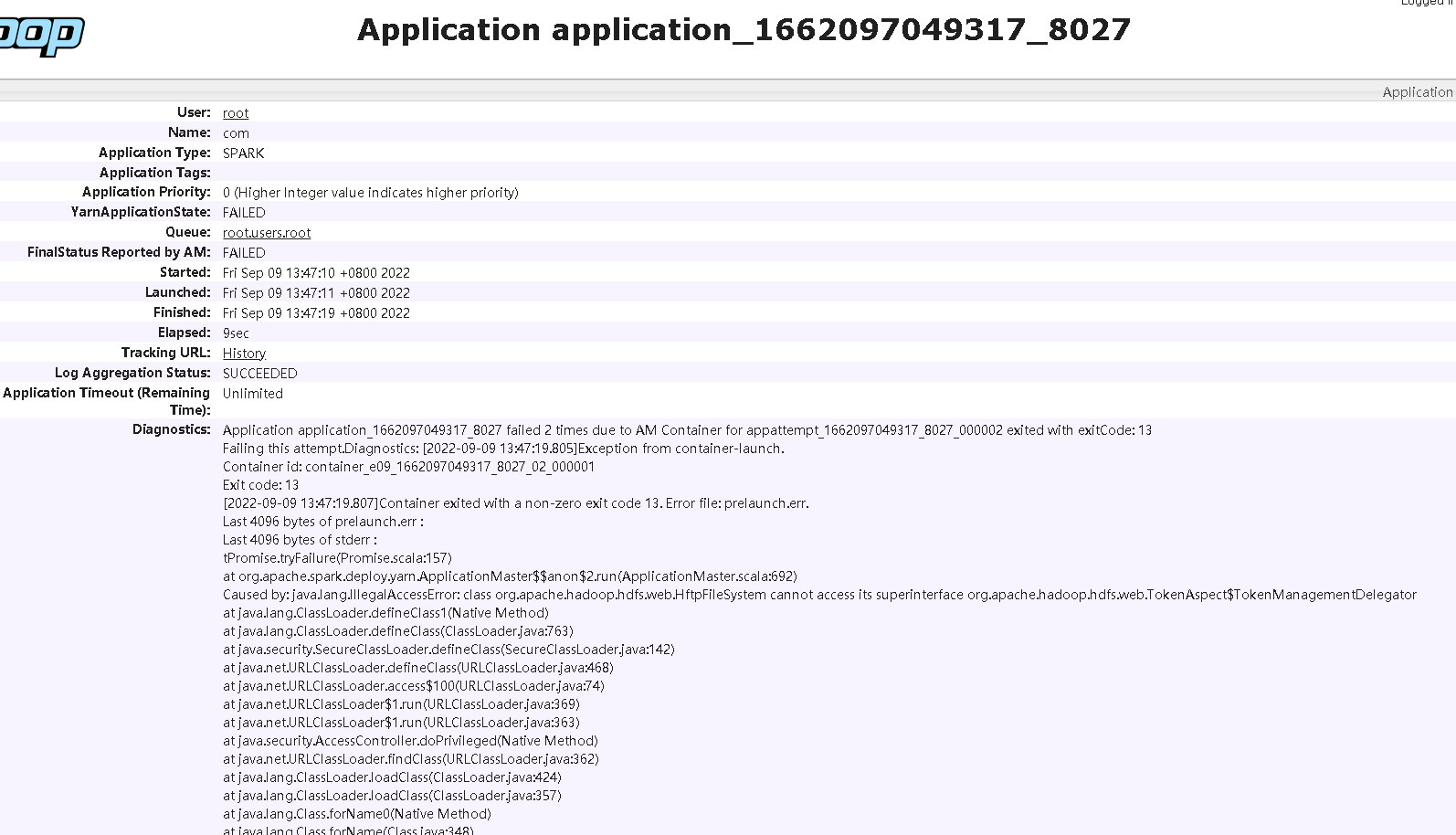

### Search before asking

- [X] I had searched in the [issues](https://github.com/apache/incubator-seatunnel/issues?q=is%3Aissue+label%3A%22bug%22) and found no similar issues.

### What happened

I encountered an exception when using the `tidb` sink. When I write to TiDB through the Spark JDBC sink (`Spark sink JDBC`), I can't control the batch size, so each insert into TiDB runs as one oversized transaction and causes out-of-memory errors. I therefore wanted to use `Sink tidb` to synchronize data at the billion-row scale, but the job fails with the error below and I can't continue.

### SeaTunnel Version

2.1.2

### SeaTunnel Config

```conf
env {
  spark.app.name = "com"
  spark.executor.instances = 10
  spark.executor.cores = 4
  spark.executor.memory = "3g"
  spark.sql.catalogImplementation = "hive"
  spark.tispark.pd.addresses = "10.32.48.1:2379,10.32.48.2:2379,10.32.48.3:2379"
  spark.sql.extensions = "org.apache.spark.sql.TiExtensions"
}

source {
  hive {
    pre_sql = """ select id from test limit 10 """
    result_table_name = "test"
  }
}

transform {
}

sink {
  tidb {
    addr = "10.32.48.xx",
    port = "4000"
    database = "test"
    table = "test"
    user = "user "
    password = "password "
    source_table_name = "test"
  }
}
```

### Running Command

```shell
./bin/start-seatunnel-spark.sh -c ./config/st_export_test.conf -e cluster -m yarn
```

### Error Exception

```log
[2022-09-09 13:47:19.807]Container exited with a non-zero exit code 13. Error file: prelaunch.err.
Last 4096 bytes of prelaunch.err :
Last 4096 bytes of stderr :
tPromise.tryFailure(Promise.scala:157)
	at org.apache.spark.deploy.yarn.ApplicationMaster$$anon$2.run(ApplicationMaster.scala:692)
Caused by: java.lang.IllegalAccessError: class org.apache.hadoop.hdfs.web.HftpFileSystem cannot access its superinterface org.apache.hadoop.hdfs.web.TokenAspect$TokenManagementDelegator
	at java.lang.ClassLoader.defineClass1(Native Method)
	at java.lang.ClassLoader.defineClass(ClassLoader.java:763)
	at java.security.SecureClassLoader.defineClass(SecureClassLoader.java:142)
	at java.net.URLClassLoader.defineClass(URLClassLoader.java:468)
	at java.net.URLClassLoader.access$100(URLClassLoader.java:74)
	at java.net.URLClassLoader$1.run(URLClassLoader.java:369)
	at java.net.URLClassLoader$1.run(URLClassLoader.java:363)
	at java.security.AccessController.doPrivileged(Native Method)
	at java.net.URLClassLoader.findClass(URLClassLoader.java:362)
	at java.lang.ClassLoader.loadClass(ClassLoader.java:424)
	at java.lang.ClassLoader.loadClass(ClassLoader.java:357)
	at java.lang.Class.forName0(Native Method)
	at java.lang.Class.forName(Class.java:348)
	at java.util.ServiceLoader$LazyIterator.nextService(ServiceLoader.java:370)
	at java.util.ServiceLoader$LazyIterator.next(ServiceLoader.java:404)
	at java.util.ServiceLoader$1.next(ServiceLoader.java:480)
	at org.apache.hadoop.fs.FileSystem.loadFileSystems(FileSystem.java:3202)
	at org.apache.hadoop.fs.FileSystem.getFileSystemClass(FileSystem.java:3247)
	at org.apache.hadoop.fs.FileSystem.createFileSystem(FileSystem.java:3286)
	at org.apache.hadoop.fs.FileSystem.access$200(FileSystem.java:123)
	at org.apache.hadoop.fs.FileSystem$Cache.getInternal(FileSystem.java:3337)
	at org.apache.hadoop.fs.FileSystem$Cache.get(FileSystem.java:3305)
	at org.apache.hadoop.fs.FileSystem.get(FileSystem.java:476)
	at org.apache.spark.util.Utils$.getHadoopFileSystem(Utils.scala:1914)
	at org.apache.spark.scheduler.EventLoggingListener.<init>(EventLoggingListener.scala:74)
	at org.apache.spark.SparkContext.<init>(SparkContext.scala:531)
	at org.apache.spark.SparkContext$.getOrCreate(SparkContext.scala:2549)
	at org.apache.spark.sql.SparkSession$Builder$$anonfun$7.apply(SparkSession.scala:944)
	at org.apache.spark.sql.SparkSession$Builder$$anonfun$7.apply(SparkSession.scala:935)
	at scala.Option.getOrElse(Option.scala:121)
	at org.apache.spark.sql.SparkSession$Builder.getOrCreate(SparkSession.scala:935)
	at org.apache.seatunnel.spark.SparkEnvironment.prepare(SparkEnvironment.java:106)
	at org.apache.seatunnel.spark.SparkEnvironment.prepare(SparkEnvironment.java:43)
	at org.apache.seatunnel.core.base.config.EnvironmentFactory.getEnvironment(EnvironmentFactory.java:61)
	at org.apache.seatunnel.core.base.config.ExecutionContext.<init>(ExecutionContext.java:49)
	at org.apache.seatunnel.core.spark.command.SparkTaskExecuteCommand.execute(SparkTaskExecuteCommand.java:62)
	at org.apache.seatunnel.core.base.Seatunnel.run(Seatunnel.java:39)
	at org.apache.seatunnel.core.spark.SeatunnelSpark.main(SeatunnelSpark.java:32)
	at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
	at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)
	at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
	at java.lang.reflect.Method.invoke(Method.java:498)
	at org.apache.spark.deploy.yarn.ApplicationMaster$$anon$2.run(ApplicationMaster.scala:673)
22/09/09 13:47:18 INFO yarn.ApplicationMaster: Deleting staging directory hdfs://cdh-09.qd.link-x.host:8020/user/root/.sparkStaging/application_1662097049317_8027
22/09/09 13:47:19 INFO storage.DiskBlockManager: Shutdown hook called
22/09/09 13:47:19 INFO util.ShutdownHookManager: Shutdown hook called
22/09/09 13:47:19 INFO util.ShutdownHookManager: Deleting directory /data/yarn/nm/usercache/root/appcache/application_1662097049317_8027/spark-f4c41e1d-e33f-41bb-87c7-c1392b96741d
22/09/09 13:47:19 INFO util.ShutdownHookManager: Deleting directory /data/yarn/nm/usercache/root/appcache/application_1662097049317_8027/spark-f4c41e1d-e33f-41bb-87c7-c1392b96741d/userFiles-77997d06-f58d-429f-9c01-72196f3658bf
```

### Flink or Spark Version

Spark version 2.4.0-cdh6.2.1

### Java or Scala Version

Scala version 2.11.12

### Screenshots

### Are you willing to submit PR?

- [X] Yes I am willing to submit a PR!

### Code of Conduct

- [X] I agree to follow this project's [Code of Conduct](https://www.apache.org/foundation/policies/conduct)
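For context on the batch-size problem described under "What happened", here is a minimal sketch of what bounding the batch size means. It is a hypothetical illustration, not SeaTunnel's, Spark's, or TiSpark's API: rows are grouped into batches of at most `batchSize`, and each batch would be committed as its own transaction, so no single TiDB transaction has to hold every row. `BatchedWriter`, `writeInBatches`, and the `commitBatch` callback are names invented for this sketch.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;

// Hypothetical helper for illustration only; not part of SeaTunnel, Spark, or TiSpark.
class BatchedWriter {

    // Splits rows into consecutive batches of at most batchSize rows and passes each
    // batch to commitBatch (in a real sink, e.g. one executeBatch() + commit() per call).
    // Returns the number of batches, i.e. the number of separate transactions.
    static <T> int writeInBatches(List<T> rows, int batchSize, Consumer<List<T>> commitBatch) {
        if (batchSize <= 0) {
            throw new IllegalArgumentException("batchSize must be positive");
        }
        int batches = 0;
        for (int start = 0; start < rows.size(); start += batchSize) {
            int end = Math.min(start + batchSize, rows.size());
            // Copy the slice so the callback never holds a view into the full list.
            commitBatch.accept(new ArrayList<>(rows.subList(start, end)));
            batches++;
        }
        return batches;
    }
}
```

With five rows and `batchSize = 2`, the callback receives `[1, 2]`, `[3, 4]`, and `[5]`: three small transactions instead of one that grows with the input.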