This is an automated email from the ASF dual-hosted git repository.

gengliang pushed a commit to branch master
in repository https://gitbox.apache.org/repos/asf/spark.git

The following commit(s) were added to refs/heads/master by this push:
     new 9ffe23d8c92 [SPARK-39440][CORE][UI] Add a config to disable event timeline
9ffe23d8c92 is described below

commit 9ffe23d8c92c66871fad240c9ad7adf2428d09da
Author: Yuming Wang <[email protected]>
AuthorDate: Fri Jun 10 20:11:32 2022 -0700

[SPARK-39440][CORE][UI] Add a config to disable event timeline

### What changes were proposed in this pull request?

This PR introduces a config, `spark.ui.timelineEnabled`, to disable the event timeline on UI pages.

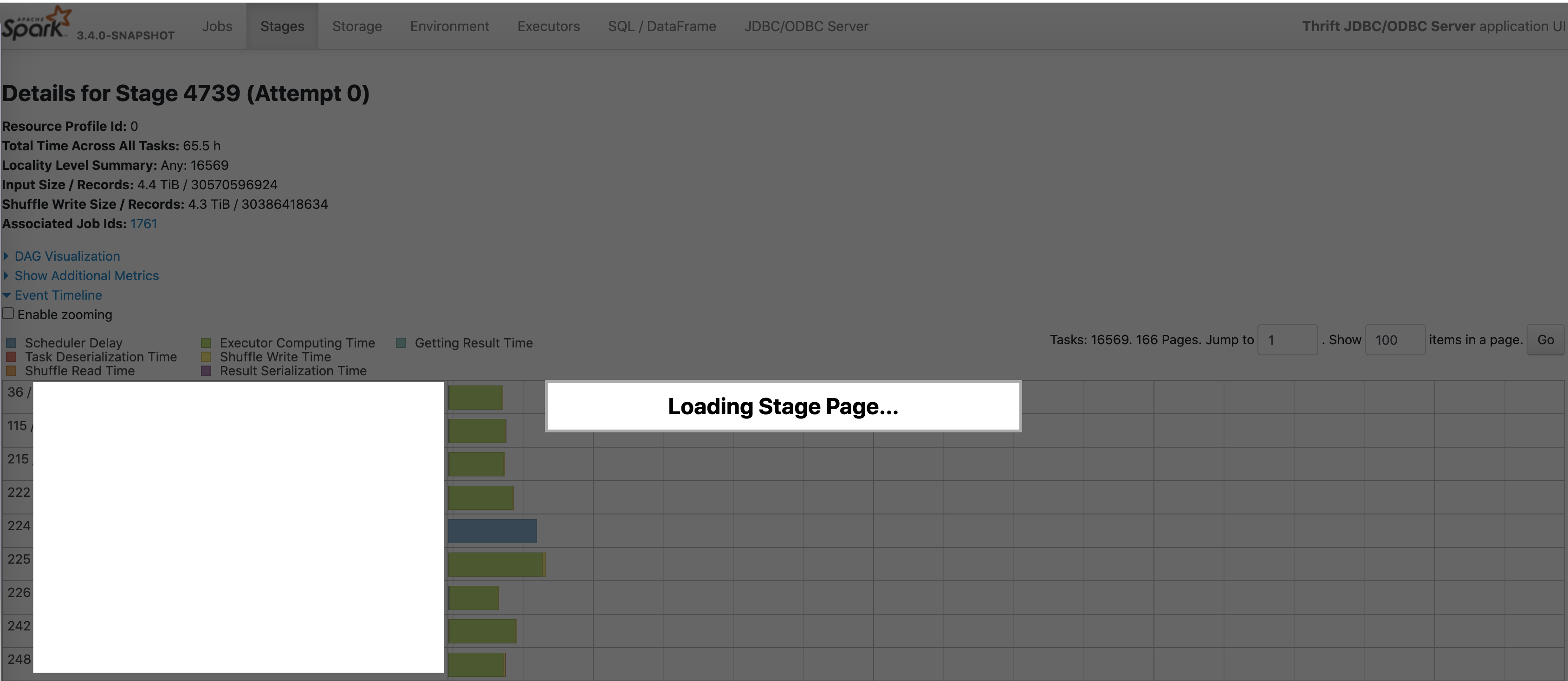
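The change follows a simple pattern: a boolean `spark.ui.timelineEnabled` entry that defaults to `true`, read once when each page is constructed, plus an early `return Seq.empty[Node]` at the top of each timeline-rendering method when it is `false`. The sketch below models that pattern in plain Scala without a Spark dependency. `TimelineToggleSketch`, `timelineEnabled`, and `renderTimeline` are hypothetical stand-ins, not Spark APIs: a `Map[String, String]` plays the role of `SparkConf`, and strings play the role of `scala.xml.Node`.

```scala
// Plain-Scala model of the toggle added in SPARK-39440. All names below are
// hypothetical stand-ins: a Map replaces SparkConf and String replaces xml.Node.
object TimelineToggleSketch {
  // Same key as the real config entry.
  val TimelineKey = "spark.ui.timelineEnabled"

  // An absent key yields true, mirroring `.booleanConf.createWithDefault(true)`.
  // A malformed value makes `toBoolean` throw IllegalArgumentException.
  def timelineEnabled(conf: Map[String, String]): Boolean =
    conf.get(TimelineKey).map(_.trim.toBoolean).getOrElse(true)

  // Stand-in for a page's makeTimeline(...): when the flag is off it returns
  // an empty sequence before doing any per-event work, like the patched pages.
  def renderTimeline(conf: Map[String, String], events: Seq[String]): Seq[String] = {
    if (!timelineEnabled(conf)) return Seq.empty[String]
    events.map(e => s"<div class='timeline-event'>$e</div>")
  }

  def main(args: Array[String]): Unit = {
    val events = Seq("job-0", "job-1")
    println(renderTimeline(Map.empty, events).size)                   // 2 (default: enabled)
    println(renderTimeline(Map(TimelineKey -> "false"), events).size) // 0 (disabled)
  }
}
```

In a real application the property is passed like any other Spark setting, for example `--conf spark.ui.timelineEnabled=false` on `spark-submit`. Because the pages read it into a `private val` at construction time, it has to be set before the `SparkContext` starts.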

### Why are the changes needed?

Speed up the UI. We usually don't care about the event timeline, so there is no need to render it on every page load.

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Manual and unit tests.

Closes #36833 from wangyum/SPARK-39440.

Authored-by: Yuming Wang <[email protected]>
Signed-off-by: Gengliang Wang <[email protected]>

---
 .../scala/org/apache/spark/internal/config/UI.scala  |  6 ++++++
 .../scala/org/apache/spark/ui/jobs/AllJobsPage.scala |  3 +++
 .../scala/org/apache/spark/ui/jobs/JobPage.scala     |  3 +++
 .../scala/org/apache/spark/ui/jobs/StagePage.scala   |  5 +++++
 .../scala/org/apache/spark/ui/UISeleniumSuite.scala  | 20 ++++++++++++++++++++
 docs/configuration.md                                |  8 ++++++++
 6 files changed, 45 insertions(+)

diff --git a/core/src/main/scala/org/apache/spark/internal/config/UI.scala b/core/src/main/scala/org/apache/spark/internal/config/UI.scala
index 464034b8fcd..d09620b8e34 100644
--- a/core/src/main/scala/org/apache/spark/internal/config/UI.scala
+++ b/core/src/main/scala/org/apache/spark/internal/config/UI.scala
@@ -129,6 +129,12 @@ private[spark] object UI {
     .bytesConf(ByteUnit.BYTE)
     .createWithDefaultString("8k")
 
+  val UI_TIMELINE_ENABLED = ConfigBuilder("spark.ui.timelineEnabled")
+    .doc("Whether to display event timeline data on UI pages.")
+    .version("3.4.0")
+    .booleanConf
+    .createWithDefault(true)
+
   val UI_TIMELINE_TASKS_MAXIMUM = ConfigBuilder("spark.ui.timeline.tasks.maximum")
     .version("1.4.0")
     .intConf

diff --git a/core/src/main/scala/org/apache/spark/ui/jobs/AllJobsPage.scala b/core/src/main/scala/org/apache/spark/ui/jobs/AllJobsPage.scala
index ae0e4728a9e..f4fe468bd93 100644
--- a/core/src/main/scala/org/apache/spark/ui/jobs/AllJobsPage.scala
+++ b/core/src/main/scala/org/apache/spark/ui/jobs/AllJobsPage.scala
@@ -41,6 +41,7 @@ private[ui] class AllJobsPage(parent: JobsTab, store: AppStatusStore) extends We
 
   import ApiHelper._
 
+  private val TIMELINE_ENABLED = parent.conf.get(UI_TIMELINE_ENABLED)
   private val MAX_TIMELINE_JOBS = parent.conf.get(UI_TIMELINE_JOBS_MAXIMUM)
   private val MAX_TIMELINE_EXECUTORS = parent.conf.get(UI_TIMELINE_EXECUTORS_MAXIMUM)
@@ -174,6 +175,8 @@ private[ui] class AllJobsPage(parent: JobsTab, store: AppStatusStore) extends We
       executors: Seq[v1.ExecutorSummary],
       startTime: Long): Seq[Node] = {
+    if (!TIMELINE_ENABLED) return Seq.empty[Node]
+
     val jobEventJsonAsStrSeq = makeJobEvent(jobs)
     val executorEventJsonAsStrSeq = makeExecutorEvent(executors)

diff --git a/core/src/main/scala/org/apache/spark/ui/jobs/JobPage.scala b/core/src/main/scala/org/apache/spark/ui/jobs/JobPage.scala
index 1de000bbdd8..bc821ec1858 100644
--- a/core/src/main/scala/org/apache/spark/ui/jobs/JobPage.scala
+++ b/core/src/main/scala/org/apache/spark/ui/jobs/JobPage.scala
@@ -35,6 +35,7 @@ import org.apache.spark.ui._
 /** Page showing statistics and stage list for a given job */
 private[ui] class JobPage(parent: JobsTab, store: AppStatusStore) extends WebUIPage("job") {
+  private val TIMELINE_ENABLED = parent.conf.get(UI_TIMELINE_ENABLED)
   private val MAX_TIMELINE_STAGES = parent.conf.get(UI_TIMELINE_STAGES_MAXIMUM)
   private val MAX_TIMELINE_EXECUTORS = parent.conf.get(UI_TIMELINE_EXECUTORS_MAXIMUM)
@@ -154,6 +155,8 @@ private[ui] class JobPage(parent: JobsTab, store: AppStatusStore) extends WebUIP
       executors: Seq[v1.ExecutorSummary],
       appStartTime: Long): Seq[Node] = {
+    if (!TIMELINE_ENABLED) return Seq.empty[Node]
+
     val stageEventJsonAsStrSeq = makeStageEvent(stages)
     val executorsJsonAsStrSeq = makeExecutorEvent(executors)

diff --git a/core/src/main/scala/org/apache/spark/ui/jobs/StagePage.scala b/core/src/main/scala/org/apache/spark/ui/jobs/StagePage.scala
index fe99d635a60..3f92719aca9 100644
--- a/core/src/main/scala/org/apache/spark/ui/jobs/StagePage.scala
+++ b/core/src/main/scala/org/apache/spark/ui/jobs/StagePage.scala
@@ -39,6 +39,8 @@ import org.apache.spark.util.Utils
 
 private[ui] class StagePage(parent: StagesTab, store: AppStatusStore) extends WebUIPage("stage") {
   import ApiHelper._
+  private val TIMELINE_ENABLED = parent.conf.get(UI_TIMELINE_ENABLED)
+
   private val TIMELINE_LEGEND = {
     <div class="legend-area">
       <svg>
@@ -253,6 +255,9 @@ private[ui] class StagePage(parent: StagesTab, store: AppStatusStore) extends We
       stageId: Int,
       stageAttemptId: Int,
       totalTasks: Int): Seq[Node] = {
+
+    if (!TIMELINE_ENABLED) return Seq.empty[Node]
+
     val executorsSet = new HashSet[(String, String)]
     var minLaunchTime = Long.MaxValue
     var maxFinishTime = Long.MinValue

diff --git a/core/src/test/scala/org/apache/spark/ui/UISeleniumSuite.scala b/core/src/test/scala/org/apache/spark/ui/UISeleniumSuite.scala
index 015f299fc6b..47fffa0572b 100644
--- a/core/src/test/scala/org/apache/spark/ui/UISeleniumSuite.scala
+++ b/core/src/test/scala/org/apache/spark/ui/UISeleniumSuite.scala
@@ -109,6 +109,7 @@ class UISeleniumSuite extends SparkFunSuite with WebBrowser with Matchers with B
    */
   private def newSparkContext(
       killEnabled: Boolean = true,
+      timelineEnabled: Boolean = true,
       master: String = "local",
       additionalConfs: Map[String, String] = Map.empty): SparkContext = {
     val conf = new SparkConf()
@@ -117,6 +118,7 @@ class UISeleniumSuite extends SparkFunSuite with WebBrowser with Matchers with B
       .set(UI_ENABLED, true)
       .set(UI_PORT, 0)
       .set(UI_KILL_ENABLED, killEnabled)
+      .set(UI_TIMELINE_ENABLED, timelineEnabled)
       .set(MEMORY_OFFHEAP_SIZE.key, "64m")
     additionalConfs.foreach { case (k, v) => conf.set(k, v) }
     val sc = new SparkContext(conf)
@@ -800,6 +802,24 @@ class UISeleniumSuite extends SparkFunSuite with WebBrowser with Matchers with B
     }
   }
 
+  test("Support disable event timeline") {
+    Seq(true, false).foreach { timelineEnabled =>
+      withSpark(newSparkContext(timelineEnabled = timelineEnabled)) { sc =>
+        sc.range(1, 3).collect()
+        eventually(timeout(10.seconds), interval(50.milliseconds)) {
+          goToUi(sc, "/jobs")
+          assert(findAll(className("expand-application-timeline")).nonEmpty === timelineEnabled)
+
+          goToUi(sc, "/jobs/job/?id=0")
+          assert(findAll(className("expand-job-timeline")).nonEmpty === timelineEnabled)
+
+          goToUi(sc, "/stages/stage/?id=0&attempt=0")
+          assert(findAll(className("expand-task-assignment-timeline")).nonEmpty === timelineEnabled)
+        }
+      }
+    }
+  }
+
   def goToUi(sc: SparkContext, path: String): Unit = {
     goToUi(sc.ui.get, path)
   }

diff --git a/docs/configuration.md b/docs/configuration.md
index 22f16b8132e..a6d2a8b9d52 100644
--- a/docs/configuration.md
+++ b/docs/configuration.md
@@ -1437,6 +1437,14 @@ Apart from these, the following properties are also available, and may be useful
   </td>
   <td>2.2.3</td>
 </tr>
+<tr>
+  <td><code>spark.ui.timelineEnabled</code></td>
+  <td>true</td>
+  <td>
+    Whether to display event timeline data on UI pages.
+  </td>
+  <td>3.4.0</td>
+</tr>
 <tr>
   <td><code>spark.ui.timeline.executors.maximum</code></td>
   <td>250</td>
