[

https://issues.apache.org/jira/browse/HDFS-16316?focusedWorklogId=727458&page=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-727458

]

ASF GitHub Bot logged work on HDFS-16316:

-----------------------------------------

Author: ASF GitHub Bot

Created on: 15/Feb/22 18:52

Start Date: 15/Feb/22 18:52

Worklog Time Spent: 10m

Work Description: jianghuazhu removed a comment on pull request #3861:

URL: https://github.com/apache/hadoop/pull/3861#issuecomment-1039826045

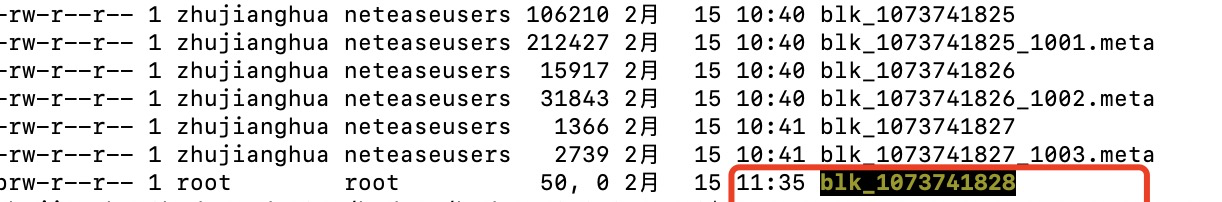

Here are some examples from online clusters.

We constructed a block device file, such as:

This file is not a regular file.

DirectoryScanner finds this kind of file while it is running.

log:

`

2022-02-15 11:24:10,286 WARN org.apache.hadoop.hdfs.server.datanode.fsdataset.impl.FsDatasetImpl: Block:1073741828 is not a regular file.

`

`

2022-02-15 11:24:10,286 WARN org.apache.hadoop.hdfs.server.datanode.fsdataset.impl.FsDatasetImpl: Reporting the block blk_1073741828_0 as corrupt due to length mismatch

`
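
To make the first warning concrete, here is a minimal sketch of a regular-file check. It is not the FsDatasetImpl code from the pull request; the class name `BlockFileCheck` and the method `isRegularBlockFile` are hypothetical, and a directory stands in for the block device file because creating a real device node needs root privileges.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.LinkOption;
import java.nio.file.Path;

/** Illustrative only: NOT the actual FsDatasetImpl logic from the pull request. */
final class BlockFileCheck {

    /**
     * Returns true only if the path exists and is a regular file.
     * Symbolic links are not followed, so a link to a device or a
     * directory is rejected as well.
     */
    static boolean isRegularBlockFile(Path blockFile) {
        return Files.isRegularFile(blockFile, LinkOption.NOFOLLOW_LINKS);
    }

    public static void main(String[] args) throws IOException {
        Path dir = Files.createTempDirectory("blockcheck");
        Path regular = Files.createFile(dir.resolve("blk_1"));
        // Assumption: a directory plays the role of the non-regular "block" here.
        Path notRegular = Files.createDirectory(dir.resolve("blk_2"));

        for (Path p : new Path[] {regular, notRegular}) {
            if (!isRegularBlockFile(p)) {
                System.out.println("Block file " + p.getFileName()
                        + " is not a regular file.");
            }
        }
    }
}
```

A block device, character device, FIFO, or directory would all fail this check in the same way, which is the property the scanner needs.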

The DataNode then reports these invalid blocks to the NameNode

through NameNodeRpcServer#reportBadBlocks(). Once the NameNode receives the

report, it processes the blocks further.

After a period of time, the DataNode automatically cleans up the

invalid replica data.

Could you help review this PR again, @jojochuang?

Thank you so much.

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: common-issues-unsubscr...@hadoop.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

Issue Time Tracking

-------------------

Worklog Id: (was: 727458)

Time Spent: 3h 10m (was: 3h)

> Improve DirectoryScanner: add regular file check related block

> --------------------------------------------------------------

>

> Key: HDFS-16316

> URL: https://issues.apache.org/jira/browse/HDFS-16316

> Project: Hadoop HDFS

> Issue Type: Bug

> Components: datanode

> Affects Versions: 2.9.2

> Reporter: JiangHua Zhu

> Assignee: JiangHua Zhu

> Priority: Major

> Labels: pull-request-available

> Attachments: screenshot-1.png, screenshot-2.png, screenshot-3.png,

> screenshot-4.png

>

> Time Spent: 3h 10m

> Remaining Estimate: 0h

>

> Something unusual happened in the online environment.

> The DataNode is configured with 11 disks (${dfs.datanode.data.dir}). The

> used capacity computed for 10 of the disks is normal, but the value computed

> for the remaining disk is much larger, which is very strange.

> This is about the live view on the NameNode:

> !screenshot-1.png!

> This is about the live view on the DataNode:

> !screenshot-2.png!

> We can look at the view on linux:

> !screenshot-3.png!

> There is a big gap here regarding '/mnt/dfs/11/data'. This situation should

> not be allowed to happen.

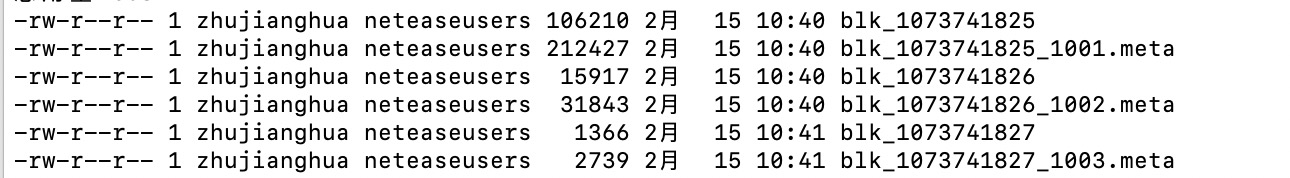

> I found that there are some abnormal block files.

> Some subdir directories contain invalid blk_xxxx.meta files, which cause the

> used space to be computed incorrectly.

> Here are some abnormal block files:

> !screenshot-4.png!

> Such files should not be treated as normal blocks. They should be actively

> identified and filtered out, which improves cluster stability.
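
The filtering the description asks for can be sketched as follows. This is only an illustration under stated assumptions, not how the DataNode computes used capacity: the class `UsedSpaceScan`, its `scan` method, and the rule of matching names that start with `blk_` are all hypothetical. The sketch sums the sizes of regular block and meta files only and collects everything else for reporting.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.LinkOption;
import java.nio.file.Path;
import java.nio.file.attribute.BasicFileAttributes;
import java.util.ArrayList;
import java.util.List;
import java.util.stream.Stream;

/** Illustrative only: NOT the DataNode's real used-space accounting. */
final class UsedSpaceScan {

    /** Bytes counted from regular files, plus the entries that were filtered out. */
    static final class Result {
        long usedBytes;
        final List<Path> skipped = new ArrayList<>();
    }

    /** Walks dir and counts only regular files whose name starts with "blk_". */
    static Result scan(Path dir) throws IOException {
        Result result = new Result();
        try (Stream<Path> entries = Files.walk(dir)) {
            for (Path p : (Iterable<Path>) entries::iterator) {
                if (!p.getFileName().toString().startsWith("blk_")) {
                    continue; // not a block or meta entry (assumed naming rule)
                }
                BasicFileAttributes attrs = Files.readAttributes(
                        p, BasicFileAttributes.class, LinkOption.NOFOLLOW_LINKS);
                if (attrs.isRegularFile()) {
                    result.usedBytes += attrs.size();
                } else {
                    result.skipped.add(p); // abnormal entry: report, do not count
                }
            }
        }
        return result;
    }

    public static void main(String[] args) throws IOException {
        Path subdir = Files.createDirectories(
                Files.createTempDirectory("dn").resolve("subdir0"));
        Files.write(subdir.resolve("blk_100"), new byte[5]);
        Files.write(subdir.resolve("blk_100_1.meta"), new byte[3]);
        // Assumption: a directory stands in for an abnormal block entry.
        Files.createDirectory(subdir.resolve("blk_200"));

        Result r = scan(subdir);
        System.out.println("used bytes: " + r.usedBytes);
        System.out.println("skipped: " + r.skipped);
    }
}
```

Keeping the skipped entries in a list, rather than silently ignoring them, lets the caller log or report them, which matches the goal of actively identifying such files.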

--

This message was sent by Atlassian Jira

(v8.20.1#820001)
