LuciferYang commented on PR #43526:

URL: https://github.com/apache/spark/pull/43526#issuecomment-1822026592

> > Thank you very much @laglangyue, I will review this PR as soon as possible

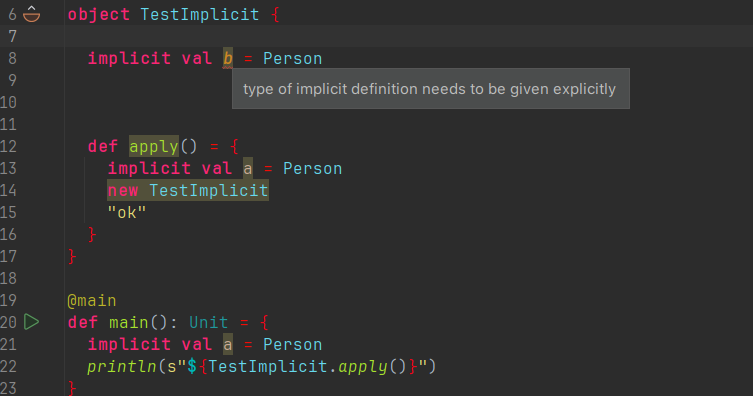

> > > > I created a new project, tested the code below, and ran `sbt compile`; it does not cause an error, so why does it cause an error for Spark? I also tested it in Scala 3: it compiles successfully there too, with no warnings. Admittedly, the Scala 3 community recommends using `using` and `given` instead of implicits, while retaining implicits for now and removing them in the future. I don't know how to compile Spark for Scala 3. Should I replace the Scala-related dependencies and compile them?

> > >

> > >

> > > ```scala
> > > Welcome to Scala 3.3.1 (17.0.8, Java OpenJDK 64-Bit Server VM).
> > > Type in expressions for evaluation. Or try :help.
> > >
> > > scala> implicit val ix = 1
> > > -- Error: ----------------------------------------------------------------------
> > > 1 |implicit val ix = 1
> > > | ^
> > > | type of implicit definition needs to be given explicitly
> > > 1 error found
> > > ```

> > >

> > > But why does the above statement report an error in Scala 3.3.1?

> >

> >

> > Additionally, if this is confirmed, we should mention this in the PR description.

> > also cc @srowen FYI

>

> It is OK to use an implicit within a method without declaring its type, but not for global variables

>

>

So, was it my testing approach in the Scala shell that was incorrect, or is there another reason we came to different conclusions?
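
For reference, here is a minimal sketch of the distinction described in the quoted reply. It assumes the Scala 3 rule that only implicit values defined in local blocks may omit their type. The names `ImplicitScopeDemo`, `MemberImplicit`, `needsInt`, and `localInferred` are hypothetical and not taken from the PR. If the Scala shell treats each input line as a non-local definition (an assumption, not verified here), that would be consistent with the error shown above.

```scala
// Hypothetical names; a sketch of local vs. non-local implicit definitions.
object ImplicitScopeDemo {
  // Takes its argument from the implicit scope of the caller.
  def needsInt(implicit n: Int): Int = n + 1

  def localInferred(): Int = {
    // Local definition inside a method body: the type may be inferred.
    implicit val local = 41
    needsInt
  }
}

object MemberImplicit {
  // Member (non-local) definition: Scala 3 expects an explicit type here.
  // Writing `implicit val ix = 1` at this level is what Scala 3 rejects with
  // "type of implicit definition needs to be given explicitly".
  implicit val ix: Int = 1
}
```

Brace syntax is used so that the same sketch should also compile under Scala 2.13, where the untyped member form is accepted as well.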
