Github user wuxianxingkong commented on a diff in the pull request:

https://github.com/apache/spark/pull/14191#discussion_r71067768

--- Diff:

sql/catalyst/src/main/antlr4/org/apache/spark/sql/catalyst/parser/SqlBase.g4 ---

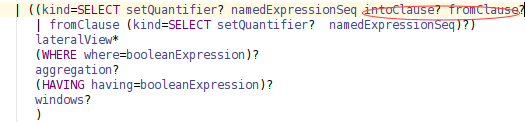

@@ -338,7 +338,7 @@ querySpecification

(RECORDREADER recordReader=STRING)?

fromClause?

(WHERE where=booleanExpression)?)

- | ((kind=SELECT setQuantifier? namedExpressionSeq fromClause?

+ | ((kind=SELECT setQuantifier? namedExpressionSeq (intoClause?

fromClause)?

--- End diff --

At first, I modify grammar:

But this affects the multiInsertQueryBody rule, for example:

```sql
FROM OLD_TABLE
INSERT INTO T1
SELECT C1
INSERT INTO T2
SELECT C2
```

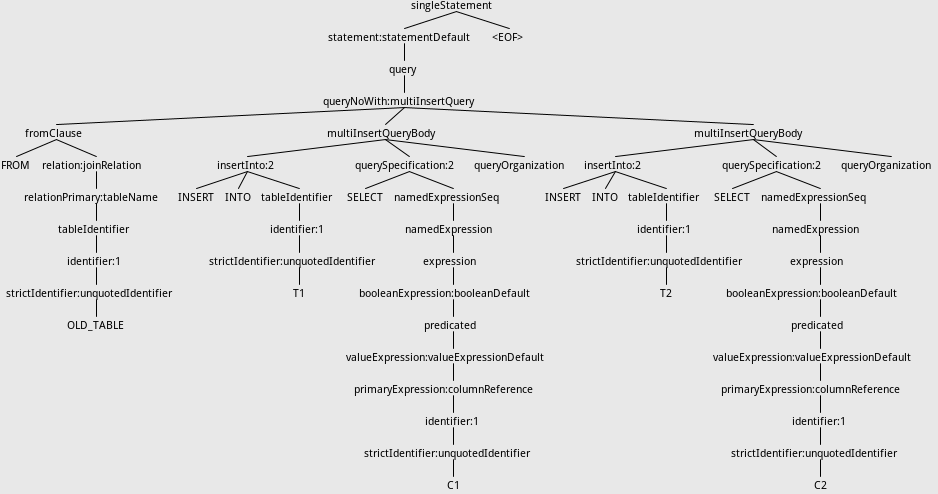

The Syntax tree before adding intoClause is:

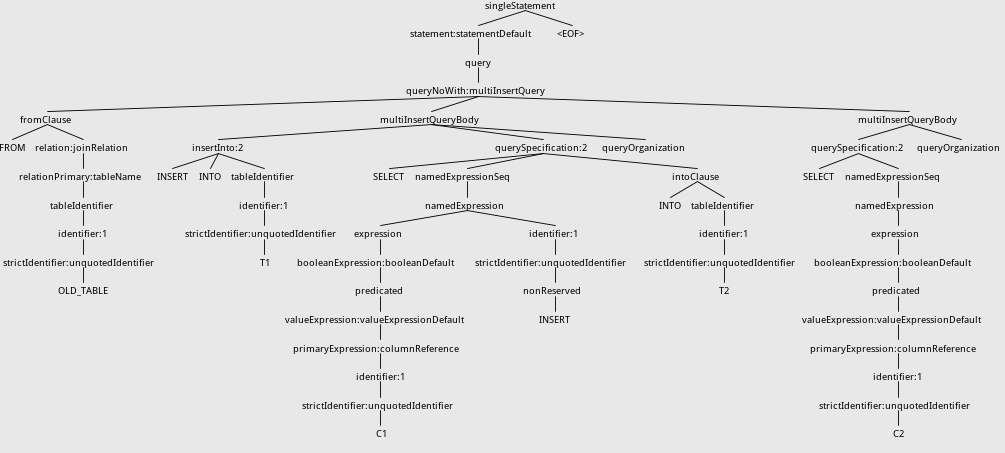

After adding intoClause ,the tree will be:

This is because INSERT is a non-reserved keyword (so it can also be matched as an identifier), combined with ANTLR's matching strategy.
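
The following is a minimal sketch, in Python, of how a non-reserved INSERT can be swallowed by an optional alias. It is a greedy toy parser, not ANTLR (which uses adaptive lookahead) and not Spark's SqlBase.g4: the rule shape SELECT expr [alias] [INTO table], the RESERVED set and the names is_identifier and parse_select are hypothetical simplifications, and fromClause is ignored entirely.

```python
# Toy model only, NOT Spark's SqlBase.g4 or ANTLR. In this toy, only these
# keywords are reserved; INSERT is deliberately left out, i.e. non-reserved.
RESERVED = {"SELECT", "FROM", "INTO"}


def is_identifier(token):
    """Non-reserved keywords such as INSERT are accepted as identifiers."""
    return token not in RESERVED


def parse_select(tokens, pos):
    """Greedily parse the hypothetical rule SELECT expr [alias] [INTO table].

    Returns the parsed node and the position of the first unconsumed token.
    """
    if tokens[pos] != "SELECT":
        raise ValueError("expected SELECT at position %d" % pos)
    node = {"expr": tokens[pos + 1], "alias": None, "into": None}
    pos += 2
    # Optional alias: taken whenever the next token can be an identifier.
    if pos < len(tokens) and is_identifier(tokens[pos]):
        node["alias"] = tokens[pos]
        pos += 1
    # Optional INTO clause: the newly added part of the toy rule.
    if pos + 1 < len(tokens) and tokens[pos] == "INTO":
        node["into"] = tokens[pos + 1]
        pos += 2
    return node, pos


# Body of the first INSERT in the multi-insert query, followed by the rest.
tokens = "SELECT C1 INSERT INTO T2 SELECT C2".split()
node, rest = parse_select(tokens, 0)
print(node)           # {'expr': 'C1', 'alias': 'INSERT', 'into': 'T2'}
print(tokens[rest:])  # ['SELECT', 'C2']
```

In this toy, the first SELECT ends up with INSERT as its alias and T2 as its INTO target, leaving SELECT C2 without a preceding INSERT INTO.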

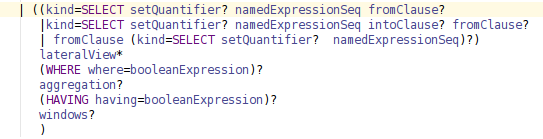

One of the ways I can think of is to change grammar like this:

This solves the problem because, when the input is ambiguous, the ANTLR parser chooses the alternative specified first.
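
ANTLR 4 resolves a genuine ambiguity in favor of the alternative listed first. The Python sketch below models only that tie-break, under stated assumptions: plain_select and select_into are hypothetical stand-ins for two overlapping querySpecification alternatives (not the grammar proposed here), and choose simply collects every alternative that matches the whole input and returns the first one listed. ANTLR's real adaptive prediction is far more involved.

```python
# Toy model only, NOT Spark's SqlBase.g4. The two functions below are
# hypothetical stand-ins for two overlapping querySpecification alternatives.
RESERVED = {"SELECT", "FROM", "INTO"}


def plain_select(tokens):
    """Alternative 1: SELECT expr [alias], matching the whole token list."""
    return (
        len(tokens) in (2, 3)
        and tokens[0] == "SELECT"
        and all(t not in RESERVED for t in tokens[1:])
    )


def select_into(tokens):
    """Alternative 2: SELECT expr [alias] [INTO table], matching the whole token list."""
    if len(tokens) >= 2 and tokens[-2] == "INTO" and tokens[-1] not in RESERVED:
        tokens = tokens[:-2]  # strip the optional INTO clause
    return plain_select(tokens)


def choose(alternatives, tokens):
    """Collect every matching alternative and break ties by listing order."""
    viable = [alt.__name__ for alt in alternatives if alt(tokens)]
    return (viable[0] if viable else None), viable


ALTERNATIVES = [plain_select, select_into]
print(choose(ALTERNATIVES, "SELECT 1".split()))
# ('plain_select', ['plain_select', 'select_into'])
print(choose(ALTERNATIVES, "SELECT 1 INTO newtable".split()))
# ('select_into', ['select_into'])
```

With this ordering, SELECT 1 matches both alternatives and the first listed one wins, while SELECT 1 INTO newtable matches only the second. The overlap on plain SELECT is the kind of duplication that makes such a rule harder to read.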

By the way, the grammar can now also parse "SELECT 1 INTO newtable".

But the duplication makes the querySpecification rule confusing. Is there any way to solve this problem? Thanks.
