GitHub user sujith71955 opened a pull request:

https://github.com/apache/spark/pull/22572

[SPARK-25521][SQL]Job id showing null in the logs when insert into command Job is finished.

## What changes were proposed in this pull request?

As part of the insert command in FileFormatWriter, a job context is created to handle the write operation. While initializing the job context, the setupJob() API in HadoopMapReduceCommitProtocol sets the job ID in the JobContext configuration. Because we currently read the job ID directly from the MapReduce JobContext rather than from its configuration, the job ID appears as null in the logs. As a solution, we get the job ID from the configuration of the MapReduce JobContext.
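The mismatch described above can be sketched in isolation. The following is a minimal illustration only, not the code in this patch: `StubJobContext` is a hypothetical stand-in for the Hadoop JobContext (so the example runs without Hadoop on the classpath), the log message text is made up, and the key name `mapreduce.job.id` is an assumption about which configuration entry setupJob() populates.

```java
import java.util.HashMap;
import java.util.Map;

public class JobIdLogSketch {

    // Assumed configuration key; the PR description does not name it.
    static final String JOB_ID_KEY = "mapreduce.job.id";

    /** Hypothetical stand-in for a MapReduce job context (not the real Hadoop class). */
    static class StubJobContext {
        private final Map<String, String> configuration = new HashMap<>();
        // Never assigned, mirroring the reported symptom of a null job ID.
        private final String jobId = null;

        Map<String, String> getConfiguration() { return configuration; }
        String getJobID() { return jobId; }
    }

    /** Mimics a commit protocol that records the generated ID only in the configuration. */
    static void setupJob(StubJobContext context, String generatedId) {
        context.getConfiguration().put(JOB_ID_KEY, generatedId);
    }

    /** Reads the ID from the context itself: yields "null" in the message. */
    static String messageBefore(StubJobContext context) {
        return "Job " + context.getJobID() + " committed.";
    }

    /** Reads the ID from the context's configuration, where setupJob stored it. */
    static String messageAfter(StubJobContext context) {
        return "Job " + context.getConfiguration().get(JOB_ID_KEY) + " committed.";
    }

    public static void main(String[] args) {
        StubJobContext context = new StubJobContext();
        setupJob(context, "job_20180927153351_0000"); // illustrative ID value
        System.out.println(messageBefore(context));   // prints "Job null committed."
        System.out.println(messageAfter(context));    // prints "Job job_20180927153351_0000 committed."
    }
}
```

In this sketch the only difference between the two messages is where the ID is read from, which is the change the description proposes.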

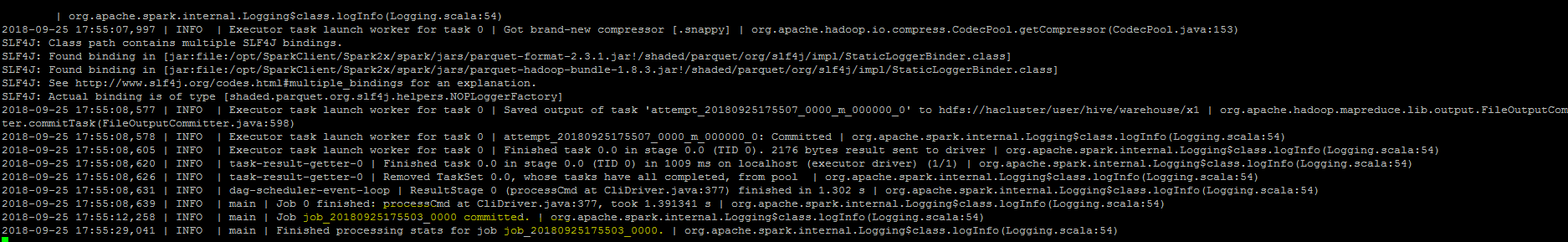

## How was this patch tested?

Manually verified the logs after the changes.

You can merge this pull request into a Git repository by running:

$ git pull https://github.com/sujith71955/spark master_log_issue

Alternatively you can review and apply these changes as the patch at:

https://github.com/apache/spark/pull/22572.patch

To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message:

This closes #22572

----

commit 23a1b063e8317b2422acdc05a4635fce14b2bc49

Author: s71955 <sujithchacko.2010@...>

Date: 2018-09-27T15:33:51Z

[SPARK-25521][SQL]Job id showing null in the logs when insert into command Job is finished.

## What changes were proposed in this pull request?

As part of the insert command in FileFormatWriter, a job context is created to handle the write operation. While initializing the job context, the setupJob() API in HadoopMapReduceCommitProtocol sets the job ID in the JobContext configuration. Because we currently read the job ID directly from the MapReduce JobContext rather than from its configuration, the job ID appears as null in the logs. As a solution, we get the job ID from the configuration of the MapReduce JobContext.

## How was this patch tested?

Manually verified the logs after the changes.

----
