[GitHub] [airflow] ephraimbuddy closed pull request #17324: Improve `dag_maker` fixture

ephraimbuddy closed pull request #17324: URL: https://github.com/apache/airflow/pull/17324 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@airflow.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [airflow] ManiBharataraju edited a comment on issue #17176: TriggerDagRunOperator triggering subdags out of order

ManiBharataraju edited a comment on issue #17176: URL: https://github.com/apache/airflow/issues/17176#issuecomment-890295058 @enriqueayala - We created a custom TriggerDagRunOperator along with the changes in trigger_dag.py. I just copied the TriggerDagRunOperator code and the trigger_dag.py code and made the one change that I have mentioned. That worked. But I am unsure why that was done, or whether it is the real cause of this issue. As a temporary fix, you can do that. With subdags having too many issues, we are planning to use TaskGroups as a long-term fix.

[GitHub] [airflow] ManiBharataraju commented on issue #17176: TriggerDagRunOperator triggering subdags out of order

ManiBharataraju commented on issue #17176: URL: https://github.com/apache/airflow/issues/17176#issuecomment-890295058 @enriqueayala - We created a custom TriggerDagRunOperator along with the changes in trigger_dag.py. I just copied the TriggerDagRunOperator code and the trigger_dag.py code and made the one change that I have mentioned. That worked. But I am unsure why that was done, or whether it is the real cause of this issue. As a temporary fix, you can do that.

[airflow] branch main updated: Handle connection parameters added to Extra and custom fields (#17269)

This is an automated email from the ASF dual-hosted git repository.

uranusjr pushed a commit to branch main

in repository https://gitbox.apache.org/repos/asf/airflow.git

The following commit(s) were added to refs/heads/main by this push:

new 1941f94 Handle connection parameters added to Extra and custom fields (#17269)

1941f94 is described below

commit 1941f9486e72b9c70654ea9aa285d566239f6ba1

Author: josh-fell <48934154+josh-f...@users.noreply.github.com>

AuthorDate: Sat Jul 31 01:35:12 2021 -0400

Handle connection parameters added to Extra and custom fields (#17269)

---

airflow/www/views.py | 22 ++

tests/www/views/test_views_connection.py | 52

2 files changed, 74 insertions(+)

diff --git a/airflow/www/views.py b/airflow/www/views.py
index 8309d99..3769c1a 100644
--- a/airflow/www/views.py
+++ b/airflow/www/views.py
@@ -3179,11 +3179,33 @@ class ConnectionModelView(AirflowModelView):
     def process_form(self, form, is_created):
         """Process form data."""
         conn_type = form.data['conn_type']
+        conn_id = form.data["conn_id"]
         extra = {
             key: form.data[key]
             for key in self.extra_fields
             if key in form.data and key.startswith(f"extra__{conn_type}__")
         }
+
+        # If parameters are added to the classic `Extra` field, include these values along with
+        # custom-field extras.
+        extra_conn_params = form.data.get("extra")
+
+        if extra_conn_params:
+            try:
+                extra.update(json.loads(extra_conn_params))
+            except (JSONDecodeError, TypeError):
+                flash(
+                    Markup(
+                        "The Extra connection field contained an invalid value for Conn ID: "
+                        f"{conn_id}."
+                        "If connection parameters need to be added to Extra, "
+                        "please make sure they are in the form of a single, valid JSON object."
+                        "The following Extra parameters were not added to the connection:"
+                        f"{extra_conn_params}",
+                    ),
+                    category="error",
+                )
+
         if extra.keys():
             form.extra.data = json.dumps(extra)

diff --git a/tests/www/views/test_views_connection.py b/tests/www/views/test_views_connection.py
index 5576977..249bf2a 100644
--- a/tests/www/views/test_views_connection.py
+++ b/tests/www/views/test_views_connection.py
@@ -15,6 +15,7 @@
 # KIND, either express or implied. See the License for the
 # specific language governing permissions and limitations
 # under the License.
+import json
 from unittest import mock
 import pytest
@@ -56,6 +57,57 @@ def test_prefill_form_null_extra():
     cmv.prefill_form(form=mock_form, pk=1)
+def test_process_form_extras():
+    """
+    Test the handling of connection parameters set with the classic `Extra` field as well as custom fields.
+    """
+
+    # Testing parameters set in both `Extra` and custom fields.
+    mock_form = mock.Mock()
+    mock_form.data = {
+        "conn_type": "test",
+        "conn_id": "extras_test",
+        "extra": '{"param1": "param1_val"}',
+        "extra__test__custom_field": "custom_field_val",
+    }
+
+    cmv = ConnectionModelView()
+    cmv.extra_fields = ["extra__test__custom_field"]  # Custom field
+    cmv.process_form(form=mock_form, is_created=True)
+
+    assert json.loads(mock_form.extra.data) == {
+        "extra__test__custom_field": "custom_field_val",
+        "param1": "param1_val",
+    }
+
+    # Testing parameters set in `Extra` field only.
+    mock_form = mock.Mock()
+    mock_form.data = {
+        "conn_type": "test2",
+        "conn_id": "extras_test2",
+        "extra": '{"param2": "param2_val"}',
+    }
+
+    cmv = ConnectionModelView()
+    cmv.process_form(form=mock_form, is_created=True)
+
+    assert json.loads(mock_form.extra.data) == {"param2": "param2_val"}
+
+    # Testing parameters set in custom fields only.
+    mock_form = mock.Mock()
+    mock_form.data = {
+        "conn_type": "test3",
+        "conn_id": "extras_test3",
+        "extra__test3__custom_field": "custom_field_val3",
+    }
+
+    cmv = ConnectionModelView()
+    cmv.extra_fields = ["extra__test3__custom_field"]  # Custom field
+    cmv.process_form(form=mock_form, is_created=True)
+
+    assert json.loads(mock_form.extra.data) == {"extra__test3__custom_field": "custom_field_val3"}
+
+
 def test_duplicate_connection(admin_client):
     """Test Duplicate multiple connection with suffix"""
     conn1 = Connection(

[GitHub] [airflow] uranusjr merged pull request #17269: Handle connection parameters added to Extra and custom fields

uranusjr merged pull request #17269: URL: https://github.com/apache/airflow/pull/17269
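The behaviour merged in #17269 can be sketched outside Airflow as a plain function. This is a minimal illustration only: `merge_extras` and its return shape are hypothetical and not part of Airflow. It follows the logic visible in the diff, where custom-field extras are collected first and a valid JSON object from the classic `Extra` field is merged on top; instead of flashing a message, the sketch simply returns the rejected value.

```python
import json
from json import JSONDecodeError


def merge_extras(form_data, extra_fields):
    """Hypothetical helper mirroring the merged logic; not an Airflow API.

    Returns (extra, invalid), where `invalid` holds the raw Extra value
    if it could not be merged, and None otherwise.
    """
    conn_type = form_data["conn_type"]
    prefix = f"extra__{conn_type}__"

    # Collect custom-field extras that belong to this connection type.
    extra = {
        key: form_data[key]
        for key in extra_fields
        if key in form_data and key.startswith(prefix)
    }

    invalid = None
    raw = form_data.get("extra")
    if raw:
        try:
            # Merge parameters typed into the classic Extra field.
            extra.update(json.loads(raw))
        except (JSONDecodeError, TypeError):
            invalid = raw
    return extra, invalid
```

With both sources present, the result contains keys from each; an unparsable `Extra` value leaves the custom-field extras untouched.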

[GitHub] [airflow] uranusjr closed issue #17235: Connection inputs in Extra field are overwritten by custom form widget fields

uranusjr closed issue #17235: URL: https://github.com/apache/airflow/issues/17235

[GitHub] [airflow] uranusjr commented on pull request #17305: [Bug] Backfill job fails to run when there are tasks run into rescheduling state.

uranusjr commented on pull request #17305: URL: https://github.com/apache/airflow/pull/17305#issuecomment-890294285 Could you rebase this to the latest main? We had some disruptions in CI that caused the tests to fail in this PR.

[GitHub] [airflow] uranusjr commented on a change in pull request #17335: Fix typo in AUTOMATICALLY GENERATED marker

uranusjr commented on a change in pull request #17335:

URL: https://github.com/apache/airflow/pull/17335#discussion_r680309794

##

File path: dev/provider_packages/prepare_provider_packages.py

##

@@ -1694,9 +1694,8 @@ def replace_content(file_path, old_text, new_text, provider_package_id):
     os.remove(temp_file_path)
-AUTOMATICALLY_GENERATED_CONTENT = (
-    ".. THE REMINDER OF THE FILE IS AUTOMATICALLY GENERATED. IT WILL BE OVERWRITTEN AT RELEASE TIME!"
-)
+AUTOMATICALLY_GENERATED_MARKER = "AUTOMATICALLY GENERATED"
+AUTOMATICALLY_GENERATED_CONTENT = f".. THE REMAINDER OF THE FILE IS {AUTOMATICALLY_GENERATED_MARKER}. IT WILL BE OVERWRITTEN AT RELEASE TIME!"

Review comment:

```suggestion

AUTOMATICALLY_GENERATED_CONTENT = (
    f".. THE REMAINDER OF THE FILE IS {AUTOMATICALLY_GENERATED_MARKER}. "
    f"IT WILL BE OVERWRITTEN AT RELEASE TIME!"
)

```

Flake8 says this is too long.
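The suggestion relies on Python joining adjacent string literals inside parentheses, so the wrapped form produces the same value as the long one-liner. A small sketch, assuming a stand-in constant `MARKER` in place of `AUTOMATICALLY_GENERATED_MARKER`:

```python
MARKER = "AUTOMATICALLY GENERATED"  # stand-in for AUTOMATICALLY_GENERATED_MARKER

# Single long line, as in the original change.
one_line = f".. THE REMAINDER OF THE FILE IS {MARKER}. IT WILL BE OVERWRITTEN AT RELEASE TIME!"

# Wrapped form: adjacent literals inside parentheses are concatenated.
# Only the first fragment has a placeholder, so the second can be a plain string.
wrapped = (
    f".. THE REMAINDER OF THE FILE IS {MARKER}. "
    "IT WILL BE OVERWRITTEN AT RELEASE TIME!"
)
```

Both names are bound to the same text; only the source line length differs.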

[GitHub] [airflow] bravefoot opened a new pull request #17354: Add Docker Sensor

bravefoot opened a new pull request #17354: URL: https://github.com/apache/airflow/pull/17354

[GitHub] [airflow] uranusjr closed issue #17226: [QUARANTINE] Quarantine test_verify_integrity_if_dag_changed

uranusjr closed issue #17226: URL: https://github.com/apache/airflow/issues/17226

[GitHub] [airflow] uranusjr commented on issue #17226: [QUARANTINE] Quarantine test_verify_integrity_if_dag_changed

uranusjr commented on issue #17226: URL: https://github.com/apache/airflow/issues/17226#issuecomment-890292774 I’m assuming this can be closed since #17227 has been merged.

[GitHub] [airflow] uranusjr commented on issue #17012: NONE_FAILED_OR_SKIPPED and NONE_FAILED do the same

uranusjr commented on issue #17012: URL: https://github.com/apache/airflow/issues/17012#issuecomment-890292670 “Trigger this task if none (of the upstreams) failed or [were] skipped” reads reasonably to me, so IMO a rename is not necessary. But English is hard and also not my first language, so don’t take my word for this 🙂

[GitHub] [airflow] uranusjr commented on pull request #16634: Require can_edit on DAG privileges to modify TaskInstances and DagRuns

uranusjr commented on pull request #16634: URL: https://github.com/apache/airflow/pull/16634#issuecomment-890292297 CI seems to work now

[GitHub] [airflow] uranusjr commented on pull request #17209: ECSOperator returns last logs when ECS task fails

uranusjr commented on pull request #17209: URL: https://github.com/apache/airflow/pull/17209#issuecomment-890292216 (Forgot to comment when hitting submit: the implementation itself looks good to me, but the tests can be improved.)

[GitHub] [airflow] uranusjr commented on a change in pull request #17209: ECSOperator returns last logs when ECS task fails

uranusjr commented on a change in pull request #17209:

URL: https://github.com/apache/airflow/pull/17209#discussion_r680306905

##

File path: airflow/providers/amazon/aws/operators/ecs.py

##

@@ -136,13 +136,17 @@ class ECSOperator(BaseOperator):
         Only required if you want logs to be shown in the Airflow UI after your job has
         finished.
     :type awslogs_stream_prefix: str
+    :param quota_retry: Config if and how to retry _start_task() for transient errors.
+    :type quota_retry: dict
     :param reattach: If set to True, will check if the task previously launched by the task_instance
         is already running. If so, the operator will attach to it instead of starting a new task.
         This is to avoid relaunching a new task when the connection drops between Airflow and ECS while
         the task is running (when the Airflow worker is restarted for example).
     :type reattach: bool
-    :param quota_retry: Config if and how to retry _start_task() for transient errors.
-    :type quota_retry: dict
+    :param number_logs_exception: number of lines from the last Cloudwatch logs to return in the
+        AirflowException if an ECS task is stopped (to receive Airflow alerts with the logs of what
+        failed in the code running in ECS)

Review comment:

```suggestion

:param number_logs_exception: Number of lines from the last Cloudwatch logs to return in the
    AirflowException if an ECS task is stopped (to receive Airflow alerts with the logs of what
    failed in the code running in ECS).

```

Nit, the description should be a complete sentence.

##

File path: tests/providers/amazon/aws/operators/test_ecs.py

##

@@ -228,11 +229,11 @@ def test_check_success_tasks_raises(self):
         with pytest.raises(Exception) as ctx:
             self.ecs._check_success_task()
-        # Ordering of str(dict) is not guaranteed.
-        assert "This task is not in success state " in str(ctx.value)
-        assert "'name': 'foo'" in str(ctx.value)
-        assert "'lastStatus': 'STOPPED'" in str(ctx.value)
-        assert "'exitCode': 1" in str(ctx.value)
+        expected_last_logs = "\n".join(mock_last_log_messages.return_value)
+        assert (
+            str(ctx.value)
+            == f"This task is not in success state - last logs from Cloudwatch:\n{expected_last_logs}"
+        )

Review comment:

```suggestion

assert str(ctx.value) == (
    f"This task is not in success state - last logs from Cloudwatch:\n1\n2\n3\n4\n5"
)

```

Test code is encouraged to duplicate things, so the assertion call is as close to human perception as possible.
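To illustrate the point about literal expectations, here is a small sketch. `failure_message` is a hypothetical stand-in for the message the operator builds, not the real implementation; the log lines follow the `1` to `5` values in the suggestion.

```python
def failure_message(log_lines):
    """Hypothetical stand-in for the message built by the operator."""
    joined = "\n".join(log_lines)
    return f"This task is not in success state - last logs from Cloudwatch:\n{joined}"


logs = ["1", "2", "3", "4", "5"]

# Derived expectation: repeats the production logic, so a bug shared by
# both sides (for example a wrong separator) would go unnoticed.
derived = "This task is not in success state - last logs from Cloudwatch:\n" + "\n".join(logs)

# Literal expectation: spelled out exactly as a reader would see it.
literal = "This task is not in success state - last logs from Cloudwatch:\n1\n2\n3\n4\n5"
```

Comparing against `literal` makes the expected output visible at a glance without mentally running the join.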

##

File path: tests/providers/amazon/aws/operators/test_ecs.py

##

@@ -415,17 +416,17 @@ def test_reattach_save_task_arn_xcom(
         xcom_del_mock.assert_called_once()
         assert self.ecs.arn == 'arn:aws:ecs:us-east-1:012345678910:task/d8c67b3c-ac87-4ffe-a847-4785bc3a8b55'
-    @mock.patch.object(ECSOperator, '_last_log_message', return_value="Log output")
+    @mock.patch.object(ECSOperator, '_last_log_messages', return_value=["Log output"])
     def test_execute_xcom_with_log(self, mock_cloudwatch_log_message):
         self.ecs.do_xcom_push = True
-        assert self.ecs.execute(None) == mock_cloudwatch_log_message.return_value
+        assert self.ecs.execute(None) == mock_cloudwatch_log_message.return_value[-1]

Review comment:

```suggestion

assert self.ecs.execute(None) == "Log output"

```

Same idea.

##

File path: airflow/providers/amazon/aws/operators/ecs.py

##

@@ -378,7 +387,10 @@ def _check_success_task(self) -> None:
             containers = task['containers']
             for container in containers:
                 if container.get('lastStatus') == 'STOPPED' and container['exitCode'] != 0:
-                    raise AirflowException(f'This task is not in success state {task}')
+                    last_logs = "\n".join(self._last_log_messages(self.number_logs_exception))
+                    raise AirflowException(
+                        f"This task is not in success state - last logs from Cloudwatch:\n{last_logs}"
+                    )

Review comment:

How about including the count in the message?

```suggestion

raise AirflowException(
    f"This task is not in success state - last {self.number_logs_exception} "
    f"logs from Cloudwatch:\n{last_logs}"
)

```
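As a rough sketch of the suggested message with the count included: `build_failure_message` is a hypothetical free function, and slicing a plain list stands in for whatever `_last_log_messages` returns in the operator.

```python
def build_failure_message(log_lines, number_logs_exception=10):
    """Hypothetical sketch: format the last N log lines into the error text."""
    # Guard against N <= 0, because log_lines[-0:] would return every line.
    tail = log_lines[-number_logs_exception:] if number_logs_exception > 0 else []
    last_logs = "\n".join(tail)
    return (
        f"This task is not in success state - last {number_logs_exception} "
        f"logs from Cloudwatch:\n{last_logs}"
    )
```

For example, `build_failure_message(["a", "b", "c"], 2)` keeps only the lines `b` and `c`.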

##

File path: airflow/providers/amazon/aws/operators/ecs.py

##

@@ -178,6 +182,7 @@ def __init__(

propagate_tags: Optional[str] = None,

quota_retry: Optional[dict] = None,

reattach: bool = False,

+number_logs_exception=10,

Review comment:

```suggestion

number_logs_exception: int = 10,

```

[GitHub] [airflow] uranusjr commented on a change in pull request #17209: ECSOperator returns last logs when ECS task fails

uranusjr commented on a change in pull request #17209: URL: https://github.com/apache/airflow/pull/17209#discussion_r680306841 ## File path: airflow/providers/amazon/aws/operators/ecs.py ## @@ -136,13 +136,17 @@ class ECSOperator(BaseOperator): Only required if you want logs to be shown in the Airflow UI after your job has finished. :type awslogs_stream_prefix: str +:param quota_retry: Config if and how to retry _start_task() for transient errors. +:type quota_retry: dict Review comment: `_start_task()` is a private function, and an end user wouldn’t have a clue what this means without reading the source code. So this is a good chance to rewrite the doc to describe what `_start_task()` actually does and how this config interacts with it.

[GitHub] [airflow] github-actions[bot] commented on pull request #17351: Fix link

github-actions[bot] commented on pull request #17351: URL: https://github.com/apache/airflow/pull/17351#issuecomment-890282648 The PR is likely ready to be merged. No tests are needed as no important environment files, nor python files were modified by it. However, committers might decide that full test matrix is needed and add the 'full tests needed' label. Then you should rebase it to the latest main or amend the last commit of the PR, and push it with --force-with-lease.

[GitHub] [airflow] yuqian90 commented on a change in pull request #16681: Introduce RESTARTING state

yuqian90 commented on a change in pull request #16681:

URL: https://github.com/apache/airflow/pull/16681#discussion_r680296647

##

File path: airflow/www/static/css/tree.css

##

@@ -67,6 +67,10 @@ rect.shutdown {

fill: blue;

}

+rect.restarting {

+ fill: violet;

Review comment:

Thanks @kaxil. I don't think that's necessary. Actually, the color is already hardcoded in `state_color`, and `STATE_COLORS` is then used to override the values in `state_color` on this line:

https://github.com/apache/airflow/blob/c384f9b0f509bab704a70380465be18754800a52/airflow/utils/state.py#L117

Some states are already missing from `STATE_COLORS`, such as `NONE` and `SHUTDOWN`. I think we should either deprecate `STATE_COLORS` or auto-populate it from `state_color`. But maybe that's for a different PR.
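One way the "auto-populate" idea could look, as a sketch only: the dictionaries below are simplified, hypothetical stand-ins (the real mappings live in Airflow's settings and `airflow/utils/state.py`), and the colour values are made up apart from `blue` and `violet`, which appear in the CSS above.

```python
# Hypothetical, simplified defaults (the role played by `state_color`).
state_color = {
    "success": "green",
    "shutdown": "blue",
    "restarting": "violet",
    None: "lightblue",
}

# Hypothetical user override that, like STATE_COLORS, lists only some states.
STATE_COLORS = {"success": "#00ff00"}

# Start from the defaults and let the overrides win, so that no state is
# missing from the effective mapping.
effective = {**state_color, **STATE_COLORS}
```

With this approach a newly added state such as `restarting` gets a colour even when the override mapping does not mention it.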

[GitHub] [airflow] tooptoop4 commented on pull request #15850: Add AWS DMS replication task operators

tooptoop4 commented on pull request #15850: URL: https://github.com/apache/airflow/pull/15850#issuecomment-890273515 @potiuk this doesn't seem to be in any release?

[GitHub] [airflow] enriqueayala commented on issue #17176: TriggerDagRunOperator triggering subdags out of order

enriqueayala commented on issue #17176: URL: https://github.com/apache/airflow/issues/17176#issuecomment-890271010 We're having the same issue on 2.1.0 with the same use case: TriggerDagRunOperator -> DAG with a subdag. This affects subdag tasks that rely on XCom values from a parent DAG task (the process fails or produces incorrect results because the XCom value doesn't exist yet). Is there any workaround?

[GitHub] [airflow] boring-cyborg[bot] commented on pull request #17351: Fix link

boring-cyborg[bot] commented on pull request #17351: URL: https://github.com/apache/airflow/pull/17351#issuecomment-890268363 Congratulations on your first Pull Request and welcome to the Apache Airflow community! If you have any issues or are unsure about anything, please check our Contribution Guide (https://github.com/apache/airflow/blob/main/CONTRIBUTING.rst). Here are some useful points:
- Pay attention to the quality of your code (flake8, mypy and type annotations). Our [pre-commits](https://github.com/apache/airflow/blob/main/STATIC_CODE_CHECKS.rst#prerequisites-for-pre-commit-hooks) will help you with that.
- In case of a new feature add useful documentation (in docstrings or in `docs/` directory). Adding a new operator? Check this short [guide](https://github.com/apache/airflow/blob/main/docs/apache-airflow/howto/custom-operator.rst). Consider adding an example DAG that shows how users should use it.
- Consider using [Breeze environment](https://github.com/apache/airflow/blob/main/BREEZE.rst) for testing locally, it’s a heavy docker but it ships with a working Airflow and a lot of integrations.
- Be patient and persistent. It might take some time to get a review or get the final approval from Committers.
- Please follow [ASF Code of Conduct](https://www.apache.org/foundation/policies/conduct) for all communication including (but not limited to) comments on Pull Requests, Mailing list and Slack.
- Be sure to read the [Airflow Coding style](https://github.com/apache/airflow/blob/main/CONTRIBUTING.rst#coding-style-and-best-practices).
Apache Airflow is a community-driven project and together we are making it better 🚀. In case of doubts contact the developers at: Mailing List: d...@airflow.apache.org Slack: https://s.apache.org/airflow-slack

[GitHub] [airflow] zseta opened a new pull request #17351: Fix link

zseta opened a new pull request #17351: URL: https://github.com/apache/airflow/pull/17351 Just a little link fix

[GitHub] [airflow] github-actions[bot] commented on pull request #15277: Remove support for FAB `APP_THEME`

github-actions[bot] commented on pull request #15277: URL: https://github.com/apache/airflow/pull/15277#issuecomment-890259909 This pull request has been automatically marked as stale because it has not had recent activity. It will be closed in 5 days if no further activity occurs. Thank you for your contributions.

[GitHub] [airflow] github-actions[bot] commented on issue #16107: Airflow backfilling can't be disabled

github-actions[bot] commented on issue #16107: URL: https://github.com/apache/airflow/issues/16107#issuecomment-890259879 This issue has been automatically marked as stale because it has been open for 30 days with no response from the author. It will be closed in next 7 days if no further activity occurs from the issue author.

[GitHub] [airflow] github-actions[bot] commented on pull request #15195: Fix dag sort in dag stats

github-actions[bot] commented on pull request #15195: URL: https://github.com/apache/airflow/pull/15195#issuecomment-890259931 This pull request has been automatically marked as stale because it has not had recent activity. It will be closed in 5 days if no further activity occurs. Thank you for your contributions.

[GitHub] [airflow] github-actions[bot] commented on pull request #15233: Allow SQSSensor to filter messages by their attribute names

github-actions[bot] commented on pull request #15233: URL: https://github.com/apache/airflow/pull/15233#issuecomment-890259919 This pull request has been automatically marked as stale because it has not had recent activity. It will be closed in 5 days if no further activity occurs. Thank you for your contributions.

[GitHub] [airflow] SamWheating commented on a change in pull request #17347: Handle and log exceptions raised during task callback

SamWheating commented on a change in pull request #17347:

URL: https://github.com/apache/airflow/pull/17347#discussion_r680267508

##

File path: airflow/models/taskinstance.py

##

@@ -1343,18 +1343,27 @@ def _run_finished_callback(self, error: Optional[Union[str, Exception]] = None)
             if task.on_failure_callback is not None:
                 context = self.get_template_context()
                 context["exception"] = error
-                task.on_failure_callback(context)
+                try:
+                    task.on_failure_callback(context)
+                except Exception:
+                    log.exception("Failed when executing execute on_failure_callback:")
         elif self.state == State.SUCCESS:
             task = self.task
             if task.on_success_callback is not None:
                 context = self.get_template_context()
-                task.on_success_callback(context)
+                try:
+                    task.on_success_callback(context)
+                except Exception:

Review comment:

Great suggestion - I've added a test and in doing so I even found a minor bug which has been fixed now.
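The pattern under review, isolating a user-supplied callback so that its failure is logged rather than propagated, can be sketched in isolation. `run_callback` and its boolean return value are hypothetical and only illustrate the try/except shape from the diff.

```python
import logging

log = logging.getLogger(__name__)


def run_callback(callback, context):
    """Hypothetical helper: run a callback, logging any exception it raises.

    Returns True if the callback ran without raising, False otherwise.
    """
    if callback is None:
        return False
    try:
        callback(context)
    except Exception:
        # log.exception records the message together with the traceback.
        log.exception("Error when executing callback")
        return False
    return True
```

A test for this kind of code can pass a callback that raises and assert that the caller continues normally.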

[GitHub] [airflow] jedcunningham commented on issue #17343: Dags added by DagBag interrupt randomly

jedcunningham commented on issue #17343:

URL: https://github.com/apache/airflow/issues/17343#issuecomment-890215689

Try removing the `trigger.execute({})` line. It causes the scheduler to trigger the DAG every time the DAG file is parsed (which can be roughly every 30s), which is probably not what you intended.
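The effect of top-level calls in a DAG file can be simulated without Airflow. In this sketch everything is hypothetical: `parse_dag_file` merely executes the source, which is a simplification of what a parser does, and appending to a list stands in for `trigger.execute({})`.

```python
# Stand-in for a DAG file with a side effect at module level.
DAG_FILE_SOURCE = """
triggered.append("run")  # top-level call, analogous to trigger.execute({})
"""

triggered = []


def parse_dag_file(source, namespace):
    """Simulate parsing: executing the file runs all of its top-level code."""
    exec(compile(source, "<dag_file>", "exec"), namespace)


# Each parse re-runs the module body, and with it the side effect.
for _ in range(3):
    parse_dag_file(DAG_FILE_SOURCE, {"triggered": triggered})
```

After three simulated parses the side effect has happened three times, which is why such calls belong inside tasks rather than at module level.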

[GitHub] [airflow] jedcunningham commented on a change in pull request #17333: Regression on pid reset to allow task start after heartbeat

jedcunningham commented on a change in pull request #17333:

URL: https://github.com/apache/airflow/pull/17333#discussion_r680211691

##

File path: airflow/models/taskinstance.py

##

@@ -,6 +,7 @@ def check_and_change_state_before_execution(

if not test_mode:

session.add(Log(State.RUNNING, self))

self.state = State.RUNNING

+self.pid = None

Review comment:

Should this be up with lines 1043-1044 instead?

[airflow-site] branch gh-pages updated: Deploying to gh-pages from @ a7f94c1d28afa10059621a4b9e634cd2f43d3ccb 🚀

This is an automated email from the ASF dual-hosted git repository.

github-bot pushed a commit to branch gh-pages

in repository https://gitbox.apache.org/repos/asf/airflow-site.git

The following commit(s) were added to refs/heads/gh-pages by this push:

new 5868efe Deploying to gh-pages from @ a7f94c1d28afa10059621a4b9e634cd2f43d3ccb 🚀

5868efe is described below

commit 5868efec3ed36c8d09eba1af0173266074d82466

Author: feng-tao

AuthorDate: Fri Jul 30 20:38:32 2021 +

Deploying to gh-pages from @ a7f94c1d28afa10059621a4b9e634cd2f43d3ccb 🚀

---

_gen/packages-metadata.json| 386 ++---

blog/airflow-1.10.10/index.html| 4 +-

blog/airflow-1.10.12/index.html| 4 +-

blog/airflow-1.10.8-1.10.9/index.html | 4 +-

blog/airflow-survey-2020/index.html| 4 +-

blog/airflow-survey/index.html | 4 +-

blog/airflow-two-point-oh-is-here/index.html | 4 +-

blog/airflow_summit_2021/index.html| 4 +-

blog/announcing-new-website/index.html | 4 +-

blog/apache-airflow-for-newcomers/index.html | 4 +-

.../index.html | 4 +-

.../index.html | 4 +-

.../index.html | 4 +-

.../index.html | 4 +-

.../index.html | 4 +-

.../index.html | 4 +-

ecosystem/index.html | 2 +

index.html | 30 +-

search/index.html | 4 +-

sitemap.xml| 86 ++---

use-cases/adobe/index.html | 4 +-

use-cases/big-fish-games/index.html| 4 +-

use-cases/dish/index.html | 4 +-

use-cases/experity/index.html | 4 +-

use-cases/onefootball/index.html | 4 +-

use-cases/plarium-krasnodar/index.html | 4 +-

use-cases/sift/index.html | 4 +-

27 files changed, 299 insertions(+), 297 deletions(-)

diff --git a/_gen/packages-metadata.json b/_gen/packages-metadata.json

index c995cc7..cd6adae 100644

--- a/_gen/packages-metadata.json

+++ b/_gen/packages-metadata.json

@@ -1,30 +1,15 @@

[

{

-"package-name": "apache-airflow-providers-google",

-"stable-version": "4.0.0",

-"all-versions": [

- "0.0.1",

- "0.0.2",

- "1.0.0",

- "2.0.0",

- "2.1.0",

- "2.2.0",

- "3.0.0",

- "4.0.0"

-]

- },

- {

-"package-name": "apache-airflow-providers-ftp",

+"package-name": "apache-airflow-providers-plexus",

"stable-version": "2.0.0",

"all-versions": [

"1.0.0",

"1.0.1",

- "1.1.0",

"2.0.0"

]

},

{

-"package-name": "apache-airflow-providers-qubole",

+"package-name": "apache-airflow-providers-sendgrid",

"stable-version": "2.0.0",

"all-versions": [

"1.0.0",

@@ -34,49 +19,52 @@

]

},

{

-"package-name": "apache-airflow-providers-odbc",

+"package-name": "apache-airflow-providers-jira",

"stable-version": "2.0.0",

"all-versions": [

"1.0.0",

"1.0.1",

+ "1.0.2",

"2.0.0"

]

},

{

-"package-name": "apache-airflow-providers-airbyte",

+"package-name": "apache-airflow-providers-exasol",

"stable-version": "2.0.0",

"all-versions": [

"1.0.0",

+ "1.1.0",

+ "1.1.1",

"2.0.0"

]

},

{

-"package-name": "apache-airflow-providers-apache-beam",

-"stable-version": "3.0.0",

+"package-name": "apache-airflow-providers-apache-sqoop",

+"stable-version": "2.0.0",

"all-versions": [

"1.0.0",

"1.0.1",

- "2.0.0",

- "3.0.0"

+ "2.0.0"

]

},

{

-"package-name": "apache-airflow-providers-microsoft-mssql",

+"package-name": "apache-airflow-providers-redis",

"stable-version": "2.0.0",

"all-versions": [

"1.0.0",

"1.0.1",

- "1.1.0",

"2.0.0"

]

},

{

-"package-name": "apache-airflow-providers-presto",

+"package-name": "apache-airflow-providers-snowflake",

"stable-version": "2.0.0",

"all-versions": [

"1.0.0",

- "1.0.1",

- "1.0.2",

+ "1.1.0",

+ "1.1.1",

+ "1.2.0",

+ "1.3.0",

"2.0.0"

]

},

@@ -90,77 +78,63 @@

]

},

{

-"package-name": "apache-airflow-providers-slack",

-"stable-version": "4.0.0",

-"all-versions": [

- "1.0.0",

- "2.0.0",

- "3.0.0",

- "4.0.0"

-]

- },

- {

-"package-name": "apache-airflow-providers-http",

+"package-name": "apache-airflow-providers-discord",

"stable-version": "2.0.0",

"all-versions": [

"1.0.0",

- "1.1.0

[GitHub] [airflow-site] feng-tao commented on pull request #460: Add Amundsen to airflow ecosystem page

feng-tao commented on pull request #460:

URL: https://github.com/apache/airflow-site/pull/460#issuecomment-890138563

Thanks @potiuk!

[GitHub] [airflow-site] feng-tao merged pull request #460: Add Amundsen to airflow ecosystem page

feng-tao merged pull request #460:

URL: https://github.com/apache/airflow-site/pull/460

[airflow-site] branch main updated: Add Amundsen to airflow ecosystem page (#460)

This is an automated email from the ASF dual-hosted git repository.

tfeng pushed a commit to branch main

in repository https://gitbox.apache.org/repos/asf/airflow-site.git

The following commit(s) were added to refs/heads/main by this push:

new a7f94c1 Add Amundsen to airflow ecosystem page (#460)

a7f94c1 is described below

commit a7f94c1d28afa10059621a4b9e634cd2f43d3ccb

Author: Tao Feng

AuthorDate: Fri Jul 30 13:32:00 2021 -0700

Add Amundsen to airflow ecosystem page (#460)

---

landing-pages/site/content/en/ecosystem/_index.md | 2 ++

1 file changed, 2 insertions(+)

diff --git a/landing-pages/site/content/en/ecosystem/_index.md b/landing-pages/site/content/en/ecosystem/_index.md

index 076c817..28b322a 100644

--- a/landing-pages/site/content/en/ecosystem/_index.md

+++ b/landing-pages/site/content/en/ecosystem/_index.md

@@ -81,6 +81,8 @@ Apache Airflow releases the [Official Apache Airflow Community Chart](https://ai

[Airflow Ditto](https://github.com/angadsingh/airflow-ditto) - An extensible framework to do transformations to an Airflow DAG and convert it into another DAG which is flow-isomorphic with the original DAG, to be able to run it on different environments (e.g. on different clouds, or even different container frameworks - Apache Spark on YARN vs Kubernetes). Comes with out-of-the-box support for EMR-to-HDInsight-DAG transforms.

+[Amundsen](https://github.com/amundsen-io/amundsen) - Amundsen is a data discovery and metadata platform for improving the productivity of data analysts, data scientists and engineers when interacting with data. It can surface which Airflow task generates a given table.

+

[Apache-Liminal-Incubating](https://github.com/apache/incubator-liminal) - Liminal provides a domain-specific-language (DSL) to build ML/AI workflows on top of Apache Airflow. Its goal is to operationalise the machine learning process, allowing data scientists to quickly transition from a successful experiment to an automated pipeline of model training, validation, deployment and inference in production.

[Chartis](https://github.com/trejas/chartis) - Python package to convert Common Workflow Language (CWL) into Airflow DAG.

[airflow-site] branch feng-tao-patch-1 created (now dc7642e)

This is an automated email from the ASF dual-hosted git repository.

tfeng pushed a change to branch feng-tao-patch-1

in repository https://gitbox.apache.org/repos/asf/airflow-site.git.

at dc7642e Add Amundsen to airflow ecosystem page

No new revisions were added by this update.

[GitHub] [airflow-site] feng-tao opened a new pull request #460: Add Amundsen to airflow ecosystem page

feng-tao opened a new pull request #460:

URL: https://github.com/apache/airflow-site/pull/460

[GitHub] [airflow] potiuk closed issue #17350: "Failed to fetch log file from worker" when running CeleryExecutor in docker worker

potiuk closed issue #17350:

URL: https://github.com/apache/airflow/issues/17350

[GitHub] [airflow] potiuk commented on issue #17350: "Failed to fetch log file from worker" when running CeleryExecutor in docker worker

potiuk commented on issue #17350:

URL: https://github.com/apache/airflow/issues/17350#issuecomment-890127667

This is a very typical secret key for a Flask server: https://newbedev.com/where-do-i-get-a-secret-key-for-flask

[GitHub] [airflow] potiuk commented on issue #17350: "Failed to fetch log file from worker" when running CeleryExecutor in docker worker

potiuk commented on issue #17350:

URL: https://github.com/apache/airflow/issues/17350#issuecomment-890125922

I know 42 is the ultimate answer, but probably not here :)

[GitHub] [airflow] paantya commented on issue #17350: "Failed to fetch log file from worker" when running CeleryExecutor in docker worker

paantya commented on issue #17350:

URL: https://github.com/apache/airflow/issues/17350#issuecomment-890123512

Or some more complex hash?

[GitHub] [airflow] paantya commented on issue #17350: "Failed to fetch log file from worker" when running CeleryExecutor in docker worker

paantya commented on issue #17350:

URL: https://github.com/apache/airflow/issues/17350#issuecomment-890123158

Like this? `AIRFLOW__WEBSERVER__SECRET_KEY=42`

`docker run --rm -it -e AIRFLOW__CORE__EXECUTOR="CeleryExecutor" -e AIRFLOW__CORE__SQL_ALCHEMY_CONN="postgresql+psycopg2://airflow:airflow@10.0.0.197:5432/airflow" -e AIRFLOW__CELERY__RESULT_BACKEND="db+postgresql://airflow:airflow@10.0.0.197:5432/airflow" -e AIRFLOW__CELERY__BROKER_URL="redis://:@10.0.0.197:6380/0" -e AIRFLOW__CORE__FERNET_KEY="" -e AIRFLOW__CORE__DAGS_ARE_PAUSED_AT_CREATION="true" -e AIRFLOW__CORE__LOAD_EXAMPLES="true" -e AIRFLOW__API__AUTH_BACKEND="airflow.api.auth.backend.basic_auth" -e AIRFLOW__WEBSERVER__SECRET_KEY=42 -e _PIP_ADDITIONAL_REQUIREMENTS="" -v /home/apatshin/tmp/airflow-worker/dags:/opt/airflow/dags -v /home/apatshin/tmp/airflow-worker/logs:/opt/airflow/logs -v /home/apatshin/tmp/airflow-worker/plugins:/opt/airflow/plugins -p "6380:6379" -p "5432:5432" -p "8793:8793" -e DB_HOST="10.0.0.197" --user 1012:0 --hostname="host197" "apache/airflow:2.1.2-python3.7" celery worker`

[GitHub] [airflow] paantya commented on issue #17350: "Failed to fetch log file from worker" when running CeleryExecutor in docker worker

paantya commented on issue #17350:

URL: https://github.com/apache/airflow/issues/17350#issuecomment-890122484

@potiuk Do you have an example of how to set it? How should it be generated? I have not come across anything similar before. Should it be a prime number or some specific file?

[GitHub] [airflow] jedcunningham commented on issue #17238: Unexpected skipped state for tasks run with KubernetesExecutor

jedcunningham commented on issue #17238:

URL: https://github.com/apache/airflow/issues/17238#issuecomment-890121751

I've tried to replicate this with the following DAG without success:

```
import time
from datetime import datetime

from airflow import DAG
from airflow.operators.dummy import DummyOperator
from airflow.operators.python import PythonOperator

default_args = {"start_date": datetime(2021, 7, 30)}

with DAG("skipped", default_args=default_args, schedule_interval=None) as dag:
    start = DummyOperator(task_id="start")
    end = DummyOperator(task_id="end")
    for i in range(5):
        prev = start
        for j in range(3):
            t = PythonOperator(
                task_id=f"t-{i}-{j}", python_callable=lambda: time.sleep(10)
            )
            prev >> t
            prev = t
        t >> end
```

@mgorsk1, any chance you could try in your instance with that DAG? Also,

filtering down to the pod name might be missing an important log message. I'd

try filtering by a unique task_id (if there is one) too.


[GitHub] [airflow] potiuk commented on issue #17350: "Failed to fetch log file from worker" when running CeleryExecutor in docker worker

potiuk commented on issue #17350:

URL: https://github.com/apache/airflow/issues/17350#issuecomment-890120073

More explanation: this is the result of fixing a potential security vulnerability (logs could be retrieved without authentication). By specifying the same secret key on both servers, you allow the webserver to authenticate when retrieving logs.

[GitHub] [airflow] potiuk commented on issue #17350: "Failed to fetch log file from worker" when running CeleryExecutor in docker worker

potiuk commented on issue #17350:

URL: https://github.com/apache/airflow/issues/17350#issuecomment-890119166

This is a misconfiguration of the two servers. You need to specify the same secret key on both to be able to access logs. Set `AIRFLOW__WEBSERVER__SECRET_KEY` to a randomly generated key (it must be the same on both the workers and the webserver).
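
One way to produce such a key, as a sketch: Python's standard `secrets` module can generate a random hex string, which is then exported under the same variable name on every machine. The helper name `generate_secret_key` and the 16-byte length are assumptions chosen for illustration, not a documented Airflow requirement.

```python
import secrets


def generate_secret_key(num_bytes=16):
    """Return a random hex string to use as a shared secret.

    Hypothetical helper; num_bytes=16 is an illustrative choice.
    """
    return secrets.token_hex(num_bytes)


key = generate_secret_key()
# Use this same line on the webserver and on every worker.
print(f"AIRFLOW__WEBSERVER__SECRET_KEY={key}")
```

The important part is not how the value is generated but that the identical value reaches both the webserver and the worker containers, for example through an extra `-e` option on `docker run`.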

[GitHub] [airflow] potiuk commented on pull request #17321: Enable specifying dictionary paths in `template_fields_renderers`

potiuk commented on pull request #17321:

URL: https://github.com/apache/airflow/pull/17321#issuecomment-890115179

Can you please rebase, @nathadfield? We have fixed some failures in main, and it would be great to re-run this one through all the tests.

[GitHub] [airflow] github-actions[bot] commented on pull request #17321: Enable specifying dictionary paths in `template_fields_renderers`

github-actions[bot] commented on pull request #17321:

URL: https://github.com/apache/airflow/pull/17321#issuecomment-890115026

The PR is likely OK to be merged with just subset of tests for default Python and Database versions without running the full matrix of tests, because it does not modify the core of Airflow. If the committers decide that the full tests matrix is needed, they will add the label 'full tests needed'. Then you should rebase to the latest main or amend the last commit of the PR, and push it with --force-with-lease.

[GitHub] [airflow] potiuk commented on a change in pull request #17339: Adding support for multiple task-ids in the external task sensor

potiuk commented on a change in pull request #17339:

URL: https://github.com/apache/airflow/pull/17339#discussion_r680175088

##

File path: airflow/sensors/external_task.py

##

@@ -111,12 +117,23 @@ def __init__(

"`{}` and failed states `{}`".format(self.allowed_states, self.failed_states)

)

-if external_task_id:

+if external_task_id is not None and external_task_ids is not None:

+raise ValueError(

+'Only one of `external_task_id` or `external_task_ids` may '

+'be provided to ExternalTaskSensor; not both.'

+)

+

+if external_task_id is not None:

+external_task_ids = [external_task_id]

+

+if external_task_ids:

if not total_states <= set(State.task_states):

raise ValueError(

f'Valid values for `allowed_states` and `failed_states` '

-f'when `external_task_id` is not `None`: {State.task_states}'

+f'when `external_task_id` or `external_task_ids` is not `None`: {State.task_states}'

)

+if len(external_task_ids) > len(set(external_task_ids)):

+raise ValueError('Duplicate task_ids passed in external_task_ids parameter')

Review comment:

Nice check!
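
The argument checks in the diff above can be tried out on their own. The following is a minimal sketch that mirrors them outside the sensor class; `normalize_external_task_ids` is a hypothetical helper name, and the `State.task_states` validation is left out because it depends on Airflow.

```python
def normalize_external_task_ids(external_task_id=None, external_task_ids=None):
    """Return a list of task ids, or None when neither argument is given.

    Hypothetical standalone helper mirroring the checks in the diff.
    """
    # The two arguments are mutually exclusive.
    if external_task_id is not None and external_task_ids is not None:
        raise ValueError(
            "Only one of `external_task_id` or `external_task_ids` may "
            "be provided; not both."
        )
    # A single id is treated as a one-element list.
    if external_task_id is not None:
        external_task_ids = [external_task_id]
    # A set drops repeats, so a shorter set means duplicates were passed.
    if external_task_ids and len(external_task_ids) > len(set(external_task_ids)):
        raise ValueError("Duplicate task_ids passed in external_task_ids parameter")
    return external_task_ids
```

Normalising the single-id form into a list first means the rest of the logic only has to handle one shape of input.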


[GitHub] [airflow] potiuk commented on issue #17340: Retrieve session logs when using Livy Operator

potiuk commented on issue #17340:

URL: https://github.com/apache/airflow/issues/17340#issuecomment-890107560

Assigned you, @sreenath-kamath!

[GitHub] [airflow] github-actions[bot] commented on pull request #17349: #16037 Add support for passing templated requirements.txt in PythonVirtualenvOperator

github-actions[bot] commented on pull request #17349:

URL: https://github.com/apache/airflow/pull/17349#issuecomment-890106651

The PR most likely needs to run full matrix of tests because it modifies parts of the core of Airflow. However, committers might decide to merge it quickly and take the risk. If they don't merge it quickly - please rebase it to the latest main at your convenience, or amend the last commit of the PR, and push it with --force-with-lease.

[airflow] branch main updated: Added print statements for clarity in provider yaml checks (#17322)

This is an automated email from the ASF dual-hosted git repository.

potiuk pushed a commit to branch main

in repository https://gitbox.apache.org/repos/asf/airflow.git

The following commit(s) were added to refs/heads/main by this push:

new 76e6315 Added print statements for clarity in provider yaml checks (#17322)

76e6315 is described below

commit 76e6315473671b87f3d5fe64e4c35a79658789d3

Author: Kanthi

AuthorDate: Fri Jul 30 15:18:26 2021 -0400

Added print statements for clarity in provider yaml checks (#17322)

---

.../ci/pre_commit/pre_commit_check_provider_yaml_files.py | 14 ++

1 file changed, 10 insertions(+), 4 deletions(-)

diff --git a/scripts/ci/pre_commit/pre_commit_check_provider_yaml_files.py b/scripts/ci/pre_commit/pre_commit_check_provider_yaml_files.py

index 24d963b..c6c0584 100755

--- a/scripts/ci/pre_commit/pre_commit_check_provider_yaml_files.py

+++ b/scripts/ci/pre_commit/pre_commit_check_provider_yaml_files.py

@@ -119,13 +119,13 @@ def assert_sets_equal(set1, set2):

lines = []

if difference1:

-lines.append('Items in the first set but not the second:')

+lines.append('-- Items in the left set but not the right:')

for item in sorted(difference1):

-lines.append(repr(item))

+lines.append(f' {item!r}')

if difference2:

-lines.append('Items in the second set but not the first:')

+lines.append('-- Items in the right set but not the left:')

for item in sorted(difference2):

-lines.append(repr(item))

+lines.append(f' {item!r}')

standard_msg = '\n'.join(lines)

raise AssertionError(standard_msg)

@@ -155,6 +155,7 @@ def parse_module_data(provider_data, resource_type, yaml_file_path):

def check_completeness_of_list_of_hooks_sensors_hooks(yaml_files: Dict[str, Dict]):

print("Checking completeness of list of {sensors, hooks, operators}")

+print(" -- {sensors, hooks, operators} - Expected modules(Left): Current Modules(Right)")

for (yaml_file_path, provider_data), resource_type in product(

yaml_files.items(), ["sensors", "operators", "hooks"]

):

@@ -193,6 +194,8 @@ def check_duplicates_in_integrations_names_of_hooks_sensors_operators(yaml_files

def check_completeness_of_list_of_transfers(yaml_files: Dict[str, Dict]):

print("Checking completeness of list of transfers")

resource_type = 'transfers'

+

+print(" -- Expected transfers modules(Left): Current transfers Modules(Right)")

for yaml_file_path, provider_data in yaml_files.items():

expected_modules, provider_package, resource_data = parse_module_data(

provider_data, resource_type, yaml_file_path

@@ -309,7 +312,10 @@ def check_doc_files(yaml_files: Dict[str, Dict]):

}

try:

+print(" -- Checking document urls: expected(left), current(right)")

assert_sets_equal(set(expected_doc_urls), set(current_doc_urls))

+

+print(" -- Checking logo urls: expected(left), current(right)")

assert_sets_equal(set(expected_logo_urls), set(current_logo_urls))

except AssertionError as ex:

print(ex)
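
For reference, the effect of the new left/right messages can be reproduced with a standalone version of the helper. This is a sketch reconstructed from the hunk above: the part of `assert_sets_equal` that computes the two differences is not shown in the diff, so the set subtraction and the early return below are assumptions, and the indent inside the f-strings is illustrative.

```python
def assert_sets_equal(set1, set2):
    """Raise AssertionError listing the items unique to each side."""
    # Assumed: the differences are plain set subtractions.
    difference1 = set1 - set2  # in the left set only
    difference2 = set2 - set1  # in the right set only
    if not (difference1 or difference2):
        return

    lines = []
    if difference1:
        lines.append('-- Items in the left set but not the right:')
        for item in sorted(difference1):
            lines.append(f'   {item!r}')
    if difference2:
        lines.append('-- Items in the right set but not the left:')
        for item in sorted(difference2):
            lines.append(f'   {item!r}')

    standard_msg = '\n'.join(lines)
    raise AssertionError(standard_msg)
```

Calling it with `{"a", "b"}` on the left and `{"b", "c"}` on the right reports `'a'` under the left heading and `'c'` under the right one, which matches the "expected (left), current (right)" wording printed before each check.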

[GitHub] [airflow] potiuk closed issue #17316: Docs validation function - Add meaningful errors

potiuk closed issue #17316:

URL: https://github.com/apache/airflow/issues/17316

[GitHub] [airflow] potiuk merged pull request #17322: Added print statements for clarity in provider yaml checks

potiuk merged pull request #17322:

URL: https://github.com/apache/airflow/pull/17322

[GitHub] [airflow] potiuk commented on pull request #17322: Added print statements for clarity in provider yaml checks

potiuk commented on pull request #17322:

URL: https://github.com/apache/airflow/pull/17322#issuecomment-890102252

> Thanks @potiuk, fixed the static checks, but the 2 image failures don't seem to be related to the commits

Yeah. Those are unrelated.

[GitHub] [airflow] andormarkus commented on pull request #16571: Implemented Basic EKS Integration

andormarkus commented on pull request #16571:

URL: https://github.com/apache/airflow/pull/16571#issuecomment-890095899

Hi @ferruzzi,

I would like to describe a specific problem we run into when creating EKS managed node groups with boto3.

We have 3 environments on 3 separate AWS accounts. By AWS design, AZs are randomly assigned: if an instance type is available on account A in AZ 1, it might not be available on account B in AZ 1. We are running into this issue:

```bash
Your requested instance type (m5ad.4xlarge) is not supported in your requested Availability Zone (eu-central-1c). Please retry your request by not specifying an Availability Zone or choosing eu-central-1a, eu-central-1b.
```

In this case, node group creation fails with a `CREATE_FAILED` error, but the node group still exists on EKS. When the Airflow retry runs, the second job fails with the following error:

```bash
botocore.errorfactory.ResourceInUseException: An error occurred (ResourceInUseException) when calling the CreateNodegroup operation: NodeGroup already exists with name [my_node] and cluster name [my_cluster]
```

It would be great if this integration had an option to delete the failed node group when a create job fails with `CREATE_FAILED`.

I hope I was clear; if not, feel free to ask any questions.

Thanks,

Andor
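
The cleanup requested here could look roughly like the sketch below. It is not part of the PR: `delete_nodegroup_if_create_failed` is a hypothetical helper, and it only assumes that the client object offers `describe_nodegroup` and `delete_nodegroup` with `clusterName` and `nodegroupName` keyword arguments, as the boto3 EKS client does. `FakeEksClient` is an in-memory stand-in so the example runs without AWS credentials.

```python
def delete_nodegroup_if_create_failed(eks_client, cluster_name, nodegroup_name):
    """Delete the node group when its status is CREATE_FAILED.

    Hypothetical helper. Returns True when a delete was requested.
    """
    response = eks_client.describe_nodegroup(
        clusterName=cluster_name, nodegroupName=nodegroup_name
    )
    status = response["nodegroup"]["status"]
    if status != "CREATE_FAILED":
        return False
    eks_client.delete_nodegroup(
        clusterName=cluster_name, nodegroupName=nodegroup_name
    )
    return True


class FakeEksClient:
    """In-memory stand-in that imitates the two client calls used above."""

    def __init__(self, status):
        self.status = status
        self.deleted = []

    def describe_nodegroup(self, clusterName, nodegroupName):
        return {"nodegroup": {"nodegroupName": nodegroupName, "status": self.status}}

    def delete_nodegroup(self, clusterName, nodegroupName):
        # Record the request instead of calling AWS.
        self.deleted.append((clusterName, nodegroupName))
        return {"nodegroup": {"nodegroupName": nodegroupName, "status": "DELETING"}}
```

Passing the client in as an argument keeps the helper testable. A real retry would presumably also need to wait for the deletion to complete before creating the node group again.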

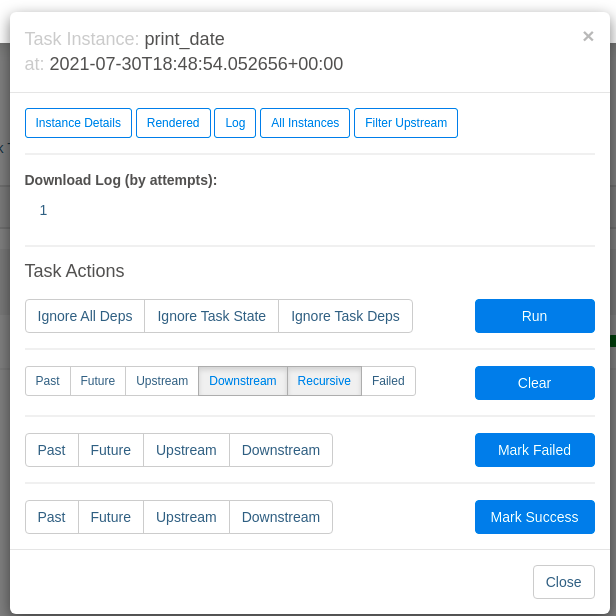

[GitHub] [airflow] paantya opened a new issue #17350: "Failed to fetch log file from worker" when running CeleryExecutor in docker worker

paantya opened a new issue #17350:

URL: https://github.com/apache/airflow/issues/17350

**Apache Airflow version**:

AIRFLOW_IMAGE_NAME: apache/airflow:2.1.2-python3.7

**Environment**:

- **running in Docker on Ubuntu 18.04**

The configuration comes from:

curl -LfO 'https://airflow.apache.org/docs/apache-airflow/2.1.2/docker-compose.yaml'

**What happened**:

I run Docker Compose without workers on one server, and a worker using Docker on another server.

After a task has run, I cannot get its logs from the other server.

**What did you expect**:

I expect the cause is something in the docker worker run settings on the second server.

**How to reproduce this**:

```
curl -LfO 'https://airflow.apache.org/docs/apache-airflow/2.1.2/docker-compose.yaml'
mkdir ./dags ./logs ./plugins
echo -e "AIRFLOW_UID=$(id -u)\nAIRFLOW_GID=0" > .env
```

Change the image name to `apache/airflow:2.1.2-python3.7`.

In the docker-compose.yaml file, publish the postgres port:

```
postgres:
  image: postgres:13
  ports:
    - 5432:5432
```

Change the published port for redis to 6380:

```
redis:
  image: redis:latest
  ports:
    - 6380:6379
```

Add port 8793 to the webserver for logs (not sure whether this is needed):

```
airflow-webserver:
  <<: *airflow-common
  command: webserver
  ports:
    - 8080:8080
    - 8793:8793
```

Comment out or delete the worker definition, like this:

```
# airflow-worker:
#   <<: *airflow-common
#   command: celery worker
#   healthcheck:
#     test:
#       - "CMD-SHELL"
#       - 'celery --app airflow.executors.celery_executor.app inspect ping -d "celery@$${HOSTNAME}"'
#     interval: 10s
#     timeout: 10s
#     retries: 5
#   restart: always
```

Next, run in a terminal:

```

docker-compose up airflow-init

docker-compose up

```

### Then run the worker on server2:

```
mkdir tmp-airflow
cd tmp-airflow
curl -LfO 'https://airflow.apache.org/docs/apache-airflow/2.1.2/docker-compose.yaml'
mkdir ./dags ./logs ./plugins
echo -e "AIRFLOW_UID=$(id -u)\nAIRFLOW_GID=0" > .env
```

`docker run --rm -it -e AIRFLOW__CORE__EXECUTOR="CeleryExecutor" -e AIRFLOW__CORE__SQL_ALCHEMY_CONN="postgresql+psycopg2://airflow:airflow@10.0.0.197:5432/airflow" -e AIRFLOW__CELERY__RESULT_BACKEND="db+postgresql://airflow:airflow@10.0.0.197:5432/airflow" -e AIRFLOW__CELERY__BROKER_URL="redis://:@10.0.0.197:6380/0" -e AIRFLOW__CORE__FERNET_KEY="" -e AIRFLOW__CORE__DAGS_ARE_PAUSED_AT_CREATION="true" -e AIRFLOW__CORE__LOAD_EXAMPLES="true" -e AIRFLOW__API__AUTH_BACKEND="airflow.api.auth.backend.basic_auth" -e _PIP_ADDITIONAL_REQUIREMENTS="" -v /home/apatshin/tmp/airflow-worker/dags:/opt/airflow/dags -v /home/apatshin/tmp/airflow-worker/logs:/opt/airflow/logs -v /home/apatshin/tmp/airflow-worker/plugins:/opt/airflow/plugins -p "6380:6379" -p "5432:5432" -p "8793:8793" -e DB_HOST="10.0.0.197" --user 1012:0 --hostname="host197" "apache/airflow:2.1.2-python3.7" celery worker`

- `10.0.0.197` is the IP address of server1.
- `host197` is the local network name of server2.
- `--user 1012:0`: `1012` is the `AIRFLOW_UID` value from the `./.env` file. Check your own file and replace it accordingly: `cat ./.env`

### next, run DAG in webserver on server1:

then go to in browser to `10.0.0.197:8080` or `0.0.0.0:8080`

and run DAG "tutorial"

and then see log on ferst tasks

### errors

and see next:

```

*** Log file does not exist:

/opt/airflow/logs/tutorial/print_date/2021-07-30T18:48:54.052656+00:00/1.log

*** Fetching from:

http://host197:8793/log/tutorial/print_date/2021-07-30T18:48:54.052656+00:00/1.log

*** Failed to fetch log file from worker. 403 Client Error: FORBIDDEN for

url:

http://host197:8793/log/tutorial/print_date/2021-07-30T18:48:54.052656+00:00/1.log

For more information check: https://httpstatuses.com/403

```

In the terminal on server2:

`[2021-07-30 18:49:12,966] {_internal.py:113} INFO - 10.77.135.197 - - [30/Jul/2021 18:49:12] "GET /log/tutorial/print_date/2021-07-30T18:48:54.052656+00:00/1.log HTTP/1.1" 403 -`
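The URL in the error follows a fixed layout, so the same request can be repeated outside the webserver to see which status the worker returns to a plain client. This is a minimal sketch that assumes only the URL layout visible in the log above; the helper names `build_log_url` and `fetch_status` are hypothetical and not part of Airflow:

```python
import urllib.error
import urllib.request


def build_log_url(host, dag_id, task_id, execution_date, try_number, port=8793):
    """Build the worker log URL in the layout shown in the error message."""
    return f"http://{host}:{port}/log/{dag_id}/{task_id}/{execution_date}/{try_number}.log"


def fetch_status(url, timeout=5):
    """Return the HTTP status code for a plain GET of `url`."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # 4xx/5xx responses are raised as HTTPError; the status code is what we want.
        return err.code


url = build_log_url("host197", "tutorial", "print_date", "2021-07-30T18:48:54.052656+00:00", 1)
print(url)
# fetch_status(url) needs network access to the worker, so it is not called here.
```

Running `fetch_status(url)` from server1 would show whether the worker is reachable at all and which status it sends back for a plain GET.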


[GitHub] [airflow] rounakdatta commented on issue #16037: allow using requirments.txt in PythonVirtualEnvOperator

rounakdatta commented on issue #16037:

URL: https://github.com/apache/airflow/issues/16037#issuecomment-890093215

Hello @potiuk @alexInhert, I have tried implementing what was discussed above. Could you review it? Thanks.

[GitHub] [airflow] rounakdatta opened a new pull request #17349: #16037 Add support for passing templated requirements.txt in PythonVirtualenvOperator

rounakdatta opened a new pull request #17349:

URL: https://github.com/apache/airflow/pull/17349

Closes: https://github.com/apache/airflow/issues/16037

This pull request addresses the changes discussed in the aforementioned issue.

- [x] Added new unit tests
- [x] This change is not breaking

Changes:

- Support for passing a `requirements.txt` file in the `requirements` field of the PythonVirtualenvOperator.
- The file can be named `*.txt` (anything ending with .txt), and can be Jinja templated.

Note: `template_searchpath` must be appropriately set while using arbitrary locations of the template file.

[GitHub] [airflow] leahecole opened a new issue #17348: Add support for hyperparameter tuning on GCP Cloud AI

leahecole opened a new issue #17348:

URL: https://github.com/apache/airflow/issues/17348

@darshan-majithiya had opened #15429 to add the hyperparameter tuning PR but it's gone stale. I'm adding this issue to see if they want to pick it back up, or if not, if someone wants to pick up where they left off in the spirit of open source 😄

[GitHub] [airflow] ephraimbuddy commented on a change in pull request #17347: Handle and log exceptions raised during task callback

ephraimbuddy commented on a change in pull request #17347:

URL: https://github.com/apache/airflow/pull/17347#discussion_r680145422

##

File path: airflow/models/taskinstance.py

##

@@ -1343,18 +1343,27 @@ def _run_finished_callback(self, error: Optional[Union[str, Exception]] = None)
             if task.on_failure_callback is not None:
                 context = self.get_template_context()
                 context["exception"] = error
-                task.on_failure_callback(context)
+                try:
+                    task.on_failure_callback(context)
+                except Exception:
+                    log.exception("Failed when executing execute on_failure_callback:")
         elif self.state == State.SUCCESS:
             task = self.task
             if task.on_success_callback is not None:
                 context = self.get_template_context()
-                task.on_success_callback(context)
+                try:
+                    task.on_success_callback(context)
+                except Exception:

Review comment:

Nice. I’m suggesting we have a test for this change
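The kind of test being asked for could look roughly like the following. It is a stand-alone sketch, not the PR's code: `run_callback_safely` is a hypothetical helper that mirrors the `try`/`except` pattern from the diff, so the behaviour can be checked without an Airflow installation.

```python
import logging

log = logging.getLogger(__name__)


def run_callback_safely(callback, context, name):
    """Call `callback(context)`; log and swallow any exception it raises.

    Returns True if the callback ran without raising, False otherwise.
    """
    if callback is None:
        return True
    try:
        callback(context)
    except Exception:
        # Same idea as the diff: record the traceback, do not re-raise.
        log.exception("Failed when executing %s:", name)
        return False
    return True


def failing_callback(context):
    """A callback that always raises, standing in for a buggy user callback."""
    raise ValueError("boom")


calls = []
ok_result = run_callback_safely(calls.append, {"exception": None}, "on_success_callback")
bad_result = run_callback_safely(failing_callback, {"exception": None}, "on_failure_callback")
```

A real test in the Airflow suite would exercise `_run_finished_callback` itself; the point here is only that a raising callback is logged and does not propagate.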


[GitHub] [airflow] sreenath-kamath commented on issue #17340: Retrieve session logs when using Livy Operator

sreenath-kamath commented on issue #17340:

URL: https://github.com/apache/airflow/issues/17340#issuecomment-890069349

I can submit a PR for this. It will be a good addition to the LivyOperator.

[GitHub] [airflow] mehmax commented on pull request #17321: Enable specifying dictionary paths in `template_fields_renderers`

mehmax commented on pull request #17321:

URL: https://github.com/apache/airflow/pull/17321#issuecomment-890058655

Hi @nathadfield, checking and rendering op_kwargs individually would totally solve the issue I faced. Looks good to me now!

[GitHub] [airflow] jedcunningham commented on a change in pull request #17236: [Airflow 16364] Add conn_timeout and cmd_timeout params to SSHOperator; add conn_timeout param to SSHHook

jedcunningham commented on a change in pull request #17236:

URL: https://github.com/apache/airflow/pull/17236#discussion_r680117132

##

File path: airflow/providers/ssh/hooks/ssh.py

##

@@ -56,7 +58,9 @@ class SSHHook(BaseHook):
     :type key_file: str
     :param port: port of remote host to connect (Default is paramiko SSH_PORT)
     :type port: int
-    :param timeout: timeout for the attempt to connect to the remote_host.
+    :param conn_timeout: timeout for the attempt to connect to the remote_host.
+    :type conn_timeout: int
+    :param timeout: (Deprecated). timeout for the attempt to connect to the remote_host.

Review comment:

Should we mention what param to use instead?

##

File path: airflow/providers/ssh/operators/ssh.py

##

@@ -43,7 +46,11 @@ class SSHOperator(BaseOperator):
     :type remote_host: str
     :param command: command to execute on remote host. (templated)
     :type command: str
-    :param timeout: timeout (in seconds) for executing the command. The default is 10 seconds.
+    :param conn_timeout: timeout (in seconds) for maintaining the connection. The default is 10 seconds.
+    :type conn_timeout: int
+    :param cmd_timeout: timeout (in seconds) for executing the command. The default is 10 seconds.
+    :type cmd_timeout: int
+    :param timeout: (deprecated) timeout (in seconds) for executing the command. The default is 10 seconds.

Review comment:

Here as well?
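One way to name the replacement parameter is in the deprecation warning itself. The sketch below illustrates that for the hook's `timeout`/`conn_timeout` pair; `resolve_timeout` and the warning text are hypothetical, not the PR's implementation, and the default of 10 is taken from the docstring above.

```python
import warnings


def resolve_timeout(timeout=None, conn_timeout=None, default=10):
    """Pick the connection timeout, warning when the deprecated name is used."""
    if timeout is not None:
        # The message tells the user which parameter to switch to.
        warnings.warn(
            "Parameter `timeout` is deprecated. Please use `conn_timeout` instead.",
            DeprecationWarning,
            stacklevel=2,
        )
    if conn_timeout is not None:
        return conn_timeout
    if timeout is not None:
        return timeout
    return default


with warnings.catch_warnings(record=True) as caught:
    warnings.simplefilter("always")
    both = resolve_timeout(timeout=10, conn_timeout=20)
    old_only = resolve_timeout(timeout=10)
    new_only = resolve_timeout(conn_timeout=20)
```

Letting `conn_timeout` win when both are given matches what `test_ssh_connection_with_conn_timeout_and_timeout` in this PR asserts (`timeout=10`, `conn_timeout=20`, connect called with `timeout=20`).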

##

File path: tests/providers/ssh/hooks/test_ssh.py

##

@@ -475,6 +504,120 @@ def test_ssh_connection_with_no_host_key_where_no_host_key_check_is_false(self,
             assert ssh_client.return_value.connect.called is True
             assert ssh_client.return_value.get_host_keys.return_value.add.called is False
+    @mock.patch('airflow.providers.ssh.hooks.ssh.paramiko.SSHClient')
+    def test_ssh_connection_with_conn_timeout(self, ssh_mock):
+        hook = SSHHook(
+            remote_host='remote_host',
+            port='port',
+            username='username',
+            password='password',
+            conn_timeout=20,
+            key_file='fake.file',
+        )
+
+        with hook.get_conn():
+            ssh_mock.return_value.connect.assert_called_once_with(
+                hostname='remote_host',
+                username='username',
+                password='password',
+                key_filename='fake.file',
+                timeout=20,
+                compress=True,
+                port='port',
+                sock=None,
+                look_for_keys=True,
+            )
+
+    @mock.patch('airflow.providers.ssh.hooks.ssh.paramiko.SSHClient')
+    def test_ssh_connection_with_conn_timeout_and_timeout(self, ssh_mock):
+        hook = SSHHook(
+            remote_host='remote_host',
+            port='port',
+            username='username',
+            password='password',
+            timeout=10,
+            conn_timeout=20,
+            key_file='fake.file',
+        )
+
+        with hook.get_conn():
+            ssh_mock.return_value.connect.assert_called_once_with(
+                hostname='remote_host',
+                username='username',
+                password='password',
+                key_filename='fake.file',
+                timeout=20,
+                compress=True,
+                port='port',
+                sock=None,
+                look_for_keys=True,
+            )
+
+    @mock.patch('airflow.providers.ssh.hooks.ssh.paramiko.SSHClient')
+    def test_ssh_connection_with_timeout_extra(self, ssh_mock):
+        hook = SSHHook(
+            ssh_conn_id=self.CONN_SSH_WITH_TIMEOUT_EXTRA,
+            remote_host='remote_host',
+            port='port',
+            username='username',
+            timeout=10,
+        )
+
+        with hook.get_conn():
+            ssh_mock.return_value.connect.assert_called_once_with(
+                hostname='remote_host',
+                username='username',
+                timeout=20,

Review comment:

I realize the existing behavior was for extras to win, but that seems backwards to me. I'd expect the param to win.

Maybe we leave `timeout` as-is, but invert it for `conn_timeout` since it is new?

(`hostname` is a good example)
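The inverted precedence suggested here could be sketched as follows. This is an illustration only: `pick_conn_timeout` is a hypothetical helper, and the assumption that the connection extra may arrive as a string is not taken from the PR.

```python
def pick_conn_timeout(param=None, extra=None, default=10):
    """Resolve `conn_timeout` with the explicit parameter taking precedence.

    `param` is the value passed to the hook constructor, `extra` is the value
    read from the connection extras (assumed here to possibly be a string).
    """
    if param is not None:
        return param
    if extra is not None:
        return int(extra)
    return default


param_wins = pick_conn_timeout(param=10, extra="20")
extra_used = pick_conn_timeout(extra="20")
fallback = pick_conn_timeout()
```

With this ordering, a hook created with an explicit value of 10 against a connection whose extras say 20 would connect with 10, the opposite of what `test_ssh_connection_with_timeout_extra` currently asserts for `timeout`.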


[GitHub] [airflow] subkanthi commented on pull request #17322: Added print statements for clarity in provider yaml checks

subkanthi commented on pull request #17322:

URL: https://github.com/apache/airflow/pull/17322#issuecomment-890056376

> still some static test fail (also build images) - can you please use pre-commits and rebase to latest main ?

Thanks @potiuk, I fixed the static checks, but the 2 image failures don't seem to be related to the commits.

[GitHub] [airflow] mehmax commented on pull request #17239: Improved UI rendering of templated dicts and lists