[GitHub] [airflow] uranusjr commented on a change in pull request #22333: Patch sql_alchemy_conn if old postgres scheme used

uranusjr commented on a change in pull request #22333:

URL: https://github.com/apache/airflow/pull/22333#discussion_r828800148

##

File path: airflow/settings.py

##

@@ -228,6 +229,19 @@ def configure_vars():
     global PLUGINS_FOLDER
     global DONOT_MODIFY_HANDLERS
     SQL_ALCHEMY_CONN = conf.get('core', 'SQL_ALCHEMY_CONN')
+
+    # as of sqlalchemy 1.4, scheme `postgres+psycopg2` must be replaced with `postgresql`
+    parsed = urlparse(SQL_ALCHEMY_CONN)
+    bad_scheme = 'postgres+psycopg2'
+    if parsed.scheme == bad_scheme:
+        warnings.warn(
+            f"Scheme for metadata sql alchemy connection is `{bad_scheme}`."
+            "As of sqlalchemy 1.4 this is no longer supported. You must change "
+            "to `postgresql`",
+            PendingDeprecationWarning,

Review comment:

Should this be `DeprecationWarning`?
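The sketch below shows the normalization this diff is aiming at. It uses only `urllib.parse` and `warnings`, and rewrites both the bare `postgres` scheme and the `postgres+psycopg2` scheme to `postgresql`, the target the diff's comment names. Following the reviewer's question, it emits a `DeprecationWarning`. Python documents `PendingDeprecationWarning` for features that will be deprecated in the future, whereas this scheme is already unsupported. The function name `normalize_sql_alchemy_conn` is hypothetical and is not part of Airflow.

```python
import warnings
from urllib.parse import urlsplit, urlunsplit

# Legacy schemes SQLAlchemy 1.4+ no longer accepts, mapped to the replacement
# the diff recommends (assumption: both spellings should become `postgresql`).
_LEGACY_SCHEMES = {
    "postgres": "postgresql",
    "postgres+psycopg2": "postgresql",
}


def normalize_sql_alchemy_conn(conn: str) -> str:
    """Return `conn` with a legacy postgres scheme rewritten, warning when it changes."""
    parts = urlsplit(conn)
    new_scheme = _LEGACY_SCHEMES.get(parts.scheme)
    if new_scheme is None:
        return conn  # nothing to patch
    warnings.warn(
        f"Scheme `{parts.scheme}` for the metadata database connection is not "
        f"supported by SQLAlchemy 1.4+; use `{new_scheme}` instead.",
        DeprecationWarning,
        stacklevel=2,
    )
    # Only the scheme changes; credentials, host, port and path are kept as-is.
    return urlunsplit(parts._replace(scheme=new_scheme))
```

`DeprecationWarning` is hidden by default outside `__main__` unless warning filters enable it. A caller that must be noticed may therefore also want to log the change.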

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: commits-unsubscr...@airflow.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

[GitHub] [airflow] uranusjr commented on a change in pull request #22332: Events Timetable

uranusjr commented on a change in pull request #22332: URL: https://github.com/apache/airflow/pull/22332#discussion_r828798735 ## File path: airflow/timetables/events.py ## @@ -0,0 +1,83 @@ +# Licensed to the Apache Software Foundation (ASF) under one +# or more contributor license agreements. See the NOTICE file +# distributed with this work for additional information +# regarding copyright ownership. The ASF licenses this file +# to you under the Apache License, Version 2.0 (the +# "License"); you may not use this file except in compliance +# with the License. You may obtain a copy of the License at +# +# http://www.apache.org/licenses/LICENSE-2.0 +# +# Unless required by applicable law or agreed to in writing, +# software distributed under the License is distributed on an +# "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY +# KIND, either express or implied. See the License for the +# specific language governing permissions and limitations +# under the License. + +from typing import Iterable, Optional + +import numpy as np +import pendulum +from pendulum import DateTime + +from airflow.timetables.base import DagRunInfo, DataInterval, TimeRestriction, Timetable +from airflow.timetables.simple import NullTimetable + + +class EventsTimetable(NullTimetable): Review comment: `NullTimetable` (and other non-recurring timetables) get special treatment in the UI. I think it's better to inherit from `Timetable` (the root class) instead. It would also be useful to implement `__repr__`, `summary`, and `description` for UI representation.
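The sketch below illustrates the UI-facing pieces the reviewer mentions. It is standalone and does not import Airflow. `EventsScheduleSketch` is a hypothetical class. The strings it returns are assumptions about what a compact schedule label (`summary`), longer explanatory text (`description`), and `repr()` might show. A real implementation would subclass `airflow.timetables.base.Timetable`, as suggested above.

```python
from datetime import datetime, timezone
from typing import Iterable, List


class EventsScheduleSketch:
    """Hypothetical stand-in for an events-based timetable (not an Airflow Timetable)."""

    def __init__(self, event_dates: Iterable[datetime], description: str = "") -> None:
        # Keep events sorted so lookups and displayed text are deterministic.
        self.event_dates: List[datetime] = sorted(event_dates)
        self._description = description

    @property
    def summary(self) -> str:
        # Short label, e.g. for a compact schedule column.
        return f"{len(self.event_dates)} Events"

    @property
    def description(self) -> str:
        # Longer text: the user's description if given, else the first few dates.
        if self._description:
            return self._description
        shown = ", ".join(d.isoformat() for d in self.event_dates[:3])
        more = ", ..." if len(self.event_dates) > 3 else ""
        return f"Runs at: {shown}{more}"

    def __repr__(self) -> str:
        return f"{type(self).__name__}({self.summary!r})"


if __name__ == "__main__":
    sketch = EventsScheduleSketch([datetime(2022, 3, 20, tzinfo=timezone.utc)])
    print(repr(sketch), sketch.description)
```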


[GitHub] [airflow] uranusjr opened a new pull request #22334: Add fk between xcom and task instance

uranusjr opened a new pull request #22334: URL: https://github.com/apache/airflow/pull/22334 As discussed previously. Also improved the dag_run relationship to use the dag_run_id field directly because why not?
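The sketch below shows what a composite foreign key from XCom rows to their task instance buys. It is self-contained and uses SQLite from the standard library. The table and column names are simplified assumptions, not the schema from #22334. An XCom row cannot reference a nonexistent task instance, and deleting a task instance cascades to its XComs.

```python
import sqlite3

# Simplified schema: columns mirror a task-instance key of
# (dag_id, task_id, run_id, map_index); names are assumptions, not #22334's migration.
SCHEMA = """
CREATE TABLE task_instance (
    dag_id    TEXT    NOT NULL,
    task_id   TEXT    NOT NULL,
    run_id    TEXT    NOT NULL,
    map_index INTEGER NOT NULL DEFAULT -1,
    PRIMARY KEY (dag_id, task_id, run_id, map_index)
);
CREATE TABLE xcom (
    dag_id    TEXT    NOT NULL,
    task_id   TEXT    NOT NULL,
    run_id    TEXT    NOT NULL,
    map_index INTEGER NOT NULL DEFAULT -1,
    "key"     TEXT    NOT NULL,
    value     BLOB,
    PRIMARY KEY (dag_id, task_id, run_id, map_index, "key"),
    FOREIGN KEY (dag_id, task_id, run_id, map_index)
        REFERENCES task_instance (dag_id, task_id, run_id, map_index)
        ON DELETE CASCADE
);
"""


def connect() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    # SQLite only enforces foreign keys when this pragma is on for the connection.
    conn.execute("PRAGMA foreign_keys = ON")
    conn.executescript(SCHEMA)
    return conn
```

With the pragma on, inserting an XCom for a missing task instance raises `sqlite3.IntegrityError`, and deleting a task instance removes its XComs.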

[GitHub] [airflow] shuhoy commented on pull request #22252: Refactor BigQuery to GCS Operator

shuhoy commented on pull request #22252: URL: https://github.com/apache/airflow/pull/22252#issuecomment-1070349062 All checks passed!!:rocket:

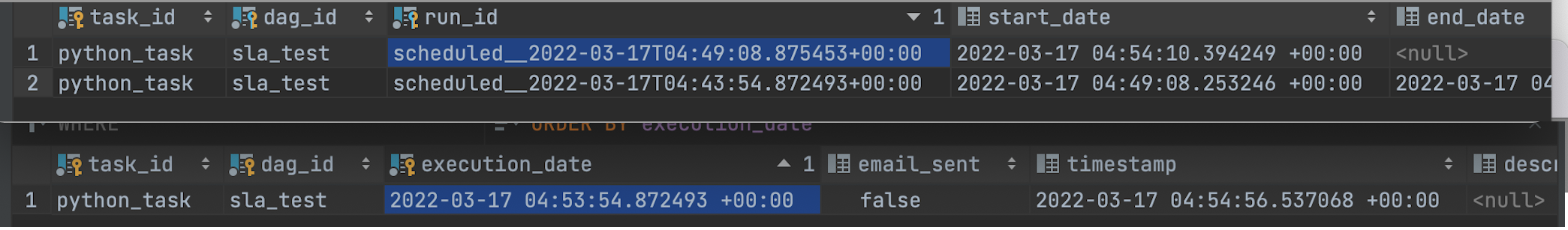

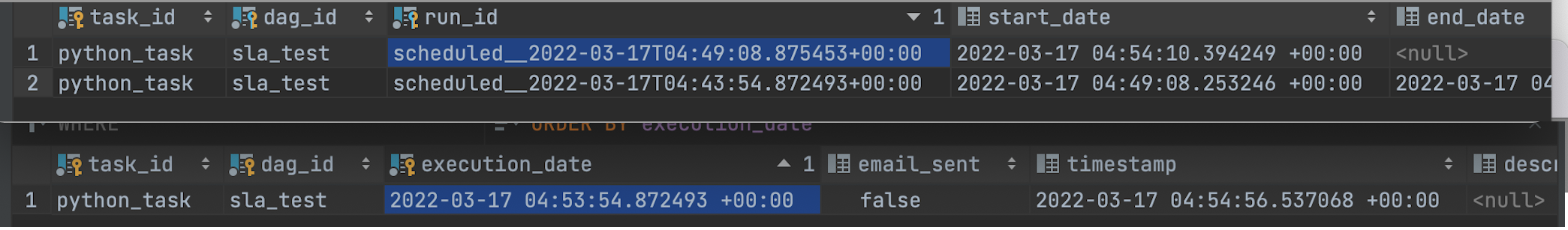

[GitHub] [airflow] dstandish commented on a change in pull request #22184: Add run_id and map_index to SlaMiss

dstandish commented on a change in pull request #22184: URL: https://github.com/apache/airflow/pull/22184#discussion_r828760938 ## File path: airflow/dag_processing/processor.py ## @@ -412,20 +410,20 @@ def manage_slas(self, dag: DAG, session: Session = None) -> None: else: while next_info.logical_date < ts: next_info = dag.next_dagrun_info(next_info.data_interval, restricted=False) - if next_info is None: break -if (ti.dag_id, ti.task_id, next_info.logical_date) in recorded_slas_query: +next_run_id = DR.generate_run_id(DagRunType.SCHEDULED, next_info.logical_date) +if (ti.dag_id, ti.task_id, next_run_id, ti.map_index) in recorded_sla_misses: Review comment: Though it is weird... I don't understand why we immediately call next run here: https://github.com/apache/airflow/blob/main/airflow/dag_processing/processor.py#L414 We're already at "the next run" relative to the last completed TI, so why don't we check whether _that_ run has an SLA miss? It seems we skip to "the run _after_ the run after" the latest TI, so we could only get an SLA miss if Airflow is 2 runs behind 🤪 I need to do some live testing to understand how this actually behaves. Update: I did some live testing, and yeah, SLAs are all screwed up. Here's an example: I created a task that is sleep(300), with an SLA of one minute, that runs every 5 minutes. Here you can see that the first TI gets no miss (expected) and the second TI does not get any miss either. The one that is created is always 2 runs ahead of the latest successful TI 🤦 and one ahead of the one that is running. Also visible here is an odd quirk that appears to be a bug related to timetables or data intervals or something in that area: the execution dates are wiggly, i.e. not a value of `N * timedelta + dag.start_date`; they have seemingly random millisecond precision. As a consequence, not only are the SlaMiss records in the future, but I have found that they may _never_ correspond to TIs that actually come into existence. E.g. 
the SlaMiss you see above is 53m:54s and change, but observe here that after waiting a few minutes the exec date for the TI actually created is 54m:11s (we never end up seeing a TI with 53m:54s). https://user-images.githubusercontent.com/15932138/158741876-f8085c2c-70c7-4c47-a663-216a545f701b.png
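The millisecond drift described above matters for the new lookup key. The sketch below assumes scheduled run ids have the form `scheduled__<ISO-8601 logical date>`, the format `DagRun.generate_run_id` produces for `DagRunType.SCHEDULED`. Under that assumption, a logical date that is off the `N * timedelta + start_date` grid by even a few milliseconds yields a different run id. A `(dag_id, task_id, run_id, map_index)` key built from a drifted date therefore cannot line up with a run created on the grid. Both helper names are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def scheduled_run_id(logical_date: datetime) -> str:
    # Assumption: mirrors the "<run_type>__<ISO timestamp>" format that
    # DagRun.generate_run_id(DagRunType.SCHEDULED, ...) produces.
    return f"scheduled__{logical_date.isoformat()}"


def on_schedule_grid(logical_date: datetime, start_date: datetime, interval: timedelta) -> bool:
    # True only when logical_date == start_date + N * interval for some integer N >= 0.
    offset = logical_date - start_date
    return offset >= timedelta(0) and offset % interval == timedelta(0)


if __name__ == "__main__":
    start = datetime(2022, 3, 17, tzinfo=timezone.utc)
    every_5_min = timedelta(minutes=5)
    expected = start + 10 * every_5_min
    drifted = expected + timedelta(milliseconds=137)  # the "wiggly" case
    print(on_schedule_grid(expected, start, every_5_min), on_schedule_grid(drifted, start, every_5_min))
    print(scheduled_run_id(expected) == scheduled_run_id(drifted))
```

The on-grid date gives `scheduled__2022-03-17T00:50:00+00:00`. The drifted one gives `scheduled__2022-03-17T00:50:00.137000+00:00`, which no on-grid run shares.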

[GitHub] [airflow] dstandish commented on a change in pull request #22184: Add run_id and map_index to SlaMiss

dstandish commented on a change in pull request #22184: URL: https://github.com/apache/airflow/pull/22184#discussion_r828760938 ## File path: airflow/dag_processing/processor.py ## @@ -412,20 +410,20 @@ def manage_slas(self, dag: DAG, session: Session = None) -> None: else: while next_info.logical_date < ts: next_info = dag.next_dagrun_info(next_info.data_interval, restricted=False) - if next_info is None: break -if (ti.dag_id, ti.task_id, next_info.logical_date) in recorded_slas_query: +next_run_id = DR.generate_run_id(DagRunType.SCHEDULED, next_info.logical_date) +if (ti.dag_id, ti.task_id, next_run_id, ti.map_index) in recorded_sla_misses: Review comment: though it is weird ... i don't understand why we immediately call next run here https://github.com/apache/airflow/blob/main/airflow/dag_processing/processor.py#L414 we're already at "the next run" relative to the last completed TI -- why don't we see if _that_ run has an SLA miss? it seems we skip to "the run _after_ the run after" the latest TI. so that we could only get an SLA miss if airflow is 2 runs behind 🤪 i need to actually do some live testing to understand how this actually behaves. update: i did some live testing, and yeah, SLAs are all screwed up here's an example:  i created a task that is sleep(300), SLA of one minute, and runs every 5 minutes. here you can see that first TI gets no miss (expected) and the second TI does not get any miss. the one that is created is always 2 runs ahead of the latest successful TI 🤦 and one ahead of the one that is running. also visible here is an odd quirk that appears to be a bug re timetables or data intervals or something in that area: the execution dates are wiggly i.e. not a value `N * timedelta + dag.start_date`. they have seemingly random millis precision. and as a consequence, not only are the SlaMiss records in the future, but i have found that they may _never_ correspond to TIs that actually come into existence. 
🤷 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@airflow.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [airflow] uranusjr commented on a change in pull request #22272: Add map_index support to all task instance-related views

uranusjr commented on a change in pull request #22272:

URL: https://github.com/apache/airflow/pull/22272#discussion_r828749020

##

File path: airflow/www/static/js/tree/StatusBox.jsx

##

@@ -36,9 +36,9 @@ const StatusBox = ({
   group, instance, containerRef, extraLinks = [],
 }) => {
   const {
-    executionDate, taskId, tryNumber = 0, operator, runId,
+    executionDate, taskId, tryNumber = 0, operator, runId, mapIndex,
   } = instance;
-  const onClick = () => executionDate && callModal(taskId, executionDate, extraLinks, tryNumber, operator === 'SubDagOperator' || undefined, runId);
+  const onClick = () => executionDate && callModal(taskId, executionDate, extraLinks, tryNumber, operator === 'SubDagOperator', runId, mapIndex);

Review comment:

Same, does it matter to use false vs undefined?


[GitHub] [airflow] uranusjr commented on a change in pull request #22272: Add map_index support to all task instance-related views

uranusjr commented on a change in pull request #22272:

URL: https://github.com/apache/airflow/pull/22272#discussion_r828748779

##

File path: airflow/www/static/js/graph.js

##

@@ -172,8 +172,15 @@ function draw() {
       const task = tasks[nodeId];
       const tryNumber = taskInstances[nodeId].try_number || 0;
-      if (task.task_type === 'SubDagOperator') callModal(nodeId, executionDate, task.extra_links, tryNumber, true, dagRunId);
-      else callModal(nodeId, executionDate, task.extra_links, tryNumber, undefined, dagRunId);
+      callModal(
+        nodeId,
+        executionDate,
+        task.extra_links,
+        tryNumber,
+        task.task_tupe === 'SubDagOperator',

Review comment:

```suggestion
        task.task_tupe === 'SubDagOperator' ? true : undefined,
```

Does it make a difference? This is closer to the original.



[GitHub] [airflow] collinmcnulty opened a new pull request #22332: Events Timetable

collinmcnulty opened a new pull request #22332: URL: https://github.com/apache/airflow/pull/22332 I've added a new Timetable that I believe will be widely useful for timing based on sporting events, planned communication campaigns, and other schedules that are arbitrary and irregular but predictable. I need to put more thought into (and could use help with) testing, but I wanted to put it out in draft form to solicit feedback.
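The core of an "arbitrary and irregular but predictable" schedule can be sketched as a sorted list of event datetimes plus a search for the first event after the last run. This standalone sketch uses `bisect` and is not the implementation in #22332, which imports NumPy. `next_event` is a hypothetical helper, and its input list must already be sorted.

```python
import bisect
from datetime import datetime, timezone
from typing import Optional, Sequence


def next_event(events: Sequence[datetime], after: Optional[datetime]) -> Optional[datetime]:
    """Return the first event strictly after `after`, or the first event if `after` is None.

    `events` must be sorted in ascending order; returns None when no event remains.
    """
    if not events:
        return None
    if after is None:
        return events[0]
    # bisect_right skips past any event equal to `after`, so the same event never runs twice.
    i = bisect.bisect_right(events, after)
    return events[i] if i < len(events) else None


if __name__ == "__main__":
    utc = timezone.utc
    events = sorted([datetime(2022, 3, 20, tzinfo=utc), datetime(2022, 3, 18, tzinfo=utc)])
    print(next_event(events, datetime(2022, 3, 18, tzinfo=utc)))
```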

[GitHub] [airflow] pohek321 commented on issue #22330: Add functionality to DatabricksHook to create notebooks

pohek321 commented on issue #22330: URL: https://github.com/apache/airflow/issues/22330#issuecomment-1070306241 Created pull request #22331

[GitHub] [airflow] bbovenzi commented on issue #21201: Add Trigger Rule Display to Graph View

bbovenzi commented on issue #21201: URL: https://github.com/apache/airflow/issues/21201#issuecomment-1070306044 I agree we can do it in a new graph view, but I don't think this would be too complicated to do for the current one either.

[GitHub] [airflow] bbovenzi commented on issue #22325: ReST API : get_dag should return more than a simplified view of the dag

bbovenzi commented on issue #22325: URL: https://github.com/apache/airflow/issues/22325#issuecomment-1070305496 Good idea! I wonder if it should be on get dag or get dag details.

[GitHub] [airflow] boring-cyborg[bot] commented on pull request #22331: Add import_notebook method to databricks hook

boring-cyborg[bot] commented on pull request #22331: URL: https://github.com/apache/airflow/pull/22331#issuecomment-1070305458 Congratulations on your first Pull Request and welcome to the Apache Airflow community! If you have any issues or are unsure about anything please check our Contribution Guide (https://github.com/apache/airflow/blob/main/CONTRIBUTING.rst) Here are some useful points: - Pay attention to the quality of your code (flake8, mypy and type annotations). Our [pre-commits](https://github.com/apache/airflow/blob/main/STATIC_CODE_CHECKS.rst#prerequisites-for-pre-commit-hooks) will help you with that. - In case of a new feature add useful documentation (in docstrings or in `docs/` directory). Adding a new operator? Check this short [guide](https://github.com/apache/airflow/blob/main/docs/apache-airflow/howto/custom-operator.rst) Consider adding an example DAG that shows how users should use it. - Consider using [Breeze environment](https://github.com/apache/airflow/blob/main/BREEZE.rst) for testing locally, it’s a heavy docker but it ships with a working Airflow and a lot of integrations. - Be patient and persistent. It might take some time to get a review or get the final approval from Committers. - Please follow [ASF Code of Conduct](https://www.apache.org/foundation/policies/conduct) for all communication including (but not limited to) comments on Pull Requests, Mailing list and Slack. - Be sure to read the [Airflow Coding style](https://github.com/apache/airflow/blob/main/CONTRIBUTING.rst#coding-style-and-best-practices). Apache Airflow is a community-driven project and together we are making it better 🚀. In case of doubts contact the developers at: Mailing List: d...@airflow.apache.org Slack: https://s.apache.org/airflow-slack

[GitHub] [airflow] pohek321 opened a new pull request #22331: Add import_notebook method to databricks hook

pohek321 opened a new pull request #22331: URL: https://github.com/apache/airflow/pull/22331 --- **^ Add meaningful description above** Read the **[Pull Request Guidelines](https://github.com/apache/airflow/blob/main/CONTRIBUTING.rst#pull-request-guidelines)** for more information. In case of fundamental code change, Airflow Improvement Proposal ([AIP](https://cwiki.apache.org/confluence/display/AIRFLOW/Airflow+Improvements+Proposals)) is needed. In case of a new dependency, check compliance with the [ASF 3rd Party License Policy](https://www.apache.org/legal/resolved.html#category-x). In case of backwards incompatible changes please leave a note in [UPDATING.md](https://github.com/apache/airflow/blob/main/UPDATING.md).


[airflow] branch main updated: Add recipe for BeamRunGoPipelineOperator (#22296)

This is an automated email from the ASF dual-hosted git repository.

kamilbregula pushed a commit to branch main

in repository https://gitbox.apache.org/repos/asf/airflow.git

The following commit(s) were added to refs/heads/main by this push:

new 4a1503b Add recipe for BeamRunGoPipelineOperator (#22296)

4a1503b is described below

commit 4a1503b39b0aaf50940c29ac886c6eeda35a79ff

Author: pierrejeambrun

AuthorDate: Thu Mar 17 04:57:22 2022 +0100

Add recipe for BeamRunGoPipelineOperator (#22296)

---

airflow/providers/apache/beam/hooks/beam.py| 10 +-

.../docker-images-recipes/go-beam.Dockerfile | 37 ++

docs/docker-stack/recipes.rst | 20

tests/providers/apache/beam/hooks/test_beam.py | 21 +++-

4 files changed, 86 insertions(+), 2 deletions(-)

diff --git a/airflow/providers/apache/beam/hooks/beam.py b/airflow/providers/apache/beam/hooks/beam.py
index 9be1a75..0644e02 100644
--- a/airflow/providers/apache/beam/hooks/beam.py
+++ b/airflow/providers/apache/beam/hooks/beam.py
@@ -20,12 +20,13 @@ import json
 import os
 import select
 import shlex
+import shutil
 import subprocess
 import textwrap
 from tempfile import TemporaryDirectory
 from typing import Callable, List, Optional
 
-from airflow.exceptions import AirflowException
+from airflow.exceptions import AirflowConfigException, AirflowException
 from airflow.hooks.base import BaseHook
 from airflow.providers.google.go_module_utils import init_module, install_dependencies
 from airflow.utils.log.logging_mixin import LoggingMixin
@@ -307,6 +308,13 @@ class BeamHook(BaseHook):
             source with GCSHook.
         :return:
         """
+        if shutil.which("go") is None:
+            raise AirflowConfigException(
+                "You need to have Go installed to run beam go pipeline. See https://go.dev/doc/install "
+                "installation guide. If you are running airflow in Docker see more info at "
+                "'https://airflow.apache.org/docs/docker-stack/recipes.html'."
+            )
+
         if "labels" in variables:
             variables["labels"] = json.dumps(variables["labels"], separators=(",", ":"))

diff --git a/docs/docker-stack/docker-images-recipes/go-beam.Dockerfile b/docs/docker-stack/docker-images-recipes/go-beam.Dockerfile
new file mode 100644
index 000..b224fe1
--- /dev/null
+++ b/docs/docker-stack/docker-images-recipes/go-beam.Dockerfile
@@ -0,0 +1,37 @@
+# Licensed to the Apache Software Foundation (ASF) under one or more
+# contributor license agreements. See the NOTICE file distributed with
+# this work for additional information regarding copyright ownership.
+# The ASF licenses this file to You under the Apache License, Version 2.0
+# (the "License"); you may not use this file except in compliance with
+# the License. You may obtain a copy of the License at
+#
+#     http://www.apache.org/licenses/LICENSE-2.0
+#
+# Unless required by applicable law or agreed to in writing, software
+# distributed under the License is distributed on an "AS IS" BASIS,
+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
+# See the License for the specific language governing permissions and
+# limitations under the License.
+ARG BASE_AIRFLOW_IMAGE
+FROM ${BASE_AIRFLOW_IMAGE}
+
+SHELL ["/bin/bash", "-o", "pipefail", "-e", "-u", "-x", "-c"]
+
+USER 0
+
+ARG GO_VERSION=1.16.4
+ENV GO_INSTALL_DIR=/usr/local/go
+
+# Install Go
+RUN if [[ "$(uname -a)" = *"x86_64"* ]] ; then export ARCH=amd64 ; else export ARCH=arm64 ; fi \
+    && DOWNLOAD_URL="https://dl.google.com/go/go${GO_VERSION}.linux-${ARCH}.tar.gz" \
+    && TMP_DIR="$(mktemp -d)" \
+    && curl -fL "${DOWNLOAD_URL}" --output "${TMP_DIR}/go.linux-${ARCH}.tar.gz" \
+    && mkdir -p "${GO_INSTALL_DIR}" \
+    && tar xzf "${TMP_DIR}/go.linux-${ARCH}.tar.gz" -C "${GO_INSTALL_DIR}" --strip-components=1 \
+    && rm -rf "${TMP_DIR}"
+
+ENV GOROOT=/usr/local/go
+ENV PATH="$GOROOT/bin:$PATH"
+
+USER ${AIRFLOW_UID}

diff --git a/docs/docker-stack/recipes.rst b/docs/docker-stack/recipes.rst
index a1c5777..1d258ab 100644
--- a/docs/docker-stack/recipes.rst
+++ b/docs/docker-stack/recipes.rst
@@ -70,3 +70,23 @@ Then build a new image.
     --pull \
     --build-arg BASE_AIRFLOW_IMAGE="apache/airflow:2.0.2" \
     --tag my-airflow-image:0.0.1
+
+Apache Beam Go Stack installation
+---------------------------------
+
+To be able to run Beam Go Pipeline with the :class:`~airflow.providers.apache.beam.operators.beam.BeamRunGoPipelineOperator`,
+you will need Go in your container. Install airflow with ``apache-airflow-providers-google>=6.5.0`` and ``apache-airflow-providers-apache-beam>=3.2.0``
+
+Create a new Dockerfile like the one shown below.
+
+.. exampleinclude:: /docker-images-recipes/go-beam.Dockerfile
+    :language: dockerfile
+
+Then build a new image.
+
+.. code-block:: bash
+
+  docker build . \
+    --pull \
+    --build-arg BASE_AIRFLOW_
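The hook change in this commit fails fast when the `go` binary is missing, using `shutil.which`. Below is a standalone sketch of the same check that runs without Airflow. `MissingBinaryError` is a hypothetical stand-in for `AirflowConfigException`, and `require_go` is not an Airflow function.

```python
import shutil
from typing import Optional


class MissingBinaryError(RuntimeError):
    """Hypothetical stand-in for AirflowConfigException in this sketch."""


def require_go(executable: str = "go", path: Optional[str] = None) -> str:
    """Return the full path of the Go executable, or raise if it cannot be found.

    `path` overrides the PATH search list, as in shutil.which.
    """
    # shutil.which returns None when the executable is not found on the search path.
    location = shutil.which(executable, path=path)
    if location is None:
        raise MissingBinaryError(
            f"`{executable}` was not found. See https://go.dev/doc/install for installation; "
            "when running Airflow in Docker, see "
            "https://airflow.apache.org/docs/docker-stack/recipes.html."
        )
    return location
```

Checking before building the `go run` command gives the user an actionable message. Without the check, the first sign of trouble is an opaque subprocess failure.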

[GitHub] [airflow] mik-laj merged pull request #22296: Add recipe for BeamRunGoPipelineOperator

mik-laj merged pull request #22296: URL: https://github.com/apache/airflow/pull/22296

[GitHub] [airflow] mik-laj closed issue #21545: Add Go to docker images

mik-laj closed issue #21545: URL: https://github.com/apache/airflow/issues/21545
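For context on adding Go to the images: the `go-beam.Dockerfile` recipe added in #22296 picks `amd64` when `uname -a` contains `x86_64` and falls back to `arm64` otherwise. Below is a Python sketch of the same tarball URL construction. It maps the machine name (what `uname -m` or `platform.machine()` reports) explicitly and rejects unknown architectures instead of defaulting to `arm64`. The mapping table and `go_download_url` are assumptions for illustration.

```python
import platform
from typing import Optional

# Machine names (as reported by `uname -m` / platform.machine()) mapped to the
# architecture suffixes used in Go's Linux release tarball names. Assumption:
# only the two architectures the recipe handles are covered.
_GO_ARCH = {
    "x86_64": "amd64",
    "amd64": "amd64",
    "aarch64": "arm64",
    "arm64": "arm64",
}


def go_download_url(version: str, machine: Optional[str] = None) -> str:
    """Build the Go tarball URL for `version`, defaulting to the current machine."""
    machine = (machine or platform.machine()).lower()
    arch = _GO_ARCH.get(machine)
    if arch is None:
        # Fail loudly rather than silently downloading a binary for the wrong CPU.
        raise ValueError(f"unsupported architecture: {machine!r}")
    return f"https://dl.google.com/go/go{version}.linux-{arch}.tar.gz"
```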

[GitHub] [airflow] github-actions[bot] commented on pull request #22296: Add recipe for BeamRunGoPipelineOperator

github-actions[bot] commented on pull request #22296: URL: https://github.com/apache/airflow/pull/22296#issuecomment-1070279820 The PR is likely OK to be merged with just a subset of tests for the default Python and Database versions, without running the full matrix of tests, because it does not modify the core of Airflow. If the committers decide that the full test matrix is needed, they will add the label 'full tests needed'. Then you should rebase to the latest main or amend the last commit of the PR, and push it with --force-with-lease.

[GitHub] [airflow] pohek321 opened a new issue #22330: Add functionality to DatabricksHook to create notebooks

pohek321 opened a new issue #22330: URL: https://github.com/apache/airflow/issues/22330 ### Description Currently, there is no way to programmatically create a notebook in Databricks using the core provider components ([DatabricksHook](https://registry.astronomer.io/providers/databricks/modules/databrickshook), [DatabricksRunNowOperator](https://registry.astronomer.io/providers/databricks/modules/databricksrunnowoperator), or [DatabricksSubmitRunOperator](https://registry.astronomer.io/providers/databricks/modules/databrickssubmitrunoperator)). If implemented, this change would allow Airflow users to create a Scala, R, Python, or SQL notebook in DBFS programmatically from Airflow. ### Use case/motivation This would be useful for our users who are hosting their notebooks in an Airflow repository and would like to utilize advantages of tools like jinja templating, orchestration, git version control, etc. ### Related issues _No response_ ### Are you willing to submit a PR? - [X] Yes I am willing to submit a PR! ### Code of Conduct - [X] I agree to follow this project's [Code of Conduct](https://github.com/apache/airflow/blob/main/CODE_OF_CONDUCT.md)
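Below is a sketch of the request such a feature would need to send. It assumes the Databricks Workspace API endpoint `POST /api/2.0/workspace/import`. That endpoint takes a target `path`, a `format` (`SOURCE` here), a `language` (`SCALA`, `PYTHON`, `SQL`, or `R`), base64-encoded `content`, and an `overwrite` flag. `build_workspace_import_request` is hypothetical and is not the `import_notebook` method from #22331. It only builds the payload and does not send it.

```python
import base64
import json
from typing import Tuple

# Languages the Workspace API accepts for SOURCE-format notebook imports.
_LANGUAGES = {"SCALA", "PYTHON", "SQL", "R"}


def build_workspace_import_request(
    path: str, source: str, language: str, overwrite: bool = False
) -> Tuple[str, str]:
    """Return (endpoint, JSON body) for a notebook import; hypothetical helper."""
    language = language.upper()
    if language not in _LANGUAGES:
        raise ValueError(f"language must be one of {sorted(_LANGUAGES)}, got {language!r}")
    body = {
        "path": path,  # absolute workspace path of the notebook to create
        "format": "SOURCE",
        "language": language,
        # The API expects the notebook source base64-encoded.
        "content": base64.b64encode(source.encode("utf-8")).decode("ascii"),
        "overwrite": overwrite,
    }
    return "/api/2.0/workspace/import", json.dumps(body)
```

Rendering a Jinja template into `source` before calling a helper like this is where the templating use case in the issue would plug in.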

[GitHub] [airflow] Madaditya commented on issue #20110: CORS access_control_allow_origin header never returned

Madaditya commented on issue #20110: URL: https://github.com/apache/airflow/issues/20110#issuecomment-1070231488 Was anybody able to find a fix for the CORS issue with the Airflow API? We are facing a similar issue and wondered if it was specific to v2.2.2.

[GitHub] [airflow] bskim45 commented on issue #21072: manage_sla firing notifications for the same sla miss instances repeatedly

bskim45 commented on issue #21072: URL: https://github.com/apache/airflow/issues/21072#issuecomment-1070181632 I'm experiencing a similar issue that is somewhat related to this. When the `sla` argument is provided but no SLA miss email is sent and no `sla_miss_callback` is specified, SlaMiss entries pile up in the `sla_miss` table with `notification_sent=false`. This causes the `DAGFileProcessor.manage_slas` call to time out while processing that callback. Quick example:

```python
import datetime

from airflow import DAG
from airflow.operators.dummy import DummyOperator
from airflow.utils.dates import days_ago

with DAG(
    dag_id="example_dag",
    schedule_interval='@hourly',
    start_date=days_ago(1),
    catchup=False,
) as dag:
    dummy_task = DummyOperator(
        task_id='dummy_task',
        sla=datetime.timedelta(hours=18),
    )
```
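Below is a toy model of the pile-up. It uses an in-memory SQLite table whose columns are a simplified assumption about `sla_miss`. If nothing ever sets `notification_sent`, every hourly miss stays pending, so each pass that scans unsent rows has more work than the last. This is an illustration only, not Airflow's `manage_slas` logic, and all helper names are hypothetical.

```python
import sqlite3
from datetime import datetime, timedelta


def make_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    # Simplified stand-in for the sla_miss table (columns are assumptions).
    conn.execute(
        """
        CREATE TABLE sla_miss (
            dag_id TEXT NOT NULL,
            task_id TEXT NOT NULL,
            execution_date TEXT NOT NULL,
            notification_sent INTEGER NOT NULL DEFAULT 0,
            PRIMARY KEY (dag_id, task_id, execution_date)
        )
        """
    )
    return conn


def record_miss(conn: sqlite3.Connection, dag_id: str, task_id: str, when: datetime) -> None:
    # INSERT OR IGNORE: re-detecting the same miss does not add a second row.
    conn.execute(
        "INSERT OR IGNORE INTO sla_miss (dag_id, task_id, execution_date) VALUES (?, ?, ?)",
        (dag_id, task_id, when.isoformat()),
    )


def pending_count(conn: sqlite3.Connection, dag_id: str) -> int:
    # The rows a notification pass would have to look at on every loop.
    row = conn.execute(
        "SELECT COUNT(*) FROM sla_miss WHERE dag_id = ? AND notification_sent = 0",
        (dag_id,),
    ).fetchone()
    return row[0]


if __name__ == "__main__":
    db = make_db()
    start = datetime(2022, 3, 16)
    for hour in range(24):  # one missed SLA per hourly run; nothing marks them sent
        record_miss(db, "example_dag", "dummy_task", start + timedelta(hours=hour))
    print(pending_count(db, "example_dag"))
```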

[jira] [Commented] (AIRFLOW-5071) Thousand os Executor reports task instance X finished (success) although the task says its queued. Was the task killed externally?

[

https://issues.apache.org/jira/browse/AIRFLOW-5071?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17507936#comment-17507936

]

ASF GitHub Bot commented on AIRFLOW-5071:

-

kenny813x201 commented on issue #10790:

URL: https://github.com/apache/airflow/issues/10790#issuecomment-1069820469

We also got the same error message. In our case, it turned out that we were

using the same variable name for different DAGs.

Changing the DAGs from `as dag` to something like `as dags1` and `as dags2`

solved the issue for us.

```
with DAG(
    "dag_name",
) as dag:
```

> Thousand os Executor reports task instance X finished (success) although the

> task says its queued. Was the task killed externally?

> --

>

> Key: AIRFLOW-5071

> URL: https://issues.apache.org/jira/browse/AIRFLOW-5071

> Project: Apache Airflow

> Issue Type: Bug

> Components: DAG, scheduler

>Affects Versions: 1.10.3

>Reporter: msempere

>Priority: Critical

> Fix For: 1.10.12

>

> Attachments: image-2020-01-27-18-10-29-124.png,

> image-2020-07-08-07-58-42-972.png

>

>

> I'm opening this issue because since I updated to 1.10.3 I'm seeing thousands

> of daily messages like the following in the logs:

>

> ```

> {{__init__.py:1580}} ERROR - Executor reports task instance 2019-07-29 00:00:00+00:00 [queued]> finished (success) although the task says

> its queued. Was the task killed externally?

> {{jobs.py:1484}} ERROR - Executor reports task instance 2019-07-29 00:00:00+00:00 [queued]> finished (success) although the task says

> its queued. Was the task killed externally?

> ```

> -It also looks like this is triggering thousands of daily emails because the

> flag to send email in case of failure is set to True.-

> I have Airflow set up to use Celery and Redis as a backend queue service.

--

This message was sent by Atlassian Jira

(v8.20.1#820001)

[GitHub] [airflow] kenny813x201 commented on issue #10790: Copy of [AIRFLOW-5071] JIRA: Thousands of Executor reports task instance X finished (success) although the task says its queued. Was the task

kenny813x201 commented on issue #10790: URL: https://github.com/apache/airflow/issues/10790#issuecomment-1069820469 We also got the same error message. In our case, it turned out that we were using the same variable name for different DAGs. Changing the DAGs from `as dag` to something like `as dags1` and `as dags2` solved the issue for us.

```
with DAG(
    "dag_name",
) as dag:
```
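One plausible explanation for why renaming helped: Airflow versions of this era collect DAGs from a DAG file by scanning the module's top-level names for `DAG` objects, so two `with DAG(...) as dag:` blocks in one file leave only the last DAG bound to `dag`. The stand-alone sketch below imitates that scan with a hypothetical `FakeDAG` class rather than Airflow itself.

```python
import types


class FakeDAG:
    """Hypothetical stand-in for airflow.DAG; it only carries a dag_id."""

    def __init__(self, dag_id: str) -> None:
        self.dag_id = dag_id

    def __enter__(self) -> "FakeDAG":
        return self

    def __exit__(self, *exc) -> None:
        return None


def load_module(source: str) -> types.ModuleType:
    """Execute DAG-file source in a fresh module, like importing a DAG file."""
    module = types.ModuleType("dag_file")
    module.FakeDAG = FakeDAG
    exec(source, module.__dict__)
    return module


def discover_dags(module: types.ModuleType) -> list:
    """Collect dag_ids of DAG objects reachable from module-level names."""
    return sorted(obj.dag_id for obj in vars(module).values() if isinstance(obj, FakeDAG))


SAME_NAME = '''
with FakeDAG("first") as dag:
    pass
with FakeDAG("second") as dag:
    pass
'''

DISTINCT_NAMES = '''
with FakeDAG("first") as dags1:
    pass
with FakeDAG("second") as dags2:
    pass
'''

print(discover_dags(load_module(SAME_NAME)))       # ['second']
print(discover_dags(load_module(DISTINCT_NAMES)))  # ['first', 'second']
```

With a shared variable name the first DAG object is simply overwritten before the scan runs, which matches the observation that distinct names fixed the problem.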

[GitHub] [airflow] EricGao888 commented on pull request #21961: Add support for Alibaba-Cloud EMR cluster template (#21957)

EricGao888 commented on pull request #21961: URL: https://github.com/apache/airflow/pull/21961#issuecomment-1069771576 > @EricGao888 I marked the PR as draft. When finished please convert to ready so we can review. Sure, thanks!

[GitHub] [airflow] boring-cyborg[bot] commented on issue #22328: bigquery provider's - BigQueryCursor missing implementation for description prooerty.

boring-cyborg[bot] commented on issue #22328: URL: https://github.com/apache/airflow/issues/22328#issuecomment-1069764906 Thanks for opening your first issue here! Be sure to follow the issue template!

[GitHub] [airflow] utkarsharma2 opened a new issue #22328: bigquery provider's - BigQueryCursor missing implementation for description prooerty.

utkarsharma2 opened a new issue #22328: URL: https://github.com/apache/airflow/issues/22328

### Apache Airflow version

2.2.4 (latest released)

### What happened

When trying to run the following code:

```python
import pandas as pd
from airflow.providers.google.cloud.hooks.bigquery import BigQueryHook

# using the default connection
hook = BigQueryHook()
df = pd.read_sql(
    "SELECT * FROM table_name",
    con=hook.get_conn()
)
```

I run into the following issue:

```
Traceback (most recent call last):
  File "/Applications/PyCharm CE.app/Contents/plugins/python-ce/helpers/pydev/_pydevd_bundle/pydevd_exec2.py", line 3, in Exec
    exec(exp, global_vars, local_vars)
  File "", line 1, in
  File "/Users/utkarsharma/sandbox/astronomer/astro/.nox/dev/lib/python3.8/site-packages/pandas/io/sql.py", line 602, in read_sql
    return pandas_sql.read_query(
  File "/Users/utkarsharma/sandbox/astronomer/astro/.nox/dev/lib/python3.8/site-packages/pandas/io/sql.py", line 2117, in read_query
    columns = [col_desc[0] for col_desc in cursor.description]
  File "/Users/utkarsharma/sandbox/astronomer/astro/.nox/dev/lib/python3.8/site-packages/airflow/providers/google/cloud/hooks/bigquery.py", line 2599, in description
    raise NotImplementedError
NotImplementedError
```

### What you think should happen instead

The property should be implemented in a similar manner as [postgres_to_gcs.py](https://github.com/apache/airflow/blob/7bd165fbe2cbbfa8208803ec352c5d16ca2bd3ec/airflow/providers/google/cloud/transfers/postgres_to_gcs.py#L58)

### How to reproduce

_No response_

### Operating System

macOS

### Versions of Apache Airflow Providers

apache-airflow-providers-google==6.5.0

### Deployment

Virtualenv installation

### Deployment details

_No response_

### Anything else

_No response_

### Are you willing to submit PR?

- [X] Yes I am willing to submit a PR!

### Code of Conduct

- [X] I agree to follow this project's [Code of Conduct](https://github.com/apache/airflow/blob/main/CODE_OF_CONDUCT.md)
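For context on what `pandas.read_sql` needs here: PEP 249 defines `cursor.description` as a sequence of 7-item sequences `(name, type_code, display_size, internal_size, precision, scale, null_ok)`, and the traceback shows pandas reading only the first item of each. A minimal sketch, assuming the result schema is available as a list of dicts with `name`, `type` and optional `mode` keys (the shape of BigQuery schema fields); `schema_to_description` is a hypothetical helper, not the provider's API.

```python
from typing import List, Optional, Sequence, Tuple

# (name, type_code, display_size, internal_size, precision, scale, null_ok)
DescriptionRow = Tuple[str, str, Optional[int], Optional[int], Optional[int], Optional[int], bool]


def schema_to_description(fields: Sequence[dict]) -> List[DescriptionRow]:
    """Map BigQuery-style schema fields to PEP 249 description tuples."""
    description = []
    for field in fields:
        # BigQuery modes are NULLABLE, REQUIRED or REPEATED; NULLABLE is the default.
        null_ok = field.get("mode", "NULLABLE") == "NULLABLE"
        description.append((field["name"], field["type"], None, None, None, None, null_ok))
    return description


fields = [
    {"name": "id", "type": "INTEGER", "mode": "REQUIRED"},
    {"name": "label", "type": "STRING"},
]
# Mirrors the list comprehension pandas runs in the traceback above.
columns = [col_desc[0] for col_desc in schema_to_description(fields)]
print(columns)  # ['id', 'label']
```

Unknown size and precision values are reported as `None`, which PEP 249 permits for columns where the information is not available.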

[GitHub] [airflow] github-actions[bot] closed pull request #18759: Movin trigger dag to operations folder

github-actions[bot] closed pull request #18759: URL: https://github.com/apache/airflow/pull/18759

[GitHub] [airflow] github-actions[bot] commented on issue #8687: Airflow webserver should handle signals immediately when starting

github-actions[bot] commented on issue #8687: URL: https://github.com/apache/airflow/issues/8687#issuecomment-1069763273 This issue has been closed because it has not received response from the issue author.

[GitHub] [airflow] github-actions[bot] commented on issue #19158: Macros time operations not working

github-actions[bot] commented on issue #19158: URL: https://github.com/apache/airflow/issues/19158#issuecomment-1069763182 This issue has been closed because it has not received response from the issue author.

[GitHub] [airflow] github-actions[bot] closed issue #8687: Airflow webserver should handle signals immediately when starting

github-actions[bot] closed issue #8687: URL: https://github.com/apache/airflow/issues/8687

[GitHub] [airflow] github-actions[bot] closed pull request #21080: Fix wrong use of $ref and nullable

github-actions[bot] closed pull request #21080: URL: https://github.com/apache/airflow/pull/21080

[GitHub] [airflow] github-actions[bot] closed issue #19158: Macros time operations not working

github-actions[bot] closed issue #19158: URL: https://github.com/apache/airflow/issues/19158

[GitHub] [airflow] alexbegg commented on issue #22320: Copying DAG ID from UI and pasting in Slack includes schedule

alexbegg commented on issue #22320: URL: https://github.com/apache/airflow/issues/22320#issuecomment-1069750132 > I can confirm this indeed happens with paste ( CMD + V) > > However if you will paste with CMD + SHIFT + V - it will behave as you expect Good catch on the CMD + SHIFT + V; I do that sometimes when I don't want to paste HTML. I'll keep that in mind. It would be nice, though, if selecting the text selected just the DAG ID and not the other stuff.

[GitHub] [airflow] dstandish commented on a change in pull request #22184: Add run_id and map_index to SlaMiss

dstandish commented on a change in pull request #22184: URL: https://github.com/apache/airflow/pull/22184#discussion_r828511240 ## File path: airflow/dag_processing/processor.py ## @@ -412,20 +410,20 @@ def manage_slas(self, dag: DAG, session: Session = None) -> None: else: while next_info.logical_date < ts: next_info = dag.next_dagrun_info(next_info.data_interval, restricted=False) - if next_info is None: break -if (ti.dag_id, ti.task_id, next_info.logical_date) in recorded_slas_query: +next_run_id = DR.generate_run_id(DagRunType.SCHEDULED, next_info.logical_date) +if (ti.dag_id, ti.task_id, next_run_id, ti.map_index) in recorded_sla_misses: Review comment: > the current SLA behaviour of creating the SlaMiss record against the next execution date is confusing (and likely wrong) so let's not confuse matters more by changing it to be against a future run_id that may never exist. i think that the notion of "next execution date" is maybe a little misleading. it just means next, relative to the last one examined. so we're in `manage_slas`. we start with a task. we look at the "last successful or skipped TI". then we say, ok, let's look at the next run for that task -- relative to the last one that's done -- and let's see if it has failed its SLA (e.g. because it is still running). if so, let's create an SlaMiss for it. the only time that the TI would not exist is when the scheduler for whatever reason isn't even creating the TI -- e.g. because it's catchup=True, or max_active_tasks=1, or the scheduler is having trouble. `next_run_id` would probably be more accurately called `curr_run_id`. wdyt? for now i'll rename that variable. > (i.e. throw away most of this PR, sorry.) no worries if that's how it goes. we should do what we need to do, and it was a good exercise in any case. but i'm not convinced that it's not the right change. > create a parse-time-error if someone tries to set an sla property on a mapped task. 
it might be better to just emit a warning and ignore the SLA because, e.g., if you have a cluster policy putting a default SLA on everything, or if you apply it to all tasks in a dag with `default_args`, this could be a little inconvenient.

[GitHub] [airflow] ashb commented on a change in pull request #22184: Add run_id and map_index to SlaMiss

ashb commented on a change in pull request #22184: URL: https://github.com/apache/airflow/pull/22184#discussion_r828502785 ## File path: airflow/dag_processing/processor.py ## @@ -412,20 +410,20 @@ def manage_slas(self, dag: DAG, session: Session = None) -> None: else: while next_info.logical_date < ts: next_info = dag.next_dagrun_info(next_info.data_interval, restricted=False) - if next_info is None: break -if (ti.dag_id, ti.task_id, next_info.logical_date) in recorded_slas_query: +next_run_id = DR.generate_run_id(DagRunType.SCHEDULED, next_info.logical_date) +if (ti.dag_id, ti.task_id, next_run_id, ti.map_index) in recorded_sla_misses: Review comment: I.e. this dag should throw an error at parse time:

```python
with DAG(dag_id='test'):
    BashOperator.partial(sla=timedelta(seconds=10)).expand(bash_command=["echo true", "echo false"])
```

[GitHub] [airflow] ashb commented on a change in pull request #22184: Add run_id and map_index to SlaMiss

ashb commented on a change in pull request #22184: URL: https://github.com/apache/airflow/pull/22184#discussion_r828502055 ## File path: airflow/dag_processing/processor.py ## @@ -412,20 +410,20 @@ def manage_slas(self, dag: DAG, session: Session = None) -> None: else: while next_info.logical_date < ts: next_info = dag.next_dagrun_info(next_info.data_interval, restricted=False) - if next_info is None: break -if (ti.dag_id, ti.task_id, next_info.logical_date) in recorded_slas_query: +next_run_id = DR.generate_run_id(DagRunType.SCHEDULED, next_info.logical_date) +if (ti.dag_id, ti.task_id, next_run_id, ti.map_index) in recorded_sla_misses: Review comment: I think this behaviour is _even_ more confusing. Let's not touch SlaMiss _at all_, and instead create a parse-time-error if someone tries to set an `sla` property on a mapped task. (i.e. throw away most of this PR, sorry.) My reason: the current SLA behaviour of creating the SlaMiss record against the next execution date is confusing (and likely wrong) so let's not confuse matters more by changing it to be against a future run_id that _may never exist_. We can come back and re-visit this once we have made SlaMiss more sensible.
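The two options discussed in this thread (a parse-time error, as proposed here, or a warning that drops the SLA, as dstandish suggests in his reply) can be sketched as follows. This is plain Python with a hypothetical `check_mapped_task_sla` helper and a `strict` flag; it is not Airflow's actual validation code.

```python
import warnings
from datetime import timedelta
from typing import Optional


def check_mapped_task_sla(
    task_id: str, is_mapped: bool, sla: Optional[timedelta], strict: bool = True
) -> Optional[timedelta]:
    """Return the SLA to keep for a task, rejecting or dropping it for mapped tasks."""
    if not is_mapped or sla is None:
        return sla
    message = f"SLAs are not supported on mapped tasks (task_id={task_id!r})"
    if strict:
        # Option 1: fail while the DAG file is being parsed.
        raise ValueError(message)
    # Option 2: warn and ignore, friendlier when the SLA comes from default_args or a cluster policy.
    warnings.warn(message + "; ignoring the SLA", UserWarning, stacklevel=2)
    return None
```

The `strict=False` path keeps DAG files that inherit an SLA from shared defaults loadable, at the cost of the SLA being silently unenforced apart from the warning.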

[GitHub] [airflow] github-actions[bot] commented on pull request #22272: Add map_index support to al task instance-related views

github-actions[bot] commented on pull request #22272: URL: https://github.com/apache/airflow/pull/22272#issuecomment-1069693314 The PR is likely OK to be merged with just subset of tests for default Python and Database versions without running the full matrix of tests, because it does not modify the core of Airflow. If the committers decide that the full tests matrix is needed, they will add the label 'full tests needed'. Then you should rebase to the latest main or amend the last commit of the PR, and push it with --force-with-lease.

[GitHub] [airflow] ashb commented on a change in pull request #22272: Add map_index support to al task instance-related views

ashb commented on a change in pull request #22272:

URL: https://github.com/apache/airflow/pull/22272#discussion_r828494223

##

File path: airflow/www/static/js/dag.js

##

@@ -151,46 +163,41 @@ export function callModal(t, d, extraLinks, tryNumbers, sd, drID) {

$('#try_index > li').remove();

$('#redir_log_try_index > li').remove();

const startIndex = (tryNumbers > 2 ? 0 : 1);

- for (let index = startIndex; index < tryNumbers; index += 1) {

-let url = `${logsWithMetadataUrl

-}?dag_id=${encodeURIComponent(dagId)

-}&task_id=${encodeURIComponent(taskId)

-}&execution_date=${encodeURIComponent(executionDate)

-}&metadata=null`

- + '&format=file';

Review comment:

Whoops, lost this. One moment

[GitHub] [airflow] edithturn opened a new pull request #22327: Rewrite Selective Check in Python

edithturn opened a new pull request #22327: URL: https://github.com/apache/airflow/pull/22327 closes #19971

[GitHub] [airflow] dstandish opened a new pull request #22326: Remove incorrect deprecation warning in secrets backend

dstandish opened a new pull request #22326: URL: https://github.com/apache/airflow/pull/22326 When no value was found with `get_conn_value`, the warning was being triggered even though `get_conn_value` was implemented and simply returned no value (because there wasn't one). Now we make the logic a little tighter and only raise the deprecation warning when `get_conn_value` is not implemented, which is what we intended to do in the first place.
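A minimal sketch of the check this PR describes: the decision to warn is based on whether the subclass overrides `get_conn_value`, not on whether it returned a value. The classes `SecretsBackendSketch`, `NewStyleBackend` and `OldStyleBackend`, and the `lookup` method, are hypothetical stand-ins, not Airflow's `BaseSecretsBackend`.

```python
import warnings
from typing import Optional


class SecretsBackendSketch:
    """Hypothetical stand-in for a secrets backend base class."""

    def get_conn_value(self, conn_id: str) -> Optional[str]:
        raise NotImplementedError

    def get_conn_uri(self, conn_id: str) -> Optional[str]:
        raise NotImplementedError

    def _overrides_get_conn_value(self) -> bool:
        # Compare the function found on the subclass with the base-class one.
        return type(self).get_conn_value is not SecretsBackendSketch.get_conn_value

    def lookup(self, conn_id: str) -> Optional[str]:
        if self._overrides_get_conn_value():
            # A None result only means "not found"; it is no reason to warn.
            return self.get_conn_value(conn_id)
        warnings.warn(
            "get_conn_uri is deprecated; implement get_conn_value instead",
            DeprecationWarning,
            stacklevel=2,
        )
        return self.get_conn_uri(conn_id)


class NewStyleBackend(SecretsBackendSketch):
    def get_conn_value(self, conn_id: str) -> Optional[str]:
        return None  # nothing stored for this conn_id


class OldStyleBackend(SecretsBackendSketch):
    def get_conn_uri(self, conn_id: str) -> Optional[str]:
        return "postgresql://user@host/db"
```

Checking the method identity on the class avoids calling `get_conn_value` just to find out whether it exists, so an empty lookup can no longer be mistaken for a missing implementation.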

[GitHub] [airflow] ashb commented on a change in pull request #22272: Add map_index support to al task instance-related views

ashb commented on a change in pull request #22272: URL: https://github.com/apache/airflow/pull/22272#discussion_r828471044 ## File path: airflow/www/static/js/dag.js ## @@ -53,6 +53,7 @@ let taskId = ''; let executionDate = ''; let subdagId = ''; let dagRunId = ''; +let mapIndex = undefined; Review comment: I'm on this.

[GitHub] [airflow] pierrejeambrun commented on pull request #22296: Add recipe for BeamRunGoPipelineOperator

pierrejeambrun commented on pull request #22296: URL: https://github.com/apache/airflow/pull/22296#issuecomment-1069657325 @mik-laj Thank you for your comments. I just updated the PR, let me know what you think.

[GitHub] [airflow] pierrejeambrun commented on a change in pull request #22296: Add recipe for BeamRunGoPipelineOperator

pierrejeambrun commented on a change in pull request #22296:

URL: https://github.com/apache/airflow/pull/22296#discussion_r828468900

##

File path: docs/docker-stack/docker-images-recipes/go-beam.Dockerfile

##

@@ -0,0 +1,35 @@

+# Licensed to the Apache Software Foundation (ASF) under one or more

+# contributor license agreements. See the NOTICE file distributed with

+# this work for additional information regarding copyright ownership.

+# The ASF licenses this file to You under the Apache License, Version 2.0

+# (the "License"); you may not use this file except in compliance with

+# the License. You may obtain a copy of the License at

+#

+#http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+ARG BASE_AIRFLOW_IMAGE

+FROM ${BASE_AIRFLOW_IMAGE}

+

+SHELL ["/bin/bash", "-o", "pipefail", "-e", "-u", "-x", "-c"]

+

+USER 0

+

+ENV GO_INSTALL_DIR=/usr/local/go

+

+# Install Go

+RUN DOWNLOAD_URL="https://dl.google.com/go/go1.16.4.linux-amd64.tar.gz" \

+&& TMP_DIR="$(mktemp -d)" \

+&& curl -fL "${DOWNLOAD_URL}" --output "${TMP_DIR}/go.linux-amd64.tar.gz" \

+&& mkdir -p "${GO_INSTALL_DIR}" \

+&& tar xzf "${TMP_DIR}/go.linux-amd64.tar.gz" -C "${GO_INSTALL_DIR}" --strip-components=1 \

+&& rm -rf "${TMP_DIR}"

+

+ENV GOROOT=/usr/local/go

+ENV PATH="$GOROOT/bin:$PATH"

Review comment:

Good idea. I just added that check and a test as well to validate this

behavior.

[GitHub] [airflow] pierrejeambrun commented on a change in pull request #22296: Add recipe for BeamRunGoPipelineOperator

pierrejeambrun commented on a change in pull request #22296:

URL: https://github.com/apache/airflow/pull/22296#discussion_r828468492

##

File path: docs/docker-stack/docker-images-recipes/go-beam.Dockerfile

##

@@ -0,0 +1,35 @@

+# Licensed to the Apache Software Foundation (ASF) under one or more

+# contributor license agreements. See the NOTICE file distributed with

+# this work for additional information regarding copyright ownership.

+# The ASF licenses this file to You under the Apache License, Version 2.0

+# (the "License"); you may not use this file except in compliance with

+# the License. You may obtain a copy of the License at

+#

+#http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+ARG BASE_AIRFLOW_IMAGE

+FROM ${BASE_AIRFLOW_IMAGE}

+

+SHELL ["/bin/bash", "-o", "pipefail", "-e", "-u", "-x", "-c"]

+

+USER 0

+

+ENV GO_INSTALL_DIR=/usr/local/go

+

+# Install Go

+RUN DOWNLOAD_URL="https://dl.google.com/go/go1.16.4.linux-amd64.tar.gz" \

Review comment:

Nice idea. I tweaked the run command a little. It feels a bit

hacky.

Let me know if you have a better idea on how to do that; I am not really

familiar with multi-platform Docker images.
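A sketch of the architecture mapping a multi-platform build needs, written in Python for illustration: Go publishes Linux tarballs named `go<version>.linux-<arch>.tar.gz` (for example `amd64` and `arm64`), while `uname -m` reports machine names such as `x86_64` or `aarch64`. The mapping table and the `go_download_url` helper are assumptions for this example; in the Dockerfile itself the same choice would be made inside the `RUN` step, for instance from `dpkg --print-architecture` or BuildKit's `TARGETARCH` build argument.

```python
import platform
from typing import Optional

# `uname -m` style machine names mapped to the architecture suffix in Go's tarball names.
GO_ARCH_BY_MACHINE = {
    "x86_64": "amd64",
    "amd64": "amd64",
    "aarch64": "arm64",
    "arm64": "arm64",
}


def go_download_url(version: str, machine: Optional[str] = None) -> str:
    """Return the Linux Go tarball URL for the given (or current) machine type."""
    machine = (machine or platform.machine()).lower()
    try:
        arch = GO_ARCH_BY_MACHINE[machine]
    except KeyError:
        raise ValueError(f"No Go tarball mapping for machine type {machine!r}") from None
    return f"https://dl.google.com/go/go{version}.linux-{arch}.tar.gz"


print(go_download_url("1.16.4", "aarch64"))
# https://dl.google.com/go/go1.16.4.linux-arm64.tar.gz
```

Failing loudly on an unmapped machine type is deliberate: silently falling back to `amd64` would produce an image whose Go binary cannot run on the build platform.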

[GitHub] [airflow] pierrejeambrun commented on a change in pull request #22296: Add recipe for BeamRunGoPipelineOperator

pierrejeambrun commented on a change in pull request #22296:

URL: https://github.com/apache/airflow/pull/22296#discussion_r828467003

##

File path: docs/docker-stack/docker-images-recipes/go-beam.Dockerfile

##

@@ -0,0 +1,35 @@

+# Licensed to the Apache Software Foundation (ASF) under one or more

+# contributor license agreements. See the NOTICE file distributed with

+# this work for additional information regarding copyright ownership.

+# The ASF licenses this file to You under the Apache License, Version 2.0

+# (the "License"); you may not use this file except in compliance with

+# the License. You may obtain a copy of the License at

+#

+#http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+ARG BASE_AIRFLOW_IMAGE

+FROM ${BASE_AIRFLOW_IMAGE}

+

+SHELL ["/bin/bash", "-o", "pipefail", "-e", "-u", "-x", "-c"]

+

+USER 0

+

+ENV GO_INSTALL_DIR=/usr/local/go

+

+# Install Go

+RUN DOWNLOAD_URL="https://dl.google.com/go/go1.16.4.linux-amd64.tar.gz" \

Review comment:

You are right, good catch. Done :)

[GitHub] [airflow] mik-laj edited a comment on issue #22306: DataflowStartFlexTemplateOperator is missing the impersonation_chain argument

mik-laj edited a comment on issue #22306: URL: https://github.com/apache/airflow/issues/22306#issuecomment-1069648241 We should make a similar change to: https://github.com/apache/airflow/pull/19518#discussion_r748632907 (CC: @lwyszomi )

[GitHub] [airflow] mik-laj commented on issue #22306: DataflowStartFlexTemplateOperator is missing the impersonation_chain argument

mik-laj commented on issue #22306: URL: https://github.com/apache/airflow/issues/22306#issuecomment-1069648241 This problem has been documented here: https://airflow.apache.org/docs/apache-airflow-providers-google/stable/connections/gcp.html#direct-impersonation-of-a-service-account Here's a longer discussion on this issue: https://github.com/apache/airflow/pull/19518#discussion_r748632907 (CC: @lwyszomi )

[GitHub] [airflow] ianbuss commented on a change in pull request #22051: Support glob syntax in .airflowignore files (#21392)

ianbuss commented on a change in pull request #22051: URL: https://github.com/apache/airflow/pull/22051#discussion_r828447783 ## File path: airflow/config_templates/config.yml ## @@ -347,6 +347,14 @@ type: string example: ~ default: "True" +- name: dag_ignorefile_syntax + description: | +The pattern syntax used in the ".airflowignore" files in the DAG directories. Valid values are +``regexp`` or ``glob``. + version_added: 2.3.0 + type: string + example: ~ + default: "regexp" Review comment: Done
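To illustrate the difference between the two `dag_ignorefile_syntax` values, a simplified sketch using only the standard library: `regexp` patterns are treated here as regular expressions searched anywhere in the relative path, and `glob` patterns are matched with `fnmatch` against the whole path. This is an approximation for illustration only; Airflow's actual matching rules (anchoring, directory handling, negation) may differ, and `is_ignored` is a hypothetical helper.

```python
import fnmatch
import re
from typing import Iterable


def is_ignored(rel_path: str, patterns: Iterable[str], syntax: str = "regexp") -> bool:
    """Return True if rel_path matches any ignore pattern under the chosen syntax."""
    for pattern in patterns:
        if syntax == "regexp":
            # A regex such as `tmp_` matches anywhere in the path.
            if re.search(pattern, rel_path):
                return True
        elif syntax == "glob":
            # A glob such as `*tmp_*` must match the whole path string.
            if fnmatch.fnmatch(rel_path, pattern):
                return True
        else:
            raise ValueError(f"Unknown syntax: {syntax!r}")
    return False
```

The same file can therefore need different patterns under each setting: `tmp_` ignores `dags/tmp_dag.py` under `regexp`, while `glob` needs wildcards such as `*tmp_*`.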

[GitHub] [airflow] josh-fell commented on a change in pull request #22280: Add links for BigQuery Data Transfer

josh-fell commented on a change in pull request #22280:

URL: https://github.com/apache/airflow/pull/22280#discussion_r827246403

##

File path: airflow/providers/google/cloud/links/bigquery_dts.py

##

@@ -0,0 +1,49 @@
+#
+# Licensed to the Apache Software Foundation (ASF) under one
+# or more contributor license agreements. See the NOTICE file
+# distributed with this work for additional information
+# regarding copyright ownership. The ASF licenses this file
+# to you under the Apache License, Version 2.0 (the
+# "License"); you may not use this file except in compliance
+# with the License. You may obtain a copy of the License at
+#
+# http://www.apache.org/licenses/LICENSE-2.0
+#
+# Unless required by applicable law or agreed to in writing,
+# software distributed under the License is distributed on an
+# "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY
+# KIND, either express or implied. See the License for the
+# specific language governing permissions and limitations
+# under the License.
+"""This module contains Google BigQuery Data Transfer links."""
+from typing import TYPE_CHECKING, Optional
+
+from airflow.providers.google.cloud.links.base import BaseGoogleLink
+
+if TYPE_CHECKING:
+    from airflow.utils.context import Context
+
+BIGQUERY_BASE_LINK = "https://console.cloud.google.com/bigquery/transfers"
+BIGQUERY_DTS_LINK = BIGQUERY_BASE_LINK + "/locations/{region}/configs/{config_id}/runs?project={project_id}"
+
+
+class BigQueryDataTransferConfigLink(BaseGoogleLink):
+    """Helper class for constructing BigQuery Data Transfer Config Link"""
+
+    name = "BigQuery Data Transfer Config"
+    key = "bigquery_dts_config"
+    format_str = BIGQUERY_DTS_LINK
+
+    @staticmethod
+    def persist(
+        context: "Context",
+        task_instance,
+        region: Optional[str],
+        config_id: Optional[str],
+        project_id: Optional[str],

Review comment:

These args don't seem to be optional. It looks like they will always be provided when `BigQueryDataTransferConfigLink.persist()` is called, but I could be missing something along the way here. I suspect the link value wouldn't be correct if these were missing, too?

```suggestion
        region: str,
        config_id: str,
        project_id: str,
```
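The reviewer's concern is easy to check, because `format_str` is an ordinary Python format string. A standalone sketch, assuming the persisted values are eventually substituted with `str.format`; the helper `build_dts_link` is hypothetical and not part of the provider:

```python
from typing import Optional

BIGQUERY_BASE_LINK = "https://console.cloud.google.com/bigquery/transfers"
BIGQUERY_DTS_LINK = BIGQUERY_BASE_LINK + "/locations/{region}/configs/{config_id}/runs?project={project_id}"


def build_dts_link(region: Optional[str], config_id: Optional[str], project_id: Optional[str]) -> str:
    """Fill the link template the same way a plain str.format call would."""
    return BIGQUERY_DTS_LINK.format(region=region, config_id=config_id, project_id=project_id)


print(build_dts_link("us", "my-config", "my-project"))
# https://console.cloud.google.com/bigquery/transfers/locations/us/configs/my-config/runs?project=my-project

# A missing value is not rejected; it is rendered as the text "None".
print(build_dts_link(None, "my-config", "my-project"))
# https://console.cloud.google.com/bigquery/transfers/locations/None/configs/my-config/runs?project=my-project
```

Since a `None` produces a syntactically valid but broken URL rather than an error, typing the arguments as plain `str` documents the real contract.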


[GitHub] [airflow] edithturn edited a comment on issue #19971: CI: Rewrite selective check script in Python

edithturn edited a comment on issue #19971: URL: https://github.com/apache/airflow/issues/19971#issuecomment-1004284910 > It's used in two places: ci.yml and build.yml: The right file should be build-images.yml :) Close: #19971

[GitHub] [airflow] carmoreno1 commented on issue #8468: DbApiHook: add chunksize to get_pandas_df parameters

carmoreno1 commented on issue #8468: URL: https://github.com/apache/airflow/issues/8468#issuecomment-1069620108

> There is a problem with the chunksize, because get_pandas_df closes the connection on the first call of the returned iterator[DataFrame]

Hi @imorales-mosaico, is there a workaround for `chunksize` using Airflow?
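pandas' `read_sql(..., chunksize=n)` returns a lazy iterator of DataFrames, so the database connection has to stay open until that iterator is consumed. One possible workaround pattern, sketched here with the standard-library `sqlite3` module in place of an Airflow hook and pandas, is to open the connection inside a generator and close it in a `finally` block. `iter_query_chunks` and `make_demo_connection` are hypothetical names.

```python
import sqlite3
from typing import Callable, Iterator, List, Tuple


def iter_query_chunks(
    connect: Callable[[], sqlite3.Connection], sql: str, chunksize: int
) -> Iterator[List[Tuple]]:
    """Yield query results in chunks, closing the connection only at the end."""
    conn = connect()
    try:
        cursor = conn.cursor()
        cursor.execute(sql)
        while True:
            rows = cursor.fetchmany(chunksize)
            if not rows:
                break
            yield rows
    finally:
        # Runs when the generator is exhausted, closed, or garbage-collected,
        # not after the first chunk.
        conn.close()


def make_demo_connection() -> sqlite3.Connection:
    """In-memory database with five rows, for demonstration only."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE t (x INTEGER)")
    conn.executemany("INSERT INTO t VALUES (?)", [(i,) for i in range(5)])
    return conn


for chunk in iter_query_chunks(make_demo_connection, "SELECT x FROM t ORDER BY x", chunksize=2):
    print(chunk)
# [(0,), (1,)]
# [(2,), (3,)]
# [(4,)]
```

With a `DbApiHook`, the same shape would presumably wrap `hook.get_conn()` and `pandas.read_sql(sql, conn, chunksize=...)` inside the generator; we have not verified this against every hook.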

[GitHub] [airflow] dylanbstorey opened a new issue #22325: ReST API : get_dag should return more than a simplified view of the dag

dylanbstorey opened a new issue #22325: URL: https://github.com/apache/airflow/issues/22325

### Description

The current response payload from https://airflow.apache.org/docs/apache-airflow/stable/stable-rest-api-ref.html#operation/get_dag is a useful but simple view of the state of a given DAG. However, it is missing some additional attributes that I feel would be useful for individuals/groups who are choosing to interact with Airflow primarily through the ReST interface.

### Use case/motivation

As part of a testing workflow we upload DAGs to a running Airflow instance and want to trigger an execution of the DAG after we know that the scheduler has updated it. We're currently automating this process through the ReST API, but the `last_updated` field is not exposed. This should be implemented from the dag_source endpoint: https://github.com/apache/airflow/blob/main/airflow/api_connexion/endpoints/dag_source_endpoint.py

### Related issues

_No response_

### Are you willing to submit a PR?

- [X] Yes I am willing to submit a PR!

### Code of Conduct

- [X] I agree to follow this project's [Code of Conduct](https://github.com/apache/airflow/blob/main/CODE_OF_CONDUCT.md)
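The workflow described in this issue (upload a DAG, wait until the scheduler has picked it up, then trigger a run) could be scripted as a polling loop. A minimal sketch, assuming the `last_updated` field this issue requests were added to the `GET /api/v1/dags/{dag_id}` response; that field is hypothetical today. `fetch_dag` stands in for whatever HTTP client performs the request, so the loop can be exercised without a server, and `wait_for_dag_update` is a hypothetical name.

```python
import time
from datetime import datetime, timezone
from typing import Any, Callable, Mapping


def wait_for_dag_update(
    fetch_dag: Callable[[], Mapping[str, Any]],
    uploaded_at: datetime,
    timeout: float = 300.0,
    poll_interval: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> bool:
    """Poll until the DAG reports an update at or after uploaded_at.

    `fetch_dag` should return the parsed JSON of GET /api/v1/dags/{dag_id}.
    The "last_updated" key is hypothetical: it is the field this issue asks for.
    """
    deadline = clock() + timeout
    while True:
        raw = fetch_dag().get("last_updated")
        # Both timestamps must be timezone-aware for the comparison to work.
        if raw is not None and datetime.fromisoformat(raw) >= uploaded_at:
            return True
        if clock() >= deadline:
            return False
        sleep(poll_interval)


# Fake responses: the first poll sees no update, the second sees a fresh one.
responses = iter([{}, {"last_updated": "2022-03-16T12:00:05+00:00"}])
uploaded = datetime(2022, 3, 16, 12, 0, 0, tzinfo=timezone.utc)
print(wait_for_dag_update(lambda: next(responses), uploaded, sleep=lambda s: None))  # True
```

Once the function returns `True`, the run could be started with `POST /api/v1/dags/{dag_id}/dagRuns`.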

[GitHub] [airflow] boring-cyborg[bot] commented on issue #22325: ReST API : get_dag should return more than a simplified view of the dag

boring-cyborg[bot] commented on issue #22325: URL: https://github.com/apache/airflow/issues/22325#issuecomment-1069618240 Thanks for opening your first issue here! Be sure to follow the issue template!

[GitHub] [airflow] boring-cyborg[bot] commented on pull request #22324: Update ssh.py

boring-cyborg[bot] commented on pull request #22324: URL: https://github.com/apache/airflow/pull/22324#issuecomment-1069612287

Congratulations on your first Pull Request and welcome to the Apache Airflow community! If you have any issues or are unsure about anything, please check our Contribution Guide (https://github.com/apache/airflow/blob/main/CONTRIBUTING.rst)

Here are some useful points:
- Pay attention to the quality of your code (flake8, mypy and type annotations). Our [pre-commits](https://github.com/apache/airflow/blob/main/STATIC_CODE_CHECKS.rst#prerequisites-for-pre-commit-hooks) will help you with that.
- In case of a new feature add useful documentation (in docstrings or in `docs/` directory). Adding a new operator? Check this short [guide](https://github.com/apache/airflow/blob/main/docs/apache-airflow/howto/custom-operator.rst). Consider adding an example DAG that shows how users should use it.
- Consider using [Breeze environment](https://github.com/apache/airflow/blob/main/BREEZE.rst) for testing locally, it’s a heavy docker but it ships with a working Airflow and a lot of integrations.
- Be patient and persistent. It might take some time to get a review or get the final approval from Committers.
- Please follow [ASF Code of Conduct](https://www.apache.org/foundation/policies/conduct) for all communication including (but not limited to) comments on Pull Requests, Mailing list and Slack.
- Be sure to read the [Airflow Coding style](https://github.com/apache/airflow/blob/main/CONTRIBUTING.rst#coding-style-and-best-practices).

Apache Airflow is a community-driven project and together we are making it better 🚀.

In case of doubts contact the developers at:
Mailing List: d...@airflow.apache.org
Slack: https://s.apache.org/airflow-slack

[GitHub] [airflow] a246530 opened a new pull request #22324: Update ssh.py

a246530 opened a new pull request #22324: URL: https://github.com/apache/airflow/pull/22324 Incorrect logic for self.allow_host_key_change warning regarding "Remote Identification Change is not verified". This was identified in https://github.com/apache/airflow/issues/9510
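The PR description is terse. Going by the warning text itself, the warning only makes sense when `allow_host_key_change` is enabled, because that is when a changed remote identity goes unchecked; the reported bug is presumably that the condition was inverted. A hypothetical sketch of the intended branch, independent of `SSHHook` and paramiko (`verify_host_keys` is not a real Airflow function):

```python
import logging

log = logging.getLogger(__name__)


def verify_host_keys(allow_host_key_change: bool) -> bool:
    """Return True if known host keys should be loaded and enforced.

    Assumed intent: the "not verified" warning belongs to the branch where
    host key changes are allowed, because only then is the remote identity
    left unchecked.
    """
    if allow_host_key_change:
        log.warning(
            "Remote Identification Change is not verified. "
            "This won't protect against Man-In-The-Middle attacks"
        )
        return False
    return True


print(verify_host_keys(False))  # True, and no warning is logged
```

Keeping the warning and the "skip verification" decision in the same branch makes it harder for the two to drift apart again.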

[GitHub] [airflow] ajbosco opened a new pull request #22323: enable optional subPath for dags volume mount

ajbosco opened a new pull request #22323: URL: https://github.com/apache/airflow/pull/22323 Users may keep their DAGs on a volume that also holds other data, and so want to use a `subPath` on the volume mount. This is similar to how the `gitSync` volume mount is set up.
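`subPath` is a standard field of a Kubernetes `volumeMount` that mounts one sub-directory of a volume instead of its root. The chart itself renders mounts from templates; purely to illustrate the optional behavior, here is a Python sketch that builds the mount as a dict and adds `subPath` only when one is configured. `dags_volume_mount`, the volume name `dags`, and the paths are hypothetical and not the chart's actual values.

```python
from typing import Dict, Optional, Union


def dags_volume_mount(
    mount_path: str,
    volume_name: str = "dags",
    sub_path: Optional[str] = None,
    read_only: bool = True,
) -> Dict[str, Union[str, bool]]:
    """Build a Kubernetes volumeMount entry, adding subPath only when set."""
    mount: Dict[str, Union[str, bool]] = {
        "name": volume_name,
        "mountPath": mount_path,
        "readOnly": read_only,
    }
    if sub_path:
        # Mount only this directory of the volume instead of its root.
        mount["subPath"] = sub_path
    return mount


print(dags_volume_mount("/opt/airflow/dags"))
# {'name': 'dags', 'mountPath': '/opt/airflow/dags', 'readOnly': True}
print(dags_volume_mount("/opt/airflow/dags", sub_path="airflow/dags"))
# {'name': 'dags', 'mountPath': '/opt/airflow/dags', 'readOnly': True, 'subPath': 'airflow/dags'}
```

Omitting the key entirely when no sub-path is set keeps the default behavior (mounting the whole volume) unchanged for existing users.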

[airflow] branch constraints-main updated: Updating constraints. Build id:1994689956