[GitHub] [druid] clintropolis merged pull request #9203: [Backport] Web console: fix refresh button in segments view

clintropolis merged pull request #9203: [Backport] Web console: fix refresh button in segments view URL: https://github.com/apache/druid/pull/9203 This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[druid] branch 0.17.0 updated (7c7fffc -> 6874194)

This is an automated email from the ASF dual-hosted git repository. cwylie pushed a change to branch 0.17.0 in repository https://gitbox.apache.org/repos/asf/druid.git. from 7c7fffc Update Kinesis resharding information about task failures (#9104) (#9201) add 6874194 fix refresh button (#9195) (#9203) No new revisions were added by this update. Summary of changes: .../src/views/segments-view/segments-view.tsx | 29 +++--- 1 file changed, 14 insertions(+), 15 deletions(-) - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] clintropolis merged pull request #9201: [Backport] Update Kinesis resharding information about task failures (#9104)

clintropolis merged pull request #9201: [Backport] Update Kinesis resharding information about task failures (#9104) URL: https://github.com/apache/druid/pull/9201 This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[druid] branch 0.17.0 updated (e6246c9 -> 7c7fffc)

This is an automated email from the ASF dual-hosted git repository. cwylie pushed a change to branch 0.17.0 in repository https://gitbox.apache.org/repos/asf/druid.git. from e6246c9 Fix deserialization of maxBytesInMemory (#9092) (#9170) add 7c7fffc Update Kinesis resharding information about task failures (#9104) (#9201) No new revisions were added by this update. Summary of changes: docs/development/extensions-core/kinesis-ingestion.md | 7 --- 1 file changed, 4 insertions(+), 3 deletions(-) - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jihoonson commented on a change in pull request #9171: Doc update for the new input source and the new input format

jihoonson commented on a change in pull request #9171: Doc update for the new input source and the new input format URL: https://github.com/apache/druid/pull/9171#discussion_r367775037 ## File path: docs/development/extensions-core/hdfs.md ## @@ -94,7 +94,7 @@ For more configurations, see the [Hadoop AWS module](https://hadoop.apache.org/d Configuration for Google Cloud Storage -To use the Google cloud Storage as the deep storage, you need to configure `druid.storage.storageDirectory` properly. +To use the Google Cloud Storage as the deep storage, you need to configure `druid.storage.storageDirectory` properly. Review comment: Thanks, I made changed based on the suggestions. But I would still want to keep the example properties for GCS, since they are pretty mandatory. The similar pattern is applied to [S3 configuration](https://github.com/apache/druid/pull/9171/files#diff-51abd0f049462a98772db4c6ea063be3R66-R93). This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jihoonson commented on a change in pull request #9171: Doc update for the new input source and the new input format

jihoonson commented on a change in pull request #9171: Doc update for the new input source and the new input format URL: https://github.com/apache/druid/pull/9171#discussion_r367775037 ## File path: docs/development/extensions-core/hdfs.md ## @@ -94,7 +94,7 @@ For more configurations, see the [Hadoop AWS module](https://hadoop.apache.org/d Configuration for Google Cloud Storage -To use the Google cloud Storage as the deep storage, you need to configure `druid.storage.storageDirectory` properly. +To use the Google Cloud Storage as the deep storage, you need to configure `druid.storage.storageDirectory` properly. Review comment: Thanks, I added suggestions. But I would still want to keep the example properties for GCS, since they are pretty mandatory. The similar pattern is applied to [S3 configuration](https://github.com/apache/druid/pull/9171/files#diff-51abd0f049462a98772db4c6ea063be3R66-R93). This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] clintropolis merged pull request #9198: Web console: fix bug where arrays can not be emptied out in the coordinator dialog

clintropolis merged pull request #9198: Web console: fix bug where arrays can not be emptied out in the coordinator dialog URL: https://github.com/apache/druid/pull/9198 This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] clintropolis opened a new pull request #9206: [Backport] Web console: fix bug where arrays can not be emptied out in the coordinator dialog

clintropolis opened a new pull request #9206: [Backport] Web console: fix bug where arrays can not be emptied out in the coordinator dialog URL: https://github.com/apache/druid/pull/9206 Backport of #9198 to 0.17.0. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[druid] branch master updated: allow empty values to be set in the auto form (#9198)

This is an automated email from the ASF dual-hosted git repository.

cwylie pushed a commit to branch master

in repository https://gitbox.apache.org/repos/asf/druid.git

The following commit(s) were added to refs/heads/master by this push:

new ab26725 allow empty values to be set in the auto form (#9198)

ab26725 is described below

commit ab2672514b306243b8b72d64e7419fd8e8a18fe4

Author: Vadim Ogievetsky

AuthorDate: Thu Jan 16 21:06:51 2020 -0800

allow empty values to be set in the auto form (#9198)

---

web-console/src/components/auto-form/auto-form.tsx| 15 +++

.../coordinator-dynamic-config-dialog.tsx | 3 +++

2 files changed, 14 insertions(+), 4 deletions(-)

diff --git a/web-console/src/components/auto-form/auto-form.tsx

b/web-console/src/components/auto-form/auto-form.tsx

index 110bf49..66dffde 100644

--- a/web-console/src/components/auto-form/auto-form.tsx

+++ b/web-console/src/components/auto-form/auto-form.tsx

@@ -45,6 +45,7 @@ export interface Field {

| 'json'

| 'interval';

defaultValue?: any;

+ emptyValue?: any;

suggestions?: Functor;

placeholder?: string;

min?: number;

@@ -99,10 +100,16 @@ export class AutoForm>

extends React.PureComponent

const { model } = this.props;

if (!model) return;

-const newModel =

- typeof newValue === 'undefined'

-? deepDelete(model, field.name)

-: deepSet(model, field.name, newValue);

+let newModel: T;

+if (typeof newValue === 'undefined') {

+ if (typeof field.emptyValue === 'undefined') {

+newModel = deepDelete(model, field.name);

+ } else {

+newModel = deepSet(model, field.name, field.emptyValue);

+ }

+} else {

+ newModel = deepSet(model, field.name, newValue);

+}

this.modelChange(newModel);

};

diff --git

a/web-console/src/dialogs/coordinator-dynamic-config-dialog/coordinator-dynamic-config-dialog.tsx

b/web-console/src/dialogs/coordinator-dynamic-config-dialog/coordinator-dynamic-config-dialog.tsx

index 8d82c0c..044e7ea 100644

---

a/web-console/src/dialogs/coordinator-dynamic-config-dialog/coordinator-dynamic-config-dialog.tsx

+++

b/web-console/src/dialogs/coordinator-dynamic-config-dialog/coordinator-dynamic-config-dialog.tsx

@@ -180,6 +180,7 @@ export class CoordinatorDynamicConfigDialog extends

React.PureComponent<

{

name: 'killDataSourceWhitelist',

type: 'string-array',

+ emptyValue: [],

info: (

<>

List of dataSources for which kill tasks are sent if

property{' '}

@@ -191,6 +192,7 @@ export class CoordinatorDynamicConfigDialog extends

React.PureComponent<

{

name: 'killPendingSegmentsSkipList',

type: 'string-array',

+ emptyValue: [],

info: (

<>

List of dataSources for which pendingSegments are NOT

cleaned up if property{' '}

@@ -259,6 +261,7 @@ export class CoordinatorDynamicConfigDialog extends

React.PureComponent<

{

name: 'decommissioningNodes',

type: 'string-array',

+ emptyValue: [],

info: (

<>

List of historical services to 'decommission'. Coordinator

will not assign new

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[druid] branch master updated (448da78 -> 68ed2a2)

This is an automated email from the ASF dual-hosted git repository. cwylie pushed a change to branch master in repository https://gitbox.apache.org/repos/asf/druid.git. from 448da78 Speed up String first/last aggregators when folding isn't needed. (#9181) add 68ed2a2 Fix LATEST / EARLIEST Buffer Aggregator does not work on String column (#9197) No new revisions were added by this update. Summary of changes: .../aggregation/first/StringFirstLastUtils.java| 2 +- .../first/StringFirstLastUtilsTest.java| 59 + .../apache/druid/sql/calcite/CalciteQueryTest.java | 147 - 3 files changed, 202 insertions(+), 6 deletions(-) create mode 100644 processing/src/test/java/org/apache/druid/query/aggregation/first/StringFirstLastUtilsTest.java - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] clintropolis merged pull request #9197: Fix LATEST / EARLIEST Buffer Aggregator does not work on String column

clintropolis merged pull request #9197: Fix LATEST / EARLIEST Buffer Aggregator does not work on String column URL: https://github.com/apache/druid/pull/9197 This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[druid] branch master updated (486c0fd -> 448da78)

This is an automated email from the ASF dual-hosted git repository. cwylie pushed a change to branch master in repository https://gitbox.apache.org/repos/asf/druid.git. from 486c0fd Bump Apache Parquet to 1.11.0 (#9129) add 448da78 Speed up String first/last aggregators when folding isn't needed. (#9181) No new revisions were added by this update. Summary of changes: .../apache/druid/java/util/common/StringUtils.java | 17 ++- .../druid/java/util/common/StringUtilsTest.java| 28 +++ .../aggregation/first/StringFirstAggregator.java | 44 +++--- .../first/StringFirstAggregatorFactory.java| 13 -- .../first/StringFirstBufferAggregator.java | 54 -- .../aggregation/first/StringFirstLastUtils.java| 29 +++- .../aggregation/last/StringLastAggregator.java | 44 +++--- .../last/StringLastAggregatorFactory.java | 14 -- .../last/StringLastBufferAggregator.java | 54 -- .../first/StringFirstAggregationTest.java | 8 +++- .../first/StringFirstBufferAggregatorTest.java | 46 -- .../last/StringLastAggregationTest.java| 5 ++ .../last/StringLastBufferAggregatorTest.java | 50 ++-- 13 files changed, 321 insertions(+), 85 deletions(-) - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] clintropolis merged pull request #9181: Speed up String first/last aggregators when folding isn't needed.

clintropolis merged pull request #9181: Speed up String first/last aggregators when folding isn't needed. URL: https://github.com/apache/druid/pull/9181 This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jon-wei commented on issue #9199: Fix TSV bugs

jon-wei commented on issue #9199: Fix TSV bugs URL: https://github.com/apache/druid/pull/9199#issuecomment-575449429 @jihoonson thanks, latest update lgtm This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jon-wei commented on a change in pull request #9183: fix topn aggregation on numeric columns with null values

jon-wei commented on a change in pull request #9183: fix topn aggregation on

numeric columns with null values

URL: https://github.com/apache/druid/pull/9183#discussion_r367748951

##

File path:

processing/src/main/java/org/apache/druid/query/topn/types/NullableNumericTopNColumnAggregatesProcessor.java

##

@@ -0,0 +1,137 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one

+ * or more contributor license agreements. See the NOTICE file

+ * distributed with this work for additional information

+ * regarding copyright ownership. The ASF licenses this file

+ * to you under the Apache License, Version 2.0 (the

+ * "License"); you may not use this file except in compliance

+ * with the License. You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing,

+ * software distributed under the License is distributed on an

+ * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

+ * KIND, either express or implied. See the License for the

+ * specific language governing permissions and limitations

+ * under the License.

+ */

+

+package org.apache.druid.query.topn.types;

+

+import org.apache.druid.common.config.NullHandling;

+import org.apache.druid.query.aggregation.Aggregator;

+import org.apache.druid.query.topn.BaseTopNAlgorithm;

+import org.apache.druid.query.topn.TopNParams;

+import org.apache.druid.query.topn.TopNQuery;

+import org.apache.druid.query.topn.TopNResultBuilder;

+import org.apache.druid.segment.BaseNullableColumnValueSelector;

+import org.apache.druid.segment.Cursor;

+import org.apache.druid.segment.StorageAdapter;

+

+import java.util.Map;

+import java.util.function.Function;

+

+public abstract class NullableNumericTopNColumnAggregatesProcessor

+implements TopNColumnAggregatesProcessor

+{

+ private final boolean hasNulls = !NullHandling.replaceWithDefault();

+ final Function> converter;

+ Aggregator[] nullValueAggregates;

+

+ protected NullableNumericTopNColumnAggregatesProcessor(Function> converter)

+ {

+this.converter = converter;

+ }

+

+ abstract Aggregator[] getValueAggregators(TopNQuery query, Selector

selector, Cursor cursor);

Review comment:

Can you add javadocs for the abstract methods?

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jon-wei commented on a change in pull request #9183: fix topn aggregation on numeric columns with null values

jon-wei commented on a change in pull request #9183: fix topn aggregation on

numeric columns with null values

URL: https://github.com/apache/druid/pull/9183#discussion_r367748677

##

File path:

processing/src/main/java/org/apache/druid/query/topn/types/NullableNumericTopNColumnAggregatesProcessor.java

##

@@ -0,0 +1,137 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one

+ * or more contributor license agreements. See the NOTICE file

+ * distributed with this work for additional information

+ * regarding copyright ownership. The ASF licenses this file

+ * to you under the Apache License, Version 2.0 (the

+ * "License"); you may not use this file except in compliance

+ * with the License. You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing,

+ * software distributed under the License is distributed on an

+ * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

+ * KIND, either express or implied. See the License for the

+ * specific language governing permissions and limitations

+ * under the License.

+ */

+

+package org.apache.druid.query.topn.types;

+

+import org.apache.druid.common.config.NullHandling;

+import org.apache.druid.query.aggregation.Aggregator;

+import org.apache.druid.query.topn.BaseTopNAlgorithm;

+import org.apache.druid.query.topn.TopNParams;

+import org.apache.druid.query.topn.TopNQuery;

+import org.apache.druid.query.topn.TopNResultBuilder;

+import org.apache.druid.segment.BaseNullableColumnValueSelector;

+import org.apache.druid.segment.Cursor;

+import org.apache.druid.segment.StorageAdapter;

+

+import java.util.Map;

+import java.util.function.Function;

+

+public abstract class NullableNumericTopNColumnAggregatesProcessor

+implements TopNColumnAggregatesProcessor

+{

+ private final boolean hasNulls = !NullHandling.replaceWithDefault();

+ final Function> converter;

+ Aggregator[] nullValueAggregates;

+

+ protected NullableNumericTopNColumnAggregatesProcessor(Function> converter)

+ {

+this.converter = converter;

+ }

+

+ abstract Aggregator[] getValueAggregators(TopNQuery query, Selector

selector, Cursor cursor);

+

+ abstract Map getAggregatesStore();

+

+ abstract Comparable convertAggregatorStoreKeyToColumnValue(Object

aggregatorStoreKey);

+

+ @Override

+ public int getCardinality(Selector selector)

+ {

+return TopNParams.CARDINALITY_UNKNOWN;

+ }

+

+ @Override

+ public Aggregator[][] getRowSelector(TopNQuery query, TopNParams params,

StorageAdapter storageAdapter)

+ {

+return null;

+ }

+

+ @Override

+ public long scanAndAggregate(

+ TopNQuery query,

+ Selector selector,

+ Cursor cursor,

+ Aggregator[][] rowSelector

+ )

+ {

+initAggregateStore();

Review comment:

I think the `initAggregateStore` call could be moved into

`HeapBasedTopNAlgorithm.scanAndAggregate` since both impls call it as the first

step

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] lgtm-com[bot] commented on issue #9181: Speed up String first/last aggregators when folding isn't needed.

lgtm-com[bot] commented on issue #9181: Speed up String first/last aggregators when folding isn't needed. URL: https://github.com/apache/druid/pull/9181#issuecomment-575439630 This pull request **fixes 1 alert** when merging de0697cb1834f77a2fafc57e5d56673a558c5e83 into 486c0fd149d9837a64550ecb9e85d9b6cd4beb24 - [view on LGTM.com](https://lgtm.com/projects/g/apache/druid/rev/pr-cbc76f7454ddad92381f9db32c521dcbd504afb8) **fixed alerts:** * 1 for Useless null check This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jihoonson commented on issue #9199: Fix TSV bugs

jihoonson commented on issue #9199: Fix TSV bugs URL: https://github.com/apache/druid/pull/9199#issuecomment-575437622 @jon-wei @clintropolis thanks for the review. I needed to delete one test and modify another which was added in https://github.com/apache/druid/pull/8915 because the delimited input format doesn't support those functionalities (recognizing quotes). This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] suneet-s opened a new pull request #9205: [0.17.0] Tutorials use new ingestion spec where possible (#9155)

suneet-s opened a new pull request #9205: [0.17.0] Tutorials use new ingestion spec where possible (#9155) URL: https://github.com/apache/druid/pull/9205 Backports the following commits to 0.17.0: - Tutorials use new ingestion spec where possible (#9155) This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] suneet-s opened a new pull request #9204: [0.17.0] Link javaOpts to middlemanager runtime.properties docs (#9101)

suneet-s opened a new pull request #9204: [0.17.0] Link javaOpts to middlemanager runtime.properties docs (#9101) URL: https://github.com/apache/druid/pull/9204 Backports the following commits to 0.17.0: - Link javaOpts to middlemanager runtime.properties docs (#9101) This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] clintropolis commented on a change in pull request #9181: Speed up String first/last aggregators when folding isn't needed.

clintropolis commented on a change in pull request #9181: Speed up String

first/last aggregators when folding isn't needed.

URL: https://github.com/apache/druid/pull/9181#discussion_r367736165

##

File path:

core/src/test/java/org/apache/druid/java/util/common/StringUtilsTest.java

##

@@ -246,4 +246,32 @@ public void testRpad()

Assert.assertEquals(s5, null);

}

+ @Test

+ public void testChop()

+ {

+Assert.assertEquals("foo", StringUtils.chop("foo", 5));

+Assert.assertEquals("fo", StringUtils.chop("foo", 2));

+Assert.assertEquals("", StringUtils.chop("foo", 0));

+Assert.assertEquals("smile for", StringUtils.chop("smile for the

camera", 14));

Review comment:

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] clintropolis opened a new pull request #9203: [Backport] Web console: fix refresh button in segments view

clintropolis opened a new pull request #9203: [Backport] Web console: fix refresh button in segments view URL: https://github.com/apache/druid/pull/9203 Backport of #9195 to 0.17.0. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] clintropolis opened a new pull request #9202: [Backport] fix null handling for arithmetic post aggregator comparator

clintropolis opened a new pull request #9202: [Backport] fix null handling for arithmetic post aggregator comparator URL: https://github.com/apache/druid/pull/9202 Backport of #9159 to 0.17.0. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] gianm commented on issue #9181: Speed up String first/last aggregators when folding isn't needed.

gianm commented on issue #9181: Speed up String first/last aggregators when folding isn't needed. URL: https://github.com/apache/druid/pull/9181#issuecomment-575427555 > There's a TC error about an unresolved reference to the chop method That was from a javadoc for `fastLooseChop`. It looks like `chop` was moved to StringUtils, so I moved `fastLooseChop` to the same place. And added unit tests for good measure. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jon-wei opened a new pull request #9201: [Backport] Update Kinesis resharding information about task failures (#9104)

jon-wei opened a new pull request #9201: [Backport] Update Kinesis resharding information about task failures (#9104) URL: https://github.com/apache/druid/pull/9201 Backport #9104 to 0.17.0 This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] vogievetsky commented on issue #9190: Docs: move search to the left

vogievetsky commented on issue #9190: Docs: move search to the left URL: https://github.com/apache/druid/pull/9190#issuecomment-575421536 @fjy the [docusaurus](https://docusaurus.io/docs/en/search) template forces you to have a search in the header. Putting it in the ToC would be a lot more work. Do you think this position is better than before? This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jon-wei commented on issue #9181: Speed up String first/last aggregators when folding isn't needed.

jon-wei commented on issue #9181: Speed up String first/last aggregators when folding isn't needed. URL: https://github.com/apache/druid/pull/9181#issuecomment-575418815 There's a TC error about an resolved reference to the chop method This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jon-wei edited a comment on issue #9181: Speed up String first/last aggregators when folding isn't needed.

jon-wei edited a comment on issue #9181: Speed up String first/last aggregators when folding isn't needed. URL: https://github.com/apache/druid/pull/9181#issuecomment-575418815 There's a TC error about an unresolved reference to the chop method This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jon-wei commented on a change in pull request #9171: Doc update for the new input source and the new input format

jon-wei commented on a change in pull request #9171: Doc update for the new

input source and the new input format

URL: https://github.com/apache/druid/pull/9171#discussion_r367716973

##

File path: docs/ingestion/hadoop.md

##

@@ -149,11 +149,12 @@ For example, using the static input paths:

```

You can also read from cloud storage such as AWS S3 or Google Cloud Storage.

-To do so, you need to install the necessary library under

`${DRUID_HOME}/hadoop-dependencies` in _all MiddleManager or Indexer processes_.

+To do so, you need to install the necessary library under Druid's classpath in

_all MiddleManager or Indexer processes_.

For S3, you can run the below command to install the [Hadoop AWS

module](https://hadoop.apache.org/docs/current/hadoop-aws/tools/hadoop-aws/index.html).

```bash

java -classpath "${DRUID_HOME}lib/*" org.apache.druid.cli.Main tools pull-deps

-h "org.apache.hadoop:hadoop-aws:${HADOOP_VERSION}";

+cp

${DRUID_HOME}/hadoop-dependencies/hadoop-aws/${HADOOP_VERSION}/hadoop-aws-${HADOOP_VERSION}.jar

${DRUID_HOME}/extensions/druid-hdfs-storage/

Review comment:

This should go before the java command

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jon-wei commented on a change in pull request #9171: Doc update for the new input source and the new input format

jon-wei commented on a change in pull request #9171: Doc update for the new

input source and the new input format

URL: https://github.com/apache/druid/pull/9171#discussion_r367716973

##

File path: docs/ingestion/hadoop.md

##

@@ -149,11 +149,12 @@ For example, using the static input paths:

```

You can also read from cloud storage such as AWS S3 or Google Cloud Storage.

-To do so, you need to install the necessary library under

`${DRUID_HOME}/hadoop-dependencies` in _all MiddleManager or Indexer processes_.

+To do so, you need to install the necessary library under Druid's classpath in

_all MiddleManager or Indexer processes_.

For S3, you can run the below command to install the [Hadoop AWS

module](https://hadoop.apache.org/docs/current/hadoop-aws/tools/hadoop-aws/index.html).

```bash

java -classpath "${DRUID_HOME}lib/*" org.apache.druid.cli.Main tools pull-deps

-h "org.apache.hadoop:hadoop-aws:${HADOOP_VERSION}";

+cp

${DRUID_HOME}/hadoop-dependencies/hadoop-aws/${HADOOP_VERSION}/hadoop-aws-${HADOOP_VERSION}.jar

${DRUID_HOME}/extensions/druid-hdfs-storage/

Review comment:

This should go before the java command

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jon-wei commented on a change in pull request #9171: Doc update for the new input source and the new input format

jon-wei commented on a change in pull request #9171: Doc update for the new input source and the new input format URL: https://github.com/apache/druid/pull/9171#discussion_r367720309 ## File path: docs/development/extensions-core/hdfs.md ## @@ -94,7 +94,7 @@ For more configurations, see the [Hadoop AWS module](https://hadoop.apache.org/d Configuration for Google Cloud Storage -To use the Google cloud Storage as the deep storage, you need to configure `druid.storage.storageDirectory` properly. +To use the Google Cloud Storage as the deep storage, you need to configure `druid.storage.storageDirectory` properly. Review comment: For the installation section below, I think we could point to https://github.com/GoogleCloudPlatform/bigdata-interop/blob/master/gcs/INSTALL.md and say the following, and remove the parts where we duplicate their setup instructions: > Please follow the instructions at https://github.com/GoogleCloudPlatform/bigdata-interop/blob/master/gcs/INSTALL.md for configuring your `core-site.xml` with the filesystem and authentication properties needed for GCS." We can also add the following (it took me a while to find a download link for the connector): > The GCS connector library is available at https://cloud.google.com/dataproc/docs/concepts/connectors/cloud-storage#other_sparkhadoop_clusters The line below: "Tested with Druid 0.9.0, Hadoop 2.7.2 and gcs-connector jar 1.4.4-hadoop2." can be updated to "Tested with Druid 0.17.0, Hadoop 2.8.5 and gcs-connector jar 2.0.0-hadoop2. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] gianm commented on a change in pull request #9200: Optimize JoinCondition matching

gianm commented on a change in pull request #9200: Optimize JoinCondition

matching

URL: https://github.com/apache/druid/pull/9200#discussion_r367719384

##

File path:

processing/src/main/java/org/apache/druid/segment/join/JoinConditionAnalysis.java

##

@@ -133,26 +142,23 @@ public String getOriginalExpression()

*/

public boolean isAlwaysFalse()

{

-return nonEquiConditions.stream()

-.anyMatch(expr -> expr.isLiteral() &&

!expr.eval(ExprUtils.nilBindings()).asBoolean());

+return anyFalseLiteralNonEquiConditions;

}

/**

* Return whether this condition is a constant that is always true.

*/

public boolean isAlwaysTrue()

{

-return equiConditions.isEmpty() &&

- nonEquiConditions.stream()

-.allMatch(expr -> expr.isLiteral() &&

expr.eval(ExprUtils.nilBindings()).asBoolean());

+return equiConditions.isEmpty() && allTrueLiteralNonEquiConditions;

Review comment:

It seems like `allTrueLiteralNonEquiConditions` is only used here; how about

caching `isAlwaysTrue` directly?

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] gianm commented on a change in pull request #9200: Optimize JoinCondition matching

gianm commented on a change in pull request #9200: Optimize JoinCondition

matching

URL: https://github.com/apache/druid/pull/9200#discussion_r367719499

##

File path:

processing/src/main/java/org/apache/druid/segment/join/JoinConditionAnalysis.java

##

@@ -133,26 +142,23 @@ public String getOriginalExpression()

*/

public boolean isAlwaysFalse()

{

-return nonEquiConditions.stream()

-.anyMatch(expr -> expr.isLiteral() &&

!expr.eval(ExprUtils.nilBindings()).asBoolean());

+return anyFalseLiteralNonEquiConditions;

Review comment:

Why not call this `isAlwaysFalse`? (It looks like it isn't used anywhere

else, and it seems to me to be easier to understand the meaning of the field if

it's named after what we want it to mean.)

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] clintropolis merged pull request #9129: Bump Apache Parquet to 1.11.0

clintropolis merged pull request #9129: Bump Apache Parquet to 1.11.0 URL: https://github.com/apache/druid/pull/9129 This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[druid] branch master updated (bd49ec0 -> 486c0fd)

This is an automated email from the ASF dual-hosted git repository. cwylie pushed a change to branch master in repository https://gitbox.apache.org/repos/asf/druid.git. from bd49ec0 Move result-to-array logic from SQL layer into QueryToolChests. (#9130) add 486c0fd Bump Apache Parquet to 1.11.0 (#9129) No new revisions were added by this update. Summary of changes: extensions-core/parquet-extensions/pom.xml | 2 +- licenses.yaml | 3 ++- 2 files changed, 3 insertions(+), 2 deletions(-) - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jihoonson commented on a change in pull request #9199: Fix TSV bugs

jihoonson commented on a change in pull request #9199: Fix TSV bugs

URL: https://github.com/apache/druid/pull/9199#discussion_r367709959

##

File path: core/src/main/java/org/apache/druid/data/input/impl/CSVParser.java

##

@@ -0,0 +1,56 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one

+ * or more contributor license agreements. See the NOTICE file

+ * distributed with this work for additional information

+ * regarding copyright ownership. The ASF licenses this file

+ * to you under the Apache License, Version 2.0 (the

+ * "License"); you may not use this file except in compliance

+ * with the License. You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing,

+ * software distributed under the License is distributed on an

+ * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

+ * KIND, either express or implied. See the License for the

+ * specific language governing permissions and limitations

+ * under the License.

+ */

+

+package org.apache.druid.data.input.impl;

+

+import com.opencsv.RFC4180Parser;

+import com.opencsv.RFC4180ParserBuilder;

+import com.opencsv.enums.CSVReaderNullFieldIndicator;

+import org.apache.druid.common.config.NullHandling;

+import

org.apache.druid.data.input.impl.DelimitedValueReader.DelimitedValueParser;

+

+import java.io.IOException;

+import java.util.Arrays;

+import java.util.List;

+

+public class CSVParser implements DelimitedValueParser

+{

+ private static final char SEPERATOR = ',';

Review comment:

Thanks, fixed.

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jihoonson commented on a change in pull request #9199: Fix TSV bugs

jihoonson commented on a change in pull request #9199: Fix TSV bugs

URL: https://github.com/apache/druid/pull/9199#discussion_r367710195

##

File path:

core/src/main/java/org/apache/druid/data/input/impl/FlatTextInputFormat.java

##

@@ -0,0 +1,140 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one

+ * or more contributor license agreements. See the NOTICE file

+ * distributed with this work for additional information

+ * regarding copyright ownership. The ASF licenses this file

+ * to you under the Apache License, Version 2.0 (the

+ * "License"); you may not use this file except in compliance

+ * with the License. You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing,

+ * software distributed under the License is distributed on an

+ * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

+ * KIND, either express or implied. See the License for the

+ * specific language governing permissions and limitations

+ * under the License.

+ */

+

+package org.apache.druid.data.input.impl;

+

+import com.fasterxml.jackson.annotation.JsonProperty;

+import com.google.common.base.Preconditions;

+import com.google.common.collect.ImmutableList;

+import org.apache.druid.data.input.InputFormat;

+import org.apache.druid.indexer.Checks;

+import org.apache.druid.indexer.Property;

+

+import javax.annotation.Nullable;

+import java.util.Collections;

+import java.util.List;

+import java.util.Objects;

+

+public abstract class FlatTextInputFormat implements InputFormat

+{

+ private final List columns;

+ private final String listDelimiter;

+ private final String delimiter;

+ private final boolean findColumnsFromHeader;

+ private final int skipHeaderRows;

+

+ FlatTextInputFormat(

+ @Nullable List columns,

+ @Nullable String listDelimiter,

+ String delimiter,

+ @Nullable Boolean hasHeaderRow,

+ @Nullable Boolean findColumnsFromHeader,

+ int skipHeaderRows

+ )

+ {

+this.columns = columns == null ? Collections.emptyList() : columns;

+this.listDelimiter = listDelimiter;

+this.delimiter = Preconditions.checkNotNull(delimiter, "delimiter");

+//noinspection ConstantConditions

+if (columns == null || columns.isEmpty()) {

+ this.findColumnsFromHeader = Checks.checkOneNotNullOrEmpty(

+ ImmutableList.of(

+ new Property<>("hasHeaderRow", hasHeaderRow),

+ new Property<>("findColumnsFromHeader", findColumnsFromHeader)

+ )

+ ).getValue();

+} else {

+ this.findColumnsFromHeader = false;

+}

+this.skipHeaderRows = skipHeaderRows;

+Preconditions.checkArgument(

+!delimiter.equals(listDelimiter),

+"Cannot have same delimiter and list delimiter of [%s]",

+delimiter

+);

+if (!this.columns.isEmpty()) {

+ for (String column : this.columns) {

+Preconditions.checkArgument(

Review comment:

Hmm, I'm not sure why we do this check.. I guess it wouldn't harm anything

if the column name contains the delimiter. Maybe we can remove this check later.

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jon-wei commented on a change in pull request #9199: Fix TSV bugs

jon-wei commented on a change in pull request #9199: Fix TSV bugs

URL: https://github.com/apache/druid/pull/9199#discussion_r367707521

##

File path:

core/src/main/java/org/apache/druid/data/input/impl/FlatTextInputFormat.java

##

@@ -0,0 +1,140 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one

+ * or more contributor license agreements. See the NOTICE file

+ * distributed with this work for additional information

+ * regarding copyright ownership. The ASF licenses this file

+ * to you under the Apache License, Version 2.0 (the

+ * "License"); you may not use this file except in compliance

+ * with the License. You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing,

+ * software distributed under the License is distributed on an

+ * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

+ * KIND, either express or implied. See the License for the

+ * specific language governing permissions and limitations

+ * under the License.

+ */

+

+package org.apache.druid.data.input.impl;

+

+import com.fasterxml.jackson.annotation.JsonProperty;

+import com.google.common.base.Preconditions;

+import com.google.common.collect.ImmutableList;

+import org.apache.druid.data.input.InputFormat;

+import org.apache.druid.indexer.Checks;

+import org.apache.druid.indexer.Property;

+

+import javax.annotation.Nullable;

+import java.util.Collections;

+import java.util.List;

+import java.util.Objects;

+

+public abstract class FlatTextInputFormat implements InputFormat

+{

+ private final List columns;

+ private final String listDelimiter;

+ private final String delimiter;

+ private final boolean findColumnsFromHeader;

+ private final int skipHeaderRows;

+

+ FlatTextInputFormat(

+ @Nullable List columns,

+ @Nullable String listDelimiter,

+ String delimiter,

+ @Nullable Boolean hasHeaderRow,

+ @Nullable Boolean findColumnsFromHeader,

+ int skipHeaderRows

+ )

+ {

+this.columns = columns == null ? Collections.emptyList() : columns;

+this.listDelimiter = listDelimiter;

+this.delimiter = Preconditions.checkNotNull(delimiter, "delimiter");

+//noinspection ConstantConditions

+if (columns == null || columns.isEmpty()) {

+ this.findColumnsFromHeader = Checks.checkOneNotNullOrEmpty(

+ ImmutableList.of(

+ new Property<>("hasHeaderRow", hasHeaderRow),

+ new Property<>("findColumnsFromHeader", findColumnsFromHeader)

+ )

+ ).getValue();

+} else {

+ this.findColumnsFromHeader = false;

+}

+this.skipHeaderRows = skipHeaderRows;

+Preconditions.checkArgument(

+!delimiter.equals(listDelimiter),

+"Cannot have same delimiter and list delimiter of [%s]",

+delimiter

+);

+if (!this.columns.isEmpty()) {

+ for (String column : this.columns) {

+Preconditions.checkArgument(

Review comment:

Does this need to check for `listDelimiter` in the column names as well?

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jon-wei commented on a change in pull request #9199: Fix TSV bugs

jon-wei commented on a change in pull request #9199: Fix TSV bugs

URL: https://github.com/apache/druid/pull/9199#discussion_r367704911

##

File path: core/src/main/java/org/apache/druid/data/input/impl/CSVParser.java

##

@@ -0,0 +1,56 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one

+ * or more contributor license agreements. See the NOTICE file

+ * distributed with this work for additional information

+ * regarding copyright ownership. The ASF licenses this file

+ * to you under the Apache License, Version 2.0 (the

+ * "License"); you may not use this file except in compliance

+ * with the License. You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing,

+ * software distributed under the License is distributed on an

+ * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

+ * KIND, either express or implied. See the License for the

+ * specific language governing permissions and limitations

+ * under the License.

+ */

+

+package org.apache.druid.data.input.impl;

+

+import com.opencsv.RFC4180Parser;

+import com.opencsv.RFC4180ParserBuilder;

+import com.opencsv.enums.CSVReaderNullFieldIndicator;

+import org.apache.druid.common.config.NullHandling;

+import

org.apache.druid.data.input.impl.DelimitedValueReader.DelimitedValueParser;

+

+import java.io.IOException;

+import java.util.Arrays;

+import java.util.List;

+

+public class CSVParser implements DelimitedValueParser

+{

+ private static final char SEPERATOR = ',';

Review comment:

SEPERATOR -> SEPARATOR

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jihoonson commented on a change in pull request #9171: Doc update for the new input source and the new input format

jihoonson commented on a change in pull request #9171: Doc update for the new

input source and the new input format

URL: https://github.com/apache/druid/pull/9171#discussion_r367707446

##

File path: docs/development/extensions-core/hdfs.md

##

@@ -36,49 +36,110 @@ To use this Apache Druid extension, make sure to

[include](../../development/ext

|`druid.hadoop.security.kerberos.principal`|`dr...@example.com`| Principal

user name |empty|

|`druid.hadoop.security.kerberos.keytab`|`/etc/security/keytabs/druid.headlessUser.keytab`|Path

to keytab file|empty|

-If you are using the Hadoop indexer, set your output directory to be a

location on Hadoop and it will work.

+Besides the above settings, you also need to include all Hadoop configuration

files (such as `core-site.xml`, `hdfs-site.xml`)

+in the Druid classpath. One way to do this is copying all those files under

`${DRUID_HOME}/conf/_common`.

+

+If you are using the Hadoop ingestion, set your output directory to be a

location on Hadoop and it will work.

If you want to eagerly authenticate against a secured hadoop/hdfs cluster you

must set `druid.hadoop.security.kerberos.principal` and

`druid.hadoop.security.kerberos.keytab`, this is an alternative to the cron job

method that runs `kinit` command periodically.

-### Configuration for Google Cloud Storage

+### Configuration for Cloud Storage

+

+You can also use the AWS S3 or the Google Cloud Storage as the deep storage

via HDFS.

+

+ Configuration for AWS S3

-The HDFS extension can also be used for GCS as deep storage.

+To use the AWS S3 as the deep storage, you need to configure

`druid.storage.storageDirectory` properly.

|Property|Possible Values|Description|Default|

||---|---|---|

-|`druid.storage.type`|hdfs||Must be set.|

-|`druid.storage.storageDirectory`||gs://bucket/example/directory|Must be set.|

+|`druid.storage.type`|hdfs| |Must be set.|

+|`druid.storage.storageDirectory`|s3a://bucket/example/directory or

s3n://bucket/example/directory|Path to the deep storage|Must be set.|

-All services that need to access GCS need to have the [GCS connector

jar](https://cloud.google.com/hadoop/google-cloud-storage-connector#manualinstallation)

in their class path. One option is to place this jar in /lib/ and

/extensions/druid-hdfs-storage/

+You also need to include the [Hadoop AWS

module](https://hadoop.apache.org/docs/current/hadoop-aws/tools/hadoop-aws/index.html),

especially the `hadoop-aws.jar` in the Druid classpath.

+Run the below command to install the `hadoop-aws.jar` file under

`${DRUID_HOME}/extensions/druid-hdfs-storage` in all nodes.

-Tested with Druid 0.9.0, Hadoop 2.7.2 and gcs-connector jar 1.4.4-hadoop2.

-

-

+```bash

+java -classpath "${DRUID_HOME}lib/*" org.apache.druid.cli.Main tools pull-deps

-h "org.apache.hadoop:hadoop-aws:${HADOOP_VERSION}";

+cp

${DRUID_HOME}/hadoop-dependencies/hadoop-aws/${HADOOP_VERSION}/hadoop-aws-${HADOOP_VERSION}.jar

${DRUID_HOME}/extensions/druid-hdfs-storage/

+```

-## Native batch ingestion

+Finally, you need to add the below properties in the `core-site.xml`.

+For more configurations, see the [Hadoop AWS

module](https://hadoop.apache.org/docs/current/hadoop-aws/tools/hadoop-aws/index.html).

+

+```xml

+

+ fs.s3a.impl

+ org.apache.hadoop.fs.s3a.S3AFileSystem

+ The implementation class of the S3A Filesystem

+

+

+

+ fs.AbstractFileSystem.s3a.impl

+ org.apache.hadoop.fs.s3a.S3A

+ The implementation class of the S3A

AbstractFileSystem.

+

+

+

+ fs.s3a.access.key

+ AWS access key ID. Omit for IAM role-based or provider-based

authentication.

+ your access key

+

+

+

+ fs.s3a.secret.key

+ AWS secret key. Omit for IAM role-based or provider-based

authentication.

+ your secret key

+

+```

-This firehose ingests events from a predefined list of files from a Hadoop

filesystem.

-This firehose is _splittable_ and can be used by [native parallel index

tasks](../../ingestion/native-batch.md#parallel-task).

-Since each split represents an HDFS file, each worker task of `index_parallel`

will read an object.

+ Configuration for Google Cloud Storage

-Sample spec:

+To use the Google cloud Storage as the deep storage, you need to configure

`druid.storage.storageDirectory` properly.

Review comment:

Thanks, fixed.

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jihoonson commented on a change in pull request #9171: Doc update for the new input source and the new input format

jihoonson commented on a change in pull request #9171: Doc update for the new

input source and the new input format

URL: https://github.com/apache/druid/pull/9171#discussion_r367707404

##

File path: docs/development/modules.md

##

@@ -148,29 +150,43 @@ To start a segment killing task, you need to access the

old Coordinator console

After the killing task ends, `index.zip` (`partitionNum_index.zip` for HDFS

data storage) file should be deleted from the data storage.

-### Adding a new Firehose

+### Adding support for a new input source

-There is an example of this in the `s3-extensions` module with the

StaticS3FirehoseFactory.

+Adding support for a new input source requires to implement three interfaces,

i.e., `InputSource`, `InputEntity`, and `InputSourceReader`.

+`InputSource` is to define where the input data is stored. `InputEntity` is to

define how data can be read in parallel

+in [native parallel indexing](../ingestion/native-batch.md).

+`InputSourceReader` defines how to read your new input source and you can

simply use the provided `InputEntityIteratingReader` in most cases.

-Adding a Firehose is done almost entirely through the Jackson Modules instead

of Guice. Specifically, note the implementation

+There is an example of this in the `druid-s3-extensions` module with the

`S3InputSource` and `S3Entity`.

+

+Adding an InputSource is done almost entirely through the Jackson Modules

instead of Guice. Specifically, note the implementation

``` java

@Override

public List getJacksonModules()

{

return ImmutableList.of(

- new SimpleModule().registerSubtypes(new

NamedType(StaticS3FirehoseFactory.class, "static-s3"))

+ new SimpleModule().registerSubtypes(new

NamedType(S3InputSource.class, "s3"))

);

}

```

-This is registering the FirehoseFactory with Jackson's polymorphic

serialization/deserialization layer. More concretely, having this will mean

that if you specify a `"firehose": { "type": "static-s3", ... }` in your

realtime config, then the system will load this FirehoseFactory for your

firehose.

+This is registering the InputSource with Jackson's polymorphic

serialization/deserialization layer. More concretely, having this will mean

that if you specify a `"inputSource": { "type": "s3", ... }` in your IO config,

then the system will load this InputSource for your `InputSource`

implementation.

+

+Note that inside of Druid, we have made the @JacksonInject annotation for

Jackson deserialized objects actually use the base Guice injector to resolve

the object to be injected. So, if your InputSource needs access to some

object, you can add a @JacksonInject annotation on a setter and it will get set

on instantiation.

Review comment:

Added.

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jihoonson commented on a change in pull request #9171: Doc update for the new input source and the new input format

jihoonson commented on a change in pull request #9171: Doc update for the new

input source and the new input format

URL: https://github.com/apache/druid/pull/9171#discussion_r367707398

##

File path: docs/development/modules.md

##

@@ -148,29 +150,43 @@ To start a segment killing task, you need to access the

old Coordinator console

After the killing task ends, `index.zip` (`partitionNum_index.zip` for HDFS

data storage) file should be deleted from the data storage.

-### Adding a new Firehose

+### Adding support for a new input source

-There is an example of this in the `s3-extensions` module with the

StaticS3FirehoseFactory.

+Adding support for a new input source requires to implement three interfaces,

i.e., `InputSource`, `InputEntity`, and `InputSourceReader`.

+`InputSource` is to define where the input data is stored. `InputEntity` is to

define how data can be read in parallel

+in [native parallel indexing](../ingestion/native-batch.md).

+`InputSourceReader` defines how to read your new input source and you can

simply use the provided `InputEntityIteratingReader` in most cases.

-Adding a Firehose is done almost entirely through the Jackson Modules instead

of Guice. Specifically, note the implementation

+There is an example of this in the `druid-s3-extensions` module with the

`S3InputSource` and `S3Entity`.

+

+Adding an InputSource is done almost entirely through the Jackson Modules

instead of Guice. Specifically, note the implementation

``` java

@Override

public List getJacksonModules()

{

return ImmutableList.of(

- new SimpleModule().registerSubtypes(new

NamedType(StaticS3FirehoseFactory.class, "static-s3"))

+ new SimpleModule().registerSubtypes(new

NamedType(S3InputSource.class, "s3"))

);

}

```

-This is registering the FirehoseFactory with Jackson's polymorphic

serialization/deserialization layer. More concretely, having this will mean

that if you specify a `"firehose": { "type": "static-s3", ... }` in your

realtime config, then the system will load this FirehoseFactory for your

firehose.

+This is registering the InputSource with Jackson's polymorphic

serialization/deserialization layer. More concretely, having this will mean

that if you specify a `"inputSource": { "type": "s3", ... }` in your IO config,

then the system will load this InputSource for your `InputSource`

implementation.

+

+Note that inside of Druid, we have made the @JacksonInject annotation for

Jackson deserialized objects actually use the base Guice injector to resolve

the object to be injected. So, if your InputSource needs access to some

object, you can add a @JacksonInject annotation on a setter and it will get set

on instantiation.

+

+### Adding support for a new data format

+

+Adding support for a new data format requires to implement two interfaces,

i.e., `InputFormat` and `InputEntityReader`.

Review comment:

Fixed, thanks.

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jihoonson commented on a change in pull request #9171: Doc update for the new input source and the new input format

jihoonson commented on a change in pull request #9171: Doc update for the new

input source and the new input format

URL: https://github.com/apache/druid/pull/9171#discussion_r367707455

##

File path: docs/development/extensions-core/hdfs.md

##

@@ -36,49 +36,110 @@ To use this Apache Druid extension, make sure to

[include](../../development/ext

|`druid.hadoop.security.kerberos.principal`|`dr...@example.com`| Principal

user name |empty|

|`druid.hadoop.security.kerberos.keytab`|`/etc/security/keytabs/druid.headlessUser.keytab`|Path

to keytab file|empty|

-If you are using the Hadoop indexer, set your output directory to be a

location on Hadoop and it will work.

+Besides the above settings, you also need to include all Hadoop configuration

files (such as `core-site.xml`, `hdfs-site.xml`)

+in the Druid classpath. One way to do this is copying all those files under

`${DRUID_HOME}/conf/_common`.

+

+If you are using the Hadoop ingestion, set your output directory to be a

location on Hadoop and it will work.

If you want to eagerly authenticate against a secured hadoop/hdfs cluster you

must set `druid.hadoop.security.kerberos.principal` and

`druid.hadoop.security.kerberos.keytab`, this is an alternative to the cron job

method that runs `kinit` command periodically.

-### Configuration for Google Cloud Storage

+### Configuration for Cloud Storage

+

+You can also use the AWS S3 or the Google Cloud Storage as the deep storage

via HDFS.

+

+ Configuration for AWS S3

-The HDFS extension can also be used for GCS as deep storage.

+To use the AWS S3 as the deep storage, you need to configure

`druid.storage.storageDirectory` properly.

|Property|Possible Values|Description|Default|

||---|---|---|

-|`druid.storage.type`|hdfs||Must be set.|

-|`druid.storage.storageDirectory`||gs://bucket/example/directory|Must be set.|

+|`druid.storage.type`|hdfs| |Must be set.|

+|`druid.storage.storageDirectory`|s3a://bucket/example/directory or

s3n://bucket/example/directory|Path to the deep storage|Must be set.|

-All services that need to access GCS need to have the [GCS connector

jar](https://cloud.google.com/hadoop/google-cloud-storage-connector#manualinstallation)

in their class path. One option is to place this jar in /lib/ and

/extensions/druid-hdfs-storage/

+You also need to include the [Hadoop AWS

module](https://hadoop.apache.org/docs/current/hadoop-aws/tools/hadoop-aws/index.html),

especially the `hadoop-aws.jar` in the Druid classpath.

+Run the below command to install the `hadoop-aws.jar` file under

`${DRUID_HOME}/extensions/druid-hdfs-storage` in all nodes.

-Tested with Druid 0.9.0, Hadoop 2.7.2 and gcs-connector jar 1.4.4-hadoop2.

-

-

+```bash

+java -classpath "${DRUID_HOME}lib/*" org.apache.druid.cli.Main tools pull-deps

-h "org.apache.hadoop:hadoop-aws:${HADOOP_VERSION}";

+cp

${DRUID_HOME}/hadoop-dependencies/hadoop-aws/${HADOOP_VERSION}/hadoop-aws-${HADOOP_VERSION}.jar

${DRUID_HOME}/extensions/druid-hdfs-storage/

+```

-## Native batch ingestion

+Finally, you need to add the below properties in the `core-site.xml`.

+For more configurations, see the [Hadoop AWS

module](https://hadoop.apache.org/docs/current/hadoop-aws/tools/hadoop-aws/index.html).

+

+```xml

+

+ fs.s3a.impl

+ org.apache.hadoop.fs.s3a.S3AFileSystem

+ The implementation class of the S3A Filesystem

+

+

+

+ fs.AbstractFileSystem.s3a.impl

+ org.apache.hadoop.fs.s3a.S3A

+ The implementation class of the S3A

AbstractFileSystem.

+

+

+

+ fs.s3a.access.key

+ AWS access key ID. Omit for IAM role-based or provider-based

authentication.

+ your access key

+

+

+

+ fs.s3a.secret.key

+ AWS secret key. Omit for IAM role-based or provider-based

authentication.

+ your secret key

+

+```

-This firehose ingests events from a predefined list of files from a Hadoop

filesystem.

-This firehose is _splittable_ and can be used by [native parallel index

tasks](../../ingestion/native-batch.md#parallel-task).

-Since each split represents an HDFS file, each worker task of `index_parallel`

will read an object.

+ Configuration for Google Cloud Storage

Review comment:

I added `google.cloud.auth.service.account.enable` property. Haven't checked

how it works, but just copied from

https://github.com/GoogleCloudDataproc/bigdata-interop/blob/master/gcs/INSTALL.md.

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] lgtm-com[bot] commented on issue #9181: Speed up String first/last aggregators when folding isn't needed.

lgtm-com[bot] commented on issue #9181: Speed up String first/last aggregators when folding isn't needed. URL: https://github.com/apache/druid/pull/9181#issuecomment-575400116 This pull request **fixes 1 alert** when merging c56d895caf30f0b3171ea5cc09615e551adeeae4 into 42359c93dd53f16e52ed79dcd8b63829f4bf2f7b - [view on LGTM.com](https://lgtm.com/projects/g/apache/druid/rev/pr-60ba177285eee825f576c2665f6a7661b4aff17a) **fixed alerts:** * 1 for Useless null check This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[druid] branch master updated (bfcb30e -> bd49ec0)

This is an automated email from the ASF dual-hosted git repository.

gian pushed a change to branch master

in repository https://gitbox.apache.org/repos/asf/druid.git.

from bfcb30e Add javadocs and small improvements to join code. (#9196)

add bd49ec0 Move result-to-array logic from SQL layer into

QueryToolChests. (#9130)

No new revisions were added by this update.

Summary of changes:

.../java/org/apache/druid/query/BaseQuery.java | 1 +

.../main/java/org/apache/druid/query/Query.java| 1 +

.../org/apache/druid/query/QueryToolChest.java | 49 +++-

.../query/groupby/GroupByQueryQueryToolChest.java | 11 +

.../apache/druid/query/scan/ScanQueryEngine.java | 6 +-

.../druid/query/scan/ScanQueryQueryToolChest.java | 75 ++

.../timeseries/TimeseriesQueryQueryToolChest.java | 43

.../druid/query/topn/TopNQueryQueryToolChest.java | 49

.../druid/query/QueryToolChestTestHelper.java} | 18 +-

.../groupby/GroupByQueryQueryToolChestTest.java| 109 +

.../query/scan/ScanQueryQueryToolChestTest.java| 205 +

.../TimeseriesQueryQueryToolChestTest.java | 64 +-

.../query/topn/TopNQueryQueryToolChestTest.java| 72 ++

.../org/apache/druid/server/QueryLifecycle.java| 1 +

.../sql/calcite/expression/SimpleExtraction.java | 28 ++-

.../apache/druid/sql/calcite/rel/QueryMaker.java | 254 +++--

16 files changed, 789 insertions(+), 197 deletions(-)

copy processing/src/{main/java/org/apache/druid/query/NoopQueryRunner.java =>

test/java/org/apache/druid/query/QueryToolChestTestHelper.java} (65%)

create mode 100644

processing/src/test/java/org/apache/druid/query/scan/ScanQueryQueryToolChestTest.java

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] gianm merged pull request #9130: Move result-to-array logic from SQL layer into QueryToolChests.

gianm merged pull request #9130: Move result-to-array logic from SQL layer into QueryToolChests. URL: https://github.com/apache/druid/pull/9130 This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

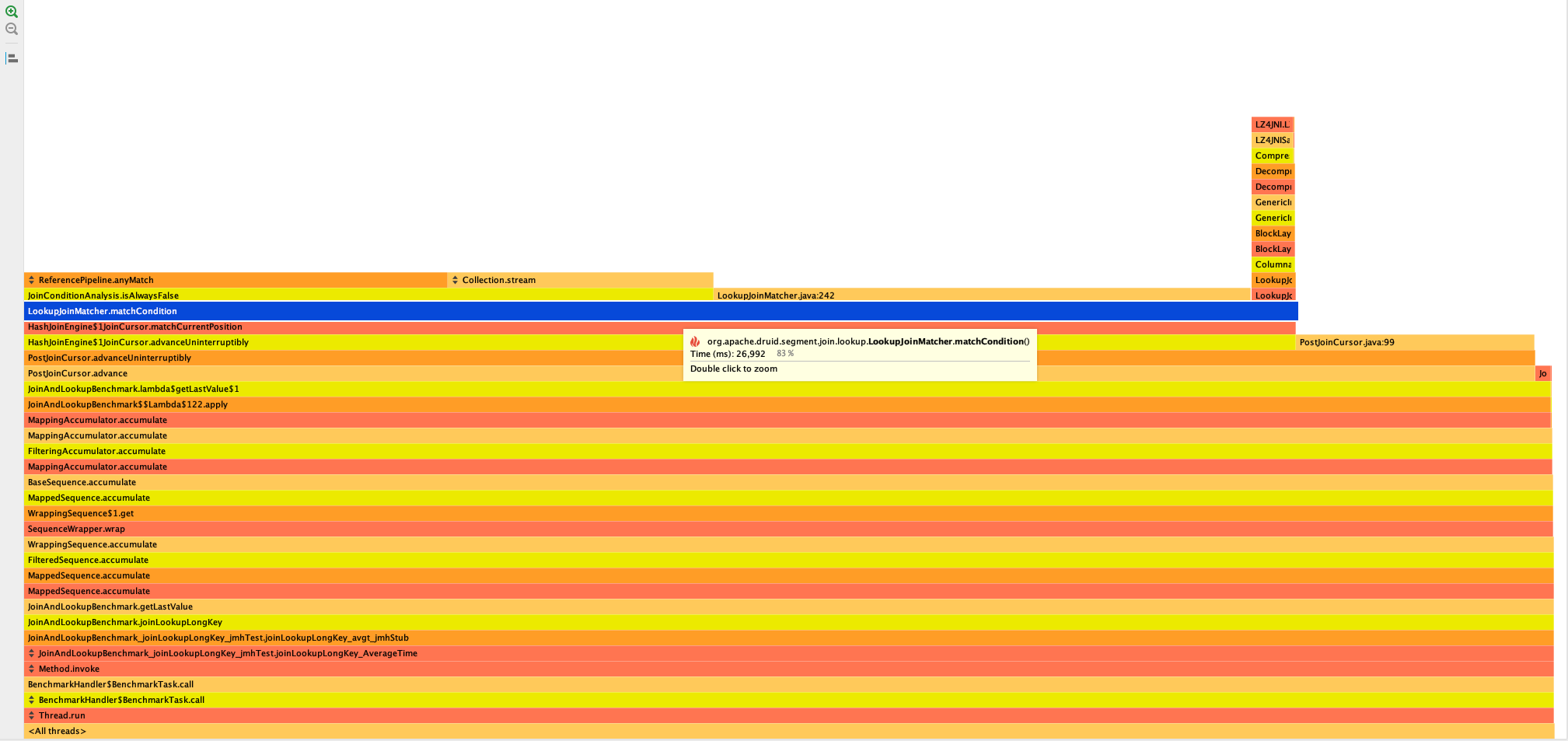

[GitHub] [druid] suneet-s opened a new pull request #9200: Optimize JoinCondition matching

suneet-s opened a new pull request #9200: Optimize JoinCondition matching URL: https://github.com/apache/druid/pull/9200 ### Description The LookupJoinMatcher needs to check if a condition is always true or false multiple times. This can be pre-computed to speed up the match checking This change reduces the time it takes to perform a for joining on a long key from ~ 36 ms/op to 23 ms/ op  This PR has: - [ ] been self-reviewed. - [ ] using the [concurrency checklist](https://github.com/apache/druid/blob/master/dev/code-review/concurrency.md) (Remove this item if the PR doesn't have any relation to concurrency.) - [ ] added documentation for new or modified features or behaviors. - [ ] added Javadocs for most classes and all non-trivial methods. Linked related entities via Javadoc links. - [ ] added or updated version, license, or notice information in [licenses.yaml](https://github.com/apache/druid/blob/master/licenses.yaml) - [ ] added comments explaining the "why" and the intent of the code wherever would not be obvious for an unfamiliar reader. - [ ] added unit tests or modified existing tests to cover new code paths. - [ ] added integration tests. - [ ] been tested in a test Druid cluster. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] [druid] jihoonson opened a new pull request #9199: Fix TSV bugs