[GitHub] [hudi] hudi-bot edited a comment on pull request #3173: [HUDI-1951] Add bucket hash index, compatible with the hive bucket

hudi-bot edited a comment on pull request #3173:
URL: https://github.com/apache/hudi/pull/3173#issuecomment-869653867

## CI report:

* 7aef86d8976d44c1427ccf944d3ecfa00b629159 Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=763)
* 5afbbaabe333b8d290c79dfdeb10d8d3aaca11c7 UNKNOWN

Bot commands
@hudi-bot supports the following commands:

- `@hudi-bot run travis` re-run the last Travis build
- `@hudi-bot run azure` re-run the last Azure build

--
This is an automated message from the Apache Git Service.
To respond to the message, please log on to GitHub and use the URL above to go to the specific comment.

To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org
For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[jira] [Commented] (HUDI-1860) Add INSERT_OVERWRITE support to DeltaStreamer

[ https://issues.apache.org/jira/browse/HUDI-1860?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17378064#comment-17378064 ]

ASF GitHub Bot commented on HUDI-1860:
--------------------------------------

hudi-bot edited a comment on pull request #3184:
URL: https://github.com/apache/hudi/pull/3184#issuecomment-870410669

## CI report:

* 15ea5785bc8556cd17b6dc4da5cce7d542fbd896 Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=674) Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=682)
* 4f3a389384a1fbf0deb654caa490f3b32c3b7e41 Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=821)

> Add INSERT_OVERWRITE support to DeltaStreamer
> ---------------------------------------------
>
> Key: HUDI-1860
> URL: https://issues.apache.org/jira/browse/HUDI-1860
> Project: Apache Hudi
> Issue Type: Sub-task
> Reporter: Sagar Sumit
> Assignee: Samrat Deb
> Priority: Major
> Labels: pull-request-available
> Original Estimate: 72h
> Remaining Estimate: 72h
>
> As discussed in [this RFC|https://cwiki.apache.org/confluence/display/HUDI/RFC+-+14+%3A+JDBC+incremental+puller],
> having full fetch mode use insert_overwrite when writing during sync would be
> better, as it can handle schema changes.

--
This message was sent by Atlassian Jira
(v8.3.4#803005)

[GitHub] [hudi] hudi-bot edited a comment on pull request #3184: [HUDI-1860] Add INSERT_OVERWRITE and INSERT_OVERWRITE_TABLE support to DeltaStreamer

hudi-bot edited a comment on pull request #3184:
URL: https://github.com/apache/hudi/pull/3184#issuecomment-870410669

## CI report:

* 15ea5785bc8556cd17b6dc4da5cce7d542fbd896 Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=674) Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=682)
* 4f3a389384a1fbf0deb654caa490f3b32c3b7e41 Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=821)

[GitHub] [hudi] codecov-commenter commented on pull request #3248: [MINOR] Fix some wrong assert reasons

codecov-commenter commented on pull request #3248:
URL: https://github.com/apache/hudi/pull/3248#issuecomment-877187703

# [Codecov](https://codecov.io/gh/apache/hudi/pull/3248?src=pr&el=h1&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation) Report
> Merging [#3248](https://codecov.io/gh/apache/hudi/pull/3248?src=pr&el=desc&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation) (64ad40a) into [master](https://codecov.io/gh/apache/hudi/commit/371526789d663dee85041eb31c27c52c81ef87ef?el=desc&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation) (3715267) will **decrease** coverage by `24.52%`.
> The diff coverage is `n/a`.

```diff
@@             Coverage Diff              @@
##             master    #3248       +/-   ##
- Coverage     27.40%    2.88%   -24.53%
+ Complexity     1287       85     -1202
  Files           381      281      -100
  Lines         15108    11620     -3488
  Branches       1305      952      -353
- Hits           4141      335     -3806
- Misses        10667    11259      +592
+ Partials        300       26      -274
```

| Flag | Coverage Δ | |
|---|---|---|
| hudiclient | `0.00% <ø> (-21.06%)` | :arrow_down: |
| hudisync | `5.37% <ø> (ø)` | |
| hudiutilities | `9.25% <ø> (-49.32%)` | :arrow_down: |

Flags with carried forward coverage won't be shown. [Click here](https://docs.codecov.io/docs/carryforward-flags?utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation#carryforward-flags-in-the-pull-request-comment) to find out more.
| [Impacted Files](https://codecov.io/gh/apache/hudi/pull/3248?src=pr&el=tree&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation) | Coverage Δ | |
|---|---|---|
| [...va/org/apache/hudi/utilities/IdentitySplitter.java](https://codecov.io/gh/apache/hudi/pull/3248/diff?src=pr&el=tree&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation#diff-aHVkaS11dGlsaXRpZXMvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2h1ZGkvdXRpbGl0aWVzL0lkZW50aXR5U3BsaXR0ZXIuamF2YQ==) | `0.00% <0.00%> (-100.00%)` | :arrow_down: |
| [...va/org/apache/hudi/utilities/schema/SchemaSet.java](https://codecov.io/gh/apache/hudi/pull/3248/diff?src=pr&el=tree&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation#diff-aHVkaS11dGlsaXRpZXMvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2h1ZGkvdXRpbGl0aWVzL3NjaGVtYS9TY2hlbWFTZXQuamF2YQ==) | `0.00% <0.00%> (-100.00%)` | :arrow_down: |
| [...a/org/apache/hudi/utilities/sources/RowSource.java](https://codecov.io/gh/apache/hudi/pull/3248/diff?src=pr&el=tree&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation#diff-aHVkaS11dGlsaXRpZXMvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2h1ZGkvdXRpbGl0aWVzL3NvdXJjZXMvUm93U291cmNlLmphdmE=) | `0.00% <0.00%> (-100.00%)` | :arrow_down: |
| [.../org/apache/hudi/utilities/sources/AvroSource.java](https://codecov.io/gh/apache/hudi/pull/3248/diff?src=pr&el=tree&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation#diff-aHVkaS11dGlsaXRpZXMvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2h1ZGkvdXRpbGl0aWVzL3NvdXJjZXMvQXZyb1NvdXJjZS5qYXZh) | `0.00% <0.00%> (-100.00%)` | :arrow_down: |
| [.../org/apache/hudi/utilities/sources/JsonSource.java](https://codecov.io/gh/apache/hudi/pull/3248/diff?src=pr&el=tree&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation#diff-aHVkaS11dGlsaXRpZXMvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2h1ZGkvdXRpbGl0aWVzL3NvdXJjZXMvSnNvblNvdXJjZS5qYXZh) | `0.00% <0.00%> (-100.00%)` | :arrow_down: |
| [...rg/apache/hudi/utilities/sources/CsvDFSSource.java](https://codecov.io/gh/apache/hudi/pull/3248/diff?src=pr&el=tree&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation#diff
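The headline percentages in this report can be re-derived from the raw counts in the coverage diff. The Java sketch below assumes that coverage is hits / (hits + misses + partials) and that displayed percentages are truncated to two decimals (Codecov's default `round: down`). The class and method names are illustrative only and belong to neither Hudi nor Codecov. Under these assumptions it reproduces 27.40% on master, 2.88% for #3248 and the 24.52% headline decrease. The `-24.53%` in the diff table presumably comes from rounding the same unrounded difference differently.

```java
import java.math.BigDecimal;
import java.math.MathContext;
import java.math.RoundingMode;

/** Illustrative re-derivation of Codecov-style coverage figures from raw counts (assumed formula). */
final class CoverageCheck {

    /** Unrounded coverage in percent: 100 * hits / (hits + misses + partials). */
    static BigDecimal rawPercent(long hits, long misses, long partials) {
        long tracked = hits + misses + partials;
        return BigDecimal.valueOf(hits * 100)
                .divide(BigDecimal.valueOf(tracked), MathContext.DECIMAL64);
    }

    /** Displayed coverage: the raw percentage truncated (rounded toward zero) to two decimals. */
    static BigDecimal coveragePercent(long hits, long misses, long partials) {
        return rawPercent(hits, misses, partials).setScale(2, RoundingMode.DOWN);
    }

    /** Size of the change, computed from the unrounded ratios and only then truncated. */
    static BigDecimal changeMagnitude(BigDecimal baseRaw, BigDecimal headRaw) {
        return headRaw.subtract(baseRaw).abs().setScale(2, RoundingMode.DOWN);
    }

    public static void main(String[] args) {
        // Counts copied from the PR #3248 coverage diff: master (3715267) vs. 64ad40a.
        BigDecimal baseRaw = rawPercent(4141, 10667, 300); // 15108 tracked lines
        BigDecimal headRaw = rawPercent(335, 11259, 26);   // 11620 tracked lines
        System.out.println("master: " + coveragePercent(4141, 10667, 300) + "%");
        System.out.println("#3248:  " + coveragePercent(335, 11259, 26) + "%");
        System.out.println("decrease: " + changeMagnitude(baseRaw, headRaw) + "%");
    }
}
```

Running `main` prints three lines ending in `27.40%`, `2.88%` and `24.52%`. Computing the change from the unrounded ratios, instead of subtracting the two truncated values, is what matches the headline in general. With the counts from the #3193 report (19657/19870/1748 on master, 2660/12283/165 on the PR), the same methods give 47.62%, 17.60% and a 30.01% decrease, whereas 47.62 - 17.60 would give 30.02.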

[jira] [Created] (HUDI-2157) Spark write the bucket index table

XiaoyuGeng created HUDI-2157:

Summary: Spark write the bucket index table
Key: HUDI-2157
URL: https://issues.apache.org/jira/browse/HUDI-2157
Project: Apache Hudi
Issue Type: New Feature
Reporter: XiaoyuGeng
Assignee: XiaoyuGeng

[GitHub] [hudi] hudi-bot edited a comment on pull request #3184: [HUDI-1860] Add INSERT_OVERWRITE and INSERT_OVERWRITE_TABLE support to DeltaStreamer

hudi-bot edited a comment on pull request #3184:
URL: https://github.com/apache/hudi/pull/3184#issuecomment-870410669

## CI report:

* 15ea5785bc8556cd17b6dc4da5cce7d542fbd896 Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=674) Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=682)
* 4f3a389384a1fbf0deb654caa490f3b32c3b7e41 UNKNOWN

[jira] [Commented] (HUDI-1860) Add INSERT_OVERWRITE support to DeltaStreamer

[ https://issues.apache.org/jira/browse/HUDI-1860?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17378063#comment-17378063 ]

ASF GitHub Bot commented on HUDI-1860:
--------------------------------------

hudi-bot edited a comment on pull request #3184:
URL: https://github.com/apache/hudi/pull/3184#issuecomment-870410669

## CI report:

* 15ea5785bc8556cd17b6dc4da5cce7d542fbd896 Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=674) Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=682)
* 4f3a389384a1fbf0deb654caa490f3b32c3b7e41 UNKNOWN

[GitHub] [hudi] hudi-bot edited a comment on pull request #3250: [MINOR] Fix EXTERNAL_RECORD_AND_SCHEMA_TRANSFORMATION config

hudi-bot edited a comment on pull request #3250:
URL: https://github.com/apache/hudi/pull/3250#issuecomment-877145194

## CI report:

* 0420f87e4e013d2c6c1fdff56b82e26d464c635c Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=820)

[jira] [Created] (HUDI-2156) Cluster the table with bucket index

XiaoyuGeng created HUDI-2156:

Summary: Cluster the table with bucket index
Key: HUDI-2156
URL: https://issues.apache.org/jira/browse/HUDI-2156
Project: Apache Hudi
Issue Type: New Feature
Reporter: XiaoyuGeng

[jira] [Updated] (HUDI-2155) Bulk insert support bucket index

[ https://issues.apache.org/jira/browse/HUDI-2155?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

XiaoyuGeng updated HUDI-2155:
-----------------------------
Summary: Bulk insert support bucket index (was: Bulk insert support Hive index)

> Bulk insert support bucket index
> --------------------------------
>
> Key: HUDI-2155
> URL: https://issues.apache.org/jira/browse/HUDI-2155
> Project: Apache Hudi
> Issue Type: New Feature
> Components: Index
> Reporter: XiaoyuGeng
> Assignee: XiaoyuGeng
> Priority: Major
>
> When the index key is of String type and the index config is empty.

[jira] [Created] (HUDI-2155) Bulk insert support Hive index

XiaoyuGeng created HUDI-2155:

Summary: Bulk insert support Hive index
Key: HUDI-2155
URL: https://issues.apache.org/jira/browse/HUDI-2155
Project: Apache Hudi
Issue Type: New Feature
Components: Index
Reporter: XiaoyuGeng
Assignee: XiaoyuGeng

When the index key is of String type and the index config is empty.

[jira] [Commented] (HUDI-2141) Integration flink metric in flink stream

[ https://issues.apache.org/jira/browse/HUDI-2141?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17378037#comment-17378037 ]

ASF GitHub Bot commented on HUDI-2141:
--------------------------------------

hudi-bot edited a comment on pull request #3235:
URL: https://github.com/apache/hudi/pull/3235#issuecomment-875512974

## CI report:

* 12f9fc5e0391242aaddc4e0c09ada8ee3c745a47 Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=819)

> Integration flink metric in flink stream
> ----------------------------------------
>
> Key: HUDI-2141
> URL: https://issues.apache.org/jira/browse/HUDI-2141
> Project: Apache Hudi
> Issue Type: Improvement
> Components: Flink Integration
> Reporter: yuzhaojing
> Assignee: yuzhaojing
> Priority: Major
> Labels: pull-request-available
>
> Hoodie metrics currently do not work in Flink streaming jobs because they
> were designed for batch processing; integrate Flink metrics into the Flink
> stream instead.

[GitHub] [hudi] hudi-bot edited a comment on pull request #3235: [HUDI-2141] Integration flink metric in flink stream

hudi-bot edited a comment on pull request #3235:
URL: https://github.com/apache/hudi/pull/3235#issuecomment-875512974

## CI report:

* 12f9fc5e0391242aaddc4e0c09ada8ee3c745a47 Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=819)

[GitHub] [hudi] hudi-bot edited a comment on pull request #3250: [MINOR] Fix EXTERNAL_RECORD_AND_SCHEMA_TRANSFORMATION config

hudi-bot edited a comment on pull request #3250:
URL: https://github.com/apache/hudi/pull/3250#issuecomment-877145194

## CI report:

* 0420f87e4e013d2c6c1fdff56b82e26d464c635c Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=820)

[GitHub] [hudi] hudi-bot commented on pull request #3250: [MINOR] Fix EXTERNAL_RECORD_AND_SCHEMA_TRANSFORMATION config

hudi-bot commented on pull request #3250:
URL: https://github.com/apache/hudi/pull/3250#issuecomment-877145194

## CI report:

* 0420f87e4e013d2c6c1fdff56b82e26d464c635c UNKNOWN

[GitHub] [hudi] hudi-bot edited a comment on pull request #3142: [HUDI-1483] Support async clustering for deltastreamer and Spark streaming

hudi-bot edited a comment on pull request #3142:
URL: https://github.com/apache/hudi/pull/3142#issuecomment-866996072

## CI report:

* 890e9822855fcd45c8387f83740975f43474cddc Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=814) Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=818)

[jira] [Commented] (HUDI-1483) async clustering for deltastreamer

[ https://issues.apache.org/jira/browse/HUDI-1483?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17378033#comment-17378033 ]

ASF GitHub Bot commented on HUDI-1483:
--------------------------------------

hudi-bot edited a comment on pull request #3142:
URL: https://github.com/apache/hudi/pull/3142#issuecomment-866996072

## CI report:

* 890e9822855fcd45c8387f83740975f43474cddc Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=814) Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=818)

> async clustering for deltastreamer
> ----------------------------------
>
> Key: HUDI-1483
> URL: https://issues.apache.org/jira/browse/HUDI-1483
> Project: Apache Hudi
> Issue Type: Sub-task
> Reporter: liwei
> Assignee: liwei
> Priority: Blocker
> Labels: pull-request-available
> Fix For: 0.9.0

[GitHub] [hudi] codope opened a new pull request #3250: [MINOR] Fix EXTERNAL_RECORD_AND_SCHEMA_TRANSFORMATION config

codope opened a new pull request #3250:
URL: https://github.com/apache/hudi/pull/3250

## What is the purpose of the pull request

The `EXTERNAL_RECORD_AND_SCHEMA_TRANSFORMATION` config key depends on `AVRO_SCHEMA`, which is no longer a static string. This PR sets the key correctly for that config.

## Verify this pull request

This pull request is already covered by existing tests, such as `TestHoodieWriteConfig`. An assertion for this particular config was added to verify the fix.

## Committer checklist

- [ ] Has a corresponding JIRA in PR title & commit
- [ ] Commit message is descriptive of the change
- [ ] CI is green
- [ ] Necessary doc changes done or have another open PR
- [ ] For large changes, please consider breaking it into sub-tasks under an umbrella JIRA.

[GitHub] [hudi] jazzyMin opened a new issue #3249: execute insert into ... error:Unexpected exception. This is a bug. Please consider filing an issue.

jazzyMin opened a new issue #3249:
URL: https://github.com/apache/hudi/issues/3249

**_Tips before filing an issue_**

- Have you gone through our [FAQs](https://cwiki.apache.org/confluence/display/HUDI/FAQ)?
- Join the mailing list to engage in conversations and get faster support at dev-subscr...@hudi.apache.org.
- If you have triaged this as a bug, then file an [issue](https://issues.apache.org/jira/projects/HUDI/issues) directly.

**Describe the problem you faced**

A clear and concise description of the problem.

**To Reproduce**

Steps to reproduce the behavior:

1.
2.
3.
4.

**Expected behavior**

A clear and concise description of what you expected to happen.

**Environment Description**

* Hudi version : 0.9.0
* Flink version : 1.12.2
* Hive version : 2.3.6
* Hadoop version : 3.0
* Storage (HDFS/S3/GCS..) :
* Running on Docker? (yes/no) : no

**Additional context**

Add any other context about the problem here.

**Stacktrace** ```Add the stacktrace of the error.```

`Exception in thread "main" org.apache.flink.table.client.SqlClientException: Unexpected exception. This is a bug. Please consider filing an issue. at org.apache.flink.table.client.SqlClient.main(SqlClient.java:215) Caused by: java.lang.RuntimeException: Error running SQL job. 
at org.apache.flink.table.client.gateway.local.LocalExecutor.lambda$executeUpdateInternal$4(LocalExecutor.java:514) at org.apache.flink.table.client.gateway.local.ExecutionContext.wrapClassLoader(ExecutionContext.java:256) at org.apache.flink.table.client.gateway.local.LocalExecutor.executeUpdateInternal(LocalExecutor.java:507) at org.apache.flink.table.client.gateway.local.LocalExecutor.executeUpdate(LocalExecutor.java:428) at org.apache.flink.table.client.cli.CliClient.callInsert(CliClient.java:690) at org.apache.flink.table.client.cli.CliClient.callCommand(CliClient.java:327) at java.util.Optional.ifPresent(Optional.java:159) at org.apache.flink.table.client.cli.CliClient.open(CliClient.java:214) at org.apache.flink.table.client.SqlClient.openCli(SqlClient.java:144) at org.apache.flink.table.client.SqlClient.start(SqlClient.java:115) at org.apache.flink.table.client.SqlClient.main(SqlClient.java:201) Caused by: java.util.concurrent.ExecutionException: org.apache.flink.runtime.client.JobSubmissionException: Failed to submit JobGraph. at java.util.concurrent.CompletableFuture.reportGet(CompletableFuture.java:357) at java.util.concurrent.CompletableFuture.get(CompletableFuture.java:1895) at org.apache.flink.table.client.gateway.local.LocalExecutor.lambda$executeUpdateInternal$4(LocalExecutor.java:511) ... 10 more Caused by: org.apache.flink.runtime.client.JobSubmissionException: Failed to submit JobGraph. 
at org.apache.flink.client.program.rest.RestClusterClient.lambda$submitJob$7(RestClusterClient.java:400) at java.util.concurrent.CompletableFuture.uniExceptionally(CompletableFuture.java:870) at java.util.concurrent.CompletableFuture$UniExceptionally.tryFire(CompletableFuture.java:852) at java.util.concurrent.CompletableFuture.postComplete(CompletableFuture.java:474) at java.util.concurrent.CompletableFuture.completeExceptionally(CompletableFuture.java:1977) at org.apache.flink.runtime.concurrent.FutureUtils.lambda$retryOperationWithDelay$9(FutureUtils.java:390) at java.util.concurrent.CompletableFuture.uniWhenComplete(CompletableFuture.java:760) at java.util.concurrent.CompletableFuture$UniWhenComplete.tryFire(CompletableFuture.java:736) at java.util.concurrent.CompletableFuture.postComplete(CompletableFuture.java:474) at java.util.concurrent.CompletableFuture.completeExceptionally(CompletableFuture.java:1977) at org.apache.flink.runtime.rest.RestClient.lambda$submitRequest$1(RestClient.java:430) at org.apache.flink.shaded.netty4.io.netty.util.concurrent.DefaultPromise.notifyListener0(DefaultPromise.java:577) at org.apache.flink.shaded.netty4.io.netty.util.concurrent.DefaultPromise.notifyListeners0(DefaultPromise.java:570) at org.apache.flink.shaded.netty4.io.netty.util.concurrent.DefaultPromise.notifyListenersNow(DefaultPromise.java:549) at org.apache.flink.shaded.netty4.io.netty.util.concurrent.DefaultPromise.notifyListeners(DefaultPromise.java:490) at org.apache.flink.shaded.netty4.io.netty.util.concurrent.DefaultPromise.setValue0(DefaultPromise.java:615) at org.apache.flink.shaded.netty4.io.netty.util.concurrent.DefaultPromise.setFailure0(DefaultPromise.java:608) at org.apache.flink.shaded.netty4.io.netty.util.concurrent.DefaultPromise.tryFailure(DefaultPromise.java:117) at org.apache.fli

[jira] [Commented] (HUDI-2141) Integration flink metric in flink stream

[ https://issues.apache.org/jira/browse/HUDI-2141?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17378018#comment-17378018 ]

ASF GitHub Bot commented on HUDI-2141:
--------------------------------------

hudi-bot edited a comment on pull request #3235:
URL: https://github.com/apache/hudi/pull/3235#issuecomment-875512974

## CI report:

* fbf4eb53e9c96782796612e8bbdaed4aa22d8caf Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=788)
* 12f9fc5e0391242aaddc4e0c09ada8ee3c745a47 Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=819)

[GitHub] [hudi] hudi-bot edited a comment on pull request #3235: [HUDI-2141] Integration flink metric in flink stream

hudi-bot edited a comment on pull request #3235:
URL: https://github.com/apache/hudi/pull/3235#issuecomment-875512974

## CI report:

* fbf4eb53e9c96782796612e8bbdaed4aa22d8caf Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=788)
* 12f9fc5e0391242aaddc4e0c09ada8ee3c745a47 Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=819)

[jira] [Commented] (HUDI-2141) Integration flink metric in flink stream

[ https://issues.apache.org/jira/browse/HUDI-2141?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17378016#comment-17378016 ]

ASF GitHub Bot commented on HUDI-2141:
--------------------------------------

hudi-bot edited a comment on pull request #3235:
URL: https://github.com/apache/hudi/pull/3235#issuecomment-875512974

## CI report:

* fbf4eb53e9c96782796612e8bbdaed4aa22d8caf Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=788)
* 12f9fc5e0391242aaddc4e0c09ada8ee3c745a47 UNKNOWN

[GitHub] [hudi] hudi-bot edited a comment on pull request #3235: [HUDI-2141] Integration flink metric in flink stream

hudi-bot edited a comment on pull request #3235:
URL: https://github.com/apache/hudi/pull/3235#issuecomment-875512974

## CI report:

* fbf4eb53e9c96782796612e8bbdaed4aa22d8caf Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=788)
* 12f9fc5e0391242aaddc4e0c09ada8ee3c745a47 UNKNOWN

[jira] [Commented] (HUDI-2107) Support Read Log Only MOR Table For Spark

[ https://issues.apache.org/jira/browse/HUDI-2107?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17378011#comment-17378011 ] ASF GitHub Bot commented on HUDI-2107: -- codecov-commenter edited a comment on pull request #3193: URL: https://github.com/apache/hudi/pull/3193#issuecomment-871511739 # [Codecov](https://codecov.io/gh/apache/hudi/pull/3193?src=pr&el=h1&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation) Report > Merging [#3193](https://codecov.io/gh/apache/hudi/pull/3193?src=pr&el=desc&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation) (4cb7cc5) into [master](https://codecov.io/gh/apache/hudi/commit/c50c24908a4eb1ed769efb8878e6075d3e162d55?el=desc&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation) (c50c249) will **decrease** coverage by `30.01%`. > The diff coverage is `n/a`.

```diff
@@              Coverage Diff              @@
##             master    #3193       +/-   ##
=============================================
- Coverage     47.62%   17.60%   -30.02%
+ Complexity     5504      883     -4621
=============================================
  Files           930      381      -549
  Lines         41275    15108    -26167
  Branches       4138     1305     -2833
=============================================
- Hits          19657     2660    -16997
+ Misses        19870    12283     -7587
+ Partials       1748      165     -1583
```

| Flag | Coverage Δ | |
|---|---|---|
| hudicli | `?` | |
| hudiclient | `21.05% <ø> (-13.54%)` | :arrow_down: |
| hudicommon | `?` | |
| hudiflink | `?` | |
| hudihadoopmr | `?` | |
| hudisparkdatasource | `?` | |
| hudisync | `5.37% <ø> (-49.15%)` | :arrow_down: |
| huditimelineservice | `?` | |
| hudiutilities | `9.25% <ø> (-49.32%)` | :arrow_down: |

Flags with carried forward coverage won't be shown.
[Click here](https://docs.codecov.io/docs/carryforward-flags?utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation#carryforward-flags-in-the-pull-request-comment) to find out more.

| [Impacted Files](https://codecov.io/gh/apache/hudi/pull/3193?src=pr&el=tree&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation) | Coverage Δ | |
|---|---|---|
| [...va/org/apache/hudi/utilities/IdentitySplitter.java](https://codecov.io/gh/apache/hudi/pull/3193/diff?src=pr&el=tree&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation#diff-aHVkaS11dGlsaXRpZXMvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2h1ZGkvdXRpbGl0aWVzL0lkZW50aXR5U3BsaXR0ZXIuamF2YQ==) | `0.00% <0.00%> (-100.00%)` | :arrow_down: |
| [...va/org/apache/hudi/utilities/schema/SchemaSet.java](https://codecov.io/gh/apache/hudi/pull/3193/diff?src=pr&el=tree&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation#diff-aHVkaS11dGlsaXRpZXMvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2h1ZGkvdXRpbGl0aWVzL3NjaGVtYS9TY2hlbWFTZXQuamF2YQ==) | `0.00% <0.00%> (-100.00%)` | :arrow_down: |
| [...a/org/apache/hudi/utilities/sources/RowSource.java](https://codecov.io/gh/apache/hudi/pull/3193/diff?src=pr&el=tree&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation#diff-aHVkaS11dGlsaXRpZXMvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2h1ZGkvdXRpbGl0aWVzL3NvdXJjZXMvUm93U291cmNlLmphdmE=) | `0.00% <0.00%> (-100.00%)` | :arrow_down: |
| [.../org/apache/hudi/utilities/sources/AvroSource.java](https://codecov.io/gh/apache/hudi/pull/3193/diff?src=pr&el=tree&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation#diff-aHVkaS11dGlsaXRpZXMvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2h1ZGkvdXRpbGl0aWVzL3NvdXJjZXMvQXZyb1NvdXJjZS5qYXZh) | `0.00% <0.00%> (-100.00%)` | :arrow_down: |
| [.../org/apache/hudi/utilities/sources/JsonSource.java](https://codecov.io/gh/apache/hudi/pull/3193/diff?src=pr&el=tree&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign
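The percentages in the coverage diff above can be cross-checked by hand. The following is a minimal Java sketch, assuming Codecov computes coverage as hits / lines (with lines = hits + misses + partials, so partial lines count as uncovered) and rounds down to two decimal places. Under that assumption it reproduces `47.62%` and `17.60%`, and taking the drop from the unrounded values explains why the headline says `30.01%` while the table shows `-30.02%`. The class and method names are hypothetical.

```java
import java.math.BigDecimal;
import java.math.RoundingMode;

/** Hypothetical helper that re-derives the Codecov figures quoted in the report. */
public class CoverageMath {

  /** Coverage in percent, rounded down to two decimals (assumed Codecov behaviour). */
  static BigDecimal coverage(long hits, long misses, long partials) {
    long lines = hits + misses + partials; // partial lines count as not covered
    return BigDecimal.valueOf(hits * 100L)
        .divide(BigDecimal.valueOf(lines), 2, RoundingMode.DOWN);
  }

  /** Drop between two coverage values, computed from unrounded percentages, then rounded down. */
  static BigDecimal headlineDrop(long baseHits, long baseLines, long headHits, long headLines) {
    BigDecimal base = BigDecimal.valueOf(baseHits * 100L)
        .divide(BigDecimal.valueOf(baseLines), 10, RoundingMode.HALF_EVEN);
    BigDecimal head = BigDecimal.valueOf(headHits * 100L)
        .divide(BigDecimal.valueOf(headLines), 10, RoundingMode.HALF_EVEN);
    return base.subtract(head).setScale(2, RoundingMode.DOWN);
  }

  public static void main(String[] args) {
    System.out.println(coverage(19657, 19870, 1748));            // master: 47.62
    System.out.println(coverage(2660, 12283, 165));              // #3193: 17.60
    System.out.println(headlineDrop(19657, 41275, 2660, 15108)); // 30.01
  }
}
```

The table's `-30.02%` is simply 47.62 minus 17.60, whereas the unrounded difference is about 30.018, which rounds down to the `30.01%` in the summary line.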

[GitHub] [hudi] codecov-commenter edited a comment on pull request #3193: [HUDI-2107] Support Read Log Only MOR Table For Spark

codecov-commenter edited a comment on pull request #3193: URL: https://github.com/apache/hudi/pull/3193#issuecomment-871511739 # [Codecov](https://codecov.io/gh/apache/hudi/pull/3193?src=pr&el=h1&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation) Report > Merging [#3193](https://codecov.io/gh/apache/hudi/pull/3193?src=pr&el=desc&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation) (4cb7cc5) into [master](https://codecov.io/gh/apache/hudi/commit/c50c24908a4eb1ed769efb8878e6075d3e162d55?el=desc&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation) (c50c249) will **decrease** coverage by `30.01%`. > The diff coverage is `n/a`.

```diff
@@              Coverage Diff              @@
##             master    #3193       +/-   ##
=============================================
- Coverage     47.62%   17.60%   -30.02%
+ Complexity     5504      883     -4621
=============================================
  Files           930      381      -549
  Lines         41275    15108    -26167
  Branches       4138     1305     -2833
=============================================
- Hits          19657     2660    -16997
+ Misses        19870    12283     -7587
+ Partials       1748      165     -1583
```

| Flag | Coverage Δ | |
|---|---|---|
| hudicli | `?` | |
| hudiclient | `21.05% <ø> (-13.54%)` | :arrow_down: |
| hudicommon | `?` | |
| hudiflink | `?` | |
| hudihadoopmr | `?` | |
| hudisparkdatasource | `?` | |
| hudisync | `5.37% <ø> (-49.15%)` | :arrow_down: |
| huditimelineservice | `?` | |
| hudiutilities | `9.25% <ø> (-49.32%)` | :arrow_down: |

Flags with carried forward coverage won't be shown. [Click here](https://docs.codecov.io/docs/carryforward-flags?utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation#carryforward-flags-in-the-pull-request-comment) to find out more.
| [Impacted Files](https://codecov.io/gh/apache/hudi/pull/3193?src=pr&el=tree&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation) | Coverage Δ | |
|---|---|---|
| [...va/org/apache/hudi/utilities/IdentitySplitter.java](https://codecov.io/gh/apache/hudi/pull/3193/diff?src=pr&el=tree&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation#diff-aHVkaS11dGlsaXRpZXMvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2h1ZGkvdXRpbGl0aWVzL0lkZW50aXR5U3BsaXR0ZXIuamF2YQ==) | `0.00% <0.00%> (-100.00%)` | :arrow_down: |
| [...va/org/apache/hudi/utilities/schema/SchemaSet.java](https://codecov.io/gh/apache/hudi/pull/3193/diff?src=pr&el=tree&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation#diff-aHVkaS11dGlsaXRpZXMvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2h1ZGkvdXRpbGl0aWVzL3NjaGVtYS9TY2hlbWFTZXQuamF2YQ==) | `0.00% <0.00%> (-100.00%)` | :arrow_down: |
| [...a/org/apache/hudi/utilities/sources/RowSource.java](https://codecov.io/gh/apache/hudi/pull/3193/diff?src=pr&el=tree&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation#diff-aHVkaS11dGlsaXRpZXMvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2h1ZGkvdXRpbGl0aWVzL3NvdXJjZXMvUm93U291cmNlLmphdmE=) | `0.00% <0.00%> (-100.00%)` | :arrow_down: |
| [.../org/apache/hudi/utilities/sources/AvroSource.java](https://codecov.io/gh/apache/hudi/pull/3193/diff?src=pr&el=tree&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation#diff-aHVkaS11dGlsaXRpZXMvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2h1ZGkvdXRpbGl0aWVzL3NvdXJjZXMvQXZyb1NvdXJjZS5qYXZh) | `0.00% <0.00%> (-100.00%)` | :arrow_down: |
| [.../org/apache/hudi/utilities/sources/JsonSource.java](https://codecov.io/gh/apache/hudi/pull/3193/diff?src=pr&el=tree&utm_medium=referral&utm_source=github&utm_content=comment&utm_campaign=pr+comments&utm_term=The+Apache+Software+Foundation#diff-aHVkaS11dGlsaXRpZXMvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2h1ZGkvdXRpbGl0aWVzL3NvdXJjZXMvSnNvblNvdXJjZS5qYXZh) | `0.00% <0.00%> (-100.00%)` | :arrow_down: |
| [...rg/apache/hudi/utilities/source

[jira] [Commented] (HUDI-1483) async clustering for deltastreamer

[ https://issues.apache.org/jira/browse/HUDI-1483?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17378009#comment-17378009 ] ASF GitHub Bot commented on HUDI-1483: -- hudi-bot edited a comment on pull request #3142: URL: https://github.com/apache/hudi/pull/3142#issuecomment-866996072 ## CI report: * 890e9822855fcd45c8387f83740975f43474cddc Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=814) Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=818) > async clustering for deltastreamer > -- > > Key: HUDI-1483 > URL: https://issues.apache.org/jira/browse/HUDI-1483 > Project: Apache Hudi > Issue Type: Sub-task >Reporter: liwei >Assignee: liwei >Priority: Blocker > Labels: pull-request-available > Fix For: 0.9.0

[GitHub] [hudi] hudi-bot edited a comment on pull request #3142: [HUDI-1483] Support async clustering for deltastreamer and Spark streaming

hudi-bot edited a comment on pull request #3142: URL: https://github.com/apache/hudi/pull/3142#issuecomment-866996072 ## CI report: * 890e9822855fcd45c8387f83740975f43474cddc Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=814) Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=818)

[jira] [Commented] (HUDI-1483) async clustering for deltastreamer

[ https://issues.apache.org/jira/browse/HUDI-1483?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17378008#comment-17378008 ] ASF GitHub Bot commented on HUDI-1483: -- codope commented on pull request #3142: URL: https://github.com/apache/hudi/pull/3142#issuecomment-877110964 @hudi-bot run azure > async clustering for deltastreamer > -- > > Key: HUDI-1483 > URL: https://issues.apache.org/jira/browse/HUDI-1483 > Project: Apache Hudi > Issue Type: Sub-task >Reporter: liwei >Assignee: liwei >Priority: Blocker > Labels: pull-request-available > Fix For: 0.9.0

[GitHub] [hudi] codope commented on pull request #3142: [HUDI-1483] Support async clustering for deltastreamer and Spark streaming

codope commented on pull request #3142: URL: https://github.com/apache/hudi/pull/3142#issuecomment-877110964 @hudi-bot run azure

[jira] [Commented] (HUDI-2147) Remove unused class AvroConvertor in hudi-flink

[ https://issues.apache.org/jira/browse/HUDI-2147?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17377991#comment-17377991 ] ASF GitHub Bot commented on HUDI-2147: -- wangxianghu commented on pull request #3243: URL: https://github.com/apache/hudi/pull/3243#issuecomment-877088985 hi @yanghua please take a look when free. > Remove unused class AvroConvertor in hudi-flink > --- > > Key: HUDI-2147 > URL: https://issues.apache.org/jira/browse/HUDI-2147 > Project: Apache Hudi > Issue Type: Improvement >Reporter: Xianghu Wang >Assignee: Xianghu Wang >Priority: Major > Labels: pull-request-available

[GitHub] [hudi] wangxianghu commented on pull request #3243: [HUDI-2147] Remove unused class AvroConvertor in hudi-flink

wangxianghu commented on pull request #3243: URL: https://github.com/apache/hudi/pull/3243#issuecomment-877088985 hi @yanghua please take a look when free.

[jira] [Commented] (HUDI-2143) Tweak the default compaction target IO to 500GB when flink async compaction is off

[ https://issues.apache.org/jira/browse/HUDI-2143?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17377988#comment-17377988 ]
ASF GitHub Bot commented on HUDI-2143:
--
danny0405 commented on a change in pull request #3238:
URL: https://github.com/apache/hudi/pull/3238#discussion_r666847718
##
File path:
hudi-flink/src/main/java/org/apache/hudi/table/HoodieTableFactory.java
##
@@ -199,6 +199,12 @@ private static void setupCleaningOptions(Configuration conf) {
conf.setInteger(FlinkOptions.ARCHIVE_MIN_COMMITS, commitsToRetain + 10);
conf.setInteger(FlinkOptions.ARCHIVE_MAX_COMMITS, commitsToRetain + 20);
}
+if (conf.getBoolean(FlinkOptions.COMPACTION_SCHEDULE_ENABLED)
Review comment:
There is already one: the default value is 5GB for online compaction, and this patch fixes the offline compaction with a default value of 500GB.
> Tweak the default compaction target IO to 500GB when flink async compaction is off
> --
>
> Key: HUDI-2143
> URL: https://issues.apache.org/jira/browse/HUDI-2143
> Project: Apache Hudi
> Issue Type: Improvement
> Components: Flink Integration
> Reporter: Danny Chen
> Assignee: Danny Chen
> Priority: Major
> Labels: pull-request-available
> Fix For: 0.9.0

[GitHub] [hudi] danny0405 commented on a change in pull request #3238: [HUDI-2143] Tweak the default compaction target IO to 500GB when flin…

danny0405 commented on a change in pull request #3238:
URL: https://github.com/apache/hudi/pull/3238#discussion_r666847718
##
File path:
hudi-flink/src/main/java/org/apache/hudi/table/HoodieTableFactory.java
##
@@ -199,6 +199,12 @@ private static void setupCleaningOptions(Configuration conf) {
conf.setInteger(FlinkOptions.ARCHIVE_MIN_COMMITS, commitsToRetain + 10);
conf.setInteger(FlinkOptions.ARCHIVE_MAX_COMMITS, commitsToRetain + 20);
}
+if (conf.getBoolean(FlinkOptions.COMPACTION_SCHEDULE_ENABLED)
Review comment:
There is already one: the default value is 5GB for online compaction, and this patch fixes the offline compaction with a default value of 500GB.
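The HUDI-2143 review thread debates whether a 500GB default for offline compaction should be applied silently when async compaction is off. Below is a minimal Java sketch of one way to express that rule, assuming the override applies only when the user has not set the target IO explicitly, which would address the concern that users may not know the value gets rewritten. The option keys, the MB unit and the defaults of the schedule/async flags are hypothetical placeholders, not Hudi's actual `FlinkOptions`; only the 5GB and 500GB values come from the review comment.

```java
import java.util.HashMap;
import java.util.Map;

/** Hypothetical sketch of the HUDI-2143 defaulting rule; not Hudi's real code. */
public class CompactionTargetIoDefaults {
  // Placeholder option keys (assumptions, not confirmed FlinkOptions keys).
  static final String SCHEDULE_ENABLED = "compaction.schedule.enabled";
  static final String ASYNC_ENABLED = "compaction.async.enabled";
  static final String TARGET_IO_MB = "compaction.target_io";

  // Defaults quoted in the review thread, expressed in MB (the unit is an assumption).
  static final long ONLINE_TARGET_IO_MB = 5L * 1024;    // 5GB
  static final long OFFLINE_TARGET_IO_MB = 500L * 1024; // 500GB

  /** Returns the target IO (MB) a compaction plan should use for the given options. */
  static long effectiveTargetIoMb(Map<String, String> conf) {
    // An explicit user value always wins, so nothing is rewritten behind the user's back.
    if (conf.containsKey(TARGET_IO_MB)) {
      return Long.parseLong(conf.get(TARGET_IO_MB));
    }
    // Assumed defaults: both flags are on unless the user turns them off.
    boolean schedule = Boolean.parseBoolean(conf.getOrDefault(SCHEDULE_ENABLED, "true"));
    boolean async = Boolean.parseBoolean(conf.getOrDefault(ASYNC_ENABLED, "true"));
    // Plans are scheduled by the writer but executed by a separate offline job.
    return (schedule && !async) ? OFFLINE_TARGET_IO_MB : ONLINE_TARGET_IO_MB;
  }

  public static void main(String[] args) {
    Map<String, String> conf = new HashMap<>();
    System.out.println(effectiveTargetIoMb(conf)); // 5120
    conf.put(ASYNC_ENABLED, "false");
    System.out.println(effectiveTargetIoMb(conf)); // 512000
    conf.put(TARGET_IO_MB, "1024");
    System.out.println(effectiveTargetIoMb(conf)); // 1024
  }
}
```

Resolving the effective value in one function, instead of overwriting the option in place, keeps the user's own setting visible; the alternative raised in the thread, a separate option with its own 500GB default, would make the offline default equally discoverable.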

[GitHub] [hudi] hudi-bot edited a comment on pull request #3248: [MINOR] Fix some wrong assert reasons

hudi-bot edited a comment on pull request #3248: URL: https://github.com/apache/hudi/pull/3248#issuecomment-877033209 ## CI report: * 64ad40af7f34ebdc4f3fac3331fbad8523051591 Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=817)

[jira] [Commented] (HUDI-1468) incremental read support with clustering

[ https://issues.apache.org/jira/browse/HUDI-1468?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17377966#comment-17377966 ] liwei commented on HUDI-1468: - [~vinoth] Hello, if [https://github.com/apache/hudi/pull/3139/files] has landed, can this issue be closed? > incremental read support with clustering > > > Key: HUDI-1468 > URL: https://issues.apache.org/jira/browse/HUDI-1468 > Project: Apache Hudi > Issue Type: Sub-task > Components: Incremental Pull >Affects Versions: 0.9.0 >Reporter: satish >Assignee: liwei >Priority: Blocker > Labels: pull-request-available > Fix For: 0.9.0 > > > As part of clustering, metadata such as hoodie_commit_time changes for records that are clustered. This is specific to the SparkBulkInsertBasedRunClusteringStrategy implementation. Figure out a way to carry commit_time over from the original record to support incremental queries. > Also, incremental queries don't work with the 'replacecommit' used by clustering (HUDI-1264). Change incremental queries to work with replacecommits created by clustering.
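The HUDI-1468 description says clustering changes `hoodie_commit_time` on the records it rewrites, which breaks incremental pulls. Below is a simplified Java model of why, assuming an incremental pull selects records whose commit time falls in `(begin, end]` and that instant times are fixed-width `yyyyMMddHHmmss` strings. The `Row` type, keys and timestamps are made up; real Hudi incremental queries are driven by the commit timeline rather than by a plain in-memory filter like this one.

```java
import java.util.List;
import java.util.stream.Collectors;

/** Simplified, hypothetical model of an incremental pull over commit times. */
public class IncrementalPullSketch {

  /** A record key plus the commit time stamped on it (stand-in for hoodie_commit_time). */
  public static final class Row {
    final String key;
    final String commitTime;

    public Row(String key, String commitTime) {
      this.key = key;
      this.commitTime = commitTime;
    }
  }

  /** Keys whose commit time lies in (begin, end]; fixed-width times compare as strings. */
  public static List<String> incrementalPull(List<Row> rows, String begin, String end) {
    return rows.stream()
        .filter(r -> r.commitTime.compareTo(begin) > 0 && r.commitTime.compareTo(end) <= 0)
        .map(r -> r.key)
        .collect(Collectors.toList());
  }

  public static void main(String[] args) {
    // "k1" was first written at 10:00; clustering rewrote its file at 12:00.
    List<Row> restamped = List.of(new Row("k1", "20210709120000"));
    List<Row> preserved = List.of(new Row("k1", "20210709100000"));
    // A consumer that already pulled everything up to 11:00 asks for newer changes.
    System.out.println(incrementalPull(restamped, "20210709110000", "20210709130000")); // [k1]
    System.out.println(incrementalPull(preserved, "20210709110000", "20210709130000")); // []
  }
}
```

In this model the unchanged record shows up in the second pull only because clustering restamped its commit time, which is why the issue proposes carrying the original commit time through clustering.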

[GitHub] [hudi] hudi-bot edited a comment on pull request #3248: [MINOR] Fix some wrong assert reasons

hudi-bot edited a comment on pull request #3248: URL: https://github.com/apache/hudi/pull/3248#issuecomment-877033209 ## CI report: * 64ad40af7f34ebdc4f3fac3331fbad8523051591 Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=817)

[GitHub] [hudi] hudi-bot commented on pull request #3248: [MINOR] Fix some wrong assert reasons

hudi-bot commented on pull request #3248: URL: https://github.com/apache/hudi/pull/3248#issuecomment-877033209 ## CI report: * 64ad40af7f34ebdc4f3fac3331fbad8523051591 UNKNOWN

[GitHub] [hudi] yanghua opened a new pull request #3248: [MINOR] Fix some wrong assert reasons

yanghua opened a new pull request #3248: URL: https://github.com/apache/hudi/pull/3248 ## What is the purpose of the pull request *Fix some wrong assert reasons* ## Brief change log - *Fix some wrong assert reasons* ## Verify this pull request This pull request is a trivial rework / code cleanup without any test coverage. ## Committer checklist - [ ] Has a corresponding JIRA in PR title & commit - [ ] Commit message is descriptive of the change - [ ] CI is green - [ ] Necessary doc changes done or have another open PR - [ ] For large changes, please consider breaking it into sub-tasks under an umbrella JIRA.

[jira] [Commented] (HUDI-2143) Tweak the default compaction target IO to 500GB when flink async compaction is off

[ https://issues.apache.org/jira/browse/HUDI-2143?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17377947#comment-17377947 ]
ASF GitHub Bot commented on HUDI-2143:
--
yanghua commented on a change in pull request #3238:
URL: https://github.com/apache/hudi/pull/3238#discussion_r666787064
##
File path:
hudi-flink/src/main/java/org/apache/hudi/table/HoodieTableFactory.java
##
@@ -199,6 +199,12 @@ private static void setupCleaningOptions(Configuration conf) {
conf.setInteger(FlinkOptions.ARCHIVE_MIN_COMMITS, commitsToRetain + 10);
conf.setInteger(FlinkOptions.ARCHIVE_MAX_COMMITS, commitsToRetain + 20);
}
+if (conf.getBoolean(FlinkOptions.COMPACTION_SCHEDULE_ENABLED)
Review comment:
The rewritten value is a constant. Why not provide an `SYNC_COMPACTION_TARGET_IO` option and set it to `500GB`?
> Tweak the default compaction target IO to 500GB when flink async compaction is off
> --
>
> Key: HUDI-2143
> URL: https://issues.apache.org/jira/browse/HUDI-2143
> Project: Apache Hudi
> Issue Type: Improvement
> Components: Flink Integration
> Reporter: Danny Chen
> Assignee: Danny Chen
> Priority: Major
> Labels: pull-request-available
> Fix For: 0.9.0

[GitHub] [hudi] yanghua commented on a change in pull request #3238: [HUDI-2143] Tweak the default compaction target IO to 500GB when flin…

yanghua commented on a change in pull request #3238:
URL: https://github.com/apache/hudi/pull/3238#discussion_r666787064
##
File path:
hudi-flink/src/main/java/org/apache/hudi/table/HoodieTableFactory.java
##
@@ -199,6 +199,12 @@ private static void setupCleaningOptions(Configuration conf) {
conf.setInteger(FlinkOptions.ARCHIVE_MIN_COMMITS, commitsToRetain + 10);
conf.setInteger(FlinkOptions.ARCHIVE_MAX_COMMITS, commitsToRetain + 20);
}
+if (conf.getBoolean(FlinkOptions.COMPACTION_SCHEDULE_ENABLED)
Review comment:
The rewritten value is a constant. Why not provide an `SYNC_COMPACTION_TARGET_IO` option and set it to `500GB`?

[jira] [Commented] (HUDI-2107) Support Read Log Only MOR Table For Spark

[ https://issues.apache.org/jira/browse/HUDI-2107?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17377940#comment-17377940 ] ASF GitHub Bot commented on HUDI-2107: -- hudi-bot edited a comment on pull request #3193: URL: https://github.com/apache/hudi/pull/3193#issuecomment-871363205 ## CI report: * 864dff7a0cc4389905067abee96046d5f72b004f UNKNOWN * f8bac2f4e7133eb3f9cbe4c15a20da49e30dd6eb UNKNOWN * 2a1ce1b4b826344ec024bd51b8af5ee5543a0986 UNKNOWN * ce51b2d836504936b23368492c071fdfe4d94594 UNKNOWN * a619116de9d59eff189e05f593b917cc0b762f25 UNKNOWN * 4cb7cc5952b6aa3cd81cd3367c6c974bba104d00 Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=815) > Support Read Log Only MOR Table For Spark > - > > Key: HUDI-2107 > URL: https://issues.apache.org/jira/browse/HUDI-2107 > Project: Apache Hudi > Issue Type: Bug > Components: Spark Integration >Reporter: pengzhiwei >Assignee: pengzhiwei >Priority: Major > Labels: pull-request-available > Fix For: 0.9.0 > > > Currently, Spark cannot read a log-only MOR table (one generated by an index that supports indexing log files, such as InMemoryIndex, HbaseIndex and FlinkIndex).

[GitHub] [hudi] hudi-bot edited a comment on pull request #3193: [HUDI-2107] Support Read Log Only MOR Table For Spark

hudi-bot edited a comment on pull request #3193: URL: https://github.com/apache/hudi/pull/3193#issuecomment-871363205 ## CI report: * 864dff7a0cc4389905067abee96046d5f72b004f UNKNOWN * f8bac2f4e7133eb3f9cbe4c15a20da49e30dd6eb UNKNOWN * 2a1ce1b4b826344ec024bd51b8af5ee5543a0986 UNKNOWN * ce51b2d836504936b23368492c071fdfe4d94594 UNKNOWN * a619116de9d59eff189e05f593b917cc0b762f25 UNKNOWN * 4cb7cc5952b6aa3cd81cd3367c6c974bba104d00 Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=815)

[jira] [Created] (HUDI-2154) Add index key field into HoodieKey

XiaoyuGeng created HUDI-2154: Summary: Add index key field into HoodieKey Key: HUDI-2154 URL: https://issues.apache.org/jira/browse/HUDI-2154 Project: Apache Hudi Issue Type: New Feature Components: Index Reporter: XiaoyuGeng Assignee: XiaoyuGeng

[jira] [Commented] (HUDI-2143) Tweak the default compaction target IO to 500GB when flink async compaction is off

[ https://issues.apache.org/jira/browse/HUDI-2143?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17377930#comment-17377930 ]
ASF GitHub Bot commented on HUDI-2143:
--
danny0405 commented on a change in pull request #3238:
URL: https://github.com/apache/hudi/pull/3238#discussion_r666775074
##
File path:
hudi-flink/src/main/java/org/apache/hudi/table/HoodieTableFactory.java
##
@@ -199,6 +199,12 @@ private static void setupCleaningOptions(Configuration conf) {
conf.setInteger(FlinkOptions.ARCHIVE_MIN_COMMITS, commitsToRetain + 10);
conf.setInteger(FlinkOptions.ARCHIVE_MAX_COMMITS, commitsToRetain + 20);
}
+if (conf.getBoolean(FlinkOptions.COMPACTION_SCHEDULE_ENABLED)
Review comment:
Why does the user need to know that? They can set the target IO themselves if they want, BTW.
> Tweak the default compaction target IO to 500GB when flink async compaction is off
> --
>
> Key: HUDI-2143
> URL: https://issues.apache.org/jira/browse/HUDI-2143
> Project: Apache Hudi
> Issue Type: Improvement
> Components: Flink Integration
> Reporter: Danny Chen
> Assignee: Danny Chen
> Priority: Major
> Labels: pull-request-available
> Fix For: 0.9.0

[GitHub] [hudi] danny0405 commented on a change in pull request #3238: [HUDI-2143] Tweak the default compaction target IO to 500GB when flin…

danny0405 commented on a change in pull request #3238:
URL: https://github.com/apache/hudi/pull/3238#discussion_r666775074
##
File path:
hudi-flink/src/main/java/org/apache/hudi/table/HoodieTableFactory.java
##
@@ -199,6 +199,12 @@ private static void setupCleaningOptions(Configuration conf) {
conf.setInteger(FlinkOptions.ARCHIVE_MIN_COMMITS, commitsToRetain + 10);
conf.setInteger(FlinkOptions.ARCHIVE_MAX_COMMITS, commitsToRetain + 20);
}
+if (conf.getBoolean(FlinkOptions.COMPACTION_SCHEDULE_ENABLED)
Review comment:
Why does the user need to know that? They can set the target IO themselves if they want, BTW.

[jira] [Commented] (HUDI-2143) Tweak the default compaction target IO to 500GB when flink async compaction is off

[

https://issues.apache.org/jira/browse/HUDI-2143?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17377926#comment-17377926

]

ASF GitHub Bot commented on HUDI-2143:

--

yanghua commented on a change in pull request #3238:

URL: https://github.com/apache/hudi/pull/3238#discussion_r666768715

##

File path:

hudi-flink/src/main/java/org/apache/hudi/table/HoodieTableFactory.java

##

@@ -199,6 +199,12 @@ private static void setupCleaningOptions(Configuration

conf) {

conf.setInteger(FlinkOptions.ARCHIVE_MIN_COMMITS, commitsToRetain + 10);

conf.setInteger(FlinkOptions.ARCHIVE_MAX_COMMITS, commitsToRetain + 20);

}

+if (conf.getBoolean(FlinkOptions.COMPACTION_SCHEDULE_ENABLED)

Review comment:

Yes, but the user may not know that it gets rewritten under a condition like this, right?


> Tweak the default compaction target IO to 500GB when flink async compaction is off
> --
>
> Key: HUDI-2143
> URL: https://issues.apache.org/jira/browse/HUDI-2143
> Project: Apache Hudi
> Issue Type: Improvement
> Components: Flink Integration
> Reporter: Danny Chen
> Assignee: Danny Chen
> Priority: Major
> Labels: pull-request-available
> Fix For: 0.9.0

--

This message was sent by Atlassian Jira (v8.3.4#803005)
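The exchange above is about when the writer silently rewrites the compaction target IO. Below is a minimal sketch of that behavior. It makes several assumptions: a plain `Map<String, String>` stands in for Flink's `Configuration`, and the key names (`compaction.schedule.enabled`, `compaction.async.enabled`, `compaction.target_io` in MB) are illustrative rather than copied from `FlinkOptions`. The 500 GB default is filled in only when three conditions hold: compaction plans are scheduled, async compaction is off, and the user has not set the target IO. The method returns a flag so the caller can log the override, which is one way to address the concern that users may not know the value was changed.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical sketch: key names are illustrative stand-ins for FlinkOptions entries.
final class CompactionDefaults {
  static final String SCHEDULE_ENABLED = "compaction.schedule.enabled"; // assumed key
  static final String ASYNC_ENABLED = "compaction.async.enabled";       // assumed key
  static final String TARGET_IO_MB = "compaction.target_io";            // assumed key, value in MB

  // 500 GB expressed in MB.
  static final long TWEAKED_TARGET_IO_MB = 500L * 1024L;

  /**
   * Applies the 500 GB default when compaction plans are scheduled by the writer,
   * async compaction is off, and the user has not set the target IO explicitly.
   * Absent flags are treated as false. Returns true if the value was filled in.
   */
  static boolean tweakTargetIo(Map<String, String> conf) {
    boolean scheduleEnabled = Boolean.parseBoolean(conf.get(SCHEDULE_ENABLED));
    boolean asyncEnabled = Boolean.parseBoolean(conf.get(ASYNC_ENABLED));
    if (scheduleEnabled && !asyncEnabled && !conf.containsKey(TARGET_IO_MB)) {
      conf.put(TARGET_IO_MB, Long.toString(TWEAKED_TARGET_IO_MB));
      return true;
    }
    return false;
  }

  public static void main(String[] args) {
    Map<String, String> conf = new HashMap<>();
    conf.put(SCHEDULE_ENABLED, "true");
    conf.put(ASYNC_ENABLED, "false");
    if (tweakTargetIo(conf)) {
      // Log the override so users can see that the default was rewritten.
      System.out.println("compaction target IO defaulted to " + conf.get(TARGET_IO_MB) + " MB");
    }
  }
}
```

Running `main` prints `compaction target IO defaulted to 512000 MB`. If the map already contains a target IO, it is left untouched.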

[GitHub] [hudi] yanghua commented on a change in pull request #3238: [HUDI-2143] Tweak the default compaction target IO to 500GB when flin…

yanghua commented on a change in pull request #3238:

URL: https://github.com/apache/hudi/pull/3238#discussion_r666768715

##

File path: hudi-flink/src/main/java/org/apache/hudi/table/HoodieTableFactory.java

##

@@ -199,6 +199,12 @@ private static void setupCleaningOptions(Configuration conf) {

conf.setInteger(FlinkOptions.ARCHIVE_MIN_COMMITS, commitsToRetain + 10);

conf.setInteger(FlinkOptions.ARCHIVE_MAX_COMMITS, commitsToRetain + 20);

}

+if (conf.getBoolean(FlinkOptions.COMPACTION_SCHEDULE_ENABLED)

Review comment:

Yes, but the user may not know that it gets rewritten under a condition like this, right?


[jira] [Commented] (HUDI-2045) Support Read Hoodie As DataSource Table For Flink And DeltaStreamer

[ https://issues.apache.org/jira/browse/HUDI-2045?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17377918#comment-17377918 ]

ASF GitHub Bot commented on HUDI-2045:
--
yanghua commented on pull request #3120:
URL: https://github.com/apache/hudi/pull/3120#issuecomment-877001823

> Hi @yanghua, I have written a util to convert the parquet schema to spark schema json without the spark dependencies. Please take a look again~

OK, sounds good. Will review it soon.

> Support Read Hoodie As DataSource Table For Flink And DeltaStreamer
> ---
>
> Key: HUDI-2045
> URL: https://issues.apache.org/jira/browse/HUDI-2045
> Project: Apache Hudi
> Issue Type: Improvement
> Components: Hive Integration
> Reporter: pengzhiwei
> Assignee: pengzhiwei
> Priority: Major
> Labels: pull-request-available
> Fix For: 0.9.0
>
> Currently we only support reading hoodie table as datasource table for spark
> since [https://github.com/apache/hudi/pull/2283]
> In order to support this feature for flink and DeltaStreamer, we need to sync
> the spark table properties needed by datasource table to the meta store in
> HiveSyncTool.
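For context on "the spark table properties needed by datasource table": Spark marks a Hive metastore table as a datasource table through table properties. `spark.sql.sources.provider` names the format. The schema JSON is split into `spark.sql.sources.schema.part.N` chunks, with the chunk count stored in `spark.sql.sources.schema.numParts`. Each chunk holds up to 4000 characters by default, per `spark.sql.sources.schemaStringLengthThreshold`. The helper below is a hypothetical sketch of building those properties. It is not HiveSyncTool's code. The provider name `hudi` and the chunk length are parameters you would confirm against the Spark version in use.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical helper, not HiveSyncTool code. Property names follow the convention
// Spark uses when it stores datasource tables in a Hive metastore.
final class SparkTableProps {
  /** Default of Spark's spark.sql.sources.schemaStringLengthThreshold. */
  static final int DEFAULT_SCHEMA_PART_LENGTH = 4000;

  static Map<String, String> build(String provider, String schemaJson,
                                   List<String> partitionColumns, int partLength) {
    if (partLength <= 0) {
      throw new IllegalArgumentException("partLength must be positive");
    }
    Map<String, String> props = new LinkedHashMap<>();
    props.put("spark.sql.sources.provider", provider);
    // Split the schema JSON so no single property value exceeds partLength characters.
    int numParts = (schemaJson.length() + partLength - 1) / partLength;
    props.put("spark.sql.sources.schema.numParts", Integer.toString(numParts));
    for (int i = 0; i < numParts; i++) {
      int start = i * partLength;
      int end = Math.min(schemaJson.length(), start + partLength);
      props.put("spark.sql.sources.schema.part." + i, schemaJson.substring(start, end));
    }
    // Partition columns are recorded by name, in order.
    if (!partitionColumns.isEmpty()) {
      props.put("spark.sql.sources.schema.numPartCols", Integer.toString(partitionColumns.size()));
      for (int i = 0; i < partitionColumns.size(); i++) {
        props.put("spark.sql.sources.schema.partCol." + i, partitionColumns.get(i));
      }
    }
    return props;
  }
}
```

In practice, the `schemaJson` argument would be the Spark schema JSON derived from the table's Parquet schema. Splitting it keeps each metastore property value within the length limit.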

[GitHub] [hudi] yanghua commented on pull request #3120: [HUDI-2045] Support Read Hoodie As DataSource Table For Flink And DeltaStreamer

yanghua commented on pull request #3120:
URL: https://github.com/apache/hudi/pull/3120#issuecomment-877001823

> Hi @yanghua, I have written a util to convert the parquet schema to spark schema json without the spark dependencies. Please take a look again~

OK, sounds good. Will review it soon.
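To show what "convert the parquet schema to spark schema json without the spark dependencies" can look like, here is a minimal sketch. It is not the util from PR #3120. It makes these assumptions: only flat schemas, a hypothetical `ParquetType` enum in place of parquet-mr's types, and UTF8-annotated `BINARY` as the only logical type. The output follows Spark's `StructType` JSON shape: `{"type":"struct","fields":[...]}`, with `name`, `type`, `nullable` and `metadata` per field.

```java
import java.util.List;

// Hypothetical sketch: a flat Parquet schema (no nested groups, no logical types
// beyond UTF8 strings) rendered as Spark's StructType JSON, without Spark or parquet-mr.
final class ParquetToSparkJson {
  /** Simplified stand-in for a Parquet primitive type; BINARY_UTF8 means BINARY annotated as a string. */
  enum ParquetType { BOOLEAN, INT32, INT64, FLOAT, DOUBLE, BINARY_UTF8, BINARY }

  /** A flat Parquet column: name, physical type, and REQUIRED (true) vs OPTIONAL (false). */
  static final class Column {
    final String name;
    final ParquetType type;
    final boolean required;

    Column(String name, ParquetType type, boolean required) {
      this.name = name;
      this.type = type;
      this.required = required;
    }
  }

  /** Maps a Parquet primitive type to the type name Spark uses in its schema JSON. */
  static String sparkTypeName(ParquetType type) {
    switch (type) {
      case BOOLEAN: return "boolean";
      case INT32: return "integer";
      case INT64: return "long";
      case FLOAT: return "float";
      case DOUBLE: return "double";
      case BINARY_UTF8: return "string";
      case BINARY: return "binary";
      default: throw new IllegalArgumentException("unsupported type: " + type);
    }
  }

  /** Minimal JSON string quoting: escapes only backslashes and double quotes. */
  static String quote(String s) {
    return "\"" + s.replace("\\", "\\\\").replace("\"", "\\\"") + "\"";
  }

  /** Builds the struct JSON; OPTIONAL columns become nullable fields. */
  static String toSparkSchemaJson(List<Column> columns) {
    StringBuilder sb = new StringBuilder("{\"type\":\"struct\",\"fields\":[");
    for (int i = 0; i < columns.size(); i++) {
      Column c = columns.get(i);
      if (i > 0) {
        sb.append(',');
      }
      sb.append("{\"name\":").append(quote(c.name))
          .append(",\"type\":").append(quote(sparkTypeName(c.type)))
          .append(",\"nullable\":").append(!c.required)
          .append(",\"metadata\":{}}");
    }
    return sb.append("]}").toString();
  }
}
```

A real converter would also need nested groups, repeated fields and the other logical-type annotations (decimals, dates, timestamps). Those are left out here to keep the mapping readable.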

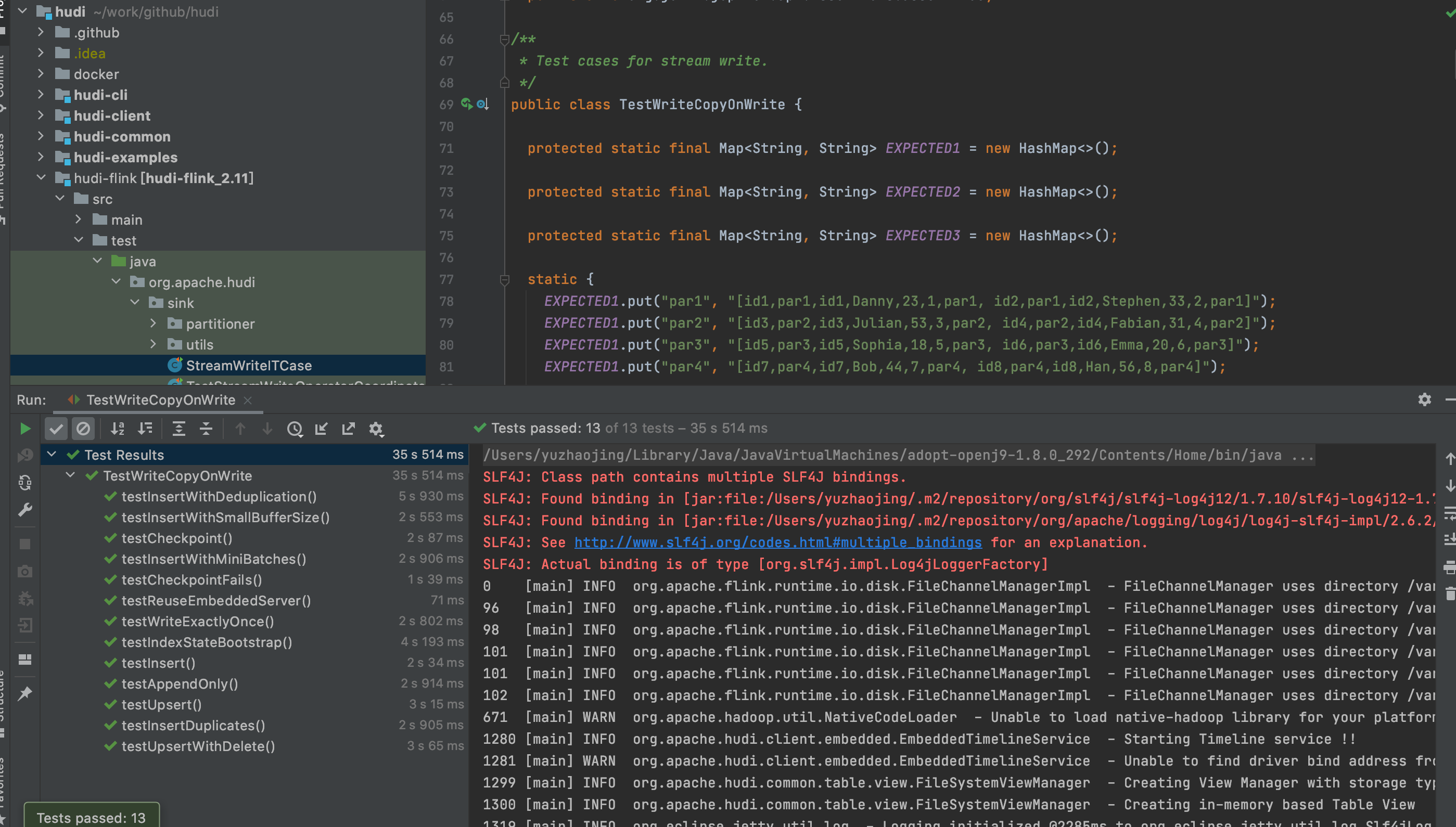

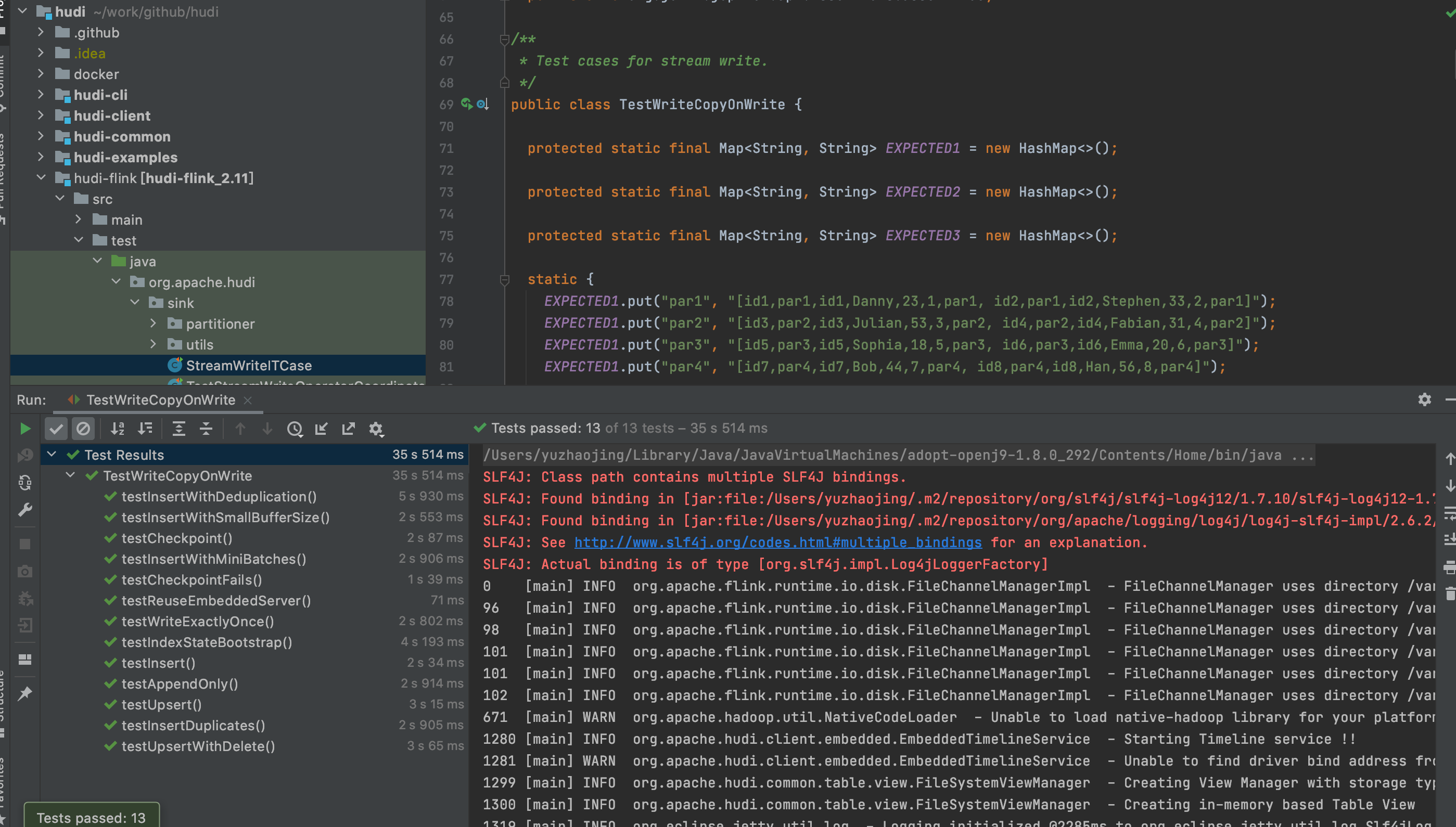

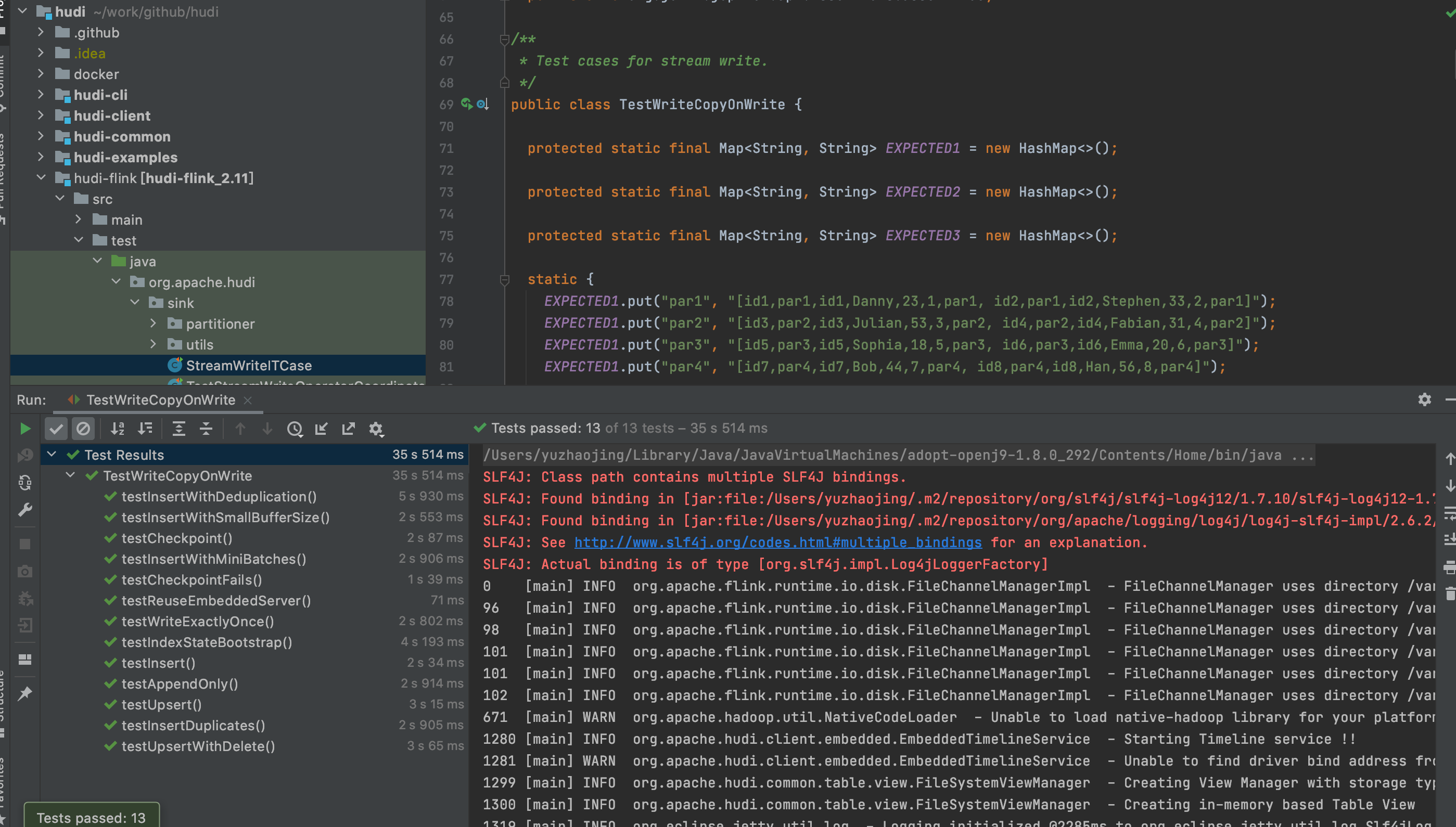

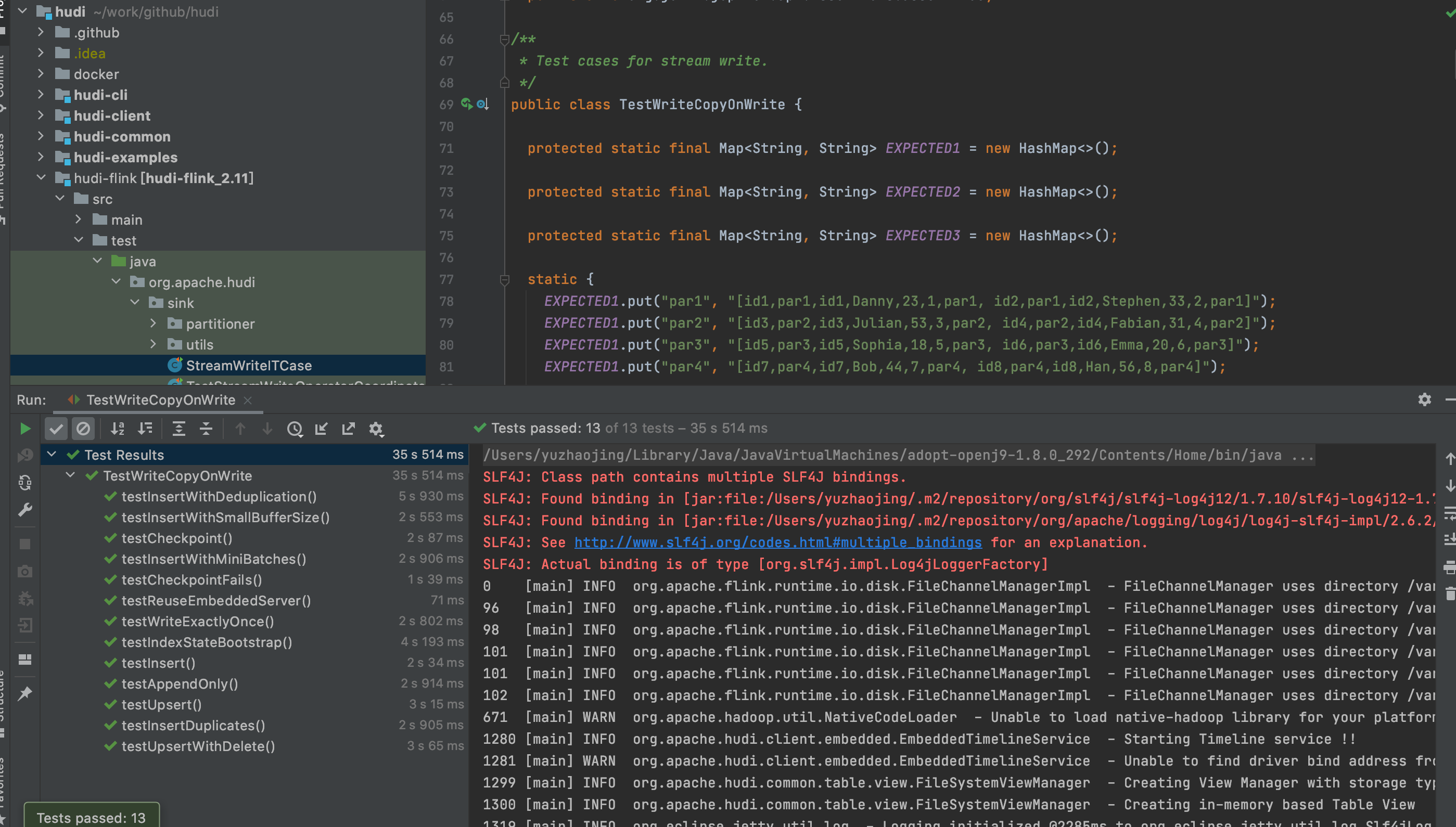

[jira] [Closed] (HUDI-2087) Support Append only in Flink stream

[ https://issues.apache.org/jira/browse/HUDI-2087?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

vinoyang closed HUDI-2087.
--
Fix Version/s: 0.9.0
Resolution: Done

371526789d663dee85041eb31c27c52c81ef87ef

> Support Append only in Flink stream
> ---
>
> Key: HUDI-2087
> URL: https://issues.apache.org/jira/browse/HUDI-2087
> Project: Apache Hudi
> Issue Type: Improvement
> Components: Flink Integration
> Reporter: yuzhaojing
> Assignee: yuzhaojing
> Priority: Major
> Labels: pull-request-available
> Fix For: 0.9.0
>
> Attachments: image-2021-07-08-22-04-30-039.png,
> image-2021-07-08-22-04-40-018.png
>
>
> It is necessary to support append mode in Flink streaming, as the data lake
> should be able to write log-type data to Parquet with high performance,
> without merging.
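The issue description contrasts append mode with the usual merge path. As a hedged illustration, this sketch uses plain Java collections and a hypothetical `Rec` type, not Hudi's write path. An upsert looks up each record key and keeps only the latest value. Append-only passes every record through without a lookup, so records with duplicate keys are retained. Skipping the lookup and merge is what the description credits for the higher write performance on log-type data.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical illustration of upsert vs append-only semantics; not Hudi code.
final class AppendVsUpsert {
  /** A record with a key and a payload. */
  static final class Rec {
    final String key;
    final String value;

    Rec(String key, String value) {
      this.key = key;
      this.value = value;
    }

    @Override
    public String toString() {
      return key + "=" + value;
    }
  }

  /** Upsert: index records by key and merge, so a later record replaces an earlier one. */
  static List<Rec> upsert(List<Rec> existing, List<Rec> incoming) {
    Map<String, Rec> byKey = new LinkedHashMap<>();
    for (Rec r : existing) {
      byKey.put(r.key, r);
    }
    for (Rec r : incoming) {
      byKey.put(r.key, r); // latest wins; the key keeps its first-seen position
    }
    return new ArrayList<>(byKey.values());
  }

  /** Append only: no key lookup and no merge; every incoming record is kept as-is. */
  static List<Rec> appendOnly(List<Rec> existing, List<Rec> incoming) {
    List<Rec> out = new ArrayList<>(existing);
    out.addAll(incoming);
    return out;
  }

  public static void main(String[] args) {
    List<Rec> existing = Arrays.asList(new Rec("k1", "a"));
    List<Rec> incoming = Arrays.asList(new Rec("k1", "b"), new Rec("k2", "c"));
    System.out.println(upsert(existing, incoming));     // [k1=b, k2=c]
    System.out.println(appendOnly(existing, incoming)); // [k1=a, k1=b, k2=c]
  }
}
```

The trade-off is that append-only output can contain several records with the same key. That is acceptable for log-type data, where each event is distinct.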

[jira] [Commented] (HUDI-2087) Support Append only in Flink stream

[ https://issues.apache.org/jira/browse/HUDI-2087?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17377914#comment-17377914 ]

ASF GitHub Bot commented on HUDI-2087:
--
yanghua merged pull request #3174:
URL: https://github.com/apache/hudi/pull/3174

> Support Append only in Flink stream
> ---
>
> Key: HUDI-2087
> URL: https://issues.apache.org/jira/browse/HUDI-2087
> Project: Apache Hudi
> Issue Type: Improvement
> Components: Flink Integration
> Reporter: yuzhaojing
> Assignee: yuzhaojing
> Priority: Major
> Labels: pull-request-available
> Attachments: image-2021-07-08-22-04-30-039.png,
> image-2021-07-08-22-04-40-018.png
>
>
> It is necessary to support append mode in Flink streaming, as the data lake
> should be able to write log-type data to Parquet with high performance,
> without merging.

[jira] [Commented] (HUDI-2087) Support Append only in Flink stream

[ https://issues.apache.org/jira/browse/HUDI-2087?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17377913#comment-17377913 ]

ASF GitHub Bot commented on HUDI-2087:
--
yanghua commented on pull request #3174:
URL: https://github.com/apache/hudi/pull/3174#issuecomment-876999303

The failed test cases ran successfully in the contributor's local environment, so merging it now.

> Support Append only in Flink stream
> ---
>
> Key: HUDI-2087
> URL: https://issues.apache.org/jira/browse/HUDI-2087
> Project: Apache Hudi
> Issue Type: Improvement
> Components: Flink Integration
> Reporter: yuzhaojing
> Assignee: yuzhaojing
> Priority: Major
> Labels: pull-request-available
> Attachments: image-2021-07-08-22-04-30-039.png,
> image-2021-07-08-22-04-40-018.png
>
>
> It is necessary to support append mode in Flink streaming, as the data lake
> should be able to write log-type data to Parquet with high performance,
> without merging.

[hudi] branch master updated: [HUDI-2087] Support Append only in Flink stream (#3174)

This is an automated email from the ASF dual-hosted git repository.

vinoyang pushed a commit to branch master

in repository https://gitbox.apache.org/repos/asf/hudi.git

The following commit(s) were added to refs/heads/master by this push:

new 3715267 [HUDI-2087] Support Append only in Flink stream (#3174)

3715267 is described below

commit 371526789d663dee85041eb31c27c52c81ef87ef

Author: yuzhaojing <32435329+yuzhaoj...@users.noreply.github.com>

AuthorDate: Fri Jul 9 16:06:32 2021 +0800

[HUDI-2087] Support Append only in Flink stream (#3174)

Co-authored-by: 喻兆靖

---

.../hudi/table/action/commit/BucketType.java | 2 +-
.../apache/hudi/client/HoodieFlinkWriteClient.java | 10 ++-
.../java/org/apache/hudi/io/FlinkAppendHandle.java | 3 +-
.../java/org/apache/hudi/io/FlinkCreateHandle.java | 12
.../commit/BaseFlinkCommitActionExecutor.java | 5 +-
.../apache/hudi/configuration/FlinkOptions.java| 6 ++
.../sink/partitioner/BucketAssignFunction.java | 44 +---
.../hudi/sink/partitioner/BucketAssigner.java | 10 ++-
.../apache/hudi/streamer/FlinkStreamerConfig.java | 11 ++-
.../org/apache/hudi/table/HoodieTableFactory.java | 6 ++
.../org/apache/hudi/sink/TestWriteCopyOnWrite.java | 79 ++
.../org/apache/hudi/sink/TestWriteMergeOnRead.java | 15
.../hudi/sink/TestWriteMergeOnReadWithCompact.java | 15 ++--
.../test/java/org/apache/hudi/utils/TestData.java | 61 +
14 files changed, 246 insertions(+), 33 deletions(-)

diff --git a/hudi-client/hudi-client-common/src/main/java/org/apache/hudi/table/action/commit/BucketType.java b/hudi-client/hudi-client-common/src/main/java/org/apache/hudi/table/action/commit/BucketType.java
index 70ee473..e1fd161 100644
--- a/hudi-client/hudi-client-common/src/main/java/org/apache/hudi/table/action/commit/BucketType.java
+++ b/hudi-client/hudi-client-common/src/main/java/org/apache/hudi/table/action/commit/BucketType.java
@@ -19,5 +19,5 @@
package org.apache.hudi.table.action.commit;

public enum BucketType {
- UPDATE, INSERT
+ UPDATE, INSERT, APPEND_ONLY
}

diff --git a/hudi-client/hudi-flink-client/src/main/java/org/apache/hudi/client/HoodieFlinkWriteClient.java b/hudi-client/hudi-flink-client/src/main/java/org/apache/hudi/client/HoodieFlinkWriteClient.java
index 05e4481..71ca1b6 100644
--- a/hudi-client/hudi-flink-client/src/main/java/org/apache/hudi/client/HoodieFlinkWriteClient.java
+++ b/hudi-client/hudi-flink-client/src/main/java/org/apache/hudi/client/HoodieFlinkWriteClient.java
@@ -56,6 +56,7 @@ import org.apache.hudi.table.HoodieTable;
import org.apache.hudi.table.HoodieTimelineArchiveLog;
import org.apache.hudi.table.MarkerFiles;
import org.apache.hudi.table.action.HoodieWriteMetadata;
+import org.apache.hudi.table.action.commit.BucketType;
import org.apache.hudi.table.action.compact.FlinkCompactHelpers;
import org.apache.hudi.table.upgrade.FlinkUpgradeDowngrade;
import org.apache.hudi.util.FlinkClientUtil;
@@ -408,6 +409,12 @@ public class HoodieFlinkWriteClient extends
final HoodieRecordLocation loc = record.getCurrentLocation();
final String fileID = loc.getFileId();
final String partitionPath = record.getPartitionPath();
+// append only mode always use FlinkCreateHandle
+if (loc.getInstantTime().equals(BucketType.APPEND_ONLY.name())) {
+ return new FlinkCreateHandle<>(config, instantTime, table, partitionPath,
+ fileID, table.getTaskContextSupplier());
+}
+
if (bucketToHandles.containsKey(fileID)) {
MiniBatchHandle lastHandle = (MiniBatchHandle) bucketToHandles.get(fileID);
if (lastHandle.shouldReplace()) {
@@ -424,7 +431,8 @@ public class HoodieFlinkWriteClient extends
if (isDelta) {
writeHandle = new FlinkAppendHandle<>(config, instantTime, table, partitionPath, fileID, recordItr,
table.getTaskContextSupplier());
-} else if (loc.getInstantTime().equals("I")) {
+} else if (loc.getInstantTime().equals(BucketType.INSERT.name()) || loc.getInstantTime().equals(BucketType.APPEND_ONLY.name())) {
+ // use the same handle for insert bucket
writeHandle = new FlinkCreateHandle<>(config, instantTime, table, partitionPath,
fileID, table.getTaskContextSupplier());
} else {

diff --git a/hudi-client/hudi-flink-client/src/main/java/org/apache/hudi/io/FlinkAppendHandle.java b/hudi-client/hudi-flink-client/src/main/java/org/apache/hudi/io/FlinkAppendHandle.java
index 987f335..41d0666 100644
--- a/hudi-client/hudi-flink-client/src/main/java/org/apache/hudi/io/FlinkAppendHandle.java
+++ b/hudi-client/hudi-flink-client/src/main/java/org/apache/hudi/io/FlinkAppendHandle.java
@@ -25,6 +25,7 @@ import org.apache.hudi.common.model.HoodieRecordPayload;
import org.apache.hudi.config.HoodieWriteConfig;
import org.apache.hudi.table.HoodieTable;
import org.apache.hudi.table.MarkerFiles;
+import org.apache.hudi.table.action.commit.BucketTy
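The `HoodieFlinkWriteClient` hunks in this commit pick a write handle based on the bucket type carried in the record location's instant-time field. Below is a hedged model of just that decision, with hypothetical names throughout. `HandleKind` stands in for the handle classes. The `bucketToHandles` reuse branch is omitted. The final `else` branch is cut off in the email, so its handle is left unspecified as `OTHER`. The local `BucketType` copies the enum values shown in the `BucketType.java` hunk.

```java
// Hypothetical model of the handle selection in this commit; the real code returns
// handle objects and also reuses cached handles via bucketToHandles, omitted here.
final class HandleRouting {
  /** Local copy of the enum values from BucketType.java after this commit. */
  enum BucketType { UPDATE, INSERT, APPEND_ONLY }

  /** Stand-ins for the handle the client would construct. */
  enum HandleKind {
    CREATE,     // FlinkCreateHandle: writes a new base file
    APPEND_LOG, // FlinkAppendHandle: used when isDelta is true
    OTHER       // the final else branch is truncated in the email; left unspecified
  }

  /** locInstantTime carries the bucket type name, as loc.getInstantTime() does in the diff. */
  static HandleKind choose(String locInstantTime, boolean isDelta) {
    // Append-only mode always uses a create handle, checked before any other branch.
    if (locInstantTime.equals(BucketType.APPEND_ONLY.name())) {
      return HandleKind.CREATE;
    }
    if (isDelta) {
      return HandleKind.APPEND_LOG;
    }
    // Insert buckets also get a create handle.
    if (locInstantTime.equals(BucketType.INSERT.name())) {
      return HandleKind.CREATE;
    }
    return HandleKind.OTHER;
  }
}
```

Because the append-only check runs first, an append-only record gets a create handle even when `isDelta` is true. This matches the "append only mode always use FlinkCreateHandle" comment in the diff.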

[GitHub] [hudi] yanghua merged pull request #3174: [HUDI-2087] Support Append only in Flink stream

yanghua merged pull request #3174:
URL: https://github.com/apache/hudi/pull/3174

[GitHub] [hudi] yanghua commented on pull request #3174: [HUDI-2087] Support Append only in Flink stream