[GitHub] [hadoop] prasad-acit commented on pull request #4241: HDFS-16563. Namenode WebUI prints sensitive information on Token expiry

prasad-acit commented on PR #4241:
URL: https://github.com/apache/hadoop/pull/4241#issuecomment-1112921828

Thanks @jojochuang, I have added logs with Token Info. I will consider other error and improvement scenarios and analyze further.

--
This is an automated message from the Apache Git Service.
To respond to the message, please log on to GitHub and use the
URL above to go to the specific comment.

To unsubscribe, e-mail: common-issues-unsubscr...@hadoop.apache.org

For queries about this service, please contact Infrastructure at:
us...@infra.apache.org

-
To unsubscribe, e-mail: common-issues-unsubscr...@hadoop.apache.org
For additional commands, e-mail: common-issues-h...@hadoop.apache.org

[jira] [Work logged] (HADOOP-18079) Upgrade Netty to 4.1.74

[

https://issues.apache.org/jira/browse/HADOOP-18079?focusedWorklogId=763989&page=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-763989

]

ASF GitHub Bot logged work on HADOOP-18079:

---

Author: ASF GitHub Bot

Created on: 29/Apr/22 04:42

Start Date: 29/Apr/22 04:42

Worklog Time Spent: 10m

Work Description: brahmareddybattula commented on code in PR #3977:

URL: https://github.com/apache/hadoop/pull/3977#discussion_r861245824

##

hadoop-project/pom.xml:

##

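@@ -957,6 +957,72 @@
         <version>${netty4.version}</version>
       </dependency>

+      <dependency>
+        <groupId>io.netty</groupId>
+        <artifactId>netty-codec-socks</artifactId>
+        <version>${netty4.version}</version>
+      </dependency>
+
+      <dependency>
+        <groupId>io.netty</groupId>
+        <artifactId>netty-handler-proxy</artifactId>
+        <version>${netty4.version}</version>
+      </dependency>
+
+      <dependency>
+        <groupId>io.netty</groupId>
+        <artifactId>netty-resolver</artifactId>
+        <version>${netty4.version}</version>
+      </dependency>
+
+      <dependency>
+        <groupId>io.netty</groupId>
+        <artifactId>netty-handler</artifactId>
+        <version>${netty4.version}</version>
+      </dependency>
+
+      <dependency>
+        <groupId>io.netty</groupId>
+        <artifactId>netty-buffer</artifactId>
+        <version>${netty4.version}</version>
+      </dependency>
+
+      <dependency>
+        <groupId>io.netty</groupId>
+        <artifactId>netty-transport</artifactId>
+        <version>${netty4.version}</version>
+      </dependency>
+
+      <dependency>
+        <groupId>io.netty</groupId>
+        <artifactId>netty-common</artifactId>
+        <version>${netty4.version}</version>
+      </dependency>
+
+      <dependency>
+        <groupId>io.netty</groupId>
+        <artifactId>netty-transport-native-unix-common</artifactId>
+        <version>${netty4.version}</version>
+      </dependency>
+
+      <dependency>
+        <groupId>io.netty</groupId>
+        <artifactId>netty-transport</artifactId>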

Review Comment:

It looks like netty-transport is declared twice: here and at #992.
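The duplicate declaration flagged in this review comment is the kind of thing a small script can catch before review. The following is a minimal sketch, not part of the PR or of Hadoop's build tooling: the function name `duplicate_dependencies` and the `SAMPLE_POM` fixture are hypothetical, and the check only compares groupId and artifactId (it ignores classifier and type, which can legitimately differ between entries).

```python
import xml.etree.ElementTree as ET
from collections import Counter

# Namespace used by standard Maven POM files.
POM_NS = {"m": "http://maven.apache.org/POM/4.0.0"}


def duplicate_dependencies(pom_xml: str) -> list[tuple[str, str]]:
    """Return the (groupId, artifactId) pairs declared more than once."""
    root = ET.fromstring(pom_xml)
    counts = Counter()
    for dep in root.iterfind(".//m:dependency", POM_NS):
        group = dep.findtext("m:groupId", default="", namespaces=POM_NS)
        artifact = dep.findtext("m:artifactId", default="", namespaces=POM_NS)
        counts[(group.strip(), artifact.strip())] += 1
    return sorted(key for key, n in counts.items() if n > 1)


# Hypothetical, heavily shortened POM used only to exercise the function.
SAMPLE_POM = """<project xmlns="http://maven.apache.org/POM/4.0.0">
  <dependencyManagement><dependencies>
    <dependency><groupId>io.netty</groupId><artifactId>netty-transport</artifactId></dependency>
    <dependency><groupId>io.netty</groupId><artifactId>netty-common</artifactId></dependency>
    <dependency><groupId>io.netty</groupId><artifactId>netty-transport</artifactId></dependency>
  </dependencies></dependencyManagement>
</project>"""

print(duplicate_dependencies(SAMPLE_POM))
```

Run against a real pom.xml, the same function could be fed the file contents read from disk; entries that are intentionally repeated with different classifiers would show up as false positives under this simplification.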

##

hadoop-project/pom.xml:

##

@@ -141,7 +141,7 @@

2.8.9

3.2.4

3.10.6.Final

-4.1.68.Final

+4.1.75.Final

Review Comment:

Sorry to ask again, but it looks like 4.1.76 is now available as well. Maybe we can raise another Jira for that if it is not handled in this one.

Issue Time Tracking

---

Worklog Id: (was: 763989)

Time Spent: 2h 20m (was: 2h 10m)

> Upgrade Netty to 4.1.74

> ---

>

> Key: HADOOP-18079

> URL: https://issues.apache.org/jira/browse/HADOOP-18079

> Project: Hadoop Common

> Issue Type: Bug

>Reporter: Renukaprasad C

>Priority: Major

> Labels: pull-request-available

> Time Spent: 2h 20m

> Remaining Estimate: 0h

>

> h4. Netty version 4.1.71 fixes some CVEs. We can upgrade Netty to 4.1.71.Final or the latest stable version, 4.1.72.Final.

--

This message was sent by Atlassian Jira

(v8.20.7#820007)

-

To unsubscribe, e-mail: common-issues-unsubscr...@hadoop.apache.org

For additional commands, e-mail: common-issues-h...@hadoop.apache.org

[GitHub] [hadoop] brahmareddybattula commented on a diff in pull request #3977: HADOOP-18079. Upgrade Netty to 4.1.75.

brahmareddybattula commented on code in PR #3977:

URL: https://github.com/apache/hadoop/pull/3977#discussion_r861245824


[GitHub] [hadoop] hadoop-yetus commented on pull request #4247: MAPREDUCE-7369. Fixed MapReduce tasks timing out when spends more time on MultipleOutputs#close

hadoop-yetus commented on PR #4247:

URL: https://github.com/apache/hadoop/pull/4247#issuecomment-1112842289

:confetti_ball: **+1 overall**

| Vote | Subsystem | Runtime | Logfile | Comment |
|:----:|----------:|--------:|:--------:|:-------:|
| +0 :ok: | reexec | 1m 1s | | Docker mode activated. |
|||| _ Prechecks _ |
| +1 :green_heart: | dupname | 0m 0s | | No case conflicting files found. |
| +0 :ok: | codespell | 0m 0s | | codespell was not available. |
| +1 :green_heart: | @author | 0m 0s | | The patch does not contain any @author tags. |
| +1 :green_heart: | test4tests | 0m 0s | | The patch appears to include 1 new or modified test files. |
|||| _ trunk Compile Tests _ |
| +0 :ok: | mvndep | 15m 6s | | Maven dependency ordering for branch |
| +1 :green_heart: | mvninstall | 24m 59s | | trunk passed |
| +1 :green_heart: | compile | 2m 50s | | trunk passed with JDK Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 |
| +1 :green_heart: | compile | 2m 32s | | trunk passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | checkstyle | 1m 26s | | trunk passed |
| +1 :green_heart: | mvnsite | 2m 1s | | trunk passed |
| +1 :green_heart: | javadoc | 1m 33s | | trunk passed with JDK Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 |
| +1 :green_heart: | javadoc | 1m 27s | | trunk passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | spotbugs | 3m 8s | | trunk passed |
| +1 :green_heart: | shadedclient | 20m 35s | | branch has no errors when building and testing our client artifacts. |
|||| _ Patch Compile Tests _ |
| +0 :ok: | mvndep | 0m 34s | | Maven dependency ordering for patch |
| +1 :green_heart: | mvninstall | 1m 20s | | the patch passed |
| +1 :green_heart: | compile | 2m 34s | | the patch passed with JDK Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 |
| +1 :green_heart: | javac | 2m 34s | | the patch passed |
| +1 :green_heart: | compile | 2m 16s | | the patch passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | javac | 2m 16s | | the patch passed |
| +1 :green_heart: | blanks | 0m 0s | | The patch has no blanks issues. |
| -0 :warning: | checkstyle | 1m 5s | [/results-checkstyle-hadoop-mapreduce-project_hadoop-mapreduce-client.txt](https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4247/1/artifact/out/results-checkstyle-hadoop-mapreduce-project_hadoop-mapreduce-client.txt) | hadoop-mapreduce-project/hadoop-mapreduce-client: The patch generated 2 new + 478 unchanged - 0 fixed = 480 total (was 478) |
| +1 :green_heart: | mvnsite | 1m 26s | | the patch passed |
| +1 :green_heart: | xml | 0m 1s | | The patch has no ill-formed XML file. |
| +1 :green_heart: | javadoc | 1m 1s | | the patch passed with JDK Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 |
| +1 :green_heart: | javadoc | 0m 59s | | the patch passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | spotbugs | 2m 47s | | the patch passed |
| +1 :green_heart: | shadedclient | 20m 16s | | patch has no errors when building and testing our client artifacts. |
|||| _ Other Tests _ |
| +1 :green_heart: | unit | 6m 39s | | hadoop-mapreduce-client-core in the patch passed. |
| +1 :green_heart: | unit | 8m 51s | | hadoop-mapreduce-client-app in the patch passed. |
| +1 :green_heart: | asflicense | 0m 52s | | The patch does not generate ASF License warnings. |
| | | 129m 16s | | |

| Subsystem | Report/Notes |
|----------:|:-------------|
| Docker | ClientAPI=1.41 ServerAPI=1.41 base: https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4247/1/artifact/out/Dockerfile |
| GITHUB PR | https://github.com/apache/hadoop/pull/4247 |
| Optional Tests | dupname asflicense compile javac javadoc mvninstall mvnsite unit shadedclient spotbugs checkstyle codespell xml |
| uname | Linux b5d123e9a357 4.15.0-65-generic #74-Ubuntu SMP Tue Sep 17 17:06:04 UTC 2019 x86_64 x86_64 x86_64 GNU/Linux |
| Build tool | maven |
| Personality | dev-support/bin/hadoop.sh |
| git revision | trunk / aa1e7fa48eb705be7746f86f02031a9548ec7130 |
| Default Java | Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| Multi-JDK versions | /usr/lib/jvm/java-11-openjdk-amd64:Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 /usr/lib/jvm/java-8-openjdk-amd64:Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| Test Results | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4247/1/testReport/ |
| Max. process+thread count | 1247 (vs. ulimit of 5500) |
| modules | C: hadoop-mapreduce-project/hadoop-mapredu

[GitHub] [hadoop] ashutoshcipher opened a new pull request, #4247: MAPREDUCE-7369. Fixed MapReduce tasks timing out when spends more time on MultipleOutputs#close

ashutoshcipher opened a new pull request, #4247:
URL: https://github.com/apache/hadoop/pull/4247

### Description of PR
Fixed MapReduce tasks timing out when spends more time on MultipleOutputs#close

* JIRA: MAPREDUCE-7369

[jira] [Assigned] (HADOOP-18069) CVE-2021-0341 in okhttp@2.7.5 detected in hdfs-client

[ https://issues.apache.org/jira/browse/HADOOP-18069?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Ashutosh Gupta reassigned HADOOP-18069:
---

Assignee: Ashutosh Gupta

> CVE-2021-0341 in okhttp@2.7.5 detected in hdfs-client
> ---
>
> Key: HADOOP-18069
> URL: https://issues.apache.org/jira/browse/HADOOP-18069
> Project: Hadoop Common
> Issue Type: Bug
> Components: hdfs-client
> Affects Versions: 3.3.1
> Reporter: Eugene Shinn (Truveta)
> Assignee: Ashutosh Gupta
> Priority: Major
> Labels: pull-request-available
> Time Spent: 3h 50m
> Remaining Estimate: 0h
>
> Our static vulnerability scanner (Fortify On Demand) detected [NVD - CVE-2021-0341 (nist.gov)|https://nvd.nist.gov/vuln/detail/CVE-2021-0341#VulnChangeHistorySection] in our application. We traced the vulnerability to a transitive dependency coming from hadoop-hdfs-client, which depends on okhttp@2.7.5 ([hadoop/pom.xml at trunk · apache/hadoop (github.com)|https://github.com/apache/hadoop/blob/trunk/hadoop-project/pom.xml#L137]). To resolve this issue, okhttp should be upgraded to 4.9.2+ (ref: [CVE-2021-0341 · Issue #6724 · square/okhttp (github.com)|https://github.com/square/okhttp/issues/6724]).
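The "4.9.2+" requirement in the issue description amounts to a minimum-version check on the resolved okhttp dependency. The following is a rough sketch of such a check, under the assumption that comparing the leading dotted numeric part is sufficient; the helper names `parse_version` and `at_least` are hypothetical, and this is not Maven's full version-ordering algorithm (qualifiers such as `.Final` or `-SNAPSHOT` are simply ignored).

```python
import re


def parse_version(version: str) -> tuple[int, ...]:
    """Extract the leading dotted numeric part, e.g. '4.1.75.Final' -> (4, 1, 75)."""
    match = re.match(r"\d+(?:\.\d+)*", version)
    if match is None:
        raise ValueError(f"no numeric version in {version!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def at_least(version: str, minimum: str) -> bool:
    """True if `version` is numerically greater than or equal to `minimum`."""
    return parse_version(version) >= parse_version(minimum)


# Versions taken from the messages in this thread.
print(at_least("2.7.5", "4.9.2"))   # the version the scanner flagged
print(at_least("4.9.3", "4.9.2"))   # the version proposed in PR #4229
```

Comparing integer tuples avoids the classic string-comparison mistake where "4.10.0" would sort before "4.9.2".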

[GitHub] [hadoop] hadoop-yetus commented on pull request #4245: HDFS-16564. Use uint32_t for hdfs_find

hadoop-yetus commented on PR #4245:

URL: https://github.com/apache/hadoop/pull/4245#issuecomment-1112767405

:broken_heart: **-1 overall**

| Vote | Subsystem | Runtime | Logfile | Comment |
|:----:|----------:|--------:|:--------:|:-------:|
| +0 :ok: | reexec | 12m 1s | | Docker mode activated. |
|||| _ Prechecks _ |
| +1 :green_heart: | dupname | 0m 0s | | No case conflicting files found. |
| +0 :ok: | codespell | 0m 0s | | codespell was not available. |
| +1 :green_heart: | @author | 0m 0s | | The patch does not contain any @author tags. |
| +1 :green_heart: | test4tests | 0m 0s | | The patch appears to include 1 new or modified test files. |
|||| _ trunk Compile Tests _ |
| +1 :green_heart: | mvninstall | 26m 3s | | trunk passed |
| +1 :green_heart: | compile | 3m 41s | | trunk passed |
| +1 :green_heart: | mvnsite | 0m 44s | | trunk passed |
| -1 :x: | shadedclient | 56m 29s | | branch has errors when building and testing our client artifacts. |
|||| _ Patch Compile Tests _ |
| +1 :green_heart: | mvninstall | 0m 24s | | the patch passed |
| +1 :green_heart: | compile | 3m 19s | | the patch passed |
| +1 :green_heart: | cc | 3m 19s | | the patch passed |
| +1 :green_heart: | golang | 3m 19s | | the patch passed |
| +1 :green_heart: | javac | 3m 19s | | the patch passed |
| +1 :green_heart: | blanks | 0m 0s | | The patch has no blanks issues. |
| +1 :green_heart: | mvnsite | 0m 27s | | the patch passed |
| -1 :x: | shadedclient | 26m 1s | | patch has errors when building and testing our client artifacts. |
|||| _ Other Tests _ |
| +1 :green_heart: | unit | 32m 37s | | hadoop-hdfs-native-client in the patch passed. |
| +1 :green_heart: | asflicense | 0m 46s | | The patch does not generate ASF License warnings. |
| | | 134m 29s | | |

| Subsystem | Report/Notes |
|----------:|:-------------|
| Docker | ClientAPI=1.41 ServerAPI=1.41 base: https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4245/1/artifact/out/Dockerfile |
| GITHUB PR | https://github.com/apache/hadoop/pull/4245 |
| Optional Tests | dupname asflicense compile cc mvnsite javac unit codespell golang |
| uname | Linux b65ac514df5a 4.15.0-156-generic #163-Ubuntu SMP Thu Aug 19 23:31:58 UTC 2021 x86_64 GNU/Linux |
| Build tool | maven |
| Personality | dev-support/bin/hadoop.sh |
| git revision | trunk / 2eef94c4ae6df5e3b45e5cb286d7914f338f94ae |
| Default Java | Debian-11.0.14+9-post-Debian-1deb10u1 |
| Test Results | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4245/1/testReport/ |
| Max. process+thread count | 440 (vs. ulimit of 5500) |
| modules | C: hadoop-hdfs-project/hadoop-hdfs-native-client U: hadoop-hdfs-project/hadoop-hdfs-native-client |
| Console output | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4245/1/console |
| versions | git=2.20.1 maven=3.6.0 |
| Powered by | Apache Yetus 0.14.0-SNAPSHOT https://yetus.apache.org |

This message was automatically generated.


[jira] [Work logged] (HADOOP-18069) CVE-2021-0341 in okhttp@2.7.5 detected in hdfs-client

[ https://issues.apache.org/jira/browse/HADOOP-18069?focusedWorklogId=763941&page=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-763941 ]

ASF GitHub Bot logged work on HADOOP-18069:
---

Author: ASF GitHub Bot
Created on: 29/Apr/22 00:11
Start Date: 29/Apr/22 00:11
Worklog Time Spent: 10m
Work Description: ashutoshcipher commented on code in PR #4229:
URL: https://github.com/apache/hadoop/pull/4229#discussion_r861393238

##
LICENSE-binary:
##
@@ -242,6 +242,7 @@
com.google.guava:listenablefuture:.0-empty-to-avoid-conflict-with-guava
com.microsoft.azure:azure-storage:7.0.0
com.nimbusds:nimbus-jose-jwt:9.8.1
com.squareup.okhttp:okhttp:2.7.5

Review Comment:
Done

Issue Time Tracking
---

Worklog Id: (was: 763941)
Time Spent: 3h 50m (was: 3h 40m)

> CVE-2021-0341 in okhttp@2.7.5 detected in hdfs-client
> ---
>
> Key: HADOOP-18069
> URL: https://issues.apache.org/jira/browse/HADOOP-18069
> Project: Hadoop Common
> Issue Type: Bug
> Components: hdfs-client
> Affects Versions: 3.3.1
> Reporter: Eugene Shinn (Truveta)
> Priority: Major
> Labels: pull-request-available
> Time Spent: 3h 50m
> Remaining Estimate: 0h
>
> Our static vulnerability scanner (Fortify On Demand) detected [NVD - CVE-2021-0341 (nist.gov)|https://nvd.nist.gov/vuln/detail/CVE-2021-0341#VulnChangeHistorySection] in our application. We traced the vulnerability to a transitive dependency coming from hadoop-hdfs-client, which depends on okhttp@2.7.5 ([hadoop/pom.xml at trunk · apache/hadoop (github.com)|https://github.com/apache/hadoop/blob/trunk/hadoop-project/pom.xml#L137]). To resolve this issue, okhttp should be upgraded to 4.9.2+ (ref: [CVE-2021-0341 · Issue #6724 · square/okhttp (github.com)|https://github.com/square/okhttp/issues/6724]).

[GitHub] [hadoop] ashutoshcipher commented on a diff in pull request #4229: HADOOP-18069. okhttp@2.7.5 to 4.9.3

ashutoshcipher commented on code in PR #4229:
URL: https://github.com/apache/hadoop/pull/4229#discussion_r861393238

##
LICENSE-binary:
##
@@ -242,6 +242,7 @@
com.google.guava:listenablefuture:.0-empty-to-avoid-conflict-with-guava
com.microsoft.azure:azure-storage:7.0.0
com.nimbusds:nimbus-jose-jwt:9.8.1
com.squareup.okhttp:okhttp:2.7.5

Review Comment:
Done

[GitHub] [hadoop] hadoop-yetus commented on pull request #4241: HDFS-16563. Namenode WebUI prints sensitive information on Token expiry

hadoop-yetus commented on PR #4241:

URL: https://github.com/apache/hadoop/pull/4241#issuecomment-1112741832

:broken_heart: **-1 overall**

| Vote | Subsystem | Runtime | Logfile | Comment |
|:----:|----------:|--------:|:--------:|:-------:|
| +0 :ok: | reexec | 0m 59s | | Docker mode activated. |
|||| _ Prechecks _ |
| +1 :green_heart: | dupname | 0m 0s | | No case conflicting files found. |
| +0 :ok: | codespell | 0m 1s | | codespell was not available. |
| +1 :green_heart: | @author | 0m 0s | | The patch does not contain any @author tags. |
| -1 :x: | test4tests | 0m 0s | | The patch doesn't appear to include any new or modified tests. Please justify why no new tests are needed for this patch. Also please list what manual steps were performed to verify this patch. |
|||| _ trunk Compile Tests _ |
| +1 :green_heart: | mvninstall | 40m 56s | | trunk passed |
| +1 :green_heart: | compile | 25m 15s | | trunk passed with JDK Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 |
| +1 :green_heart: | compile | 21m 47s | | trunk passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | checkstyle | 1m 32s | | trunk passed |
| +1 :green_heart: | mvnsite | 2m 0s | | trunk passed |
| -1 :x: | javadoc | 1m 38s | [/branch-javadoc-hadoop-common-project_hadoop-common-jdkUbuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04.txt](https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4241/2/artifact/out/branch-javadoc-hadoop-common-project_hadoop-common-jdkUbuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04.txt) | hadoop-common in trunk failed with JDK Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04. |
| +1 :green_heart: | javadoc | 2m 5s | | trunk passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | spotbugs | 3m 6s | | trunk passed |
| +1 :green_heart: | shadedclient | 25m 45s | | branch has no errors when building and testing our client artifacts. |
|||| _ Patch Compile Tests _ |
| +1 :green_heart: | mvninstall | 1m 4s | | the patch passed |
| +1 :green_heart: | compile | 24m 22s | | the patch passed with JDK Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 |
| +1 :green_heart: | javac | 24m 22s | | the patch passed |
| +1 :green_heart: | compile | 21m 53s | | the patch passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | javac | 21m 53s | | the patch passed |
| +1 :green_heart: | blanks | 0m 0s | | The patch has no blanks issues. |
| +1 :green_heart: | checkstyle | 1m 26s | | the patch passed |
| +1 :green_heart: | mvnsite | 1m 57s | | the patch passed |
| -1 :x: | javadoc | 1m 27s | [/patch-javadoc-hadoop-common-project_hadoop-common-jdkUbuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04.txt](https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4241/2/artifact/out/patch-javadoc-hadoop-common-project_hadoop-common-jdkUbuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04.txt) | hadoop-common in the patch failed with JDK Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04. |
| +1 :green_heart: | javadoc | 1m 59s | | the patch passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | spotbugs | 3m 1s | | the patch passed |
| +1 :green_heart: | shadedclient | 25m 42s | | patch has no errors when building and testing our client artifacts. |
|||| _ Other Tests _ |
| +1 :green_heart: | unit | 18m 7s | | hadoop-common in the patch passed. |
| +1 :green_heart: | asflicense | 1m 18s | | The patch does not generate ASF License warnings. |
| | | 227m 41s | | |

| Subsystem | Report/Notes |
|----------:|:-------------|
| Docker | ClientAPI=1.41 ServerAPI=1.41 base: https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4241/2/artifact/out/Dockerfile |
| GITHUB PR | https://github.com/apache/hadoop/pull/4241 |
| Optional Tests | dupname asflicense compile javac javadoc mvninstall mvnsite unit shadedclient spotbugs checkstyle codespell |
| uname | Linux 9fd1af415c69 4.15.0-175-generic #184-Ubuntu SMP Thu Mar 24 17:48:36 UTC 2022 x86_64 x86_64 x86_64 GNU/Linux |
| Build tool | maven |
| Personality | dev-support/bin/hadoop.sh |
| git revision | trunk / 9986c15091c1b084d611ccc5d6c5035e13a94f0f |
| Default Java | Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| Multi-JDK versions | /usr/lib/jvm/java-11-openjdk-amd64:Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 /usr/lib/jvm/java-8-openjdk-amd64:Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| Test Results | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4241/2/testReport/ |
| Max. process+thread count | 2396 (vs. ulimit of 5500) |
| modules | C: hadoop-common-project/hadoop-common

[jira] [Work logged] (HADOOP-18079) Upgrade Netty to 4.1.74

[ https://issues.apache.org/jira/browse/HADOOP-18079?focusedWorklogId=763917&page=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-763917 ]

ASF GitHub Bot logged work on HADOOP-18079:
---

Author: ASF GitHub Bot
Created on: 28/Apr/22 22:09
Start Date: 28/Apr/22 22:09
Worklog Time Spent: 10m
Work Description: jojochuang commented on PR #3977:
URL: https://github.com/apache/hadoop/pull/3977#issuecomment-1112699848

The test failures do not reproduce in my local tree. I'm triggering a rebuild to double check.

Issue Time Tracking
---

Worklog Id: (was: 763917)
Time Spent: 2h 10m (was: 2h)

> Upgrade Netty to 4.1.74
> ---
>
> Key: HADOOP-18079
> URL: https://issues.apache.org/jira/browse/HADOOP-18079
> Project: Hadoop Common
> Issue Type: Bug
> Reporter: Renukaprasad C
> Priority: Major
> Labels: pull-request-available
> Time Spent: 2h 10m
> Remaining Estimate: 0h
>
> h4. Netty version 4.1.71 fixes some CVEs. We can upgrade Netty to 4.1.71.Final or the latest stable version, 4.1.72.Final.

[GitHub] [hadoop] jojochuang commented on pull request #3977: HADOOP-18079. Upgrade Netty to 4.1.75.

jojochuang commented on PR #3977:
URL: https://github.com/apache/hadoop/pull/3977#issuecomment-1112699848

The test failures do not reproduce in my local tree. I'm triggering a rebuild to double check.

[GitHub] [hadoop] hadoop-yetus commented on pull request #4245: HDFS-16564. Use uint32_t for hdfs_find

hadoop-yetus commented on PR #4245:

URL: https://github.com/apache/hadoop/pull/4245#issuecomment-1112692180

:confetti_ball: **+1 overall**

| Vote | Subsystem | Runtime | Logfile | Comment |
|:----:|----------:|--------:|:--------:|:-------:|
| +0 :ok: | reexec | 21m 29s | | Docker mode activated. |
|||| _ Prechecks _ |
| +1 :green_heart: | dupname | 0m 1s | | No case conflicting files found. |
| +0 :ok: | codespell | 0m 0s | | codespell was not available. |
| +1 :green_heart: | @author | 0m 0s | | The patch does not contain any @author tags. |
| +1 :green_heart: | test4tests | 0m 0s | | The patch appears to include 1 new or modified test files. |
|||| _ trunk Compile Tests _ |
| +1 :green_heart: | mvninstall | 22m 5s | | trunk passed |
| +1 :green_heart: | compile | 4m 11s | | trunk passed |
| +1 :green_heart: | mvnsite | 1m 2s | | trunk passed |
| +1 :green_heart: | shadedclient | 46m 29s | | branch has no errors when building and testing our client artifacts. |
|||| _ Patch Compile Tests _ |
| +1 :green_heart: | mvninstall | 0m 32s | | the patch passed |
| +1 :green_heart: | compile | 3m 42s | | the patch passed |
| +1 :green_heart: | cc | 3m 42s | | the patch passed |
| +1 :green_heart: | golang | 3m 42s | | the patch passed |
| +1 :green_heart: | javac | 3m 43s | | the patch passed |
| +1 :green_heart: | blanks | 0m 0s | | The patch has no blanks issues. |
| +1 :green_heart: | mvnsite | 0m 35s | | the patch passed |
| +1 :green_heart: | shadedclient | 19m 1s | | patch has no errors when building and testing our client artifacts. |
|||| _ Other Tests _ |
| +1 :green_heart: | unit | 33m 38s | | hadoop-hdfs-native-client in the patch passed. |
| +1 :green_heart: | asflicense | 0m 59s | | The patch does not generate ASF License warnings. |
| | | 128m 53s | | |

| Subsystem | Report/Notes |
|----------:|:-------------|
| Docker | ClientAPI=1.41 ServerAPI=1.41 base: https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4245/1/artifact/out/Dockerfile |
| GITHUB PR | https://github.com/apache/hadoop/pull/4245 |
| Optional Tests | dupname asflicense compile cc mvnsite javac unit codespell golang |
| uname | Linux 475f325777dc 4.15.0-156-generic #163-Ubuntu SMP Thu Aug 19 23:31:58 UTC 2021 x86_64 x86_64 x86_64 GNU/Linux |
| Build tool | maven |
| Personality | dev-support/bin/hadoop.sh |
| git revision | trunk / 2eef94c4ae6df5e3b45e5cb286d7914f338f94ae |
| Default Java | Red Hat, Inc.-1.8.0_312-b07 |
| Test Results | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4245/1/testReport/ |
| Max. process+thread count | 549 (vs. ulimit of 5500) |
| modules | C: hadoop-hdfs-project/hadoop-hdfs-native-client U: hadoop-hdfs-project/hadoop-hdfs-native-client |
| Console output | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4245/1/console |
| versions | git=2.27.0 maven=3.6.3 |
| Powered by | Apache Yetus 0.14.0-SNAPSHOT https://yetus.apache.org |

This message was automatically generated.


[jira] [Work logged] (HADOOP-18214) Update BUILDING.txt

[ https://issues.apache.org/jira/browse/HADOOP-18214?focusedWorklogId=763897&page=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-763897 ]

ASF GitHub Bot logged work on HADOOP-18214:
---

Author: ASF GitHub Bot
Created on: 28/Apr/22 21:22
Start Date: 28/Apr/22 21:22
Worklog Time Spent: 10m
Work Description: ayushtkn commented on PR #3811:
URL: https://github.com/apache/hadoop/pull/3811#issuecomment-1112666224

@gvieri well, yes. This one was initially committed without a Jira since it wasn't changing the core code, but in general we tend to have a Jira for almost everything. That's how we track issues.
BTW, if you have a Jira account and the issue is assigned to your name, you will also get credit in the Release Notes when Hadoop does the release. Just one of the things, if that interests or motivates you :-)

Issue Time Tracking
---

Worklog Id: (was: 763897)
Time Spent: 40m (was: 0.5h)

> Update BUILDING.txt
> ---
>
> Key: HADOOP-18214
> URL: https://issues.apache.org/jira/browse/HADOOP-18214
> Project: Hadoop Common
> Issue Type: Improvement
> Components: build, documentation
> Affects Versions: 3.3.2
> Reporter: Steve Loughran
> Priority: Minor
> Labels: pull-request-available
> Fix For: 3.4.0, 3.3.3
>
> Time Spent: 40m
> Remaining Estimate: 0h
>
> update building.txt to match the docker build settings.
> this patch has already gone in, just without a jira in its name
> https://github.com/apache/hadoop/pull/3811

[GitHub] [hadoop] ayushtkn commented on pull request #3811: HADOOP-18214. Update BUILDING.txt

ayushtkn commented on PR #3811:
URL: https://github.com/apache/hadoop/pull/3811#issuecomment-1112666224

@gvieri well, yes. This one was initially committed without a Jira since it wasn't changing the core code, but in general we tend to have a Jira for almost everything. That's how we track issues.
BTW, if you have a Jira account and the issue is assigned to your name, you will also get credit in the Release Notes when Hadoop does the release. Just one of the things, if that interests or motivates you :-)

[jira] [Work logged] (HADOOP-18214) Update BUILDING.txt

[ https://issues.apache.org/jira/browse/HADOOP-18214?focusedWorklogId=763890&page=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-763890 ]

ASF GitHub Bot logged work on HADOOP-18214:
---

Author: ASF GitHub Bot
Created on: 28/Apr/22 21:05
Start Date: 28/Apr/22 21:05
Worklog Time Spent: 10m
Work Description: gvieri commented on PR #3811:
URL: https://github.com/apache/hadoop/pull/3811#issuecomment-1112653767

Is it necessary to have an ASF JIRA account?

Issue Time Tracking
---

Worklog Id: (was: 763890)
Time Spent: 0.5h (was: 20m)

> Update BUILDING.txt
> ---
>
> Key: HADOOP-18214
> URL: https://issues.apache.org/jira/browse/HADOOP-18214
> Project: Hadoop Common
> Issue Type: Improvement
> Components: build, documentation
> Affects Versions: 3.3.2
> Reporter: Steve Loughran
> Priority: Minor
> Labels: pull-request-available
> Fix For: 3.4.0, 3.3.3
>
> Time Spent: 0.5h
> Remaining Estimate: 0h
>
> update building.txt to match the docker build settings.
> this patch has already gone in, just without a jira in its name
> https://github.com/apache/hadoop/pull/3811

[GitHub] [hadoop] gvieri commented on pull request #3811: HADOOP-18214. Update BUILDING.txt

gvieri commented on PR #3811:

URL: https://github.com/apache/hadoop/pull/3811#issuecomment-1112653767

Is it necessary to have an ASF JIRA account?

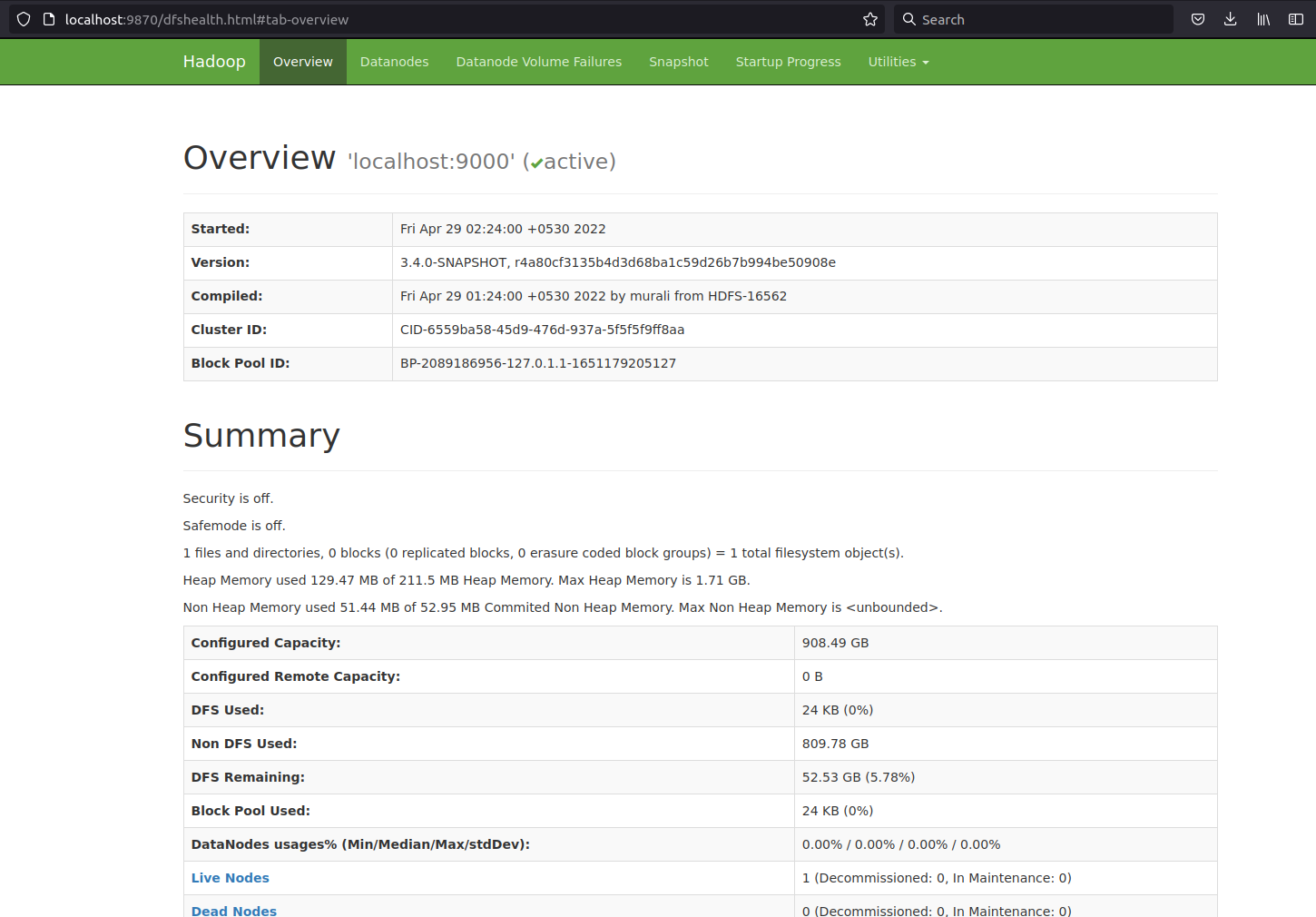

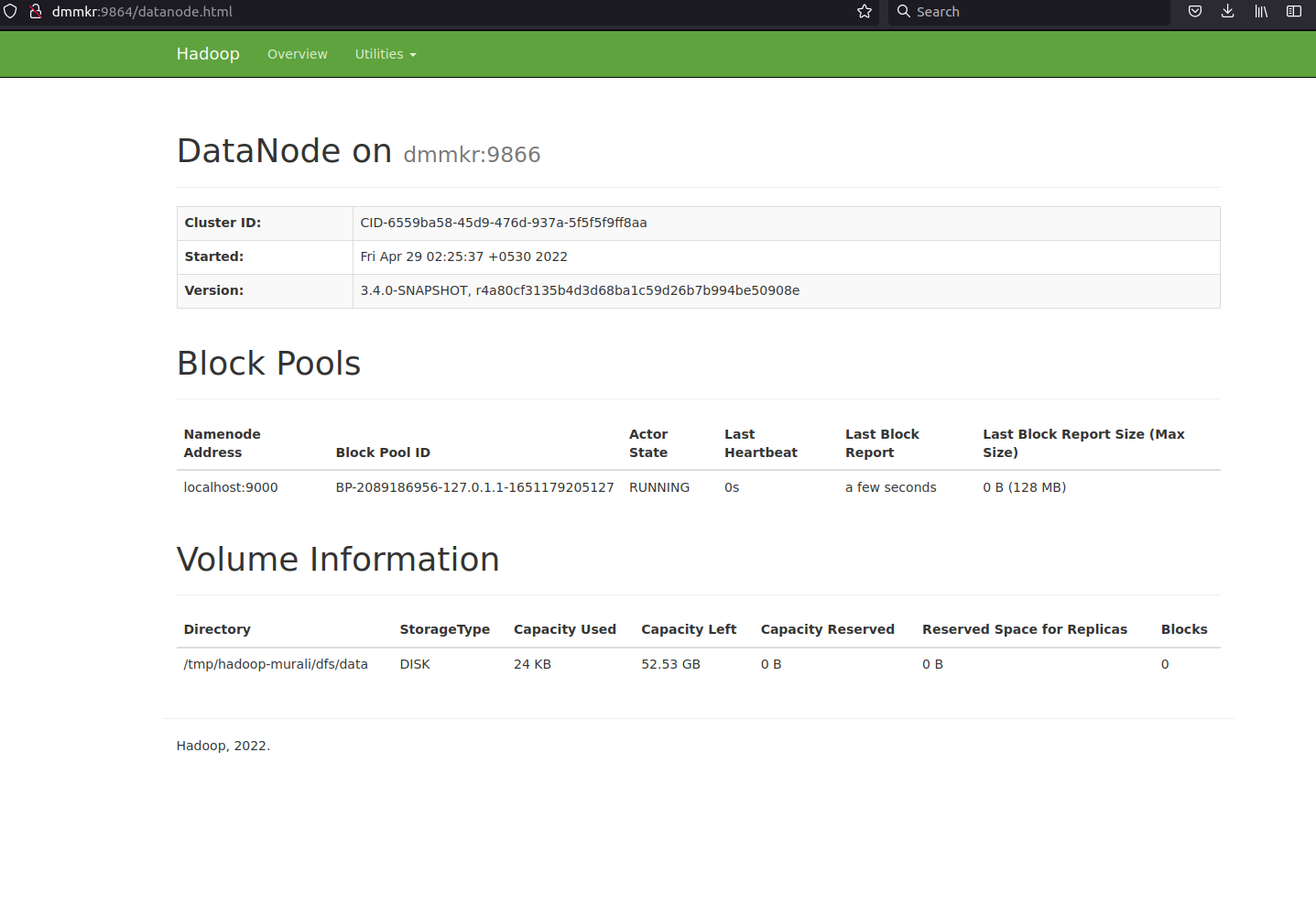

[GitHub] [hadoop] dmmkr commented on pull request #4240: HDFS-16562. Upgrade moment.min.js to 2.29.2

dmmkr commented on PR #4240:

URL: https://github.com/apache/hadoop/pull/4240#issuecomment-1112651982

Attaching the namenode and datanode UI

[GitHub] [hadoop] prasad-acit commented on pull request #4241: HDFS-16563. Namenode WebUI prints sensitive information on Token expiry

prasad-acit commented on PR #4241:

URL: https://github.com/apache/hadoop/pull/4241#issuecomment-1112597494

Thanks @hemanthboyina @Hexiaoqiao @steveloughran for the quick review & feedback.

> the key and sensitive information is DelegationKey/Password for DelegationToken, the output message here does not include this information right?

Yes, no password is printed in it. But per our internal security guidelines, displaying the complete Token info is also prohibited, so the token is suppressed from being displayed in the browser.

> if the issue is that toString leaks a secret, it should be fixed at that level, as it is likely to end up in logs. we don't want any output to expose secrets.

Logging the exception or the full stack trace is not an issue in this case. We are trying to keep the token out of the browser and keep the message abstract for the end user. The additional information is not needed there and can be omitted in the browser.

Failed tests corrected, please review the changes.
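The split described in this comment (full detail in the server log, an abstract message in the browser) could look roughly like the following. This is a minimal sketch, not the code from PR #4241: the class `TokenExpiryMessages`, the method `userFacingMessage`, and the message wording are all hypothetical names chosen for illustration.

```java
import java.util.logging.Logger;

public class TokenExpiryMessages {
    private static final Logger LOG =
        Logger.getLogger(TokenExpiryMessages.class.getName());

    /**
     * Hypothetical helper: records the full detail in the server log and
     * returns an abstract message that is safe to render in a browser.
     */
    public static String userFacingMessage(String tokenDetails, Exception cause) {
        // Full context stays server-side, where operators can read it.
        LOG.warning("Token expired: " + tokenDetails
            + " (" + cause.getMessage() + ")");
        // The browser only learns that a token expired, not which token.
        return "Token has expired. Please obtain a new token and retry.";
    }

    public static void main(String[] args) {
        String shown = userFacingMessage(
            "owner=alice, renewer=yarn, sequenceNumber=42",
            new IllegalStateException("token is expired"));
        System.out.println(shown);
    }
}
```

The point of the sketch is only that the returned string never includes `tokenDetails`; where the real change draws that line is defined by the PR itself.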

[GitHub] [hadoop] hadoop-yetus commented on pull request #4245: HDFS-16564. Use uint32_t for hdfs_find

hadoop-yetus commented on PR #4245:

URL: https://github.com/apache/hadoop/pull/4245#issuecomment-1112595640

:confetti_ball: **+1 overall**

| Vote | Subsystem | Runtime | Logfile | Comment |
|:----:|----------:|--------:|:-------:|:-------:|
| +0 :ok: | reexec | 38m 36s | | Docker mode activated. |
| | | | | _ Prechecks _ |
| +1 :green_heart: | dupname | 0m 0s | | No case conflicting files found. |
| +0 :ok: | codespell | 0m 0s | | codespell was not available. |
| +1 :green_heart: | @author | 0m 0s | | The patch does not contain any @author tags. |
| +1 :green_heart: | test4tests | 0m 0s | | The patch appears to include 1 new or modified test files. |
| | | | | _ trunk Compile Tests _ |
| +1 :green_heart: | mvninstall | 39m 13s | | trunk passed |
| +1 :green_heart: | compile | 3m 52s | | trunk passed |
| +1 :green_heart: | mvnsite | 0m 39s | | trunk passed |
| +1 :green_heart: | shadedclient | 63m 1s | | branch has no errors when building and testing our client artifacts. |
| | | | | _ Patch Compile Tests _ |
| +1 :green_heart: | mvninstall | 0m 24s | | the patch passed |
| +1 :green_heart: | compile | 3m 38s | | the patch passed |
| +1 :green_heart: | cc | 3m 38s | | the patch passed |
| +1 :green_heart: | golang | 3m 38s | | the patch passed |
| +1 :green_heart: | javac | 3m 38s | | the patch passed |
| +1 :green_heart: | blanks | 0m 0s | | The patch has no blanks issues. |
| +1 :green_heart: | mvnsite | 0m 28s | | the patch passed |
| +1 :green_heart: | shadedclient | 18m 48s | | patch has no errors when building and testing our client artifacts. |
| | | | | _ Other Tests _ |
| +1 :green_heart: | unit | 33m 19s | | hadoop-hdfs-native-client in the patch passed. |
| +1 :green_heart: | asflicense | 0m 54s | | The patch does not generate ASF License warnings. |
| | | 161m 37s | | |

| Subsystem | Report/Notes |
|----------:|:-------------|
| Docker | ClientAPI=1.41 ServerAPI=1.41 base: https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4245/1/artifact/out/Dockerfile |
| GITHUB PR | https://github.com/apache/hadoop/pull/4245 |
| Optional Tests | dupname asflicense compile cc mvnsite javac unit codespell golang |
| uname | Linux 626333c8af82 4.15.0-156-generic #163-Ubuntu SMP Thu Aug 19 23:31:58 UTC 2021 x86_64 x86_64 x86_64 GNU/Linux |
| Build tool | maven |
| Personality | dev-support/bin/hadoop.sh |
| git revision | trunk / 2eef94c4ae6df5e3b45e5cb286d7914f338f94ae |
| Default Java | Red Hat, Inc.-1.8.0_322-b06 |
| Test Results | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4245/1/testReport/ |
| Max. process+thread count | 544 (vs. ulimit of 5500) |
| modules | C: hadoop-hdfs-project/hadoop-hdfs-native-client U: hadoop-hdfs-project/hadoop-hdfs-native-client |
| Console output | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4245/1/console |
| versions | git=2.9.5 maven=3.6.3 |
| Powered by | Apache Yetus 0.14.0-SNAPSHOT https://yetus.apache.org |

This message was automatically generated.


[GitHub] [hadoop] brahmareddybattula commented on pull request #4240: HDFS-16562. Upgrade moment.min.js to 2.29.2

brahmareddybattula commented on PR #4240:

URL: https://github.com/apache/hadoop/pull/4240#issuecomment-1112579621

LGTM. It would be good if you could attach the UI with these changes.

[jira] [Work logged] (HADOOP-18168) ITestMarkerTool.testRunLimitedLandsatAudit failing due to most of bucket content purged

[

https://issues.apache.org/jira/browse/HADOOP-18168?focusedWorklogId=763813&page=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-763813

]

ASF GitHub Bot logged work on HADOOP-18168:

---

Author: ASF GitHub Bot

Created on: 28/Apr/22 18:42

Start Date: 28/Apr/22 18:42

Worklog Time Spent: 10m

Work Description: hadoop-yetus commented on PR #4140:

URL: https://github.com/apache/hadoop/pull/4140#issuecomment-1112541391


[GitHub] [hadoop] hadoop-yetus commented on pull request #4140: HADOOP-18168. Fix S3A ITestMarkerTool dependency on purged public bucket

hadoop-yetus commented on PR #4140:

URL: https://github.com/apache/hadoop/pull/4140#issuecomment-1112541391

:confetti_ball: **+1 overall**

| Vote | Subsystem | Runtime | Logfile | Comment |
|:----:|----------:|--------:|:-------:|:-------:|
| +0 :ok: | reexec | 0m 35s | | Docker mode activated. |
| | | | | _ Prechecks _ |
| +1 :green_heart: | dupname | 0m 0s | | No case conflicting files found. |
| +0 :ok: | codespell | 0m 0s | | codespell was not available. |
| +1 :green_heart: | @author | 0m 0s | | The patch does not contain any @author tags. |
| +1 :green_heart: | test4tests | 0m 0s | | The patch appears to include 5 new or modified test files. |
| | | | | _ trunk Compile Tests _ |
| +1 :green_heart: | mvninstall | 44m 31s | | trunk passed |
| +1 :green_heart: | compile | 0m 57s | | trunk passed with JDK Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 |
| +1 :green_heart: | compile | 0m 52s | | trunk passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | checkstyle | 0m 50s | | trunk passed |
| +1 :green_heart: | mvnsite | 1m 2s | | trunk passed |
| +1 :green_heart: | javadoc | 0m 48s | | trunk passed with JDK Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 |
| +1 :green_heart: | javadoc | 0m 51s | | trunk passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | spotbugs | 1m 31s | | trunk passed |
| +1 :green_heart: | shadedclient | 21m 43s | | branch has no errors when building and testing our client artifacts. |
| -0 :warning: | patch | 22m 8s | | Used diff version of patch file. Binary files and potentially other changes not applied. Please rebase and squash commits if necessary. |
| | | | | _ Patch Compile Tests _ |
| +1 :green_heart: | mvninstall | 0m 39s | | the patch passed |
| +1 :green_heart: | compile | 0m 45s | | the patch passed with JDK Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 |
| +1 :green_heart: | javac | 0m 45s | | the patch passed |
| +1 :green_heart: | compile | 0m 36s | | the patch passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | javac | 0m 36s | | the patch passed |
| +1 :green_heart: | blanks | 0m 0s | | The patch has no blanks issues. |
| +1 :green_heart: | checkstyle | 0m 25s | | the patch passed |
| +1 :green_heart: | mvnsite | 0m 43s | | the patch passed |
| +1 :green_heart: | xml | 0m 2s | | The patch has no ill-formed XML file. |
| +1 :green_heart: | javadoc | 0m 23s | | the patch passed with JDK Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 |
| +1 :green_heart: | javadoc | 0m 30s | | the patch passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | spotbugs | 1m 14s | | the patch passed |
| +1 :green_heart: | shadedclient | 20m 45s | | patch has no errors when building and testing our client artifacts. |
| | | | | _ Other Tests _ |
| +1 :green_heart: | unit | 2m 29s | | hadoop-aws in the patch passed. |
| +1 :green_heart: | asflicense | 0m 43s | | The patch does not generate ASF License warnings. |
| | | 103m 52s | | |

| Subsystem | Report/Notes |
|----------:|:-------------|
| Docker | ClientAPI=1.41 ServerAPI=1.41 base: https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4140/9/artifact/out/Dockerfile |
| GITHUB PR | https://github.com/apache/hadoop/pull/4140 |
| Optional Tests | dupname asflicense compile javac javadoc mvninstall mvnsite unit shadedclient spotbugs checkstyle codespell xml |
| uname | Linux 6a40a55d820d 4.15.0-156-generic #163-Ubuntu SMP Thu Aug 19 23:31:58 UTC 2021 x86_64 x86_64 x86_64 GNU/Linux |
| Build tool | maven |
| Personality | dev-support/bin/hadoop.sh |
| git revision | trunk / 73eeb50311a3e06deaafe4b7ceb1fbb72107f538 |
| Default Java | Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| Multi-JDK versions | /usr/lib/jvm/java-11-openjdk-amd64:Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 /usr/lib/jvm/java-8-openjdk-amd64:Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| Test Results | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4140/9/testReport/ |
| Max. process+thread count | 624 (vs. ulimit of 5500) |
| modules | C: hadoop-tools/hadoop-aws U: hadoop-tools/hadoop-aws |
| Console output | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4140/9/console |
| versions | git=2.25.1 maven=3.6.3 spotbugs=4.2.2 |
| Powered by | Apache Yetus 0.14.0-SNAPSHOT https://yetus.apache.org |

This message was automatically generated.


[jira] [Work logged] (HADOOP-16965) Introduce StreamContext for Abfs Input and Output streams.

[ https://issues.apache.org/jira/browse/HADOOP-16965?focusedWorklogId=763805&page=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-763805 ]

ASF GitHub Bot logged work on HADOOP-16965:
---
Author: ASF GitHub Bot
Created on: 28/Apr/22 18:36
Start Date: 28/Apr/22 18:36
Worklog Time Spent: 10m
Work Description: mukund-thakur commented on PR #4171:
URL: https://github.com/apache/hadoop/pull/4171#issuecomment-1112536885

> > Yetus still failing with unit tests. Please fix those https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4171/3/artifact/out/patch-unit-hadoop-tools_hadoop-azure.txt
>
> Hi @mukund-thakur, those unit tests fail in branch-2.10. This PR does not introduce any new failing tests. E.g. take PR #4151: it is merged into branch-2.10 and it also has some failing unit tests.

Okay if that's the case. I am okay with the change and no longer have any concerns with the current PR.

Issue Time Tracking
---
Worklog Id: (was: 763805)
Time Spent: 4h 10m (was: 4h)

> Introduce StreamContext for Abfs Input and Output streams.
> --
>
> Key: HADOOP-16965
> URL: https://issues.apache.org/jira/browse/HADOOP-16965
> Project: Hadoop Common
> Issue Type: Improvement
> Components: fs/azure
> Reporter: Mukund Thakur
> Assignee: Mukund Thakur
> Priority: Major
> Labels: pull-request-available
> Fix For: 3.4.0
>
> Time Spent: 4h 10m
> Remaining Estimate: 0h
>
> The number of configurations keeps growing in AbfsOutputStream and AbfsInputStream as we keep adding new features. It is time to refactor the configurations into a separate class like StreamContext and pass them around. This will improve the readability of the code and reduce cherry-pick/backport pain.
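The refactoring described in HADOOP-16965 (collecting stream settings in a context object and passing that around instead of a growing parameter list) can be illustrated with a small sketch. Everything here is an assumption made for illustration: the class names `StreamContextSketch`, `StreamContext`, `OutputStreamSketch`, the three fields, and their defaults are hypothetical and are not taken from the actual ABFS code.

```java
public class StreamContextSketch {

    /** Hypothetical immutable bundle of stream settings (illustrative fields). */
    public static final class StreamContext {
        private final int bufferSize;
        private final boolean flushEnabled;
        private final int maxConcurrentRequests;

        private StreamContext(Builder b) {
            this.bufferSize = b.bufferSize;
            this.flushEnabled = b.flushEnabled;
            this.maxConcurrentRequests = b.maxConcurrentRequests;
        }

        public int getBufferSize() { return bufferSize; }
        public boolean isFlushEnabled() { return flushEnabled; }
        public int getMaxConcurrentRequests() { return maxConcurrentRequests; }

        /** Builder with assumed defaults; a new setting only adds one method. */
        public static final class Builder {
            private int bufferSize = 4 * 1024 * 1024;
            private boolean flushEnabled = true;
            private int maxConcurrentRequests = 1;

            public Builder withBufferSize(int v) { bufferSize = v; return this; }
            public Builder withFlushEnabled(boolean v) { flushEnabled = v; return this; }
            public Builder withMaxConcurrentRequests(int v) { maxConcurrentRequests = v; return this; }
            public StreamContext build() { return new StreamContext(this); }
        }
    }

    /** Hypothetical stream: one context parameter instead of one per setting. */
    public static final class OutputStreamSketch {
        private final String path;
        private final StreamContext context;

        public OutputStreamSketch(String path, StreamContext context) {
            this.path = path;
            this.context = context;
        }

        public String describe() {
            return path + " buffer=" + context.getBufferSize()
                + " flush=" + context.isFlushEnabled()
                + " concurrent=" + context.getMaxConcurrentRequests();
        }
    }

    public static void main(String[] args) {
        StreamContext ctx = new StreamContext.Builder()
            .withBufferSize(8192)
            .withFlushEnabled(false)
            .build();
        System.out.println(new OutputStreamSketch("/tmp/example", ctx).describe());
    }
}
```

With this shape, adding a feature flag touches the context and its builder, while the stream constructor signature stays the same, which is the backport benefit the issue description points at.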

[GitHub] [hadoop] mukund-thakur commented on pull request #4171: HADOOP-16965. Refactor abfs stream configuration. (#1956)

mukund-thakur commented on PR #4171:

URL: https://github.com/apache/hadoop/pull/4171#issuecomment-1112536885

> > Yetus still failing with unit tests. Please fix those https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4171/3/artifact/out/patch-unit-hadoop-tools_hadoop-azure.txt
>
> Hi @mukund-thakur, those unit tests fail in branch-2.10. This PR does not introduce any new failing tests. E.g. take PR #4151: it is merged into branch-2.10 and it also has some failing unit tests.

Okay if that's the case. I am okay with the change and no longer have any concerns with the current PR.

[GitHub] [hadoop] goiri commented on a diff in pull request #4245: HDFS-16564. Use uint32_t for hdfs_find

goiri commented on code in PR #4245:

URL: https://github.com/apache/hadoop/pull/4245#discussion_r861205984

##

hadoop-hdfs-project/hadoop-hdfs-native-client/src/main/native/libhdfspp/tests/hdfspp_mini_dfs_smoke.cc:

##

@@ -34,7 +34,6 @@ TEST_F(HdfsMiniDfsSmokeTest, SmokeTest) {

EXPECT_NE(nullptr, connection.handle());

}

-

Review Comment:

Avoid


[jira] [Work logged] (HADOOP-18079) Upgrade Netty to 4.1.74

[

https://issues.apache.org/jira/browse/HADOOP-18079?focusedWorklogId=763787&page=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-763787

]

ASF GitHub Bot logged work on HADOOP-18079:

---

Author: ASF GitHub Bot

Created on: 28/Apr/22 18:23

Start Date: 28/Apr/22 18:23

Worklog Time Spent: 10m

Work Description: hadoop-yetus commented on PR #3977:

URL: https://github.com/apache/hadoop/pull/3977#issuecomment-1112525743


[GitHub] [hadoop] hadoop-yetus commented on pull request #3977: HADOOP-18079. Upgrade Netty to 4.1.74.

hadoop-yetus commented on PR #3977:

URL: https://github.com/apache/hadoop/pull/3977#issuecomment-1112525743

:broken_heart: **-1 overall**

| Vote | Subsystem | Runtime | Logfile | Comment |
|:----:|----------:|--------:|:-------:|:-------:|
| +0 :ok: | reexec | 0m 34s | | Docker mode activated. |
| | | | | _ Prechecks _ |
| +1 :green_heart: | dupname | 0m 0s | | No case conflicting files found. |
| +0 :ok: | codespell | 0m 1s | | codespell was not available. |
| +0 :ok: | shelldocs | 0m 1s | | Shelldocs was not available. |
| +1 :green_heart: | @author | 0m 0s | | The patch does not contain any @author tags. |
| -1 :x: | test4tests | 0m 0s | | The patch doesn't appear to include any new or modified tests. Please justify why no new tests are needed for this patch. Also please list what manual steps were performed to verify this patch. |
| | | | | _ trunk Compile Tests _ |
| +0 :ok: | mvndep | 15m 14s | | Maven dependency ordering for branch |
| +1 :green_heart: | mvninstall | 25m 18s | | trunk passed |
| +1 :green_heart: | compile | 23m 13s | | trunk passed with JDK Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 |
| +1 :green_heart: | compile | 20m 41s | | trunk passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | mvnsite | 19m 8s | | trunk passed |
| -1 :x: | javadoc | 1m 44s | [/branch-javadoc-root-jdkPrivateBuild-11.0.15+10-Ubuntu-0ubuntu0.20.04.1.txt](https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-3977/3/artifact/out/branch-javadoc-root-jdkPrivateBuild-11.0.15+10-Ubuntu-0ubuntu0.20.04.1.txt) | root in trunk failed with JDK Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1. |
| +1 :green_heart: | javadoc | 8m 23s | | trunk passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | shadedclient | 29m 53s | | branch has no errors when building and testing our client artifacts. |
| | | | | _ Patch Compile Tests _ |
| +0 :ok: | mvndep | 1m 8s | | Maven dependency ordering for patch |
| +1 :green_heart: | mvninstall | 22m 4s | | the patch passed |
| +1 :green_heart: | compile | 22m 57s | | the patch passed with JDK Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 |
| +1 :green_heart: | javac | 22m 57s | | the patch passed |
| +1 :green_heart: | compile | 20m 46s | | the patch passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | javac | 20m 46s | | the patch passed |
| +1 :green_heart: | blanks | 0m 0s | | The patch has no blanks issues. |
| +1 :green_heart: | mvnsite | 19m 2s | | the patch passed |
| +1 :green_heart: | shellcheck | 0m 0s | | No new issues. |
| +1 :green_heart: | xml | 0m 1s | | The patch has no ill-formed XML file. |
| -1 :x: | javadoc | 1m 34s | [/patch-javadoc-root-jdkPrivateBuild-11.0.15+10-Ubuntu-0ubuntu0.20.04.1.txt](https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-3977/3/artifact/out/patch-javadoc-root-jdkPrivateBuild-11.0.15+10-Ubuntu-0ubuntu0.20.04.1.txt) | root in the patch failed with JDK Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1. |
| +1 :green_heart: | javadoc | 8m 22s | | the patch passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | shadedclient | 32m 15s | | patch has no errors when building and testing our client artifacts. |
| | | | | _ Other Tests _ |
| -1 :x: | unit | 794m 49s | [/patch-unit-root.txt](https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-3977/3/artifact/out/patch-unit-root.txt) | root in the patch passed. |
| +1 :green_heart: | asflicense | 2m 45s | | The patch does not generate ASF License warnings. |
| | | 1052m 22s | | |

| Reason | Tests |
|-------:|:------|
| Failed junit tests | hadoop.yarn.client.TestResourceManagerAdministrationProtocolPBClientImpl |
| | hadoop.yarn.client.TestGetGroups |
| | hadoop.yarn.csi.client.TestCsiClient |
| | hadoop.yarn.server.timeline.webapp.TestTimelineWebServicesWithSSL |
| | hadoop.yarn.server.timeline.security.TestTimelineAuthenticationFilterForV1 |
| | hadoop.yarn.server.applicationhistoryservice.TestApplicationHistoryServer |
| | hadoop.yarn.server.resourcemanager.metrics.TestSystemMetricsPublisher |
| | hadoop.yarn.webapp.TestRMWithXFSFilter |
| | hadoop.yarn.server.resourcemanager.TestClientRMService |
| | hadoop.yarn.server.resourcemanager.webapp.TestRMWebServicesDelegationTokenAuthentication |
| | hadoop.yarn.server.resourcemanager.webapp.TestRMWebappAuthentication |
| | hadoop.yarn.server.resourcemanager.TestRMHA |
| | hadoop.yarn.server.resourcemanager.metrics.TestCombinedSystemMetricsPublisher |
| | hadoo

[jira] [Work logged] (HADOOP-18168) ITestMarkerTool.testRunLimitedLandsatAudit failing due to most of bucket content purged

[

https://issues.apache.org/jira/browse/HADOOP-18168?focusedWorklogId=763782&page=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-763782

]

ASF GitHub Bot logged work on HADOOP-18168:

---

Author: ASF GitHub Bot

Created on: 28/Apr/22 18:16

Start Date: 28/Apr/22 18:16

Worklog Time Spent: 10m

Work Description: hadoop-yetus commented on PR #4140:

URL: https://github.com/apache/hadoop/pull/4140#issuecomment-1112520064

:confetti_ball: **+1 overall**

| Vote | Subsystem | Runtime | Logfile | Comment |
|:----:|----------:|--------:|:-------:|:-------:|
| +0 :ok: | reexec | 0m 49s | | Docker mode activated. |
| | | | | _ Prechecks _ |
| +1 :green_heart: | dupname | 0m 0s | | No case conflicting files found. |
| +0 :ok: | codespell | 0m 0s | | codespell was not available. |
| +1 :green_heart: | @author | 0m 0s | | The patch does not contain any @author tags. |
| +1 :green_heart: | test4tests | 0m 0s | | The patch appears to include 5 new or modified test files. |
| | | | | _ trunk Compile Tests _ |
| +1 :green_heart: | mvninstall | 38m 46s | | trunk passed |
| +1 :green_heart: | compile | 0m 48s | | trunk passed with JDK Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 |
| +1 :green_heart: | compile | 0m 39s | | trunk passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | checkstyle | 0m 36s | | trunk passed |
| +1 :green_heart: | mvnsite | 0m 49s | | trunk passed |
| +1 :green_heart: | javadoc | 0m 35s | | trunk passed with JDK Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 |
| +1 :green_heart: | javadoc | 0m 35s | | trunk passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | spotbugs | 1m 22s | | trunk passed |
| +1 :green_heart: | shadedclient | 22m 42s | | branch has no errors when building and testing our client artifacts. |
| -0 :warning: | patch | 23m 1s | | Used diff version of patch file. Binary files and potentially other changes not applied. Please rebase and squash commits if necessary. |
| | | | | _ Patch Compile Tests _ |
| +1 :green_heart: | mvninstall | 0m 35s | | the patch passed |
| +1 :green_heart: | compile | 0m 42s | | the patch passed with JDK Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 |
| +1 :green_heart: | javac | 0m 42s | | the patch passed |
| +1 :green_heart: | compile | 0m 31s | | the patch passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | javac | 0m 31s | | the patch passed |
| +1 :green_heart: | blanks | 0m 0s | | The patch has no blanks issues. |
| -0 :warning: | checkstyle | 0m 21s | [/results-checkstyle-hadoop-tools_hadoop-aws.txt](https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4140/8/artifact/out/results-checkstyle-hadoop-tools_hadoop-aws.txt) | hadoop-tools/hadoop-aws: The patch generated 1 new + 1 unchanged - 0 fixed = 2 total (was 1) |
| +1 :green_heart: | mvnsite | 0m 39s | | the patch passed |
| +1 :green_heart: | xml | 0m 1s | | The patch has no ill-formed XML file. |
| +1 :green_heart: | javadoc | 0m 18s | | the patch passed with JDK Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 |
| +1 :green_heart: | javadoc | 0m 25s | | the patch passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | spotbugs | 1m 8s | | the patch passed |
| +1 :green_heart: | shadedclient | 20m 51s | | patch has no errors when building and testing our client artifacts. |
| | | | | _ Other Tests _ |
| +1 :green_heart: | unit | 2m 21s | | hadoop-aws in the patch passed. |
| +1 :green_heart: | asflicense | 0m 38s | | The patch does not generate ASF License warnings. |
| | | 97m 2s | | |

| Subsystem | Report/Notes |
|----------:|:-------------|
| Docker | ClientAPI=1.41 ServerAPI=1.41 base: https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4140/8/artifact/out/Dockerfile |
| GITHUB PR | https://github.com/apache/hadoop/pull/4140 |

| Optional Tests | dupname asflicense compile javac javadoc mvninstall

mvnsite unit shadedclient spotbugs checkstyle codespell xml |

| uname | Linux 341d2ef8742d 4.15.0-169-generic #177-Ubuntu SMP Thu Feb 3

10:50:38 UTC 2022 x86_64 x86_64 x86_64 GNU/Linux |

| Build tool | maven |

| Personality | dev-support/bin/hadoop.sh |

| git revision | trunk / 364ce9eba20743aad75689f92cd14e0517ce0a5e |

| Default Java | Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |

| Multi-JDK versions |

/usr/lib/jvm/java-11-openjdk-amd64:Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04

/usr/lib/jvm/java-8-openjdk-amd64:Private

Build-1.8

[GitHub] [hadoop] hadoop-yetus commented on pull request #4140: HADOOP-18168. Fix S3A ITestMarkerTool dependency on purged public bucket

hadoop-yetus commented on PR #4140:

URL: https://github.com/apache/hadoop/pull/4140#issuecomment-1112520064

:confetti_ball: **+1 overall**

| Vote | Subsystem | Runtime | Logfile | Comment |
|:----:|----------:|--------:|:--------:|:-------:|
| +0 :ok: | reexec | 0m 49s | | Docker mode activated. |
|||| _ Prechecks _ |
| +1 :green_heart: | dupname | 0m 0s | | No case conflicting files found. |
| +0 :ok: | codespell | 0m 0s | | codespell was not available. |
| +1 :green_heart: | @author | 0m 0s | | The patch does not contain any @author tags. |
| +1 :green_heart: | test4tests | 0m 0s | | The patch appears to include 5 new or modified test files. |
|||| _ trunk Compile Tests _ |
| +1 :green_heart: | mvninstall | 38m 46s | | trunk passed |
| +1 :green_heart: | compile | 0m 48s | | trunk passed with JDK Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 |
| +1 :green_heart: | compile | 0m 39s | | trunk passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | checkstyle | 0m 36s | | trunk passed |
| +1 :green_heart: | mvnsite | 0m 49s | | trunk passed |
| +1 :green_heart: | javadoc | 0m 35s | | trunk passed with JDK Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 |
| +1 :green_heart: | javadoc | 0m 35s | | trunk passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | spotbugs | 1m 22s | | trunk passed |
| +1 :green_heart: | shadedclient | 22m 42s | | branch has no errors when building and testing our client artifacts. |
| -0 :warning: | patch | 23m 1s | | Used diff version of patch file. Binary files and potentially other changes not applied. Please rebase and squash commits if necessary. |
|||| _ Patch Compile Tests _ |
| +1 :green_heart: | mvninstall | 0m 35s | | the patch passed |
| +1 :green_heart: | compile | 0m 42s | | the patch passed with JDK Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 |
| +1 :green_heart: | javac | 0m 42s | | the patch passed |
| +1 :green_heart: | compile | 0m 31s | | the patch passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | javac | 0m 31s | | the patch passed |
| +1 :green_heart: | blanks | 0m 0s | | The patch has no blanks issues. |
| -0 :warning: | checkstyle | 0m 21s | [/results-checkstyle-hadoop-tools_hadoop-aws.txt](https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4140/8/artifact/out/results-checkstyle-hadoop-tools_hadoop-aws.txt) | hadoop-tools/hadoop-aws: The patch generated 1 new + 1 unchanged - 0 fixed = 2 total (was 1) |
| +1 :green_heart: | mvnsite | 0m 39s | | the patch passed |
| +1 :green_heart: | xml | 0m 1s | | The patch has no ill-formed XML file. |
| +1 :green_heart: | javadoc | 0m 18s | | the patch passed with JDK Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 |
| +1 :green_heart: | javadoc | 0m 25s | | the patch passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | spotbugs | 1m 8s | | the patch passed |
| +1 :green_heart: | shadedclient | 20m 51s | | patch has no errors when building and testing our client artifacts. |
|||| _ Other Tests _ |
| +1 :green_heart: | unit | 2m 21s | | hadoop-aws in the patch passed. |
| +1 :green_heart: | asflicense | 0m 38s | | The patch does not generate ASF License warnings. |
| | | 97m 2s | | |

| Subsystem | Report/Notes |
|----------:|:-------------|
| Docker | ClientAPI=1.41 ServerAPI=1.41 base: https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4140/8/artifact/out/Dockerfile |
| GITHUB PR | https://github.com/apache/hadoop/pull/4140 |
| Optional Tests | dupname asflicense compile javac javadoc mvninstall mvnsite unit shadedclient spotbugs checkstyle codespell xml |
| uname | Linux 341d2ef8742d 4.15.0-169-generic #177-Ubuntu SMP Thu Feb 3 10:50:38 UTC 2022 x86_64 x86_64 x86_64 GNU/Linux |
| Build tool | maven |
| Personality | dev-support/bin/hadoop.sh |
| git revision | trunk / 364ce9eba20743aad75689f92cd14e0517ce0a5e |
| Default Java | Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| Multi-JDK versions | /usr/lib/jvm/java-11-openjdk-amd64:Ubuntu-11.0.14.1+1-Ubuntu-0ubuntu1.20.04 /usr/lib/jvm/java-8-openjdk-amd64:Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| Test Results | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4140/8/testReport/ |
| Max. process+thread count | 718 (vs. ulimit of 5500) |
| modules | C: hadoop-tools/hadoop-aws U: hadoop-tools/hadoop-aws |
| Console output | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4140/8/console |
| versions | git=2.25.1 maven=3.6.3 spotbugs=4.2.2 |
| Po
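
The checkstyle row in the report above reads `1 new + 1 unchanged - 0 fixed = 2 total (was 1)`. The following is a minimal Python sketch of how those counts appear to relate. It assumes that the new total equals new + unchanged and that the previous total equals unchanged + fixed; that relationship is inferred from the figures in this report, not taken from the Yetus documentation, and the helper name `check_summary` is hypothetical.

```python
import re

# Hypothetical helper, not part of Yetus: parses a checkstyle summary string
# of the form "N new + U unchanged - F fixed = T total (was W)".
SUMMARY = re.compile(
    r"(\d+) new \+ (\d+) unchanged - (\d+) fixed\s*= (\d+) total \(was (\d+)\)"
)


def check_summary(text):
    """Extract the counts and check them against the assumed arithmetic."""
    match = SUMMARY.search(text)
    if match is None:
        raise ValueError("no checkstyle summary found")
    new, unchanged, fixed, total, was = map(int, match.groups())
    return {
        "new": new,
        "unchanged": unchanged,
        "fixed": fixed,
        "total": total,
        "was": was,
        # Assumption: total = new + unchanged, previous total = unchanged + fixed.
        "consistent": total == new + unchanged and was == unchanged + fixed,
    }


line = (
    "hadoop-tools/hadoop-aws: The patch generated "
    "1 new + 1 unchanged - 0 fixed = 2 total (was 1)"
)
result = check_summary(line)
print(result["consistent"])
```

Under that assumption, the `-0` vote corresponds to one newly introduced checkstyle warning on top of one that already existed on trunk.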

[GitHub] [hadoop] saintstack opened a new pull request, #4246: HDFS-16540. Data locality is lost when DataNode pod restarts in kubernetes (#4170)

saintstack opened a new pull request, #4246:

URL: https://github.com/apache/hadoop/pull/4246

### Description of PR

Cherry-pick of 9ed8d60511dccf96108239c5c96e108a7d4bc975

### How was this patch tested?

### For code changes:

- [ ] Does the title of this PR start with the corresponding JIRA issue id (e.g. 'HADOOP-17799. Your PR title ...')?
- [ ] Object storage: have the integration tests been executed and the endpoint declared according to the connector-specific documentation?
- [ ] If adding new dependencies to the code, are these dependencies licensed in a way that is compatible for inclusion under [ASF 2.0](http://www.apache.org/legal/resolved.html#category-a)?
- [ ] If applicable, have you updated the `LICENSE`, `LICENSE-binary`, `NOTICE-binary` files?

[GitHub] [hadoop] hadoop-yetus commented on pull request #4244: YARN-11119. Backport YARN-10538 to branch-2.10

hadoop-yetus commented on PR #4244:

URL: https://github.com/apache/hadoop/pull/4244#issuecomment-1112471513

:confetti_ball: **+1 overall**

| Vote | Subsystem | Runtime | Logfile | Comment |
|:----:|----------:|--------:|:--------:|:-------:|
| +0 :ok: | reexec | 12m 31s | | Docker mode activated. |
|||| _ Prechecks _ |
| +1 :green_heart: | dupname | 0m 1s | | No case conflicting files found. |
| +0 :ok: | codespell | 0m 0s | | codespell was not available. |
| +1 :green_heart: | @author | 0m 0s | | The patch does not contain any @author tags. |
| +1 :green_heart: | test4tests | 0m 0s | | The patch appears to include 1 new or modified test files. |
|||| _ branch-2.10 Compile Tests _ |