[GitHub] [flink] flinkbot commented on pull request #19494: [FLINK-27267][contrib] Migrate tests to JUnit5

flinkbot commented on PR #19494: URL: https://github.com/apache/flink/pull/19494#issuecomment-1100499721 ## CI report: * 4b20ff4a6cbbc48cc8ce3e1baa10befdee58840a UNKNOWN Bot commands The @flinkbot bot supports the following commands: - `@flinkbot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: issues-unsubscr...@flink.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[jira] [Updated] (FLINK-27267) [JUnit5 Migration] Module: flink-contrib

[ https://issues.apache.org/jira/browse/FLINK-27267?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] ASF GitHub Bot updated FLINK-27267: --- Labels: pull-request-available (was: ) > [JUnit5 Migration] Module: flink-contrib > > > Key: FLINK-27267 > URL: https://issues.apache.org/jira/browse/FLINK-27267 > Project: Flink > Issue Type: Sub-task > Components: Tests >Affects Versions: 1.16.0 >Reporter: RocMarshal >Priority: Major > Labels: pull-request-available > -- This message was sent by Atlassian Jira (v8.20.1#820001)

[GitHub] [flink] RocMarshal opened a new pull request, #19494: [FLINK-27267][contrib] Migrate tests to JUnit5

RocMarshal opened a new pull request, #19494: URL: https://github.com/apache/flink/pull/19494 ## What is the purpose of the change Migrate tests to JUnit5 ## Brief change log Migrate tests to JUnit5 ## Verifying this change This change is a trivial rework / code cleanup without any test coverage. ## Does this pull request potentially affect one of the following parts: - Dependencies (does it add or upgrade a dependency): (yes / **no**) - The public API, i.e., is any changed class annotated with `@Public(Evolving)`: (yes / **no**) - The serializers: (yes / **no** / don't know) - The runtime per-record code paths (performance sensitive): (yes / **no** / don't know) - Anything that affects deployment or recovery: JobManager (and its components), Checkpointing, Kubernetes/Yarn, ZooKeeper: (yes / **no** / don't know) - The S3 file system connector: (yes / **no** / don't know) ## Documentation - Does this pull request introduce a new feature? (yes / **no**) - If yes, how is the feature documented? (**not applicable** / docs / JavaDocs / not documented) -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: issues-unsubscr...@flink.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[jira] [Created] (FLINK-27267) [JUnit5 Migration] Module: flink-contrib

RocMarshal created FLINK-27267: -- Summary: [JUnit5 Migration] Module: flink-contrib Key: FLINK-27267 URL: https://issues.apache.org/jira/browse/FLINK-27267 Project: Flink Issue Type: Sub-task Components: Tests Affects Versions: 1.16.0 Reporter: RocMarshal -- This message was sent by Atlassian Jira (v8.20.1#820001)

[jira] [Updated] (FLINK-21501) Sync Chinese documentation with FLINK-21343

[ https://issues.apache.org/jira/browse/FLINK-21501?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Flink Jira Bot updated FLINK-21501: --- Labels: auto-deprioritized-major auto-deprioritized-minor auto-unassigned pull-request-available (was: auto-deprioritized-major auto-unassigned pull-request-available stale-minor) Priority: Not a Priority (was: Minor) This issue was labeled "stale-minor" 7 days ago and has not received any updates so it is being deprioritized. If this ticket is actually Minor, please raise the priority and ask a committer to assign you the issue or revive the public discussion. > Sync Chinese documentation with FLINK-21343 > --- > > Key: FLINK-21501 > URL: https://issues.apache.org/jira/browse/FLINK-21501 > Project: Flink > Issue Type: Bug > Components: Documentation >Affects Versions: 1.13.0 >Reporter: Dawid Wysakowicz >Priority: Not a Priority > Labels: auto-deprioritized-major, auto-deprioritized-minor, > auto-unassigned, pull-request-available > > We should update the Chinese documentation with changes introduced in > FLINK-21343 -- This message was sent by Atlassian Jira (v8.20.1#820001)

[jira] [Updated] (FLINK-20561) Add documentation for `records-lag-max` metric.

[ https://issues.apache.org/jira/browse/FLINK-20561?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Flink Jira Bot updated FLINK-20561: --- Labels: auto-deprioritized-major auto-deprioritized-minor pull-request-available (was: auto-deprioritized-major pull-request-available stale-minor) Priority: Not a Priority (was: Minor) This issue was labeled "stale-minor" 7 days ago and has not received any updates so it is being deprioritized. If this ticket is actually Minor, please raise the priority and ask a committer to assign you the issue or revive the public discussion. > Add documentation for `records-lag-max` metric. > > > Key: FLINK-20561 > URL: https://issues.apache.org/jira/browse/FLINK-20561 > Project: Flink > Issue Type: Improvement > Components: Documentation >Affects Versions: 1.11.0, 1.12.0 >Reporter: xiaozilong >Priority: Not a Priority > Labels: auto-deprioritized-major, auto-deprioritized-minor, > pull-request-available > > Currently, there are no metric description for kafka topic's lag in f[link > metrics > docs|https://ci.apache.org/projects/flink/flink-docs-release-1.11/monitoring/metrics.html#connectors]. > But this metric was reported in flink actually. So we should add some docs > to guide the users to use it. > -- This message was sent by Atlassian Jira (v8.20.1#820001)

[jira] [Updated] (FLINK-20778) [FLINK-20778] the comment for the type of kafka consumer is wrong at KafkaPartitionSplit

[

https://issues.apache.org/jira/browse/FLINK-20778?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Flink Jira Bot updated FLINK-20778:

---

Labels: auto-deprioritized-major auto-deprioritized-minor auto-unassigned

pull-request-available (was: auto-deprioritized-major auto-unassigned

pull-request-available stale-minor)

Priority: Not a Priority (was: Minor)

This issue was labeled "stale-minor" 7 days ago and has not received any

updates so it is being deprioritized. If this ticket is actually Minor, please

raise the priority and ask a committer to assign you the issue or revive the

public discussion.

> [FLINK-20778] the comment for the type of kafka consumer is wrong at

> KafkaPartitionSplit

> -

>

> Key: FLINK-20778

> URL: https://issues.apache.org/jira/browse/FLINK-20778

> Project: Flink

> Issue Type: Improvement

> Components: Connectors / Kafka

>Reporter: Jeremy Mei

>Priority: Not a Priority

> Labels: auto-deprioritized-major, auto-deprioritized-minor,

> auto-unassigned, pull-request-available

> Attachments: Screen Capture_select-area_20201228011944.png

>

>

> the current code:

> {code:java}

> // Indicating the split should consume from the earliest.

> public static final long LATEST_OFFSET = -1;

> // Indicating the split should consume from the latest.

> public static final long EARLIEST_OFFSET = -2;

> {code}

> should be adjusted as blew

> {code:java}

> // Indicating the split should consume from the latest.

> public static final long LATEST_OFFSET = -1;

> // Indicating the split should consume from the earliest.

> public static final long EARLIEST_OFFSET = -2;

> {code}

--

This message was sent by Atlassian Jira

(v8.20.1#820001)

[jira] [Updated] (FLINK-26104) KeyError: 'type_info' in PyFlink test

[

https://issues.apache.org/jira/browse/FLINK-26104?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Flink Jira Bot updated FLINK-26104:

---

Labels: stale-major test-stability (was: test-stability)

I am the [Flink Jira Bot|https://github.com/apache/flink-jira-bot/] and I help

the community manage its development. I see this issues has been marked as

Major but is unassigned and neither itself nor its Sub-Tasks have been updated

for 60 days. I have gone ahead and added a "stale-major" to the issue". If this

ticket is a Major, please either assign yourself or give an update. Afterwards,

please remove the label or in 7 days the issue will be deprioritized.

> KeyError: 'type_info' in PyFlink test

> -

>

> Key: FLINK-26104

> URL: https://issues.apache.org/jira/browse/FLINK-26104

> Project: Flink

> Issue Type: Bug

> Components: API / Python

>Affects Versions: 1.14.3

>Reporter: Huang Xingbo

>Priority: Major

> Labels: stale-major, test-stability

>

> {code:java}

> 2022-02-14T04:33:10.9891373Z Feb 14 04:33:10 E Caused by:

> java.lang.RuntimeException: Failed to create stage bundle factory!

> INFO:root:Initializing Python harness:

> /__w/1/s/flink-python/pyflink/fn_execution/beam/beam_boot.py --id=103-1

> --provision_endpoint=localhost:46669

> 2022-02-14T04:33:10.9892470Z Feb 14 04:33:10 E

> INFO:root:Starting up Python harness in a standalone process.

> 2022-02-14T04:33:10.9893079Z Feb 14 04:33:10 E Traceback

> (most recent call last):

> 2022-02-14T04:33:10.9894030Z Feb 14 04:33:10 E File

> "/__w/1/s/flink-python/dev/.conda/lib/python3.7/runpy.py", line 193, in

> _run_module_as_main

> 2022-02-14T04:33:10.9894791Z Feb 14 04:33:10 E

> "__main__", mod_spec)

> 2022-02-14T04:33:10.9895653Z Feb 14 04:33:10 E File

> "/__w/1/s/flink-python/dev/.conda/lib/python3.7/runpy.py", line 85, in

> _run_code

> 2022-02-14T04:33:10.9896395Z Feb 14 04:33:10 E

> exec(code, run_globals)

> 2022-02-14T04:33:10.9904913Z Feb 14 04:33:10 E File

> "/__w/1/s/flink-python/pyflink/fn_execution/beam/beam_boot.py", line 116, in

>

> 2022-02-14T04:33:10.9930244Z Feb 14 04:33:10 E from

> pyflink.fn_execution.beam import beam_sdk_worker_main

> 2022-02-14T04:33:10.9931563Z Feb 14 04:33:10 E File

> "/__w/1/s/flink-python/pyflink/fn_execution/beam/beam_sdk_worker_main.py",

> line 21, in

> 2022-02-14T04:33:10.9932630Z Feb 14 04:33:10 E import

> pyflink.fn_execution.beam.beam_operations # noqa # pylint:

> disable=unused-import

> 2022-02-14T04:33:10.9933754Z Feb 14 04:33:10 E File

> "/__w/1/s/flink-python/pyflink/fn_execution/beam/beam_operations.py", line

> 23, in

> 2022-02-14T04:33:10.9934415Z Feb 14 04:33:10 E from

> pyflink.fn_execution import flink_fn_execution_pb2

> 2022-02-14T04:33:10.9935335Z Feb 14 04:33:10 E File

> "/__w/1/s/flink-python/pyflink/fn_execution/flink_fn_execution_pb2.py", line

> 2581, in

> 2022-02-14T04:33:10.9936378Z Feb 14 04:33:10 E

> _SCHEMA_FIELDTYPE.fields_by_name['time_info'].containing_oneof =

> _SCHEMA_FIELDTYPE.oneofs_by_name['type_info']

> 2022-02-14T04:33:10.9946519Z Feb 14 04:33:10 E KeyError:

> 'type_info'

> 2022-02-14T04:33:10.9947110Z Feb 14 04:33:10 E

> 2022-02-14T04:33:10.9947911Z Feb 14 04:33:10 Eat

> org.apache.flink.streaming.api.runners.python.beam.BeamPythonFunctionRunner.createStageBundleFactory(BeamPythonFunctionRunner.java:566)

> 2022-02-14T04:33:10.9949048Z Feb 14 04:33:10 Eat

> org.apache.flink.streaming.api.runners.python.beam.BeamPythonFunctionRunner.open(BeamPythonFunctionRunner.java:255)

> 2022-02-14T04:33:10.9950162Z Feb 14 04:33:10 Eat

> org.apache.flink.streaming.api.operators.python.AbstractPythonFunctionOperator.open(AbstractPythonFunctionOperator.java:131)

> 2022-02-14T04:33:10.9951344Z Feb 14 04:33:10 Eat

> org.apache.flink.streaming.api.operators.python.AbstractOneInputPythonFunctionOperator.open(AbstractOneInputPythonFunctionOperator.java:116)

> 2022-02-14T04:33:10.9952487Z Feb 14 04:33:10 Eat

> org.apache.flink.streaming.api.operators.python.PythonKeyedProcessOperator.open(PythonKeyedProcessOperator.java:121)

> 2022-02-14T04:33:10.9953561Z Feb 14 04:33:10 Eat

> org.apache.flink.streaming.runtime.tasks.RegularOperatorChain.initializeStateAndOpenOperators(RegularOperatorChain.java:110)

> 2022-02-14T04:33:10.9954565Z Feb 14 04:33:10 Eat

>

[jira] [Updated] (FLINK-25442) HBaseConnectorITCase.testTableSink failed on azure

[

https://issues.apache.org/jira/browse/FLINK-25442?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Flink Jira Bot updated FLINK-25442:

---

Labels: auto-deprioritized-critical test-stability (was: stale-critical

test-stability)

Priority: Major (was: Critical)

This issue was labeled "stale-critical" 7 days ago and has not received any

updates so it is being deprioritized. If this ticket is actually Critical,

please raise the priority and ask a committer to assign you the issue or revive

the public discussion.

> HBaseConnectorITCase.testTableSink failed on azure

> --

>

> Key: FLINK-25442

> URL: https://issues.apache.org/jira/browse/FLINK-25442

> Project: Flink

> Issue Type: Bug

> Components: Connectors / HBase

>Affects Versions: 1.15.0, 1.14.2, 1.16.0

>Reporter: Yun Gao

>Priority: Major

> Labels: auto-deprioritized-critical, test-stability

>

> {code:java}

> Dec 24 00:48:54 Picked up JAVA_TOOL_OPTIONS: -XX:+HeapDumpOnOutOfMemoryError

> Dec 24 00:48:54 OpenJDK 64-Bit Server VM warning: ignoring option

> MaxPermSize=128m; support was removed in 8.0

> Dec 24 00:48:54 Running org.apache.flink.connector.hbase2.HBaseConnectorITCase

> Dec 24 00:48:59 Formatting using clusterid: testClusterID

> Dec 24 00:50:15 java.lang.ThreadGroup[name=PEWorkerGroup,maxpri=10]

> Dec 24 00:50:15 Thread[HFileArchiver-8,5,PEWorkerGroup]

> Dec 24 00:50:15 Thread[HFileArchiver-9,5,PEWorkerGroup]

> Dec 24 00:50:15 Thread[HFileArchiver-10,5,PEWorkerGroup]

> Dec 24 00:50:15 Thread[HFileArchiver-11,5,PEWorkerGroup]

> Dec 24 00:50:15 Thread[HFileArchiver-12,5,PEWorkerGroup]

> Dec 24 00:50:15 Thread[HFileArchiver-13,5,PEWorkerGroup]

> Dec 24 00:50:16 Tests run: 9, Failures: 1, Errors: 0, Skipped: 0, Time

> elapsed: 82.068 sec <<< FAILURE! - in

> org.apache.flink.connector.hbase2.HBaseConnectorITCase

> Dec 24 00:50:16

> testTableSink(org.apache.flink.connector.hbase2.HBaseConnectorITCase) Time

> elapsed: 8.534 sec <<< FAILURE!

> Dec 24 00:50:16 java.lang.AssertionError: expected:<8> but was:<5>

> Dec 24 00:50:16 at org.junit.Assert.fail(Assert.java:89)

> Dec 24 00:50:16 at org.junit.Assert.failNotEquals(Assert.java:835)

> Dec 24 00:50:16 at org.junit.Assert.assertEquals(Assert.java:120)

> Dec 24 00:50:16 at org.junit.Assert.assertEquals(Assert.java:146)

> Dec 24 00:50:16 at

> org.apache.flink.connector.hbase2.HBaseConnectorITCase.testTableSink(HBaseConnectorITCase.java:291)

> Dec 24 00:50:16 at sun.reflect.NativeMethodAccessorImpl.invoke0(Native

> Method)

> Dec 24 00:50:16 at

> sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

> Dec 24 00:50:16 at

> sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

> Dec 24 00:50:16 at java.lang.reflect.Method.invoke(Method.java:498)

> Dec 24 00:50:16 at

> org.junit.runners.model.FrameworkMethod$1.runReflectiveCall(FrameworkMethod.java:59)

> Dec 24 00:50:16 at

> org.junit.internal.runners.model.ReflectiveCallable.run(ReflectiveCallable.java:12)

> Dec 24 00:50:16 at

> org.junit.runners.model.FrameworkMethod.invokeExplosively(FrameworkMethod.java:56)

> Dec 24 00:50:16 at

> org.junit.internal.runners.statements.InvokeMethod.evaluate(InvokeMethod.java:17)

> Dec 24 00:50:16 at

> org.junit.internal.runners.statements.RunBefores.evaluate(RunBefores.java:26)

> Dec 24 00:50:16 at

> org.junit.runners.ParentRunner$3.evaluate(ParentRunner.java:306)

> Dec 24 00:50:16 at

> org.junit.runners.BlockJUnit4ClassRunner$1.evaluate(BlockJUnit4ClassRunner.java:100)

> Dec 24 00:50:16 at

> org.junit.runners.ParentRunner.runLeaf(ParentRunner.java:366)

> Dec 24 00:50:16 at

> org.junit.runners.BlockJUnit4ClassRunner.runChild(BlockJUnit4ClassRunner.java:103)

> Dec 24 00:50:16 at

> org.junit.runners.BlockJUnit4ClassRunner.runChild(BlockJUnit4ClassRunner.java:63)

> Dec 24 00:50:16 at

> org.junit.runners.ParentRunner$4.run(ParentRunner.java:331)

> Dec 24 00:50:16 at

> org.junit.runners.ParentRunner$1.schedule(ParentRunner.java:79)

> Dec 24 00:50:16 at

> org.junit.runners.ParentRunner.runChildren(ParentRunner.java:329)

> Dec 24 00:50:16 at

> org.junit.runners.ParentRunner.access$100(ParentRunner.java:66)

> {code}

--

This message was sent by Atlassian Jira

(v8.20.1#820001)

[jira] [Updated] (FLINK-20918) Avoid excessive flush of Hadoop output stream

[ https://issues.apache.org/jira/browse/FLINK-20918?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Flink Jira Bot updated FLINK-20918: --- Labels: auto-deprioritized-major auto-deprioritized-minor pull-request-available (was: auto-deprioritized-major pull-request-available stale-minor) Priority: Not a Priority (was: Minor) This issue was labeled "stale-minor" 7 days ago and has not received any updates so it is being deprioritized. If this ticket is actually Minor, please raise the priority and ask a committer to assign you the issue or revive the public discussion. > Avoid excessive flush of Hadoop output stream > - > > Key: FLINK-20918 > URL: https://issues.apache.org/jira/browse/FLINK-20918 > Project: Flink > Issue Type: Bug > Components: Connectors / Hadoop Compatibility, FileSystems >Affects Versions: 1.11.3, 1.12.0 >Reporter: Paul Lin >Priority: Not a Priority > Labels: auto-deprioritized-major, auto-deprioritized-minor, > pull-request-available > > [HadoopRecoverableFsDataOutputStream#sync|https://github.com/apache/flink/blob/67d167ccd45046fc5ed222ac1f1e3ba5e6ec434b/flink-filesystems/flink-hadoop-fs/src/main/java/org/apache/flink/runtime/fs/hdfs/HadoopRecoverableFsDataOutputStream.java#L123] > calls both `hflush` and `hsync`, whereas `hsync` is an enhanced version of > `hflush`. We should remove the `hflush` call to avoid the excessive flush. -- This message was sent by Atlassian Jira (v8.20.1#820001)

[jira] [Updated] (FLINK-20938) Implement Flink's own tencent COS filesystem

[ https://issues.apache.org/jira/browse/FLINK-20938?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Flink Jira Bot updated FLINK-20938: --- Labels: auto-deprioritized-major auto-deprioritized-minor pull-request-available (was: auto-deprioritized-major pull-request-available stale-minor) Priority: Not a Priority (was: Minor) This issue was labeled "stale-minor" 7 days ago and has not received any updates so it is being deprioritized. If this ticket is actually Minor, please raise the priority and ask a committer to assign you the issue or revive the public discussion. > Implement Flink's own tencent COS filesystem > > > Key: FLINK-20938 > URL: https://issues.apache.org/jira/browse/FLINK-20938 > Project: Flink > Issue Type: New Feature > Components: FileSystems >Affects Versions: 1.12.0 >Reporter: hayden zhou >Priority: Not a Priority > Labels: auto-deprioritized-major, auto-deprioritized-minor, > pull-request-available > > Tencent's COS is widely used among China's cloud users, and Hadoop supports > Tencent COS since 3.3.0. > Open this jira to wrap CosNFileSystem in FLINK(similar to oss support), so > that users can read from & write to COS more easily in FLINK. -- This message was sent by Atlassian Jira (v8.20.1#820001)

[jira] [Updated] (FLINK-26110) AvroStreamingFileSinkITCase failed on azure

[

https://issues.apache.org/jira/browse/FLINK-26110?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Flink Jira Bot updated FLINK-26110:

---

Labels: stale-major test-stability (was: test-stability)

I am the [Flink Jira Bot|https://github.com/apache/flink-jira-bot/] and I help

the community manage its development. I see this issues has been marked as

Major but is unassigned and neither itself nor its Sub-Tasks have been updated

for 60 days. I have gone ahead and added a "stale-major" to the issue". If this

ticket is a Major, please either assign yourself or give an update. Afterwards,

please remove the label or in 7 days the issue will be deprioritized.

> AvroStreamingFileSinkITCase failed on azure

> ---

>

> Key: FLINK-26110

> URL: https://issues.apache.org/jira/browse/FLINK-26110

> Project: Flink

> Issue Type: Bug

> Components: Connectors / FileSystem

>Affects Versions: 1.13.5

>Reporter: Yun Gao

>Priority: Major

> Labels: stale-major, test-stability

>

> {code:java}

> Feb 12 01:00:00 [ERROR] Tests run: 3, Failures: 1, Errors: 0, Skipped: 0,

> Time elapsed: 2.433 s <<< FAILURE! - in

> org.apache.flink.formats.avro.AvroStreamingFileSinkITCase

> Feb 12 01:00:00 [ERROR]

> testWriteAvroGeneric(org.apache.flink.formats.avro.AvroStreamingFileSinkITCase)

> Time elapsed: 0.433 s <<< FAILURE!

> Feb 12 01:00:00 java.lang.AssertionError: expected:<1> but was:<2>

> Feb 12 01:00:00 at org.junit.Assert.fail(Assert.java:88)

> Feb 12 01:00:00 at org.junit.Assert.failNotEquals(Assert.java:834)

> Feb 12 01:00:00 at org.junit.Assert.assertEquals(Assert.java:645)

> Feb 12 01:00:00 at org.junit.Assert.assertEquals(Assert.java:631)

> Feb 12 01:00:00 at

> org.apache.flink.formats.avro.AvroStreamingFileSinkITCase.validateResults(AvroStreamingFileSinkITCase.java:139)

> Feb 12 01:00:00 at

> org.apache.flink.formats.avro.AvroStreamingFileSinkITCase.testWriteAvroGeneric(AvroStreamingFileSinkITCase.java:109)

> Feb 12 01:00:00 at sun.reflect.NativeMethodAccessorImpl.invoke0(Native

> Method)

> Feb 12 01:00:00 at

> sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

> Feb 12 01:00:00 at

> sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

> Feb 12 01:00:00 at java.lang.reflect.Method.invoke(Method.java:498)

> Feb 12 01:00:00 at

> org.junit.runners.model.FrameworkMethod$1.runReflectiveCall(FrameworkMethod.java:50)

> Feb 12 01:00:00 at

> org.junit.internal.runners.model.ReflectiveCallable.run(ReflectiveCallable.java:12)

> Feb 12 01:00:00 at

> org.junit.runners.model.FrameworkMethod.invokeExplosively(FrameworkMethod.java:47)

> Feb 12 01:00:00 at

> org.junit.internal.runners.statements.InvokeMethod.evaluate(InvokeMethod.java:17)

> Feb 12 01:00:00 at

> org.junit.internal.runners.statements.RunAfters.evaluate(RunAfters.java:27)

> Feb 12 01:00:00 at

> org.junit.internal.runners.statements.FailOnTimeout$CallableStatement.call(FailOnTimeout.java:298)

> Feb 12 01:00:00 at

> org.junit.internal.runners.statements.FailOnTimeout$CallableStatement.call(FailOnTimeout.java:292)

> Feb 12 01:00:00 at

> java.util.concurrent.FutureTask.run(FutureTask.java:266)

> Feb 12 01:00:00 at java.lang.Thread.run(Thread.java:748)

> Feb 12 01:00:00

> {code}

> https://dev.azure.com/apache-flink/apache-flink/_build/results?buildId=31304=logs=c91190b6-40ae-57b2-5999-31b869b0a7c1=43529380-51b4-5e90-5af4-2dccec0ef402=13026

--

This message was sent by Atlassian Jira

(v8.20.1#820001)

[jira] [Updated] (FLINK-26047) Support usrlib in HDFS for YARN application mode

[

https://issues.apache.org/jira/browse/FLINK-26047?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Flink Jira Bot updated FLINK-26047:

---

Labels: pull-request-available stale-assigned (was: pull-request-available)

I am the [Flink Jira Bot|https://github.com/apache/flink-jira-bot/] and I help

the community manage its development. I see this issue is assigned but has not

received an update in 30 days, so it has been labeled "stale-assigned".

If you are still working on the issue, please remove the label and add a

comment updating the community on your progress. If this issue is waiting on

feedback, please consider this a reminder to the committer/reviewer. Flink is a

very active project, and so we appreciate your patience.

If you are no longer working on the issue, please unassign yourself so someone

else may work on it.

> Support usrlib in HDFS for YARN application mode

>

>

> Key: FLINK-26047

> URL: https://issues.apache.org/jira/browse/FLINK-26047

> Project: Flink

> Issue Type: Improvement

> Components: Deployment / YARN

>Reporter: Biao Geng

>Assignee: Biao Geng

>Priority: Major

> Labels: pull-request-available, stale-assigned

>

> In YARN Application mode, we currently support using user jar and lib jar

> from HDFS. For example, we can run commands like:

> {quote}./bin/flink run-application -t yarn-application \

> -Dyarn.provided.lib.dirs="hdfs://myhdfs/my-remote-flink-dist-dir" \

> hdfs://myhdfs/jars/my-application.jar{quote}

> For {{usrlib}}, we currently only support local directory. I propose to add

> HDFS support for {{usrlib}} to work with CLASSPATH_INCLUDE_USER_JAR better.

> It can also benefit cases like using notebook to submit flink job.

--

This message was sent by Atlassian Jira

(v8.20.1#820001)

[jira] [Updated] (FLINK-21643) JDBC sink should be able to execute statements on multiple tables

[ https://issues.apache.org/jira/browse/FLINK-21643?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Flink Jira Bot updated FLINK-21643: --- Labels: auto-deprioritized-major auto-deprioritized-minor pull-request-available (was: auto-deprioritized-major pull-request-available stale-minor) Priority: Not a Priority (was: Minor) This issue was labeled "stale-minor" 7 days ago and has not received any updates so it is being deprioritized. If this ticket is actually Minor, please raise the priority and ask a committer to assign you the issue or revive the public discussion. > JDBC sink should be able to execute statements on multiple tables > - > > Key: FLINK-21643 > URL: https://issues.apache.org/jira/browse/FLINK-21643 > Project: Flink > Issue Type: New Feature > Components: Connectors / JDBC >Reporter: Maciej Obuchowski >Priority: Not a Priority > Labels: auto-deprioritized-major, auto-deprioritized-minor, > pull-request-available > > Currently datastream JDBC sink supports outputting data only to one table - > by having to provide SQL template, from which SimpleBatchStatementExecutor > creates PreparedStatement. Creating multiple sinks, each of which writes data > to one table is impractical for moderate to large number of tables - > relational databases don't usually tolerate large number of connections. > I propose adding DynamicBatchStatementExecutor, which will additionally > require > 1) provided mechanism to create SQL statements based on given object > 2) cache for prepared statements > 3) mechanism for determining which statement should be used for given object -- This message was sent by Atlassian Jira (v8.20.1#820001)

[jira] [Updated] (FLINK-25529) java.lang.ClassNotFoundException: org.apache.orc.PhysicalWriter when write bulkly into hive-2.1.1 orc table

[

https://issues.apache.org/jira/browse/FLINK-25529?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Flink Jira Bot updated FLINK-25529:

---

Labels: pull-request-available stale-major (was: pull-request-available)

I am the [Flink Jira Bot|https://github.com/apache/flink-jira-bot/] and I help

the community manage its development. I see this issues has been marked as

Major but is unassigned and neither itself nor its Sub-Tasks have been updated

for 60 days. I have gone ahead and added a "stale-major" to the issue". If this

ticket is a Major, please either assign yourself or give an update. Afterwards,

please remove the label or in 7 days the issue will be deprioritized.

> java.lang.ClassNotFoundException: org.apache.orc.PhysicalWriter when write

> bulkly into hive-2.1.1 orc table

> ---

>

> Key: FLINK-25529

> URL: https://issues.apache.org/jira/browse/FLINK-25529

> Project: Flink

> Issue Type: Bug

> Components: Connectors / Hive

> Environment: hive 2.1.1

> flink 1.12.4

>Reporter: Yuan Zhu

>Priority: Major

> Labels: pull-request-available, stale-major

> Attachments: lib.jpg

>

>

> I tried to write data bulkly into hive-2.1.1 with orc format, and encountered

> java.lang.ClassNotFoundException: org.apache.orc.PhysicalWriter

>

> Using bulk writer by setting table.exec.hive.fallback-mapred-writer = false;

>

> {code:java}

> SET 'table.sql-dialect'='hive';

> create table orders(

> order_id int,

> order_date timestamp,

> customer_name string,

> price decimal(10,3),

> product_id int,

> order_status boolean

> )partitioned by (dt string)

> stored as orc;

>

> SET 'table.sql-dialect'='default';

> create table datagen_source (

> order_id int,

> order_date timestamp(9),

> customer_name varchar,

> price decimal(10,3),

> product_id int,

> order_status boolean

> )with('connector' = 'datagen');

> create catalog myhive with ('type' = 'hive', 'hive-conf-dir' = '/mnt/conf');

> set table.exec.hive.fallback-mapred-writer = false;

> insert into myhive.`default`.orders

> /*+ OPTIONS(

> 'sink.partition-commit.trigger'='process-time',

> 'sink.partition-commit.policy.kind'='metastore,success-file',

> 'sink.rolling-policy.file-size'='128MB',

> 'sink.rolling-policy.rollover-interval'='10s',

> 'sink.rolling-policy.check-interval'='10s',

> 'auto-compaction'='true',

> 'compaction.file-size'='1MB' ) */

> select * , date_format(now(),'-MM-dd') as dt from datagen_source; {code}

> [ERROR] Could not execute SQL statement. Reason:

> java.lang.ClassNotFoundException: org.apache.orc.PhysicalWriter

>

> My jars in lib dir listed in attachment.

> In HiveTableSink#createStreamSink(line:270), createBulkWriterFactory if

> table.exec.hive.fallback-mapred-writer is false.

> If table is orc, HiveShimV200#createOrcBulkWriterFactory will be invoked.

> OrcBulkWriterFactory depends on org.apache.orc.PhysicalWriter in orc-core,

> but flink-connector-hive excludes orc-core for conflicting with hive-exec.

>

--

This message was sent by Atlassian Jira

(v8.20.1#820001)

[jira] [Updated] (FLINK-20979) Wrong description of 'sink.bulk-flush.max-actions'

[

https://issues.apache.org/jira/browse/FLINK-20979?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Flink Jira Bot updated FLINK-20979:

---

Labels: auto-deprioritized-major auto-deprioritized-minor

pull-request-available starter (was: auto-deprioritized-major

pull-request-available stale-minor starter)

Priority: Not a Priority (was: Minor)

This issue was labeled "stale-minor" 7 days ago and has not received any

updates so it is being deprioritized. If this ticket is actually Minor, please

raise the priority and ask a committer to assign you the issue or revive the

public discussion.

> Wrong description of 'sink.bulk-flush.max-actions'

> --

>

> Key: FLINK-20979

> URL: https://issues.apache.org/jira/browse/FLINK-20979

> Project: Flink

> Issue Type: Bug

> Components: Connectors / ElasticSearch, Documentation, Table SQL /

> Ecosystem

>Affects Versions: 1.12.0

>Reporter: Dawid Wysakowicz

>Priority: Not a Priority

> Labels: auto-deprioritized-major, auto-deprioritized-minor,

> pull-request-available, starter

>

> The documentation claims the option can be disabled with '0', but it can

> actually be disable with '-1' whereas '0' is an illegal value.

> {{Maximum number of buffered actions per bulk request. Can be set to '0' to

> disable it. }}

--

This message was sent by Atlassian Jira

(v8.20.1#820001)

[jira] [Updated] (FLINK-26109) Avro Confluent Schema Registry nightly end-to-end test failed on azure

[

https://issues.apache.org/jira/browse/FLINK-26109?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Flink Jira Bot updated FLINK-26109:

---

Labels: stale-major test-stability (was: test-stability)

I am the [Flink Jira Bot|https://github.com/apache/flink-jira-bot/] and I help

the community manage its development. I see this issues has been marked as

Major but is unassigned and neither itself nor its Sub-Tasks have been updated

for 60 days. I have gone ahead and added a "stale-major" to the issue". If this

ticket is a Major, please either assign yourself or give an update. Afterwards,

please remove the label or in 7 days the issue will be deprioritized.

> Avro Confluent Schema Registry nightly end-to-end test failed on azure

> --

>

> Key: FLINK-26109

> URL: https://issues.apache.org/jira/browse/FLINK-26109

> Project: Flink

> Issue Type: Bug

> Components: Formats (JSON, Avro, Parquet, ORC, SequenceFile)

>Affects Versions: 1.15.0

>Reporter: Yun Gao

>Priority: Major

> Labels: stale-major, test-stability

>

> {code:java}

> Feb 12 07:55:02 Stopping job timeout watchdog (with pid=130662)

> Feb 12 07:55:03 Checking for errors...

> Feb 12 07:55:03 Found error in log files; printing first 500 lines; see full

> logs for details:

> ...

> az209-567.vil1xujjdrkuxjp2ihtao45w0e.ax.internal.cloudapp.net

> (dataPort=41161).

> org.apache.flink.util.FlinkException: The TaskExecutor is shutting down.

> at

> org.apache.flink.runtime.taskexecutor.TaskExecutor.onStop(TaskExecutor.java:456)

> ~[flink-dist-1.15-SNAPSHOT.jar:1.15-SNAPSHOT]

> at

> org.apache.flink.runtime.rpc.RpcEndpoint.internalCallOnStop(RpcEndpoint.java:214)

> ~[flink-dist-1.15-SNAPSHOT.jar:1.15-SNAPSHOT]

> at

> org.apache.flink.runtime.rpc.akka.AkkaRpcActor$StartedState.lambda$terminate$0(AkkaRpcActor.java:568)

> ~[flink-rpc-akka_7dcae025-2017-4b0f-828d-f89a7ceb9bf7.jar:1.15-SNAPSHOT]

> at

> org.apache.flink.runtime.concurrent.akka.ClassLoadingUtils.runWithContextClassLoader(ClassLoadingUtils.java:83)

> ~[flink-rpc-akka_7dcae025-2017-4b0f-828d-f89a7ceb9bf7.jar:1.15-SNAPSHOT]

> at

> org.apache.flink.runtime.rpc.akka.AkkaRpcActor$StartedState.terminate(AkkaRpcActor.java:567)

> ~[flink-rpc-akka_7dcae025-2017-4b0f-828d-f89a7ceb9bf7.jar:1.15-SNAPSHOT]

> at

> org.apache.flink.runtime.rpc.akka.AkkaRpcActor.handleControlMessage(AkkaRpcActor.java:191)

> ~[flink-rpc-akka_7dcae025-2017-4b0f-828d-f89a7ceb9bf7.jar:1.15-SNAPSHOT]

> at akka.japi.pf.UnitCaseStatement.apply(CaseStatements.scala:24)

> ~[flink-rpc-akka_7dcae025-2017-4b0f-828d-f89a7ceb9bf7.jar:1.15-SNAPSHOT]

> at akka.japi.pf.UnitCaseStatement.apply(CaseStatements.scala:20)

> ~[flink-rpc-akka_7dcae025-2017-4b0f-828d-f89a7ceb9bf7.jar:1.15-SNAPSHOT]

> at scala.PartialFunction.applyOrElse(PartialFunction.scala:123)

> ~[flink-rpc-akka_7dcae025-2017-4b0f-828d-f89a7ceb9bf7.jar:1.15-SNAPSHOT]

> at scala.PartialFunction.applyOrElse$(PartialFunction.scala:122)

> ~[flink-rpc-akka_7dcae025-2017-4b0f-828d-f89a7ceb9bf7.jar:1.15-SNAPSHOT]

> at akka.japi.pf.UnitCaseStatement.applyOrElse(CaseStatements.scala:20)

> ~[flink-rpc-akka_7dcae025-2017-4b0f-828d-f89a7ceb9bf7.jar:1.15-SNAPSHOT]

> at scala.PartialFunction$OrElse.applyOrElse(PartialFunction.scala:171)

> [flink-rpc-akka_7dcae025-2017-4b0f-828d-f89a7ceb9bf7.jar:1.15-SNAPSHOT]

> at scala.PartialFunction$OrElse.applyOrElse(PartialFunction.scala:172)

> [flink-rpc-akka_7dcae025-2017-4b0f-828d-f89a7ceb9bf7.jar:1.15-SNAPSHOT]

> at akka.actor.Actor.aroundReceive(Actor.scala:537)

> [flink-rpc-akka_7dcae025-2017-4b0f-828d-f89a7ceb9bf7.jar:1.15-SNAPSHOT]

> at akka.actor.Actor.aroundReceive$(Actor.scala:535)

> [flink-rpc-akka_7dcae025-2017-4b0f-828d-f89a7ceb9bf7.jar:1.15-SNAPSHOT]

> at akka.actor.AbstractActor.aroundReceive(AbstractActor.scala:220)

> [flink-rpc-akka_7dcae025-2017-4b0f-828d-f89a7ceb9bf7.jar:1.15-SNAPSHOT]

> at akka.actor.ActorCell.receiveMessage(ActorCell.scala:580)

> [flink-rpc-akka_7dcae025-2017-4b0f-828d-f89a7ceb9bf7.jar:1.15-SNAPSHOT]

> at akka.actor.ActorCell.invoke(ActorCell.scala:548)

> [flink-rpc-akka_7dcae025-2017-4b0f-828d-f89a7ceb9bf7.jar:1.15-SNAPSHOT]

> at akka.dispatch.Mailbox.processMailbox(Mailbox.scala:270)

> [flink-rpc-akka_7dcae025-2017-4b0f-828d-f89a7ceb9bf7.jar:1.15-SNAPSHOT]

> at akka.dispatch.Mailbox.run(Mailbox.scala:231)

> [flink-rpc-akka_7dcae025-2017-4b0f-828d-f89a7ceb9bf7.jar:1.15-SNAPSHOT]

> at akka.dispatch.Mailbox.exec(Mailbox.scala:243)

> [flink-rpc-akka_7dcae025-2017-4b0f-828d-f89a7ceb9bf7.jar:1.15-SNAPSHOT]

> at java.util.concurrent.ForkJoinTask.doExec(ForkJoinTask.java:289)

> [?:1.8.0_312]

> at

> java.util.concurrent.ForkJoinPool$WorkQueue.runTask(ForkJoinPool.java:1056)

>

[jira] [Updated] (FLINK-21440) Translate Real Time Reporting with the Table API doc and correct a spelling mistake

[ https://issues.apache.org/jira/browse/FLINK-21440?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Flink Jira Bot updated FLINK-21440: --- Labels: auto-deprioritized-major auto-deprioritized-minor pull-request-available (was: auto-deprioritized-major pull-request-available stale-minor) Priority: Not a Priority (was: Minor) This issue was labeled "stale-minor" 7 days ago and has not received any updates so it is being deprioritized. If this ticket is actually Minor, please raise the priority and ask a committer to assign you the issue or revive the public discussion. > Translate Real Time Reporting with the Table API doc and correct a spelling > mistake > --- > > Key: FLINK-21440 > URL: https://issues.apache.org/jira/browse/FLINK-21440 > Project: Flink > Issue Type: Improvement > Components: Documentation, Table SQL / Ecosystem >Reporter: GuotaoLi >Priority: Not a Priority > Labels: auto-deprioritized-major, auto-deprioritized-minor, > pull-request-available > > * Translate Real Time Reporting with the Table API doc to Chinese > * Correct Real Time Reporting with the Table API doc allong with spelling > mistake to along with -- This message was sent by Atlassian Jira (v8.20.1#820001)

[jira] [Updated] (FLINK-21444) Lookup joins should deal with intermediate table scans correctly

[

https://issues.apache.org/jira/browse/FLINK-21444?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Flink Jira Bot updated FLINK-21444:

---

Labels: auto-deprioritized-major auto-deprioritized-minor auto-unassigned

(was: auto-deprioritized-major auto-unassigned stale-minor)

Priority: Not a Priority (was: Minor)

This issue was labeled "stale-minor" 7 days ago and has not received any

updates so it is being deprioritized. If this ticket is actually Minor, please

raise the priority and ask a committer to assign you the issue or revive the

public discussion.

> Lookup joins should deal with intermediate table scans correctly

>

>

> Key: FLINK-21444

> URL: https://issues.apache.org/jira/browse/FLINK-21444

> Project: Flink

> Issue Type: Bug

> Components: Table SQL / Planner

>Affects Versions: 1.12.0, 1.13.0

>Reporter: Caizhi Weng

>Priority: Not a Priority

> Labels: auto-deprioritized-major, auto-deprioritized-minor,

> auto-unassigned

>

> Add the following test case to

> {{org.apache.flink.table.planner.runtime.stream.sql.LookupJoinITCase}}

> {code:scala}

> @Test

> def myTest(): Unit = {

>

> tEnv.getConfig.getConfiguration.setBoolean(OptimizerConfigOptions.TABLE_OPTIMIZER_REUSE_SOURCE_ENABLED,

> true)

>

> tEnv.getConfig.getConfiguration.setBoolean(RelNodeBlockPlanBuilder.TABLE_OPTIMIZER_REUSE_OPTIMIZE_BLOCK_WITH_DIGEST_ENABLED,

> true)

> val ddl1 =

> """

> |CREATE TABLE sink1 (

> | `id` BIGINT

> |) WITH (

> | 'connector' = 'blackhole'

> |)

> |""".stripMargin

> tEnv.executeSql(ddl1)

> val ddl2 =

> """

> |CREATE TABLE sink2 (

> | `id` BIGINT

> |) WITH (

> | 'connector' = 'blackhole'

> |)

> |""".stripMargin

> tEnv.executeSql(ddl2)

> val sql1 = "INSERT INTO sink1 SELECT T.id FROM src AS T JOIN user_table for

> system_time as of T.proctime AS D ON T.id = D.id"

> val sql2 = "INSERT INTO sink2 SELECT T.id FROM src AS T JOIN user_table for

> system_time as of T.proctime AS D ON T.id + 1 = D.id"

> val stmtSet = tEnv.createStatementSet()

> stmtSet.addInsertSql(sql1)

> stmtSet.addInsertSql(sql2)

> stmtSet.execute().await()

> }

> {code}

> The following exception will occur

> {code}

> org.apache.flink.table.api.ValidationException: Temporal Table Join requires

> primary key in versioned table, but no primary key can be found. The physical

> plan is:

> FlinkLogicalJoin(condition=[AND(=($0, $2),

> __INITIAL_TEMPORAL_JOIN_CONDITION($1, __TEMPORAL_JOIN_LEFT_KEY($0),

> __TEMPORAL_JOIN_RIGHT_KEY($2)))], joinType=[inner])

> FlinkLogicalCalc(select=[id, proctime])

> FlinkLogicalIntermediateTableScan(table=[[IntermediateRelTable_0]],

> fields=[id, len, content, proctime])

> FlinkLogicalSnapshot(period=[$cor0.proctime])

> FlinkLogicalCalc(select=[id])

> FlinkLogicalIntermediateTableScan(table=[[IntermediateRelTable_1]],

> fields=[age, id, name])

> at

> org.apache.flink.table.planner.plan.rules.logical.TemporalJoinRewriteWithUniqueKeyRule.org$apache$flink$table$planner$plan$rules$logical$TemporalJoinRewriteWithUniqueKeyRule$$validateRightPrimaryKey(TemporalJoinRewriteWithUniqueKeyRule.scala:124)

> at

> org.apache.flink.table.planner.plan.rules.logical.TemporalJoinRewriteWithUniqueKeyRule$$anon$1.visitCall(TemporalJoinRewriteWithUniqueKeyRule.scala:88)

> at

> org.apache.flink.table.planner.plan.rules.logical.TemporalJoinRewriteWithUniqueKeyRule$$anon$1.visitCall(TemporalJoinRewriteWithUniqueKeyRule.scala:70)

> at org.apache.calcite.rex.RexCall.accept(RexCall.java:174)

> at org.apache.calcite.rex.RexShuttle.visitList(RexShuttle.java:158)

> at org.apache.calcite.rex.RexShuttle.visitCall(RexShuttle.java:110)

> at

> org.apache.flink.table.planner.plan.rules.logical.TemporalJoinRewriteWithUniqueKeyRule$$anon$1.visitCall(TemporalJoinRewriteWithUniqueKeyRule.scala:109)

> at

> org.apache.flink.table.planner.plan.rules.logical.TemporalJoinRewriteWithUniqueKeyRule$$anon$1.visitCall(TemporalJoinRewriteWithUniqueKeyRule.scala:70)

> at org.apache.calcite.rex.RexCall.accept(RexCall.java:174)

> at

> org.apache.flink.table.planner.plan.rules.logical.TemporalJoinRewriteWithUniqueKeyRule.onMatch(TemporalJoinRewriteWithUniqueKeyRule.scala:70)

> at

> org.apache.calcite.plan.AbstractRelOptPlanner.fireRule(AbstractRelOptPlanner.java:333)

> at org.apache.calcite.plan.hep.HepPlanner.applyRule(HepPlanner.java:542)

> at

> org.apache.calcite.plan.hep.HepPlanner.applyRules(HepPlanner.java:407)

> at

> org.apache.calcite.plan.hep.HepPlanner.executeInstruction(HepPlanner.java:243)

> at

> org.apache.calcite.plan.hep.HepInstruction$RuleInstance.execute(HepInstruction.java:127)

> at

>

[jira] [Updated] (FLINK-21686) Duplicate code in hive parser file should be abstracted into functions

[ https://issues.apache.org/jira/browse/FLINK-21686?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Flink Jira Bot updated FLINK-21686: --- Labels: auto-deprioritized-minor pull-request-available (was: pull-request-available stale-minor) Priority: Not a Priority (was: Minor) This issue was labeled "stale-minor" 7 days ago and has not received any updates so it is being deprioritized. If this ticket is actually Minor, please raise the priority and ask a committer to assign you the issue or revive the public discussion. > Duplicate code in hive parser file should be abstracted into functions > -- > > Key: FLINK-21686 > URL: https://issues.apache.org/jira/browse/FLINK-21686 > Project: Flink > Issue Type: Improvement > Components: Connectors / Hive >Reporter: humengyu >Priority: Not a Priority > Labels: auto-deprioritized-minor, pull-request-available > > It would be better to use functions rather than duplicate code in hive parser > file: > # option should be abstracted into a function; > #option should be abstracted into a function. -- This message was sent by Atlassian Jira (v8.20.1#820001)

[jira] [Commented] (FLINK-27255) Flink-avro does not support serialization and deserialization of avro schema longer than 65535 characters

[ https://issues.apache.org/jira/browse/FLINK-27255?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17522943#comment-17522943 ] Steven Zhen Wu commented on FLINK-27255: [~jinyius] this issue existed for a while now. not sth new. > Flink-avro does not support serialization and deserialization of avro schema > longer than 65535 characters > - > > Key: FLINK-27255 > URL: https://issues.apache.org/jira/browse/FLINK-27255 > Project: Flink > Issue Type: Bug > Components: Formats (JSON, Avro, Parquet, ORC, SequenceFile) >Affects Versions: 1.14.4 >Reporter: Haizhou Zhao >Assignee: Haizhou Zhao >Priority: Major > > The underlying serialization of avro schema uses string serialization method > of ObjectOutputStream.class, however, the default string serialization by > ObjectOutputStream.class does not support handling string of more than 66535 > characters (64kb). As a result, constructing flink operators that > input/output Avro Generic Record with huge schema is not possible. > > The purposed fix is two change the serialization and deserialization method > of these following classes so that huge string could also be handled. > > [GenericRecordAvroTypeInfo|https://github.com/apache/flink/blob/master/flink-formats/flink-avro/src/main/java/org/apache/flink/formats/avro/typeutils/GenericRecordAvroTypeInfo.java#L107] > [SerializableAvroSchema|https://github.com/apache/flink/blob/master/flink-formats/flink-avro/src/main/java/org/apache/flink/formats/avro/typeutils/SerializableAvroSchema.java#L55] > -- This message was sent by Atlassian Jira (v8.20.1#820001)

[GitHub] [flink-kubernetes-operator] gyfora commented on pull request #165: [FLINK-26140] Support rollback strategies

gyfora commented on PR #165: URL: https://github.com/apache/flink-kubernetes-operator/pull/165#issuecomment-1100367932 @wangyang0918 I think for the suspend to work, I need to fix 2 things basically: 1. If the user suspends a job, the spec should be automatically marked as `lastStableSpec` 2. If an upgrade after a suspend fails, we should roll back to the suspended state (simply stop the job) Will work on this over the weekend -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: issues-unsubscr...@flink.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [flink] metaswirl commented on a diff in pull request #17228: [FLINK-24236] Migrate tests to factory approach

metaswirl commented on code in PR #17228:

URL: https://github.com/apache/flink/pull/17228#discussion_r851495599

##

flink-runtime/src/test/java/org/apache/flink/runtime/metrics/ReporterSetupTest.java:

##

@@ -47,17 +50,30 @@

import static org.junit.Assert.assertTrue;

/** Tests for the {@link ReporterSetup}. */

-public class ReporterSetupTest extends TestLogger {

+@ExtendWith(TestLoggerExtension.class)

+class ReporterSetupTest {

+

+@RegisterExtension

+static final ContextClassLoaderExtension CONTEXT_CLASS_LOADER_EXTENSION =

+ContextClassLoaderExtension.builder()

+.withServiceEntry(

+MetricReporterFactory.class,

+TestReporter1.class.getName(),

+TestReporter2.class.getName(),

+TestReporter11.class.getName(),

+TestReporter12.class.getName(),

+TestReporter13.class.getName())

+.build();

Review Comment:

What is the benefit of using a custom class loader here?

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: issues-unsubscr...@flink.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

[GitHub] [flink] flinkbot commented on pull request #19493: [FLINK-27205][docs-zh] Translate "Concepts -> Glossary" page into Chinese.

flinkbot commented on PR #19493: URL: https://github.com/apache/flink/pull/19493#issuecomment-1100198258 ## CI report: * 42bb3147bb7ab02a51fdc0c0b9cca28c15289a91 UNKNOWN Bot commands The @flinkbot bot supports the following commands: - `@flinkbot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: issues-unsubscr...@flink.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

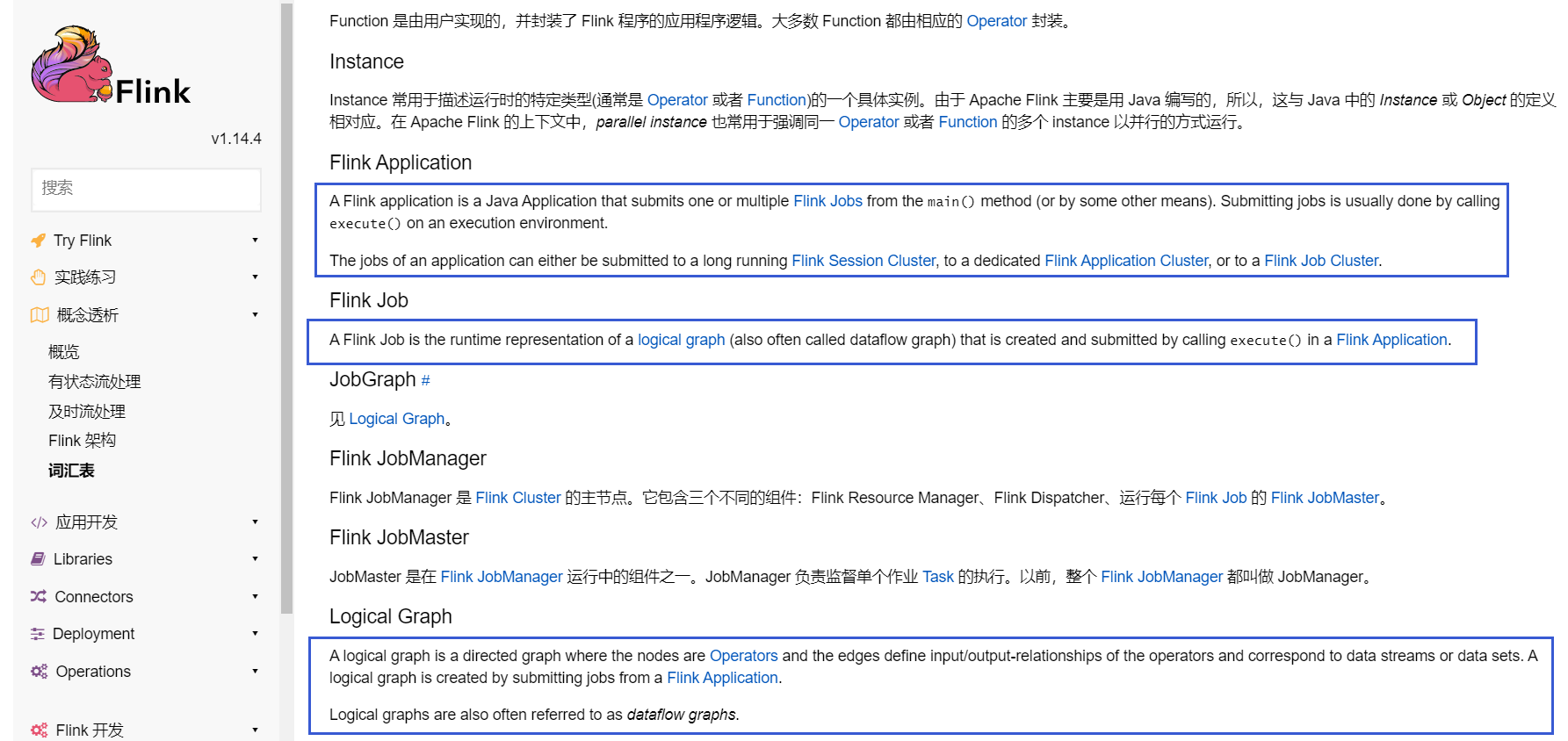

[GitHub] [flink] liuzhuang2017 opened a new pull request, #19493: [FLINK-27205][docs-zh] Translate "Concepts -> Glossary" page into Chinese.

liuzhuang2017 opened a new pull request, #19493: URL: https://github.com/apache/flink/pull/19493 ## What is the purpose of the change   - **As can be seen from the above figure, there are still some untranslated content in the Chinese document, so this part is translated.** ## Brief change log Translate "Concepts -> Glossary" page into Chinese. ## Verifying this change This change is a trivial rework / code cleanup without any test coverage. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: issues-unsubscr...@flink.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[jira] [Updated] (FLINK-27264) Add ITCase for concurrent batch overwrite and streaming insert

[ https://issues.apache.org/jira/browse/FLINK-27264?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] ASF GitHub Bot updated FLINK-27264: --- Labels: pull-request-available (was: ) > Add ITCase for concurrent batch overwrite and streaming insert > -- > > Key: FLINK-27264 > URL: https://issues.apache.org/jira/browse/FLINK-27264 > Project: Flink > Issue Type: Sub-task > Components: Table Store >Affects Versions: table-store-0.1.0 >Reporter: Jane Chan >Assignee: Jane Chan >Priority: Major > Labels: pull-request-available > Fix For: table-store-0.1.0 > > Attachments: image-2022-04-15-19-26-09-649.png > > > !image-2022-04-15-19-26-09-649.png|width=609,height=241! > Add it case for user story -- This message was sent by Atlassian Jira (v8.20.1#820001)

[GitHub] [flink-table-store] LadyForest opened a new pull request, #91: [FLINK-27264] Add IT case for concurrent batch overwrite and streaming insert into

LadyForest opened a new pull request, #91: URL: https://github.com/apache/flink-table-store/pull/91 Add it case to cover the user story -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: issues-unsubscr...@flink.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [flink-web] liuzhuang2017 commented on pull request #521: [hotfix][docs] Fix link tags typo.

liuzhuang2017 commented on PR #521: URL: https://github.com/apache/flink-web/pull/521#issuecomment-1100116349 @wuchong ,Hi,please help me review this pr when you are free time, thank you. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: issues-unsubscr...@flink.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [flink] flinkbot commented on pull request #19492: Update TimeWindow.java

flinkbot commented on PR #19492: URL: https://github.com/apache/flink/pull/19492#issuecomment-1100089900 ## CI report: * e5b4f082ff6f70ab1e93b3c6384e08424a71e97f UNKNOWN Bot commands The @flinkbot bot supports the following commands: - `@flinkbot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: issues-unsubscr...@flink.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[jira] [Comment Edited] (FLINK-26793) Flink Cassandra connector performance issue

[

https://issues.apache.org/jira/browse/FLINK-26793?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17522355#comment-17522355

]

Etienne Chauchot edited comment on FLINK-26793 at 4/15/22 12:58 PM:

[~bumblebee] I reproduced the behavior you observed: I ran [this streaming

infinite

pipeline|https://github.com/echauchot/flink-samples/blob/master/src/main/java/org/example/CassandraPojoSinkStreamingExample.java]

for 3 days on a local flink 1.14.4 cluster + cassandra 3.0 docker (I did not

have rights to instanciate a cassandra cluster on Azure). The pipeline has

checkpointing configured every 10 min with exactly once semantics and no

watermark defined. It was run at parallelism 16 which corresponds to the number

of cores on my laptop. I created a source that gives pojos every 100 ms. The

source is mono-threaded so at parallelism 1. See all the screenshots

I ran the pipeline for more than 72 hours and indeed after little less than

72h, I got an exception from Cassandra cluster see task manager log:

{code:java}

2022-04-13 16:38:15,227 ERROR

org.apache.flink.streaming.connectors.cassandra.CassandraPojoSink [] - Error

while sending value.

com.datastax.driver.core.exceptions.WriteTimeoutException: Cassandra timeout

during write query at consistency LOCAL_ONE (1 replica were required but only 0

acknowledged the write)

{code}

This exception means that Cassandra coordinator node (internal Cassandra)

waited too long for an internal replication (raplication to another node in the

same casssandra "datacenter") and did not ack the write.

This led to a failure of the write task and to a restoration of the job from

the last checkpoint see job manager log:

{code:java}

2022-04-13 16:38:20,847 INFO

org.apache.flink.runtime.executiongraph.ExecutionGraph [] - Job Cassandra

Pojo Sink Streaming example (dc7522bc1855f6f98038ac2b4eed4095) switched from

state RESTARTING to RUNNING.

2022-04-13 16:38:20,850 INFO

org.apache.flink.runtime.checkpoint.CheckpointCoordinator[] - Restoring job

dc7522bc1855f6f98038ac2b4eed4095 from Checkpoint 136 @ 1649858983772 for

dc7522bc1855f6f98038ac2b4eed4095 located at

file:/tmp/flink-checkpoints/dc7522bc1855f6f98038ac2b4eed4095/chk-136.

{code}

This restoration led to the restoration of the _CassandraPojoSink_ and to the

call of _CassandraPojoSink#open_ which reconnects to cassandra cluster and

re-creates the related _MappingManager_

So in short, this is what I supposed in my previous comments. Restoring from

checkpoints slows down your writes (job restart time + cassandra driver state

re-creation - reconnection, prepared statements recreation in the

MappingManager etc... -)

The problem is that the timeout comes from Cassandra itself not from Flink and

it is normal that Flink restores the job in such circumstances.

What you can do is to increase the Cassandra write timeout to adapt to your

workload in your Cassandra cluster so that such timeout errors do not happen.

For that you need to raise _write_request_timeout_in_ms_ conf parameter in

your _cassandra.yml_.

I do not recommend that you lower the replication factor in your Cassandra

cluster (I did that only for local tests on Flink) because it is mandatory that

you do not loose data in case of your Cassandra cluster failure. Waiting for a

single replica for write acknowledge is the minimum level for this guarantee in

Cassandra.

Best

Etienne

[^Capture d’écran de 2022-04-14 16-34-59.png]

[^Capture d’écran de 2022-04-14 16-35-30.png]

[^Capture d’écran de 2022-04-14 16-35-07.png]

[^jobmanager_log.txt]

[^taskmanager_127.0.1.1_33251-af56fa_log]

was (Author: echauchot):

[~bumblebee] I reproduced the behavior you observed: I ran [this streaming

infinite

pipeline|https://github.com/echauchot/flink-samples/blob/master/src/main/java/org/example/CassandraPojoSinkStreamingExample.java]

for 3 days on a local flink 1.14.4 cluster + cassandra 3.0 docker (I did not

have rights to instanciate a cassandra cluster on Azure). The pipeline has

checkpointing configured every 10 min with exactly once semantics and no

watermark defined. It was run at parallelism 16 which corresponds to the number

of cores on my laptop. I created a source that gives pojos every 100 ms. The

source is mono-threaded so at parallelism 1. See all the screenshots

I ran the pipeline for more than 72 hours and indeed after little less than

72h, I got an exception from Cassandra cluster see task manager log:

{code:java}

2022-04-13 16:38:15,227 ERROR

org.apache.flink.streaming.connectors.cassandra.CassandraPojoSink [] - Error

while sending value.

com.datastax.driver.core.exceptions.WriteTimeoutException: Cassandra timeout

during write query at consistency LOCAL_ONE (1 replica were required but only 0

acknowledged the write)

{code}

This exception means that Cassandra coordinator node (internal Cassandra)

waited

[jira] [Comment Edited] (FLINK-26824) Upgrade Flink's supported Cassandra versions to match with the Cassandra community supported versions

[ https://issues.apache.org/jira/browse/FLINK-26824?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17521203#comment-17521203 ] Etienne Chauchot edited comment on FLINK-26824 at 4/15/22 12:57 PM: and by the way, there was a complete refactoring of datastax cassandra driver and a relocation starting at 4.0 that impacts all our code base. But using latest 3.x driver works with both Cassandra 4.x and 3.x. So I suggest we keep the cassandra-driver 3.x versions was (Author: echauchot): and by the way, there was a complete refactoring of cassandra driver and a relocation starting at 4.0 that impacts all our code base. But using latest 3.x driver works with both Cassandra 4.x and 3.x. So I suggest we keep the cassandra-driver 3.x versions > Upgrade Flink's supported Cassandra versions to match with the Cassandra > community supported versions > - > > Key: FLINK-26824 > URL: https://issues.apache.org/jira/browse/FLINK-26824 > Project: Flink > Issue Type: Sub-task > Components: Connectors / Cassandra >Reporter: Martijn Visser >Assignee: Etienne Chauchot >Priority: Major > > Flink's Cassandra connector is currently only supporting > com.datastax.cassandra:cassandra-driver-core version 3.0.0. > The Cassandra community supports 3 versions. One GA (general availability, > the latest version), one stable and one older supported release per > https://cassandra.apache.org/_/download.html. > These are currently: > Cassandra 4.0 (GA) > Cassandra 3.11 (Stable) > Cassandra 3.0 (Older supported release). > We should support (and follow) the supported versions by the Cassandra > community -- This message was sent by Atlassian Jira (v8.20.1#820001)

[GitHub] [flink] huacaicai commented on pull request #19492: Update TimeWindow.java

huacaicai commented on PR #19492: URL: https://github.com/apache/flink/pull/19492#issuecomment-1100087484 Based on the rolling window is a special window with a step size equal to the window size, so I modified the parameter to the window step size, which can better express the meaning of the method parameters. Please pass -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: issues-unsubscr...@flink.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[jira] [Comment Edited] (FLINK-6755) Allow triggering Checkpoints through command line client

[

https://issues.apache.org/jira/browse/FLINK-6755?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17458438#comment-17458438

]

Piotr Nowojski edited comment on FLINK-6755 at 4/15/22 12:52 PM:

-

The motivation behind this feature request will be covered by FLINK-25276.

As mentioned above by Aljoscha, there might be still a value of exposing manual

checkpoint triggering REST API hook, so I'm keeping this ticket open. However

it doesn't look like such feature is well motivated. Implementation of this

should be quite straightforward since Flink internally already supports this

(FLINK-24280). It's just not exposed in anyway to the user.

edit: Although this idea might be still valid, I strongly think we should not

expose checkpoint directory when triggering checkpoints as proposed in the

description:

{noformat}

./bin/flink checkpoint [checkpointDirectory]

{noformat}

Since checkpoints are owned fully by Flink, CLI/REST API call to trigger

checkpoints should not expose anything like that. If anything, it should be

just a simple trigger with optionally parameters like whether the checkpoint

should be full or incremental.

The same remark applies to:

{noformat}

./bin/flink cancel -c [targetDirectory]

{nofrmat}

and I don't see a point of supporting cancelling/stopping job with checkpoint.

was (Author: pnowojski):

The motivation behind this feature request will be covered by FLINK-25276.

As mentioned above by Aljoscha, there might be still a value of exposing manual

checkpoint triggering REST API hook, so I'm keeping this ticket open. However

it doesn't look like such feature is well motivated. Implementation of this

should be quite straightforward since Flink internally already supports this

(FLINK-24280). It's just not exposed in anyway to the user.

edit: Although this idea might be still valid, I strongly think we should not

expose checkpoint directory when triggering checkpoints as proposed in the

description:

{noformat}

./bin/flink checkpoint [checkpointDirectory]

{noformat}

Since checkpoints are owned fully by Flink, CLI/REST API call to trigger

checkpoints should not expose anything like that. If anything, it should be

just a simple trigger with optionally parameters like whether the checkpoint

should be full or incremental.

> Allow triggering Checkpoints through command line client

>

>

> Key: FLINK-6755

> URL: https://issues.apache.org/jira/browse/FLINK-6755

> Project: Flink

> Issue Type: New Feature

> Components: Command Line Client, Runtime / Checkpointing

>Affects Versions: 1.3.0

>Reporter: Gyula Fora

>Priority: Not a Priority

> Labels: auto-deprioritized-major, auto-unassigned

>

> The command line client currently only allows triggering (and canceling with)

> Savepoints.

> While this is good if we want to fork or modify the pipelines in a

> non-checkpoint compatible way, now with incremental checkpoints this becomes

> wasteful for simple job restarts/pipeline updates.

> I suggest we add a new command:

> ./bin/flink checkpoint [checkpointDirectory]

> and a new flag -c for the cancel command to indicate we want to trigger a

> checkpoint:

> ./bin/flink cancel -c [targetDirectory]

> Otherwise this can work similar to the current savepoint taking logic, we

> could probably even piggyback on the current messages by adding boolean flag

> indicating whether it should be a savepoint or a checkpoint.

--

This message was sent by Atlassian Jira

(v8.20.1#820001)

[jira] [Created] (FLINK-27266) in hive_read_write.md, there is an extra backtick in the description

陈磊 created FLINK-27266: -- Summary: in hive_read_write.md, there is an extra backtick in the description Key: FLINK-27266 URL: https://issues.apache.org/jira/browse/FLINK-27266 Project: Flink Issue Type: New Feature Components: Documentation Reporter: 陈磊 Attachments: image-2022-04-15-20-52-40-819.png in hive_read_write.md, there is an extra backtick in the description !image-2022-04-15-20-52-40-819.png! -- This message was sent by Atlassian Jira (v8.20.1#820001)

[GitHub] [flink] huacaicai commented on pull request #19492: Update TimeWindow.java

huacaicai commented on PR #19492: URL: https://github.com/apache/flink/pull/19492#issuecomment-1100087060 The window size cannot accurately express the true meaning of the parameter, because the sliding window needs to pass in the step size, and the rolling window passes in the window size. Based on the rolling window is a special window with a step size equal to the window size, so I modified the parameter to the window step size, which can better express the meaning of the method parameters -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: issues-unsubscr...@flink.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [flink] huacaicai commented on pull request #19492: Update TimeWindow.java

huacaicai commented on PR #19492: URL: https://github.com/apache/flink/pull/19492#issuecomment-1100086913 The window size cannot accurately express the true meaning of the parameter, because the sliding window needs to pass in the step size, and the rolling window passes in the window size. Based on the rolling window is a special window with a step size equal to the window size, so I modified the parameter to the window step size, which can better express the meaning of the method parameters. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: issues-unsubscr...@flink.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[jira] [Comment Edited] (FLINK-6755) Allow triggering Checkpoints through command line client

[

https://issues.apache.org/jira/browse/FLINK-6755?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17458438#comment-17458438

]

Piotr Nowojski edited comment on FLINK-6755 at 4/15/22 12:51 PM:

-

The motivation behind this feature request will be covered by FLINK-25276.

As mentioned above by Aljoscha, there might be still a value of exposing manual

checkpoint triggering REST API hook, so I'm keeping this ticket open. However

it doesn't look like such feature is well motivated. Implementation of this

should be quite straightforward since Flink internally already supports this

(FLINK-24280). It's just not exposed in anyway to the user.

edit: Although this idea might be still valid, I strongly think we should not

expose checkpoint directory when triggering checkpoints as proposed in the

description:

{noformat}

./bin/flink checkpoint [checkpointDirectory]

{noformat}

Since checkpoints are owned fully by Flink, CLI/REST API call to trigger

checkpoints should not expose anything like that. If anything, it should be

just a simple trigger with optionally parameters like whether the checkpoint

should be full or incremental.

was (Author: pnowojski):

The motivation behind this feature request will be covered by FLINK-25276.

As mentioned above by Aljoscha, there might be still a value of exposing manual

checkpoint triggering REST API hook, so I'm keeping this ticket open. However

it doesn't look like such feature is well motivated. Implementation of this

should be quite straightforward since Flink internally already supports this

(FLINK-24280). It's just not exposed in anyway to the user.

> Allow triggering Checkpoints through command line client

>

>

> Key: FLINK-6755

> URL: https://issues.apache.org/jira/browse/FLINK-6755

> Project: Flink

> Issue Type: New Feature

> Components: Command Line Client, Runtime / Checkpointing

>Affects Versions: 1.3.0

>Reporter: Gyula Fora

>Priority: Not a Priority

> Labels: auto-deprioritized-major, auto-unassigned

>

> The command line client currently only allows triggering (and canceling with)

> Savepoints.

> While this is good if we want to fork or modify the pipelines in a

> non-checkpoint compatible way, now with incremental checkpoints this becomes

> wasteful for simple job restarts/pipeline updates.

> I suggest we add a new command:

> ./bin/flink checkpoint [checkpointDirectory]

> and a new flag -c for the cancel command to indicate we want to trigger a

> checkpoint:

> ./bin/flink cancel -c [targetDirectory]

> Otherwise this can work similar to the current savepoint taking logic, we

> could probably even piggyback on the current messages by adding boolean flag

> indicating whether it should be a savepoint or a checkpoint.

--

This message was sent by Atlassian Jira

(v8.20.1#820001)

[GitHub] [flink] huacaicai opened a new pull request, #19492: Update TimeWindow.java

huacaicai opened a new pull request, #19492: URL: https://github.com/apache/flink/pull/19492 The window size cannot accurately express the true meaning of the parameter, because the sliding window needs to pass in the step size, and the rolling window passes in the window size. Based on the rolling window is a special window with a step size equal to the window size, so I modified the parameter to the window step size, which can better express the meaning of the method parameters. ## What is the purpose of the change *(For example: This pull request makes task deployment go through the blob server, rather than through RPC. That way we avoid re-transferring them on each deployment (during recovery).)* ## Brief change log *(for example:)* - *The TaskInfo is stored in the blob store on job creation time as a persistent artifact* - *Deployments RPC transmits only the blob storage reference* - *TaskManagers retrieve the TaskInfo from the blob cache* ## Verifying this change Please make sure both new and modified tests in this PR follows the conventions defined in our code quality guide: https://flink.apache.org/contributing/code-style-and-quality-common.html#testing *(Please pick either of the following options)* This change is a trivial rework / code cleanup without any test coverage. *(or)* This change is already covered by existing tests, such as *(please describe tests)*. *(or)* This change added tests and can be verified as follows: *(example:)* - *Added integration tests for end-to-end deployment with large payloads (100MB)* - *Extended integration test for recovery after master (JobManager) failure* - *Added test that validates that TaskInfo is transferred only once across recoveries* - *Manually verified the change by running a 4 node cluster with 2 JobManagers and 4 TaskManagers, a stateful streaming program, and killing one JobManager and two TaskManagers during the execution, verifying that recovery happens correctly.* ## Does this pull request potentially affect one of the following parts: - Dependencies (does it add or upgrade a dependency): (yes / no) - The public API, i.e., is any changed class annotated with `@Public(Evolving)`: (yes / no) - The serializers: (yes / no / don't know) - The runtime per-record code paths (performance sensitive): (yes / no / don't know) - Anything that affects deployment or recovery: JobManager (and its components), Checkpointing, Kubernetes/Yarn, ZooKeeper: (yes / no / don't know) - The S3 file system connector: (yes / no / don't know) ## Documentation - Does this pull request introduce a new feature? (yes / no) - If yes, how is the feature documented? (not applicable / docs / JavaDocs / not documented) -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: issues-unsubscr...@flink.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[jira] [Comment Edited] (FLINK-27101) Periodically break the chain of incremental checkpoint