[jira] [Updated] (KAFKA-7706) Spotbugs task fails with Gradle 5.0

[ https://issues.apache.org/jira/browse/KAFKA-7706?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

FuQiao Wang updated KAFKA-7706:

---

Description:

When I build Kafka with Gradle 5.0, the Spotbugs task fails.

I'm running "gradle build --stacktrace".

An interesting part of the stacktrace is:

{quote}

{code:java}
Caused by: java.lang.NoClassDefFoundError: org/gradle/api/internal/ClosureBackedAction
	at com.github.spotbugs.SpotBugsTask.reports(SpotBugsTask.java:136)
	at com.github.spotbugs.SpotBugsTask.reports(SpotBugsTask.java:55)
	at org.gradle.api.reporting.Reporting$reports.call(Unknown Source)
	at build_9sk7crqolfjf8m0yenkwy63v1$_run_closure1.doCall(/Users/mchalupa/projects/others/spotbugsFailExample/build.gradle:18)
	at org.gradle.util.ClosureBackedAction.execute(ClosureBackedAction.java:70)
	at org.gradle.util.ConfigureUtil.configureTarget(ConfigureUtil.java:154)
	at org.gradle.util.ConfigureUtil.configureSelf(ConfigureUtil.java:130)
	at org.gradle.api.internal.AbstractTask.configure(AbstractTask.java:600)
	at org.gradle.api.internal.AbstractTask.configure(AbstractTask.java:92)
	at org.gradle.util.ConfigureUtil.configure(ConfigureUtil.java:103)
	at org.gradle.util.ConfigureUtil$WrappedConfigureAction.execute(ConfigureUtil.java:166)
	at org.gradle.api.internal.DefaultDomainObjectCollection.all(DefaultDomainObjectCollection.java:161)
	at org.gradle.api.internal.DefaultDomainObjectCollection.all(DefaultDomainObjectCollection.java:190)
	at org.gradle.api.internal.tasks.DefaultRealizableTaskCollection.all(DefaultRealizableTaskCollection.java:229)
	at org.gradle.api.internal.DefaultDomainObjectCollection.withType(DefaultDomainObjectCollection.java:201)
	at org.gradle.api.DomainObjectCollection$withType.call(Unknown Source)
	at build_9sk7crqolfjf8m0yenkwy63v1.run(/Users/mchalupa/projects/others/spotbugsFailExample/build.gradle:17)
	at org.gradle.groovy.scripts.internal.DefaultScriptRunnerFactory$ScriptRunnerImpl.run(DefaultScriptRunnerFactory.java:90)
	... 102 more
{code}

{quote}
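In the trace, Gradle's own frames use org.gradle.util.ClosureBackedAction. The Spotbugs plugin, however, fails to load org.gradle.api.internal.ClosureBackedAction. This suggests the plugin was compiled against an internal Gradle class that is no longer shipped in Gradle 5.0. A NoClassDefFoundError like this typically appears only when the JVM first tries to resolve the missing class at runtime.

The sketch below is a hypothetical diagnostic and is not part of Gradle or the Spotbugs plugin; ClassPresenceProbe is a made-up name. Run with the relevant Gradle jars on the classpath, it would report which of the two classes can actually be loaded:

{code:java}
// Hypothetical diagnostic helper; ClassPresenceProbe is not part of Gradle or the Spotbugs plugin.
class ClassPresenceProbe {

    // Returns true if the named class can be located by the loader.
    // initialize=false means static initializers are not run.
    static boolean isPresent(String className, ClassLoader loader) {
        try {
            Class.forName(className, false, loader);
            return true;
        } catch (ClassNotFoundException | LinkageError e) {
            // ClassNotFoundException: the class is absent.
            // LinkageError: the class was found but could not be linked.
            return false;
        }
    }

    public static void main(String[] args) {
        ClassLoader loader = ClassPresenceProbe.class.getClassLoader();
        String[] candidates = {
            "org.gradle.api.internal.ClosureBackedAction", // referenced by SpotBugsTask.reports in the trace
            "org.gradle.util.ClosureBackedAction"          // used by Gradle's own frames in the trace
        };
        for (String name : candidates) {
            System.out.println(name + " present: " + isPresent(name, loader));
        }
    }
}
{code}

Going by the trace, one would expect the first class to be reported as absent under Gradle 5.0 and the second as present.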

was:

When I build Kafka with Gradle 5.0, the Spotbugs task fails.

I'm running "gradle build --stacktrace".

An interesting part of the stacktrace is:

{quote}{{Caused by: java.lang.NoClassDefFoundError: org/gradle/api/internal/ClosureBackedAction
	at com.github.spotbugs.SpotBugsTask.reports(SpotBugsTask.java:136)
	at com.github.spotbugs.SpotBugsTask.reports(SpotBugsTask.java:55)
	at org.gradle.api.reporting.Reporting$reports.call(Unknown Source)
	at build_9sk7crqolfjf8m0yenkwy63v1$_run_closure1.doCall(/Users/mchalupa/projects/others/spotbugsFailExample/build.gradle:18)
	at org.gradle.util.ClosureBackedAction.execute(ClosureBackedAction.java:70)
	at org.gradle.util.ConfigureUtil.configureTarget(ConfigureUtil.java:154)
	at org.gradle.util.ConfigureUtil.configureSelf(ConfigureUtil.java:130)
	at org.gradle.api.internal.AbstractTask.configure(AbstractTask.java:600)
	at org.gradle.api.internal.AbstractTask.configure(AbstractTask.java:92)
	at org.gradle.util.ConfigureUtil.configure(ConfigureUtil.java:103)
	at org.gradle.util.ConfigureUtil$WrappedConfigureAction.execute(ConfigureUtil.java:166)
	at org.gradle.api.internal.DefaultDomainObjectCollection.all(DefaultDomainObjectCollection.java:161)
	at org.gradle.api.internal.DefaultDomainObjectCollection.all(DefaultDomainObjectCollection.java:190)
	at org.gradle.api.internal.tasks.DefaultRealizableTaskCollection.all(DefaultRealizableTaskCollection.java:229)
	at org.gradle.api.internal.DefaultDomainObjectCollection.withType(DefaultDomainObjectCollection.java:201)
	at org.gradle.api.DomainObjectCollection$withType.call(Unknown Source)
	at build_9sk7crqolfjf8m0yenkwy63v1.run(/Users/mchalupa/projects/others/spotbugsFailExample/build.gradle:17)
	at org.gradle.groovy.scripts.internal.DefaultScriptRunnerFactory$ScriptRunnerImpl.run(DefaultScriptRunnerFactory.java:90)
	... 102 more}}

{quote}

> Spotbugs task fails with Gradle 5.0

> ---

>

> Key: KAFKA-7706

> URL: https://issues.apache.org/jira/browse/KAFKA-7706

> Project: Kafka

> Issue Type: Bug

> Components: build

> Environment: jdk1.8

> scala 2.12.7

> gradle 5.0

> Ubuntu/Windows

> Reporter: FuQiao Wang

> Priority: Major

> Labels: build


[jira] [Updated] (KAFKA-7706) Spotbugs task fails with Gradle 5.0

[ https://issues.apache.org/jira/browse/KAFKA-7706?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

FuQiao Wang updated KAFKA-7706:

---

Description:

When I build Kafka with Gradle 5.0, the Spotbugs task fails.

I'm running "gradle build --stacktrace".

An interesting part of the stacktrace is:

{quote}

{code:java}
Caused by: java.lang.NoClassDefFoundError: org/gradle/api/internal/ClosureBackedAction
	at com.github.spotbugs.SpotBugsTask.reports(SpotBugsTask.java:136)
	at com.github.spotbugs.SpotBugsTask.reports(SpotBugsTask.java:55)
	at org.gradle.api.reporting.Reporting$reports.call(Unknown Source)
	at build_9sk7crqolfjf8m0yenkwy63v1$_run_closure1.doCall(/Users/mchalupa/projects/others/spotbugsFailExample/build.gradle:18)
	at org.gradle.util.ClosureBackedAction.execute(ClosureBackedAction.java:70)
	at org.gradle.util.ConfigureUtil.configureTarget(ConfigureUtil.java:154)
	at org.gradle.util.ConfigureUtil.configureSelf(ConfigureUtil.java:130)
	at org.gradle.api.internal.AbstractTask.configure(AbstractTask.java:600)
	at org.gradle.api.internal.AbstractTask.configure(AbstractTask.java:92)
	at org.gradle.util.ConfigureUtil.configure(ConfigureUtil.java:103)
	at org.gradle.util.ConfigureUtil$WrappedConfigureAction.execute(ConfigureUtil.java:166)
	at org.gradle.api.internal.DefaultDomainObjectCollection.all(DefaultDomainObjectCollection.java:161)
	at org.gradle.api.internal.DefaultDomainObjectCollection.all(DefaultDomainObjectCollection.java:190)
	at org.gradle.api.internal.tasks.DefaultRealizableTaskCollection.all(DefaultRealizableTaskCollection.java:229)
	at org.gradle.api.internal.DefaultDomainObjectCollection.withType(DefaultDomainObjectCollection.java:201)
	at org.gradle.api.DomainObjectCollection$withType.call(Unknown Source)
	at build_9sk7crqolfjf8m0yenkwy63v1.run(/Users/mchalupa/projects/others/spotbugsFailExample/build.gradle:17)
	at org.gradle.groovy.scripts.internal.DefaultScriptRunnerFactory$ScriptRunnerImpl.run(DefaultScriptRunnerFactory.java:90)
	... 102 more
{code}

{quote}


[jira] [Updated] (KAFKA-7706) Spotbugs task fails with Gradle 5.0

[ https://issues.apache.org/jira/browse/KAFKA-7706?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

FuQiao Wang updated KAFKA-7706:

---

Description:

When I build Kafka with Gradle 5.0, the Spotbugs task fails.

I'm running "gradle build --stacktrace".

An interesting part of the stacktrace is:

{quote}

{code:java}
Caused by: java.lang.NoClassDefFoundError: org/gradle/api/internal/ClosureBackedAction
	at com.github.spotbugs.SpotBugsTask.reports(SpotBugsTask.java:136)
	at com.github.spotbugs.SpotBugsTask.reports(SpotBugsTask.java:55)
	at org.gradle.api.reporting.Reporting$reports.call(Unknown Source)
	at build_9sk7crqolfjf8m0yenkwy63v1$_run_closure1.doCall(/Users/mchalupa/projects/others/spotbugsFailExample/build.gradle:18)
	at org.gradle.util.ClosureBackedAction.execute(ClosureBackedAction.java:70)
	at org.gradle.util.ConfigureUtil.configureTarget(ConfigureUtil.java:154)
	at org.gradle.util.ConfigureUtil.configureSelf(ConfigureUtil.java:130)
	at org.gradle.api.internal.AbstractTask.configure(AbstractTask.java:600)
	at org.gradle.api.internal.AbstractTask.configure(AbstractTask.java:92)
	at org.gradle.util.ConfigureUtil.configure(ConfigureUtil.java:103)
	at org.gradle.util.ConfigureUtil$WrappedConfigureAction.execute(ConfigureUtil.java:166)
	at org.gradle.api.internal.DefaultDomainObjectCollection.all(DefaultDomainObjectCollection.java:161)
	at org.gradle.api.internal.DefaultDomainObjectCollection.all(DefaultDomainObjectCollection.java:190)
	at org.gradle.api.internal.tasks.DefaultRealizableTaskCollection.all(DefaultRealizableTaskCollection.java:229)
	at org.gradle.api.internal.DefaultDomainObjectCollection.withType(DefaultDomainObjectCollection.java:201)
	at org.gradle.api.DomainObjectCollection$withType.call(Unknown Source)
	at build_9sk7crqolfjf8m0yenkwy63v1.run(/Users/mchalupa/projects/others/spotbugsFailExample/build.gradle:17)
	at org.gradle.groovy.scripts.internal.DefaultScriptRunnerFactory$ScriptRunnerImpl.run(DefaultScriptRunnerFactory.java:90)
	... 102 more
{code}

{quote}


[jira] [Created] (KAFKA-7706) Spotbugs task fails with Gradle 5.0

FuQiao Wang created KAFKA-7706:

--

Summary: Spotbugs task fails with Gradle 5.0

Key: KAFKA-7706

URL: https://issues.apache.org/jira/browse/KAFKA-7706

Project: Kafka

Issue Type: Bug

Components: build

Environment: jdk1.8

scala 2.12.7

gradle 5.0

Ubuntu/Windows

Reporter: FuQiao Wang

When I build Kafka with Gradle 5.0, the Spotbugs task fails.

I'm running "gradle build --stacktrace".

An interesting part of the stacktrace is:

{quote}{{Caused by: java.lang.NoClassDefFoundError: org/gradle/api/internal/ClosureBackedAction
	at com.github.spotbugs.SpotBugsTask.reports(SpotBugsTask.java:136)
	at com.github.spotbugs.SpotBugsTask.reports(SpotBugsTask.java:55)
	at org.gradle.api.reporting.Reporting$reports.call(Unknown Source)
	at build_9sk7crqolfjf8m0yenkwy63v1$_run_closure1.doCall(/Users/mchalupa/projects/others/spotbugsFailExample/build.gradle:18)
	at org.gradle.util.ClosureBackedAction.execute(ClosureBackedAction.java:70)
	at org.gradle.util.ConfigureUtil.configureTarget(ConfigureUtil.java:154)
	at org.gradle.util.ConfigureUtil.configureSelf(ConfigureUtil.java:130)
	at org.gradle.api.internal.AbstractTask.configure(AbstractTask.java:600)
	at org.gradle.api.internal.AbstractTask.configure(AbstractTask.java:92)
	at org.gradle.util.ConfigureUtil.configure(ConfigureUtil.java:103)
	at org.gradle.util.ConfigureUtil$WrappedConfigureAction.execute(ConfigureUtil.java:166)
	at org.gradle.api.internal.DefaultDomainObjectCollection.all(DefaultDomainObjectCollection.java:161)
	at org.gradle.api.internal.DefaultDomainObjectCollection.all(DefaultDomainObjectCollection.java:190)
	at org.gradle.api.internal.tasks.DefaultRealizableTaskCollection.all(DefaultRealizableTaskCollection.java:229)
	at org.gradle.api.internal.DefaultDomainObjectCollection.withType(DefaultDomainObjectCollection.java:201)
	at org.gradle.api.DomainObjectCollection$withType.call(Unknown Source)
	at build_9sk7crqolfjf8m0yenkwy63v1.run(/Users/mchalupa/projects/others/spotbugsFailExample/build.gradle:17)
	at org.gradle.groovy.scripts.internal.DefaultScriptRunnerFactory$ScriptRunnerImpl.run(DefaultScriptRunnerFactory.java:90)
	... 102 more}}

{quote}

--

This message was sent by Atlassian JIRA

(v7.6.3#76005)

[jira] [Updated] (KAFKA-7706) Spotbugs task fails with Gradle 5.0

[ https://issues.apache.org/jira/browse/KAFKA-7706?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

FuQiao Wang updated KAFKA-7706:

---

Description:

*1.* When I build Kafka with Gradle 5.0, the Spotbugs task fails.

I'm running "gradle build --stacktrace".

An interesting part of the stacktrace is:

{quote}

{code:java}
Caused by: java.lang.NoClassDefFoundError: org/gradle/api/internal/ClosureBackedAction
	at com.github.spotbugs.SpotBugsTask.reports(SpotBugsTask.java:136)
	at com.github.spotbugs.SpotBugsTask.reports(SpotBugsTask.java:55)
	at org.gradle.api.reporting.Reporting$reports.call(Unknown Source)
	at build_9sk7crqolfjf8m0yenkwy63v1$_run_closure1.doCall(/Users/mchalupa/projects/others/spotbugsFailExample/build.gradle:18)
	at org.gradle.util.ClosureBackedAction.execute(ClosureBackedAction.java:70)
	at org.gradle.util.ConfigureUtil.configureTarget(ConfigureUtil.java:154)
	at org.gradle.util.ConfigureUtil.configureSelf(ConfigureUtil.java:130)
	at org.gradle.api.internal.AbstractTask.configure(AbstractTask.java:600)
	at org.gradle.api.internal.AbstractTask.configure(AbstractTask.java:92)
	at org.gradle.util.ConfigureUtil.configure(ConfigureUtil.java:103)
	at org.gradle.util.ConfigureUtil$WrappedConfigureAction.execute(ConfigureUtil.java:166)
	at org.gradle.api.internal.DefaultDomainObjectCollection.all(DefaultDomainObjectCollection.java:161)
	at org.gradle.api.internal.DefaultDomainObjectCollection.all(DefaultDomainObjectCollection.java:190)
	at org.gradle.api.internal.tasks.DefaultRealizableTaskCollection.all(DefaultRealizableTaskCollection.java:229)
	at org.gradle.api.internal.DefaultDomainObjectCollection.withType(DefaultDomainObjectCollection.java:201)
	at org.gradle.api.DomainObjectCollection$withType.call(Unknown Source)
	at build_9sk7crqolfjf8m0yenkwy63v1.run(/Users/mchalupa/projects/others/spotbugsFailExample/build.gradle:17)
	at org.gradle.groovy.scripts.internal.DefaultScriptRunnerFactory$ScriptRunnerImpl.run(DefaultScriptRunnerFactory.java:90)
	... 102 more
{code}

{quote}

*2.* Similarly, when I build Kafka with Gradle 5.0, applying the plugin [org.scoverage] fails.

I'm running "gradle build --stacktrace".

An interesting part of the stacktrace is:

{quote}

{code:java}
Caused by: org.gradle.api.internal.plugins.PluginApplicationException: Failed to apply plugin [id 'org.scoverage']
	at org.gradle.api.internal.plugins.DefaultPluginManager.doApply(DefaultPluginManager.java:160)
	at org.gradle.api.internal.plugins.DefaultPluginManager.apply(DefaultPluginManager.java:130)
	... ...
Caused by: org.gradle.api.reflect.ObjectInstantiationException: Could not create an instance of type org.scoverage.ScoverageExtension_Decorated.
	at org.gradle.internal.reflect.DirectInstantiator.newInstance(DirectInstantiator.java:53)
	at org.gradle.api.internal.ClassGeneratorBackedInstantiator.newInstance(ClassGeneratorBackedInstantiator.java:36)
	at org.gradle.api.internal.plugins.DefaultConvention.instantiate(DefaultConvention.java:242)
	at org.gradle.api.internal.plugins.DefaultConvention.create(DefaultConvention.java:142)
	at org.scoverage.ScoveragePlugin.apply(ScoveragePlugin.groovy:18)
	at org.scoverage.ScoveragePlugin.apply(ScoveragePlugin.groovy)
	at org.gradle.api.internal.plugins.ImperativeOnlyPluginTarget.applyImperative(ImperativeOnlyPluginTarget.java:42)
	at org.gradle.api.internal.plugins.RuleBasedPluginTarget.applyImperative(RuleBasedPluginTarget.java:50)
	at org.gradle.api.internal.plugins.DefaultPluginManager.addPlugin(DefaultPluginManager.java:174)
	at org.gradle.api.internal.plugins.DefaultPluginManager.access$300(DefaultPluginManager.java:50)
	... 167 more
Caused by: org.gradle.api.InvalidUserDataException: You can't map a property that does not exist: propertyName=testClassesDir
	at org.gradle.api.internal.ConventionAwareHelper.map(ConventionAwareHelper.java:56)
	at org.gradle.api.internal.ConventionAwareHelper.map(ConventionAwareHelper.java:80)
	at org.gradle.api.internal.ConventionMapping$map.call(Unknown Source)
	at org.scoverage.ScoverageExtension$_closure6.doCall(ScoverageExtension.groovy:89)
	at org.gradle.util.ClosureBackedAction.execute(ClosureBackedAction.java:70)
	at org.gradle.util.ConfigureUtil.configureTarget(ConfigureUtil.java:154)
	at org.gradle.util.ConfigureUtil.configureSelf(ConfigureUtil.java:130)
	... 186 more
{code}

{quote}
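The innermost cause shows the scoverage plugin trying to convention-map a property named testClassesDir on an object that has no such property. This fits Gradle 5.0 having dropped the Test task's singular testClassesDir in favour of testClassesDirs. That is an inference from the error message, not something the trace itself shows.

Below is a hypothetical, simplified model of such a check using plain Java reflection. It is not Gradle's ConventionAwareHelper, and LegacyTestTask and ModernTestTask are made-up stand-ins:

{code:java}
import java.lang.reflect.Method;
import java.util.Arrays;
import java.util.List;

// Hypothetical, simplified model of convention mapping; NOT Gradle's ConventionAwareHelper.
class ConventionMappingSketch {

    // Made-up stand-in for a task type that still exposes the singular property.
    static class LegacyTestTask {
        public String getTestClassesDir() { return "build/classes/test"; }
    }

    // Made-up stand-in for a task type that only exposes the plural property.
    static class ModernTestTask {
        public List<String> getTestClassesDirs() {
            return Arrays.asList("build/classes/java/test", "build/classes/scala/test");
        }
    }

    // True if the type has a public no-argument getter named get<PropertyName>.
    static boolean hasProperty(Class<?> type, String propertyName) {
        String getter = "get" + Character.toUpperCase(propertyName.charAt(0)) + propertyName.substring(1);
        for (Method m : type.getMethods()) {
            if (m.getName().equals(getter) && m.getParameterCount() == 0) {
                return true;
            }
        }
        return false;
    }

    // Refuses to map a property the target does not have, echoing the message in the trace.
    static void map(Object target, String propertyName) {
        if (!hasProperty(target.getClass(), propertyName)) {
            throw new IllegalArgumentException(
                "You can't map a property that does not exist: propertyName=" + propertyName);
        }
        // A real implementation would register a lazily computed value here.
    }

    public static void main(String[] args) {
        map(new LegacyTestTask(), "testClassesDir"); // accepted: the getter exists
        try {
            map(new ModernTestTask(), "testClassesDir");
        } catch (IllegalArgumentException e) {
            System.out.println(e.getMessage());
        }
    }
}
{code}

Running main prints "You can't map a property that does not exist: propertyName=testClassesDir" for the stand-in that only has the plural getter.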


[jira] [Updated] (KAFKA-7706) Spotbugs task fails with Gradle 5.0

[ https://issues.apache.org/jira/browse/KAFKA-7706?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

FuQiao Wang updated KAFKA-7706:

---

Attachment: 0001-fix-bug-build-fails-wiht-gradle-5.0.patch

> Spotbugs task fails with Gradle 5.0

> ---

>

> Key: KAFKA-7706

> URL: https://issues.apache.org/jira/browse/KAFKA-7706

> Project: Kafka

> Issue Type: Bug

> Components: build

> Environment: jdk1.8

> scala 2.12.7

> gradle 5.0

> Ubuntu/Windows

> Reporter: FuQiao Wang

> Priority: Major

> Labels: build

> Attachments: 0001-fix-bug-build-fails-wiht-gradle-5.0.patch


[jira] [Updated] (KAFKA-7706) Spotbugs task fails with Gradle 5.0

[ https://issues.apache.org/jira/browse/KAFKA-7706?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

FuQiao Wang updated KAFKA-7706:

---

Attachment: (was: 0001-fix-bug-build-fails-wiht-gradle-5.0.patch)

> Spotbugs task fails with Gradle 5.0

> ---

>

> Key: KAFKA-7706

> URL: https://issues.apache.org/jira/browse/KAFKA-7706

> Project: Kafka

> Issue Type: Bug

> Components: build

> Environment: jdk1.8

> scala 2.12.7

> gradle 5.0

> Ubuntu/Windows

> Reporter: FuQiao Wang

> Priority: Major

> Labels: build

> Attachments: 0001-fix-bug-build-fails-wiht-gradle-5.0.patch


Re: [jira] [Updated] (KAFKA-7705) Update javadoc for the values of delivery.timeout.ms or linger.ms

Should we set delivery.timeout.ms a bit higher than 3 + 1 (the default value of request.timeout.ms and the specified value of linger.ms)?

> On Dec 5, 2018, at 04:43, John Roesler (JIRA) wrote:
>
> [ https://issues.apache.org/jira/browse/KAFKA-7705?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]
>
> John Roesler updated KAFKA-7705:
> Component/s: producer
> clients
>
>> Update javadoc for the values of delivery.timeout.ms or linger.ms
>> -
>>
>> Key: KAFKA-7705
>> URL: https://issues.apache.org/jira/browse/KAFKA-7705
>> Project: Kafka
>> Issue Type: Bug
>> Components: clients, documentation, producer
>> Affects Versions: 2.1.0
>> Reporter: huxihx
>> Priority: Minor
>> Labels: newbie
>>
>> In [https://kafka.apache.org/21/javadoc/index.html?org/apache/kafka/clients/producer/KafkaProducer.html], the sample producer code fails to run due to the ConfigException thrown:
>> delivery.timeout.ms should be equal to or larger than linger.ms + request.timeout.ms
>> The given value for delivery.timeout.ms or linger.ms on that page should be updated accordingly.
>
> --
> This message was sent by Atlassian JIRA
> (v7.6.3#76005)
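The quoted ConfigException states the constraint delivery.timeout.ms >= linger.ms + request.timeout.ms. The smallest accepted delivery.timeout.ms is therefore just the sum of the other two.

The sketch below shows that arithmetic. DeliveryTimeoutCheck is a hypothetical helper and is not part of the Kafka client API. The values in main are illustrative assumptions and are not taken from the javadoc sample:

{code:java}
// Hypothetical helper; not part of the Kafka client API.
class DeliveryTimeoutCheck {

    // Smallest delivery.timeout.ms accepted under delivery.timeout.ms >= linger.ms + request.timeout.ms.
    static long minDeliveryTimeoutMs(long lingerMs, long requestTimeoutMs) {
        return lingerMs + requestTimeoutMs;
    }

    // Mirrors the constraint quoted in the ConfigException message.
    static boolean isValid(long deliveryTimeoutMs, long lingerMs, long requestTimeoutMs) {
        return deliveryTimeoutMs >= minDeliveryTimeoutMs(lingerMs, requestTimeoutMs);
    }

    public static void main(String[] args) {
        long requestTimeoutMs = 30_000; // assumed value for request.timeout.ms
        long lingerMs = 1;              // assumed value for linger.ms
        System.out.println(isValid(30_000, lingerMs, requestTimeoutMs));      // false: 30000 < 30001
        System.out.println(minDeliveryTimeoutMs(lingerMs, requestTimeoutMs)); // 30001
    }
}
{code}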

[jira] [Commented] (KAFKA-7697) Possible deadlock in kafka.cluster.Partition

[

https://issues.apache.org/jira/browse/KAFKA-7697?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=16709777#comment-16709777

]

ASF GitHub Bot commented on KAFKA-7697:

---

rajinisivaram closed pull request #5999: KAFKA-7697: Process DelayedFetch without holding leaderIsrUpdateLock

URL: https://github.com/apache/kafka/pull/5999

This is a PR merged from a forked repository.

As GitHub hides the original diff on merge, it is displayed below for the sake of provenance:


diff --git a/core/src/main/scala/kafka/cluster/Partition.scala b/core/src/main/scala/kafka/cluster/Partition.scala

index 745c89a393b..1f52bd769cf 100755

--- a/core/src/main/scala/kafka/cluster/Partition.scala

+++ b/core/src/main/scala/kafka/cluster/Partition.scala

@@ -740,8 +740,6 @@ class Partition(val topicPartition: TopicPartition,

}

val info = log.appendAsLeader(records, leaderEpoch = this.leaderEpoch, isFromClient)

- // probably unblock some follower fetch requests since log end offset has been updated

- replicaManager.tryCompleteDelayedFetch(TopicPartitionOperationKey(this.topic, this.partitionId))

// we may need to increment high watermark since ISR could be down to 1

(info, maybeIncrementLeaderHW(leaderReplica))

@@ -754,6 +752,10 @@ class Partition(val topicPartition: TopicPartition,

// some delayed operations may be unblocked after HW changed

if (leaderHWIncremented)

tryCompleteDelayedRequests()

+else {

+ // probably unblock some follower fetch requests since log end offset has been updated

+ replicaManager.tryCompleteDelayedFetch(new TopicPartitionOperationKey(topicPartition))

+}

info

}

diff --git a/core/src/test/scala/unit/kafka/cluster/PartitionTest.scala b/core/src/test/scala/unit/kafka/cluster/PartitionTest.scala

index 6e38ca9575b..cfaa147f407 100644

--- a/core/src/test/scala/unit/kafka/cluster/PartitionTest.scala

+++ b/core/src/test/scala/unit/kafka/cluster/PartitionTest.scala

@@ -19,14 +19,14 @@ package kafka.cluster

import java.io.File

import java.nio.ByteBuffer

import java.util.{Optional, Properties}

-import java.util.concurrent.CountDownLatch

+import java.util.concurrent.{CountDownLatch, Executors, TimeUnit, TimeoutException}

import java.util.concurrent.atomic.AtomicBoolean

import kafka.api.Request

import kafka.common.UnexpectedAppendOffsetException

import kafka.log.{Defaults => _, _}

import kafka.server._

-import kafka.utils.{MockScheduler, MockTime, TestUtils}

+import kafka.utils.{CoreUtils, MockScheduler, MockTime, TestUtils}

import kafka.zk.KafkaZkClient

import org.apache.kafka.common.TopicPartition

import org.apache.kafka.common.errors.ReplicaNotAvailableException

@@ -39,7 +39,7 @@ import org.apache.kafka.common.requests.{IsolationLevel, LeaderAndIsrRequest, Li

import org.junit.{After, Before, Test}

import org.junit.Assert._

import org.scalatest.Assertions.assertThrows

-import org.easymock.EasyMock

+import org.easymock.{Capture, EasyMock, IAnswer}

import scala.collection.JavaConverters._

@@ -671,7 +671,95 @@ class PartitionTest {

partition.updateReplicaLogReadResult(follower1Replica,

readResult(FetchDataInfo(LogOffsetMetadata(currentLeaderEpochStartOffset), batch3), leaderReplica))

assertEquals("ISR", Set[Integer](leader, follower1, follower2), partition.inSyncReplicas.map(_.brokerId))

- }

+ }

+

+ /**

+ * Verify that delayed fetch operations which are completed when records are appended don't result in deadlocks.

+ * Delayed fetch operations acquire Partition leaderIsrUpdate read lock for one or more partitions. So they

+ * need to be completed after releasing the lock acquired to append records. Otherwise, waiting writers

+ * (e.g. to check if ISR needs to be shrinked) can trigger deadlock in request handler threads waiting for

+ * read lock of one Partition while holding on to read lock of another Partition.

+ */

+ @Test

+ def testDelayedFetchAfterAppendRecords(): Unit = {

+val replicaManager: ReplicaManager = EasyMock.mock(classOf[ReplicaManager])

+val zkClient: KafkaZkClient = EasyMock.mock(classOf[KafkaZkClient])

+val controllerId = 0

+val controllerEpoch = 0

+val leaderEpoch = 5

+val replicaIds = List[Integer](brokerId, brokerId + 1).asJava

+val isr = replicaIds

+val logConfig = LogConfig(new Properties)

+

+val topicPartitions = (0 until 5).map { i => new TopicPartition("test-topic", i) }

+val logs = topicPartitions.map { tp => logManager.getOrCreateLog(tp, logConfig) }

+val replicas = logs.map { log => new Replica(brokerId, log.topicPartition, time, log = Some(log)) }

+val partitions = replicas.map { replica =>

+ val tp = repli
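The rule stated in the test's comment (complete delayed fetches only after releasing the lock taken for the append) can be sketched as a small Java model. This is not Kafka's Scala code: `SketchPartition`, `appendThenComplete` and `DelayedFetchDemo` are hypothetical names, and each partition is assumed to guard its state with a `java.util.concurrent.locks.ReentrantReadWriteLock`. With a non-fair lock of that kind, a new reader can block behind a queued writer, so two threads that each hold one partition's read lock while requesting the other's can wait on each other indefinitely; releasing the appending partition's lock first avoids holding one partition lock while acquiring another.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.locks.ReentrantReadWriteLock;

// Hypothetical, heavily simplified model of a partition leader (not Kafka's Partition class).
class SketchPartition {
    final ReentrantReadWriteLock leaderIsrUpdateLock = new ReentrantReadWriteLock();
    private final List<String> log = new ArrayList<>();

    // Append under the read lock, release it, and only then try to complete delayed fetches,
    // which in this model may need the read locks of other partitions.
    void appendThenComplete(String record, Runnable tryCompleteDelayedFetch) {
        leaderIsrUpdateLock.readLock().lock();
        try {
            synchronized (log) { // several readers may append concurrently in this toy model
                log.add(record);
            }
        } finally {
            leaderIsrUpdateLock.readLock().unlock();
        }
        tryCompleteDelayedFetch.run(); // no lock of this partition is held at this point
    }

    // True if the calling thread currently holds this partition's read lock.
    boolean heldByCurrentThread() {
        return leaderIsrUpdateLock.getReadHoldCount() > 0;
    }

    int logSize() {
        synchronized (log) {
            return log.size();
        }
    }
}

class DelayedFetchDemo {
    // Appends to partition a; the delayed fetch then takes partition b's read lock.
    // Returns true if the callback ran without a's read lock held and the record was appended.
    static boolean completesOutsideLock() {
        SketchPartition a = new SketchPartition();
        SketchPartition b = new SketchPartition();
        boolean[] heldDuringCompletion = {true};
        a.appendThenComplete("record-1", () -> {
            heldDuringCompletion[0] = a.heldByCurrentThread();
            b.leaderIsrUpdateLock.readLock().lock(); // delayed fetch touching another partition
            try {
                // a real delayed fetch would read b's log here
            } finally {
                b.leaderIsrUpdateLock.readLock().unlock();
            }
        });
        return !heldDuringCompletion[0] && a.logSize() == 1;
    }
}
```

The model only checks the lock-ordering property; it does not reproduce the deadlock itself, which depends on thread timing.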

[jira] [Commented] (KAFKA-7705) Update javadoc for the values of delivery.timeout.ms or linger.ms

[ https://issues.apache.org/jira/browse/KAFKA-7705?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=16709791#comment-16709791 ]

ASF GitHub Bot commented on KAFKA-7705:
---

hackerwin7 opened a new pull request #6000: MINOR KAFKA-7705 : update java doc for delivery.timeout.ms
URL: https://github.com/apache/kafka/pull/6000

Update the KafkaProducer javadoc so that delivery.timeout.ms >= request.timeout.ms + linger.ms.

This is an automated message from the Apache Git Service.
To respond to the message, please log on GitHub and use the URL above to go to the specific comment.
For queries about this service, please contact Infrastructure at:
us...@infra.apache.org

> Update javadoc for the values of delivery.timeout.ms or linger.ms
> -
>
> Key: KAFKA-7705
> URL: https://issues.apache.org/jira/browse/KAFKA-7705
> Project: Kafka
> Issue Type: Bug
> Components: clients, documentation, producer
> Affects Versions: 2.1.0
> Reporter: huxihx
> Priority: Minor
> Labels: newbie
>
> In
> [https://kafka.apache.org/21/javadoc/index.html?org/apache/kafka/clients/producer/KafkaProducer.html,]
> the sample producer code fails to run due to the ConfigException thrown:
> delivery.timeout.ms should be equal to or larger than linger.ms +
> request.timeout.ms
> The given value for delivery.timeout.ms or linger.ms on that page should be
> updated accordingly.
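The constraint quoted in the ticket can be checked on a configuration before a producer is created. A minimal sketch, assuming the settings live in a plain `java.util.Properties` keyed by the config names above; `DeliveryTimeoutCheck` is a hypothetical helper, not a Kafka API, and the fallback values are assumed to be the 2.1 producer defaults (linger.ms 0, request.timeout.ms 30000, delivery.timeout.ms 120000), so verify them for the version in use.

```java
import java.util.Properties;

// Hypothetical helper mirroring the constraint quoted in KAFKA-7705:
//   delivery.timeout.ms >= linger.ms + request.timeout.ms
// This is not the producer's own validation code.
class DeliveryTimeoutCheck {
    // Assumed 2.1 producer defaults; check the documentation for your version.
    static final long DEFAULT_LINGER_MS = 0L;
    static final long DEFAULT_REQUEST_TIMEOUT_MS = 30_000L;
    static final long DEFAULT_DELIVERY_TIMEOUT_MS = 120_000L;

    static boolean isValid(long deliveryTimeoutMs, long lingerMs, long requestTimeoutMs) {
        return deliveryTimeoutMs >= lingerMs + requestTimeoutMs;
    }

    // Reads the three settings, falling back to the assumed defaults when a key is absent.
    static boolean isValid(Properties props) {
        long linger = parse(props, "linger.ms", DEFAULT_LINGER_MS);
        long request = parse(props, "request.timeout.ms", DEFAULT_REQUEST_TIMEOUT_MS);
        long delivery = parse(props, "delivery.timeout.ms", DEFAULT_DELIVERY_TIMEOUT_MS);
        return isValid(delivery, linger, request);
    }

    private static long parse(Properties props, String key, long fallback) {
        String value = props.getProperty(key);
        return value == null ? fallback : Long.parseLong(value.trim());
    }
}
```

For example, `linger.ms=1` with `delivery.timeout.ms=30000` fails this check under the assumed 30000 ms request timeout, while `delivery.timeout.ms=30001` passes.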

[jira] [Commented] (KAFKA-7706) Spotbugs task fails with Gradle 5.0

[ https://issues.apache.org/jira/browse/KAFKA-7706?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=16709800#comment-16709800 ]

ASF GitHub Bot commented on KAFKA-7706:
---

FuqiaoWang opened a new pull request #6001: KAFKA-7706: Spotbugs task fails with Gradle 5.0
URL: https://github.com/apache/kafka/pull/6001

1. When building Kafka with Gradle 5.0, the Spotbugs task fails. I'm running "gradle build --stacktrace". An interesting part of the stacktrace is:
```
Caused by: java.lang.NoClassDefFoundError: org/gradle/api/internal/ClosureBackedAction
    at com.github.spotbugs.SpotBugsTask.reports(SpotBugsTask.java:136)
    at com.github.spotbugs.SpotBugsTask.reports(SpotBugsTask.java:55)
    at org.gradle.api.reporting.Reporting$reports.call(Unknown Source)
    at build_9sk7crqolfjf8m0yenkwy63v1$_run_closure1.doCall(/Users/mchalupa/projects/others/spotbugsFailExample/build.gradle:18)
    at org.gradle.util.ClosureBackedAction.execute(ClosureBackedAction.java:70)
    at org.gradle.util.ConfigureUtil.configureTarget(ConfigureUtil.java:154)
    at org.gradle.util.ConfigureUtil.configureSelf(ConfigureUtil.java:130)
    at org.gradle.api.internal.AbstractTask.configure(AbstractTask.java:600)
    at org.gradle.api.internal.AbstractTask.configure(AbstractTask.java:92)
    at org.gradle.util.ConfigureUtil.configure(ConfigureUtil.java:103)
    at org.gradle.util.ConfigureUtil$WrappedConfigureAction.execute(ConfigureUtil.java:166)
    at org.gradle.api.internal.DefaultDomainObjectCollection.all(DefaultDomainObjectCollection.java:161)
    at org.gradle.api.internal.DefaultDomainObjectCollection.all(DefaultDomainObjectCollection.java:190)
    at org.gradle.api.internal.tasks.DefaultRealizableTaskCollection.all(DefaultRealizableTaskCollection.java:229)
    at org.gradle.api.internal.DefaultDomainObjectCollection.withType(DefaultDomainObjectCollection.java:201)
    at org.gradle.api.DomainObjectCollection$withType.call(Unknown Source)
    at build_9sk7crqolfjf8m0yenkwy63v1.run(/Users/mchalupa/projects/others/spotbugsFailExample/build.gradle:17)
    at org.gradle.groovy.scripts.internal.DefaultScriptRunnerFactory$ScriptRunnerImpl.run(DefaultScriptRunnerFactory.java:90)
    ... 102 more
```
2. Similarly, when building Kafka with Gradle 5.0, applying the plugin [org.scoverage] fails. I'm running "gradle build --stacktrace". An interesting part of the stacktrace is:
```
Caused by: org.gradle.api.internal.plugins.PluginApplicationException: Failed to apply plugin [id 'org.scoverage']
    at org.gradle.api.internal.plugins.DefaultPluginManager.doApply(DefaultPluginManager.java:160)
    at org.gradle.api.internal.plugins.DefaultPluginManager.apply(DefaultPluginManager.java:130)
    ...
Caused by: org.gradle.api.reflect.ObjectInstantiationException: Could not create an instance of type org.scoverage.ScoverageExtension_Decorated.
    at org.gradle.internal.reflect.DirectInstantiator.newInstance(DirectInstantiator.java:53)
    at org.gradle.api.internal.ClassGeneratorBackedInstantiator.newInstance(ClassGeneratorBackedInstantiator.java:36)
    at org.gradle.api.internal.plugins.DefaultConvention.instantiate(DefaultConvention.java:242)
    at org.gradle.api.internal.plugins.DefaultConvention.create(DefaultConvention.java:142)
    at org.scoverage.ScoveragePlugin.apply(ScoveragePlugin.groovy:18)
    at org.scoverage.ScoveragePlugin.apply(ScoveragePlugin.groovy)
    at org.gradle.api.internal.plugins.ImperativeOnlyPluginTarget.applyImperative(ImperativeOnlyPluginTarget.java:42)
    at org.gradle.api.internal.plugins.RuleBasedPluginTarget.applyImperative(RuleBasedPluginTarget.java:50)
    at org.gradle.api.internal.plugins.DefaultPluginManager.addPlugin(DefaultPluginManager.java:174)
    at org.gradle.api.internal.plugins.DefaultPluginManager.access$300(DefaultPluginManager.java:50)
    ... 167 more
Caused by: org.gradle.api.InvalidUserDataException: You can't map a property that does not exist: propertyName=testClassesDir
    at org.gradle.api.internal.ConventionAwareHelper.map(ConventionAwareHelper.java:56)
    at org.gradle.api.internal.ConventionAwareHelper.map(ConventionAwareHelper.java:80)
    at org.gradle.api.internal.ConventionMapping$map.call(Unknown Source)
    at org.scoverage.ScoverageExtension$_closure6.doCall(ScoverageExtension.groovy:89)
    at org.gradle.util.ClosureBackedAction.execute(ClosureBackedAction.java:70)
    at org.gradle.util.ConfigureUtil.configureTarget(ConfigureUtil.java:154)
    at org.gradle.util

[jira] [Commented] (KAFKA-7706) Spotbugs task fails with Gradle 5.0

[

https://issues.apache.org/jira/browse/KAFKA-7706?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=16709811#comment-16709811

]

ASF GitHub Bot commented on KAFKA-7706:

---

FuqiaoWang closed pull request #6001: KAFKA-7706: Spotbugs task fails with Gradle 5.0

URL: https://github.com/apache/kafka/pull/6001

This is a PR merged from a forked repository.

As GitHub hides the original diff on merge, it is displayed below for the sake of provenance:


diff --git a/build.gradle b/build.gradle

index d4a92a216c1..d1651f261d6 100644

--- a/build.gradle

+++ b/build.gradle

@@ -29,11 +29,11 @@ buildscript {

// For Apache Rat plugin to ignore non-Git files

classpath "org.ajoberstar:grgit:1.9.3"

classpath 'com.github.ben-manes:gradle-versions-plugin:0.17.0'

-classpath 'org.scoverage:gradle-scoverage:2.3.0'

+classpath 'org.scoverage:gradle-scoverage:2.5.0'

classpath 'com.github.jengelman.gradle.plugins:shadow:2.0.4'

classpath 'org.owasp:dependency-check-gradle:3.2.1'

classpath "com.diffplug.spotless:spotless-plugin-gradle:3.10.0"

-classpath "gradle.plugin.com.github.spotbugs:spotbugs-gradle-plugin:1.6.3"

+classpath "gradle.plugin.com.github.spotbugs:spotbugs-gradle-plugin:1.6.5"

}

}


> Spotbugs task fails with Gradle 5.0

> ---

>

> Key: KAFKA-7706

> URL: https://issues.apache.org/jira/browse/KAFKA-7706

> Project: Kafka

> Issue Type: Bug

> Components: build

> Environment: jdk1.8

> scala 2.12.7

> gradle 5.0

> Ubuntu/Windows

>Reporter: FuQiao Wang

>Priority: Major

> Labels: build

> Attachments: 0001-fix-bug-build-fails-wiht-gradle-5.0.patch

>

>

> *1.* When building Kafka with Gradle 5.0, the Spotbugs task fails.

> I'm running "gradle build --stacktrace".

> An interesting part of the stacktrace is:

> {quote}

> {code:java}

> Caused by: java.lang.NoClassDefFoundError: org/gradle/api/internal/ClosureBackedAction

> at com.github.spotbugs.SpotBugsTask.reports(SpotBugsTask.java:136)

> at com.github.spotbugs.SpotBugsTask.reports(SpotBugsTask.java:55)

> at org.gradle.api.reporting.Reporting$reports.call(Unknown Source)

> at build_9sk7crqolfjf8m0yenkwy63v1$_run_closure1.doCall(/Users/mchalupa/projects/others/spotbugsFailExample/build.gradle:18)
> at org.gradle.util.ClosureBackedAction.execute(ClosureBackedAction.java:70)
> at org.gradle.util.ConfigureUtil.configureTarget(ConfigureUtil.java:154)
> at org.gradle.util.ConfigureUtil.configureSelf(ConfigureUtil.java:130)
> at org.gradle.api.internal.AbstractTask.configure(AbstractTask.java:600)
> at org.gradle.api.internal.AbstractTask.configure(AbstractTask.java:92)
> at org.gradle.util.ConfigureUtil.configure(ConfigureUtil.java:103)
> at org.gradle.util.ConfigureUtil$WrappedConfigureAction.execute(ConfigureUtil.java:166)
> at org.gradle.api.internal.DefaultDomainObjectCollection.all(DefaultDomainObjectCollection.java:161)
> at org.gradle.api.internal.DefaultDomainObjectCollection.all(DefaultDomainObjectCollection.java:190)
> at org.gradle.api.internal.tasks.DefaultRealizableTaskCollection.all(DefaultRealizableTaskCollection.java:229)
> at org.gradle.api.internal.DefaultDomainObjectCollection.withType(DefaultDomainObjectCollection.java:201)
> at org.gradle.api.DomainObjectCollection$withType.call(Unknown Source)
> at build_9sk7crqolfjf8m0yenkwy63v1.run(/Users/mchalupa/projects/others/spotbugsFailExample/build.gradle:17)
> at org.gradle.groovy.scripts.internal.DefaultScriptRunnerFactory$ScriptRunnerImpl.run(DefaultScriptRunnerFactory.java:90)

> ... 102 more

> {code}

> {quote}

> *2.* Similarly, when building Kafka with Gradle 5.0, applying the plugin [org.scoverage] fails.

> I'm running "gradle build --stacktrace".

> An interesting part of the stacktrace is:

> {quote}

> {code:java}

> Caused by: org.gradle.api.internal.plugins.PluginApplicationException: Failed to apply plugin [id 'org.scoverage']
> at org.gradle.api.internal.plugins.DefaultPluginManager.doApply(DefaultPluginManager.java:160)
> at org.gradle.api.internal.plugins.DefaultPluginManager.apply(DefaultPluginManage

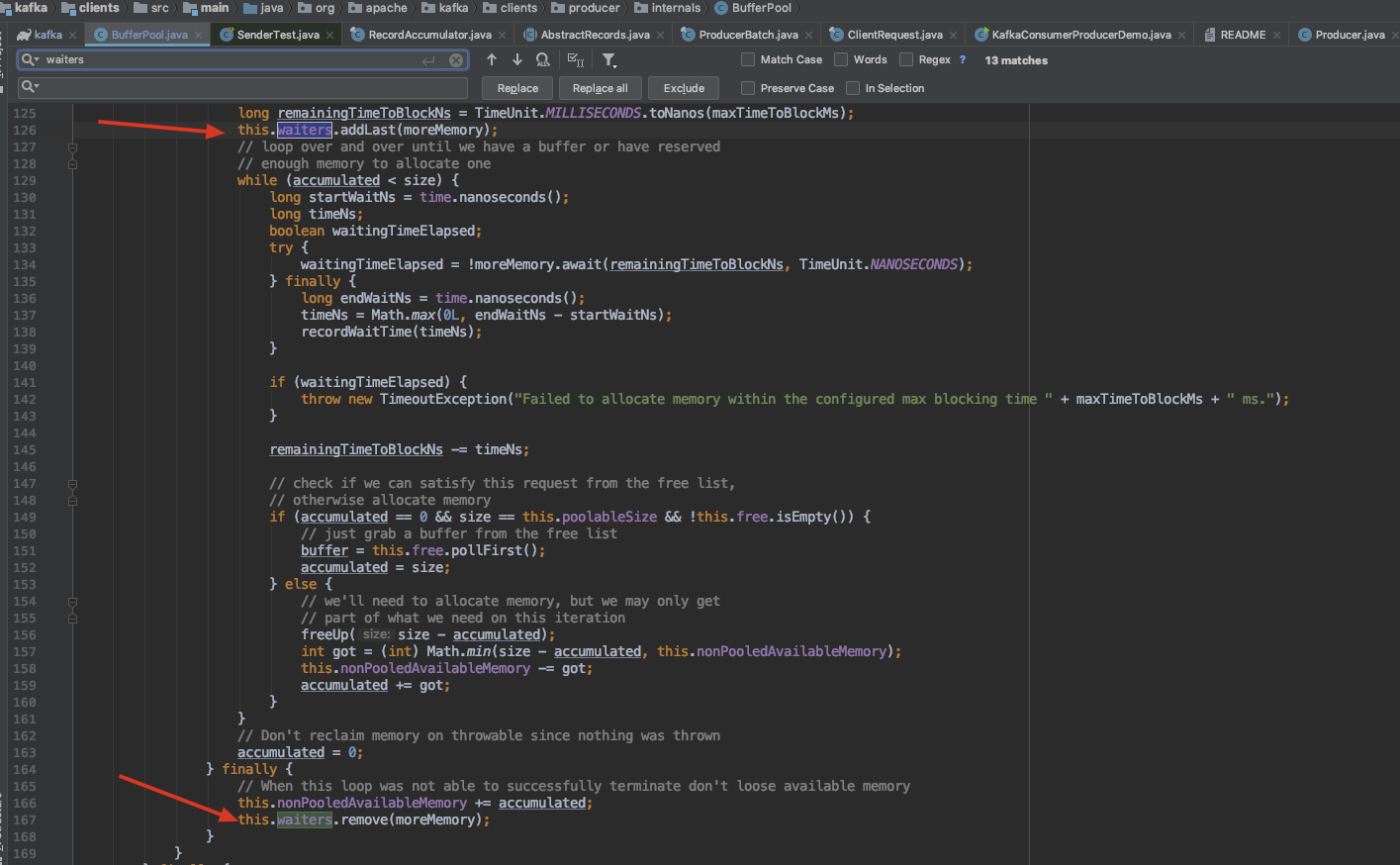

[jira] [Created] (KAFKA-7707) Some code is not necessary

huangyiming created KAFKA-7707:

--

Summary: Some code is not necessary

Key: KAFKA-7707

URL: https://issues.apache.org/jira/browse/KAFKA-7707

Project: Kafka

Issue Type: Improvement

Reporter: huangyiming

Attachments: image-2018-12-05-18-01-46-886.png

!image-2018-12-05-18-01-46-886.png!

In the trunk branch, I think this code can be cleaned up: it is unnecessary because it will never execute.

{code:java}

if (!(this.nonPooledAvailableMemory == 0 && this.free.isEmpty()) && !this.waiters.isEmpty())
    this.waiters.peekFirst().signal();

{code}


[jira] [Updated] (KAFKA-7707) Some code is not necessary

[

https://issues.apache.org/jira/browse/KAFKA-7707?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Sönke Liebau updated KAFKA-7707:

Description:

In the trunk branch in [BufferPool.java|https://github.com/apache/kafka/blob/578205cadd0bf64d671c6c162229c4975081a9d6/clients/src/main/java/org/apache/kafka/clients/producer/internals/BufferPool.java#L174], I think this code can be cleaned up: it is unnecessary because it will never execute.

{code:java}

if (!(this.nonPooledAvailableMemory == 0 && this.free.isEmpty()) && !this.waiters.isEmpty())
    this.waiters.peekFirst().signal();

{code}

was:

!image-2018-12-05-18-01-46-886.png!

In the trunk branch, I think this code can be cleaned up: it is unnecessary because it will never execute.

{code:java}

if (!(this.nonPooledAvailableMemory == 0 && this.free.isEmpty()) && !this.waiters.isEmpty())
    this.waiters.peekFirst().signal();

{code}

> Some code is not necessary

> --

>

> Key: KAFKA-7707

> URL: https://issues.apache.org/jira/browse/KAFKA-7707

> Project: Kafka

> Issue Type: Improvement

>Reporter: huangyiming

>Priority: Minor

> Attachments: image-2018-12-05-18-01-46-886.png

>

>

> In the trunk branch in [BufferPool.java|https://github.com/apache/kafka/blob/578205cadd0bf64d671c6c162229c4975081a9d6/clients/src/main/java/org/apache/kafka/clients/producer/internals/BufferPool.java#L174],
> I think this code can be cleaned up: it is unnecessary because it will never execute.

> {code:java}

> if (!(this.nonPooledAvailableMemory == 0 && this.free.isEmpty()) && !this.waiters.isEmpty())
>     this.waiters.peekFirst().signal();

> {code}


[jira] [Commented] (KAFKA-5286) Producer should await transaction completion in close

[ https://issues.apache.org/jira/browse/KAFKA-5286?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=16709877#comment-16709877 ]

Viktor Somogyi commented on KAFKA-5286:
---

[~apurva], [~ijuma], [~hachikuji] Is this the same as KAFKA-6635? I have a WIP solution for that, and I'd be happy to receive feedback on whether I'm heading in the right direction.

> Producer should await transaction completion in close
> -
>
> Key: KAFKA-5286
> URL: https://issues.apache.org/jira/browse/KAFKA-5286
> Project: Kafka
> Issue Type: Sub-task
> Components: clients, core, producer
> Affects Versions: 0.11.0.0
> Reporter: Jason Gustafson
> Priority: Major
> Fix For: 2.2.0
>
>
> We should wait at least as long as the timeout for a transaction which has
> begun completion (commit or abort) to be finished. The tricky thing is whether we
> should abort a transaction which is in progress. It seems reasonable, since
> either the coordinator will time out and abort the transaction, or the
> next producer using the same transactionalId will fence the producer and
> abort the transaction. In any case, the transaction will be aborted, so
> perhaps we should do it proactively.
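The first sentence of the ticket describes a bounded wait for a commit or abort that is already under way. A hypothetical sketch of that idea, assuming the in-flight completion is represented as a `java.util.concurrent.CompletableFuture<Void>`; `TransactionAwareClose` and `awaitPendingCompletion` are illustrative names, not producer APIs, and this is not how `KafkaProducer.close()` is actually implemented.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.TimeoutException;

// Hypothetical sketch of KAFKA-5286's idea: before closing, wait (bounded by a timeout)
// for a commit/abort that has already begun. Not KafkaProducer's actual close() logic.
class TransactionAwareClose {
    // Returns true if the pending completion has finished (successfully or not) within the timeout.
    static boolean awaitPendingCompletion(CompletableFuture<Void> pendingCompletion, long timeoutMs) {
        if (pendingCompletion == null) {
            return true; // no commit or abort in flight
        }
        try {
            pendingCompletion.get(timeoutMs, TimeUnit.MILLISECONDS);
            return true;
        } catch (TimeoutException e) {
            return false; // still running; the caller decides whether to abort or force-close
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt(); // preserve the interrupt for the caller
            return false;
        } catch (ExecutionException e) {
            return true; // the completion finished, albeit with an error
        }
    }
}
```

Whether to abort a transaction that is still open (the ticket's open question) is deliberately left to the caller in this sketch.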

[jira] [Commented] (KAFKA-7707) Some code is not necessary

[

https://issues.apache.org/jira/browse/KAFKA-7707?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=16709878#comment-16709878

]

ASF GitHub Bot commented on KAFKA-7707:

---

huangyiminghappy opened a new pull request #6002: KAFKA-7707: clean the code never execute

URL: https://github.com/apache/kafka/pull/6002

In BufferPool, `waiters` is guarded by a ReentrantLock: Conditions are added to `waiters` only while holding the lock, and they are also removed only while holding the lock. `waiters` contains only one Condition instance.

Moreover, the waiter's Condition has already been removed in the earlier `finally` block, so the final block

``` java

finally {
    // signal any additional waiters if there is more memory left
    // over for them
    try {
        if (!(this.nonPooledAvailableMemory == 0 && this.free.isEmpty()) && !this.waiters.isEmpty())
            this.waiters.peekFirst().signal();
    } finally {
        // Another finally... otherwise find bugs complains
        lock.unlock();
    }
}

```

can be simplified to:

``` java

finally {
    lock.unlock();
}

```
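One way to test whether the signal in the final `finally` block can ever fire is a small model. The sketch below is a hypothetical `ToyPool`, much simpler than Kafka's `BufferPool` and not its code: it assumes that every thread which must wait enqueues its own `Condition`, and that `deallocate` signals only the first waiter. Under those assumptions, with two threads blocked and one `deallocate` that frees enough memory for both, the second thread is woken only by the first thread's chained signal.

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.concurrent.locks.Condition;
import java.util.concurrent.locks.ReentrantLock;

// Hypothetical toy pool (NOT Kafka's BufferPool): each blocked thread enqueues its own
// Condition, and an allocator that finishes passes leftover memory on to the next waiter.
class ToyPool {
    private final ReentrantLock lock = new ReentrantLock();
    private final Deque<Condition> waiters = new ArrayDeque<>();
    private long available;

    ToyPool(long available) {
        this.available = available;
    }

    void allocate(long size) throws InterruptedException {
        lock.lock();
        try {
            if (available < size) {
                Condition moreMemory = lock.newCondition(); // one Condition per blocked thread
                waiters.addLast(moreMemory);
                try {
                    while (available < size) {
                        moreMemory.await(); // loop guards against spurious wake-ups
                    }
                } finally {
                    waiters.remove(moreMemory);
                }
            }
            available -= size;
        } finally {
            try {
                // Chained wake-up: if memory is left over, signal the next waiter.
                if (available > 0 && !waiters.isEmpty()) {
                    waiters.peekFirst().signal();
                }
            } finally {
                lock.unlock();
            }
        }
    }

    void deallocate(long size) {
        lock.lock();
        try {
            available += size;
            if (!waiters.isEmpty()) {
                waiters.peekFirst().signal(); // wakes only the first waiter
            }
        } finally {
            lock.unlock();
        }
    }

    int waiterCount() {
        lock.lock();
        try {
            return waiters.size();
        } finally {
            lock.unlock();
        }
    }
}

class ChainedWakeUpDemo {
    // Two threads block on an empty pool; one deallocate(10) signals only the first of them.
    // Returns true if both allocations finish, i.e. the second was woken by the chained signal.
    static boolean bothWaitersComplete() {
        ToyPool pool = new ToyPool(0);
        Runnable allocateFive = () -> {
            try {
                pool.allocate(5);
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        };
        Thread t1 = new Thread(allocateFive);
        Thread t2 = new Thread(allocateFive);
        t1.setDaemon(true); // do not keep the JVM alive if a thread stays blocked
        t2.setDaemon(true);
        t1.start();
        t2.start();
        try {
            while (pool.waiterCount() < 2) {
                Thread.sleep(1);
            }
            pool.deallocate(10);
            t1.join(2_000);
            t2.join(2_000);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return false;
        }
        return !t1.isAlive() && !t2.isAlive();
    }
}
```

Removing the chained `signal()` from `allocate` in this model leaves the second thread blocked; whether the same situation arises in the real `BufferPool` depends on details this toy deliberately omits.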

### Committer Checklist (excluded from commit message)

- [ ] Verify design and implementation

- [ ] Verify test coverage and CI build status

- [ ] Verify documentation (including upgrade notes)



[jira] [Assigned] (KAFKA-5209) Transient failure: kafka.server.MetadataRequestTest.testControllerId

[

https://issues.apache.org/jira/browse/KAFKA-5209?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Viktor Somogyi reassigned KAFKA-5209:

-

Assignee: Umesh Chaudhary

> Transient failure: kafka.server.MetadataRequestTest.testControllerId

>

>

> Key: KAFKA-5209

> URL: https://issues.apache.org/jira/browse/KAFKA-5209

> Project: Kafka

> Issue Type: Sub-task

> Components: unit tests

>Reporter: Guozhang Wang

>Assignee: Umesh Chaudhary

>Priority: Major

>

> {code}

> Stacktrace

> java.lang.NullPointerException

> at kafka.server.MetadataRequestTest.testControllerId(MetadataRequestTest.scala:57)
> at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
> at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)
> at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
> at java.lang.reflect.Method.invoke(Method.java:498)
> at org.junit.runners.model.FrameworkMethod$1.runReflectiveCall(FrameworkMethod.java:50)
> at org.junit.internal.runners.model.ReflectiveCallable.run(ReflectiveCallable.java:12)
> at org.junit.runners.model.FrameworkMethod.invokeExplosively(FrameworkMethod.java:47)
> at org.junit.internal.runners.statements.InvokeMethod.evaluate(InvokeMethod.java:17)
> at org.junit.internal.runners.statements.RunBefores.evaluate(RunBefores.java:26)
> at org.junit.internal.runners.statements.RunAfters.evaluate(RunAfters.java:27)
> at org.junit.runners.ParentRunner.runLeaf(ParentRunner.java:325)
> at org.junit.runners.BlockJUnit4ClassRunner.runChild(BlockJUnit4ClassRunner.java:78)
> at org.junit.runners.BlockJUnit4ClassRunner.runChild(BlockJUnit4ClassRunner.java:57)
> at org.junit.runners.ParentRunner$3.run(ParentRunner.java:290)
> at org.junit.runners.ParentRunner$1.schedule(ParentRunner.java:71)
> at org.junit.runners.ParentRunner.runChildren(ParentRunner.java:288)
> at org.junit.runners.ParentRunner.access$000(ParentRunner.java:58)
> at org.junit.runners.ParentRunner$2.evaluate(ParentRunner.java:268)
> at org.junit.runners.ParentRunner.run(ParentRunner.java:363)
> at org.gradle.api.internal.tasks.testing.junit.JUnitTestClassExecuter.runTestClass(JUnitTestClassExecuter.java:114)
> at org.gradle.api.internal.tasks.testing.junit.JUnitTestClassExecuter.execute(JUnitTestClassExecuter.java:57)
> at org.gradle.api.internal.tasks.testing.junit.JUnitTestClassProcessor.processTestClass(JUnitTestClassProcessor.java:66)
> at org.gradle.api.internal.tasks.testing.SuiteTestClassProcessor.processTestClass(SuiteTestClassProcessor.java:51)
> at sun.reflect.GeneratedMethodAccessor50.invoke(Unknown Source)
> at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
> at java.lang.reflect.Method.invoke(Method.java:498)
> at org.gradle.internal.dispatch.ReflectionDispatch.dispatch(ReflectionDispatch.java:35)
> at org.gradle.internal.dispatch.ReflectionDispatch.dispatch(ReflectionDispatch.java:24)
> at org.gradle.internal.dispatch.ContextClassLoaderDispatch.dispatch(ContextClassLoaderDispatch.java:32)
> at org.gradle.internal.dispatch.ProxyDispatchAdapter$DispatchingInvocationHandler.invoke(ProxyDispatchAdapter.java:93)
> at com.sun.proxy.$Proxy2.processTestClass(Unknown Source)
> at org.gradle.api.internal.tasks.testing.worker.TestWorker.processTestClass(TestWorker.java:109)
> at sun.reflect.GeneratedMethodAccessor49.invoke(Unknown Source)
> at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
> at java.lang.reflect.Method.invoke(Method.java:498)
> at org.gradle.internal.dispatch.ReflectionDispatch.dispatch(ReflectionDispatch.java:35)
> at org.gradle.internal.dispatch.ReflectionDispatch.dispatch(ReflectionDispatch.java:24)
> at org.gradle.internal.remote.internal.hub.MessageHubBackedObjectConnection$DispatchWrapper.dispatch(MessageHubBackedObjectConnection.java:147)
> at org.gradle.internal.remote.internal.hub.MessageHubBackedObjectConnection$DispatchWrapper.dispatch(MessageHubBackedObjectConnection.java:129)
> at org.gradle.internal.remote.internal.hub.MessageHub$Handler.run(MessageHub.java:404)
> at org.gradle.internal.concurrent.ExecutorPolicy$CatchAndRecordFailures.onExecute(ExecutorPolicy.java:63)
> at org.gradle.internal.concurrent.StoppableExecutorImpl$1.run(StoppableExecutorImpl.java:46)
> at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)
> at java.util.concurrent.ThreadPoolExecu

[jira] [Commented] (KAFKA-5209) Transient failure: kafka.server.MetadataRequestTest.testControllerId

[

https://issues.apache.org/jira/browse/KAFKA-5209?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=16709880#comment-16709880

]

Viktor Somogyi commented on KAFKA-5209:

---

[~umesh9...@gmail.com] are you planning to continue this? I've assigned it to you, but if you think you won't continue, I'm happy to take over.


[jira] [Commented] (KAFKA-7707) Some code is not necessary

[

https://issues.apache.org/jira/browse/KAFKA-7707?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=16709870#comment-16709870

]

Sönke Liebau commented on KAFKA-7707:

-

Hi [~huangyimingha...@163.com],

thanks for looking into this and opening a ticket!

I've taken the liberty of replacing your IntelliJ screenshot with a link to the relevant code on GitHub; I hope that is OK with you.

Also, could you please explain why you think this code will never be executed?

> Some code is not necessary

> --

>

> Key: KAFKA-7707

> URL: https://issues.apache.org/jira/browse/KAFKA-7707

> Project: Kafka

> Issue Type: Improvement

>Reporter: huangyiming

>Priority: Minor

> Attachments: image-2018-12-05-18-01-46-886.png

>

>

> In the trunk branch in

> [BufferPool.java|https://github.com/apache/kafka/blob/578205cadd0bf64d671c6c162229c4975081a9d6/clients/src/main/java/org/apache/kafka/clients/producer/internals/BufferPool.java#L174],

> i think the code can clean,is not necessary,it will never execute

> {code:java}
> if (!(this.nonPooledAvailableMemory == 0 && this.free.isEmpty()) &&
>         !this.waiters.isEmpty())
>     this.waiters.peekFirst().signal();
> {code}
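The quoted guard is easier to reason about after applying De Morgan's law: `!(nonPooledAvailableMemory == 0 && free.isEmpty())` is equivalent to `nonPooledAvailableMemory != 0 || !free.isEmpty()`, i.e. "some memory is still available". The next waiter is therefore signalled only when memory is left over and at least one thread is still queued in `waiters`. The sketch below evaluates just that predicate on plain values; `SignalConditionSketch` and `shouldSignalNextWaiter` are hypothetical names, and the sketch says nothing about whether concurrent callers of `BufferPool` can actually reach that state, which is the open question in the comment above.

```java
class SignalConditionSketch {

    /**
     * Hypothetical restatement of the quoted guard using plain values instead of
     * BufferPool's fields: the non-pooled memory, whether the free list is empty,
     * and how many threads are queued in waiters.
     */
    static boolean shouldSignalNextWaiter(long nonPooledAvailableMemory,
                                          boolean freeListEmpty,
                                          int queuedWaiters) {
        // Original form: !(nonPooled == 0 && free.isEmpty()) && !waiters.isEmpty()
        boolean memoryLeft = nonPooledAvailableMemory != 0 || !freeListEmpty; // De Morgan
        return memoryLeft && queuedWaiters > 0;
    }

    public static void main(String[] args) {
        System.out.println(shouldSignalNextWaiter(0, true, 2));     // false: no memory left
        System.out.println(shouldSignalNextWaiter(1024, true, 2));  // true: memory left, waiters queued
        System.out.println(shouldSignalNextWaiter(0, false, 1));    // true: a pooled buffer is free
        System.out.println(shouldSignalNextWaiter(1024, false, 0)); // false: nobody is waiting
    }
}
```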

--

This message was sent by Atlassian JIRA

(v7.6.3#76005)

[jira] [Commented] (KAFKA-5383) Additional Test Cases for ReplicaManager

[ https://issues.apache.org/jira/browse/KAFKA-5383?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=16709926#comment-16709926 ]

Viktor Somogyi commented on KAFKA-5383:
---

[~hachikuji] do you mind if I pick this up? Since I've been working on the incremental partition reassignment, I think this is a good candidate for me.

> Additional Test Cases for ReplicaManager
>
> Key: KAFKA-5383
> URL: https://issues.apache.org/jira/browse/KAFKA-5383
> Project: Kafka
> Issue Type: Sub-task
> Components: clients, core, producer
> Reporter: Jason Gustafson
> Priority: Major
> Fix For: 2.2.0
>
> KAFKA-5355 and KAFKA-5376 have shown that current testing of ReplicaManager
> is inadequate. This is definitely the case when it comes to KIP-98 and is
> likely true in general. We should improve this.

[jira] [Commented] (KAFKA-5453) Controller may miss requests sent to the broker when zk session timeout happens.

[

https://issues.apache.org/jira/browse/KAFKA-5453?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=16709927#comment-16709927

]

Viktor Somogyi commented on KAFKA-5453:

---

[~becket_qin] I'd like to pick this up if you don't mind; I'm interested in this issue.

> Controller may miss requests sent to the broker when zk session timeout

> happens.

>

>

> Key: KAFKA-5453

> URL: https://issues.apache.org/jira/browse/KAFKA-5453

> Project: Kafka

> Issue Type: Bug

> Components: core

>Affects Versions: 0.11.0.0

>Reporter: Jiangjie Qin

>Priority: Major

> Fix For: 2.2.0

>

>

> The issue I encountered was the following:

> 1. Partition reassignment was in progress: one replica of a partition was being reassigned from broker 1 to broker 2.

> 2. The controller received an ISR change notification indicating that broker 2 had caught up.

> 3. The controller was sending a StopReplicaRequest to broker 1.

> 4. Broker 1's ZooKeeper session timed out. The controller removed broker 1 from the cluster and cleaned up the queue, i.e. the StopReplicaRequest was removed from the ControllerChannelManager.

> 5. Broker 1 reconnected to ZooKeeper and acted as if it were still a follower replica of the partition.

> 6. From then on, broker 1 always receives an exception from the leader because it is not in the replica list.

> I'm not sure what the correct fix is here. It seems that broker 1 should, in this case, ask the controller for the latest replica assignment.

> There are two related bugs:

> 1. When a {{NotAssignedReplicaException}} is thrown from {{Partition.updateReplicaLogReadResult()}}, the other partitions in the same request fail to update their fetch timestamp and offset, and thus also drop out of the ISR.

> 2. The {{NotAssignedReplicaException}} is not properly returned to the replicas; an UnknownServerException is returned instead.
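The first related bug is about error isolation inside a single fetch request: an exception for one partition should not stop the follower state of the other partitions from being updated, and the specific error should be reported instead of a generic one. A minimal sketch of that per-partition pattern follows. `FetchIsolationSketch`, `NotAssignedReplica`, `Outcome`, `updateFollowerFetch` and the map of last-fetch timestamps are hypothetical stand-ins, not Kafka's `ReplicaManager` or `Partition` code, and this is not necessarily how the ticket was resolved.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;

class FetchIsolationSketch {

    // Hypothetical stand-in for NotAssignedReplicaException.
    static final class NotAssignedReplica extends RuntimeException {
        NotAssignedReplica(String partition) {
            super(partition + " is not assigned to this replica");
        }
    }

    // Hypothetical per-partition result reported back to the follower.
    enum Outcome { NONE, NOT_ASSIGNED_REPLICA, UNKNOWN_SERVER_ERROR }

    // Hypothetical per-partition update: records the fetch time, or throws if unassigned.
    static void updateFollowerFetch(String partition, Set<String> assigned,
                                    Map<String, Long> lastFetchMs, long nowMs) {
        if (!assigned.contains(partition)) {
            throw new NotAssignedReplica(partition);
        }
        lastFetchMs.put(partition, nowMs);
    }

    // Each partition gets its own try block, so one failure does not skip the
    // partitions after it, and the specific error is kept per partition.
    static Map<String, Outcome> handleFetch(List<String> requested, Set<String> assigned,
                                            Map<String, Long> lastFetchMs, long nowMs) {
        Map<String, Outcome> result = new LinkedHashMap<>();
        for (String partition : requested) {
            try {
                updateFollowerFetch(partition, assigned, lastFetchMs, nowMs);
                result.put(partition, Outcome.NONE);
            } catch (NotAssignedReplica e) {
                result.put(partition, Outcome.NOT_ASSIGNED_REPLICA);
            } catch (RuntimeException e) {
                result.put(partition, Outcome.UNKNOWN_SERVER_ERROR); // only truly unexpected errors
            }
        }
        return result;
    }

    public static void main(String[] args) {
        Map<String, Long> lastFetchMs = new LinkedHashMap<>();
        Map<String, Outcome> result = handleFetch(
                Arrays.asList("t-0", "t-1", "t-2"),
                new HashSet<>(Arrays.asList("t-0", "t-2")),
                lastFetchMs, 1000L);
        System.out.println(result);      // {t-0=NONE, t-1=NOT_ASSIGNED_REPLICA, t-2=NONE}
        System.out.println(lastFetchMs); // {t-0=1000, t-2=1000}
    }
}
```

The key point is that partition `t-2`, which comes after the failing `t-1`, still has its fetch time recorded.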


[jira] [Assigned] (KAFKA-7703) KafkaConsumer.position may return a wrong offset after "seekToEnd" is called

[ https://issues.apache.org/jira/browse/KAFKA-7703?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Viktor Somogyi reassigned KAFKA-7703:
-

Assignee: Viktor Somogyi

> KafkaConsumer.position may return a wrong offset after "seekToEnd" is called
>
> Key: KAFKA-7703
> URL: https://issues.apache.org/jira/browse/KAFKA-7703
> Project: Kafka
> Issue Type: Bug
> Components: clients
> Affects Versions: 1.1.0, 1.1.1, 2.0.0, 2.0.1, 2.1.0
> Reporter: Shixiong Zhu
> Assignee: Viktor Somogyi
> Priority: Major
>
> After "seekToEnd" is called, "KafkaConsumer.position" may return a wrong
> offset set by another reset request.
> Here is a reproducer:
> https://github.com/zsxwing/kafka/commit/4e1aa11bfa99a38ac1e2cb0872c055db56b33246
> In this reproducer, "poll(0)" will send an "earliest" request in the background.
> However, after "seekToEnd" is called, due to a race condition in
> "Fetcher.resetOffsetIfNeeded" (it's not atomic: "seekToEnd" could happen
> between the check
> https://github.com/zsxwing/kafka/commit/4e1aa11bfa99a38ac1e2cb0872c055db56b33246#diff-b45245913eaae46aa847d2615d62cde0R585
> and the seek
> https://github.com/zsxwing/kafka/commit/4e1aa11bfa99a38ac1e2cb0872c055db56b33246#diff-b45245913eaae46aa847d2615d62cde0R605),
> "KafkaConsumer.position" may return an "earliest" offset.
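The race described in KAFKA-7703 is a check-then-act problem: the handler for the background "earliest" response checks that a reset is still needed, and "seekToEnd" can change the requested reset before that handler's seek lands. The sketch below replays that interleaving by hand on a toy model, so the outcome is deterministic. `OffsetResetRaceSketch`, `PartitionState`, `Reset`, `seekIfStillRequested` and the offsets 0 and 100 are hypothetical and much simpler than Kafka's `Fetcher`/`SubscriptionState`. The guarded variant shows one possible way to close the gap (check and seek under one lock, and only if the response answers the reset that is still pending), not necessarily the fix that was merged for this ticket.

```java
class OffsetResetRaceSketch {

    enum Reset { EARLIEST, LATEST }

    // Hypothetical, heavily simplified per-partition state.
    static final class PartitionState {
        private Reset pendingReset; // reset still waiting for a lookup result, or null
        private long position = -1;

        synchronized void requestReset(Reset strategy) { pendingReset = strategy; }

        synchronized boolean isResetNeeded() { return pendingReset != null; }

        synchronized void seek(long offset) {
            position = offset;
            pendingReset = null;
        }

        // Check and act atomically, and only for the reset that is still pending.
        synchronized boolean seekIfStillRequested(Reset answeredFor, long offset) {
            if (pendingReset != answeredFor) {
                return false; // stale response: a different reset was requested meanwhile
            }
            seek(offset);
            return true;
        }

        synchronized long position() { return position; }
    }

    // Unguarded: the check and the seek are separate steps, and seekToEnd() lands in between.
    static long racyInterleaving(long earliestOffset, long endOffset) {
        PartitionState s = new PartitionState();
        s.requestReset(Reset.EARLIEST);     // poll(0): background "earliest" lookup
        boolean needed = s.isResetNeeded(); // response handler: check...
        s.requestReset(Reset.LATEST);       // user thread: seekToEnd()
        if (needed) {
            s.seek(earliestOffset);         // ...then act on the stale check
        }
        if (s.isResetNeeded()) {
            s.seek(endOffset);              // a LATEST lookup runs only if still pending; it is not
        }
        return s.position();
    }

    // Guarded: the stale EARLIEST response is rejected, so the LATEST result wins.
    static long guardedInterleaving(long earliestOffset, long endOffset) {
        PartitionState s = new PartitionState();
        s.requestReset(Reset.EARLIEST);
        s.requestReset(Reset.LATEST);       // seekToEnd() before the EARLIEST response lands
        s.seekIfStillRequested(Reset.EARLIEST, earliestOffset);
        s.seekIfStillRequested(Reset.LATEST, endOffset);
        return s.position();
    }

    public static void main(String[] args) {
        System.out.println("racy position:    " + racyInterleaving(0L, 100L));    // 0
        System.out.println("guarded position: " + guardedInterleaving(0L, 100L)); // 100
    }
}
```

With the guarded method, "seekToEnd" can only land entirely before or entirely after the stale seek: before, the stale response is rejected; after, the LATEST request re-arms the reset, so in this model the end offset wins either way.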

[jira] [Commented] (KAFKA-7703) KafkaConsumer.position may return a wrong offset after "seekToEnd" is called

[ https://issues.apache.org/jira/browse/KAFKA-7703?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=16710012#comment-16710012 ]

Viktor Somogyi commented on KAFKA-7703:
---

[~zsxwing] I'll pick this up if you don't mind and look into it.

[jira] [Resolved] (KAFKA-7697) Possible deadlock in kafka.cluster.Partition

[ https://issues.apache.org/jira/browse/KAFKA-7697?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Rajini Sivaram resolved KAFKA-7697.
---

Resolution: Fixed
Reviewer: Jason Gustafson

> Possible deadlock in kafka.cluster.Partition
>
> Key: KAFKA-7697
> URL: https://issues.apache.org/jira/browse/KAFKA-7697
> Project: Kafka
> Issue Type: Bug
> Affects Versions: 2.1.0
> Reporter: Gian Merlino
> Assignee: Rajini Sivaram
> Priority: Blocker
> Fix For: 2.2.0, 2.1.1
>
> Attachments: threaddump.txt
>
> After upgrading a fairly busy broker from 0.10.2.0 to 2.1.0, it locked up
> within a few minutes (by "locked up" I mean that all request handler threads
> were busy, and other brokers reported that they couldn't communicate with
> it). I restarted it a few times and it did the same thing each time. After
> downgrading to 0.10.2.0, the broker was stable. I attached a thread dump from
> the last attempt on 2.1.0 that shows lots of kafka-request-handler- threads
> trying to acquire the leaderIsrUpdateLock lock in kafka.cluster.Partition.
> It jumps out that there are two threads that already hold some read lock
> (can't tell which one) and are trying to acquire a second one (on two
> different read locks: 0x000708184b88 and 0x00070821f188):
> kafka-request-handler-1 and kafka-request-handler-4. Both are handling a
> produce request, and in the process of doing so, are calling
> Partition.fetchOffsetSnapshot while trying to complete a DelayedFetch. At the
> same time, both of those locks have writers from other threads waiting on
> them (kafka-request-handler-2 and kafka-scheduler-6). Neither of those locks
> appears to be held by a writer (if only because no threads in the dump
> are deep enough in inWriteLock to indicate that).
> ReentrantReadWriteLock in nonfair mode prioritizes waiting writers over
> readers. Is it possible that kafka-request-handler-1 and
> kafka-request-handler-4 are each trying to read-lock the partition that is
> currently locked by the other one, and they're both parked waiting for
> kafka-request-handler-2 and kafka-scheduler-6 to get write locks, which they
> never will, because the former two threads own read locks and aren't giving
> them up?
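The reporter's hypothesis hinges on how a nonfair `java.util.concurrent.locks.ReentrantReadWriteLock` treats a new reader once a writer is queued. The standalone sketch below shows that behavior on a single lock: one thread holds the read lock, a writer parks in the queue, and a second thread that holds no read lock then fails to get the read lock within a 200 ms timeout. `QueuedWriterSketch` and its methods are made-up names, not Kafka code. The Javadoc only says the entry order in nonfair mode is unspecified; the blocking shown here comes from a writer-starvation heuristic in the OpenJDK implementation, so treat it as implementation behavior. A thread that already holds the read lock can still re-acquire it, which is why the suspected cycle needs two different partition locks: each handler holds one read lock and waits, behind a queued writer, for the other.

```java
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicBoolean;
import java.util.concurrent.locks.ReentrantReadWriteLock;

class QueuedWriterSketch {

    /** Returns whether a new reader got the read lock while a writer was queued. */
    static boolean newReaderAcquiresBehindQueuedWriter() {
        try {
            return runScenario();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
    }

    private static boolean runScenario() throws InterruptedException {
        ReentrantReadWriteLock lock = new ReentrantReadWriteLock(); // nonfair by default
        lock.readLock().lock(); // this thread plays an existing read-lock holder

        // A writer arrives; it has to wait while the read lock is held.
        Thread writer = new Thread(() -> {
            lock.writeLock().lock();
            lock.writeLock().unlock();
        });
        writer.start();
        // Wait until the writer is actually parked in the lock's queue.
        while (!lock.hasQueuedThread(writer) || writer.getState() != Thread.State.WAITING) {
            Thread.sleep(1);
        }

        // A second thread that holds no read lock now asks for one, with a timeout.
        AtomicBoolean acquired = new AtomicBoolean(false);
        Thread reader = new Thread(() -> {
            try {
                if (lock.readLock().tryLock(200, TimeUnit.MILLISECONDS)) {
                    acquired.set(true);
                    lock.readLock().unlock();
                }
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        reader.start();
        reader.join();

        lock.readLock().unlock(); // release: the queued writer can now proceed
        writer.join();
        return acquired.get();
    }

    public static void main(String[] args) {
        // On OpenJDK this is expected to print false: the new reader waits behind the writer.
        System.out.println("new reader acquired: " + newReaderAcquiresBehindQueuedWriter());
    }
}
```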

[jira] [Assigned] (KAFKA-5453) Controller may miss requests sent to the broker when zk session timeout happens.

[