[jira] [Updated] (HDDS-3288) Update default RPC handler SCM/OM count to 100

[

https://issues.apache.org/jira/browse/HDDS-3288?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Mukul Kumar Singh updated HDDS-3288:

Fix Version/s: 0.6.0

Resolution: Fixed

Status: Resolved (was: Patch Available)

Thanks for the contribution [~rakeshr]. I have committed this.

> Update default RPC handler SCM/OM count to 100

> ---

>

> Key: HDDS-3288

> URL: https://issues.apache.org/jira/browse/HDDS-3288

> Project: Hadoop Distributed Data Store

> Issue Type: Improvement

> Components: om, SCM

>Reporter: Rakesh Radhakrishnan

>Assignee: Rakesh Radhakrishnan

>Priority: Minor

> Labels: pull-request-available

> Fix For: 0.6.0

>

> Time Spent: 20m

> Remaining Estimate: 0h

>

> Presently, default PC handler count of {{ozone.scm.handler.count.key=10}} and

> {{ozone.om.handler.count.key=20}} are too small values and its good to

> increase the default values to a realistic value.

> {code:java}

> ozone.om.handler.count.key=100

> ozone.scm.handler.count.key=100

> {code}

>

>

--

This message was sent by Atlassian Jira

(v8.3.4#803005)

-

To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org

For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] mukul1987 merged pull request #729: HDDS-3288: Update default RPC handler SCM/OM count to 100

mukul1987 merged pull request #729: HDDS-3288: Update default RPC handler SCM/OM count to 100 URL: https://github.com/apache/hadoop-ozone/pull/729 This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[jira] [Comment Edited] (HDDS-3241) Invalid container reported to SCM should be deleted

[

https://issues.apache.org/jira/browse/HDDS-3241?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17069198#comment-17069198

]

Yiqun Lin edited comment on HDDS-3241 at 3/28/20, 2:55 AM:

---

Thanks for the comments, [~elek] / [~msingh].

Actually current SCM safemode can also ensure this behavior is safe enough once

we startup SCM with wrong container/pipeline db files. And then leads large

containers deleted. This should not happen because SCM won't exit safemode

firstly since DN containers reported will not reach the safemode threshold

anyway.

Also I have mentioned another case that in large clusters, the node sent to

repair and come back to cluster again. SCM deletion behavior can help

automation cleanup Datanode stale container datas. This is also one common

cases.

I have updated the PR to make this configurable and disabled by default. Please

help have a look, thanks.

was (Author: linyiqun):

Thanks for the comments, [~elek] / [~msingh].

Actually current SCM safemode can also protect this behavior once we startup

SCM with wrong container/pipeline db files. And then leads large containers

deleted. This should not happen because SCM won't exit safemode firstly since

DN containers reported will not reach the safemode threshold anyway.

Also I have mentioned another case that in large clusters, the node sent to

repair and come back to cluster again. SCM deletion behavior can help

automation cleanup Datanode stale container datas. This is also one common

cases.

I have updated the PR to make this configurable and disabled by default. Please

help have a look, thanks.

> Invalid container reported to SCM should be deleted

> ---

>

> Key: HDDS-3241

> URL: https://issues.apache.org/jira/browse/HDDS-3241

> Project: Hadoop Distributed Data Store

> Issue Type: Bug

>Affects Versions: 0.4.1

>Reporter: Yiqun Lin

>Assignee: Yiqun Lin

>Priority: Major

> Labels: pull-request-available

> Time Spent: 10m

> Remaining Estimate: 0h

>

> For the invalid or out-updated container reported by Datanode,

> ContainerReportHandler in SCM only prints error log and doesn't

> take any action.

> {noformat}

> 2020-03-15 05:19:41,072 ERROR

> org.apache.hadoop.hdds.scm.container.ContainerReportHandler: Received

> container report for an unknown container 37 from datanode

> 0d98dfab-9d34-46c3-93fd-6b64b65ff543{ip: xx.xx.xx.xx, host: lyq-xx.xx.xx.xx,

> networkLocation: /dc2/rack1, certSerialId: null}.

> org.apache.hadoop.hdds.scm.container.ContainerNotFoundException: Container

> with id #37 not found.

> at

> org.apache.hadoop.hdds.scm.container.states.ContainerStateMap.checkIfContainerExist(ContainerStateMap.java:542)

> at

> org.apache.hadoop.hdds.scm.container.states.ContainerStateMap.getContainerInfo(ContainerStateMap.java:188)

> at

> org.apache.hadoop.hdds.scm.container.ContainerStateManager.getContainer(ContainerStateManager.java:484)

> at

> org.apache.hadoop.hdds.scm.container.SCMContainerManager.getContainer(SCMContainerManager.java:204)

> at

> org.apache.hadoop.hdds.scm.container.AbstractContainerReportHandler.processContainerReplica(AbstractContainerReportHandler.java:85)

> at

> org.apache.hadoop.hdds.scm.container.ContainerReportHandler.processContainerReplicas(ContainerReportHandler.java:126)

> at

> org.apache.hadoop.hdds.scm.container.ContainerReportHandler.onMessage(ContainerReportHandler.java:97)

> at

> org.apache.hadoop.hdds.scm.container.ContainerReportHandler.onMessage(ContainerReportHandler.java:46)

> at

> org.apache.hadoop.hdds.server.events.SingleThreadExecutor.lambda$onMessage$1(SingleThreadExecutor.java:81)

> at

> java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)

> at

> java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617)

> at java.lang.Thread.run(Thread.java:745)

> 2020-03-15 05:19:41,073 ERROR

> org.apache.hadoop.hdds.scm.container.ContainerReportHandler: Received

> container report for an unknown container 38 from datanode

> 0d98dfab-9d34-46c3-93fd-6b64b65ff543{ip: xx.xx.xx.xx, host: lyq-xx.xx.xx.xx,

> networkLocation: /dc2/rack1, certSerialId: null}.

> org.apache.hadoop.hdds.scm.container.ContainerNotFoundException: Container

> with id #38 not found.

> at

> org.apache.hadoop.hdds.scm.container.states.ContainerStateMap.checkIfContainerExist(ContainerStateMap.java:542)

> at

> org.apache.hadoop.hdds.scm.container.states.ContainerStateMap.getContainerInfo(ContainerStateMap.java:188)

> at

> org.apache.hadoop.hdds.scm.container.ContainerStateManager.getContainer(ContainerStateManager.java:484)

> at

> org.apache.hadoop.hdds.scm.cont

[jira] [Commented] (HDDS-3241) Invalid container reported to SCM should be deleted

[

https://issues.apache.org/jira/browse/HDDS-3241?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17069198#comment-17069198

]

Yiqun Lin commented on HDDS-3241:

-

Thanks for the comments, [~elek] / [~msingh].

Actually current SCM safemode can also protect this behavior once we startup

SCM with wrong container/pipeline db files. And then leads large containers

deleted. This should not happen because SCM won't exit safemode firstly since

DN containers reported will not reach the safemode threshold anyway.

Also I have mentioned another case that in large clusters, the node sent to

repair and come back to cluster again. SCM deletion behavior can help

automation cleanup Datanode stale container datas. This is also one common

cases.

I have updated the PR to make this configurable and disabled by default. Please

help have a look, thanks.

> Invalid container reported to SCM should be deleted

> ---

>

> Key: HDDS-3241

> URL: https://issues.apache.org/jira/browse/HDDS-3241

> Project: Hadoop Distributed Data Store

> Issue Type: Bug

>Affects Versions: 0.4.1

>Reporter: Yiqun Lin

>Assignee: Yiqun Lin

>Priority: Major

> Labels: pull-request-available

> Time Spent: 10m

> Remaining Estimate: 0h

>

> For the invalid or out-updated container reported by Datanode,

> ContainerReportHandler in SCM only prints error log and doesn't

> take any action.

> {noformat}

> 2020-03-15 05:19:41,072 ERROR

> org.apache.hadoop.hdds.scm.container.ContainerReportHandler: Received

> container report for an unknown container 37 from datanode

> 0d98dfab-9d34-46c3-93fd-6b64b65ff543{ip: xx.xx.xx.xx, host: lyq-xx.xx.xx.xx,

> networkLocation: /dc2/rack1, certSerialId: null}.

> org.apache.hadoop.hdds.scm.container.ContainerNotFoundException: Container

> with id #37 not found.

> at

> org.apache.hadoop.hdds.scm.container.states.ContainerStateMap.checkIfContainerExist(ContainerStateMap.java:542)

> at

> org.apache.hadoop.hdds.scm.container.states.ContainerStateMap.getContainerInfo(ContainerStateMap.java:188)

> at

> org.apache.hadoop.hdds.scm.container.ContainerStateManager.getContainer(ContainerStateManager.java:484)

> at

> org.apache.hadoop.hdds.scm.container.SCMContainerManager.getContainer(SCMContainerManager.java:204)

> at

> org.apache.hadoop.hdds.scm.container.AbstractContainerReportHandler.processContainerReplica(AbstractContainerReportHandler.java:85)

> at

> org.apache.hadoop.hdds.scm.container.ContainerReportHandler.processContainerReplicas(ContainerReportHandler.java:126)

> at

> org.apache.hadoop.hdds.scm.container.ContainerReportHandler.onMessage(ContainerReportHandler.java:97)

> at

> org.apache.hadoop.hdds.scm.container.ContainerReportHandler.onMessage(ContainerReportHandler.java:46)

> at

> org.apache.hadoop.hdds.server.events.SingleThreadExecutor.lambda$onMessage$1(SingleThreadExecutor.java:81)

> at

> java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)

> at

> java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617)

> at java.lang.Thread.run(Thread.java:745)

> 2020-03-15 05:19:41,073 ERROR

> org.apache.hadoop.hdds.scm.container.ContainerReportHandler: Received

> container report for an unknown container 38 from datanode

> 0d98dfab-9d34-46c3-93fd-6b64b65ff543{ip: xx.xx.xx.xx, host: lyq-xx.xx.xx.xx,

> networkLocation: /dc2/rack1, certSerialId: null}.

> org.apache.hadoop.hdds.scm.container.ContainerNotFoundException: Container

> with id #38 not found.

> at

> org.apache.hadoop.hdds.scm.container.states.ContainerStateMap.checkIfContainerExist(ContainerStateMap.java:542)

> at

> org.apache.hadoop.hdds.scm.container.states.ContainerStateMap.getContainerInfo(ContainerStateMap.java:188)

> at

> org.apache.hadoop.hdds.scm.container.ContainerStateManager.getContainer(ContainerStateManager.java:484)

> at

> org.apache.hadoop.hdds.scm.container.SCMContainerManager.getContainer(SCMContainerManager.java:204)

> at

> org.apache.hadoop.hdds.scm.container.AbstractContainerReportHandler.processContainerReplica(AbstractContainerReportHandler.java:85)

> at

> org.apache.hadoop.hdds.scm.container.ContainerReportHandler.processContainerReplicas(ContainerReportHandler.java:126)

> at

> org.apache.hadoop.hdds.scm.container.ContainerReportHandler.onMessage(ContainerReportHandler.java:97)

> at

> org.apache.hadoop.hdds.scm.container.ContainerReportHandler.onMessage(ContainerReportHandler.java:46)

> at

> org.apache.hadoop.hdds.server.events.SingleThreadExecutor.lambda$onMessage$1(SingleThreadExecutor.java:81)

> at

> java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadP

[jira] [Created] (HDDS-3296) Ozone admin should always have read/write ACL permission on ozone objects

Xiaoyu Yao created HDDS-3296: Summary: Ozone admin should always have read/write ACL permission on ozone objects Key: HDDS-3296 URL: https://issues.apache.org/jira/browse/HDDS-3296 Project: Hadoop Distributed Data Store Issue Type: Bug Affects Versions: 0.5.0 Reporter: Xiaoyu Yao Assignee: Xiaoyu Yao Ozone admin should always have read/write acl permission to ozone objects. This way, if owner incorrectly set the acls and lose access, admin can always help to get acces back. -- This message was sent by Atlassian Jira (v8.3.4#803005) - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[jira] [Updated] (HDDS-3291) Write operation when both OM followers are shutdown

[

https://issues.apache.org/jira/browse/HDDS-3291?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

ASF GitHub Bot updated HDDS-3291:

-

Labels: pull-request-available (was: )

> Write operation when both OM followers are shutdown

> ---

>

> Key: HDDS-3291

> URL: https://issues.apache.org/jira/browse/HDDS-3291

> Project: Hadoop Distributed Data Store

> Issue Type: Bug

>Reporter: Nilotpal Nandi

>Assignee: Bharat Viswanadham

>Priority: Major

> Labels: pull-request-available

>

> steps taken :

> --

> 1. In OM HA environment, shutdown both OM followers.

> 2. Start PUT key operation.

> PUT key operation is hung.

> Cluster details :

> https://quasar-vwryte-1.quasar-vwryte.root.hwx.site:7183/cmf/home

> Snippet of OM log on LEADER:

> {code:java}

> 2020-03-24 04:16:46,249 WARN org.apache.ratis.grpc.server.GrpcLogAppender:

> om1@group-9F198C4C3682->om2-AppendLogResponseHandler: Failed appendEntries:

> org.apache.ratis.thirdparty.io.grpc.StatusRuntimeException: UNAVAILABLE: io

> exception

> 2020-03-24 04:16:46,249 WARN org.apache.ratis.grpc.server.GrpcLogAppender:

> om1@group-9F198C4C3682->om3-AppendLogResponseHandler: Failed appendEntries:

> org.apache.ratis.thirdparty.io.grpc.StatusRuntimeException: UNAVAILABLE: io

> exception

> 2020-03-24 04:16:46,250 INFO org.apache.ratis.server.impl.FollowerInfo:

> om1@group-9F198C4C3682->om2: nextIndex: updateUnconditionally 360 -> 359

> 2020-03-24 04:16:46,250 INFO org.apache.ratis.server.impl.FollowerInfo:

> om1@group-9F198C4C3682->om3: nextIndex: updateUnconditionally 360 -> 359

> 2020-03-24 04:16:46,750 WARN org.apache.ratis.grpc.server.GrpcLogAppender:

> om1@group-9F198C4C3682->om3-AppendLogResponseHandler: Failed appendEntries:

> org.apache.ratis.thirdparty.io.grpc.StatusRuntimeException: UNAVAILABLE: io

> exception

> 2020-03-24 04:16:46,750 WARN org.apache.ratis.grpc.server.GrpcLogAppender:

> om1@group-9F198C4C3682->om2-AppendLogResponseHandler: Failed appendEntries:

> org.apache.ratis.thirdparty.io.grpc.StatusRuntimeException: UNAVAILABLE: io

> exception

> 2020-03-24 04:16:46,750 INFO org.apache.ratis.server.impl.FollowerInfo:

> om1@group-9F198C4C3682->om3: nextIndex: updateUnconditionally 360 -> 359

> 2020-03-24 04:16:46,750 INFO org.apache.ratis.server.impl.FollowerInfo:

> om1@group-9F198C4C3682->om2: nextIndex: updateUnconditionally 360 -> 359

> 2020-03-24 04:16:47,250 WARN org.apache.ratis.grpc.server.GrpcLogAppender:

> om1@group-9F198C4C3682->om3-AppendLogResponseHandler: Failed appendEntries:

> org.apache.ratis.thirdparty.io.grpc.StatusRuntimeException: UNAVAILABLE: io

> exception

> 2020-03-24 04:16:47,251 WARN org.apache.ratis.grpc.server.GrpcLogAppender:

> om1@group-9F198C4C3682->om2-AppendLogResponseHandler: Failed appendEntries:

> org.apache.ratis.thirdparty.io.grpc.StatusRuntimeException: UNAVAILABLE: io

> exception

> 2020-03-24 04:16:47,251 INFO org.apache.ratis.server.impl.FollowerInfo:

> om1@group-9F198C4C3682->om3: nextIndex: updateUnconditionally 360 -> 359

> 2020-03-24 04:16:47,251 INFO org.apache.ratis.server.impl.FollowerInfo:

> om1@group-9F198C4C3682->om2: nextIndex: updateUnconditionally 360 -> 359

> 2020-03-24 04:16:47,751 WARN org.apache.ratis.grpc.server.GrpcLogAppender:

> om1@group-9F198C4C3682->om2-AppendLogResponseHandler: Failed appendEntries:

> org.apache.ratis.thirdparty.io.grpc.StatusRuntimeException: UNAVAILABLE: io

> exception

> 2020-03-24 04:16:47,751 WARN org.apache.ratis.grpc.server.GrpcLogAppender:

> om1@group-9F198C4C3682->om3-AppendLogResponseHandler: Failed appendEntries:

> org.apache.ratis.thirdparty.io.grpc.StatusRuntimeException: UNAVAILABLE: io

> exception

> 2020-03-24 04:16:47,752 INFO org.apache.ratis.server.impl.FollowerInfo:

> om1@group-9F198C4C3682->om2: nextIndex: updateUnconditionally 360 -> 359

> 2020-03-24 04:16:47,752 INFO org.apache.ratis.server.impl.FollowerInfo:

> om1@group-9F198C4C3682->om3: nextIndex: updateUnconditionally 360 -> 359

> 2020-03-24 04:16:48,252 WARN org.apache.ratis.grpc.server.GrpcLogAppender:

> om1@group-9F198C4C3682->om2-AppendLogResponseHandler: Failed appendEntries:

> org.apache.ratis.thirdparty.io.grpc.StatusRuntimeException: UNAVAILABLE: io

> exception

> 2020-03-24 04:16:48,252 WARN org.apache.ratis.grpc.server.GrpcLogAppender:

> om1@group-9F198C4C3682->om3-AppendLogResponseHandler: Failed appendEntries:

> org.apache.ratis.thirdparty.io.grpc.StatusRuntimeException: UNAVAILABLE: io

> exception

> 2020-03-24 04:16:48,252 INFO org.apache.ratis.server.impl.FollowerInfo:

> om1@group-9F198C4C3682->om2: nextIndex: updateUnconditionally 360 -> 359

> 2020-03-24 04:16:48,252 INFO org.apache.ratis.server.impl.FollowerInfo:

> om1@group-9F198C4C3682->om3: nextIndex: updateUnconditionally 360 -> 359

> 2020-03-24 04:16:48,752 WARN org.apache.rati

[GitHub] [hadoop-ozone] bharatviswa504 opened a new pull request #733: HDDS-3291. Write operation when both OM followers are shutdown.

bharatviswa504 opened a new pull request #733: HDDS-3291. Write operation when both OM followers are shutdown. URL: https://github.com/apache/hadoop-ozone/pull/733 ## What changes were proposed in this pull request? Add IPC client time out, so that the client will fail with socket time out exception in cases of 2 OM node failures. ## What is the link to the Apache JIRA https://issues.apache.org/jira/browse/HDDS-3291 Please replace this section with the link to the Apache JIRA) ## How was this patch tested? Tested it in docker compose cluster with 1 minute, and see that it failed finally, instead of hanging. To repro this test, we need to change leader.election.time.out value also to large value, as we need this request to be submitted to ratis, and as ratis server keeps on retry then only we will see this issue. 2020-03-27 16:25:27,625 [main] INFO RetryInvocationHandler:411 - com.google.protobuf.ServiceException: java.net.SocketTimeoutException: Call From c5263a1df1ad/172.22.0.3 to om2:9862 failed on socket timeout exception: java.net.SocketTimeoutException: 6 millis timeout while waiting for channel to be ready for read. ch : java.nio.channels.SocketChannel[connected local=/172.22.0.3:56460 remote=om2/172.22.0.4:9862]; For more details see: http://wiki.apache.org/hadoop/SocketTimeout, while invoking $Proxy19.submitRequest over nodeId=om2,nodeAddress=om2:9862 after 15 failover attempts. Trying to failover immediately. 2020-03-27 16:25:27,626 [main] ERROR OMFailoverProxyProvider:285 - Failed to connect to OMs: [nodeId=om1,nodeAddress=om1:9862, nodeId=om3,nodeAddress=om3:9862, nodeId=om2,nodeAddress=om2:9862]. Attempted 15 failovers. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] vivekratnavel commented on issue #732: HDDS-3295. Ozone admins getting Permission Denied error while creating volume

vivekratnavel commented on issue #732: HDDS-3295. Ozone admins getting Permission Denied error while creating volume URL: https://github.com/apache/hadoop-ozone/pull/732#issuecomment-605341527 @bharatviswa504 @xiaoyuyao Please review This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[jira] [Updated] (HDDS-3295) Ozone admins getting Permission Denied error while creating volume

[ https://issues.apache.org/jira/browse/HDDS-3295?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] ASF GitHub Bot updated HDDS-3295: - Labels: pull-request-available (was: ) > Ozone admins getting Permission Denied error while creating volume > --- > > Key: HDDS-3295 > URL: https://issues.apache.org/jira/browse/HDDS-3295 > Project: Hadoop Distributed Data Store > Issue Type: Bug > Components: Security >Affects Versions: 0.5.0 >Reporter: Vivek Ratnavel Subramanian >Assignee: Vivek Ratnavel Subramanian >Priority: Major > Labels: pull-request-available > > Even when a user is added to ozone.administrators, Permission Denied error > is thrown while creating a new volume. -- This message was sent by Atlassian Jira (v8.3.4#803005) - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] vivekratnavel opened a new pull request #732: HDDS-3295. Ozone admins getting Permission Denied error while creating volume

vivekratnavel opened a new pull request #732: HDDS-3295. Ozone admins getting Permission Denied error while creating volume URL: https://github.com/apache/hadoop-ozone/pull/732 ## What changes were proposed in this pull request? - get user information from om request instead of client ## What is the link to the Apache JIRA https://issues.apache.org/jira/browse/HDDS-3295 ## How was this patch tested? Tested manually in a cluster by replacing the ozone-manager jar. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[jira] [Created] (HDDS-3295) Ozone admins getting Permission Denied error while creating volume

Vivek Ratnavel Subramanian created HDDS-3295: Summary: Ozone admins getting Permission Denied error while creating volume Key: HDDS-3295 URL: https://issues.apache.org/jira/browse/HDDS-3295 Project: Hadoop Distributed Data Store Issue Type: Bug Components: Security Affects Versions: 0.5.0 Reporter: Vivek Ratnavel Subramanian Assignee: Vivek Ratnavel Subramanian Even when a user is added to ozone.administrators, Permission Denied error is thrown while creating a new volume. -- This message was sent by Atlassian Jira (v8.3.4#803005) - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[jira] [Commented] (HDDS-3266) Intermittent integration test failure due to DEADLINE_EXCEEDED

[

https://issues.apache.org/jira/browse/HDDS-3266?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17069067#comment-17069067

]

Attila Doroszlai commented on HDDS-3266:

{code:title=https://github.com/apache/hadoop-ozone/pull/582/checks?check_run_id=540143086}

ERROR freon.RandomKeyGenerator (RandomKeyGenerator.java:run(1064)) - Exception

while validating write.

...

Caused by: java.io.IOException: java.util.concurrent.ExecutionException:

org.apache.ratis.thirdparty.io.grpc.StatusRuntimeException: DEADLINE_EXCEEDED:

ClientCall started after deadline exceeded: -0.186633493s from now

{code}

> Intermittent integration test failure due to DEADLINE_EXCEEDED

> --

>

> Key: HDDS-3266

> URL: https://issues.apache.org/jira/browse/HDDS-3266

> Project: Hadoop Distributed Data Store

> Issue Type: Bug

> Components: test

>Reporter: Attila Doroszlai

>Priority: Blocker

> Attachments:

> org.apache.hadoop.ozone.client.rpc.TestSecureOzoneRpcClient-output.txt,

> org.apache.hadoop.ozone.client.rpc.TestSecureOzoneRpcClient.txt,

> org.apache.hadoop.ozone.freon.TestRandomKeyGenerator-output.txt

>

>

> {code:title=https://github.com/apache/hadoop-ozone/runs/527778966}

> Tests run: 71, Failures: 0, Errors: 1, Skipped: 3, Time elapsed: 85.254 s <<<

> FAILURE! - in org.apache.hadoop.ozone.client.rpc.TestSecureOzoneRpcClient

> testReadKeyWithCorruptedDataWithMutiNodes(org.apache.hadoop.ozone.client.rpc.TestSecureOzoneRpcClient)

> Time elapsed: 2.577 s <<< ERROR!

> java.io.IOException: Unexpected OzoneException: java.io.IOException:

> java.util.concurrent.ExecutionException:

> org.apache.ratis.thirdparty.io.grpc.StatusRuntimeException:

> DEADLINE_EXCEEDED: ClientCall started after deadline exceeded: -0.611771733s

> from now

> at

> org.apache.hadoop.hdds.scm.storage.ChunkInputStream.readChunk(ChunkInputStream.java:341)

> {code}

--

This message was sent by Atlassian Jira

(v8.3.4#803005)

-

To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org

For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] hanishakoneru commented on a change in pull request #625: HDDS-2980. Delete replayed entry from OpenKeyTable during commit

hanishakoneru commented on a change in pull request #625: HDDS-2980. Delete

replayed entry from OpenKeyTable during commit

URL: https://github.com/apache/hadoop-ozone/pull/625#discussion_r399554295

##

File path:

hadoop-ozone/ozone-manager/src/main/java/org/apache/hadoop/ozone/om/request/s3/multipart/S3MultipartUploadCommitPartRequest.java

##

@@ -147,11 +146,6 @@ public OMClientResponse

validateAndUpdateCache(OzoneManager ozoneManager,

throw new OMException("Failed to commit Multipart Upload key, as " +

openKey + "entry is not found in the openKey table",

KEY_NOT_FOUND);

- } else {

-// Check the OpenKeyTable if this transaction is a replay of ratis

logs.

Review comment:

This check was redundant. Irrespective of whether KeyCreate Request was

replayed or not, if key+clientID exits in the openKey table, then the

CommitPart request should also be executed (same as we do for KeyCommit

Request).

If the same Key part was created again, the clientID would be different.

Hence the openKey would also be different.

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org

For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[jira] [Updated] (HDDS-3266) Intermittent integration test failure due to DEADLINE_EXCEEDED

[

https://issues.apache.org/jira/browse/HDDS-3266?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Attila Doroszlai updated HDDS-3266:

---

Summary: Intermittent integration test failure due to DEADLINE_EXCEEDED

(was: Intermittent TestSecureOzoneRpcClient failure due to DEADLINE_EXCEEDED)

> Intermittent integration test failure due to DEADLINE_EXCEEDED

> --

>

> Key: HDDS-3266

> URL: https://issues.apache.org/jira/browse/HDDS-3266

> Project: Hadoop Distributed Data Store

> Issue Type: Bug

> Components: test

>Reporter: Attila Doroszlai

>Priority: Blocker

> Attachments:

> org.apache.hadoop.ozone.client.rpc.TestSecureOzoneRpcClient-output.txt,

> org.apache.hadoop.ozone.client.rpc.TestSecureOzoneRpcClient.txt,

> org.apache.hadoop.ozone.freon.TestRandomKeyGenerator-output.txt

>

>

> {code:title=https://github.com/apache/hadoop-ozone/runs/527778966}

> Tests run: 71, Failures: 0, Errors: 1, Skipped: 3, Time elapsed: 85.254 s <<<

> FAILURE! - in org.apache.hadoop.ozone.client.rpc.TestSecureOzoneRpcClient

> testReadKeyWithCorruptedDataWithMutiNodes(org.apache.hadoop.ozone.client.rpc.TestSecureOzoneRpcClient)

> Time elapsed: 2.577 s <<< ERROR!

> java.io.IOException: Unexpected OzoneException: java.io.IOException:

> java.util.concurrent.ExecutionException:

> org.apache.ratis.thirdparty.io.grpc.StatusRuntimeException:

> DEADLINE_EXCEEDED: ClientCall started after deadline exceeded: -0.611771733s

> from now

> at

> org.apache.hadoop.hdds.scm.storage.ChunkInputStream.readChunk(ChunkInputStream.java:341)

> {code}

--

This message was sent by Atlassian Jira

(v8.3.4#803005)

-

To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org

For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] bharatviswa504 edited a comment on issue #728: Master stable

bharatviswa504 edited a comment on issue #728: Master stable URL: https://github.com/apache/hadoop-ozone/pull/728#issuecomment-605312146 > @bharatviswa504 There are multiple problems. The space issue is fixed thanks to @adoroszlai (and I forget to merge it originally as he wrote). But the space issue is just one problem. > > HDDS-3234 + HDDS-3064 together can cause timeout problems visible both in integration and acceptance tests (at least this is my understanding) Because I see HDDS-3234 PR got committed after a clean run. Might be including both has caused the problem, but once it is affecting write time out and other is reads. But I am fine with reverting, but I just want to say it out here. https://github.com/apache/hadoop-ozone/runs/518369082 I will open a new PR to try out with HDDS-3234 again. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] bharatviswa504 commented on issue #728: Master stable

bharatviswa504 commented on issue #728: Master stable URL: https://github.com/apache/hadoop-ozone/pull/728#issuecomment-605312146 > @bharatviswa504 There are multiple problems. The space issue is fixed thanks to @adoroszlai (and I forget to merge it originally as he wrote). But the space issue is just one problem. > > HDDS-3234 + HDDS-3064 together can cause timeout problems visible both in integration and acceptance tests (at least this is my understanding) Because I see HDDS-3234 PR got committed after a clean run. https://github.com/apache/hadoop-ozone/runs/518369082 Can we try with reverting that change or I can open a new PR to try out? This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] elek commented on issue #728: Master stable

elek commented on issue #728: Master stable URL: https://github.com/apache/hadoop-ozone/pull/728#issuecomment-605301043 @bharatviswa504 There are multiple problems. The space issue is fixed thanks to @adoroszlai (and I forget to merge it originally as he wrote). But the space issue is just one problem. HDDS-3234 + HDDS-3064 together can cause timeout problems visible both in integration and acceptance tests (at least this is my understanding) This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[jira] [Commented] (HDDS-2011) TestRandomKeyGenerator fails due to timeout

[

https://issues.apache.org/jira/browse/HDDS-2011?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17069020#comment-17069020

]

Siyao Meng commented on HDDS-2011:

--

Also found in:

https://github.com/apache/hadoop-ozone/pull/696/checks?check_run_id=540098578

and

https://github.com/apache/hadoop-ozone/pull/582/checks?check_run_id=540143086

> TestRandomKeyGenerator fails due to timeout

> ---

>

> Key: HDDS-2011

> URL: https://issues.apache.org/jira/browse/HDDS-2011

> Project: Hadoop Distributed Data Store

> Issue Type: Bug

> Components: test

>Reporter: Attila Doroszlai

>Priority: Major

>

> {{TestRandomKeyGenerator#bigFileThan2GB}} is failing intermittently due to

> timeout in Ratis {{appendEntries}}. Commit on pipeline fails, and new

> pipeline cannot be created with 2 nodes (there are 5 nodes total).

> Most recent one:

> https://github.com/elek/ozone-ci/tree/master/trunk/trunk-nightly-pz9vg/integration/hadoop-ozone/tools

--

This message was sent by Atlassian Jira

(v8.3.4#803005)

-

To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org

For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[jira] [Assigned] (HDDS-3294) Flaky test TestContainerStateMachineFailureOnRead#testReadStateMachineFailureClosesPipeline

[

https://issues.apache.org/jira/browse/HDDS-3294?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Siyao Meng reassigned HDDS-3294:

Assignee: (was: Siyao Meng)

> Flaky test

> TestContainerStateMachineFailureOnRead#testReadStateMachineFailureClosesPipeline

> ---

>

> Key: HDDS-3294

> URL: https://issues.apache.org/jira/browse/HDDS-3294

> Project: Hadoop Distributed Data Store

> Issue Type: Bug

>Reporter: Siyao Meng

>Priority: Major

>

> Shows up in a PR: https://github.com/apache/hadoop-ozone/runs/540133363

> {code:title=log}

> [INFO] Running

> org.apache.hadoop.ozone.client.rpc.TestContainerStateMachineFailureOnRead

> [ERROR] Tests run: 1, Failures: 0, Errors: 1, Skipped: 0, Time elapsed:

> 49.766 s <<< FAILURE! - in

> org.apache.hadoop.ozone.client.rpc.TestContainerStateMachineFailureOnRead

> [ERROR]

> testReadStateMachineFailureClosesPipeline(org.apache.hadoop.ozone.client.rpc.TestContainerStateMachineFailureOnRead)

> Time elapsed: 49.623 s <<< ERROR!

> java.lang.NullPointerException

> at

> org.apache.hadoop.ozone.client.rpc.TestContainerStateMachineFailureOnRead.testReadStateMachineFailureClosesPipeline(TestContainerStateMachineFailureOnRead.java:204)

> at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

> at

> sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

> at

> sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

> at java.lang.reflect.Method.invoke(Method.java:498)

> at

> org.junit.runners.model.FrameworkMethod$1.runReflectiveCall(FrameworkMethod.java:47)

> at

> org.junit.internal.runners.model.ReflectiveCallable.run(ReflectiveCallable.java:12)

> at

> org.junit.runners.model.FrameworkMethod.invokeExplosively(FrameworkMethod.java:44)

> at

> org.junit.internal.runners.statements.InvokeMethod.evaluate(InvokeMethod.java:17)

> at

> org.junit.internal.runners.statements.FailOnTimeout$StatementThread.run(FailOnTimeout.java:74)

> {code}

> {code:title=Location of NPE at

> TestContainerStateMachineFailureOnRead.java:204}

> // delete the container dir from leader

> FileUtil.fullyDelete(new File(

> leaderDn.get().getDatanodeStateMachine()

> .getContainer().getContainerSet()

>

> .getContainer(omKeyLocationInfo.getContainerID()).getContainerData() <-- this

> line

> .getContainerPath()));

> {code}

--

This message was sent by Atlassian Jira

(v8.3.4#803005)

-

To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org

For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[jira] [Created] (HDDS-3294) Flaky test TestContainerStateMachineFailureOnRead#testReadStateMachineFailureClosesPipeline

Siyao Meng created HDDS-3294:

Summary: Flaky test

TestContainerStateMachineFailureOnRead#testReadStateMachineFailureClosesPipeline

Key: HDDS-3294

URL: https://issues.apache.org/jira/browse/HDDS-3294

Project: Hadoop Distributed Data Store

Issue Type: Bug

Reporter: Siyao Meng

Assignee: Siyao Meng

Shows up in a PR: https://github.com/apache/hadoop-ozone/runs/540133363

{code:title=log}

[INFO] Running

org.apache.hadoop.ozone.client.rpc.TestContainerStateMachineFailureOnRead

[ERROR] Tests run: 1, Failures: 0, Errors: 1, Skipped: 0, Time elapsed: 49.766

s <<< FAILURE! - in

org.apache.hadoop.ozone.client.rpc.TestContainerStateMachineFailureOnRead

[ERROR]

testReadStateMachineFailureClosesPipeline(org.apache.hadoop.ozone.client.rpc.TestContainerStateMachineFailureOnRead)

Time elapsed: 49.623 s <<< ERROR!

java.lang.NullPointerException

at

org.apache.hadoop.ozone.client.rpc.TestContainerStateMachineFailureOnRead.testReadStateMachineFailureClosesPipeline(TestContainerStateMachineFailureOnRead.java:204)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at

sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at

sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at

org.junit.runners.model.FrameworkMethod$1.runReflectiveCall(FrameworkMethod.java:47)

at

org.junit.internal.runners.model.ReflectiveCallable.run(ReflectiveCallable.java:12)

at

org.junit.runners.model.FrameworkMethod.invokeExplosively(FrameworkMethod.java:44)

at

org.junit.internal.runners.statements.InvokeMethod.evaluate(InvokeMethod.java:17)

at

org.junit.internal.runners.statements.FailOnTimeout$StatementThread.run(FailOnTimeout.java:74)

{code}

{code:title=Location of NPE at TestContainerStateMachineFailureOnRead.java:204}

// delete the container dir from leader

FileUtil.fullyDelete(new File(

leaderDn.get().getDatanodeStateMachine()

.getContainer().getContainerSet()

.getContainer(omKeyLocationInfo.getContainerID()).getContainerData() <-- this

line

.getContainerPath()));

{code}

--

This message was sent by Atlassian Jira

(v8.3.4#803005)

-

To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org

For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[jira] [Resolved] (HDDS-3281) Add timeouts to all robot tests

[ https://issues.apache.org/jira/browse/HDDS-3281?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Hanisha Koneru resolved HDDS-3281. -- Resolution: Fixed > Add timeouts to all robot tests > --- > > Key: HDDS-3281 > URL: https://issues.apache.org/jira/browse/HDDS-3281 > Project: Hadoop Distributed Data Store > Issue Type: Bug > Components: test >Reporter: Hanisha Koneru >Assignee: Hanisha Koneru >Priority: Major > Labels: pull-request-available > Time Spent: 20m > Remaining Estimate: 0h > > We have seen in some CI runs that the acceptance test suit is getting > cancelled as it runs for more than 6 hours. Because of this, the test results > and logs are also not saved. > This Jira aims to add a 5 minute timeout to all robot tests. In case some > tests require more time, we can update the timeout. This would help to > isolate the test which could be causing the whole acceptance test suit to > time out. -- This message was sent by Atlassian Jira (v8.3.4#803005) - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] hanishakoneru commented on issue #723: HDDS-3281. Add timeouts to all robot tests

hanishakoneru commented on issue #723: HDDS-3281. Add timeouts to all robot tests URL: https://github.com/apache/hadoop-ozone/pull/723#issuecomment-605255750 Thank you all for the reviews. Will merge this PR. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] hanishakoneru merged pull request #723: HDDS-3281. Add timeouts to all robot tests

hanishakoneru merged pull request #723: HDDS-3281. Add timeouts to all robot tests URL: https://github.com/apache/hadoop-ozone/pull/723 This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] aryangupta1998 opened a new pull request #730: HDDS-3289. Add a freon generator to create nested directories.

aryangupta1998 opened a new pull request #730: HDDS-3289. Add a freon generator to create nested directories. URL: https://github.com/apache/hadoop-ozone/pull/730 ## What changes were proposed in this pull request? This Jira proposes to add a functionality to freon to create nested directories. Also, multiple child directories can be created inside the leaf directory and also multiple top level directories can be created. ## What is the link to the Apache JIRA https://issues.apache.org/jira/browse/HDDS-3289 ## How was this patch tested? Tested manually by running Freon Directory Generator. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[jira] [Updated] (HDDS-3047) ObjectStore#listVolumesByUser and CreateVolumeHandler#call should get principal name by default

[

https://issues.apache.org/jira/browse/HDDS-3047?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Siyao Meng updated HDDS-3047:

-

Summary: ObjectStore#listVolumesByUser and CreateVolumeHandler#call should

get principal name by default (was: ObjectStore#listVolumesByUser and

CreateVolumeHandler#call should get full principal name by default)

> ObjectStore#listVolumesByUser and CreateVolumeHandler#call should get

> principal name by default

> ---

>

> Key: HDDS-3047

> URL: https://issues.apache.org/jira/browse/HDDS-3047

> Project: Hadoop Distributed Data Store

> Issue Type: Bug

> Components: Ozone Client

>Reporter: Siyao Meng

>Assignee: Siyao Meng

>Priority: Major

> Labels: pull-request-available

> Time Spent: 10m

> Remaining Estimate: 0h

>

> [{{ObjectStore#listVolumesByUser}}|https://github.com/apache/hadoop-ozone/blob/2fa37ef99b8fb4575169ba8326eeb677b3d2ed74/hadoop-ozone/client/src/main/java/org/apache/hadoop/ozone/client/ObjectStore.java#L249-L256]

> is using {{getShortUserName()}} by default (when user is empty or null):

> {code:java|title=ObjectStore#listVolumesByUser}

> public Iterator listVolumesByUser(String user,

> String volumePrefix, String prevVolume)

> throws IOException {

> if(Strings.isNullOrEmpty(user)) {

> user = UserGroupInformation.getCurrentUser().getShortUserName(); // <--

> }

> return new VolumeIterator(user, volumePrefix, prevVolume);

> }

> {code}

> It should use {{getUserName()}} instead.

> For a quick reference for the difference between {{getUserName()}} and

> {{getShortUserName()}}:

> {code:java|title=UserGroupInformation#getUserName}

> /**

>* Get the user's full principal name.

>* @return the user's full principal name.

>*/

> @InterfaceAudience.Public

> @InterfaceStability.Evolving

> public String getUserName() {

> return user.getName();

> }

> {code}

> {code:java|title=UserGroupInformation#getShortUserName}

> /**

>* Get the user's login name.

>* @return the user's name up to the first '/' or '@'.

>*/

> public String getShortUserName() {

> return user.getShortName();

> }

> {code}

> This won't cause issue if Kerberos is not in use. However, once Kerberos is

> enabled, {{getUserName()}} and {{getShortUserName()}} result differs and can

> cause some issues.

> When Kerberos is enabled, {{getUserName()}} returns full principal name e.g.

> {{om/o...@example.com}}, but {{getShortUserName()}} will return login name

> e.g. {{hadoop}}.

> If {{hadoop.security.auth_to_local}} is set, {{getShortUserName()}} result

> can become very different from full principal name.

> For example, when {{hadoop.security.auth_to_local =

> RULE:[2:$1@$0](.*)s/.*/root/}},

> {{getShortUserName()}} returns {{root}}, while {{getUserName()}} still gives

> {{om/o...@example.com}}.)

> This can lead to user experience issue (when Kerberos is enabled) where the

> user creates a volume with ozone shell ([uses

> {{getUserName()}}|https://github.com/apache/hadoop-ozone/blob/ecb5bf4df1d80723835a1500d595102f3f861708/hadoop-ozone/ozone-manager/src/main/java/org/apache/hadoop/ozone/web/ozShell/volume/CreateVolumeHandler.java#L63-L65]

> internally) then try to list it with {{ObjectStore#listVolumesByUser(null,

> ...)}} ([uses {{getShortUserName()}} by

> default|https://github.com/apache/hadoop-ozone/blob/2fa37ef99b8fb4575169ba8326eeb677b3d2ed74/hadoop-ozone/client/src/main/java/org/apache/hadoop/ozone/client/ObjectStore.java#L238-L256]

> when user param is empty or null), the user won't see any volumes because of

> the mismatch.

> We should also double check *all* usages that uses {{getShortUserName()}}.

> *Update:*

> Xiaoyu and I checked that the usage of {{getShortUserName()}} on the server

> side shouldn't become a problem. Because server should've maintained it's own

> auth_to_local rules (admin should make sure they separate each user into

> different short names. just don't map multiple principal names into the same

> then it won't be a problem).

> The usage in {{BasicOzoneFileSystem}} itself also seems valid because that

> {{getShortUserName()}} is only used for client side purpose (to set

> {{workingDir}}, etc.).

> But the usage in {{ObjectStore#listVolumesByUser}} is confirmed problematic

> at the moment, which needs to be fixed. Same for

> [{{CreateVolumeHandler#call}}|https://github.com/apache/hadoop-ozone/blob/ecb5bf4df1d80723835a1500d595102f3f861708/hadoop-ozone/ozone-manager/src/main/java/org/apache/hadoop/ozone/web/ozShell/volume/CreateVolumeHandler.java#L81-L83]:

> {code:java|title=CreateVolumeHandler#call}

> } else {

> rootName = UserGroupInformation.getCurrentUser().getShortUserName();

> }

> {code}

> It should pa

[GitHub] [hadoop-ozone] aryangupta1998 closed pull request #730: HDDS-3289. Add a freon generator to create nested directories.

aryangupta1998 closed pull request #730: HDDS-3289. Add a freon generator to create nested directories. URL: https://github.com/apache/hadoop-ozone/pull/730 This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[jira] [Updated] (HDDS-3047) ObjectStore#listVolumesByUser and CreateVolumeHandler#call should get full principal name by default

[

https://issues.apache.org/jira/browse/HDDS-3047?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Siyao Meng updated HDDS-3047:

-

Summary: ObjectStore#listVolumesByUser and CreateVolumeHandler#call should

get full principal name by default (was: ObjectStore#listVolumesByUser and

CreateVolumeHandler#call should get user's full principal name instead of login

name by default)

> ObjectStore#listVolumesByUser and CreateVolumeHandler#call should get full

> principal name by default

>

>

> Key: HDDS-3047

> URL: https://issues.apache.org/jira/browse/HDDS-3047

> Project: Hadoop Distributed Data Store

> Issue Type: Bug

> Components: Ozone Client

>Reporter: Siyao Meng

>Assignee: Siyao Meng

>Priority: Major

> Labels: pull-request-available

> Time Spent: 10m

> Remaining Estimate: 0h

>

> [{{ObjectStore#listVolumesByUser}}|https://github.com/apache/hadoop-ozone/blob/2fa37ef99b8fb4575169ba8326eeb677b3d2ed74/hadoop-ozone/client/src/main/java/org/apache/hadoop/ozone/client/ObjectStore.java#L249-L256]

> is using {{getShortUserName()}} by default (when user is empty or null):

> {code:java|title=ObjectStore#listVolumesByUser}

> public Iterator listVolumesByUser(String user,

> String volumePrefix, String prevVolume)

> throws IOException {

> if(Strings.isNullOrEmpty(user)) {

> user = UserGroupInformation.getCurrentUser().getShortUserName(); // <--

> }

> return new VolumeIterator(user, volumePrefix, prevVolume);

> }

> {code}

> It should use {{getUserName()}} instead.

> For a quick reference for the difference between {{getUserName()}} and

> {{getShortUserName()}}:

> {code:java|title=UserGroupInformation#getUserName}

> /**

>* Get the user's full principal name.

>* @return the user's full principal name.

>*/

> @InterfaceAudience.Public

> @InterfaceStability.Evolving

> public String getUserName() {

> return user.getName();

> }

> {code}

> {code:java|title=UserGroupInformation#getShortUserName}

> /**

>* Get the user's login name.

>* @return the user's name up to the first '/' or '@'.

>*/

> public String getShortUserName() {

> return user.getShortName();

> }

> {code}

> This won't cause issue if Kerberos is not in use. However, once Kerberos is

> enabled, {{getUserName()}} and {{getShortUserName()}} result differs and can

> cause some issues.

> When Kerberos is enabled, {{getUserName()}} returns full principal name e.g.

> {{om/o...@example.com}}, but {{getShortUserName()}} will return login name

> e.g. {{hadoop}}.

> If {{hadoop.security.auth_to_local}} is set, {{getShortUserName()}} result

> can become very different from full principal name.

> For example, when {{hadoop.security.auth_to_local =

> RULE:[2:$1@$0](.*)s/.*/root/}},

> {{getShortUserName()}} returns {{root}}, while {{getUserName()}} still gives

> {{om/o...@example.com}}.)

> This can lead to user experience issue (when Kerberos is enabled) where the

> user creates a volume with ozone shell ([uses

> {{getUserName()}}|https://github.com/apache/hadoop-ozone/blob/ecb5bf4df1d80723835a1500d595102f3f861708/hadoop-ozone/ozone-manager/src/main/java/org/apache/hadoop/ozone/web/ozShell/volume/CreateVolumeHandler.java#L63-L65]

> internally) then try to list it with {{ObjectStore#listVolumesByUser(null,

> ...)}} ([uses {{getShortUserName()}} by

> default|https://github.com/apache/hadoop-ozone/blob/2fa37ef99b8fb4575169ba8326eeb677b3d2ed74/hadoop-ozone/client/src/main/java/org/apache/hadoop/ozone/client/ObjectStore.java#L238-L256]

> when user param is empty or null), the user won't see any volumes because of

> the mismatch.

> We should also double check *all* usages that uses {{getShortUserName()}}.

> *Update:*

> Xiaoyu and I checked that the usage of {{getShortUserName()}} on the server

> side shouldn't become a problem. Because server should've maintained it's own

> auth_to_local rules (admin should make sure they separate each user into

> different short names. just don't map multiple principal names into the same

> then it won't be a problem).

> The usage in {{BasicOzoneFileSystem}} itself also seems valid because that

> {{getShortUserName()}} is only used for client side purpose (to set

> {{workingDir}}, etc.).

> But the usage in {{ObjectStore#listVolumesByUser}} is confirmed problematic

> at the moment, which needs to be fixed. Same for

> [{{CreateVolumeHandler#call}}|https://github.com/apache/hadoop-ozone/blob/ecb5bf4df1d80723835a1500d595102f3f861708/hadoop-ozone/ozone-manager/src/main/java/org/apache/hadoop/ozone/web/ozShell/volume/CreateVolumeHandler.java#L81-L83]:

> {code:java|title=CreateVolumeHandler#call}

> } else {

> rootName = UserGroupInformation.getCurrentUser().getShort

[GitHub] [hadoop-ozone] aryangupta1998 opened a new pull request #730: HDDS-3289. Add a freon generator to create nested directories.

aryangupta1998 opened a new pull request #730: HDDS-3289. Add a freon generator to create nested directories. URL: https://github.com/apache/hadoop-ozone/pull/730 ## What changes were proposed in this pull request? This Jira proposes to add a functionality to freon to create nested directories. Also, multiple child directories can be created inside the leaf directory and also multiple top level directories can be created. ## What is the link to the Apache JIRA https://issues.apache.org/jira/browse/HDDS-3289 ## How was this patch tested? Tested manually by running Freon Directory Generator. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[jira] [Assigned] (HDDS-2976) Recon throws error while trying to get snapshot in secure environment

[

https://issues.apache.org/jira/browse/HDDS-2976?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Siddharth Wagle reassigned HDDS-2976:

-

Assignee: Prashant Pogde (was: Siddharth Wagle)

> Recon throws error while trying to get snapshot in secure environment

> -

>

> Key: HDDS-2976

> URL: https://issues.apache.org/jira/browse/HDDS-2976

> Project: Hadoop Distributed Data Store

> Issue Type: Sub-task

> Components: Ozone Recon

>Reporter: Vivek Ratnavel Subramanian

>Assignee: Prashant Pogde

>Priority: Critical

>

> Recon throws the following exception while trying to get snapshot from OM in

> a secure env:

> {code:java}

> 10:19:24.743 PMINFO OzoneManagerServiceProviderImpl Obtaining full snapshot

> from Ozone Manager

> 10:19:24.754 PMERROR OzoneManagerServiceProviderImpl Unable to obtain Ozone

> Manager DB Snapshot.

> javax.net.ssl.SSLHandshakeException: Received fatal alert: handshake_failure

> at sun.security.ssl.Alerts.getSSLException(Alerts.java:192)

> at sun.security.ssl.Alerts.getSSLException(Alerts.java:154)

> at sun.security.ssl.SSLSocketImpl.recvAlert(SSLSocketImpl.java:2020)

> at sun.security.ssl.SSLSocketImpl.readRecord(SSLSocketImpl.java:1127)

> at

> sun.security.ssl.SSLSocketImpl.performInitialHandshake(SSLSocketImpl.java:1367)

> at

> sun.security.ssl.SSLSocketImpl.startHandshake(SSLSocketImpl.java:1395)

> at

> sun.security.ssl.SSLSocketImpl.startHandshake(SSLSocketImpl.java:1379)

> at

> org.apache.http.conn.ssl.SSLConnectionSocketFactory.createLayeredSocket(SSLConnectionSocketFactory.java:394)

> at

> org.apache.http.conn.ssl.SSLConnectionSocketFactory.connectSocket(SSLConnectionSocketFactory.java:353)

> at

> org.apache.http.impl.conn.DefaultHttpClientConnectionOperator.connect(DefaultHttpClientConnectionOperator.java:141)

> at

> org.apache.http.impl.conn.PoolingHttpClientConnectionManager.connect(PoolingHttpClientConnectionManager.java:353)

> at

> org.apache.http.impl.execchain.MainClientExec.establishRoute(MainClientExec.java:380)

> at

> org.apache.http.impl.execchain.MainClientExec.execute(MainClientExec.java:236)

> at

> org.apache.http.impl.execchain.ProtocolExec.execute(ProtocolExec.java:184)

> at org.apache.http.impl.execchain.RetryExec.execute(RetryExec.java:88)

> at

> org.apache.http.impl.execchain.RedirectExec.execute(RedirectExec.java:110)

> at

> org.apache.http.impl.client.InternalHttpClient.doExecute(InternalHttpClient.java:184)

> at

> org.apache.http.impl.client.CloseableHttpClient.execute(CloseableHttpClient.java:82)

> at

> org.apache.http.impl.client.CloseableHttpClient.execute(CloseableHttpClient.java:107)

> at

> org.apache.hadoop.ozone.recon.ReconUtils.makeHttpCall(ReconUtils.java:232)

> at

> org.apache.hadoop.ozone.recon.spi.impl.OzoneManagerServiceProviderImpl.getOzoneManagerDBSnapshot(OzoneManagerServiceProviderImpl.java:239)

> at

> org.apache.hadoop.ozone.recon.spi.impl.OzoneManagerServiceProviderImpl.updateReconOmDBWithNewSnapshot(OzoneManagerServiceProviderImpl.java:267)

> at

> org.apache.hadoop.ozone.recon.spi.impl.OzoneManagerServiceProviderImpl.syncDataFromOM(OzoneManagerServiceProviderImpl.java:358)

> at

> java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)

> at java.util.concurrent.FutureTask.runAndReset(FutureTask.java:308)

> at

> java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask.access$301(ScheduledThreadPoolExecutor.java:180)

> at

> java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask.run(ScheduledThreadPoolExecutor.java:294)

> at

> java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

> at

> java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

> at java.lang.Thread.run(Thread.java:748)

> 10:19:24.755 PMERROR OzoneManagerServiceProviderImpl Null snapshot location

> got from OM.

> {code}

--

This message was sent by Atlassian Jira

(v8.3.4#803005)

-

To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org

For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] aryangupta1998 closed pull request #730: HDDS-3289. Add a freon generator to create nested directories.

aryangupta1998 closed pull request #730: HDDS-3289. Add a freon generator to create nested directories. URL: https://github.com/apache/hadoop-ozone/pull/730 This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[jira] [Created] (HDDS-3293) read operation failing when two container replicas are corrupted

Nilotpal Nandi created HDDS-3293:

Summary: read operation failing when two container replicas are

corrupted

Key: HDDS-3293

URL: https://issues.apache.org/jira/browse/HDDS-3293

Project: Hadoop Distributed Data Store

Issue Type: Bug

Components: Ozone Datanode

Reporter: Nilotpal Nandi

steps taken :

1) Mounted noise injection FUSE on all datanodes.

2) Write a key ( multi blocks)

3) Select one of the container ids , inject error on 2 container replicas for

that container id.

4) Run GET key operation.

GET key operation fails intermittenly.

Error seen :

-

{noformat}

20/03/27 18:30:40 WARN impl.MetricsConfig: Cannot locate configuration: tried

hadoop-metrics2-xceiverclientmetrics.properties,hadoop-metrics2.properties

E 20/03/27 18:30:40 INFO impl.MetricsSystemImpl: Scheduled Metric snapshot

period at 10 second(s).

E 20/03/27 18:30:40 INFO impl.MetricsSystemImpl: XceiverClientMetrics metrics

system started

E 20/03/27 18:31:12 ERROR scm.XceiverClientGrpc: Failed to execute command

cmdType: ReadChunk

E traceID: "f80a51eaec481a1c:cbb8e92869015a53:f80a51eaec481a1c:0"

E containerID: 67

E datanodeUuid: "96101390-2446-40e6-a54e-36e170497e57"

E readChunk {

E blockID {

E containerID: 67

E localID: 103896435892617248

E blockCommitSequenceId: 1010

E }

E chunkData {

E chunkName: "103896435892617248_chunk_28"

E offset: 113246208

E len: 4194304

E checksumData {

E type: CRC32

E bytesPerChecksum: 1048576

E checksums: "\034\376\313\031"

E checksums: ";U\225\037"

E checksums: "\327m\332."

E checksums: "|\307\004E"

E }

E }

E }

E on the pipeline Pipeline[ Id: bce6316c-9690-452b-80e3-0f3590533444, Nodes:

96101390-2446-40e6-a54e-36e170497e57{ip: 172.27.111.129, host:

quasar-olrywk-3.quasar-olrywk.root.hwx.site, networkLocation: /default-rack,

certSerialId: null}3e85204d-2399-43b5-952a-55b837eb4c1d{ip: 172.27.100.0, host:

quasar-olrywk-1.quasar-olrywk.root.hwx.site, networkLocation: /default-rack,

certSerialId: null}5af0340a-6fee-4ce8-9f68-37fa35566a5a{ip: 172.27.73.0, host:

quasar-olrywk-9.quasar-olrywk.root.hwx.site, networkLocation: /default-rack,

certSerialId: null}, Type:STAND_ALONE, Factor:THREE, State:OPEN,

leaderId:96101390-2446-40e6-a54e-36e170497e57,

CreationTimestamp2020-03-27T03:36:51.880Z].

E Unexpected OzoneException: java.io.IOException:

java.util.concurrent.ExecutionException:

org.apache.ratis.thirdparty.io.grpc.StatusRuntimeException: DEADLINE_EXCEEDED:

deadline exceeded after 84603913ns. [remote_addr=/172.27.73.0:9859]]{noformat}

--

This message was sent by Atlassian Jira

(v8.3.4#803005)

-

To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org

For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] smengcl edited a comment on issue #731: HDDS-3279. Rebase OFS branch

smengcl edited a comment on issue #731: HDDS-3279. Rebase OFS branch URL: https://github.com/apache/hadoop-ozone/pull/731#issuecomment-605202481 Unrelated flaky test `org.apache.hadoop.ozone.client.rpc.TestContainerStateMachineFailureOnRead`. Will commit in a min. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] smengcl commented on issue #731: HDDS-3279. Rebase OFS branch

smengcl commented on issue #731: HDDS-3279. Rebase OFS branch URL: https://github.com/apache/hadoop-ozone/pull/731#issuecomment-605202481 Unrelated flaky test `org.apache.hadoop.ozone.client.rpc.TestContainerStateMachineFailureOnRead`. Will merge in a min. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] smengcl merged pull request #731: HDDS-3279. Rebase OFS branch

smengcl merged pull request #731: HDDS-3279. Rebase OFS branch URL: https://github.com/apache/hadoop-ozone/pull/731 This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

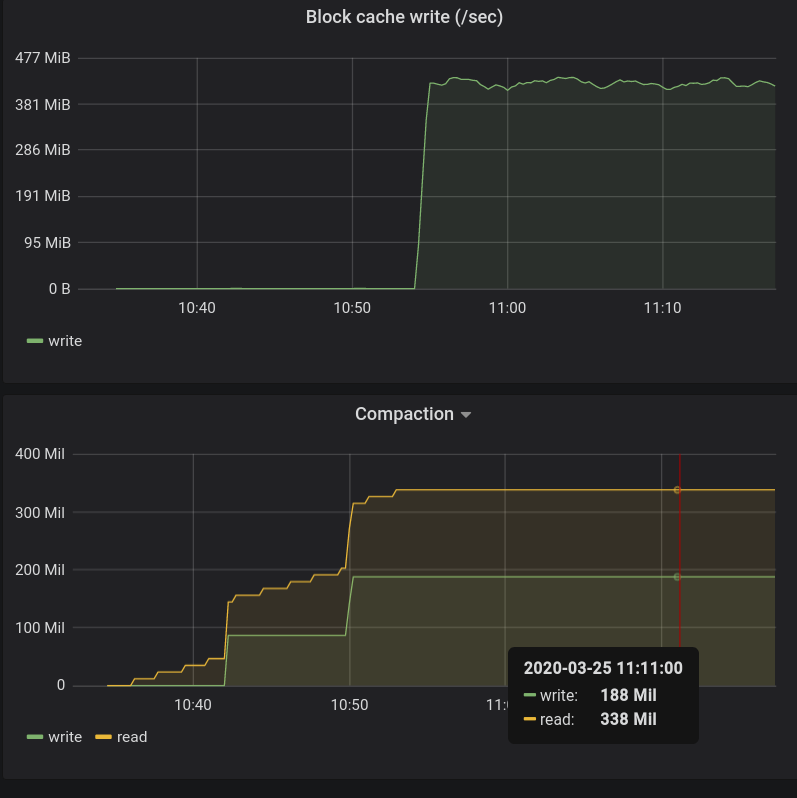

[GitHub] [hadoop-ozone] xiaoyuyao commented on issue #665: HDDS-3160. Disable index and filter block cache for RocksDB.

xiaoyuyao commented on issue #665: HDDS-3160. Disable index and filter block cache for RocksDB. URL: https://github.com/apache/hadoop-ozone/pull/665#issuecomment-605189323 Note even we don't put the filter/index into the block cache after this change, they will still be put into off-heap memory by rocksdb. It is good to track the OM JVM heap usage w/wo this change during compaction to fully understand the impact of this change. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] adoroszlai commented on issue #723: HDDS-3281. Add timeouts to all robot tests

adoroszlai commented on issue #723: HDDS-3281. Add timeouts to all robot tests URL: https://github.com/apache/hadoop-ozone/pull/723#issuecomment-605153928 > @adoroszlai, @elek are we good to merge this patch? Yes, thanks. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] adoroszlai commented on issue #728: Master stable

adoroszlai commented on issue #728: Master stable URL: https://github.com/apache/hadoop-ozone/pull/728#issuecomment-605148945 > Downloaded tarball and see that it failed due to no disk space. Disk space issue was fixed after `master-stable` branch had been created, so fix was brought into the branch with most recent merge from `master`: ``` * 947ca10a1 (origin/master-stable) retrigger build * f801e60e7 Merge remote-tracking branch 'origin/master' into master-stable |\ | * 7d132ce38 (origin/master) HDDS-3179. Pipeline placement based on Topology does not have fallback (#678) | * 3d2856869 HDDS-3074. Make the configuration of container scrub consistent. (#722) | * 07fcb79e8 HDDS-3284. ozonesecure-mr test fails due to lack of disk space (#725) | * 4682babb6 HDDS-3164. Add Recon endpoint to serve missing containers and its metadata. (#714) | * f6be7660a HDDS-3243. Recon should not have the ability to send Create/Close Container commands to Datanode. (#712) | * 824938534 HDDS-3250. Create a separate log file for Warnings and Errors in MiniOzoneChaosCluster. (#711) * | 58cdc36c2 Revert "HDDS-3234. Fix retry interval default in Ozone client. (#698)" * | 1d4227b5d Revert "HDDS-3064. Get Key is hung when READ delay is injected in chunk file path. (#673)" |/ * 512d607df Revert "HDDS-3142. Create isolated enviornment for OM to test it without SCM. (#656)" ``` This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] bharatviswa504 edited a comment on issue #728: Master stable

bharatviswa504 edited a comment on issue #728: Master stable URL: https://github.com/apache/hadoop-ozone/pull/728#issuecomment-605143568 @elek Even for a revert of HDDS-3234 mr jobs failed. So, HDDS-3234 is not a real issue I think, our underlying CI has some issue. Downloaded tarball and see that it failed due to no disk space. https://user-images.githubusercontent.com/8586345/77783575-5708b000-7016-11ea-9233-c228e471be96.png";> This PR fixed this issue of disk space issue. https://github.com/apache/hadoop-ozone/commit/07fcb79e8253c19d9537772ab8f3d82c51a0220f This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] bharatviswa504 edited a comment on issue #728: Master stable

bharatviswa504 edited a comment on issue #728: Master stable URL: https://github.com/apache/hadoop-ozone/pull/728#issuecomment-605143568 @elek Even for a revert of HDDS-3234 mr jobs failed. So, HDDS-3234 is not a real issue I think, our underlying CI has some issue. Downloaded tarball and see that it failed due to no disk space. https://user-images.githubusercontent.com/8586345/77783575-5708b000-7016-11ea-9233-c228e471be96.png";> This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] bharatviswa504 commented on issue #728: Master stable

bharatviswa504 commented on issue #728: Master stable URL: https://github.com/apache/hadoop-ozone/pull/728#issuecomment-605143568 @elek Even for revert of HDDS-3234 mr jobs failed. Downloaded tarball and see that it failed due to no disk space. https://user-images.githubusercontent.com/8586345/77783575-5708b000-7016-11ea-9233-c228e471be96.png";> This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] smengcl opened a new pull request #731: HDDS-3279. Rebase OFS branch

smengcl opened a new pull request #731: HDDS-3279. Rebase OFS branch URL: https://github.com/apache/hadoop-ozone/pull/731 ## What changes were proposed in this pull request? Get the necessary changes in OFS dev branch after the rebase to master branch. See the description and comments in https://github.com/apache/hadoop-ozone/pull/721 ## What is the link to the Apache JIRA https://issues.apache.org/jira/browse/HDDS-3279 ## How was this patch tested? Tested in https://github.com/apache/hadoop-ozone/pull/721 This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] smengcl edited a comment on issue #721: HDDS-3279. Rebase OFS branch (Draft)

smengcl edited a comment on issue #721: HDDS-3279. Rebase OFS branch (Draft) URL: https://github.com/apache/hadoop-ozone/pull/721#issuecomment-605136056 Thanks @xiaoyuyao . I am going to do the following: 1. Close this PR; 2. Merge master commits to OFS dev branch manually; 3. Create a new PR https://github.com/apache/hadoop-ozone/pull/731 with only the 3 commits I posted in this PR already; 4. Merge that new PR https://github.com/apache/hadoop-ozone/pull/731. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[jira] [Assigned] (HDDS-3285) MiniOzoneChaosCluster exits because of deadline exceeding

[

https://issues.apache.org/jira/browse/HDDS-3285?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Mukul Kumar Singh reassigned HDDS-3285:

---

Assignee: Shashikant Banerjee

> MiniOzoneChaosCluster exits because of deadline exceeding

> -

>

> Key: HDDS-3285

> URL: https://issues.apache.org/jira/browse/HDDS-3285

> Project: Hadoop Distributed Data Store

> Issue Type: Bug

> Components: Ozone Datanode

>Reporter: Mukul Kumar Singh

>Assignee: Shashikant Banerjee

>Priority: Major

> Labels: MiniOzoneChaosCluster

> Attachments: complete.log.gz

>

>

> 2020-03-26 21:26:48,869 [pool-326-thread-2] INFO util.ExitUtil

> (ExitUtil.java:terminate(210)) - Exiting with status 1: java.io.IOException:

> java.util.concurrent.ExecutionException: org.apache.ratis.thirdparty.io.

> grpc.StatusRuntimeException: DEADLINE_EXCEEDED: ClientCall started after

> deadline exceeded: -4.330590725s from now

> {code}

> 2020-03-26 21:26:48,866 [pool-326-thread-2] ERROR

> loadgenerators.LoadExecutors (LoadExecutors.java:load(64)) - FileSystem

> LOADGEN: null Exiting due to exception

> java.io.IOException: java.util.concurrent.ExecutionException:

> org.apache.ratis.thirdparty.io.grpc.StatusRuntimeException:

> DEADLINE_EXCEEDED: ClientCall started after deadline exceeded: -4.330590725s

> from now

> at

> org.apache.hadoop.hdds.scm.XceiverClientGrpc.sendCommandWithRetry(XceiverClientGrpc.java:359)

> at

> org.apache.hadoop.hdds.scm.XceiverClientGrpc.sendCommandWithTraceIDAndRetry(XceiverClientGrpc.java:281)

> at

> org.apache.hadoop.hdds.scm.XceiverClientGrpc.sendCommand(XceiverClientGrpc.java:259)

> at

> org.apache.hadoop.hdds.scm.storage.ContainerProtocolCalls.getBlock(ContainerProtocolCalls.java:119)

> at

> org.apache.hadoop.hdds.scm.storage.BlockInputStream.getChunkInfos(BlockInputStream.java:199)

> at

> org.apache.hadoop.hdds.scm.storage.BlockInputStream.initialize(BlockInputStream.java:133)

> at

> org.apache.hadoop.hdds.scm.storage.BlockInputStream.read(BlockInputStream.java:254)

> at

> org.apache.hadoop.ozone.client.io.KeyInputStream.read(KeyInputStream.java:197)

> at

> org.apache.hadoop.fs.ozone.OzoneFSInputStream.read(OzoneFSInputStream.java:63)

> at java.io.DataInputStream.read(DataInputStream.java:100)

> at

> org.apache.hadoop.ozone.utils.LoadBucket$ReadOp.doPostOp(LoadBucket.java:205)

> at