xiaozhch5 commented on PR #6013: URL: https://github.com/apache/hudi/pull/6013#issuecomment-1172886885

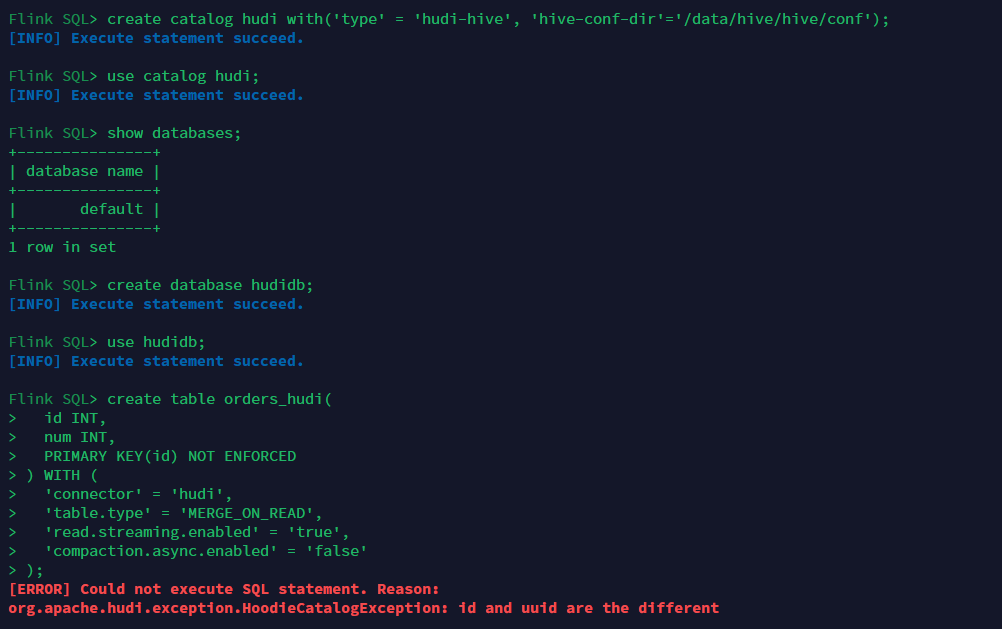

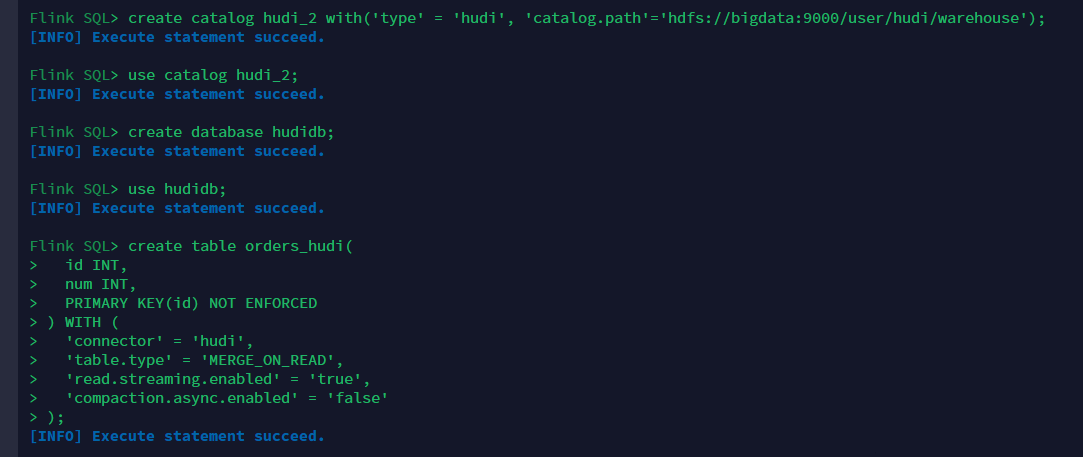

Hello, I tested this PR, but the following problem occurs.

Compile command:

```bash
mvn clean install -DskipTests -Dhive.version=3.1.2 -Dflink.version=1.13.6 -Pflink1.13 -Dhadoop.version=3.2.2 -Pspark3 -Pflink-bundle-shade-hive3 -Dscala.binary.version=2.12 -Dscala.version=2.12.12
```

The full stack trace is listed below:

```java
2022-07-02 19:37:04,770 INFO org.apache.hudi.table.catalog.HoodieCatalogUtil [] - Setting hive conf dir as /data/hive/hive/conf
2022-07-02 19:37:04,776 INFO org.apache.hudi.table.catalog.HoodieHiveCatalog [] - Created HiveCatalog 'hudi'
2022-07-02 19:37:04,777 INFO org.apache.hadoop.hive.metastore.HiveMetaStoreClient [] - Trying to connect to metastore with URI thrift://bigdata:9083
2022-07-02 19:37:04,778 INFO org.apache.hadoop.hive.metastore.HiveMetaStoreClient [] - Opened a connection to metastore, current connections: 1
2022-07-02 19:37:04,802 INFO org.apache.hadoop.hive.metastore.HiveMetaStoreClient [] - Connected to metastore.
2022-07-02 19:37:04,802 INFO org.apache.hadoop.hive.metastore.RetryingMetaStoreClient [] - RetryingMetaStoreClient proxy=class org.apache.hadoop.hive.ql.metadata.SessionHiveMetaStoreClient ugi=root (auth:SIMPLE) retries=1 delay=1 lifetime=0
2022-07-02 19:37:04,933 INFO org.apache.hudi.table.catalog.HoodieHiveCatalog [] - Connected to Hive metastore
2022-07-02 19:37:19,532 INFO org.apache.flink.table.catalog.CatalogManager [] - Set the current default catalog as [hudi] and the current default database as [default].
2022-07-02 19:37:47,587 INFO org.apache.flink.table.catalog.CatalogManager [] - Set the current default database as [hudidb] in the current default catalog [hudi].
2022-07-02 19:38:29,533 WARN org.apache.flink.table.client.cli.CliClient [] - Could not execute SQL statement.
org.apache.flink.table.client.gateway.SqlExecutionException: Could not execute SQL statement.
    at org.apache.flink.table.client.gateway.local.LocalExecutor.executeOperation(LocalExecutor.java:215) ~[flink-sql-client_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.client.cli.CliClient.executeOperation(CliClient.java:564) ~[flink-sql-client_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.client.cli.CliClient.callOperation(CliClient.java:424) ~[flink-sql-client_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.client.cli.CliClient.lambda$executeStatement$0(CliClient.java:327) [flink-sql-client_2.12-1.13.6.jar:1.13.6]
    at java.util.Optional.ifPresent(Optional.java:159) ~[?:1.8.0_241]
    at org.apache.flink.table.client.cli.CliClient.executeStatement(CliClient.java:327) [flink-sql-client_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.client.cli.CliClient.executeInteractive(CliClient.java:297) [flink-sql-client_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.client.cli.CliClient.executeInInteractiveMode(CliClient.java:221) [flink-sql-client_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.client.SqlClient.openCli(SqlClient.java:151) [flink-sql-client_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.client.SqlClient.start(SqlClient.java:95) [flink-sql-client_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.client.SqlClient.startClient(SqlClient.java:187) [flink-sql-client_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.client.SqlClient.main(SqlClient.java:161) [flink-sql-client_2.12-1.13.6.jar:1.13.6]
Caused by: org.apache.flink.table.api.TableException: Could not execute CreateTable in path `hudi`.`hudidb`.`orders_hudi`
    at org.apache.flink.table.catalog.CatalogManager.execute(CatalogManager.java:847) ~[flink-table_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.catalog.CatalogManager.createTable(CatalogManager.java:659) ~[flink-table_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.api.internal.TableEnvironmentImpl.executeInternal(TableEnvironmentImpl.java:865) ~[flink-table_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.client.gateway.local.LocalExecutor.lambda$executeOperation$3(LocalExecutor.java:213) ~[flink-sql-client_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.client.gateway.context.ExecutionContext.wrapClassLoader(ExecutionContext.java:90) ~[flink-sql-client_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.client.gateway.local.LocalExecutor.executeOperation(LocalExecutor.java:213) ~[flink-sql-client_2.12-1.13.6.jar:1.13.6]
    ... 11 more
Caused by: org.apache.hudi.exception.HoodieCatalogException: Failed to create table hudidb.orders_hudi
    at org.apache.hudi.table.catalog.HoodieHiveCatalog.createTable(HoodieHiveCatalog.java:446) ~[hudi-flink1.13-bundle_2.12-0.12.0-SNAPSHOT.jar:0.12.0-SNAPSHOT]
    at org.apache.flink.table.catalog.CatalogManager.lambda$createTable$10(CatalogManager.java:661) ~[flink-table_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.catalog.CatalogManager.execute(CatalogManager.java:841) ~[flink-table_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.catalog.CatalogManager.createTable(CatalogManager.java:659) ~[flink-table_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.api.internal.TableEnvironmentImpl.executeInternal(TableEnvironmentImpl.java:865) ~[flink-table_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.client.gateway.local.LocalExecutor.lambda$executeOperation$3(LocalExecutor.java:213) ~[flink-sql-client_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.client.gateway.context.ExecutionContext.wrapClassLoader(ExecutionContext.java:90) ~[flink-sql-client_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.client.gateway.local.LocalExecutor.executeOperation(LocalExecutor.java:213) ~[flink-sql-client_2.12-1.13.6.jar:1.13.6]
    ... 11 more
Caused by: org.apache.hudi.exception.HoodieCatalogException: id and uuid are the different
    at org.apache.hudi.table.catalog.HoodieHiveCatalog.instantiateHiveTable(HoodieHiveCatalog.java:579) ~[hudi-flink1.13-bundle_2.12-0.12.0-SNAPSHOT.jar:0.12.0-SNAPSHOT]
    at org.apache.hudi.table.catalog.HoodieHiveCatalog.createTable(HoodieHiveCatalog.java:435) ~[hudi-flink1.13-bundle_2.12-0.12.0-SNAPSHOT.jar:0.12.0-SNAPSHOT]
    at org.apache.flink.table.catalog.CatalogManager.lambda$createTable$10(CatalogManager.java:661) ~[flink-table_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.catalog.CatalogManager.execute(CatalogManager.java:841) ~[flink-table_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.catalog.CatalogManager.createTable(CatalogManager.java:659) ~[flink-table_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.api.internal.TableEnvironmentImpl.executeInternal(TableEnvironmentImpl.java:865) ~[flink-table_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.client.gateway.local.LocalExecutor.lambda$executeOperation$3(LocalExecutor.java:213) ~[flink-sql-client_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.client.gateway.context.ExecutionContext.wrapClassLoader(ExecutionContext.java:90) ~[flink-sql-client_2.12-1.13.6.jar:1.13.6]
    at org.apache.flink.table.client.gateway.local.LocalExecutor.executeOperation(LocalExecutor.java:213) ~[flink-sql-client_2.12-1.13.6.jar:1.13.6]
    ... 11 more
```

But it works fine if I use the hudi catalog.