[GitHub] spark pull request #22500: [SPARK-25488][TEST] Refactor MiscBenchmark to use...

Github user wangyum commented on a diff in the pull request: https://github.com/apache/spark/pull/22500#discussion_r219403342 --- Diff: sql/core/benchmarks/MiscBenchmark-results.txt --- @@ -0,0 +1,132 @@ + +filter & aggregate without group + + +Java HotSpot(TM) 64-Bit Server VM 1.8.0_151-b12 on Mac OS X 10.12.6 +Intel(R) Core(TM) i7-7820HQ CPU @ 2.90GHz + +range/filter/sum:Best/Avg Time(ms)Rate(M/s) Per Row(ns) Relative + +range/filter/sum wholestage off 36618 / 41080 57.3 17.5 1.0X +range/filter/sum wholestage on2495 / 2609840.4 1.2 14.7X + + + +range/limit/sum + + +Java HotSpot(TM) 64-Bit Server VM 1.8.0_151-b12 on Mac OS X 10.12.6 +Intel(R) Core(TM) i7-7820HQ CPU @ 2.90GHz + +range/limit/sum: Best/Avg Time(ms)Rate(M/s) Per Row(ns) Relative + +range/limit/sum wholestage off 117 / 121 4477.9 0.2 1.0X +range/limit/sum wholestage on 178 / 187 2938.1 0.3 0.7X + + + +sample + + +Java HotSpot(TM) 64-Bit Server VM 1.8.0_151-b12 on Mac OS X 10.12.6 +Intel(R) Core(TM) i7-7820HQ CPU @ 2.90GHz + +sample with replacement: Best/Avg Time(ms)Rate(M/s) Per Row(ns) Relative + +sample with replacement wholestage off9142 / 9182 14.3 69.8 1.0X +sample with replacement wholestage on 5926 / 6107 22.1 45.2 1.5X + +Java HotSpot(TM) 64-Bit Server VM 1.8.0_151-b12 on Mac OS X 10.12.6 +Intel(R) Core(TM) i7-7820HQ CPU @ 2.90GHz + +sample without replacement: Best/Avg Time(ms)Rate(M/s) Per Row(ns) Relative + +sample without replacement wholestage off 1834 / 1837 71.5 14.0 1.0X +sample without replacement wholestage on 784 / 803167.2 6.0 2.3X + + + +collect + + +Java HotSpot(TM) 64-Bit Server VM 1.8.0_151-b12 on Mac OS X 10.12.6 +Intel(R) Core(TM) i7-7820HQ CPU @ 2.90GHz + +collect: Best/Avg Time(ms)Rate(M/s) Per Row(ns) Relative + +collect 1 million 186 / 215 5.6 177.5 1.0X +collect 2 millions 361 / 393 2.9 344.2 0.5X +collect 4 millions 884 / 1053 1.2 843.4 0.2X + + + +collect limit + + +Java HotSpot(TM) 64-Bit Server VM 1.8.0_151-b12 on Mac OS X 10.12.6 +Intel(R) Core(TM) i7-7820HQ CPU @ 2.90GHz + +collect limit: Best/Avg Time(ms)Rate(M/s) Per Row(ns) Relative + +collect limit 1 million206 / 225 5.1 196.6 1.0X +collect limit 2 millions 407 / 419 2.6 387.8 0.5X + + + +generate exp

[GitHub] spark issue #22497: [SPARK-25487][SQL][TEST] Refactor PrimitiveArrayBenchmar...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22497 Congratulation, @kiszk --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22513: [SPARK-25499][TEST]Refactor BenchmarkBase and Benchmark

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22513 `KryoBenchmark` is in core, and `UnsafeProjectionBenchmark`, `HashByteArrayBenchmark` and `HashBenchmark` are in `catalyst`. If we move the benchmark base class to sql, benchmarks mentioned above would not be able to inherit from the benchmark base class. What do you think? --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22513: [SPARK-25499][TEST]Refactor BenchmarkBase and Ben...

Github user wangyum commented on a diff in the pull request:

https://github.com/apache/spark/pull/22513#discussion_r219388085

--- Diff:

sql/core/src/test/scala/org/apache/spark/sql/execution/benchmark/FilterPushdownBenchmark.scala

---

@@ -27,7 +27,7 @@ import

org.apache.spark.sql.functions.monotonically_increasing_id

import org.apache.spark.sql.internal.SQLConf

import org.apache.spark.sql.internal.SQLConf.ParquetOutputTimestampType

import org.apache.spark.sql.types.{ByteType, Decimal, DecimalType,

TimestampType}

-import org.apache.spark.util.{Benchmark, BenchmarkBase =>

FileBenchmarkBase, Utils}

+import org.apache.spark.util.Utils

/**

* Benchmark to measure read performance with Filter pushdown.

--- End diff --

How about change scala doc to below to fix **fails to generate

documentation**?

```scala

* To run this benchmark:

* {{{

* 1. without sbt: bin/spark-submit --class

* 2. build/sbt "sql/test:runMain "

* 3. generate result: SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt

"sql/test:runMain "

* Results will be written to

"benchmarks/FilterPushdownBenchmark-results.txt".

* }}}

```

fails to generate documentation error message:

```java

/home/jenkins/workspace/SparkPullRequestBuilder@2/target/javaunidoc/org/apache/spark/mllib/linalg/UDTSerializationBenchmark.html...

[error]

/home/jenkins/workspace/SparkPullRequestBuilder@2/mllib/target/java/org/apache/spark/mllib/linalg/UDTSerializationBenchmark.java:5:

error: unknown tag: this

[error] * 1. without sbt: bin/spark-submit --class

[error] ^

[error]

/home/jenkins/workspace/SparkPullRequestBuilder@2/mllib/target/java/org/apache/spark/mllib/linalg/UDTSerializationBenchmark.java:5:

error: unknown tag: spark

[error] * 1. without sbt: bin/spark-submit --class

[error] ^

[error]

/home/jenkins/workspace/SparkPullRequestBuilder@2/mllib/target/java/org/apache/spark/mllib/linalg/UDTSerializationBenchmark.java:6:

error: unknown tag: this

[error] * 2. build/sbt "mllib/test:runMain "

[error] ^

[error]

/home/jenkins/workspace/SparkPullRequestBuilder@2/mllib/target/java/org/apache/spark/mllib/linalg/UDTSerializationBenchmark.java:7:

error: unknown tag: this

[error] * 3. generate result: SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt

"mllib/test:runMain "

[error]

```

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22499: [SPARK-25489][ML][TEST] Refactor UDTSerialization...

Github user wangyum commented on a diff in the pull request:

https://github.com/apache/spark/pull/22499#discussion_r219366799

--- Diff:

mllib/src/test/scala/org/apache/spark/mllib/linalg/UDTSerializationBenchmark.scala

---

@@ -18,52 +18,52 @@

package org.apache.spark.mllib.linalg

import org.apache.spark.sql.catalyst.encoders.ExpressionEncoder

-import org.apache.spark.util.Benchmark

+import org.apache.spark.util.{Benchmark, BenchmarkBase =>

FileBenchmarkBase}

/**

* Serialization benchmark for VectorUDT.

+ * To run this benchmark:

+ * 1. without sbt: bin/spark-submit --class

--- End diff --

I think `<` should replaced to `[`:

```scala

[error]

/home/jenkins/workspace/SparkPullRequestBuilder@2/sql/core/target/java/org/apache/spark/sql/DatasetBenchmark.java:5:

error: unknown tag: this

[error] * 1. without sbt: bin/spark-submit --class

[error] ^

[error]

/home/jenkins/workspace/SparkPullRequestBuilder@2/sql/core/target/java/org/apache/spark/sql/DatasetBenchmark.java:5:

error: unknown tag: spark

[error] * 1. without sbt: bin/spark-submit --class

[error] ^

[error]

/home/jenkins/workspace/SparkPullRequestBuilder@2/sql/core/target/java/org/apache/spark/sql/DatasetBenchmark.java:6:

error: unknown tag: this

[error] * 2. build/sbt "sql/test:runMain "

[error] ^

[error]

/home/jenkins/workspace/SparkPullRequestBuilder@2/sql/core/target/java/org/apache/spark/sql/DatasetBenchmark.java:7:

error: unknown tag: this

[error] * 3. generate result: SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt

"sql/test:runMain "

[error]

```

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22501: [SPARK-25492][TEST] Refactor WideSchemaBenchmark ...

GitHub user wangyum opened a pull request: https://github.com/apache/spark/pull/22501 [SPARK-25492][TEST] Refactor WideSchemaBenchmark to use main method ## What changes were proposed in this pull request? Refactor `WideSchemaBenchmark` to use main method. Generate benchmark result: ```sh SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.WideSchemaBenchmark" ``` ## How was this patch tested? manual tests You can merge this pull request into a Git repository by running: $ git pull https://github.com/wangyum/spark SPARK-25492 Alternatively you can review and apply these changes as the patch at: https://github.com/apache/spark/pull/22501.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #22501 commit f56b73223fbf765e408d9aef6565a2318f4836e3 Author: Yuming Wang Date: 2018-09-20T16:04:30Z Refactor WideSchemaBenchmark --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22500: [SPARK-25488][TEST] Refactor MiscBenchmark to use...

Github user wangyum commented on a diff in the pull request: https://github.com/apache/spark/pull/22500#discussion_r219219972 --- Diff: sql/core/benchmarks/MiscBenchmark-results.txt --- @@ -0,0 +1,132 @@ + +filter & aggregate without group + + +Java HotSpot(TM) 64-Bit Server VM 1.8.0_151-b12 on Mac OS X 10.12.6 +Intel(R) Core(TM) i7-7820HQ CPU @ 2.90GHz + +range/filter/sum:Best/Avg Time(ms)Rate(M/s) Per Row(ns) Relative + +range/filter/sum wholestage off 36618 / 41080 57.3 17.5 1.0X +range/filter/sum wholestage on2495 / 2609840.4 1.2 14.7X + + + +range/limit/sum + + +Java HotSpot(TM) 64-Bit Server VM 1.8.0_151-b12 on Mac OS X 10.12.6 +Intel(R) Core(TM) i7-7820HQ CPU @ 2.90GHz + +range/limit/sum: Best/Avg Time(ms)Rate(M/s) Per Row(ns) Relative + +range/limit/sum wholestage off 117 / 121 4477.9 0.2 1.0X +range/limit/sum wholestage on 178 / 187 2938.1 0.3 0.7X + + + +sample + + +Java HotSpot(TM) 64-Bit Server VM 1.8.0_151-b12 on Mac OS X 10.12.6 +Intel(R) Core(TM) i7-7820HQ CPU @ 2.90GHz + +sample with replacement: Best/Avg Time(ms)Rate(M/s) Per Row(ns) Relative + +sample with replacement wholestage off9142 / 9182 14.3 69.8 1.0X +sample with replacement wholestage on 5926 / 6107 22.1 45.2 1.5X + +Java HotSpot(TM) 64-Bit Server VM 1.8.0_151-b12 on Mac OS X 10.12.6 +Intel(R) Core(TM) i7-7820HQ CPU @ 2.90GHz + +sample without replacement: Best/Avg Time(ms)Rate(M/s) Per Row(ns) Relative + +sample without replacement wholestage off 1834 / 1837 71.5 14.0 1.0X +sample without replacement wholestage on 784 / 803167.2 6.0 2.3X + + + +collect + + +Java HotSpot(TM) 64-Bit Server VM 1.8.0_151-b12 on Mac OS X 10.12.6 +Intel(R) Core(TM) i7-7820HQ CPU @ 2.90GHz + +collect: Best/Avg Time(ms)Rate(M/s) Per Row(ns) Relative + +collect 1 million 186 / 215 5.6 177.5 1.0X +collect 2 millions 361 / 393 2.9 344.2 0.5X +collect 4 millions 884 / 1053 1.2 843.4 0.2X + + + +collect limit + + +Java HotSpot(TM) 64-Bit Server VM 1.8.0_151-b12 on Mac OS X 10.12.6 +Intel(R) Core(TM) i7-7820HQ CPU @ 2.90GHz + +collect limit: Best/Avg Time(ms)Rate(M/s) Per Row(ns) Relative + +collect limit 1 million206 / 225 5.1 196.6 1.0X +collect limit 2 millions 407 / 419 2.6 387.8 0.5X + + + +generate exp

[GitHub] spark pull request #22500: [SPARK-25488][TEST] Refactor MiscBenchmark to use...

Github user wangyum commented on a diff in the pull request:

https://github.com/apache/spark/pull/22500#discussion_r219218036

--- Diff:

sql/core/src/test/scala/org/apache/spark/sql/execution/benchmark/MiscBenchmark.scala

---

@@ -17,251 +17,154 @@

package org.apache.spark.sql.execution.benchmark

-import org.apache.spark.util.Benchmark

+import org.apache.spark.sql.SparkSession

+import org.apache.spark.util.{Benchmark, BenchmarkBase =>

FileBenchmarkBase}

/**

* Benchmark to measure whole stage codegen performance.

- * To run this:

- * build/sbt "sql/test-only *benchmark.MiscBenchmark"

- *

- * Benchmarks in this file are skipped in normal builds.

+ * To run this benchmark:

+ * 1. without sbt: bin/spark-submit --class

+ * 2. build/sbt "sql/test:runMain "

+ * 3. generate result: SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt

"sql/test:runMain "

+ *Results will be written to "benchmarks/MiscBenchmark-results.txt".

*/

-class MiscBenchmark extends BenchmarkBase {

-

- ignore("filter & aggregate without group") {

-val N = 500L << 22

-runBenchmark("range/filter/sum", N) {

- sparkSession.range(N).filter("(id & 1) =

1").groupBy().sum().collect()

+object MiscBenchmark extends FileBenchmarkBase {

+

+ lazy val sparkSession = SparkSession.builder

+.master("local[1]")

+.appName("microbenchmark")

+.config("spark.sql.shuffle.partitions", 1)

+.config("spark.sql.autoBroadcastJoinThreshold", 1)

+.getOrCreate()

+

+ /** Runs function `f` with whole stage codegen on and off. */

+ def runMiscBenchmark(name: String, cardinality: Long)(f: => Unit): Unit

= {

+val benchmark = new Benchmark(name, cardinality, output = output)

+

+benchmark.addCase(s"$name wholestage off", numIters = 2) { iter =>

+ sparkSession.conf.set("spark.sql.codegen.wholeStage", value = false)

+ f

}

-/*

-Java HotSpot(TM) 64-Bit Server VM 1.8.0_60-b27 on Mac OS X 10.11

-Intel(R) Core(TM) i7-4960HQ CPU @ 2.60GHz

-

-range/filter/sum:Best/Avg Time(ms)

Rate(M/s) Per Row(ns) Relative

-

-range/filter/sum codegen=false 30663 / 31216 68.4

14.6 1.0X

-range/filter/sum codegen=true 2399 / 2409874.1

1.1 12.8X

-*/

- }

- ignore("range/limit/sum") {

-val N = 500L << 20

-runBenchmark("range/limit/sum", N) {

- sparkSession.range(N).limit(100).groupBy().sum().collect()

+benchmark.addCase(s"$name wholestage on", numIters = 5) { iter =>

+ sparkSession.conf.set("spark.sql.codegen.wholeStage", value = true)

+ f

}

-/*

-Westmere E56xx/L56xx/X56xx (Nehalem-C)

-range/limit/sum:Best/Avg Time(ms)Rate(M/s)

Per Row(ns) Relative

-

---

-range/limit/sum codegen=false 609 / 672861.6

1.2 1.0X

-range/limit/sum codegen=true 561 / 621935.3

1.1 1.1X

-*/

- }

- ignore("sample") {

-val N = 500 << 18

-runBenchmark("sample with replacement", N) {

- sparkSession.range(N).sample(withReplacement = true,

0.01).groupBy().sum().collect()

-}

-/*

-Java HotSpot(TM) 64-Bit Server VM 1.8.0_60-b27 on Mac OS X 10.11

-Intel(R) Core(TM) i7-4960HQ CPU @ 2.60GHz

-

-sample with replacement: Best/Avg Time(ms)

Rate(M/s) Per Row(ns) Relative

-

-sample with replacement codegen=false 7073 / 7227 18.5

54.0 1.0X

-sample with replacement codegen=true 5199 / 5203 25.2

39.7 1.4X

-*/

-

-runBenchmark("sample without replacement", N) {

- sparkSession.range(N).sample(withReplacement = false,

0.01).groupBy().sum().collect()

-}

-/*

-Java HotSpot(TM) 64-Bit Server VM 1.8.0_60-b27 on Mac OS X 10.11

-Intel(R) Core(TM) i7-4960HQ CPU @ 2.60GHz

-

-sample without replacement: Best/Avg Time(ms)

Rate(M/s) Per Row(ns) Relative

-

[GitHub] spark pull request #22500: [SPARK-25488][TEST] Refactor MiscBenchmark to use...

GitHub user wangyum opened a pull request: https://github.com/apache/spark/pull/22500 [SPARK-25488][TEST] Refactor MiscBenchmark to use main method ## What changes were proposed in this pull request? Refactor `MiscBenchmark ` to use main method. Generate benchmark result: ```sh SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.MiscBenchmark" ``` ## How was this patch tested? manual tests You can merge this pull request into a Git repository by running: $ git pull https://github.com/wangyum/spark SPARK-25488 Alternatively you can review and apply these changes as the patch at: https://github.com/apache/spark/pull/22500.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #22500 commit 6252440c1a079bbb12d41e2ae513f988fcdf5651 Author: Yuming Wang Date: 2018-09-20T15:41:03Z Refactor MiscBenchmark --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22497: [SPARK-25487][SQL][TEST] Refactor PrimitiveArrayBenchmar...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22497 LGTM --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22491: [SPARK-25483][TEST] Refactor UnsafeArrayDataBench...

GitHub user wangyum opened a pull request: https://github.com/apache/spark/pull/22491 [SPARK-25483][TEST] Refactor UnsafeArrayDataBenchmark to use main method ## What changes were proposed in this pull request? Refactor `UnsafeArrayDataBenchmark` to use main method. Generate benchmark result: ```sh SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.UnsafeArrayDataBenchmark" ``` ## How was this patch tested? manual tests You can merge this pull request into a Git repository by running: $ git pull https://github.com/wangyum/spark SPARK-25483 Alternatively you can review and apply these changes as the patch at: https://github.com/apache/spark/pull/22491.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #22491 commit 5bb5f0806bce127f07eefd337bc457912e9f5075 Author: Yuming Wang Date: 2018-09-20T12:13:52Z Refactor UnsafeArrayDataBenchmark --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22488: [SPARK-25479][TEST] Refactor DatasetBenchmark to use mai...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22488 retest this please --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22484: [SPARK-25476][TEST] Refactor AggregateBenchmark t...

Github user wangyum commented on a diff in the pull request: https://github.com/apache/spark/pull/22484#discussion_r219104743 --- Diff: sql/core/benchmarks/AggregateBenchmark-results.txt --- @@ -0,0 +1,154 @@ + +aggregate without grouping + + +Java HotSpot(TM) 64-Bit Server VM 1.8.0_151-b12 on Mac OS X 10.12.6 +Intel(R) Core(TM) i7-7820HQ CPU @ 2.90GHz + +agg w/o group: Best/Avg Time(ms)Rate(M/s) Per Row(ns) Relative + +agg w/o group wholestage off39650 / 46049 52.9 18.9 1.0X +agg w/o group wholestage on 1224 / 1413 1713.5 0.6 32.4X + + + +stat functions + + --- End diff -- @davies Do you know how to generate there benchmark: https://github.com/apache/spark/blob/3c3eebc8734e36e61f4627e2c517fbbe342b3b42/sql/core/src/test/scala/org/apache/spark/sql/execution/benchmark/AggregateBenchmark.scala#L70-L78 --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22488: [SPARK-25479][TEST] Refactor DatasetBenchmark to ...

GitHub user wangyum opened a pull request: https://github.com/apache/spark/pull/22488 [SPARK-25479][TEST] Refactor DatasetBenchmark to use main method ## What changes were proposed in this pull request? Refactor `DatasetBenchmark` to use main method. Generate benchmark result: ```sh SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.DatasetBenchmark" ``` ## How was this patch tested? manual tests You can merge this pull request into a Git repository by running: $ git pull https://github.com/wangyum/spark SPARK-25479 Alternatively you can review and apply these changes as the patch at: https://github.com/apache/spark/pull/22488.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #22488 commit 21b623aad6a84cca2ab5f89f1c29d3b3b1b82d80 Author: Yuming Wang Date: 2018-09-20T09:46:19Z Refactor DatasetBenchmark --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22486: [SPARK-25478][TEST] Refactor CompressionSchemeBen...

GitHub user wangyum opened a pull request: https://github.com/apache/spark/pull/22486 [SPARK-25478][TEST] Refactor CompressionSchemeBenchmark to use main method ## What changes were proposed in this pull request? Refactor `CompressionSchemeBenchmark` to use main method. To gererate benchmark result: ```sh SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.columnar.compression.CompressionSchemeBenchmark" ``` ## How was this patch tested? manual tests You can merge this pull request into a Git repository by running: $ git pull https://github.com/wangyum/spark SPARK-25478 Alternatively you can review and apply these changes as the patch at: https://github.com/apache/spark/pull/22486.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #22486 commit 4dc46ad21e32784d42ab4b052ba73e31a050efb8 Author: Yuming Wang Date: 2018-09-20T08:38:04Z Refactor CompressionSchemeBenchmark --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22484: [SPARK-25476][TEST] Refactor AggregateBenchmark t...

GitHub user wangyum opened a pull request: https://github.com/apache/spark/pull/22484 [SPARK-25476][TEST] Refactor AggregateBenchmark to use main method ## What changes were proposed in this pull request? Refactor `AggregateBenchmark` to use main method. To gererate benchmark result: ``` SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.AggregateBenchmark" ``` ## How was this patch tested? manual tests You can merge this pull request into a Git repository by running: $ git pull https://github.com/wangyum/spark SPARK-25476 Alternatively you can review and apply these changes as the patch at: https://github.com/apache/spark/pull/22484.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #22484 commit 649f2965188efcfa0b1d2b5acb4c0f057ecd3788 Author: Yuming Wang Date: 2018-09-20T07:23:46Z Refactor AggregateBenchmark --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22419: [SPARK-23906][SQL] Add built-in UDF TRUNCATE(numb...

Github user wangyum commented on a diff in the pull request:

https://github.com/apache/spark/pull/22419#discussion_r218749189

--- Diff:

sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/expressions/mathExpressions.scala

---

@@ -1245,3 +1245,80 @@ case class BRound(child: Expression, scale:

Expression)

with Serializable with ImplicitCastInputTypes {

def this(child: Expression) = this(child, Literal(0))

}

+

+/**

+ * The number truncated to scale decimal places.

+ */

+// scalastyle:off line.size.limit

+@ExpressionDescription(

+ usage = "_FUNC_(number, scale) - Returns number truncated to scale

decimal places. " +

+"If scale is omitted, then number is truncated to 0 places. " +

+"scale can be negative to truncate (make zero) scale digits left of

the decimal point.",

+ examples = """

+Examples:

+ > SELECT _FUNC_(1234567891.1234567891, 4);

+ 1234567891.1234

+ > SELECT _FUNC_(1234567891.1234567891, -4);

+ 123456

+ > SELECT _FUNC_(1234567891.1234567891);

+ 1234567891

+ """)

+// scalastyle:on line.size.limit

+case class Truncate(number: Expression, scale: Expression)

+ extends BinaryExpression with ImplicitCastInputTypes {

+

+ def this(number: Expression) = this(number, Literal(0))

+

+ override def left: Expression = number

+ override def right: Expression = scale

+

+ override def inputTypes: Seq[AbstractDataType] =

+Seq(TypeCollection(DoubleType, FloatType, DecimalType), IntegerType)

+

+ override def checkInputDataTypes(): TypeCheckResult = {

+super.checkInputDataTypes() match {

+ case TypeCheckSuccess =>

+if (scale.foldable) {

--- End diff --

Same to `RoundBase`. only support foldable:

https://github.com/apache/spark/blob/c7156943a2a32ba57e67aa6d8fa7035a09847e07/sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/expressions/mathExpressions.scala#L1076

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22443: [SPARK-25339][TEST] Refactor FilterPushdownBenchmark

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22443 Jenkins, retest this please. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22443: [SPARK-25339][TEST] Refactor FilterPushdownBenchmark

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22443 retest this please --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22461: [SPARK-25453] OracleIntegrationSuite IllegalArgumentExce...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22461 cc @maropu --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22461: [SPARK-25453] OracleIntegrationSuite IllegalArgumentExce...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22461 Could you add `[TEST]` to title, otherwise LGTM. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22443: [SPARK-25339][TEST] Refactor FilterPushdownBenchm...

Github user wangyum commented on a diff in the pull request:

https://github.com/apache/spark/pull/22443#discussion_r218642335

--- Diff:

sql/core/src/test/scala/org/apache/spark/sql/execution/benchmark/FilterPushdownBenchmark.scala

---

@@ -17,29 +17,28 @@

package org.apache.spark.sql.execution.benchmark

-import java.io.{File, FileOutputStream, OutputStream}

+import java.io.File

import scala.util.{Random, Try}

-import org.scalatest.{BeforeAndAfterEachTestData, Suite, TestData}

-

import org.apache.spark.SparkConf

-import org.apache.spark.SparkFunSuite

import org.apache.spark.sql.{DataFrame, SparkSession}

import org.apache.spark.sql.functions.monotonically_increasing_id

import org.apache.spark.sql.internal.SQLConf

import org.apache.spark.sql.internal.SQLConf.ParquetOutputTimestampType

import org.apache.spark.sql.types.{ByteType, Decimal, DecimalType,

TimestampType}

-import org.apache.spark.util.{Benchmark, Utils}

+import org.apache.spark.util.{Benchmark, BenchmarkBase =>

FileBenchmarkBase, Utils}

/**

* Benchmark to measure read performance with Filter pushdown.

- * To run this:

- * build/sbt "sql/test-only *FilterPushdownBenchmark"

- *

- * Results will be written to

"benchmarks/FilterPushdownBenchmark-results.txt".

+ * To run this benchmark:

+ * 1. without sbt: bin/spark-submit --class

+ * 2. build/sbt "sql/test:runMain "

+ * 3. generate result: SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt

"sql/test:runMain "

--- End diff --

Thanks

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22443: [SPARK-25339][TEST] Refactor FilterPushdownBenchm...

Github user wangyum commented on a diff in the pull request:

https://github.com/apache/spark/pull/22443#discussion_r218308258

--- Diff:

sql/core/src/test/scala/org/apache/spark/sql/execution/benchmark/FilterPushdownBenchmark.scala

---

@@ -17,29 +17,28 @@

package org.apache.spark.sql.execution.benchmark

-import java.io.{File, FileOutputStream, OutputStream}

+import java.io.File

import scala.util.{Random, Try}

-import org.scalatest.{BeforeAndAfterEachTestData, Suite, TestData}

-

import org.apache.spark.SparkConf

-import org.apache.spark.SparkFunSuite

import org.apache.spark.sql.{DataFrame, SparkSession}

import org.apache.spark.sql.functions.monotonically_increasing_id

import org.apache.spark.sql.internal.SQLConf

import org.apache.spark.sql.internal.SQLConf.ParquetOutputTimestampType

import org.apache.spark.sql.types.{ByteType, Decimal, DecimalType,

TimestampType}

-import org.apache.spark.util.{Benchmark, Utils}

+import org.apache.spark.util.{Benchmark, BenchmarkBase =>

FileBenchmarkBase, Utils}

/**

* Benchmark to measure read performance with Filter pushdown.

- * To run this:

- * build/sbt "sql/test-only *FilterPushdownBenchmark"

- *

- * Results will be written to

"benchmarks/FilterPushdownBenchmark-results.txt".

+ * To run this benchmark:

+ * 1. without sbt: bin/spark-submit --class

+ * 2. build/sbt "sql/test:runMain "

+ * 3. generate result: SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt

"sql/test:runMain "

--- End diff --

Yes, It can print the output to console if `SPARK_GENERATE_BENCHMARK_FILES`

not set.

https://github.com/apache/spark/blob/4e8ac6edd5808ca8245b39d804c6d4f5ea9d0d36/core/src/main/scala/org/apache/spark/util/Benchmark.scala#L59-L63

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22446: [SPARK-19550][DOC][FOLLOW-UP] Update tuning.md to use JD...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22446 Yes. I can't find more references to the old JDK docs also. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22446: [SPARK-19550][DOC][FOLLOW-UP] Update tuning.md to use JD...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22446 Some references to Java 7, Some references to Java 6. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22446: [SPARK-19550][DOC][FOLLOW-UP] Update tuning.md to use JD...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22446 cc @srowen --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22446: [SPARK-19550][DOC][FOLLOW-UP] Update tuning.md to...

GitHub user wangyum opened a pull request: https://github.com/apache/spark/pull/22446 [SPARK-19550][DOC][FOLLOW-UP] Update tuning.md to use JDK8 ## What changes were proposed in this pull request? Update `tuning.md` and `building-spark.md` to use JDK8. ## How was this patch tested? manual tests You can merge this pull request into a Git repository by running: $ git pull https://github.com/wangyum/spark java8 Alternatively you can review and apply these changes as the patch at: https://github.com/apache/spark/pull/22446.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #22446 commit f8924bb0ce876beb35309ea51f1c1c42497d26e0 Author: Yuming Wang Date: 2018-09-18T01:14:04Z To java 8 --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22443: [SPARK-25339][TESTS] Refactor FilterPushdownBenchmark

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22443 cc @dongjoon-hyun --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22443: [SPARK-25339][TESTS] Refactor FilterPushdownBench...

GitHub user wangyum opened a pull request: https://github.com/apache/spark/pull/22443 [SPARK-25339][TESTS] Refactor FilterPushdownBenchmark ## What changes were proposed in this pull request? Refactor `FilterPushdownBenchmark` use `main` method. we can use 3 ways to run this test now: 1. bin/spark-submit --class org.apache.spark.sql.execution.benchmark.FilterPushdownBenchmark spark-sql_2.11-2.5.0-SNAPSHOT-tests.jar 2. build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.FilterPushdownBenchmark" 3. SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.FilterPushdownBenchmark" The method 2 and the method 3 do not need to compile the `spark-sql_*-tests.jar` package. So these two methods are mainly for developers to quickly do benchmark. ## How was this patch tested? manual tests You can merge this pull request into a Git repository by running: $ git pull https://github.com/wangyum/spark SPARK-25339 Alternatively you can review and apply these changes as the patch at: https://github.com/apache/spark/pull/22443.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #22443 commit 7b50eb5225b664c43cd5dd66a49024741d2ca19c Author: Yuming Wang Date: 2018-09-17T16:51:03Z Refactor FilterPushdownBenchmark commit 6e7cfc85d4d7719ee31254317b0ca81173be7128 Author: Yuming Wang Date: 2018-09-17T16:53:55Z Revert numRows --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22435: [SPARK-25423][SQL] Output "dataFilters" in DataSo...

Github user wangyum commented on a diff in the pull request:

https://github.com/apache/spark/pull/22435#discussion_r217934875

--- Diff:

sql/core/src/test/scala/org/apache/spark/sql/execution/DataSourceScanExecRedactionSuite.scala

---

@@ -83,4 +83,20 @@ class DataSourceScanExecRedactionSuite extends QueryTest

with SharedSQLContext {

}

}

+ test("FileSourceScanExec metadata") {

+withTempDir { dir =>

+ val basePath = dir.getCanonicalPath

+ spark.range(0, 10).toDF("a").write.parquet(new Path(basePath,

"foo=1").toString)

+ val df = spark.read.parquet(basePath).filter("a = 1")

--- End diff --

Thanks @dongjoon-hyun I fixed it.

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22435: [SPARK-25423][SQL] Output "dataFilters" in DataSo...

GitHub user wangyum opened a pull request: https://github.com/apache/spark/pull/22435 [SPARK-25423][SQL] Output "dataFilters" in DataSourceScanExec.metadata ## What changes were proposed in this pull request? Output `dataFilters` in `DataSourceScanExec.metadata`. ## How was this patch tested? unit tests You can merge this pull request into a Git repository by running: $ git pull https://github.com/wangyum/spark SPARK-25423 Alternatively you can review and apply these changes as the patch at: https://github.com/apache/spark/pull/22435.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #22435 commit 830e1881b4ef4d9bb661d8b6635470e2596d4eaa Author: Yuming Wang Date: 2018-09-16T16:31:32Z Output "dataFilters" in DataSourceScanExec.metadata --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22427: [SPARK-25438][SQL][TEST] Fix FilterPushdownBenchm...

Github user wangyum commented on a diff in the pull request:

https://github.com/apache/spark/pull/22427#discussion_r217918733

--- Diff: sql/core/benchmarks/FilterPushdownBenchmark-results.txt ---

@@ -2,737 +2,669 @@

Pushdown for many distinct value case

-Java HotSpot(TM) 64-Bit Server VM 1.8.0_151-b12 on Mac OS X 10.12.6

-Intel(R) Core(TM) i7-7820HQ CPU @ 2.90GHz

-

+OpenJDK 64-Bit Server VM 1.8.0_181-b13 on Linux 3.10.0-862.3.2.el7.x86_64

+Intel(R) Xeon(R) CPU E5-2670 v2 @ 2.50GHz

Select 0 string row (value IS NULL): Best/Avg Time(ms)Rate(M/s)

Per Row(ns) Relative

-Parquet Vectorized8970 / 9122 1.8

570.3 1.0X

-Parquet Vectorized (Pushdown) 471 / 491 33.4

30.0 19.0X

-Native ORC Vectorized 7661 / 7853 2.1

487.0 1.2X

-Native ORC Vectorized (Pushdown) 1134 / 1161 13.9

72.1 7.9X

-

-Java HotSpot(TM) 64-Bit Server VM 1.8.0_151-b12 on Mac OS X 10.12.6

-Intel(R) Core(TM) i7-7820HQ CPU @ 2.90GHz

+Parquet Vectorized 11405 / 11485 1.4

725.1 1.0X

+Parquet Vectorized (Pushdown) 675 / 690 23.3

42.9 16.9X

+Native ORC Vectorized 7127 / 7170 2.2

453.1 1.6X

+Native ORC Vectorized (Pushdown) 519 / 541 30.3

33.0 22.0X

+OpenJDK 64-Bit Server VM 1.8.0_181-b13 on Linux 3.10.0-862.3.2.el7.x86_64

+Intel(R) Xeon(R) CPU E5-2670 v2 @ 2.50GHz

Select 0 string row ('7864320' < value < '7864320'): Best/Avg Time(ms)

Rate(M/s) Per Row(ns) Relative

-Parquet Vectorized9246 / 9297 1.7

587.8 1.0X

-Parquet Vectorized (Pushdown) 480 / 488 32.8

30.5 19.3X

-Native ORC Vectorized 7838 / 7850 2.0

498.3 1.2X

-Native ORC Vectorized (Pushdown) 1054 / 1118 14.9

67.0 8.8X

-

-Java HotSpot(TM) 64-Bit Server VM 1.8.0_151-b12 on Mac OS X 10.12.6

-Intel(R) Core(TM) i7-7820HQ CPU @ 2.90GHz

+Parquet Vectorized 11457 / 11473 1.4

728.4 1.0X

+Parquet Vectorized (Pushdown) 656 / 686 24.0

41.7 17.5X

+Native ORC Vectorized 7328 / 7342 2.1

465.9 1.6X

+Native ORC Vectorized (Pushdown) 539 / 565 29.2

34.2 21.3X

+OpenJDK 64-Bit Server VM 1.8.0_181-b13 on Linux 3.10.0-862.3.2.el7.x86_64

+Intel(R) Xeon(R) CPU E5-2670 v2 @ 2.50GHz

Select 1 string row (value = '7864320'): Best/Avg Time(ms)Rate(M/s)

Per Row(ns) Relative

-Parquet Vectorized8989 / 9100 1.7

571.5 1.0X

-Parquet Vectorized (Pushdown) 448 / 467 35.1

28.5 20.1X

-Native ORC Vectorized 7680 / 7768 2.0

488.3 1.2X

-Native ORC Vectorized (Pushdown) 1067 / 1118 14.7

67.8 8.4X

-

-Java HotSpot(TM) 64-Bit Server VM 1.8.0_151-b12 on Mac OS X 10.12.6

-Intel(R) Core(TM) i7-7820HQ CPU @ 2.90GHz

+Parquet Vectorized 11878 / 11888 1.3

755.2 1.0X

+Parquet Vectorized (Pushdown) 630 / 654 25.0

40.1 18.9X

+Native ORC Vectorized 7342 / 7362 2.1

466.8 1.6X

+Native ORC Vectorized (Pushdown) 519 / 537 30.3

33.0 22.9X

+OpenJDK 64-Bit Server VM 1.8.0_181-b13 on Linux 3.10.0-862.3.2.el7.x86_64

+Intel(R) Xeon(R) CPU E5-2670 v2 @ 2.50GHz

Select 1 string row (value <=> '7864320'): Best/Avg Time(ms)Rate(M/s)

Per Row(ns) Relative

-Parquet Vectorized9115 / 9266 1.7

579.5 1.0X

-Parquet Vectorized (Pushdown) 466 / 492 33.7

29.7 19.5X

-Native ORC Vectorized 7800 / 7914 2.0

[GitHub] spark pull request #22426: [SPARK-25436] Bump master branch version to 2.5.0...

Github user wangyum commented on a diff in the pull request: https://github.com/apache/spark/pull/22426#discussion_r217876251 --- Diff: docs/_config.yml --- @@ -14,8 +14,8 @@ include: # These allow the documentation to be updated with newer releases # of Spark, Scala, and Mesos. -SPARK_VERSION: 2.4.0-SNAPSHOT -SPARK_VERSION_SHORT: 2.4.0 +SPARK_VERSION: 2.5.0-SNAPSHOT +SPARK_VERSION_SHORT: 2.5.0-SNAPSHOT --- End diff -- 2.5.0-SNAPSHOT -> 2.5.0? --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22420: SPARK-25429

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22420 Could you update the PR title. The PR title should be of the form [SPARK-][COMPONENT] Title. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22419: [SPARK-23906][SQL] Add UDF TRUNCATE(number)

GitHub user wangyum opened a pull request: https://github.com/apache/spark/pull/22419 [SPARK-23906][SQL] Add UDF TRUNCATE(number) ## What changes were proposed in this pull request? Add UDF `TRUNCATE(number)`: ```sql > SELECT TRUNCATE(1234567891.1234567891, 4); 1234567891.1234 > SELECT TRUNCATE(1234567891.1234567891, -4); 123456 > SELECT TRUNCATE(1234567891.1234567891, 0); 1234567891 > SELECT TRUNCATE(1234567891.1234567891); 1234567891 ``` It's similar to MySQL [TRUNCATE(X, D)](https://dev.mysql.com/doc/refman/8.0/en/mathematical-functions.html#function_truncate) ## How was this patch tested? unit tests You can merge this pull request into a Git repository by running: $ git pull https://github.com/wangyum/spark SPARK-23906 Alternatively you can review and apply these changes as the patch at: https://github.com/apache/spark/pull/22419.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #22419 commit b5365e28bf40448bb3cbd59668f316c7e5a3809a Author: Yuming Wang Date: 2018-09-14T08:23:50Z Support truncate number --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #18106: [SPARK-20754][SQL] Support TRUNC (number)

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/18106 @dongjoon-hyun Actually `TRUNC (number)` not resolved. I will fix it soon. https://issues.apache.org/jira/browse/SPARK-23906 --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #18106: [SPARK-20754][SQL] Support TRUNC (number)

Github user wangyum closed the pull request at: https://github.com/apache/spark/pull/18106 --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22038: [SPARK-25056][SQL] Unify the InConversion and BinaryComp...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22038 @mgaido91 I updated Postgres and Hive to https://github.com/apache/spark/pull/22038#issuecomment-412737994 @gatorsmile Is this change make sense? --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22358: [SPARK-25366][SQL]Zstd and brotli CompressionCode...

Github user wangyum commented on a diff in the pull request: https://github.com/apache/spark/pull/22358#discussion_r216873177 --- Diff: docs/sql-programming-guide.md --- @@ -965,6 +965,8 @@ Configuration of Parquet can be done using the `setConf` method on `SparkSession `parquet.compression` is specified in the table-specific options/properties, the precedence would be `compression`, `parquet.compression`, `spark.sql.parquet.compression.codec`. Acceptable values include: none, uncompressed, snappy, gzip, lzo, brotli, lz4, zstd. +Note that `zstd` needs to install `ZStandardCodec` before Hadoop 2.9.0, `brotli` needs to install +`brotliCodec`. --- End diff -- @HyukjinKwon How about adding a link? Users may not know where to download it. ``` `brotliCodec` -> [`brotli-codec`](https://github.com/rdblue/brotli-codec) ``` --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22387: [SPARK-25313][SQL][FOLLOW-UP][BACKPORT-2.3] Fix I...

Github user wangyum closed the pull request at: https://github.com/apache/spark/pull/22387 --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22387: [SPARK-25313][SQL][FOLLOW-UP][BACKPORT-2.3] Fix InsertIn...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22387 retest this please --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #20504: [SPARK-23332][SQL] Update SQLQueryTestSuite to su...

Github user wangyum closed the pull request at: https://github.com/apache/spark/pull/20504 --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22387: [SPARK-25313][SQL][FOLLOW-UP][BACKPORT-2.3] Fix InsertIn...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22387 retest this please --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #20504: [SPARK-23332][SQL] Update SQLQueryTestSuite to support a...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/20504 I will close it now. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22387: [SPARK-25313][SQL][FOLLOW-UP][BACKPORT-2.3] Fix I...

GitHub user wangyum opened a pull request: https://github.com/apache/spark/pull/22387 [SPARK-25313][SQL][FOLLOW-UP][BACKPORT-2.3] Fix InsertIntoHiveDirCommand output schema in Parquet issue ## What changes were proposed in this pull request? Backport https://github.com/apache/spark/pull/22359 to branch-2.3. You can merge this pull request into a Git repository by running: $ git pull https://github.com/wangyum/spark SPARK-25313-FOLLOW-UP-branch-2.3 Alternatively you can review and apply these changes as the patch at: https://github.com/apache/spark/pull/22387.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #22387 commit a7b857c69fa20615108413d6f17a87978ca44ae2 Author: Yuming Wang Date: 2018-09-11T02:02:55Z [SPARK-25313][SQL][FOLLOW-UP] Fix InsertIntoHiveDirCommand output schema in Parquet issue --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22372: [SPARK-25385][BUILD] Upgrade Hadoop 3.1 jackson v...

Github user wangyum closed the pull request at: https://github.com/apache/spark/pull/22372 --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22372: [SPARK-25385][BUILD] Upgrade Hadoop 3.1 jackson version ...

Github user wangyum commented on the issue:

https://github.com/apache/spark/pull/22372

I did a simple test for 2.9.6. It works well. But that pr for 3.0. It means

that a simple test on branch 2.4 will fail:

```scala

scala> spark.range(10).write.parquet("/tmp/spark/parquet")

com.fasterxml.jackson.databind.JsonMappingException: Incompatible Jackson

version: 2.7.8

at

com.fasterxml.jackson.module.scala.JacksonModule$class.setupModule(JacksonModule.scala:64)

at

com.fasterxml.jackson.module.scala.DefaultScalaModule.setupModule(DefaultScalaModule.scala:19)

at

com.fasterxml.jackson.databind.ObjectMapper.registerModule(ObjectMapper.java:730)

at

org.apache.spark.rdd.RDDOperationScope$.(RDDOperationScope.scala:82)

at

org.apache.spark.rdd.RDDOperationScope$.(RDDOperationScope.scala)

```

How about merge this pr to branch-2.4 only?

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22372: [SPARK-25385][BUILD] Upgrade Hadoop 3.1 jackson v...

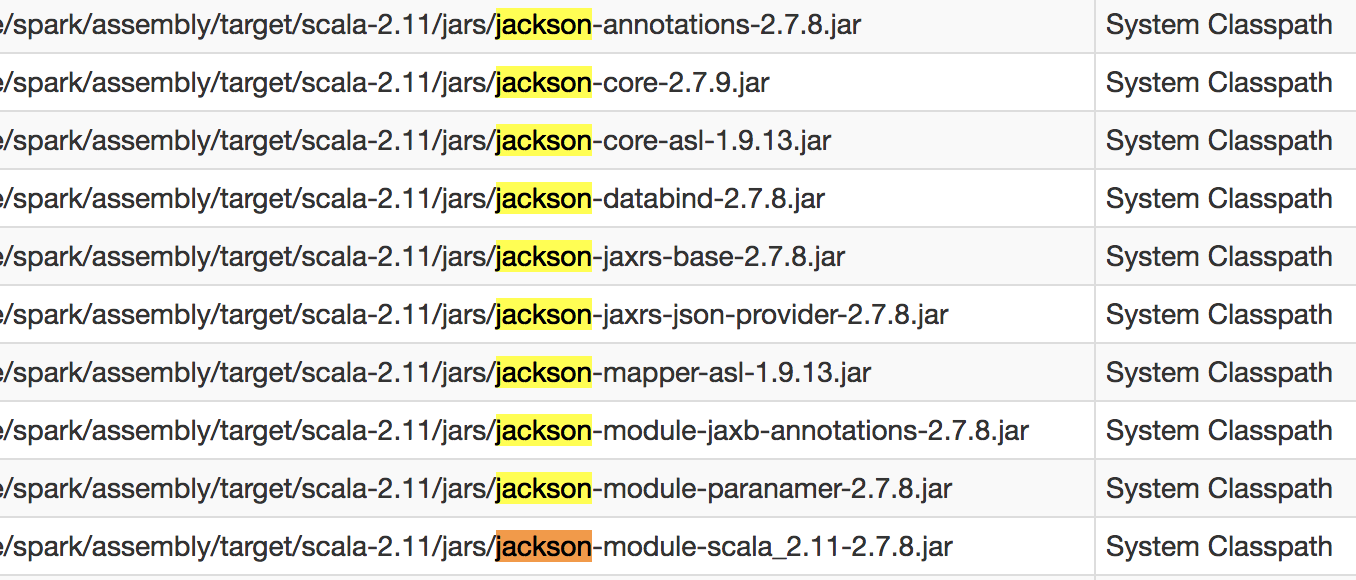

Github user wangyum commented on a diff in the pull request: https://github.com/apache/spark/pull/22372#discussion_r216208966 --- Diff: pom.xml --- @@ -2694,6 +2694,8 @@ 3.1.0 2.12.0 3.4.9 +2.7.8 + 2.7.8 --- End diff -- We should `clean` first for `package`: ```sh build/sbt clean package -Phadoop-3.1 ``` and then check: `assembly/target/scala-2.11/jars/`:  --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22372: [SPARK-25385][BUILD] Upgrade Hadoop 3.1 jackson v...

Github user wangyum commented on a diff in the pull request: https://github.com/apache/spark/pull/22372#discussion_r216208793 --- Diff: pom.xml --- @@ -2694,6 +2694,8 @@ 3.1.0 2.12.0 3.4.9 +2.7.8 + 2.7.8 --- End diff -- ```sh build/sbt dependency-tree -Phadoop-3.1 ```  --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22359: [SPARK-25313][SQL][FOLLOW-UP] Fix InsertIntoHiveDirComma...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22359 cc @cloud-fan --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22372: [SPARK-25385][BUILD] Upgrade Hadoop 3.1 jackson version ...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22372 We do not have jenkins tests for 3.1 profile: https://github.com/apache/spark/blob/395860a986987886df6d60fd9b26afd818b2cb39/dev/run-tests.py#L307-L310 --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22372: [SPARK-25385][BUILD] Upgrade Hadoop 3.1 jackson v...

GitHub user wangyum opened a pull request:

https://github.com/apache/spark/pull/22372

[SPARK-25385][BUILD] Upgrade Hadoop 3.1 jackson version to 2.7.8

## What changes were proposed in this pull request?

Upgrade Hadoop 3.1 jackson version to 2.7.8 to fix `JsonMappingException:

Incompatible Jackson version: 2.7.8`.

https://github.com/apache/hadoop/blob/release-3.1.0-RC1/hadoop-project/pom.xml#L72

## How was this patch tested?

manual tests:

```sh

export SPARK_PREPEND_CLASSES=true

build/sbt clean package -Phadoop-3.1

spark-shell

scala> spark.range(10).write.mode("overwrite").parquet("/tmp/spark/parquet")

```

You can merge this pull request into a Git repository by running:

$ git pull https://github.com/wangyum/spark SPARK-25385

Alternatively you can review and apply these changes as the patch at:

https://github.com/apache/spark/pull/22372.patch

To close this pull request, make a commit to your master/trunk branch

with (at least) the following in the commit message:

This closes #22372

commit f68083ab07df6fedf8a30c94e706a74e0c620694

Author: Yuming Wang

Date: 2018-09-09T11:51:39Z

Upgrade jackson version to 2.7.8

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22368: [SPARK-25368][SQL] Incorrect predicate pushdown returns ...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22368 retest this please --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22368: [SPARK-25368][SQL] Incorrect predicate pushdown r...

GitHub user wangyum opened a pull request:

https://github.com/apache/spark/pull/22368

[SPARK-25368][SQL] Incorrect predicate pushdown returns wrong result

## What changes were proposed in this pull request?

How to reproduce:

```scala

val df1 = spark.createDataFrame(Seq(

(1, 1)

)).toDF("a", "b").withColumn("c", lit(null).cast("int"))

val df2 = df1.union(df1).withColumn("d",

spark_partition_id).filter($"c".isNotNull)

df2.show

+---+---++---+

| a| b| c| d|

+---+---++---+

| 1| 1|null| 0|

| 1| 1|null| 1|

+---+---++---+

```

`filter($"c".isNotNull)`changed to `(null <=> c#10)` before

https://github.com/apache/spark/pull/19201, but it changed to `(c#10 = null)`

since https://github.com/apache/spark/pull/20155. This pr revert it to `(null

<=> c#10)` to fix this issue.

## How was this patch tested?

unit tests

You can merge this pull request into a Git repository by running:

$ git pull https://github.com/wangyum/spark SPARK-25368

Alternatively you can review and apply these changes as the patch at:

https://github.com/apache/spark/pull/22368.patch

To close this pull request, make a commit to your master/trunk branch

with (at least) the following in the commit message:

This closes #22368

commit 86b9b7892c94be68145453f9519e35a3574fe568

Author: Yuming Wang

Date: 2018-09-09T03:46:18Z

Fix SPARK-25368

commit 865e0af572edad7fd775c25e317055ffa0df2a08

Author: Yuming Wang

Date: 2018-09-09T04:22:29Z

Fix InferFiltersFromConstraintsSuite test error

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22359: [SPARK-25313][SQL][FOLLOW-UP] Fix InsertIntoHiveD...

Github user wangyum commented on a diff in the pull request:

https://github.com/apache/spark/pull/22359#discussion_r216117397

--- Diff:

sql/hive/src/test/scala/org/apache/spark/sql/hive/execution/HiveDDLSuite.scala

---

@@ -803,6 +803,25 @@ class HiveDDLSuite

}

}

+ test("Insert overwrite directory should output correct schema") {

--- End diff --

Also added here?

https://github.com/apache/spark/blob/8e60b98239be63555644e013417cda7175baf984/sql/hive/src/test/scala/org/apache/spark/sql/hive/execution/HiveDDLSuite.scala#L758

https://github.com/apache/spark/blob/8e60b98239be63555644e013417cda7175baf984/sql/hive/src/test/scala/org/apache/spark/sql/hive/execution/HiveDDLSuite.scala#L782

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22358: [SPARK-25366][SQL]Zstd and brotil CompressionCode...

Github user wangyum commented on a diff in the pull request: https://github.com/apache/spark/pull/22358#discussion_r215897048 --- Diff: docs/sql-programming-guide.md --- @@ -964,7 +964,7 @@ Configuration of Parquet can be done using the `setConf` method on `SparkSession Sets the compression codec used when writing Parquet files. If either `compression` or `parquet.compression` is specified in the table-specific options/properties, the precedence would be `compression`, `parquet.compression`, `spark.sql.parquet.compression.codec`. Acceptable values include: -none, uncompressed, snappy, gzip, lzo, brotli, lz4, zstd. --- End diff -- `none, uncompressed, snappy, gzip, lzo, brotli(need install brotli-codec), lz4, zstd(since Hadoop 2.9.0)` https://jira.apache.org/jira/browse/HADOOP-13578 https://github.com/rdblue/brotli-codec https://jira.apache.org/jira/browse/HADOOP-13126 --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22358: [SPARK-25366][SQL]Zstd and brotil CompressionCode...

Github user wangyum commented on a diff in the pull request: https://github.com/apache/spark/pull/22358#discussion_r215874603 --- Diff: docs/sql-programming-guide.md --- @@ -964,7 +964,7 @@ Configuration of Parquet can be done using the `setConf` method on `SparkSession Sets the compression codec used when writing Parquet files. If either `compression` or `parquet.compression` is specified in the table-specific options/properties, the precedence would be `compression`, `parquet.compression`, `spark.sql.parquet.compression.codec`. Acceptable values include: -none, uncompressed, snappy, gzip, lzo, brotli, lz4, zstd. --- End diff -- I prefer `none, uncompressed, snappy, gzip, lzo, brotli(need install ...), lz4, zstd(need install ...)`. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22359: [SPARK-25313][SQL]FOLLOW-UP] Fix InsertIntoHiveDirComman...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22359 cc @gengliangwang --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22359: [SPARK-25313][SQL]FOLLOW-UP] Fix InsertIntoHiveDi...

GitHub user wangyum opened a pull request: https://github.com/apache/spark/pull/22359 [SPARK-25313][SQL]FOLLOW-UP] Fix InsertIntoHiveDirCommand output schema issue ## What changes were proposed in this pull request? Fix `InsertIntoHiveDirCommand` output schema issue. ## How was this patch tested? unit tests You can merge this pull request into a Git repository by running: $ git pull https://github.com/wangyum/spark SPARK-25313-FOLLOW-UP Alternatively you can review and apply these changes as the patch at: https://github.com/apache/spark/pull/22359.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #22359 commit ff78fdb017d87a8320e8be33c4beceffbdaa3ab4 Author: Yuming Wang Date: 2018-09-07T06:08:47Z Fix InsertIntoHiveDirCommand output schema --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22287: [SPARK-25135][SQL] FileFormatWriter should respec...

Github user wangyum closed the pull request at: https://github.com/apache/spark/pull/22287 --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22320: [SPARK-25313][SQL]Fix regression in FileFormatWriter out...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22320 @gengliangwang We need backport this pr to branch-2.3. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22327: [SPARK-25330][BUILD] Revert Hadoop 2.7 to 2.7.3

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22327 How about revert it to branch-2.3 as we are going to release 2.3.2? We have time to fix it before releasing 2.4.0. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22320: [SPARK-25313][SQL]Fix regression in FileFormatWriter out...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22320 retest this please --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #21404: [SPARK-24360][SQL] Support Hive 3.0 metastore

Github user wangyum commented on a diff in the pull request:

https://github.com/apache/spark/pull/21404#discussion_r215232389

--- Diff:

sql/hive/src/main/scala/org/apache/spark/sql/hive/client/IsolatedClientLoader.scala

---

@@ -99,6 +99,7 @@ private[hive] object IsolatedClientLoader extends Logging

{

case "2.1" | "2.1.0" | "2.1.1" => hive.v2_1

case "2.2" | "2.2.0" => hive.v2_2

case "2.3" | "2.3.0" | "2.3.1" | "2.3.2" | "2.3.3" => hive.v2_3

+case "3.0" | "3.0.0" => hive.v3_0

--- End diff --

@dongjoon-hyun Please update sql-programming-guide.md:

https://github.com/apache/spark/blob/05974f9431e9718a5f331a9892b7d81aca8387a6/docs/sql-programming-guide.md#L1217

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #17174: [SPARK-19145][SQL] Timestamp to String casting is slowin...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/17174 @tanejagagan Are you still working on? --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22320: [SPARK-25313][SQL]Fix regression in FileFormatWri...

Github user wangyum commented on a diff in the pull request:

https://github.com/apache/spark/pull/22320#discussion_r215106921

--- Diff:

sql/core/src/main/scala/org/apache/spark/sql/execution/datasources/InsertIntoHadoopFsRelationCommand.scala

---

@@ -56,7 +56,7 @@ case class InsertIntoHadoopFsRelationCommand(

mode: SaveMode,

catalogTable: Option[CatalogTable],

fileIndex: Option[FileIndex],

-outputColumns: Seq[Attribute])

+outputColumnNames: Seq[String])

extends DataWritingCommand {

import

org.apache.spark.sql.catalyst.catalog.ExternalCatalogUtils.escapePathName

--- End diff --

Line 66: `query.schema` should be

`DataWritingCommand.logicalPlanSchemaWithNames(query, outputColumnNames)`.

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22228: [SPARK-25124][ML]VectorSizeHint setSize and getSize don'...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/8 This is already merged, @huaxingao Could you please close this PR? --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22179: [SPARK-23131][BUILD] Upgrade Kryo to 4.0.2

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22179 Thanks, @dongjoon-hyun --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22327: [SPARK-25330][BUILD] Revert Hadoop 2.7 to 2.7.3

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22327 Yes. This is a Hadoop thing. I try to build Hadoop 2.7.7 with [`Configuration.getRestrictParserDefault(Object resource)`](https://github.com/apache/hadoop/blob/release-2.7.7-RC0/hadoop-common-project/hadoop-common/src/main/java/org/apache/hadoop/conf/Configuration.java#L236) = true and false. It succeeded when `Configuration.getRestrictParserDefault(Object resource)=false`, but failed when `Configuration.getRestrictParserDefault(Object resource)=true`. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22327: [SPARK-25330][BUILD] Revert Hadoop 2.7 to 2.7.3

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22327 cc @srowen @steveloughran --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22327: [SPARK-25330][BUILD] Revert Hadoop 2.7 to 2.7.3

GitHub user wangyum opened a pull request: https://github.com/apache/spark/pull/22327 [SPARK-25330][BUILD] Revert Hadoop 2.7 to 2.7.3 ## What changes were proposed in this pull request? Revert Hadoop 2.7 to 2.7.3 to fix permission issue. The issue occurred in this commit: https://github.com/apache/hadoop/commit/feb886f2093ea5da0cd09c69bd1360a335335c86 ## How was this patch tested? unit tests and manual tests. You can merge this pull request into a Git repository by running: $ git pull https://github.com/wangyum/spark SPARK-25330 Alternatively you can review and apply these changes as the patch at: https://github.com/apache/spark/pull/22327.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #22327 commit f89448b7b0598a59f750a324e869e7768cfedbc1 Author: Yuming Wang Date: 2018-09-04T08:31:13Z Revert Hadoop 2.7 to 2.7.3 --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22320: [SPARK-25313][SQL]Fix regression in FileFormatWri...

Github user wangyum commented on a diff in the pull request:

https://github.com/apache/spark/pull/22320#discussion_r214786494

--- Diff:

sql/hive/src/test/scala/org/apache/spark/sql/hive/execution/HiveDDLSuite.scala

---

@@ -754,6 +754,47 @@ class HiveDDLSuite

}

}

+ test("Insert overwrite Hive table should output correct schema") {

+withTable("tbl", "tbl2") {

+ withView("view1") {

+spark.sql("CREATE TABLE tbl(id long)")

+spark.sql("INSERT OVERWRITE TABLE tbl SELECT 4")

+spark.sql("CREATE VIEW view1 AS SELECT id FROM tbl")

+spark.sql("CREATE TABLE tbl2(ID long)")

+spark.sql("INSERT OVERWRITE TABLE tbl2 SELECT ID FROM view1")

+checkAnswer(spark.table("tbl2"), Seq(Row(4)))

--- End diff --

Add schema assert please. We can read data since

[SPARK-25132](https://issues.apache.org/jira/browse/SPARK-25132).

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22179: [SPARK-23131][BUILD] Upgrade Kryo to 4.0.2

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22179 retest this please --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22179: [SPARK-23131][BUILD] Upgrade Kryo to 4.0.2

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22179 retest this please --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22179: [SPARK-23131][BUILD] Upgrade Kryo to 4.0.2

Github user wangyum commented on the issue:

https://github.com/apache/spark/pull/22179

Sorry @dongjoon-hyun I only reproduce one test.

kryo-parametrized-type-inheritance related to language. It seems scala

can't reproduce it:

```scala

val ser = new KryoSerializer(new

SparkConf).newInstance().asInstanceOf[KryoSerializerInstance]

class BaseType[R] {}

class CollectionType(val child: BaseType[_]*) extends BaseType[Boolean]

{

val children: List[BaseType[_]] = child.toList

}

class ValueType[R](val v: R) extends BaseType[R] {}

val value = new CollectionType(new ValueType("hello"))

ser.serialize(value)

```

SPARK-23131 may be related to data. I can't reproduce it:

```scala

def modelToString(model: GeneralizedLinearRegressionModel): (String,

String) = {

val os: ByteArrayOutputStream = new ByteArrayOutputStream()

val zos = new GZIPOutputStream(os)

val oo: ObjectOutputStream = new ObjectOutputStream(zos)

oo.writeObject(model)

oo.close()

zos.close()

os.close()

(model.uid, DatatypeConverter.printBase64Binary(os.toByteArray))

}

```

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22179: [SPARK-23131][BUILD] Upgrade Kryo to 4.0.2

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22179 @dongjoon-hyun I'm trying to add test cases. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #21987: [SPARK-25015][BUILD] Update Hadoop 2.7 to 2.7.7

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/21987 It seems that this change caused permission issue: ``` export HADOOP_PROXY_USER=user_a spark-sql ``` It will create dir `/tmp/hive-$%7Buser.name%7D/user_a/`. then change to other user: ``` export HADOOP_PROXY_USER=user_b spark-sql ``` exception: ```scala Exception in thread "main" java.lang.RuntimeException: org.apache.hadoop.security.AccessControlException: Permission denied: user=user_b, access=EXECUTE, inode="/tmp/hive-$%7Buser.name%7D/user_b/6b446017-a880-4f23-a8d0-b62f37d3c413":user_a:hadoop:drwx-- at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.check(FSPermissionChecker.java:319) at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkTraverse(FSPermissionChecker.java:259) at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:205) at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:190) at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkPermission(FSDirectory.java:1780) at org.apache.hadoop.hdfs.server.namenode.FSDirStatAndListingOp.getFileInfo(FSDirStatAndListingOp.java:108) ``` I'll do verification later. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22124: [SPARK-25135][SQL] Insert datasource table may al...

Github user wangyum closed the pull request at: https://github.com/apache/spark/pull/22124 --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22124: [SPARK-25135][SQL] Insert datasource table may all null ...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22124 close it. I have create a new PR. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22287: [SPARK-25135][SQL] FileFormatWriter should respec...

GitHub user wangyum opened a pull request: https://github.com/apache/spark/pull/22287 [SPARK-25135][SQL] FileFormatWriter should respect the schema of Hive ## What changes were proposed in this pull request? This pr fix `FileFormatWriter's dataSchema` should respect the schema of Hive. Otherwise there will be two issues. 1. Throwing an exception(This can be reproduce by added test case): ```scala java.util.NoSuchElementException: None.get at scala.None$.get(Option.scala:347) at scala.None$.get(Option.scala:345) at org.apache.spark.sql.execution.datasources.FileFormatWriter$$anonfun$3$$anonfun$4.apply(FileFormatWriter.scala:87) at org.apache.spark.sql.execution.datasources.FileFormatWriter$$anonfun$3$$anonfun$4.apply(FileFormatWriter.scala:87) ``` 2. The schema of the Hive table is not the same as the schema of the parquet file. ## How was this patch tested? - Unit tests for FileFormatWriter should respect the schema of Hive. - Manual tests for didn't break UI issues fixed by [SPARK-22834](https://issues.apache.org/jira/browse/SPARK-22834):  You can merge this pull request into a Git repository by running: $ git pull https://github.com/wangyum/spark SPARK-25135-view Alternatively you can review and apply these changes as the patch at: https://github.com/apache/spark/pull/22287.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #22287 commit b54953a8224aa0a7759289a83e876e3bfc166cb6 Author: Yuming Wang Date: 2018-08-30T17:46:02Z FileFormatWriter should respect the input query schema in HIVE --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22267: [SPARK-24716][TESTS][FOLLOW-UP] Test Hive metastore sche...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22267 cc @cloud-fan --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22267: [SPARK-24716][TESTS][FOLLOW-UP] Test Hive metasto...

GitHub user wangyum opened a pull request: https://github.com/apache/spark/pull/22267 [SPARK-24716][TESTS][FOLLOW-UP] Test Hive metastore schema and parquet schema are in different letter cases ## What changes were proposed in this pull request? Since https://github.com/apache/spark/pull/21696. Spark uses Parquet schema instead of Hive metastore schema to do pushdown. This change can avoid wrong records returned when Hive metastore schema and parquet schema are in different letter cases. This pr add a test case for it. More details: https://issues.apache.org/jira/browse/SPARK-25206 ## How was this patch tested? unit tests You can merge this pull request into a Git repository by running: $ git pull https://github.com/wangyum/spark SPARK-24716-TESTS Alternatively you can review and apply these changes as the patch at: https://github.com/apache/spark/pull/22267.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #22267 commit f5559f40dc7d3bfd80ced7090f617998094811bf Author: Yuming Wang Date: 2018-08-29T10:03:15Z Improvement test. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22250: [SPARK-25259][SQL] left/right join support push down dur...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22250 Fixed by [SPARK-21479](https://issues.apache.org/jira/browse/SPARK-21479). --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22250: [SPARK-25259][SQL] left/right join support push d...

Github user wangyum closed the pull request at: https://github.com/apache/spark/pull/22250 --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22263: [SPARK-25269][SQL] SQL interface support specify Storage...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22263 retest this please. --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22263: [SPARK-25269][SQL] SQL interface support specify ...

GitHub user wangyum opened a pull request: https://github.com/apache/spark/pull/22263 [SPARK-25269][SQL] SQL interface support specify StorageLevel when cache table ## What changes were proposed in this pull request? SQL interface support specify `StorageLevel` when cache table. The semantic is like this: ```sql CACHE DISK_ONLY TABLE tableName; ``` All supported `StorageLevel` is: https://github.com/apache/spark/blob/eefdf9f9dd8afde49ad7d4e230e2735eb817ab0a/core/src/main/scala/org/apache/spark/storage/StorageLevel.scala#L172-L183 ## How was this patch tested? unit tests and manual tests You can merge this pull request into a Git repository by running: $ git pull https://github.com/wangyum/spark SPARK-25269 Alternatively you can review and apply these changes as the patch at: https://github.com/apache/spark/pull/22263.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #22263 commit 9e058d1b402dec85982a880bf086268a1dcec99e Author: Yuming Wang Date: 2018-08-29T06:31:44Z SQL interface support specify StorageLevel when cache table --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22250: [SPARK-25259][SQL] left/right join support push down dur...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22250 cc @cloud-fan --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22177: [SPARK-25119][Web UI] stages in wrong order within job p...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22177 How about backport https://github.com/apache/spark/pull/21680? --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark issue #22250: [SPARK-25259][SQL] left/right join support push down dur...

Github user wangyum commented on the issue: https://github.com/apache/spark/pull/22250 retest this please --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22250: [SPARK-25259][SQL] left/right join support push d...

GitHub user wangyum opened a pull request:

https://github.com/apache/spark/pull/22250

[SPARK-25259][SQL] left/right join support push down during-join predicates

## What changes were proposed in this pull request?

Prepare data:

```sql

create temporary view EMPLOYEE as select * from values

("10", "HAAS", "A00"),