[GitHub] [airflow] github-actions[bot] commented on pull request #17578: Improve validation of Group id

github-actions[bot] commented on pull request #17578: URL: https://github.com/apache/airflow/pull/17578#issuecomment-898491348 The PR most likely needs to run the full matrix of tests because it modifies parts of the core of Airflow. However, committers might decide to merge it quickly and take the risk. If they don't merge it quickly, please rebase it to the latest main at your convenience, or amend the last commit of the PR, and push it with --force-with-lease. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@airflow.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [airflow] jedcunningham commented on a change in pull request #17578: Improve validation of Group id

jedcunningham commented on a change in pull request #17578:

URL: https://github.com/apache/airflow/pull/17578#discussion_r688550730

##

File path: airflow/utils/task_group.py

##

@@ -94,10 +95,15 @@ def __init__(

             self.used_group_ids: Set[Optional[str]] = set()
             self._parent_group = None
         else:
-            if not isinstance(group_id, str):
-                raise ValueError("group_id must be str")
-            if not group_id:
-                raise ValueError("group_id must not be empty")
+            if prefix_group_id:

Review comment:

One issue I see with removal is the possibility that nearly _all_ of the characters could be removed, leaving something meaningless behind.
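A minimal sketch of the two options under discussion, stripping invalid characters versus rejecting the ID, under an assumed character rule (word characters and dashes only). `sanitize_group_id` and `validate_group_id` are hypothetical helpers for illustration, not the code in this PR:

```python
import re

# Assumption for this sketch: a valid group_id contains only word characters
# (letters, digits, underscore) and dashes. Dots are excluded because prefixed
# task IDs are built as "<group_id>.<task_id>".
_VALID_GROUP_ID = re.compile(r"[\w-]+")
_INVALID_CHARS = re.compile(r"[^\w-]")


def sanitize_group_id(group_id: str) -> str:
    """Hypothetical strategy 1: silently strip every invalid character."""
    return _INVALID_CHARS.sub("", group_id)


def validate_group_id(group_id: object) -> str:
    """Hypothetical strategy 2: reject invalid IDs with an explicit error."""
    if not isinstance(group_id, str):
        raise ValueError("group_id must be str")
    if not group_id:
        raise ValueError("group_id must not be empty")
    # fullmatch (not match with "$") so a trailing newline is also rejected.
    if not _VALID_GROUP_ID.fullmatch(group_id):
        raise ValueError(
            f"group_id {group_id!r} may only contain letters, digits, "
            "underscores and dashes"
        )
    return group_id


if __name__ == "__main__":
    # Stripping keeps only "1" here, which says nothing about the original ID.
    print(sanitize_group_id("@@ 1 @@"))  # prints: 1
    try:
        validate_group_id("@@ 1 @@")
    except ValueError as exc:
        print(exc)  # the DAG author sees exactly what is wrong
```

Besides producing meaningless IDs, stripping can also make distinct inputs collide: in this sketch both `"a b"` and `"a!b"` become `"ab"`, whereas rejecting them fails loudly at DAG definition time.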


[GitHub] [airflow] ppatel8-wooliex commented on pull request #15599: Mask passwords and sensitive info in task logs and UI

ppatel8-wooliex commented on pull request #15599: URL: https://github.com/apache/airflow/pull/15599#issuecomment-898488185 You still need to set the two parameters below in airflow.cfg to hide secrets in logs and rendered templates:

hide_sensitive_var_conn_fields = True

sensitive_var_conn_names = 'password', 'secret', 'passwd', 'authorization', 'api_key', 'apikey', 'access_token'
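To illustrate what a list of sensitive names implies, here is a simplified sketch of name-based detection. It assumes the value is a plain comma-separated list and that a variable key or connection field counts as sensitive when any configured name appears in it, case-insensitively. This is not Airflow's implementation; `parse_sensitive_names` and `is_sensitive_key` are hypothetical helpers.

```python
from typing import FrozenSet


def parse_sensitive_names(raw: str) -> FrozenSet[str]:
    """Split a comma-separated config value into lower-cased, stripped names."""
    return frozenset(part.strip().lower() for part in raw.split(",") if part.strip())


def is_sensitive_key(key: str, names: FrozenSet[str]) -> bool:
    """True if any configured name occurs as a substring of the key."""
    lowered = key.lower()
    return any(name in lowered for name in names)


SENSITIVE = parse_sensitive_names(
    "password,secret,passwd,authorization,api_key,apikey,access_token"
)

if __name__ == "__main__":
    for key in ["db_password", "AWS_SECRET_KEY", "hostname"]:
        print(key, is_sensitive_key(key, SENSITIVE))
```

Note that in this sketch, quotes around each name (as in `'password', 'secret'`) would become part of the parsed names and stop them from matching, which is why the example value above is written without quotes.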

[GitHub] [airflow] jedcunningham commented on a change in pull request #17561: Simplify bug report template

jedcunningham commented on a change in pull request #17561: URL: https://github.com/apache/airflow/pull/17561#discussion_r688529800 ## File path: .github/ISSUE_TEMPLATE/bug_report.md ## @@ -8,89 +8,58 @@ assignees: '' --- - - **Apache Airflow version**: -**Apache Airflow Provider versions** (please include all providers that are relevant to your bug): + - - - **Apache Airflow version**: -**Apache Airflow Provider versions** (please include all providers that are relevant to your bug): + - ---> +**Apache Airflow Provider versions**: + + -**Kubernetes version (if you are using kubernetes)** (use `kubectl version`): +**Deployment**: -**Environment**: + -- **Cloud provider or hardware configuration**: -- **OS** (e.g. from /etc/os-release): -- **Kernel** (e.g. `uname -a`): -- **Install tools**: -- **Others**: + **What happened**: - + **What you expected to happen**: **How to reproduce it**: - - +You can include images/screen-casts etc. by drag-dropping the image here. +--> **Anything else we need to know**: + +**Are you willing to submit a PR?** + + - - **Apache Airflow version**: -**Apache Airflow Provider versions** (please include all providers that are relevant to your bug): + - ---> +**Apache Airflow Provider versions**: + + -**Kubernetes version (if you are using kubernetes)** (use `kubectl version`): +**Deployment**: -**Environment**: + -- **Cloud provider or hardware configuration**: -- **OS** (e.g. from /etc/os-release): -- **Kernel** (e.g. `uname -a`): -- **Install tools**: -- **Others**: + **What happened**: - + **What you expected to happen**: **How to reproduce it**: - - +You can include images/screen-casts etc. by drag-dropping the image here. +--> **Anything else we need to know**: + +**Are you willing to submit a PR?** +

[GitHub] [airflow] tsaltena commented on issue #14574: DockerOperator breaks with active log-collection for long running tasks

tsaltena commented on issue #14574: URL: https://github.com/apache/airflow/issues/14574#issuecomment-898473334 We're experiencing the same issue. Have you been able to find a fix since, @kaustubhharapanahalli?

[GitHub] [airflow] ephraimbuddy commented on pull request #17601: Fix triggerer query where limit is not supported in some DB versions

ephraimbuddy commented on pull request #17601: URL: https://github.com/apache/airflow/pull/17601#issuecomment-898473265 cc: @andrewgodwin

[GitHub] [airflow] potiuk closed pull request #17608: Extended JSON client with missing API

potiuk closed pull request #17608: URL: https://github.com/apache/airflow/pull/17608

[GitHub] [airflow] potiuk commented on pull request #17608: Extended JSON client with missing API

potiuk commented on pull request #17608: URL: https://github.com/apache/airflow/pull/17608#issuecomment-898472628 As explained in #17606, our goal is to move people away from the experimental API to the new Stable REST API. The experimental API is deprecated and will be removed in Airflow 3, so encouraging people to stay on it longer by adding new functionality is a bad idea. No more changes to the experimental API will be accepted.

[GitHub] [airflow] potiuk edited a comment on issue #17606: JSON client does not provide an API for getting a list of Dag Runs

potiuk edited a comment on issue #17606: URL: https://github.com/apache/airflow/issues/17606#issuecomment-898471060 > Many thanks for your reply. I see your point of view. I am working on a project that already uses the deprecated API and it would take us some time to switch to the new one. I could have made the corresponding changes inside my project and left them unpublished, but many other users may be in a similar situation and would like to reuse that functionality. Thus in my opinion it would be good to accept this pull request.

Not a good idea. We want to move people away from the experimental API as soon as possible (now). It will be removed completely (and irreversibly) in Airflow 3. Effectively, adding new functionality means people stay longer with the deprecated API, which is precisely what we want to avoid. No more changes will be accepted to the experimental API.

[GitHub] [airflow] potiuk closed issue #17606: JSON client does not provide an API for getting a list of Dag Runs

potiuk closed issue #17606: URL: https://github.com/apache/airflow/issues/17606

[GitHub] [airflow] potiuk commented on issue #17606: JSON client does not provide an API for getting a list of Dag Runs

potiuk commented on issue #17606: URL: https://github.com/apache/airflow/issues/17606#issuecomment-898471060 > Many thanks for your reply. I see your point of view. I am working on a project that already uses the deprecated API and it would take us some time to switch to the new one. I could have made the corresponding changes inside my project and left them unpublished, but many other users may be in a similar situation and would like to reuse that functionality. Thus in my opinion it would be good to accept this pull request.

Not a good idea. We want to move people away from the experimental API as soon as possible (now). It will be removed completely (and irreversibly) in Airflow 3. Effectively, adding new functionality means people stay longer with the deprecated API, which is precisely what we want to avoid. No more changes will be accepted to the experimental API.

[GitHub] [airflow] potiuk edited a comment on issue #17604: Add an option to remove or mask environment variables in the KubernetesPodOperator task instance logs on failure or error events

potiuk edited a comment on issue #17604: URL: https://github.com/apache/airflow/issues/17604#issuecomment-898467597 Which Airflow version are you running? There is a "Secret Masker" feature in Airflow 2.1, and from what I understand it should also mask secret values in KPO (https://airflow.apache.org/docs/apache-airflow/stable/security/secrets/index.html#masking-sensitive-data): it masks sensitive variables and sensitive connection fields in task logs. I believe it should also mask them in the KubernetesPodOperator log (and it would always do so, not only for errors).

[GitHub] [airflow] potiuk commented on issue #17604: Add an option to remove or mask environment variables in the KubernetesPodOperator task instance logs on failure or error events

potiuk commented on issue #17604: URL: https://github.com/apache/airflow/issues/17604#issuecomment-898467597 Which Airflow version are you running? There is a "Secret Masker" feature in Airflow 2.1, and from what I understand it should also mask secret values (https://airflow.apache.org/docs/apache-airflow/stable/security/secrets/index.html#masking-sensitive-data): it masks sensitive variables and sensitive connection fields in task logs. I believe it should also mask them in the KubernetesPodOperator log (and it would always do so, not only for errors).
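The general idea behind masking secrets in task logs can be sketched with the standard `logging` module: a filter that rewrites each log record, replacing every registered secret value with `***`. This is a simplified, hypothetical illustration (`RedactingFilter` and `redacted_output` are not Airflow names); Airflow's own Secret Masker differs in its details.

```python
import io
import logging
import re
from typing import Iterable


class RedactingFilter(logging.Filter):
    """Replace registered secret values in log messages with '***'."""

    def __init__(self, secrets: Iterable[str]) -> None:
        super().__init__()
        # Longest secrets first, so a secret that contains another is masked whole.
        values = sorted({s for s in secrets if s}, key=len, reverse=True)
        self._pattern = (
            re.compile("|".join(re.escape(v) for v in values)) if values else None
        )

    def filter(self, record: logging.LogRecord) -> bool:
        if self._pattern is not None:
            # Render the final message (msg % args) first, then redact it.
            record.msg = self._pattern.sub("***", record.getMessage())
            record.args = None
        return True  # never drop the record, only rewrite it


def redacted_output(message: str, *args: object, secrets: Iterable[str]) -> str:
    """Log one INFO message through a filtered handler and return the output."""
    buffer = io.StringIO()
    handler = logging.StreamHandler(buffer)
    handler.addFilter(RedactingFilter(secrets))
    logger = logging.getLogger("redaction-demo")
    logger.propagate = False
    logger.setLevel(logging.INFO)
    logger.addHandler(handler)
    try:
        logger.info(message, *args)
    finally:
        logger.removeHandler(handler)
    return buffer.getvalue()


if __name__ == "__main__":
    out = redacted_output(
        "token=%s user=%s", "s3cr3t-token", "alice", secrets=["s3cr3t", "s3cr3t-token"]
    )
    print(out, end="")  # prints: token=*** user=alice
```

Because the filter works on the fully rendered message, it masks a secret regardless of whether it was passed as a format argument or embedded directly in the message string, and it applies on every log line rather than only on error paths.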

[airflow] branch main updated (d11d3e6 -> 7db43f7)

This is an automated email from the ASF dual-hosted git repository. kaxilnaik pushed a change to branch main in repository https://gitbox.apache.org/repos/asf/airflow.git. from d11d3e6 Use built-in ``cached_property`` on Python 3.8 for Asana provider (#17597) add 7db43f7 Remove upper-limit on ``tenacity`` (#17593) No new revisions were added by this update. Summary of changes: setup.cfg | 2 +- 1 file changed, 1 insertion(+), 1 deletion(-)

[GitHub] [airflow] kaxil merged pull request #17593: Remove upper-limit on ``tenacity``

kaxil merged pull request #17593: URL: https://github.com/apache/airflow/pull/17593

[GitHub] [airflow] github-actions[bot] commented on pull request #17593: Remove upper-limit on ``tenacity``

github-actions[bot] commented on pull request #17593: URL: https://github.com/apache/airflow/pull/17593#issuecomment-898466892 The PR most likely needs to run the full matrix of tests because it modifies parts of the core of Airflow. However, committers might decide to merge it quickly and take the risk. If they don't merge it quickly, please rebase it to the latest main at your convenience, or amend the last commit of the PR, and push it with --force-with-lease.

[GitHub] [airflow] eladkal closed pull request #16937: New Tableau operator: TableauRefreshDatasourceOperator

eladkal closed pull request #16937: URL: https://github.com/apache/airflow/pull/16937

[GitHub] [airflow] kaxil commented on issue #17607: Airflow Summit 2021 banner is still present on docs site

kaxil commented on issue #17607: URL: https://github.com/apache/airflow/issues/17607#issuecomment-898463934 https://github.com/apache/airflow-site/pull/466 should fix it

[GitHub] [airflow] kaxil closed issue #17607: Airflow Summit 2021 banner is still present on docs site

kaxil closed issue #17607: URL: https://github.com/apache/airflow/issues/17607

[GitHub] [airflow-site] kaxil merged pull request #466: Manually remove banner from pre-built docs files

kaxil merged pull request #466: URL: https://github.com/apache/airflow-site/pull/466

[GitHub] [airflow] github-actions[bot] commented on pull request #17603: Fix MySQL database character set instruction

github-actions[bot] commented on pull request #17603: URL: https://github.com/apache/airflow/pull/17603#issuecomment-898463434 The PR is likely ready to be merged. No tests are needed, as neither important environment files nor Python files were modified by it. However, committers might decide that the full test matrix is needed and add the 'full tests needed' label. Then you should rebase it to the latest main or amend the last commit of the PR, and push it with --force-with-lease.

[GitHub] [airflow] potiuk commented on issue #17546: ImportError: /usr/lib/x86_64-linux-gnu/libstdc++.so.6: cannot allocate memory in static TLS block

potiuk commented on issue #17546: URL: https://github.com/apache/airflow/issues/17546#issuecomment-898462656 > Thanks for your suggestion Jarek, I'll try to follow the customization route. Let us know!

[GitHub] [airflow-site] ryanahamilton opened a new pull request #466: Manually remove banner from pre-built docs files

ryanahamilton opened a new pull request #466: URL: https://github.com/apache/airflow-site/pull/466 Follow-up to #465. Removes the banner from the static HTML files that have been generated with the banner in them.

[GitHub] [airflow] lboudard edited a comment on issue #17490: KubernetesJobOperator

lboudard edited a comment on issue #17490: URL: https://github.com/apache/airflow/issues/17490#issuecomment-898448207 I agree on this subject. The pod operator is currently missing some very handy features that the [kubernetes job controller](https://kubernetes.io/docs/concepts/workloads/controllers/job/) implements, such as time to live after success/failure (though there are a number of overlapping features, such as retries and parallelism control). I also agree that when to use the Kubernetes executor versus the Kubernetes pod operator is not very clear yet. In our use case, since we have very different DAG types living in the same Airflow instance, we use multiple images that are scheduled through pod operators (which we adopted before the Kubernetes executor and the TaskFlow API appeared). For instance, one image parses new batches of data and another one trains models on them in another DAG. That is not ideal, since the workflow dependencies are not properly bound in code but rather to expected data checkpoints. Instead of having

```
read_file | parse | feature_engineering | train_model
read_file | archive
```

which describes direct data dependencies in code (the Airflow TaskFlow way, or equivalently in Spark or Apache Beam), we have

```
schedule_parse_file_and_store(raw_data_batch_location, parsing_docker_image)
schedule_feature_engineer(raw_data_batch_location, feature_engineering_docker_image)
schedule_train_model(feature_engineered_batch_location, model_training_docker_image)
```
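For reference, the Job features mentioned above correspond to fields of the Kubernetes `batch/v1` Job spec: `ttlSecondsAfterFinished` (clean up a finished Job after a delay), `backoffLimit` (retries) and `parallelism`/`completions` (parallelism control). The sketch below only builds such a manifest as a plain Python dict; `build_job_manifest`, its defaults and the image name are hypothetical, and submitting the Job to a cluster is left out.

```python
from typing import Any, Dict, List, Optional


def build_job_manifest(
    name: str,
    image: str,
    command: Optional[List[str]] = None,
    ttl_seconds_after_finished: int = 3600,
    backoff_limit: int = 3,
    parallelism: int = 1,
    completions: int = 1,
) -> Dict[str, Any]:
    """Return a batch/v1 Job manifest as a dict (hypothetical helper)."""
    container: Dict[str, Any] = {"name": name, "image": image}
    if command:
        container["command"] = command
    return {
        "apiVersion": "batch/v1",
        "kind": "Job",
        "metadata": {"name": name},
        "spec": {
            # Delete the finished Job this many seconds after it completes or fails.
            "ttlSecondsAfterFinished": ttl_seconds_after_finished,
            # Number of pod retries before the Job is marked as failed.
            "backoffLimit": backoff_limit,
            # How many pods may run at once, and how many must succeed.
            "parallelism": parallelism,
            "completions": completions,
            "template": {
                "spec": {
                    # Job pods must use restartPolicy Never or OnFailure.
                    "restartPolicy": "Never",
                    "containers": [container],
                }
            },
        },
    }


if __name__ == "__main__":
    import json

    manifest = build_job_manifest(
        "parse-batch", "example.com/parser:latest", ["python", "parse.py"]
    )
    print(json.dumps(manifest, indent=2))
```

Since `kubectl apply -f` accepts JSON as well as YAML, the printed manifest could be saved to a file and applied directly, which is one way to try these settings before deciding whether an operator should expose them.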

[GitHub] [airflow] sw1010 commented on issue #17606: JSON client does not provide an API for getting a list of Dag Runs

sw1010 commented on issue #17606: URL: https://github.com/apache/airflow/issues/17606#issuecomment-898452189 > I don’t think we are going to accept contributions to `/api/experimental` (Deprecated API). Would it be possible for you to switch to `/api/v1` (Stable API)? If not, why? Many thanks for your reply. I see your point of view. I am working on a project that already uses the deprecated API and it would take us some time to switch to the new one. I could have made the corresponding changes inside my project and left them unpublished, but many other users may be in a similar situation and would like to reuse that functionality. Thus in my opinion it would be good to accept this pull request.

[GitHub] [airflow] lboudard edited a comment on issue #17490: KubernetesJobOperator

lboudard edited a comment on issue #17490: URL: https://github.com/apache/airflow/issues/17490#issuecomment-898448207 I agree on this subject. The pod operator is currently missing some very handy features that the [kubernetes job controller](https://kubernetes.io/docs/concepts/workloads/controllers/job/) implements, such as time to live after success/failure (though there are a number of overlapping features, such as retries and parallelism control). I also agree that when to use the Kubernetes executor versus the Kubernetes pod operator is not very clear yet. In our use case, since we have very different DAG types living in the same Airflow instance, we use multiple images that are scheduled through pod operators (which we adopted before the Kubernetes executor and the TaskFlow API appeared). For instance, one image parses new batches of data and another one trains models on them in another DAG. That is not ideal, since the workflow dependencies are not properly bound in code but rather to expected data checkpoints. Instead of having

```
read_file | parse | feature_engineering | train_model
read_file | archive
```

which describes direct data dependencies in code (the Airflow TaskFlow way, or equivalently in Spark or Apache Beam), we have

```
schedule_parse_file_and_store(raw_data_batch_location)
schedule_feature_engineer(raw_data_batch_location)
schedule_train_model(feature_engineered_batch_location)
```

[GitHub] [airflow] lboudard edited a comment on issue #17490: KubernetesJobOperator

lboudard edited a comment on issue #17490: URL: https://github.com/apache/airflow/issues/17490#issuecomment-898448207 I agree on this subject. The pod operator is currently missing some very handy features that the [kubernetes job controller](https://kubernetes.io/docs/concepts/workloads/controllers/job/) implements, such as time to live after success/failure. I also agree that when to use the Kubernetes executor versus the Kubernetes pod operator is not very clear yet. In our use case, since we have very different DAG types living in the same Airflow instance, we use multiple images that are scheduled through pod operators (which we adopted before the Kubernetes executor and the TaskFlow API appeared). For instance, one image parses new batches of data and another one trains models on them in another DAG. That is not ideal, since the workflow dependencies are not properly bound in code but rather to expected data checkpoints. Instead of having

```
read_file | parse | feature_engineering | train_model
read_file | archive
```

which describes direct data dependencies in code (the Airflow TaskFlow way, or equivalently in Spark or Apache Beam), we have

```
schedule_parse_file_and_store(raw_data_batch_location)
schedule_feature_engineer(raw_data_batch_location)
schedule_train_model(feature_engineered_batch_location)
```

[GitHub] [airflow] lboudard commented on issue #17490: KubernetesJobOperator

lboudard commented on issue #17490: URL: https://github.com/apache/airflow/issues/17490#issuecomment-898448207 I agree on this subject. The pod operator is currently missing some very handy features that the [kubernetes job controller](https://kubernetes.io/docs/concepts/workloads/controllers/job/) implements, such as time to live after success/failure. I also agree that when to use the Kubernetes executor versus the Kubernetes pod operator is not very clear yet. In our use case, since we have very different DAG types living in the same Airflow instance, we have multiple images that are run through pod operators (which we adopted before the Kubernetes executor and the TaskFlow API). For instance, one image parses new batches of data and another one trains models on them in another DAG. But that is not ideal, since the workflow dependencies are not properly bound in code. Instead of having

```
read_file | parse | feature_engineering | train_model
read_file | archive
```

which describes direct data dependencies in code (the Airflow TaskFlow way, or equivalently in Spark or Apache Beam), we have

```
schedule_parse_file_and_store(raw_data_batch_location)
schedule_feature_engineer(raw_data_batch_location)
schedule_train_model(feature_engineered_batch_location)
```
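To make the first style concrete, here is a plain-Python sketch (no Airflow involved) in which each step receives its input directly from the step before it, so the `read_file | parse | feature_engineering | train_model` and `read_file | archive` branches are visible in code rather than implied by shared storage locations. The step names come from the comment; their bodies are hypothetical placeholders.

```python
from typing import Any, Dict, List


def read_file(path: str) -> List[str]:
    # Placeholder: pretend each batch file holds "id,value" records.
    return [f"{path}:1,a", f"{path}:2,b"]


def parse(lines: List[str]) -> List[Dict[str, Any]]:
    return [dict(zip(("id", "value"), line.split(",", 1))) for line in lines]


def feature_engineering(rows: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    return [{**row, "value_len": len(row["value"])} for row in rows]


def train_model(features: List[Dict[str, Any]]) -> Dict[str, float]:
    # Stand-in "model": the mean of one engineered feature.
    if not features:
        return {"mean_value_len": 0.0}
    return {"mean_value_len": sum(f["value_len"] for f in features) / len(features)}


def archive(lines: List[str]) -> int:
    return len(lines)  # stand-in: number of archived records


def run_pipeline(path: str) -> Dict[str, Any]:
    raw = read_file(path)  # shared upstream of both branches
    model = train_model(feature_engineering(parse(raw)))
    archived = archive(raw)
    return {"model": model, "archived": archived}


if __name__ == "__main__":
    print(run_pipeline("batch"))
```

In the second style, each `schedule_*` call would instead only agree on locations such as `raw_data_batch_location`, so a broken or renamed checkpoint surfaces at run time rather than in the code that wires the steps together.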

[GitHub] [airflow] sw1010 opened a new pull request #17608: Extended JSON client with missing API

sw1010 opened a new pull request #17608: URL: https://github.com/apache/airflow/pull/17608 closes: #17606

[GitHub] [airflow] thmzlt commented on issue #17607: Airflow Summit 2021 banner is still present on docs site

thmzlt commented on issue #17607: URL: https://github.com/apache/airflow/issues/17607#issuecomment-898440968 @bbovenzi

[GitHub] [airflow] boring-cyborg[bot] commented on issue #17607: Airflow Summit 2021 banner is still present on docs site

boring-cyborg[bot] commented on issue #17607: URL: https://github.com/apache/airflow/issues/17607#issuecomment-898440504 Thanks for opening your first issue here! Be sure to follow the issue template!

[GitHub] [airflow] thmzlt opened a new issue #17607: Airflow Summit 2021 banner is still present on docs site

thmzlt opened a new issue #17607: URL: https://github.com/apache/airflow/issues/17607

**Apache Airflow version**: 2.1.2

**Environment**:

- **Cloud provider or hardware configuration**:

- **OS** (e.g. from /etc/os-release):

- **Kernel** (e.g. `uname -a`):

- **Install tools**:

- **Others**:

**What happened**: The banner for Airflow Summit 2021 (which has already taken place) still shows up on the docs site, taking up some of the view space.

**What you expected to happen**: The banner does not show up.

**How to reproduce it**: Go to https://airflow.apache.org/docs/apache-airflow/stable/index.html and look at the top section of the page.

[GitHub] [airflow] ciancolo commented on pull request #16937: New Tableau operator: TableauRefreshDatasourceOperator

ciancolo commented on pull request #16937: URL: https://github.com/apache/airflow/pull/16937#issuecomment-898437188 I think #16915 covers the functionality of this PR. The current PR is not needed anymore.

[GitHub] [airflow] uranusjr commented on issue #17606: JSON client does not provide an API for getting a list of Dag Runs

uranusjr commented on issue #17606: URL: https://github.com/apache/airflow/issues/17606#issuecomment-898433667 I don’t think we are going to accept contributions to `/api/experimental` (Deprecated API). Would it be possible for you to switch to `/api/v1` (Stable API)? If not, why?

[GitHub] [airflow] sw1010 opened a new issue #17606: JSON client does not provide an API for getting a list of Dag Runs

sw1010 opened a new issue #17606: URL: https://github.com/apache/airflow/issues/17606

**Description**

The JSON client provides methods for many endpoints, but some of them are still missing, e.g. `GET /api/experimental/dags//dag_runs`.

**Use case / motivation**

I would like to obtain all the run IDs for a given DAG ID. The missing API looks like the way to do so.

**Are you willing to submit a PR?**

Sure!
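For this use case, the Stable REST API offers `GET /api/v1/dags/{dag_id}/dagRuns`, which returns a `dag_runs` list (each entry carrying a `dag_run_id`) plus `total_entries`, paged with `limit` and `offset`. The sketch below uses only the standard library and assumes the webserver accepts HTTP basic authentication; the base URL, credentials and helper names are placeholders, not part of any Airflow client.

```python
import base64
import json
import urllib.parse
import urllib.request
from typing import Any, Dict, Iterator, List


def dag_runs_url(base_url: str, dag_id: str, limit: int, offset: int) -> str:
    """Build the Stable REST API URL for one page of DAG runs."""
    query = urllib.parse.urlencode({"limit": limit, "offset": offset})
    dag = urllib.parse.quote(dag_id, safe="")
    return f"{base_url.rstrip('/')}/api/v1/dags/{dag}/dagRuns?{query}"


def run_ids_from_page(page: Dict[str, Any]) -> List[str]:
    """Extract run IDs from one DAG run collection response body."""
    return [run["dag_run_id"] for run in page.get("dag_runs", [])]


def iter_run_ids(
    base_url: str, dag_id: str, user: str, password: str, page_size: int = 100
) -> Iterator[str]:
    """Yield every run ID for dag_id, following limit/offset pagination."""
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    offset = 0
    while True:
        request = urllib.request.Request(
            dag_runs_url(base_url, dag_id, page_size, offset),
            headers={"Authorization": f"Basic {token}", "Accept": "application/json"},
        )
        with urllib.request.urlopen(request) as response:
            page = json.load(response)
        ids = run_ids_from_page(page)
        yield from ids
        offset += len(ids)
        # Stop on an empty page or once every entry has been seen.
        if not ids or offset >= page.get("total_entries", 0):
            break
```

Usage against a running webserver would look like `for run_id in iter_run_ids("http://localhost:8080", "example_dag", "admin", "admin"): print(run_id)`, with the URL, DAG ID and credentials replaced by real ones.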

[GitHub] [airflow] boring-cyborg[bot] commented on issue #17605: Airflow stdout not working/console problem

boring-cyborg[bot] commented on issue #17605: URL: https://github.com/apache/airflow/issues/17605#issuecomment-898421321 Thanks for opening your first issue here! Be sure to follow the issue template!

[GitHub] [airflow] aioannoa opened a new issue #17605: Airflow stdout not working/console problem

aioannoa opened a new issue #17605:

URL: https://github.com/apache/airflow/issues/17605

**Apache Airflow version**:

Airflow 2.0.0

**Apache Airflow Provider versions**:

apache-airflow-providers-ftp==1.0.0

apache-airflow-providers-http==1.0.0

apache-airflow-providers-imap==1.0.0

apache-airflow-providers-postgres==1.0.1

apache-airflow-providers-sqlite==1.0.0

**Kubernetes version (if you are using kubernetes)**:

Client Version: version.Info{Major:"1", Minor:"15", GitVersion:"v1.15.0", GitCommit:"e8462b5b5dc2584fdcd18e6bcfe9f1e4d970a529", GitTreeState:"clean", BuildDate:"2019-06-19T16:40:16Z", GoVersion:"go1.12.5", Compiler:"gc", Platform:"linux/amd64"}

Server Version: version.Info{Major:"1", Minor:"15", GitVersion:"v1.15.12", GitCommit:"e2a822d9f3c2fdb5c9bfbe64313cf9f657f0a725", GitTreeState:"clean", BuildDate:"2020-05-06T05:09:48Z", GoVersion:"go1.12.17", Compiler:"gc", Platform:"linux/amd64"}

**Environment**:

- **Cloud provider or hardware configuration**:

AWS Cloud - EC2 instance

- **OS** (e.g. from /etc/os-release):

NAME="Ubuntu"

VERSION="16.04.3 LTS (Xenial Xerus)"

ID=ubuntu

ID_LIKE=debian

PRETTY_NAME="Ubuntu 16.04.3 LTS"

VERSION_ID="16.04"

HOME_URL="http://www.ubuntu.com/"

SUPPORT_URL="http://help.ubuntu.com/"

BUG_REPORT_URL="http://bugs.launchpad.net/ubuntu/"

VERSION_CODENAME=xenial

UBUNTU_CODENAME=xenial

- **Kernel** (e.g. `uname -a`):

Linux ip-172-25-1-109 4.4.0-1128-aws #142-Ubuntu SMP Fri Apr 16 12:42:33 UTC 2021 x86_64 x86_64 x86_64 GNU/Linux

- **Install tools**:

- **Others**:

**What happened**:

I have been trying to get Airflow logs printed to stdout by:

1. creating a new Python script named log_config.py (shown below) in the config directory, as instructed here:

https://airflow.apache.org/docs/apache-airflow/stable/logging-monitoring/logging-tasks.html

    from copy import deepcopy

    from airflow.config_templates.airflow_local_settings import DEFAULT_LOGGING_CONFIG

    import sys

    LOGGING_CONFIG = deepcopy(DEFAULT_LOGGING_CONFIG)

    # Extra handler that writes task logs to the process's stdout
    LOGGING_CONFIG["handlers"]["stdouttask"] = {
        "class": "logging.StreamHandler",
        "formatter": "airflow",
        "stream": sys.stdout,
    }

    # Send the airflow.task logger's records only to the new handler
    LOGGING_CONFIG["loggers"]["airflow.task"]["handlers"] = ["stdouttask"]

2. setting `logging_config_class = log_config.LOGGING_CONFIG` and `task_log_reader = stdouttask` in the airflow.cfg file.

After checking, the logs are still written to a file, so this has not worked for me. Searching online, I noticed that people suggest using the console handler instead. However, when I use it, the pod becomes unresponsive, and this affects other pods and the system as a whole: ssh stops working for some time (I guess while the DAG runs), and other pods become unresponsive or even go down, e.g. the cluster's database. I have read that there may be memory leak issues with this. Has anyone been able to verify whether this is the case, and under which circumstances it causes a problem? I have not been able to find a clear answer, either online or in the Airflow documentation.
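As a point of comparison outside Airflow, the same handler wiring can be exercised with the standard library alone. This sketch assumes a trimmed-down, hypothetical config in the `logging.config.dictConfig` schema (the format string is made up) and uses the `"ext://sys.stdout"` form, which tells `dictConfig` to look up `sys.stdout` when the config is applied. It only shows that such a handler writes to stdout; it does not explain why Airflow kept writing task logs to files in this report.

```python
import logging
import logging.config

# Hypothetical, trimmed-down config in the same dictConfig schema that
# Airflow's LOGGING_CONFIG uses; the format string is an assumption.
STDOUT_LOGGING_CONFIG = {
    "version": 1,
    "disable_existing_loggers": False,
    "formatters": {
        "airflow": {"format": "[%(asctime)s] %(levelname)s - %(message)s"},
    },
    "handlers": {
        "stdouttask": {
            "class": "logging.StreamHandler",
            "formatter": "airflow",
            # "ext://" makes dictConfig resolve sys.stdout when the config is applied
            "stream": "ext://sys.stdout",
        },
    },
    "loggers": {
        "airflow.task": {
            "handlers": ["stdouttask"],
            "level": "INFO",
            "propagate": False,  # keep records away from any root/file handlers
        },
    },
}


def configure() -> logging.Logger:
    """Apply the config and return the task logger."""
    logging.config.dictConfig(STDOUT_LOGGING_CONFIG)
    return logging.getLogger("airflow.task")


# Usage:
# configure().info("task log line")  # appears on stdout
```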

**What you expected to happen**:

I was expecting all Airflow logs to be printed to stdout alone.

For the 1st part, no idea.

For the 2nd part, memory leak or CPU exhaustion.

**How to reproduce it**:

For the 1st part, try the above as is.

For the 2nd part, try:

    LOGGING_CONFIG["loggers"]["airflow.task"]["handlers"] = ["console", "stdouttask"]

**Anything else we need to know**:

How often does this problem occur? Once? Every time etc?

Every time.


[GitHub] [airflow] potiuk commented on a change in pull request #17591: Use gunicorn to serve logs generated by worker

potiuk commented on a change in pull request #17591:

URL: https://github.com/apache/airflow/pull/17591#discussion_r688468320

##

File path: airflow/utils/serve_logs.py

##

    @@ -73,10 +74,47 @@ def serve_logs_view(filename):
         return flask_app
    +class StandaloneGunicornApplication(gunicorn.app.base.BaseApplication):
    +    """
    +    Standalone Gunicorn application/serve for usage with any WSGI-application.
    +
    +    Code inspired by an example from the Gunicorn documentation.
    +    https://github.com/benoitc/gunicorn/blob/cf55d2cec277f220ebd605989ce78ad1bb553c46/examples/standalone_app.py
    +
    +    For details, about standalone gunicorn application, see:
    +    https://docs.gunicorn.org/en/stable/custom.html
    +    """
    +
    +    def __init__(self, app, options=None):
    +        self.options = options or {}
    +        self.application = app
    +        super().__init__()
    +
    +    def load_config(self):
    +        config = {
    +            key: value
    +            for key, value in self.options.items()
    +            if key in self.cfg.settings and value is not None
    +        }
    +        for key, value in config.items():
    +            self.cfg.set(key.lower(), value)
    +
    +    def load(self):
    +        return self.application
    +
    +
     def serve_logs():
         """Serves logs generated by Worker"""
         setproctitle("airflow serve-logs")
    -    app = flask_app()
    +    wsgi_app = create_app()
         worker_log_server_port = conf.getint('celery', 'WORKER_LOG_SERVER_PORT')
    -    app.run(host='0.0.0.0', port=worker_log_server_port)
    +    options = {
    +        'bind': f"0.0.0.0:{worker_log_server_port}",
    +        'workers': 2,

Review comment:

Yeah, 2 is better just in case of memory errors/crashes. Just one small nit (in case someone has problems with memory usage etc.): I think it would be great to mention in the worker docs that we are using Gunicorn and that the configuration options can be overridden with the GUNICORN_CMD_ARGS env variable:

https://docs.gunicorn.org/en/latest/settings.html#settings

Right now it's not at all obvious from the docs, without looking at the code, that we have separate Gunicorn processes forked and that you can configure their behaviour via env vars.
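If the standalone application should honor `GUNICORN_CMD_ARGS`, one hypothetical approach is to split the variable with `shlex.split` (the same splitting Gunicorn's `Config` applies to it) and merge the result over the `options` dict before constructing `StandaloneGunicornApplication`. Whether a `BaseApplication` subclass with a custom `load_config` already picks the variable up is worth checking against Gunicorn's `Application.load_config`. The helper names below are made up, only long `--key value` / `--key=value` options are handled, and 8793 is just an example port.

```python
import os
import shlex
from typing import Dict, Mapping, Optional


def gunicorn_env_overrides(env: Optional[Mapping[str, str]] = None) -> Dict[str, object]:
    """Turn GUNICORN_CMD_ARGS into {setting_name: value} (simplified parser)."""
    env = os.environ if env is None else env
    tokens = shlex.split(env.get("GUNICORN_CMD_ARGS", ""))
    overrides: Dict[str, object] = {}
    i = 0
    while i < len(tokens):
        token = tokens[i]
        i += 1
        if not token.startswith("--"):
            continue  # short flags and positionals are ignored in this sketch
        key, sep, value = token[2:].partition("=")
        name = key.replace("-", "_")  # e.g. --max-requests -> max_requests
        if sep:
            overrides[name] = value
        elif i < len(tokens) and not tokens[i].startswith("--"):
            overrides[name] = tokens[i]
            i += 1
        else:
            overrides[name] = True  # bare flag such as --preload
    return overrides


def serve_logs_options(port: int, env: Optional[Mapping[str, str]] = None) -> Dict[str, object]:
    """Defaults from the PR, with any GUNICORN_CMD_ARGS values taking precedence."""
    options: Dict[str, object] = {"bind": f"0.0.0.0:{port}", "workers": 2}
    options.update(gunicorn_env_overrides(env))
    return options
```

Values stay as strings here; Gunicorn's own setting validators would be the place where they get converted.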


[GitHub] [airflow] github-actions[bot] commented on pull request #17591: Use gunicorn to serve logs generated by worker

github-actions[bot] commented on pull request #17591: URL: https://github.com/apache/airflow/pull/17591#issuecomment-898413764 The PR most likely needs to run full matrix of tests because it modifies parts of the core of Airflow. However, committers might decide to merge it quickly and take the risk. If they don't merge it quickly - please rebase it to the latest main at your convenience, or amend the last commit of the PR, and push it with --force-with-lease.

[airflow] branch main updated: Use built-in ``cached_property`` on Python 3.8 for Asana provider (#17597)

This is an automated email from the ASF dual-hosted git repository.

kaxilnaik pushed a commit to branch main

in repository https://gitbox.apache.org/repos/asf/airflow.git

The following commit(s) were added to refs/heads/main by this push:

new d11d3e6 Use built-in ``cached_property`` on Python 3.8 for Asana provider (#17597)

d11d3e6 is described below

commit d11d3e617ad121e75fbc955ad3730857ae8edab4

Author: Kaxil Naik

AuthorDate: Fri Aug 13 13:09:04 2021 +0100

Use built-in ``cached_property`` on Python 3.8 for Asana provider (#17597)

Functionality is the same, this just removes one dep for Py 3.8+

---

airflow/providers/asana/hooks/asana.py | 6 +-

setup.py | 2 +-

2 files changed, 6 insertions(+), 2 deletions(-)

    diff --git a/airflow/providers/asana/hooks/asana.py b/airflow/providers/asana/hooks/asana.py
    index b1623f8..44367bd 100644
    --- a/airflow/providers/asana/hooks/asana.py
    +++ b/airflow/providers/asana/hooks/asana.py
    @@ -21,7 +21,11 @@ from typing import Any, Dict
     from asana import Client
     from asana.error import NotFoundError
    -from cached_property import cached_property
    +
    +try:
    +    from functools import cached_property
    +except ImportError:
    +    from cached_property import cached_property
     from airflow.hooks.base import BaseHook
    diff --git a/setup.py b/setup.py
    index 75f87ca..81d4b2c 100644
    --- a/setup.py
    +++ b/setup.py
    @@ -190,7 +190,7 @@ amazon = [
     apache_beam = [
         'apache-beam>=2.20.0',
     ]
    -asana = ['asana>=0.10', 'cached-property>=1.5.2']
    +asana = ['asana>=0.10']
     async_packages = [
         'eventlet>= 0.9.7',
         'gevent>=0.13',
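The fallback import in this commit can be exercised on its own. A minimal sketch, assuming a hypothetical `Hook` class: on Python 3.8+ `functools.cached_property` is used, otherwise the `cached-property` package, and in both cases the decorated method runs once per instance and its result is then served from the instance.

```python
try:
    from functools import cached_property  # Python 3.8+
except ImportError:  # Python < 3.8: needs the cached-property package
    from cached_property import cached_property


class Hook:
    """Hypothetical hook that builds its client lazily."""

    def __init__(self) -> None:
        self.client_builds = 0

    @cached_property
    def client(self) -> dict:
        # Runs on first access only; the result is stored on the instance.
        self.client_builds += 1
        return {"connected": True}


hook = Hook()
first = hook.client
second = hook.client
print(hook.client_builds, first is second)  # prints: 1 True
```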

[GitHub] [airflow] kaxil merged pull request #17597: Use built-in ``cached_property`` on Python 3.8 for Asana provider

kaxil merged pull request #17597: URL: https://github.com/apache/airflow/pull/17597

[GitHub] [airflow] mik-laj commented on a change in pull request #17591: Use gunicorn to serve logs generated by worker

mik-laj commented on a change in pull request #17591:

URL: https://github.com/apache/airflow/pull/17591#discussion_r688462179

##

File path: airflow/utils/serve_logs.py

##

    @@ -73,10 +74,47 @@ def serve_logs_view(filename):
         return flask_app
    +class StandaloneGunicornApplication(gunicorn.app.base.BaseApplication):
    +    """
    +    Standalone Gunicorn application/serve for usage with any WSGI-application.
    +
    +    Code inspired by an example from the Gunicorn documentation.
    +    https://github.com/benoitc/gunicorn/blob/cf55d2cec277f220ebd605989ce78ad1bb553c46/examples/standalone_app.py
    +
    +    For details, about standalone gunicorn application, see:
    +    https://docs.gunicorn.org/en/stable/custom.html
    +    """
    +
    +    def __init__(self, app, options=None):
    +        self.options = options or {}
    +        self.application = app
    +        super().__init__()
    +
    +    def load_config(self):
    +        config = {
    +            key: value
    +            for key, value in self.options.items()
    +            if key in self.cfg.settings and value is not None
    +        }
    +        for key, value in config.items():
    +            self.cfg.set(key.lower(), value)
    +
    +    def load(self):
    +        return self.application
    +
    +
     def serve_logs():
         """Serves logs generated by Worker"""
         setproctitle("airflow serve-logs")
    -    app = flask_app()
    +    wsgi_app = create_app()
         worker_log_server_port = conf.getint('celery', 'WORKER_LOG_SERVER_PORT')
    -    app.run(host='0.0.0.0', port=worker_log_server_port)
    +    options = {
    +        'bind': f"0.0.0.0:{worker_log_server_port}",
    +        'workers': 2,

Review comment:

1 should work too, but I configured 2 to fail over in case one worker has problems.


[GitHub] [airflow] boring-cyborg[bot] commented on issue #17604: Add an option to remove or mask environment variables in the KubernetesPodOperator task instance logs on failure or error events

boring-cyborg[bot] commented on issue #17604: URL: https://github.com/apache/airflow/issues/17604#issuecomment-898387256 Thanks for opening your first issue here! Be sure to follow the issue template!

[GitHub] [airflow] dondaum opened a new issue #17604: Add an option to remove or mask environment variables in the KubernetesPodOperator task instance logs on failure or error events

dondaum opened a new issue #17604:

URL: https://github.com/apache/airflow/issues/17604

Hi core Airflow dev teams,

I would like to say a big 'thank you' first for this great platform and for all of your efforts to constantly improve and maintain it. It is just a joy to work with. Our company has recently switched to Airflow 2 and we love the overall improvements, such as scheduler speed and the UI, but also all your efforts in knowledge sharing and community building.

**Description**

The KubernetesPodOperator does not have an option to either hide or mask environment variables in the task logs if a failure or an error happens on a created pod. We use the KubernetesPodOperator and k8s.V1EnvVar to pass sensitive information to a pod, such as target connections, user names or passwords. If an error or failure happens, the task logs show all pod details, including the environment variables. An option, such as a new KubernetesPodOperator parameter that allows either removing env variables completely or masking them, would stop this sensitive information from being shared in the logs.

**Use case / motivation**

When running the KubernetesPodOperator with environment variables, it would be great to have the option to remove or mask environment variable information in the task logs if there are failures or errors.

**Are you willing to submit a PR?**

Yes I would.

Currently, if final_state is not equal to State.SUCCESS, we handle this by checking whether any env variables are set and, if so, masking them before raising the exception that then also logs the complete remote_pod object. I guess this is rather a quick fix on our side, so it would be good to think about other use cases or pod objects that could benefit as well.

The relevant section is:

```python
launcher = self.create_pod_launcher()
if len(pod_list.items) == 1:
    try_numbers_match = self._try_numbers_match(context, pod_list.items[0])
    final_state, remote_pod, result = self.handle_pod_overlap(
        labels, try_numbers_match, launcher, pod_list.items[0]
    )
else:
    self.log.info("creating pod with labels %s and launcher %s", labels, launcher)
    final_state, remote_pod, result = self.create_new_pod_for_operator(labels, launcher)
if final_state != State.SUCCESS:
    raise AirflowException(f'Pod {self.pod.metadata.name} returned a failure: {remote_pod}')
```
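A minimal sketch of the masking idea described in this issue, assuming the pod has already been turned into a plain dict (for example with the Kubernetes client's `to_dict()`, which uses snake_case keys such as `init_containers` and `value_from`). `mask_pod_env` is a hypothetical helper, not an existing operator parameter; it masks only literal `value` entries and leaves `value_from` references (which point at Secrets or ConfigMaps rather than holding the value) untouched.

```python
import copy
from typing import Any, Dict

MASK = "***"


def mask_pod_env(pod: Dict[str, Any]) -> Dict[str, Any]:
    """Return a deep copy of a pod dict with every literal env var value masked."""
    masked = copy.deepcopy(pod)  # never mutate the object the operator still uses
    spec = masked.get("spec") or {}
    for key in ("containers", "init_containers"):
        for container in spec.get(key) or []:
            for env_var in container.get("env") or []:
                if env_var.get("value") is not None:
                    env_var["value"] = MASK
    return masked


# Hypothetical use at the failure branch of the snippet in this issue:
# raise AirflowException(
#     f'Pod {self.pod.metadata.name} returned a failure: {mask_pod_env(remote_pod.to_dict())}'
# )
```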


[GitHub] [airflow] tidunguyen opened a new pull request #17603: Fix MySQL database character set instruction

tidunguyen opened a new pull request #17603: URL: https://github.com/apache/airflow/pull/17603 The currently instructed character set `utf8mb4 COLLATE utf8mb4_unicode_ci;` does not work on MySQL 8. When I run `airflow db init`, the following error occurs: `sqlalchemy.exc.OperationalError: (MySQLdb._exceptions.OperationalError) (1071, 'Specified key was too long; max key length is 3072 bytes')` Changing to this character set: `utf8 COLLATE utf8_general_ci;` solved the problem.
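Rough arithmetic behind error 1071, under stated assumptions: InnoDB rejects index keys above 3072 bytes, and MySQL sizes a VARCHAR key part by its worst-case bytes per character, which is 4 for `utf8mb4` and 3 for `utf8` (an alias of `utf8mb3`). The column lengths below are hypothetical; the PR does not say which Airflow index overflows, and per-column length bytes are ignored.

```python
from typing import Iterable

# Worst-case bytes per character for a few MySQL character sets.
BYTES_PER_CHAR = {"utf8mb4": 4, "utf8mb3": 3, "utf8": 3, "latin1": 1}

# InnoDB limit quoted in the error message.
MAX_KEY_BYTES = 3072


def key_bytes(varchar_lengths: Iterable[int], charset: str) -> int:
    """Worst-case size of an index over VARCHAR columns (length bytes ignored)."""
    return sum(varchar_lengths) * BYTES_PER_CHAR[charset]


def fits(varchar_lengths: Iterable[int], charset: str) -> bool:
    return key_bytes(list(varchar_lengths), charset) <= MAX_KEY_BYTES


# Hypothetical composite index over two VARCHAR(500) columns:
for charset in ("utf8mb4", "utf8"):
    print(charset, key_bytes([500, 500], charset), fits([500, 500], charset))
# utf8mb4 4000 False
# utf8 3000 True
```

The same arithmetic caps a single indexed VARCHAR at 768 characters under `utf8mb4` and 1024 under `utf8`. The trade-off of switching is that `utf8mb3` cannot store 4-byte characters such as emoji.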

[GitHub] [airflow] JavierLopezT commented on issue #17546: ImportError: /usr/lib/x86_64-linux-gnu/libstdc++.so.6: cannot allocate memory in static TLS block

JavierLopezT commented on issue #17546: URL: https://github.com/apache/airflow/issues/17546#issuecomment-898368669 Thanks for your suggestion Jarek, I'll try to follow the customization route.

[GitHub] [airflow] boring-cyborg[bot] commented on issue #17602: Scheduler is not working

boring-cyborg[bot] commented on issue #17602: URL: https://github.com/apache/airflow/issues/17602#issuecomment-898349071 Thanks for opening your first issue here! Be sure to follow the issue template!

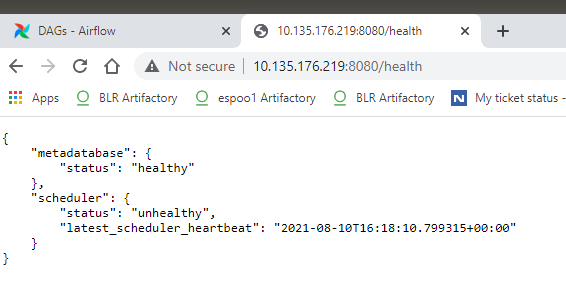

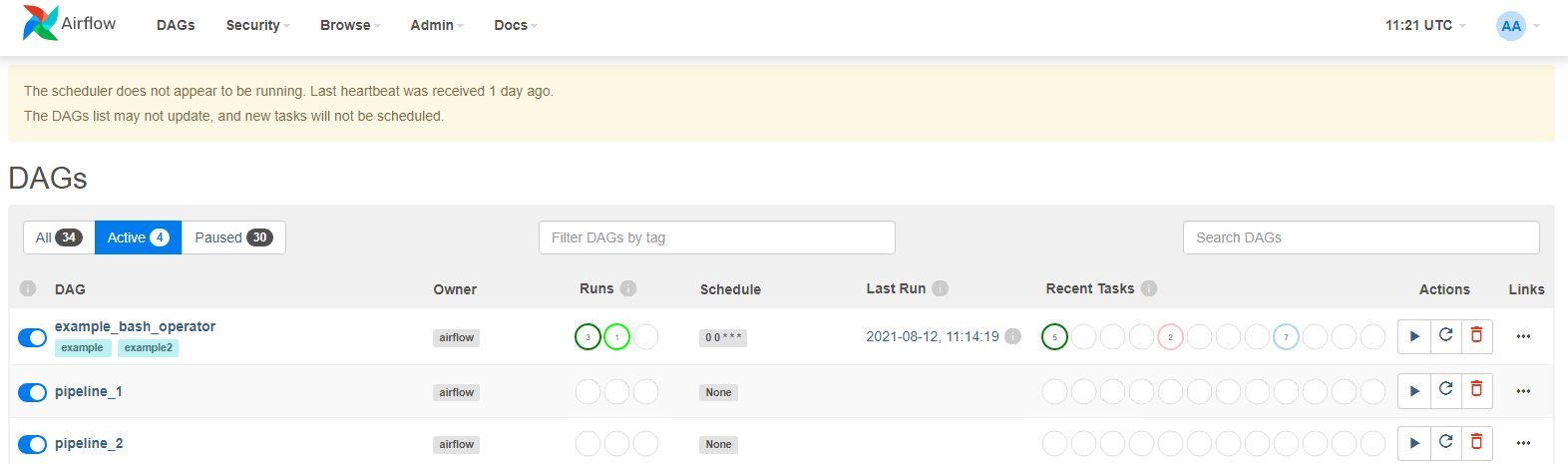

[GitHub] [airflow] vengi57 opened a new issue #17602: Scheduler is not working

vengi57 opened a new issue #17602: URL: https://github.com/apache/airflow/issues/17602 The scheduler does not appear to be running. Last heartbeat was received 2 days ago. The DAGs list may not update, and new tasks will not be scheduled. The scheduler service is up and running, but because it is unhealthy it cannot execute any DAG runs.

[GitHub] [airflow] github-actions[bot] commented on pull request #17597: Use built-in ``cached_property`` on Python 3.8 for Asana provider

github-actions[bot] commented on pull request #17597: URL: https://github.com/apache/airflow/pull/17597#issuecomment-898349023 The PR most likely needs to run full matrix of tests because it modifies parts of the core of Airflow. However, committers might decide to merge it quickly and take the risk. If they don't merge it quickly - please rebase it to the latest main at your convenience, or amend the last commit of the PR, and push it with --force-with-lease.

[GitHub] [airflow] uranusjr commented on pull request #17597: Use built-in ``cached_property`` on Python 3.8 for Asana provider

uranusjr commented on pull request #17597: URL: https://github.com/apache/airflow/pull/17597#issuecomment-898348584 Got it!

[GitHub] [airflow] ClassyLion closed issue #17599: FileNotFound Temporary file error

ClassyLion closed issue #17599: URL: https://github.com/apache/airflow/issues/17599

[GitHub] [airflow] ClassyLion commented on issue #17599: FileNotFound Temporary file error

ClassyLion commented on issue #17599: URL: https://github.com/apache/airflow/issues/17599#issuecomment-898342965 Understood, thank you.

[GitHub] [airflow] ephraimbuddy commented on issue #17599: FileNotFound Temporary file error

ephraimbuddy commented on issue #17599: URL: https://github.com/apache/airflow/issues/17599#issuecomment-898340513 This has been fixed in https://github.com/apache/airflow/pull/17187 and would be released in 2.1.3

[GitHub] [airflow-client-python] kaxil commented on issue #28: Pools API broken using airflow 2.1.2

kaxil commented on issue #28: URL: https://github.com/apache/airflow-client-python/issues/28#issuecomment-898335315 cc @ephraimbuddy @msumit

[GitHub] [airflow] kaxil commented on a change in pull request #17591: Use gunicorn to serve logs generated by worker

kaxil commented on a change in pull request #17591:

URL: https://github.com/apache/airflow/pull/17591#discussion_r688388159

##

File path: airflow/utils/serve_logs.py

##

    @@ -73,10 +74,47 @@ def serve_logs_view(filename):
         return flask_app
    +class StandaloneGunicornApplication(gunicorn.app.base.BaseApplication):
    +    """
    +    Standalone Gunicorn application/serve for usage with any WSGI-application.
    +
    +    Code inspired by an example from the Gunicorn documentation.
    +    https://github.com/benoitc/gunicorn/blob/cf55d2cec277f220ebd605989ce78ad1bb553c46/examples/standalone_app.py
    +
    +    For details, about standalone gunicorn application, see:
    +    https://docs.gunicorn.org/en/stable/custom.html
    +    """
    +
    +    def __init__(self, app, options=None):
    +        self.options = options or {}
    +        self.application = app
    +        super().__init__()
    +
    +    def load_config(self):
    +        config = {
    +            key: value
    +            for key, value in self.options.items()
    +            if key in self.cfg.settings and value is not None
    +        }
    +        for key, value in config.items():
    +            self.cfg.set(key.lower(), value)
    +
    +    def load(self):
    +        return self.application
    +
    +
     def serve_logs():
         """Serves logs generated by Worker"""
         setproctitle("airflow serve-logs")
    -    app = flask_app()
    +    wsgi_app = create_app()
         worker_log_server_port = conf.getint('celery', 'WORKER_LOG_SERVER_PORT')
    -    app.run(host='0.0.0.0', port=worker_log_server_port)
    +    options = {
    +        'bind': f"0.0.0.0:{worker_log_server_port}",
    +        'workers': 2,

Review comment:

Do we need 2 workers, or can we do with just 1?


[GitHub] [airflow] ephraimbuddy opened a new pull request #17601: Fix triggerer query where limit is not supported in some Mysql version

ephraimbuddy opened a new pull request #17601: URL: https://github.com/apache/airflow/pull/17601 This PR fixes the triggerer query that uses LIMIT, which is not supported in some MySQL versions.
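Some MySQL versions reject `LIMIT` inside an `IN (...)` subquery (error 1235, "This version of MySQL doesn't yet support 'LIMIT & IN/ALL/ANY/SOME subquery'"). One common workaround, not necessarily what this PR does, is to run the limited `SELECT` on its own and then update by an explicit id list. The sketch below uses `sqlite3` only so that it runs anywhere; the `triggers` table and the `claim_unassigned` helper are hypothetical.

```python
import sqlite3
from typing import List


def claim_unassigned(conn: sqlite3.Connection, triggerer_id: int, capacity: int) -> List[int]:
    """Assign up to `capacity` unassigned rows to `triggerer_id` in two steps."""
    # Step 1: LIMIT lives in a standalone SELECT, not inside IN (...).
    ids = [
        row[0]
        for row in conn.execute(
            "SELECT id FROM triggers WHERE triggerer_id IS NULL ORDER BY id LIMIT ?",
            (capacity,),
        )
    ]
    # Step 2: update by an explicit list of bound ids.
    if ids:
        placeholders = ", ".join("?" for _ in ids)
        conn.execute(
            f"UPDATE triggers SET triggerer_id = ? WHERE id IN ({placeholders})",
            (triggerer_id, *ids),
        )
        conn.commit()
    return ids
```

Because the two statements are separate, concurrent callers could pick the same rows; on MySQL one would typically add row locking (for example `SELECT ... FOR UPDATE` inside a transaction) to close that gap.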

[GitHub] [airflow] kaxil commented on pull request #17597: Use built-in ``cached_property`` on Python 3.8 for Asana provider

kaxil commented on pull request #17597: URL: https://github.com/apache/airflow/pull/17597#issuecomment-898329117

> Oops, I missed #14606 and it’s already merged. There’s actually a shim for this:
>
> ```python
> from airflow.compat.functools import cached_property
> ```
>
> And it handles type hint better; I added a `functools.pyi` file to manually provide the annotation.

I intentionally skipped providers so that users can use this version of the provider with older versions of Airflow that do not contain the `airflow.compat.functools` module.

[GitHub] [airflow] uranusjr commented on pull request #17597: Use built-in ``cached_property`` on Python 3.8 for Asana provider

uranusjr commented on pull request #17597: URL: https://github.com/apache/airflow/pull/17597#issuecomment-898318923 Oops, I missed #14606 and it’s already merged. There’s actually a shim for this:

```python
from airflow.compat.functools import cached_property
```

And it handles type hint better; I added a `functools.pyi` file to manually provide the annotation.

[GitHub] [airflow] boring-cyborg[bot] commented on pull request #17600: Fix wrong Postgres search_path set up instructions

boring-cyborg[bot] commented on pull request #17600: URL: https://github.com/apache/airflow/pull/17600#issuecomment-898312948 Congratulations on your first Pull Request and welcome to the Apache Airflow community! If you have any issues or are unsure about anything, please check our Contribution Guide (https://github.com/apache/airflow/blob/main/CONTRIBUTING.rst)

Here are some useful points:

- Pay attention to the quality of your code (flake8, mypy and type annotations). Our [pre-commits](https://github.com/apache/airflow/blob/main/STATIC_CODE_CHECKS.rst#prerequisites-for-pre-commit-hooks) will help you with that.
- In case of a new feature add useful documentation (in docstrings or in `docs/` directory). Adding a new operator? Check this short [guide](https://github.com/apache/airflow/blob/main/docs/apache-airflow/howto/custom-operator.rst). Consider adding an example DAG that shows how users should use it.
- Consider using [Breeze environment](https://github.com/apache/airflow/blob/main/BREEZE.rst) for testing locally, it’s a heavy docker but it ships with a working Airflow and a lot of integrations.
- Be patient and persistent. It might take some time to get a review or get the final approval from Committers.
- Please follow [ASF Code of Conduct](https://www.apache.org/foundation/policies/conduct) for all communication including (but not limited to) comments on Pull Requests, Mailing list and Slack.
- Be sure to read the [Airflow Coding style](https://github.com/apache/airflow/blob/main/CONTRIBUTING.rst#coding-style-and-best-practices).

Apache Airflow is a community-driven project and together we are making it better. In case of doubts contact the developers at: Mailing List: d...@airflow.apache.org Slack: https://s.apache.org/airflow-slack

[GitHub] [airflow] tidunguyen opened a new pull request #17600: Fix wrong Postgres search_path set up instructions

tidunguyen opened a new pull request #17600: URL: https://github.com/apache/airflow/pull/17600 In the setup instructions for the Postgres database backend, the instructed command for setting search_path to the correct schema lacks `public` and `utility`, which prevented SQLAlchemy from finding all the required schemas.

[GitHub] [airflow] kaxil merged pull request #17598: Add more ``project_urls`` for PyPI

kaxil merged pull request #17598: URL: https://github.com/apache/airflow/pull/17598

[airflow] branch main updated: Add more ``project_urls`` for PyPI (#17598)

This is an automated email from the ASF dual-hosted git repository.

kaxilnaik pushed a commit to branch main

in repository https://gitbox.apache.org/repos/asf/airflow.git

The following commit(s) were added to refs/heads/main by this push:

new ba3d5b3 Add more ``project_urls`` for PyPI (#17598)

ba3d5b3 is described below

commit ba3d5b3db4e2c057c1821fbb99f5cdc7734ebdaa

Author: Kaxil Naik

AuthorDate: Fri Aug 13 10:15:52 2021 +0100

Add more ``project_urls`` for PyPI (#17598)

This adds more of our urls for display on PyPI

---

dev/provider_packages/SETUP_TEMPLATE.py.jinja2 | 3 +++

setup.cfg | 3 +++

2 files changed, 6 insertions(+)

diff --git a/dev/provider_packages/SETUP_TEMPLATE.py.jinja2 b/dev/provider_packages/SETUP_TEMPLATE.py.jinja2
index 69dcdac..221291a 100644
--- a/dev/provider_packages/SETUP_TEMPLATE.py.jinja2
+++ b/dev/provider_packages/SETUP_TEMPLATE.py.jinja2
@@ -82,6 +82,9 @@ def do_setup():
'Documentation': 'https://airflow.apache.org/docs/{{ PACKAGE_PIP_NAME }}/{{RELEASE}}/',
'Bug Tracker': 'https://github.com/apache/airflow/issues',
'Source Code': 'https://github.com/apache/airflow',
+'Slack Chat': 'https://s.apache.org/airflow-slack',
+'Twitter': 'https://twitter.com/ApacheAirflow',
+'YouTube': 'https://www.youtube.com/channel/UCSXwxpWZQ7XZ1WL3wqevChA/',
},
)
diff --git a/setup.cfg b/setup.cfg
index 0febc0c..479bfdb 100644
--- a/setup.cfg
+++ b/setup.cfg
@@ -62,6 +62,9 @@ project_urls =
Documentation=https://airflow.apache.org/docs/
Bug Tracker=https://github.com/apache/airflow/issues
Source Code=https://github.com/apache/airflow
+Slack Chat=https://s.apache.org/airflow-slack
+Twitter=https://twitter.com/ApacheAirflow
+YouTube=https://www.youtube.com/channel/UCSXwxpWZQ7XZ1WL3wqevChA/

[options]
zip_safe = False
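The `setup.cfg` hunk uses setuptools' declarative list of `Label=URL` lines. The standard-library `configparser` can read that list back into a dict, which is a quick way to check the labels PyPI will display. This is a sketch that assumes `project_urls` sits under a `[metadata]` section, as setuptools' declarative config expects. The helper name is hypothetical.

```python
import configparser
from typing import Dict

SAMPLE_CFG = """
[metadata]
project_urls =
    Documentation=https://airflow.apache.org/docs/
    Bug Tracker=https://github.com/apache/airflow/issues
    Source Code=https://github.com/apache/airflow
    Slack Chat=https://s.apache.org/airflow-slack
    Twitter=https://twitter.com/ApacheAirflow
    YouTube=https://www.youtube.com/channel/UCSXwxpWZQ7XZ1WL3wqevChA/
"""


def read_project_urls(cfg_text: str) -> Dict[str, str]:
    """Parse a setup.cfg ``project_urls`` list of ``Label=URL`` lines into a dict."""
    # interpolation=None so any '%' inside a URL is taken literally
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(cfg_text)
    raw = parser.get("metadata", "project_urls", fallback="")
    urls: Dict[str, str] = {}
    for line in raw.splitlines():
        line = line.strip()
        if not line:
            continue  # the value begins with an empty line after "project_urls ="
        label, sep, url = line.partition("=")
        if sep:
            urls[label.strip()] = url.strip()
    return urls


for label, url in read_project_urls(SAMPLE_CFG).items():
    print(f"{label}: {url}")
```

The indented lines are read as continuation lines of the single `project_urls` value. That is why the parser splits and partitions them itself rather than treating each one as a separate option.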

[airflow] branch main updated: Remove redundant note on HTTP Provider (#17595)

This is an automated email from the ASF dual-hosted git repository.

kaxilnaik pushed a commit to branch main

in repository https://gitbox.apache.org/repos/asf/airflow.git

The following commit(s) were added to refs/heads/main by this push:

new f2a8a73 Remove redundant note on HTTP Provider (#17595)

f2a8a73 is described below

commit f2a8a73ca99912d25e9cd8142038cf3a6df622d6

Author: Kaxil Naik

AuthorDate: Fri Aug 13 10:15:30 2021 +0100

Remove redundant note on HTTP Provider (#17595)

Since https://github.com/apache/airflow/pull/16974 we have switched back to including HTTP provider and this comment now is not correct.

---

setup.py | 8

1 file changed, 8 deletions(-)

diff --git a/setup.py b/setup.py
index 0fc6da8..75f87ca 100644
--- a/setup.py
+++ b/setup.py
@@ -340,14 +340,6 @@ http = [
'requests>=2.26.0',
]
http_provider = [
-# NOTE ! The HTTP provider is NOT preinstalled by default when Airflow is installed - because it
-#depends on `requests` library and until `chardet` is mandatory dependency of `requests`
-#See https://github.com/psf/requests/pull/5797
-#This means that providers that depend on Http and cannot work without it, have to have
-#explicit dependency on `apache-airflow-providers-http` which needs to be pulled in for them.
-#Other cross-provider-dependencies are optional (usually cross-provider dependencies only enable
-#some features of providers and majority of those providers works). They result with an extra,
-#not with the `install-requires` dependency.
'apache-airflow-providers-http',
]
jdbc = [
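The commit states that the HTTP provider is included again. To confirm that the `apache-airflow-providers-http` distribution is actually present in a given environment, you can query installed package metadata. This is a minimal sketch using the standard library's `importlib.metadata` (Python 3.8+). The helper name is hypothetical.

```python
from importlib import metadata
from typing import Optional


def installed_version(dist_name: str) -> Optional[str]:
    """Return the installed version of a distribution, or None if it is absent."""
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None


version = installed_version("apache-airflow-providers-http")
print(version or "apache-airflow-providers-http is not installed")
```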

[GitHub] [airflow] kaxil merged pull request #17595: Remove redundant note on HTTP Provider

kaxil merged pull request #17595: URL: https://github.com/apache/airflow/pull/17595

[airflow] branch main updated: Remove redundant ``numpy`` dependency (#17594)

This is an automated email from the ASF dual-hosted git repository.

kaxilnaik pushed a commit to branch main

in repository https://gitbox.apache.org/repos/asf/airflow.git

The following commit(s) were added to refs/heads/main by this push:

new 6b327c1 Remove redundant ``numpy`` dependency (#17594)

6b327c1 is described below

commit 6b327c14f773e265d9c54551d9ca273ac7350fdb

Author: Kaxil Naik

AuthorDate: Fri Aug 13 10:14:30 2021 +0100

Remove redundant ``numpy`` dependency (#17594)

Missed removing ``numpy`` from `setup.cfg` in https://github.com/apache/airflow/pull/17575.

It was only added in setup.cfg in https://github.com/apache/airflow/pull/15209/files#diff-380c6a8ebbbce17d55d50ef17d3cf906

numpy already has `python_requires` metadata: https://github.com/numpy/numpy/blob/v1.20.3/setup.py#L473

so we don't need to set `numpy<1.20;python_version<"3.7"`

---

setup.cfg | 3 ---

1 file changed, 3 deletions(-)

diff --git a/setup.cfg b/setup.cfg
index 69ad425..0febc0c 100644
--- a/setup.cfg
+++ b/setup.cfg
@@ -121,9 +121,6 @@ install_requires =
markdown>=2.5.2, <4.0
markupsafe>=1.1.1, <2.0
marshmallow-oneofschema>=2.0.1
-# Numpy stopped releasing 3.6 binaries for 1.20.* series.
-numpy<1.20;python_version<"3.7"
-numpy;python_version>="3.7"
# Required by vendored-in connexion
openapi-spec-validator>=0.2.4
pendulum~=2.0
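The commit's reasoning is that an installer already skips releases whose `python_requires` (Requires-Python) excludes the running interpreter, so the `python_version` marker pin adds nothing. The sketch below is a simplified model of that selection. The two-release table is illustrative and not read from PyPI, and the function name is hypothetical. Real Requires-Python values are full version specifiers; this sketch only checks a minimum version.

```python
from typing import List, Optional, Tuple

Version = Tuple[int, ...]


def pick_release(releases: List[Tuple[str, Version]], python: Version) -> Optional[str]:
    """Return the newest release whose minimum Python version is satisfied."""
    compatible = [
        (tuple(int(part) for part in version.split(".")), version)
        for version, min_python in releases
        if python >= min_python
    ]
    # Incompatible releases are skipped rather than treated as errors.
    return max(compatible)[1] if compatible else None


# Illustrative table: 1.19.x still supported Python 3.6, 1.20.x needs 3.7+.
NUMPY_RELEASES = [("1.19.5", (3, 6)), ("1.20.3", (3, 7))]
print(pick_release(NUMPY_RELEASES, (3, 6)))  # 1.19.5
print(pick_release(NUMPY_RELEASES, (3, 8)))  # 1.20.3
```

Under this model, a Python 3.6 interpreter lands on the 1.19 series without any marker in `setup.cfg`. That is the behaviour the removed `numpy<1.20;python_version<"3.7"` line spelled out by hand.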

[GitHub] [airflow] kaxil merged pull request #17594: Remove redundant ``numpy`` dependency

kaxil merged pull request #17594: URL: https://github.com/apache/airflow/pull/17594

[GitHub] [airflow] ClassyLion opened a new issue #17599: FileNotFound Temporary file error

ClassyLion opened a new issue #17599: URL: https://github.com/apache/airflow/issues/17599

**Apache Airflow version**: 2.1.3

**Apache Airflow Provider versions** (please include all providers that are relevant to your bug): apache-airflow-providers-snowflake==2.0.0

The DAG loads data into Snowflake. I'm not sure whether other providers are involved, but installation uses the constraints file.

**Kubernetes version (if you are using kubernetes)** (use `kubectl version`): 1.18.20

**Environment**: An image built from a Dockerfile, running in a k8s deployment, with python:3.8.8-slim-buster as the base image.

**What happened**:

Full error message:

```
Traceback (most recent call last):
  File "/usr/local/bin/airflow", line 8, in <module>
    sys.exit(main())
  File "/usr/local/lib/python3.8/site-packages/airflow/__main__.py", line 40, in main
    args.func(args)
  File "/usr/local/lib/python3.8/site-packages/airflow/cli/cli_parser.py", line 48, in command
    return func(*args, **kwargs)
  File "/usr/local/lib/python3.8/site-packages/airflow/utils/cli.py", line 91, in wrapper
    return f(*args, **kwargs)
  File "/usr/local/lib/python3.8/site-packages/airflow/cli/commands/task_command.py", line 237, in task_run
    _run_task_by_selected_method(args, dag, ti)
  File "/usr/local/lib/python3.8/site-packages/airflow/cli/commands/task_command.py", line 64, in _run_task_by_selected_method
    _run_task_by_local_task_job(args, ti)
  File "/usr/local/lib/python3.8/site-packages/airflow/cli/commands/task_command.py", line 120, in _run_task_by_local_task_job
    run_job.run()
  File "/usr/local/lib/python3.8/site-packages/airflow/jobs/base_job.py", line 237, in run
    self._execute()
  File "/usr/local/lib/python3.8/site-packages/airflow/jobs/local_task_job.py", line 147, in _execute
    self.on_kill()
  File "/usr/local/lib/python3.8/site-packages/airflow/jobs/local_task_job.py", line 166, in on_kill
    self.task_runner.on_finish()
  File "/usr/local/lib/python3.8/site-packages/airflow/task/task_runner/base_task_runner.py", line 179, in on_finish
    self._error_file.close()
  File "/usr/local/lib/python3.8/tempfile.py", line 499, in close
    self._closer.close()
  File "/usr/local/lib/python3.8/tempfile.py", line 436, in close
    unlink(self.name)
FileNotFoundError: [Errno 2] No such file or directory: '/tmp/tmpynk61lsx'
```

**What you expected to happen**: The temporary file to exist and the DAG not to fail.

**How to reproduce it**: Unknown. There is no consistent pattern to when it occurs, but it has been happening for a while (more than a month).

**Anything else we need to know**:

How often does this problem occur? Once? Every time etc?

This happens almost daily; one or two DAGs fail from time to time.
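The last frames show `tempfile` calling `unlink` on the temporary file while closing it, and the file is already gone. This report does not reveal why it disappeared. One option is a caller that tolerates an already-deleted `NamedTemporaryFile`, sketched below. The helper is hypothetical; it is neither Airflow's code nor a proposed fix. The demonstration assumes a POSIX system, where an open file can be unlinked.

```python
import os
import tempfile


def close_tolerating_missing(tmp) -> bool:
    """Close a NamedTemporaryFile; return False if its file was already deleted.

    Hypothetical helper for illustration, not part of Airflow.
    """
    existed = os.path.exists(tmp.name)
    try:
        tmp.close()  # with delete=True this also unlinks the file
    except FileNotFoundError:
        # On Python 3.8 (as in the traceback above) close() raises here
        # when something else removed the file first.
        existed = False
    return existed


# Simulate an external cleanup removing the file before close().
tmp = tempfile.NamedTemporaryFile(delete=True)
os.unlink(tmp.name)
print(close_tolerating_missing(tmp))  # False: the file had already vanished
```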

[GitHub] [airflow] potiuk edited a comment on pull request #17576: Add pre/post execution hooks

potiuk edited a comment on pull request #17576: URL: https://github.com/apache/airflow/pull/17576#issuecomment-898261046 Looking at the test, there must be a reason the test is here (not only to annoy the user), and it explicitly says that new fields should not be added, which makes me wonder whether just adding the fields to the test is a good idea/enough. @kaxil - is it OK for us to add new fields to BaseOperator? Will already-serialized tasks still deserialize without problems? Or do we need to do something else besides adding the two fields to the test?

[GitHub] [airflow] potiuk commented on pull request #17576: Add pre/post execution hooks

potiuk commented on pull request #17576: URL: https://github.com/apache/airflow/pull/17576#issuecomment-898261046 Looking at the test, there must be a reason the test is here (not only to annoy the user), and it explicitly says that new fields should not be added, which makes me wonder whether just adding the fields to the test is a good idea. @kaxil - is it OK for us to add new fields to BaseOperator? Will already-serialized tasks still deserialize without problems? Or do we need to do something else besides adding the two fields to the test?
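The compatibility question can be illustrated generically. If the deserializer gives new fields defaults and ignores keys it does not recognise, payloads written before the fields existed still load. The sketch below is a simplified, hypothetical model: a plain dataclass with invented field names `pre_hook` and `post_hook`. It is not Airflow's serialization code. Whether Airflow behaves this way is exactly the open question in the comment.

```python
from dataclasses import dataclass, fields
from typing import Any, Dict, Optional


@dataclass
class TaskRecord:
    task_id: str
    retries: int = 0
    # Hypothetical fields added in a later version. Giving them defaults is
    # what lets payloads serialized before they existed still load.
    pre_hook: Optional[str] = None
    post_hook: Optional[str] = None


def deserialize(payload: Dict[str, Any]) -> TaskRecord:
    """Build a TaskRecord, ignoring unknown keys and defaulting missing ones."""
    known = {f.name for f in fields(TaskRecord)}
    return TaskRecord(**{k: v for k, v in payload.items() if k in known})


old_payload = {"task_id": "load", "retries": 2}  # written before the new fields
print(deserialize(old_payload))
# TaskRecord(task_id='load', retries=2, pre_hook=None, post_hook=None)
```

Dropping unknown keys covers the reverse direction: an older reader that meets a payload from a newer writer still loads it, though it silently loses the new fields.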

[GitHub] [airflow] potiuk commented on a change in pull request #17578: Improve validation of Group id

potiuk commented on a change in pull request #17578:

URL: https://github.com/apache/airflow/pull/17578#discussion_r688306237

##

File path: airflow/utils/task_group.py

##

@@ -94,10 +95,15 @@ def __init__(
self.used_group_ids: Set[Optional[str]] = set()
self._parent_group = None
else:
-if not isinstance(group_id, str):
-raise ValueError("group_id must be str")
-if not group_id:
-raise ValueError("group_id must not be empty")
+if prefix_group_id:

Review comment:

This is a good question. I think not.

If we do it, that's technically a backwards-incompatible change, because some DAGs that already use spaces might stop working. With the prefix, those DAGs would not work anyway, so it's not breaking anything 'more'.

I do not think there is any limitation when we do not use the group id as a prefix in the task id. I think it would work, and it has nice properties: spaces and national characters in particular might be much better in the name of the group in the UI. We do not have a separate 'display name' there, and the id is displayed in the UI, so I'm 100% sure people will use their national characters (and spaces) there.

If we find that it does not work with those characters, I think we should fix it rather than prevent it.

Now that we're talking about it, maybe the right fix would even be to remove all the invalid characters from the group id rather than reject them, even in the prefix case?

This one can make it into 2.1.3, and then in 2.2 we could add it as a feature to allow such cases.

WDYT?
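The comment weighs two options: validate the id only when it is used as a task id prefix, or strip invalid characters instead. Both are sketched below. The allowed-character pattern (ASCII letters, digits, `_`, `.`, `-`) is an assumption for illustration and not necessarily Airflow's exact rule. Both function names are hypothetical.

```python
import re

# Assumed patterns for illustration; Airflow's real key rule may differ.
KEY_PATTERN = re.compile(r"[A-Za-z0-9_.-]+")
INVALID_CHARS = re.compile(r"[^A-Za-z0-9_.-]")


def validate_group_id(group_id: object, prefix_group_id: bool = True) -> str:
    """Reject bad ids; enforce the key pattern only when the id prefixes task ids."""
    if not isinstance(group_id, str):
        raise ValueError("group_id must be str")
    if not group_id:
        raise ValueError("group_id must not be empty")
    if prefix_group_id and not KEY_PATTERN.fullmatch(group_id):
        raise ValueError(f"group_id {group_id!r} has characters not allowed in a task_id prefix")
    return group_id


def strip_invalid_chars(group_id: str) -> str:
    """The alternative idea: drop disallowed characters instead of rejecting."""
    return INVALID_CHARS.sub("", group_id)


print(validate_group_id("Grupa ładowania", prefix_group_id=False))  # accepted as-is
print(strip_invalid_chars("Grupa ładowania"))  # Grupaadowania
print(repr(strip_invalid_chars("数据加载")))  # '' (nothing left)
```

The last line shows the cost of stripping. An id made entirely of disallowed characters collapses to an empty string, and a partly non-ASCII one loses letters.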


[GitHub] [airflow] uranusjr commented on pull request #17544: Rescue if a DagRun's DAG was removed from db

uranusjr commented on pull request #17544: URL: https://github.com/apache/airflow/pull/17544#issuecomment-898243405 For future reference, the complete steps to reproduce this from the web UI are:

1. Create a DAG with a subDAG that runs for a long-ish time. Wait for them to show up in the web UI.
2. Run the DAG (thus triggering the subDAG).
3. Delete the parent DAG from the web UI. (This does not delete the subDAG.)
4. Try to block the subDAG’s unfinished run from step 2. (Should fail with `SerializedDagNotFound` before this patch.)

[GitHub] [airflow] github-actions[bot] commented on pull request #17594: Remove redundant ``numpy`` dependency