[GitHub] [hudi] hudi-bot edited a comment on pull request #3696: [WIP][HUDI-2439] Refactor commit actions in hudi-client module

hudi-bot edited a comment on pull request #3696: URL: https://github.com/apache/hudi/pull/3696#issuecomment-924126593 ## CI report: * 2831b493e97cdc9e2a5aa99e170f15d48028de51 Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2315) * 1c37ce18b451091cdcd751af679be6833d713c68 Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2317) Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] hudi-bot edited a comment on pull request #3696: [WIP][HUDI-2439] Refactor commit actions in hudi-client module

hudi-bot edited a comment on pull request #3696: URL: https://github.com/apache/hudi/pull/3696#issuecomment-924126593 ## CI report: * 2831b493e97cdc9e2a5aa99e170f15d48028de51 Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2315) * 1c37ce18b451091cdcd751af679be6833d713c68 UNKNOWN Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] hudi-bot edited a comment on pull request #3702: [HUDI-2479] HoodieFileIndex throws NPE for FileSlice with pure log files

hudi-bot edited a comment on pull request #3702: URL: https://github.com/apache/hudi/pull/3702#issuecomment-924636021 ## CI report: * 163949191ab006195970b6acd209e282dd3cc068 Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2316) Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] hudi-bot commented on pull request #3702: [HUDI-2479] HoodieFileIndex throws NPE for FileSlice with pure log files

hudi-bot commented on pull request #3702: URL: https://github.com/apache/hudi/pull/3702#issuecomment-924636021 ## CI report: * 163949191ab006195970b6acd209e282dd3cc068 UNKNOWN Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[jira] [Updated] (HUDI-2479) HoodieFileIndex throws NPE for FileSlice with pure log files

[ https://issues.apache.org/jira/browse/HUDI-2479?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] ASF GitHub Bot updated HUDI-2479: - Labels: pull-request-available (was: ) > HoodieFileIndex throws NPE for FileSlice with pure log files > > > Key: HUDI-2479 > URL: https://issues.apache.org/jira/browse/HUDI-2479 > Project: Apache Hudi > Issue Type: Bug > Components: Spark Integration >Reporter: Danny Chen >Assignee: Danny Chen >Priority: Major > Labels: pull-request-available > Fix For: 0.10.0 > > -- This message was sent by Atlassian Jira (v8.3.4#803005)

[GitHub] [hudi] danny0405 opened a new pull request #3702: [HUDI-2479] HoodieFileIndex throws NPE for FileSlice with pure log files

danny0405 opened a new pull request #3702: URL: https://github.com/apache/hudi/pull/3702 ## *Tips* - *Thank you very much for contributing to Apache Hudi.* - *Please review https://hudi.apache.org/contribute/how-to-contribute before opening a pull request.* ## What is the purpose of the pull request *(For example: This pull request adds quick-start document.)* ## Brief change log *(for example:)* - *Modify AnnotationLocation checkstyle rule in checkstyle.xml* ## Verify this pull request *(Please pick either of the following options)* This pull request is a trivial rework / code cleanup without any test coverage. *(or)* This pull request is already covered by existing tests, such as *(please describe tests)*. (or) This change added tests and can be verified as follows: *(example:)* - *Added integration tests for end-to-end.* - *Added HoodieClientWriteTest to verify the change.* - *Manually verified the change by running a job locally.* ## Committer checklist - [ ] Has a corresponding JIRA in PR title & commit - [ ] Commit message is descriptive of the change - [ ] CI is green - [ ] Necessary doc changes done or have another open PR - [ ] For large changes, please consider breaking it into sub-tasks under an umbrella JIRA. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[jira] [Created] (HUDI-2479) HoodieFileIndex throws NPE for FileSlice with pure log files

Danny Chen created HUDI-2479: Summary: HoodieFileIndex throws NPE for FileSlice with pure log files Key: HUDI-2479 URL: https://issues.apache.org/jira/browse/HUDI-2479 Project: Apache Hudi Issue Type: Bug Components: Spark Integration Reporter: Danny Chen Assignee: Danny Chen Fix For: 0.10.0 -- This message was sent by Atlassian Jira (v8.3.4#803005)

[GitHub] [hudi] hudi-bot edited a comment on pull request #3696: [WIP][HUDI-2439] Refactor commit actions in hudi-client module

hudi-bot edited a comment on pull request #3696: URL: https://github.com/apache/hudi/pull/3696#issuecomment-924126593 ## CI report: * 2831b493e97cdc9e2a5aa99e170f15d48028de51 Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2315) Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] hudi-bot edited a comment on pull request #3696: [WIP][HUDI-2439] Refactor commit actions in hudi-client module

hudi-bot edited a comment on pull request #3696: URL: https://github.com/apache/hudi/pull/3696#issuecomment-924126593 ## CI report: * a4b4afdf7b5dd711cc3978e2c02f9efb0c7b5514 Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2305) * 2831b493e97cdc9e2a5aa99e170f15d48028de51 UNKNOWN Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[jira] [Updated] (HUDI-2441) To support partial update function which can move and update the data from the old partition to the new partition , when the data with same key change it's partition

[

https://issues.apache.org/jira/browse/HUDI-2441?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

David_Liang updated HUDI-2441:

--

Description:

to considerate such a scene, there 2 reocod *in different batch* as follow

||post_id ||position||weight||ts||day ||

| 1|shengzhen|3KG|1630480027|{color:#ff}20210901{color}|

| 1|beijing|3KG|1630652828|{color:#ff}20210903{color}|

when using the {color:#ff}*Global Index*{color} with such sql

{code:java}

merge into target_hudi_table t

using (

select post_id, position, ts , day from source_table

) as s

on t.id = s.id

when natched then update set t.position = s.position, t.ts=s.ts, t.day = s.day

when not matched then insert *

{code}

Beacuse now the hudi engine haven't support *cross partitions partial merge

into,* the result in the target table is

||post_id (as primiary key)||position||weight||ts||day||

| 1|beijing| |1630652828|*{color:#ff}20210903{color}*|

the record still in the old parition.

but the *expected* result is

||post_id (as primiary key)||position||weight||ts||day||

|

1|beijing|*{color:#ff}3KG{color}*|1630652828|{color:#ff}*20210903*{color}|

was:

to considerate such a scene, there 2 reocod in different batch as follow

||post_id ||position||weight||ts||day ||

| 1|shengzhen|3KG|1630480027|{color:#ff}20210901{color}|

| 1|beijing|3KG|1630652828|{color:#ff}20210903{color}|

when using the {color:#ff}*Global Index*{color} with such sql

{code:java}

merge into target_hudi_table t

using (

select post_id, position, ts , day from source_table

) as s

on t.id = s.id

when natched then update set t.position = s.position, t.ts=s.ts, t.day = s.day

when not matched then insert *

{code}

Beacuse now the hudi engine haven't support *cross partitions partial merge

into,* the result in the target table is

||post_id (as primiary key)||position||weight||ts||day||

| 1|beijing| |1630652828|*{color:#ff}20210903{color}*|

the record still in the old parition.

but the *expected* result is

||post_id (as primiary key)||position||weight||ts||day||

|

1|beijing|*{color:#FF}3KG{color}*|1630652828|{color:#ff}*20210903*{color}|

> To support partial update function which can move and update the data from

> the old partition to the new partition , when the data with same key change

> it's partition

> -

>

> Key: HUDI-2441

> URL: https://issues.apache.org/jira/browse/HUDI-2441

> Project: Apache Hudi

> Issue Type: Improvement

> Components: Storage Management

>Reporter: David_Liang

>Assignee: Nicholas Jiang

>Priority: Major

>

> to considerate such a scene, there 2 reocod *in different batch* as follow

> ||post_id ||position||weight||ts||day ||

> | 1|shengzhen|3KG|1630480027|{color:#ff}20210901{color}|

> | 1|beijing|3KG|1630652828|{color:#ff}20210903{color}|

>

> when using the {color:#ff}*Global Index*{color} with such sql

>

> {code:java}

> merge into target_hudi_table t

> using (

> select post_id, position, ts , day from source_table

> ) as s

> on t.id = s.id

> when natched then update set t.position = s.position, t.ts=s.ts, t.day =

> s.day

> when not matched then insert *

> {code}

>

> Beacuse now the hudi engine haven't support *cross partitions partial merge

> into,* the result in the target table is

>

> ||post_id (as primiary key)||position||weight||ts||day||

> | 1|beijing| |1630652828|*{color:#ff}20210903{color}*|

> the record still in the old parition.

>

> but the *expected* result is

> ||post_id (as primiary key)||position||weight||ts||day||

> |

> 1|beijing|*{color:#ff}3KG{color}*|1630652828|{color:#ff}*20210903*{color}|

>

>

>

--

This message was sent by Atlassian Jira

(v8.3.4#803005)

[GitHub] [hudi] hudi-bot edited a comment on pull request #3623: [WIP][HUDI-2409] Using HBase shaded jars in Hudi presto bundle

hudi-bot edited a comment on pull request #3623: URL: https://github.com/apache/hudi/pull/3623#issuecomment-915056982 ## CI report: * a34260e3bc4c2344005feefe4c7672b9589569af Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2313) Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] xushiyan commented on issue #3699: [SUPPORT] Job hanging on toRdd at HoodieSparkUtils

xushiyan commented on issue #3699: URL: https://github.com/apache/hudi/issues/3699#issuecomment-924585220 Need more details to understand what's going on: - Which line of code from HoodieSparkUtils was ran here? - What Hudi actions are you trying to perform? - What is the total input data size are you reading? - How many executors were actually created during the run? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] hudi-bot edited a comment on pull request #3623: [WIP][HUDI-2409] Using HBase shaded jars in Hudi presto bundle

hudi-bot edited a comment on pull request #3623: URL: https://github.com/apache/hudi/pull/3623#issuecomment-915056982 ## CI report: * 427429a67168db05df942087f2cbf950f853196a Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2260) * a34260e3bc4c2344005feefe4c7672b9589569af Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2313) Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] hudi-bot edited a comment on pull request #3623: [WIP][HUDI-2409] Using HBase shaded jars in Hudi presto bundle

hudi-bot edited a comment on pull request #3623: URL: https://github.com/apache/hudi/pull/3623#issuecomment-915056982 ## CI report: * 427429a67168db05df942087f2cbf950f853196a Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2260) * a34260e3bc4c2344005feefe4c7672b9589569af UNKNOWN Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[jira] [Updated] (HUDI-2441) To support partial update function which can move and update the data from the old partition to the new partition , when the data with same key change it's partition

[

https://issues.apache.org/jira/browse/HUDI-2441?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

David_Liang updated HUDI-2441:

--

Description:

to considerate such a scene, there 2 reocod in different batch as follow

||post_id ||position||weight||ts||day ||

| 1|shengzhen|3KG|1630480027|{color:#ff}20210901{color}|

| 1|beijing|3KG|1630652828|{color:#ff}20210903{color}|

when using the {color:#ff}*Global Index*{color} with such sql

{code:java}

merge into target_hudi_table t

using (

select post_id, position, ts , day from source_table

) as s

on t.id = s.id

when natched then update set t.position = s.position, t.ts=s.ts, t.day = s.day

when not matched then insert *

{code}

Beacuse now the hudi engine haven't support *cross partitions partial merge

into,* the result in the target table is

||post_id (as primiary key)||position||weight||ts||day||

| 1|beijing| |1630652828|*{color:#ff}20210903{color}*|

the record still in the old parition.

but the *expected* result is

||post_id (as primiary key)||position||weight||ts||day||

|

1|beijing|*{color:#FF}3KG{color}*|1630652828|{color:#ff}*20210903*{color}|

was:

to considerate such a scene, there 2 reocod as follow in the source table

||post_id ||position||weight||ts||day ||

| 1|shengzhen|3KG|1630480027|{color:#ff}20210901{color}|

| 1|beijing|3KG|1630652828|{color:#ff}20210903{color}|

when using the {color:#ff}*Global Index*{color} with such sql

{code:java}

merge into target_hudi_table t

using (

select post_id, position, ts , day from source_table

) as s

on t.id = s.id

when natched then update set t.position = s.position, t.ts=s.ts, t.day = s.day

when not matched then insert *

{code}

Beacuse now the hudi engine haven't support *cross partitions partial merge

into,* the result in the target table is

||post_id (as primiary key)||position||weight||ts||day||

| 1|beijing|3KG|1630652828|*{color:#ff}20210901{color}*|

the record still in the old parition.

but the *expected* result is

||post_id (as primiary key)||position||weight||ts||day||

| 1|beijing|3KG|1630652828|{color:#ff}*20210903*{color}|

> To support partial update function which can move and update the data from

> the old partition to the new partition , when the data with same key change

> it's partition

> -

>

> Key: HUDI-2441

> URL: https://issues.apache.org/jira/browse/HUDI-2441

> Project: Apache Hudi

> Issue Type: Improvement

> Components: Storage Management

>Reporter: David_Liang

>Assignee: Nicholas Jiang

>Priority: Major

>

> to considerate such a scene, there 2 reocod in different batch as follow

> ||post_id ||position||weight||ts||day ||

> | 1|shengzhen|3KG|1630480027|{color:#ff}20210901{color}|

> | 1|beijing|3KG|1630652828|{color:#ff}20210903{color}|

>

> when using the {color:#ff}*Global Index*{color} with such sql

>

> {code:java}

> merge into target_hudi_table t

> using (

> select post_id, position, ts , day from source_table

> ) as s

> on t.id = s.id

> when natched then update set t.position = s.position, t.ts=s.ts, t.day =

> s.day

> when not matched then insert *

> {code}

>

> Beacuse now the hudi engine haven't support *cross partitions partial merge

> into,* the result in the target table is

>

> ||post_id (as primiary key)||position||weight||ts||day||

> | 1|beijing| |1630652828|*{color:#ff}20210903{color}*|

> the record still in the old parition.

>

> but the *expected* result is

> ||post_id (as primiary key)||position||weight||ts||day||

> |

> 1|beijing|*{color:#FF}3KG{color}*|1630652828|{color:#ff}*20210903*{color}|

>

>

>

--

This message was sent by Atlassian Jira

(v8.3.4#803005)

[GitHub] [hudi] hudi-bot edited a comment on pull request #3413: [HUDI-2277] HoodieDeltaStreamer reading ORC files directly using ORCDFSSource

hudi-bot edited a comment on pull request #3413: URL: https://github.com/apache/hudi/pull/3413#issuecomment-893311636 ## CI report: * a7a59556703d2ea881abee407f8fd88291d04d80 UNKNOWN * 99414ba1ee89c6cdd2f482425001aec2392d65e9 Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2312) Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[hudi] branch master updated (55df8f6 -> e813dae)

This is an automated email from the ASF dual-hosted git repository.

danny0405 pushed a change to branch master

in repository https://gitbox.apache.org/repos/asf/hudi.git.

from 55df8f6 [MINOR] Fix typo."funcitons" corrected to "functions" (#3681)

add e813dae [MINOR] Cosmetic changes for flink (#3701)

No new revisions were added by this update.

Summary of changes:

.../org/apache/hudi/sink/StreamWriteFunction.java | 2 +-

.../sink/partitioner/profile/EmptyWriteProfile.java | 7 ++-

.../apache/hudi/streamer/HoodieFlinkStreamer.java | 5 +++--

.../org/apache/hudi/table/HoodieTableFactory.java | 2 +-

.../org/apache/hudi/table/HoodieTableSource.java| 11 ---

.../table/format/mor/MergeOnReadInputFormat.java| 2 +-

.../Transformer.java => util/InputFormats.java} | 21 +

.../java/org/apache/hudi/util/StreamerUtil.java | 16 ++--

8 files changed, 35 insertions(+), 31 deletions(-)

copy hudi-flink/src/main/java/org/apache/hudi/{sink/transform/Transformer.java

=> util/InputFormats.java} (67%)

[GitHub] [hudi] danny0405 merged pull request #3701: [MINOR] Cosmetic changes for flink

danny0405 merged pull request #3701: URL: https://github.com/apache/hudi/pull/3701 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] hudi-bot edited a comment on pull request #3701: [MINOR] Cosmetic changes for flink

hudi-bot edited a comment on pull request #3701: URL: https://github.com/apache/hudi/pull/3701#issuecomment-924551270 ## CI report: * 4e1b5f8accb6d597d4fe2244ae381a4a56b6f109 Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2311) Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] YannByron commented on pull request #3693: [HUDI-2456] support 'show partitions' sql

YannByron commented on pull request #3693: URL: https://github.com/apache/hudi/pull/3693#issuecomment-924562478 @leesf can you have time to review this? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] zhangyue19921010 commented on a change in pull request #3413: [HUDI-2277] HoodieDeltaStreamer reading ORC files directly using ORCDFSSource

zhangyue19921010 commented on a change in pull request #3413:

URL: https://github.com/apache/hudi/pull/3413#discussion_r713565743

##

File path:

hudi-utilities/src/test/java/org/apache/hudi/utilities/testutils/UtilitiesTestBase.java

##

@@ -364,5 +385,32 @@ public static String toJsonString(HoodieRecord hr) {

public static String[] jsonifyRecords(List records) {

return

records.stream().map(Helpers::toJsonString).toArray(String[]::new);

}

+

+public static void addAvroRecord(

+VectorizedRowBatch batch,

+GenericRecord record,

+TypeDescription orcSchema,

+int orcBatchSize,

+Writer writer

+) throws IOException {

+ for (int c = 0; c < batch.numCols; c++) {

+ColumnVector colVector = batch.cols[c];

+final String thisField = orcSchema.getFieldNames().get(c);

+final TypeDescription type = orcSchema.getChildren().get(c);

+

+Object fieldValue = record.get(thisField);

+Schema.Field avroField = record.getSchema().getField(thisField);

+AvroOrcUtils.addToVector(type, colVector, avroField.schema(),

fieldValue, batch.size);

+ }

+

+ batch.size++;

+

+ if (batch.size % orcBatchSize == 0 || batch.size == batch.getMaxSize()) {

Review comment:

Sure thing, changed :)

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

[GitHub] [hudi] hudi-bot edited a comment on pull request #3413: [HUDI-2277] HoodieDeltaStreamer reading ORC files directly using ORCDFSSource

hudi-bot edited a comment on pull request #3413: URL: https://github.com/apache/hudi/pull/3413#issuecomment-893311636 ## CI report: * 32223149bbb3d0c23e710fd338de4ed63e5f8be8 Azure: [CANCELED](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2310) * a7a59556703d2ea881abee407f8fd88291d04d80 UNKNOWN * 99414ba1ee89c6cdd2f482425001aec2392d65e9 Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2312) Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] danny0405 commented on a change in pull request #3698: [HUDI-2474] Refreshing timeline for every operation in Hudi

danny0405 commented on a change in pull request #3698:

URL: https://github.com/apache/hudi/pull/3698#discussion_r713564251

##

File path:

hudi-client/hudi-spark-client/src/main/java/org/apache/hudi/client/SparkRDDWriteClient.java

##

@@ -318,6 +323,7 @@ protected void completeCompaction(HoodieCommitMetadata

metadata, JavaRDD compact(String compactionInstantTime, boolean

shouldComplete) {

HoodieSparkTable table = HoodieSparkTable.create(config, context);

+table.getHoodieView().sync();

preWrite(compactionInstantTime, WriteOperationType.COMPACT,

table.getMetaClient());

Review comment:

Is there any possibility that we only sync the view when metadata table

is enabled ?

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

[GitHub] [hudi] hudi-bot edited a comment on pull request #3701: [MINOR] Cosmetic changes for flink

hudi-bot edited a comment on pull request #3701: URL: https://github.com/apache/hudi/pull/3701#issuecomment-924551270 ## CI report: * 4e1b5f8accb6d597d4fe2244ae381a4a56b6f109 Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2311) Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] hudi-bot commented on pull request #3701: [MINOR] Cosmetic changes for flink

hudi-bot commented on pull request #3701: URL: https://github.com/apache/hudi/pull/3701#issuecomment-924551270 ## CI report: * 4e1b5f8accb6d597d4fe2244ae381a4a56b6f109 UNKNOWN Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] hudi-bot edited a comment on pull request #3413: [HUDI-2277] HoodieDeltaStreamer reading ORC files directly using ORCDFSSource

hudi-bot edited a comment on pull request #3413: URL: https://github.com/apache/hudi/pull/3413#issuecomment-893311636 ## CI report: * 32223149bbb3d0c23e710fd338de4ed63e5f8be8 Azure: [CANCELED](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2310) * a7a59556703d2ea881abee407f8fd88291d04d80 UNKNOWN * 99414ba1ee89c6cdd2f482425001aec2392d65e9 UNKNOWN Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] danny0405 opened a new pull request #3701: [MINOR] Cosmetic changes for flink

danny0405 opened a new pull request #3701: URL: https://github.com/apache/hudi/pull/3701 ## *Tips* - *Thank you very much for contributing to Apache Hudi.* - *Please review https://hudi.apache.org/contribute/how-to-contribute before opening a pull request.* ## What is the purpose of the pull request *(For example: This pull request adds quick-start document.)* ## Brief change log *(for example:)* - *Modify AnnotationLocation checkstyle rule in checkstyle.xml* ## Verify this pull request *(Please pick either of the following options)* This pull request is a trivial rework / code cleanup without any test coverage. *(or)* This pull request is already covered by existing tests, such as *(please describe tests)*. (or) This change added tests and can be verified as follows: *(example:)* - *Added integration tests for end-to-end.* - *Added HoodieClientWriteTest to verify the change.* - *Manually verified the change by running a job locally.* ## Committer checklist - [ ] Has a corresponding JIRA in PR title & commit - [ ] Commit message is descriptive of the change - [ ] CI is green - [ ] Necessary doc changes done or have another open PR - [ ] For large changes, please consider breaking it into sub-tasks under an umbrella JIRA. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] nsivabalan commented on issue #3605: [SUPPORT]Hudi Inserts and Upserts for MoR and CoW tables are taking very long time.

nsivabalan commented on issue #3605: URL: https://github.com/apache/hudi/issues/3605#issuecomment-924546245 If your cardinality for partition is low, we can try to partition using a diff field which could have high cardinality. We can leverage more parallel processing depending on the no of partitions. Within each partition, we can't do much of parallel processing and so we are limited. I mean, hudi does assign one file group to each executor, but I am talking about indexing. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] nsivabalan commented on issue #3605: [SUPPORT]Hudi Inserts and Upserts for MoR and CoW tables are taking very long time.

nsivabalan commented on issue #3605:

URL: https://github.com/apache/hudi/issues/3605#issuecomment-924545165

btw, an orthogonal point.

I see your record key is {segmentId,uuid} and partition path is segmentId.

Not sure if you need to prefix segmentId to your record keys, if you are solely

using it to uniquely identify unique records and apply updates within hudi. If

there is no external facing requirement for record keys to be a pair of

{segmentId,uuid}, you can just have uuid.

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

[GitHub] [hudi] nsivabalan commented on issue #3605: [SUPPORT]Hudi Inserts and Upserts for MoR and CoW tables are taking very long time.

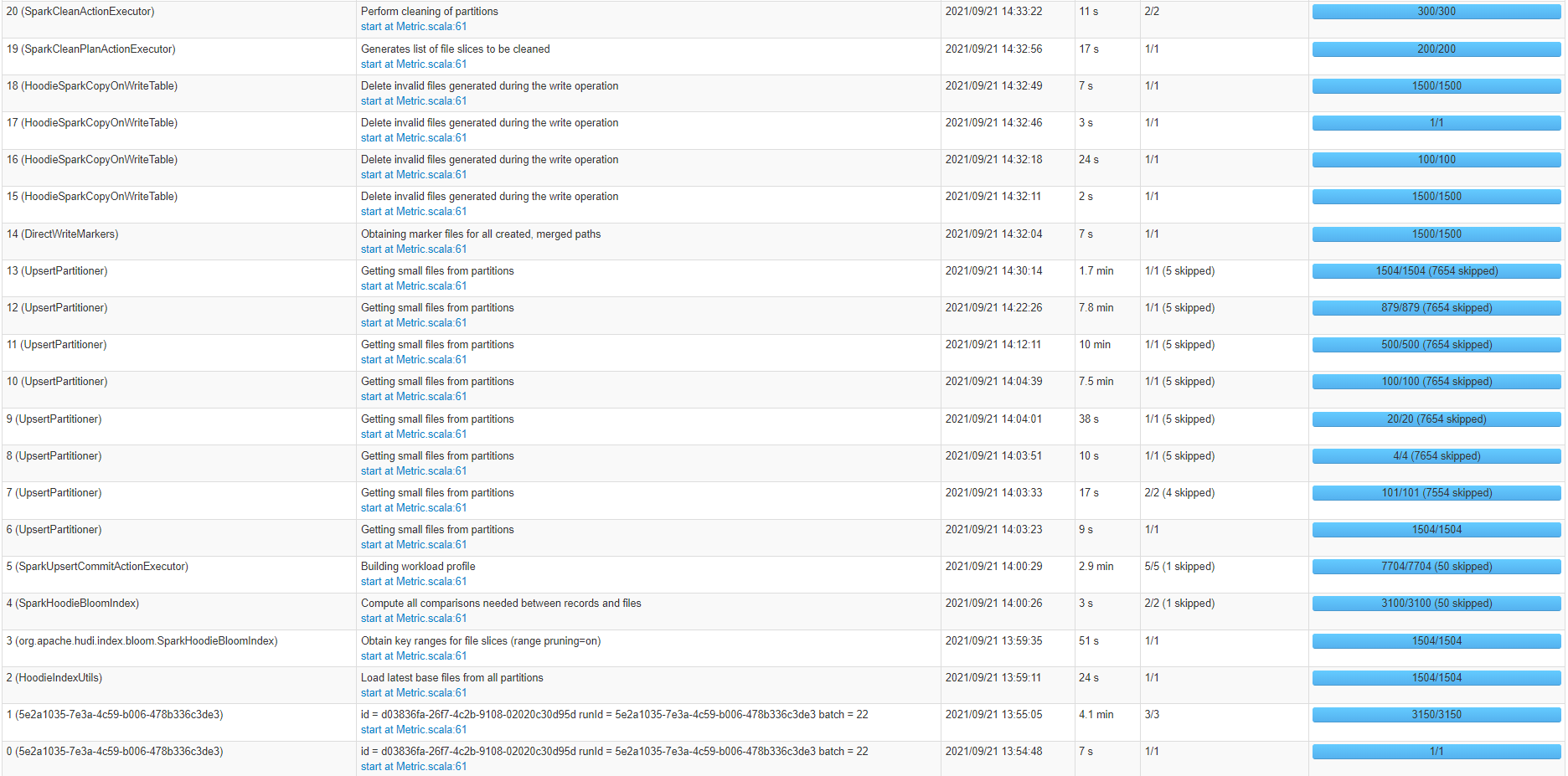

nsivabalan commented on issue #3605: URL: https://github.com/apache/hudi/issues/3605#issuecomment-924544144 got it, would you mind sharing the screenshots of spark stages. we will get an idea of where the time is spent more. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] hudi-bot edited a comment on pull request #3413: [HUDI-2277] HoodieDeltaStreamer reading ORC files directly using ORCDFSSource

hudi-bot edited a comment on pull request #3413: URL: https://github.com/apache/hudi/pull/3413#issuecomment-893311636 ## CI report: * 32223149bbb3d0c23e710fd338de4ed63e5f8be8 Azure: [CANCELED](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2310) * a7a59556703d2ea881abee407f8fd88291d04d80 UNKNOWN Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] hudi-bot edited a comment on pull request #3413: [HUDI-2277] HoodieDeltaStreamer reading ORC files directly using ORCDFSSource

hudi-bot edited a comment on pull request #3413: URL: https://github.com/apache/hudi/pull/3413#issuecomment-893311636 ## CI report: * 7a21d39bce12b04c3663d8966e9923145b2ce234 Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2100) Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2103) * 32223149bbb3d0c23e710fd338de4ed63e5f8be8 Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2310) * a7a59556703d2ea881abee407f8fd88291d04d80 UNKNOWN Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] zhangyue19921010 commented on a change in pull request #3413: [HUDI-2277] HoodieDeltaStreamer reading ORC files directly using ORCDFSSource

zhangyue19921010 commented on a change in pull request #3413:

URL: https://github.com/apache/hudi/pull/3413#discussion_r713553429

##

File path:

hudi-utilities/src/test/java/org/apache/hudi/utilities/testutils/UtilitiesTestBase.java

##

@@ -364,5 +385,32 @@ public static String toJsonString(HoodieRecord hr) {

public static String[] jsonifyRecords(List records) {

return

records.stream().map(Helpers::toJsonString).toArray(String[]::new);

}

+

+public static void addAvroRecord(

Review comment:

Sure thing. changed.

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

[GitHub] [hudi] hudi-bot edited a comment on pull request #3700: [HUDI-2471] Add support ignoring case when column name matches in merge into

hudi-bot edited a comment on pull request #3700: URL: https://github.com/apache/hudi/pull/3700#issuecomment-924523979 ## CI report: * 98de9c0ec2e814c3c8c20276e6d1457c4eb7243d Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2309) Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] zhangyue19921010 commented on a change in pull request #3413: [HUDI-2277] HoodieDeltaStreamer reading ORC files directly using ORCDFSSource

zhangyue19921010 commented on a change in pull request #3413:

URL: https://github.com/apache/hudi/pull/3413#discussion_r713552896

##

File path:

hudi-utilities/src/test/java/org/apache/hudi/utilities/functional/TestHoodieDeltaStreamer.java

##

@@ -1398,6 +1399,34 @@ private void testParquetDFSSource(boolean

useSchemaProvider, List transf

testNum++;

}

+ private void testORCDFSSource(boolean useSchemaProvider, List

transformerClassNames) throws Exception {

+// prepare ORCDFSSource

+TypedProperties orcProps = new TypedProperties();

+

+// Properties used for testing delta-streamer with orc source

+orcProps.setProperty("include", "base.properties");

+orcProps.setProperty("hoodie.embed.timeline.server","false");

+orcProps.setProperty("hoodie.datasource.write.recordkey.field",

"_row_key");

+orcProps.setProperty("hoodie.datasource.write.partitionpath.field",

"not_there");

+if (useSchemaProvider) {

+

orcProps.setProperty("hoodie.deltastreamer.schemaprovider.source.schema.file",

dfsBasePath + "/" + "source.avsc");

+ if (transformerClassNames != null) {

+

orcProps.setProperty("hoodie.deltastreamer.schemaprovider.target.schema.file",

dfsBasePath + "/" + "target.avsc");

+ }

+}

+orcProps.setProperty("hoodie.deltastreamer.source.dfs.root",

ORC_SOURCE_ROOT);

+UtilitiesTestBase.Helpers.savePropsToDFS(orcProps, dfs, dfsBasePath + "/"

+ PROPS_FILENAME_TEST_ORC);

+

+String tableBasePath = dfsBasePath + "/test_orc_source_table" + testNum;

+HoodieDeltaStreamer deltaStreamer = new HoodieDeltaStreamer(

+TestHelpers.makeConfig(tableBasePath, WriteOperationType.INSERT,

ORCDFSSource.class.getName(),

+transformerClassNames, PROPS_FILENAME_TEST_ORC, false,

+useSchemaProvider, 10, false, null, null, "timestamp",

null), jsc);

+deltaStreamer.sync();

+TestHelpers.assertRecordCount(ORC_NUM_RECORDS, tableBasePath +

"/*/*.parquet", sqlContext);

Review comment:

Hi @nsivabalan Thanks for your review. I think this is .parquet Because

this patch is a ORCDFSSource which let HoodieDeltaStreamer can read orc file

into hudi table and also use parquet format as base file format. So that we

need to use .parquet when reading hudi table data.

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

[jira] [Updated] (HUDI-2472) Tests failure follow up when metadata is enabled by default

[ https://issues.apache.org/jira/browse/HUDI-2472?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] sivabalan narayanan updated HUDI-2472: -- Description: We plan to enable metadata by default. but there are some tests that fail with this. Dumping details on tests for which metadata is disabled for now. We need to fix them one by one. hudi-spark-client: // this is the module that has lot of tests that could potentially have issues. TestHoodieSparkMergeOnReadTableIncrementalRead.testIncrementalReadsWithCompaction. disabled metadata for now. directly accesses files. TestHoodieIndex. testSimpleTagLocationAndUpdateWithRollback. known issue. https://issues.apache.org/jira/browse/HUDI-2468 testSimpleGlobalIndexTagLocationWhenShouldUpdatePartitionPath. uses test table. disabled metadata. TestHoodieRowCreateHandle.testInstantiationFailure. disabled metadata. not a real issue. TestHoodieSparkMergeOnReadTableRollback.testMultiRollbackWithDeltaAndCompactionCommit. restore fails. bcoz, there is an inflight rollback in dataset timeline. disabling for now. https://issues.apache.org/jira/browse/HUDI-2477 TestHoodieMergeOnReadTable.testLogFileCountsAfterCompaction. uses HoodieSparkWriteableTestTable. disabled metadata for now. TestHoodieCompactor.testWriteStatusContentsAfterCompaction. uses HoodieSparkWriteableTestTable. have disabled metadata. TestHbaseIndex.testEnsureTagLocationUsesCommitTimeline. rolling back 1st commit. known issue. disabling metadata. https://issues.apache.org/jira/browse/HUDI-2468 TestHbaseIndex.testSimpleTagLocationAndUpdateWithRollback. rolling back 1st commit. known issue. disabling metadata. https://issues.apache.org/jira/browse/HUDI-2468 TestCleaner. lot of tests. uses test table. TestHoodieTimelineArchiveLog. lot of tests. uses test table. hudi-utilities: TestHoodieDeltaStreamer. testCleanerDeleteReplacedDataWithArchive. fails. relating to archival. disabling metadata. need to look into it. hudi-client-common: all passed. hudi-flink-client: all passed. hudi-java-client: disabled metadata for java. all ok. hudi-common: all passed. hudi-spark java: Testbootstrap class fully fails. rollback of 1st commit. have disbaled metadata. https://issues.apache.org/jira/browse/HUDI-2477 hudi-spark scala tests: all good. hudi-utilities: one test in deltastreamer. hudi-timelineserver: all good. hudi-sync: hudi-dla-sync: all good. hudi-hive-sync: all good. hudi-spark3: all good. hudi-spark2: all good. hudi-examples: no tests. pending modules. hudi-cli hudi-integ-test was: We plan to enable metadata by default. but there are some tests that fail with this. Dumping details on tests for which metadata is disabled for now. We need to fix them one by one. hudi-spark-client: // this is the module that has lot of tests that could potentially have issues. TestHoodieSparkMergeOnReadTableIncrementalRead.testIncrementalReadsWithCompaction. disabled metadata for now. directly accesses files. TestHoodieIndex. testSimpleTagLocationAndUpdateWithRollback. known issue. https://issues.apache.org/jira/browse/HUDI-2468 testSimpleGlobalIndexTagLocationWhenShouldUpdatePartitionPath. uses test table. disabled metadata. TestHoodieRowCreateHandle.testInstantiationFailure. disabled metadata. not a real issue. TestHoodieSparkMergeOnReadTableRollback.testMultiRollbackWithDeltaAndCompactionCommit. restore fails. bcoz, there is an inflight rollback in dataset timeline. disabling for now. https://issues.apache.org/jira/browse/HUDI-2477 TestHoodieMergeOnReadTable.testLogFileCountsAfterCompaction. uses HoodieSparkWriteableTestTable. disabled metadata for now. TestHoodieCompactor.testWriteStatusContentsAfterCompaction. uses HoodieSparkWriteableTestTable. have disabled metadata. TestHbaseIndex.testEnsureTagLocationUsesCommitTimeline. rolling back 1st commit. known issue. disabling metadata. https://issues.apache.org/jira/browse/HUDI-2468 TestHbaseIndex.testSimpleTagLocationAndUpdateWithRollback. rolling back 1st commit. known issue. disabling metadata. https://issues.apache.org/jira/browse/HUDI-2468 TestCleaner. lot of tests. uses test table. TestHoodieTimelineArchiveLog. lot of tests. uses test table. hudi-client-common: all passed. hudi-flink-client: all passed. hudi-java-client: disabled metadata for java. all ok. hudi-common: all passed. hudi-spark java: Testbootstrap class fully fails. rollback of 1st commit. have disbaled metadata. https://issues.apache.org/jira/browse/HUDI-2477 hudi-spark scala tests: all good. hudi-utilities: one test in deltastreamer. hudi-timelineserver: all good. hudi-sync: hudi-dla-sync: all good. hudi-hive-sync: all good. hudi-spark3: all good. hudi-spark2: all good. hudi-examples: no tests. pending modules. hudi-cli hudi-integ-test > Tests failure follow up when metadat

[GitHub] [hudi] nsivabalan commented on pull request #3455: [HUDI-2297] Estimate available memory size accurately for spillable map.

nsivabalan commented on pull request #3455: URL: https://github.com/apache/hudi/pull/3455#issuecomment-924533840 @rmahindra123 : a gentle reminder to review the PR. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] liujinhui1994 commented on pull request #3614: [HUDI-2370] Supports data encryption

liujinhui1994 commented on pull request #3614: URL: https://github.com/apache/hudi/pull/3614#issuecomment-924533202 I tested and verified last week. After upgrading the parquet version, many unit tests and integration tests failed. I am still looking for a solution. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] hudi-bot edited a comment on pull request #3413: [HUDI-2277] HoodieDeltaStreamer reading ORC files directly using ORCDFSSource

hudi-bot edited a comment on pull request #3413: URL: https://github.com/apache/hudi/pull/3413#issuecomment-893311636 ## CI report: * 7a21d39bce12b04c3663d8966e9923145b2ce234 Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2100) Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2103) * 32223149bbb3d0c23e710fd338de4ed63e5f8be8 Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2310) Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] nsivabalan edited a comment on pull request #3614: [HUDI-2370] Supports data encryption

nsivabalan edited a comment on pull request #3614: URL: https://github.com/apache/hudi/pull/3614#issuecomment-924531159 @liujinhui1994 : how did your testing go. Can you update w/ your findings. @vinothchandar : apart from parquet version upgrade, I also see that we are enabling vectorized reading by default in this patch. Just wanted to remind you just incase we need to watch out for something. Also, should we do parquet upgrade in a separate patch, so that we can do some testing around diff query types, engines etc to certify the upgrade. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] nsivabalan commented on pull request #3614: [HUDI-2370] Supports data encryption

nsivabalan commented on pull request #3614: URL: https://github.com/apache/hudi/pull/3614#issuecomment-924531159 @liujinhui1994 : how did your testing go. @vinothchandar : apart from parquet version upgrade, I also see that we are enabling vectorized reading by default in this patch. Just wanted to remind you just incase we need to watch out for something. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] hudi-bot edited a comment on pull request #3413: [HUDI-2277] HoodieDeltaStreamer reading ORC files directly using ORCDFSSource

hudi-bot edited a comment on pull request #3413: URL: https://github.com/apache/hudi/pull/3413#issuecomment-893311636 ## CI report: * 7a21d39bce12b04c3663d8966e9923145b2ce234 Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2100) Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2103) * 32223149bbb3d0c23e710fd338de4ed63e5f8be8 UNKNOWN Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] hudi-bot edited a comment on pull request #3700: [HUDI-2471] Add support ignoring case when column name matches in merge into

hudi-bot edited a comment on pull request #3700: URL: https://github.com/apache/hudi/pull/3700#issuecomment-924523979 ## CI report: * 98de9c0ec2e814c3c8c20276e6d1457c4eb7243d Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2309) Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] hudi-bot commented on pull request #3700: [HUDI-2471] Add support ignoring case when column name matches in merge into

hudi-bot commented on pull request #3700: URL: https://github.com/apache/hudi/pull/3700#issuecomment-924523979 ## CI report: * 98de9c0ec2e814c3c8c20276e6d1457c4eb7243d UNKNOWN Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[jira] [Commented] (HUDI-2470) use commit_time in the WHERE STATEMENT to optimize the incremental query

[ https://issues.apache.org/jira/browse/HUDI-2470?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel&focusedCommentId=17418375#comment-17418375 ] David_Liang commented on HUDI-2470: --- please assign to me? > use commit_time in the WHERE STATEMENT to optimize the incremental query > - > > Key: HUDI-2470 > URL: https://issues.apache.org/jira/browse/HUDI-2470 > Project: Apache Hudi > Issue Type: Improvement > Components: Incremental Pull, Performance >Reporter: David_Liang >Priority: Major > > In the module of DeltaStreamer, Option of QUERY_TYPE_OPT_KEY and > BEGIN_INSTANTTIME_OPT_KEY is used to tell the DeltaStreamer to query data > after the specific time. > Such as method is not very convenient for user. So if we can implement the > function that User can set BEGIN_INSTANTTIME_OPT_KEY and > BEGIN_INSTANTTIME_OPT_KEY at the sql, which is not only very convinient for > user, also very a elegant implementation. -- This message was sent by Atlassian Jira (v8.3.4#803005)

[jira] [Updated] (HUDI-2471) Add support ignoring case when column name matches in merge into

[ https://issues.apache.org/jira/browse/HUDI-2471?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] ASF GitHub Bot updated HUDI-2471: - Labels: pull-request-available (was: ) > Add support ignoring case when column name matches in merge into > - > > Key: HUDI-2471 > URL: https://issues.apache.org/jira/browse/HUDI-2471 > Project: Apache Hudi > Issue Type: Improvement > Components: Spark Integration >Reporter: 董可伦 >Assignee: 董可伦 >Priority: Major > Labels: pull-request-available > Fix For: 0.10.0 > > -- This message was sent by Atlassian Jira (v8.3.4#803005)

[GitHub] [hudi] dongkelun opened a new pull request #3700: [HUDI-2471] Add support ignoring case when column name matches in merge into

dongkelun opened a new pull request #3700: URL: https://github.com/apache/hudi/pull/3700 ## *Tips* - *Thank you very much for contributing to Apache Hudi.* - *Please review https://hudi.apache.org/contribute/how-to-contribute before opening a pull request.* ## What is the purpose of the pull request *Add support ignoring case when column name matches in merge into* ## Brief change log *(for example:)* - *Add support ignoring case when column name matches in merge into* ## Verify this pull request *(example:)* - *Added unit test in TestMergeIntoTable2* ## Committer checklist - [ ] Has a corresponding JIRA in PR title & commit - [ ] Commit message is descriptive of the change - [ ] CI is green - [ ] Necessary doc changes done or have another open PR - [ ] For large changes, please consider breaking it into sub-tasks under an umbrella JIRA. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] hudi-bot edited a comment on pull request #3590: [HUDI-2285][HUDI-2476] Metadata table synchronous design. Rebased and Squashed from pull/3426

hudi-bot edited a comment on pull request #3590: URL: https://github.com/apache/hudi/pull/3590#issuecomment-912237120 ## CI report: * aefac7ec2f2e40bdf3ad4365ea6aa825803a439d UNKNOWN * 9c0123c0f27f990d009b323bab75b76ceecf3dab Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2307) Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] hudi-bot edited a comment on pull request #3590: [HUDI-2285][HUDI-2476] Metadata table synchronous design. Rebased and Squashed from pull/3426

hudi-bot edited a comment on pull request #3590: URL: https://github.com/apache/hudi/pull/3590#issuecomment-912237120 ## CI report: * aefac7ec2f2e40bdf3ad4365ea6aa825803a439d UNKNOWN * 7793fbdb9b93a129ef606cb2d73ea6e1e9074957 Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2299) * 9c0123c0f27f990d009b323bab75b76ceecf3dab Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2307) Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] rubenssoto commented on issue #3607: [SUPPORT]Presto query hudi data with metadata table enable un-successfully.

rubenssoto commented on issue #3607: URL: https://github.com/apache/hudi/issues/3607#issuecomment-924497696 @nsivabalan Does presto already support Hudi metadata? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] nsivabalan commented on a change in pull request #3646: [HUDI-349]: Added new cleaning policy based on number of hours

nsivabalan commented on a change in pull request #3646:

URL: https://github.com/apache/hudi/pull/3646#discussion_r713508760

##

File path:

hudi-client/hudi-client-common/src/main/java/org/apache/hudi/config/HoodieCompactionConfig.java

##

@@ -512,6 +517,11 @@ public Builder retainCommits(int commitsRetained) {

return this;

}

+public Builder retainNumberOfHours(int numberOfHours) {

Review comment:

can we name the arg same as its usage. cleanerHoursRetained

##

File path:

hudi-common/src/main/java/org/apache/hudi/common/model/HoodieCleaningPolicy.java

##

@@ -22,5 +22,5 @@

* Hoodie cleaning policies.

*/

public enum HoodieCleaningPolicy {

- KEEP_LATEST_FILE_VERSIONS, KEEP_LATEST_COMMITS;

+ KEEP_LATEST_FILE_VERSIONS, KEEP_LATEST_COMMITS, KEEP_LAST_X_HOURS;

Review comment:

KEEP_LATEST_BY_HOURS

##

File path:

hudi-client/hudi-spark-client/src/test/java/org/apache/hudi/table/TestCleaner.java

##

@@ -1240,6 +1244,154 @@ public void testKeepLatestCommits(boolean

simulateFailureRetry, boolean enableIn

assertTrue(testTable.baseFileExists(p0, "05", file3P0C2));

}

+ /**

+ * Test cleaning policy based on number of hours retained policy. This test

case covers the case when files will not be cleaned.

+ */

+ @ParameterizedTest

+ @MethodSource("argumentsForTestKeepLatestCommits")

+ public void testKeepXHoursNoCleaning(boolean simulateFailureRetry, boolean

enableIncrementalClean, boolean enableBootstrapSourceClean) throws Exception {

Review comment:

again, is it possible to reuse existing tests for keepLatestcommits

rather than rewriting entire tests.

##

File path:

hudi-client/hudi-client-common/src/main/java/org/apache/hudi/table/action/clean/CleanPlanner.java

##

@@ -402,9 +478,16 @@ private String

getLatestVersionBeforeCommit(List fileSliceList, Hoodi

public Option getEarliestCommitToRetain() {

Option earliestCommitToRetain = Option.empty();

int commitsRetained = config.getCleanerCommitsRetained();

+int hoursRetained = config.getCleanerHoursRetained();

if (config.getCleanerPolicy() == HoodieCleaningPolicy.KEEP_LATEST_COMMITS

&& commitTimeline.countInstants() > commitsRetained) {

- earliestCommitToRetain =

commitTimeline.nthInstant(commitTimeline.countInstants() - commitsRetained);

+ earliestCommitToRetain =

commitTimeline.nthInstant(commitTimeline.countInstants() - commitsRetained);

//15 instants total, 10 commits to retain, this gives 6th instant in the list

+} else if (config.getCleanerPolicy() ==

HoodieCleaningPolicy.KEEP_LAST_X_HOURS) {

+ Instant instant = Instant.now();

+ ZonedDateTime commitDateTime = ZonedDateTime.ofInstant(instant,

ZoneId.systemDefault());

Review comment:

Do we have any precedence in hudi code base for doing time based

calculations. Can you explore and let me know. Wanna maintain some uniformity

if we have any.

##

File path:

hudi-client/hudi-client-common/src/main/java/org/apache/hudi/config/HoodieCompactionConfig.java

##

@@ -69,6 +69,11 @@

.withDocumentation("Number of commits to retain, without cleaning. This

will be retained for num_of_commits * time_between_commits "

+ "(scheduled). This also directly translates into how much data

retention the table supports for incremental queries.");

+ public static final ConfigProperty CLEANER_HOURS_RETAINED =

ConfigProperty.key("hoodie.cleaner.hours.retained")

+ .defaultValue("5")

Review comment:

lets have 24 may be. 5 hours is very aggressive. 1 day seems reasonable.

##

File path:

hudi-client/hudi-client-common/src/main/java/org/apache/hudi/table/action/clean/CleanPlanner.java

##

@@ -330,6 +336,74 @@ public CleanPlanner(HoodieEngineContext context,

HoodieTable hoodieT

}

return deletePaths;

}

+

+ /**

+ * This method finds the files to be cleaned based on the number of hours.

If {@code config.getCleanerHoursRetained()} is set to 5,

+ * all the files with commit time earlier than 5 hours will be removed. Also

the latest file for any file group is retained.

+ * This policy gives much more flexibility to users for retaining data for

running incremental queries as compared to

+ * KEEP_LATEST_COMMITS cleaning policy. The default number of hours is 5.

+ * @param partitionPath partition path to check

+ * @return list of files to clean

+ */

+ private List getFilesToCleanKeepingLatestHours(String

partitionPath) {

Review comment:

can't we re-use getFilesToCleanKeepingLatestCommits(). all we need to do

is to move most of these to a private method and reuse across both.

for getFilesToCleanKeepingLatestCommits(), you can pass in the config value

for N commits to retain. where as for getFilesToCleanKeepingLatestHours, we can

determine how many commits can be retained and then pass in the N value.

lets try to re-use code as

[GitHub] [hudi] nsivabalan commented on pull request #3630: [HUDI-313] NPE when select count start from a realtime table

nsivabalan commented on pull request #3630: URL: https://github.com/apache/hudi/pull/3630#issuecomment-924490394 @codope : can you please review this as you are working w/ realtime input format recently. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] nsivabalan commented on pull request #3648: [HUDI-2413] fix Sql source's checkpoint issue

nsivabalan commented on pull request #3648: URL: https://github.com/apache/hudi/pull/3648#issuecomment-924487255 @codope : can you review this as well. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[hudi] branch master updated (5a94043 -> 55df8f6)

This is an automated email from the ASF dual-hosted git repository. sivabalan pushed a change to branch master in repository https://gitbox.apache.org/repos/asf/hudi.git. from 5a94043 [HUDI-2343]Fix the exception for mergeInto when the primaryKey and preCombineField of source table and target table differ in case only (#3517) add 55df8f6 [MINOR] Fix typo."funcitons" corrected to "functions" (#3681) No new revisions were added by this update. Summary of changes: .../main/java/org/apache/hudi/hadoop/HoodieColumnProjectionUtils.java | 2 +- 1 file changed, 1 insertion(+), 1 deletion(-)

[GitHub] [hudi] nsivabalan merged pull request #3681: [MINOR]Fix typo."funcitons" corrected to "functions"

nsivabalan merged pull request #3681: URL: https://github.com/apache/hudi/pull/3681 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] nsivabalan commented on pull request #3691: [HUDI-2455] Adding spark_avro dependency to hudi-integ-test

nsivabalan commented on pull request #3691: URL: https://github.com/apache/hudi/pull/3691#issuecomment-924486497 ``` mvn package -DskipTests ``` -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] nsivabalan commented on pull request #3691: [HUDI-2455] Adding spark_avro dependency to hudi-integ-test

nsivabalan commented on pull request #3691: URL: https://github.com/apache/hudi/pull/3691#issuecomment-924486402 yeah, I could build successfully w/ master. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] nsivabalan removed a comment on pull request #3590: [HUDI-2285][HUDI-2476] Metadata table synchronous design. Rebased and Squashed from pull/3426

nsivabalan removed a comment on pull request #3590: URL: https://github.com/apache/hudi/pull/3590#issuecomment-92856 So, given this approach, we could also support async compaction and clustering in metadata table. Here is what we could do. all things stay same wrt data table. i.e. take locks and do conflict resolution for all regular writes, commit and release locks. take locks and do conflict resolution while scheduling compaction/clustering and release locks. take locks and commit compaction and clustering. when it comes to metadata table. We will enable multi-writer mode in metadata table. (As of this patch, we have only single writer mode for metadata table) all writes to metadata table happens within data table lock. And so wrt new delta commits to metadata table, it is always going to be a single writer implicitly. after committing to metadata table, we can just schedule compaction and cleaning if something is available. This internally will take the lock for metadata table and check for any conflicts, but since there are no other writers, we should be good. and once the commit and scheduling completes, we return to data table, make the commit and release the lockk. Later when async compaction for metadata table is about to get committed, we take metadata table lock and make the commit. this will ensure this may not collide with regular delta commits happening to metadata table. We may not invoke any conflict resolution here similar to how it is done in data table. But one major issue we need to fix here is the ConflictResolutionStrategy: as of now, there are any pending or complete compactions after current commit of interest, writes will fail. since all of them are going to operate on the same partition with one file group, there will definitely be conflict. So, just for metadata, we might want to consider if we can come up with a special conflict resolution strategy where we consider only new writes as conflicts and not any scheduled compaction). I need to understand the implications of this in more finer detail. But just putting it out here. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] hudi-bot edited a comment on pull request #3590: [HUDI-2285][HUDI-2468][HUDI-2476] Metadata table synchronous design. Rebased and Squashed from pull/3426

hudi-bot edited a comment on pull request #3590: URL: https://github.com/apache/hudi/pull/3590#issuecomment-912237120 ## CI report: * aefac7ec2f2e40bdf3ad4365ea6aa825803a439d UNKNOWN * 7793fbdb9b93a129ef606cb2d73ea6e1e9074957 Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2299) * 9c0123c0f27f990d009b323bab75b76ceecf3dab UNKNOWN Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[jira] [Assigned] (HUDI-2472) Tests failure follow up when metadata is enabled by default

[ https://issues.apache.org/jira/browse/HUDI-2472?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] sivabalan narayanan reassigned HUDI-2472: - Assignee: sivabalan narayanan > Tests failure follow up when metadata is enabled by default > --- > > Key: HUDI-2472 > URL: https://issues.apache.org/jira/browse/HUDI-2472 > Project: Apache Hudi > Issue Type: Sub-task > Components: Testing >Reporter: sivabalan narayanan >Assignee: sivabalan narayanan >Priority: Major > > We plan to enable metadata by default. but there are some tests that fail > with this. Dumping details on tests for which metadata is disabled for now. > We need to fix them one by one. > > hudi-spark-client: // this is the module that has lot of tests that could > potentially have issues. > TestHoodieSparkMergeOnReadTableIncrementalRead.testIncrementalReadsWithCompaction. > disabled metadata for now. directly accesses files. > TestHoodieIndex. > testSimpleTagLocationAndUpdateWithRollback. known issue. > https://issues.apache.org/jira/browse/HUDI-2468 > testSimpleGlobalIndexTagLocationWhenShouldUpdatePartitionPath. uses test > table. disabled metadata. > TestHoodieRowCreateHandle.testInstantiationFailure. disabled metadata. not a > real issue. > > TestHoodieSparkMergeOnReadTableRollback.testMultiRollbackWithDeltaAndCompactionCommit. > restore fails. bcoz, there is an inflight rollback in dataset timeline. > disabling for now. https://issues.apache.org/jira/browse/HUDI-2477 > TestHoodieMergeOnReadTable.testLogFileCountsAfterCompaction. uses > HoodieSparkWriteableTestTable. disabled metadata for now. > TestHoodieCompactor.testWriteStatusContentsAfterCompaction. uses > HoodieSparkWriteableTestTable. have disabled metadata. > TestHbaseIndex.testEnsureTagLocationUsesCommitTimeline. rolling back 1st > commit. known issue. disabling metadata. > https://issues.apache.org/jira/browse/HUDI-2468 > TestHbaseIndex.testSimpleTagLocationAndUpdateWithRollback. rolling back 1st > commit. known issue. disabling metadata. > https://issues.apache.org/jira/browse/HUDI-2468 > TestCleaner. lot of tests. uses test table. > TestHoodieTimelineArchiveLog. lot of tests. uses test table. > hudi-client-common: all passed. > hudi-flink-client: all passed. > hudi-java-client: disabled metadata for java. all ok. > hudi-common: all passed. > hudi-spark java: Testbootstrap class fully fails. rollback of 1st commit. > have disbaled metadata. https://issues.apache.org/jira/browse/HUDI-2477 > hudi-spark scala tests: all good. > hudi-utilities: one test in deltastreamer. > hudi-timelineserver: all good. > hudi-sync: > hudi-dla-sync: all good. > hudi-hive-sync: all good. > hudi-spark3: all good. > hudi-spark2: all good. > hudi-examples: no tests. > > pending modules. > hudi-cli > hudi-integ-test > > > > -- This message was sent by Atlassian Jira (v8.3.4#803005)

[jira] [Updated] (HUDI-2472) Tests failure follow up when metadata is enabled by default