[jira] [Resolved] (HUDI-1820) Remove legacy code for Flink writer

[ https://issues.apache.org/jira/browse/HUDI-1820?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Danny Chen resolved HUDI-1820.
Resolution: Invalid

The legacy code has already been removed.

> Remove legacy code for Flink writer
>
> Key: HUDI-1820
> URL: https://issues.apache.org/jira/browse/HUDI-1820
> Project: Apache Hudi
> Issue Type: Task
> Components: Flink Integration
> Reporter: Danny Chen
> Assignee: Danny Chen
> Priority: Major
> Fix For: 0.10.0
>
> Remove legacy code to avoid confusion.

--
This message was sent by Atlassian Jira
(v8.3.4#803005)

[jira] [Assigned] (HUDI-2559) Ensure unique timestamps are generated for commit times with concurrent writers

[ https://issues.apache.org/jira/browse/HUDI-2559?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

sivabalan narayanan reassigned HUDI-2559:
Assignee: sivabalan narayanan

> Ensure unique timestamps are generated for commit times with concurrent writers
>
> Key: HUDI-2559
> URL: https://issues.apache.org/jira/browse/HUDI-2559
> Project: Apache Hudi
> Issue Type: Improvement
> Reporter: sivabalan narayanan
> Assignee: sivabalan narayanan
> Priority: Major
>
> Ensure unique timestamps are generated for commit times with concurrent writers.
> This is the piece of code in HoodieActiveTimeline that creates a new commit time:
> {code:java}
> public static String createNewInstantTime(long milliseconds) {
>   // lastInstantTime is process-local state, so this loop only prevents duplicates within one JVM
>   return lastInstantTime.updateAndGet((oldVal) -> {
>     String newCommitTime;
>     do {
>       newCommitTime = HoodieActiveTimeline.COMMIT_FORMATTER.format(new Date(System.currentTimeMillis() + milliseconds));
>     } while (HoodieTimeline.compareTimestamps(newCommitTime, LESSER_THAN_OR_EQUALS, oldVal));
>     return newCommitTime;
>   });
> }
> {code}
> There is a chance that a DeltaStreamer and a concurrent Spark datasource writer get the same timestamp, in which case one of them fails.
> Related issues and GitHub/Jira tickets:
> [https://github.com/apache/hudi/issues/3782]
> https://issues.apache.org/jira/browse/HUDI-2549


[jira] [Created] (HUDI-2559) Ensure unique timestamps are generated for commit times with concurrent writers

sivabalan narayanan created HUDI-2559:

Summary: Ensure unique timestamps are generated for commit times with concurrent writers
Key: HUDI-2559
URL: https://issues.apache.org/jira/browse/HUDI-2559
Project: Apache Hudi
Issue Type: Improvement
Reporter: sivabalan narayanan

Ensure unique timestamps are generated for commit times with concurrent writers.
This is the piece of code in HoodieActiveTimeline that creates a new commit time:

{code:java}
public static String createNewInstantTime(long milliseconds) {
  // lastInstantTime is process-local state, so this loop only prevents duplicates within one JVM
  return lastInstantTime.updateAndGet((oldVal) -> {
    String newCommitTime;
    do {
      newCommitTime = HoodieActiveTimeline.COMMIT_FORMATTER.format(new Date(System.currentTimeMillis() + milliseconds));
    } while (HoodieTimeline.compareTimestamps(newCommitTime, LESSER_THAN_OR_EQUALS, oldVal));
    return newCommitTime;
  });
}
{code}

There is a chance that a DeltaStreamer and a concurrent Spark datasource writer get the same timestamp, in which case one of them fails.

Related issues and GitHub/Jira tickets:
[https://github.com/apache/hudi/issues/3782]
https://issues.apache.org/jira/browse/HUDI-2549
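The collision described above follows from where the uniqueness check lives: `lastInstantTime` is state inside one process, so two writer processes whose clocks agree can format the same reading into the same instant. The sketch below reproduces that in isolation. It is not Hudi's code; it assumes a second-granularity `yyyyMMddHHmmss` format in UTC, an injectable stepping clock in place of `System.currentTimeMillis()`, a plain `String.compareTo` in place of `HoodieTimeline.compareTimestamps`, and the hypothetical names `InstantTimeSketch`, `Generator` and `steppingClock`.

```java
import java.time.Instant;
import java.time.ZoneOffset;
import java.time.format.DateTimeFormatter;
import java.util.concurrent.atomic.AtomicLong;
import java.util.concurrent.atomic.AtomicReference;
import java.util.function.LongSupplier;

public class InstantTimeSketch {
  // Assumed second-granularity instant format; UTC keeps the output deterministic.
  static final DateTimeFormatter FORMATTER =
      DateTimeFormatter.ofPattern("yyyyMMddHHmmss").withZone(ZoneOffset.UTC);

  // One Generator stands in for one writer process (JVM).
  static final class Generator {
    // Process-local state: it only prevents duplicates among callers sharing this object.
    private final AtomicReference<String> lastInstantTime = new AtomicReference<>("");
    private final LongSupplier clock;

    Generator(LongSupplier clock) {
      this.clock = clock;
    }

    String createNewInstantTime() {
      return lastInstantTime.updateAndGet(oldVal -> {
        String newCommitTime;
        do {
          newCommitTime = FORMATTER.format(Instant.ofEpochMilli(clock.getAsLong()));
        } while (newCommitTime.compareTo(oldVal) <= 0); // fixed-width digits: string order == time order
        return newCommitTime;
      });
    }
  }

  // Simulated clock that advances by stepMillis on every read.
  static LongSupplier steppingClock(long startMillis, long stepMillis) {
    AtomicLong now = new AtomicLong(startMillis);
    return () -> now.getAndAdd(stepMillis);
  }

  public static void main(String[] args) {
    // Two independent "processes" whose clocks agree.
    Generator deltaStreamer = new Generator(steppingClock(0L, 1000L));
    Generator sparkWriter = new Generator(steppingClock(0L, 1000L));
    String a = deltaStreamer.createNewInstantTime();
    String b = sparkWriter.createNewInstantTime();
    System.out.println(a + " / " + b + " collide: " + a.equals(b));
  }
}
```

Running `main` prints the same instant for both writers, while repeated calls on a single `Generator` busy-wait until the formatted time moves past the last issued value. A finer-grained format would narrow the collision window but not close it, because the check never leaves the process.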


[jira] [Updated] (HUDI-2558) Clustering w/ sort columns with null values fails

[ https://issues.apache.org/jira/browse/HUDI-2558?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

sivabalan narayanan updated HUDI-2558:
Fix Version/s: 0.10.0

> Clustering w/ sort columns with null values fails
>
> Key: HUDI-2558
> URL: https://issues.apache.org/jira/browse/HUDI-2558
> Project: Apache Hudi
> Issue Type: Improvement
> Components: Writer Core
> Reporter: sivabalan narayanan
> Assignee: sivabalan narayanan
> Priority: Major
> Fix For: 0.10.0
>
> https://github.com/apache/hudi/issues/3766

[jira] [Assigned] (HUDI-2558) Clustering w/ sort columns with null values fails

[ https://issues.apache.org/jira/browse/HUDI-2558?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

sivabalan narayanan reassigned HUDI-2558:
Assignee: sivabalan narayanan

> Clustering w/ sort columns with null values fails
>
> Key: HUDI-2558
> URL: https://issues.apache.org/jira/browse/HUDI-2558
> Project: Apache Hudi
> Issue Type: Improvement
> Components: Writer Core
> Reporter: sivabalan narayanan
> Assignee: sivabalan narayanan
> Priority: Major
>
> https://github.com/apache/hudi/issues/3766

[jira] [Created] (HUDI-2558) Clustering w/ sort columns with null values fails

sivabalan narayanan created HUDI-2558:

Summary: Clustering w/ sort columns with null values fails
Key: HUDI-2558
URL: https://issues.apache.org/jira/browse/HUDI-2558
Project: Apache Hudi
Issue Type: Improvement
Components: Writer Core
Reporter: sivabalan narayanan

https://github.com/apache/hudi/issues/3766

[jira] [Updated] (HUDI-2558) Clustering w/ sort columns with null values fails

[ https://issues.apache.org/jira/browse/HUDI-2558?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

sivabalan narayanan updated HUDI-2558:
Labels: sev:high user-support-issues (was: )

> Clustering w/ sort columns with null values fails
>
> Key: HUDI-2558
> URL: https://issues.apache.org/jira/browse/HUDI-2558
> Project: Apache Hudi
> Issue Type: Improvement
> Components: Writer Core
> Reporter: sivabalan narayanan
> Assignee: sivabalan narayanan
> Priority: Major
> Labels: sev:high, user-support-issues
> Fix For: 0.10.0
>
> https://github.com/apache/hudi/issues/3766

[GitHub] [hudi] hudi-bot edited a comment on pull request #3794: [HUDI-2553] Metadata table compaction trigger max delta commits default config (re-enable)

hudi-bot edited a comment on pull request #3794:
URL: https://github.com/apache/hudi/pull/3794#issuecomment-942793927

## CI report:

* 31852dac3234f80b094392197a34ac5704f2e784 Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2631) Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2653)

Bot commands
@hudi-bot supports the following commands:

- `@hudi-bot run travis` re-run the last Travis build
- `@hudi-bot run azure` re-run the last Azure build

--
This is an automated message from the Apache Git Service.
To respond to the message, please log on to GitHub and use the URL above to go to the specific comment.
To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org
For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] manojpec commented on pull request #3794: [HUDI-2553] Metadata table compaction trigger max delta commits default config (re-enable)

manojpec commented on pull request #3794:
URL: https://github.com/apache/hudi/pull/3794#issuecomment-943505633

@hudi-bot run azure

[jira] [Updated] (HUDI-2557) Shade javax.servlet for flink bundle jar

[ https://issues.apache.org/jira/browse/HUDI-2557?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

ASF GitHub Bot updated HUDI-2557:
Labels: pull-request-available (was: )

> Shade javax.servlet for flink bundle jar
>
> Key: HUDI-2557
> URL: https://issues.apache.org/jira/browse/HUDI-2557
> Project: Apache Hudi
> Issue Type: Test
> Components: Flink Integration
> Reporter: Danny Chen
> Assignee: Danny Chen
> Priority: Major
> Labels: pull-request-available
> Fix For: 0.10.0

[GitHub] [hudi] yiduwangkai commented on pull request #3798: [HUDI-2557] Shade javax.servlet for flink bundle jar

yiduwangkai commented on pull request #3798:
URL: https://github.com/apache/hudi/pull/3798#issuecomment-943494883

@danny0405 Sorry, I don't know how to avoid submitting code that someone else has already submitted.

[GitHub] [hudi] hudi-bot edited a comment on pull request #3799: [HUDI-2491] hoodie.datasource.hive_sync.mode=hms mode is supported in…

hudi-bot edited a comment on pull request #3799:
URL: https://github.com/apache/hudi/pull/3799#issuecomment-943176004

## CI report:

* f44907a941b5b61e642abb5783f70fe8830fe6a6 Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2644)
* a8b2f1a63fc3cb1f4fe99495070d1d160bba4031 Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2652)

[GitHub] [hudi] hudi-bot edited a comment on pull request #3799: [HUDI-2491] hoodie.datasource.hive_sync.mode=hms mode is supported in…

hudi-bot edited a comment on pull request #3799:
URL: https://github.com/apache/hudi/pull/3799#issuecomment-943176004

## CI report:

* f44907a941b5b61e642abb5783f70fe8830fe6a6 Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2644)
* a8b2f1a63fc3cb1f4fe99495070d1d160bba4031 UNKNOWN

[GitHub] [hudi] leesf commented on a change in pull request #3754: [HUDI-2482] support 'drop partition' sql

leesf commented on a change in pull request #3754:
URL: https://github.com/apache/hudi/pull/3754#discussion_r729045601

##
File path: hudi-spark-datasource/hudi-spark/src/main/scala/org/apache/spark/sql/hudi/HoodieSqlUtils.scala
##
@@ -92,7 +93,45 @@ object HoodieSqlUtils extends SparkAdapterSupport {
       properties.putAll((spark.sessionState.conf.getAllConfs ++ table.storage.properties).asJava)
       HoodieMetadataConfig.newBuilder.fromProperties(properties).build()
     }
-    FSUtils.getAllPartitionPaths(sparkEngine, metadataConfig, HoodieSqlUtils.getTableLocation(table, spark)).asScala
+    FSUtils.getAllPartitionPaths(sparkEngine, metadataConfig, getTableLocation(table, spark)).asScala
+  }
+
+  /**
+   * This method is used to compatible with the old non-hive-styled partition table.
+   * By default we enable the "hoodie.datasource.write.hive_style_partitioning"
+   * when writing data to hudi table by spark sql by default.
+   * If the exist table is a non-hive-styled partitioned table, we should
+   * disable the "hoodie.datasource.write.hive_style_partitioning" when
+   * merge or update the table. Or else, we will get an incorrect merge result
+   * as the partition path mismatch.
+   */
+  def isHiveStylePartitionPartitioning(partitionPaths: Seq[String], table: CatalogTable): Boolean = {

Review comment:
rename to isHiveStyledPartitioning


[GitHub] [hudi] leesf commented on a change in pull request #3754: [HUDI-2482] support 'drop partition' sql

leesf commented on a change in pull request #3754:
URL: https://github.com/apache/hudi/pull/3754#discussion_r729045075

##
File path: hudi-spark-datasource/hudi-spark/src/main/scala/org/apache/spark/sql/hudi/HoodieSqlUtils.scala
##
@@ -92,7 +93,45 @@ object HoodieSqlUtils extends SparkAdapterSupport {
       properties.putAll((spark.sessionState.conf.getAllConfs ++ table.storage.properties).asJava)
       HoodieMetadataConfig.newBuilder.fromProperties(properties).build()
     }
-    FSUtils.getAllPartitionPaths(sparkEngine, metadataConfig, HoodieSqlUtils.getTableLocation(table, spark)).asScala
+    FSUtils.getAllPartitionPaths(sparkEngine, metadataConfig, getTableLocation(table, spark)).asScala
+  }
+
+  /**
+   * This method is used to compatible with the old non-hive-styled partition table.
+   * By default we enable the "hoodie.datasource.write.hive_style_partitioning"
+   * when writing data to hudi table by spark sql by default.
+   * If the exist table is a non-hive-styled partitioned table, we should
+   * disable the "hoodie.datasource.write.hive_style_partitioning" when
+   * merge or update the table. Or else, we will get an incorrect merge result
+   * as the partition path mismatch.
+   */
+  def isHiveStylePartitionPartitioning(partitionPaths: Seq[String], table: CatalogTable): Boolean = {
+    if (table.partitionColumnNames.nonEmpty) {
+      val isHiveStylePartitionPath = (path: String) => {
+        val fragments = path.split("/")
+        if (fragments.size != table.partitionColumnNames.size) {
+          false
+        } else {
+          fragments.zip(table.partitionColumnNames).forall {
+            case (pathFragment, partitionColumn) => pathFragment.startsWith(s"$partitionColumn=")
+          }
+        }
+      }
+      partitionPaths.forall(isHiveStylePartitionPath)
+    } else {
+      true

Review comment:
Does this mean that if it is not a partitioned table, we treat it as a hive-style partitioned table?
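The check under review, and the edge case the question is about, can be made concrete with a Java sketch of the same logic: every partition path must split on `/` into exactly as many fragments as there are partition columns, and each fragment must start with `<column>=` in column order. The class and method names are hypothetical, and the sketch mirrors the Scala in the diff rather than any merged Hudi code; in particular it keeps the diff's choice of returning `true` when the table has no partition columns.

```java
import java.util.List;

public class HiveStylePartitioningSketch {
  // Mirrors the diff: true only if every path looks like "col1=v1/col2=v2/..."
  // with fragments in the same order as the partition columns.
  static boolean isHiveStylePartitioning(List<String> partitionPaths, List<String> partitionColumns) {
    if (partitionColumns.isEmpty()) {
      // Non-partitioned table: the diff treats this as hive-style (the point raised in review).
      return true;
    }
    for (String path : partitionPaths) {
      String[] fragments = path.split("/");
      if (fragments.length != partitionColumns.size()) {
        return false;
      }
      for (int i = 0; i < fragments.length; i++) {
        if (!fragments[i].startsWith(partitionColumns.get(i) + "=")) {
          return false;
        }
      }
    }
    // Like Scala's forall, an empty list of paths counts as hive-style.
    return true;
  }

  public static void main(String[] args) {
    List<String> cols = List.of("year", "month");
    System.out.println(isHiveStylePartitioning(List.of("year=2021/month=10"), cols)); // true
    System.out.println(isHiveStylePartitioning(List.of("2021/10"), cols));            // false
    System.out.println(isHiveStylePartitioning(List.of(), List.of()));                // true
  }
}
```

The last call is the case the reviewer asks about: with no partition columns the method answers `true`, so the caller would keep hive-style partitioning enabled for a non-partitioned table.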


[GitHub] [hudi] hudi-bot edited a comment on pull request #3802: [HUDI-1500] Support replace commit in DeltaSync with commit metadata preserved

hudi-bot edited a comment on pull request #3802:
URL: https://github.com/apache/hudi/pull/3802#issuecomment-943342747

## CI report:

* a6459139223bd70e665424b1ae2b1b9a8f08b5c0 Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2651)

[GitHub] [hudi] codope commented on a change in pull request #3799: [HUDI-2491] hoodie.datasource.hive_sync.mode=hms mode is supported in…

codope commented on a change in pull request #3799:
URL: https://github.com/apache/hudi/pull/3799#discussion_r728979206

##
File path: hudi-utilities/src/main/java/org/apache/hudi/utilities/deltastreamer/BootstrapExecutor.java
##
@@ -159,10 +159,14 @@ public void execute() throws IOException {
    * Sync to Hive.
    */
   private void syncHive() {
-    if (cfg.enableHiveSync) {
+    if (cfg.enableHiveSync || cfg.enableHiveSync) {

Review comment:
Redundant condition. Did you mean to add some other condition?

##
File path: hudi-sync/hudi-hive-sync/src/main/java/org/apache/hudi/hive/HiveSyncConfig.java
##
@@ -49,6 +49,9 @@
   @Parameter(names = {"--jdbc-url"}, description = "Hive jdbc connect url")
   public String jdbcUrl;
+  @Parameter(names = {"--metastore-uris"}, description = "Hive metastore uris")
+  public String metastoreUris;

Review comment:
This should be used somewhere, right? I mean in HoodieHiveClient or HiveSyncTool. I don't see it being used anywhere in the `hudi-hive-sync` module.

##
File path: hudi-utilities/src/main/java/org/apache/hudi/utilities/deltastreamer/DeltaSync.java
##
@@ -618,9 +618,10 @@ private void syncMeta(HoodieDeltaStreamerMetrics metrics) {
   public void syncHive() {
     HiveSyncConfig hiveSyncConfig = DataSourceUtils.buildHiveSyncConfig(props, cfg.targetBasePath, cfg.baseFileFormat);
-    LOG.info("Syncing target hoodie table with hive table(" + hiveSyncConfig.tableName + "). Hive metastore URL :"
-        + hiveSyncConfig.jdbcUrl + ", basePath :" + cfg.targetBasePath);
     HiveConf hiveConf = new HiveConf(conf, HiveConf.class);
+    if (!DataSourceWriteOptions.METASTORE_URIS().defaultValue().equals(hiveSyncConfig.metastoreUris)) {

Review comment:
Why do we need to check this? Why not simply set whatever the user has passed, irrespective of the default value?
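The reviewer's alternative can be sketched as: apply the user-supplied metastore URIs whenever a non-blank value is present, without comparing it to the option's default. The sketch uses a plain `Map` as a stand-in for `HiveConf` so it runs without Hive on the classpath; the helper name `applyMetastoreUris` and the example host are hypothetical, while `hive.metastore.uris` is the standard Hive property for metastore endpoints.

```java
import java.util.HashMap;
import java.util.Map;

public class MetastoreUrisSketch {
  // Standard Hive property that lists metastore Thrift endpoints.
  static final String METASTORE_URIS_KEY = "hive.metastore.uris";

  // Sets the URIs whenever the user passed a non-blank value, without checking
  // whether it happens to equal some default. A blank value leaves the conf unchanged.
  static void applyMetastoreUris(Map<String, String> hiveConf, String metastoreUris) {
    if (metastoreUris != null && !metastoreUris.trim().isEmpty()) {
      hiveConf.put(METASTORE_URIS_KEY, metastoreUris.trim());
    }
  }

  public static void main(String[] args) {
    Map<String, String> conf = new HashMap<>();
    applyMetastoreUris(conf, "thrift://metastore-host:9083"); // hypothetical endpoint
    System.out.println(conf.get(METASTORE_URIS_KEY));
  }
}
```

Whether a blank value should clear an existing setting or leave it alone is a design choice; this sketch leaves it alone.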


[GitHub] [hudi] hudi-bot edited a comment on pull request #3802: [HUDI-1500] Support replace commit in DeltaSync with commit metadata preserved

hudi-bot edited a comment on pull request #3802:
URL: https://github.com/apache/hudi/pull/3802#issuecomment-943342747

## CI report:

* a6459139223bd70e665424b1ae2b1b9a8f08b5c0 Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2651)

[GitHub] [hudi] hudi-bot commented on pull request #3802: [HUDI-1500] Support replace commit in DeltaSync with commit metadata preserved

hudi-bot commented on pull request #3802:
URL: https://github.com/apache/hudi/pull/3802#issuecomment-943342747

## CI report:

* a6459139223bd70e665424b1ae2b1b9a8f08b5c0 UNKNOWN

[jira] [Commented] (HUDI-2549) Exceptions when using second writer into Hudi table managed by DeltaStreamer

[ https://issues.apache.org/jira/browse/HUDI-2549?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17428798#comment-17428798 ]

Dave Hagman commented on HUDI-2549:

OK, so you only ran one iteration of each (one commit from each writer)? This issue only appears for me after multiple commits from each writer, which better matches a real-world use case (since DeltaStreamer usually runs continuously).

> Exceptions when using second writer into Hudi table managed by DeltaStreamer
>
> Key: HUDI-2549
> URL: https://issues.apache.org/jira/browse/HUDI-2549
> Project: Apache Hudi
> Issue Type: Bug
> Components: DeltaStreamer, Spark Integration, Writer Core
> Reporter: Dave Hagman
> Assignee: Dave Hagman
> Priority: Critical
> Labels: multi-writer, sev:critical
> Fix For: 0.10.0
>
> When running the DeltaStreamer along with a second Spark datasource writer (with [ZK-based OCC enabled|https://hudi.apache.org/docs/concurrency_control#enabling-multi-writing]), we receive the following exception, which halts the Spark datasource writer. It occurs after warnings about timeline inconsistencies:

>
> {code:java}
> 21/10/07 17:10:05 INFO TransactionManager: Transaction ending with transaction owner Option{val=[==>20211007170717__commit__INFLIGHT]}
> 21/10/07 17:10:05 INFO ZookeeperBasedLockProvider: RELEASING lock atZkBasePath = /events/test/mwc/v1, lock key = events_mwc_test_v1
> 21/10/07 17:10:05 INFO ZookeeperBasedLockProvider: RELEASED lock atZkBasePath = /events/test/mwc/v1, lock key = events_mwc_test_v1
> 21/10/07 17:10:05 INFO TransactionManager: Transaction ended
> Exception in thread "main" java.lang.IllegalArgumentException
>   at org.apache.hudi.common.util.ValidationUtils.checkArgument(ValidationUtils.java:31)
>   at org.apache.hudi.common.table.timeline.HoodieActiveTimeline.transitionState(HoodieActiveTimeline.java:414)
>   at org.apache.hudi.common.table.timeline.HoodieActiveTimeline.transitionState(HoodieActiveTimeline.java:395)
>   at org.apache.hudi.common.table.timeline.HoodieActiveTimeline.saveAsComplete(HoodieActiveTimeline.java:153)
>   at org.apache.hudi.client.AbstractHoodieWriteClient.commit(AbstractHoodieWriteClient.java:218)
>   at org.apache.hudi.client.AbstractHoodieWriteClient.commitStats(AbstractHoodieWriteClient.java:190)
>   at org.apache.hudi.client.SparkRDDWriteClient.commit(SparkRDDWriteClient.java:124)
>   at org.apache.hudi.HoodieSparkSqlWriter$.commitAndPerformPostOperations(HoodieSparkSqlWriter.scala:617)
>   at org.apache.hudi.HoodieSparkSqlWriter$.write(HoodieSparkSqlWriter.scala:274)
>   at org.apache.hudi.DefaultSource.createRelation(DefaultSource.scala:164)
>   at org.apache.spark.sql.execution.datasources.SaveIntoDataSourceCommand.run(SaveIntoDataSourceCommand.scala:46)
>   at org.apache.spark.sql.execution.command.ExecutedCommandExec.sideEffectResult$lzycompute(commands.scala:70)
>   at org.apache.spark.sql.execution.command.ExecutedCommandExec.sideEffectResult(commands.scala:68)
>   at org.apache.spark.sql.execution.command.ExecutedCommandExec.doExecute(commands.scala:90)
>   at org.apache.spark.sql.execution.SparkPlan.$anonfun$execute$1(SparkPlan.scala:185)
>   at org.apache.spark.sql.execution.SparkPlan.$anonfun$executeQuery$1(SparkPlan.scala:223)
>   at org.apache.spark.rdd.RDDOperationScope$.withScope(RDDOperationScope.scala:151)
>   at org.apache.spark.sql.execution.SparkPlan.executeQuery(SparkPlan.scala:220)
>   at org.apache.spark.sql.execution.SparkPlan.execute(SparkPlan.scala:181)
>   at org.apache.spark.sql.execution.QueryExecution.toRdd$lzycompute(QueryExecution.scala:134)
>   at org.apache.spark.sql.execution.QueryExecution.toRdd(QueryExecution.scala:133)
>   at org.apache.spark.sql.DataFrameWriter.$anonfun$runCommand$1(DataFrameWriter.scala:989)
>   at org.apache.spark.sql.catalyst.QueryPlanningTracker$.withTracker(QueryPlanningTracker.scala:107)
>   at org.apache.spark.sql.execution.SQLExecution$.withTracker(SQLExecution.scala:232)
>   at org.apache.spark.sql.execution.SQLExecution$.executeQuery$1(SQLExecution.scala:110)
>   at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withNewExecutionId$6(SQLExecution.scala:135)
>   at org.apache.spark.sql.catalyst.QueryPlanningTracker$.withTracker(QueryPlanningTracker.scala:107)
>   at org.apache.spark.sql.execution.SQLExecution$.withTracker(SQLExecution.scala:232)
>   at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withNewExecutionId$5(SQLExecution.scala:135)
>

[jira] [Updated] (HUDI-1500) Support incrementally reading clustering commit via Spark Datasource/DeltaStreamer

[ https://issues.apache.org/jira/browse/HUDI-1500?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

ASF GitHub Bot updated HUDI-1500:
Labels: pull-request-available (was: )

> Support incrementally reading clustering commit via Spark Datasource/DeltaStreamer
>
> Key: HUDI-1500
> URL: https://issues.apache.org/jira/browse/HUDI-1500
> Project: Apache Hudi
> Issue Type: Sub-task
> Components: DeltaStreamer, Spark Integration
> Reporter: liwei
> Assignee: Sagar Sumit
> Priority: Blocker
> Labels: pull-request-available
> Fix For: 0.10.0
>
> Currently, DeltaSync.readFromSource() cannot read the last instant when it is a replace commit, such as one created by clustering.

[GitHub] [hudi] codope opened a new pull request #3802: [HUDI-1500] Support replace commit in DeltaSync with commit metadata preserved

codope opened a new pull request #3802:
URL: https://github.com/apache/hudi/pull/3802

## What is the purpose of the pull request

This PR fixes [HUDI-1500](https://issues.apache.org/jira/browse/HUDI-1500) for deltastreamer. For Spark datasource, it was fixed by #3139.

## Brief change log

* Enable commit metadata preservation by default.
* Remove the filter of replace commits in DeltaSync.

## Verify this pull request

This pull request is already covered by existing tests.

## Committer checklist

- [ ] Has a corresponding JIRA in PR title & commit
- [ ] Commit message is descriptive of the change
- [ ] CI is green
- [ ] Necessary doc changes done or have another open PR
- [ ] For large changes, please consider breaking it into sub-tasks under an umbrella JIRA.
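The effect of the second change-log item can be illustrated with a toy timeline: if completed `replacecommit` instants (the action written by clustering) are filtered out when looking for the latest instant, a clustering commit that happens to be the newest instant is simply skipped. The `TimelineInstant` class and `latestConsideredInstant` method are hypothetical stand-ins, not DeltaSync's API; only the action names `commit` and `replacecommit` come from Hudi's timeline vocabulary, and the timeline is assumed to be sorted by timestamp.

```java
import java.util.List;
import java.util.Optional;

public class ReplaceCommitFilterSketch {
  // Hypothetical, minimal view of a completed instant on the timeline.
  static final class TimelineInstant {
    final String timestamp;
    final String action;

    TimelineInstant(String timestamp, String action) {
      this.timestamp = timestamp;
      this.action = action;
    }
  }

  // Returns the latest completed instant that would be considered.
  // includeReplaceCommits = false models the old filter; true models the behavior after removing it.
  static Optional<TimelineInstant> latestConsideredInstant(List<TimelineInstant> completed,
                                                           boolean includeReplaceCommits) {
    return completed.stream()
        .filter(i -> includeReplaceCommits || !"replacecommit".equals(i.action))
        .reduce((first, second) -> second); // keep the last element of the sorted timeline
  }

  public static void main(String[] args) {
    List<TimelineInstant> timeline = List.of(
        new TimelineInstant("20211014100000", "commit"),
        new TimelineInstant("20211014110000", "replacecommit")); // e.g. written by clustering
    System.out.println(latestConsideredInstant(timeline, false).map(i -> i.timestamp).orElse("none"));
    System.out.println(latestConsideredInstant(timeline, true).map(i -> i.timestamp).orElse("none"));
  }
}
```

With the filter, the older `commit` is reported as latest; without it, the clustering instant is.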

[GitHub] [hudi] hudi-bot edited a comment on pull request #3203: [HUDI-2086] Refactor hive mor_incremental_view

hudi-bot edited a comment on pull request #3203:
URL: https://github.com/apache/hudi/pull/3203#issuecomment-872092745

## CI report:

* 0fa6297ce58eb877fd5c4eba59fef20ad9335d26 UNKNOWN
* c4af04dab3dab31ef05ba6007000738a2dfb81ce Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2649)

[GitHub] [hudi] nsivabalan commented on pull request #3776: [HUDI-2543]: Added guides section

nsivabalan commented on pull request #3776:
URL: https://github.com/apache/hudi/pull/3776#issuecomment-943312764

One nit: when I mouse over "Guides", I see Tuning as the first entry and Troubleshooting as the second, while the order in the left pane is different. Can we fix that?

https://user-images.githubusercontent.com/513218/137317986-5061c313-8b5a-47b4-837b-16d7b6f45956.png

[jira] [Closed] (HUDI-2484) Hive sync not working in HMS mode with DeltaStreamer

[ https://issues.apache.org/jira/browse/HUDI-2484?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Sagar Sumit closed HUDI-2484.
Resolution: Fixed

> Hive sync not working in HMS mode with DeltaStreamer
>
> Key: HUDI-2484
> URL: https://issues.apache.org/jira/browse/HUDI-2484
> Project: Apache Hudi
> Issue Type: Bug
> Reporter: Sagar Sumit
> Assignee: Sagar Sumit
> Priority: Major
> Labels: pull-request-available, sev:critical, user-support-issues
> Fix For: 0.10.0
>
> Set the Hive sync mode to HMS and disable JDBC mode:
> ```
> --hoodie-conf hoodie.datasource.hive_sync.mode=hms
> --hoodie-conf hoodie.datasource.hive_sync.use_jdbc=false
> ```
> It throws the following exception:
> ```
> Caused by: java.lang.NoClassDefFoundError: org/apache/calcite/rel/type/RelDataTypeSystem
>   at org.apache.hadoop.hive.ql.parse.SemanticAnalyzerFactory.get(SemanticAnalyzerFactory.java:318)
>   at org.apache.hadoop.hive.ql.Driver.compile(Driver.java:484)
>   at org.apache.hadoop.hive.ql.Driver.compileInternal(Driver.java:1317)
>   at org.apache.hadoop.hive.ql.Driver.runInternal(Driver.java:1457)
>   at org.apache.hadoop.hive.ql.Driver.run(Driver.java:1237)
>   at org.apache.hadoop.hive.ql.Driver.run(Driver.java:1227)
>   at org.apache.hudi.hive.ddl.HiveQueryDDLExecutor.updateHiveSQLs(HiveQueryDDLExecutor.java:94)
>   at org.apache.hudi.hive.ddl.HiveQueryDDLExecutor.runSQL(HiveQueryDDLExecutor.java:85)
>   at org.apache.hudi.hive.ddl.QueryBasedDDLExecutor.createTable(QueryBasedDDLExecutor.java:82)
>   at org.apache.hudi.hive.HoodieHiveClient.createTable(HoodieHiveClient.java:191)
>   at org.apache.hudi.hive.HiveSyncTool.syncSchema(HiveSyncTool.java:237)
>   at org.apache.hudi.hive.HiveSyncTool.syncHoodieTable(HiveSyncTool.java:182)
>   at org.apache.hudi.hive.HiveSyncTool.doSync(HiveSyncTool.java:131)
>   at org.apache.hudi.hive.HiveSyncTool.syncHoodieTable(HiveSyncTool.java:117)
>   at org.apache.hudi.utilities.deltastreamer.DeltaSync.syncHive(DeltaSync.java:625)
> ```
> The same works with the Spark data source.
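The trace shows the sync going through `HiveQueryDDLExecutor`, which runs DDL through Hive's `Driver` and therefore needs Calcite on the classpath, even though `mode=hms` was requested. One plausible reading, stated as an assumption rather than the confirmed root cause, is that the sync mode was not carried over on the DeltaStreamer path, so executor selection fell back to the `use_jdbc=false` branch. The sketch models that selection. The enum and method are hypothetical; executor names are returned as plain strings, `HiveQueryDDLExecutor` appears in the trace, and `HMSDDLExecutor` and `JDBCExecutor` are assumed to match classes in Hudi's `org.apache.hudi.hive.ddl` package.

```java
import java.util.Locale;

public class SyncModeDispatchSketch {
  // Hypothetical enum mirroring the assumed values of hoodie.datasource.hive_sync.mode.
  enum SyncMode { HMS, HIVEQL, JDBC }

  // Picks a DDL executor name from an optional sync mode, falling back to the use_jdbc flag.
  static String chooseExecutor(String syncMode, boolean useJdbc) {
    if (syncMode != null && !syncMode.isEmpty()) {
      switch (SyncMode.valueOf(syncMode.toUpperCase(Locale.ROOT))) {
        case HMS:
          return "HMSDDLExecutor";       // assumed to call the metastore client directly
        case HIVEQL:
          return "HiveQueryDDLExecutor"; // runs DDL through Hive's Driver
        case JDBC:
          return "JDBCExecutor";
        default:
          throw new IllegalArgumentException("Unsupported sync mode: " + syncMode);
      }
    }
    // No mode propagated: only the legacy use_jdbc flag decides.
    return useJdbc ? "JDBCExecutor" : "HiveQueryDDLExecutor";
  }

  public static void main(String[] args) {
    System.out.println(chooseExecutor("hms", false)); // mode honored
    System.out.println(chooseExecutor(null, false));  // mode lost: Driver-based path, as in the trace
  }
}
```

Under this model, the same flags succeed when the mode reaches the selector and reproduce the Driver-based path when it does not, which would be consistent with "the same works with the Spark data source".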

[jira] [Resolved] (HUDI-2525) Test prometheus metrics with hudi (both spark ds and deltastreamer)

[ https://issues.apache.org/jira/browse/HUDI-2525?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Sagar Sumit resolved HUDI-2525.
Resolution: Fixed

> Test prometheus metrics with hudi (both spark ds and deltastreamer)
>
> Key: HUDI-2525
> URL: https://issues.apache.org/jira/browse/HUDI-2525
> Project: Apache Hudi
> Issue Type: Improvement
> Reporter: sivabalan narayanan
> Assignee: Sagar Sumit
> Priority: Major
> Labels: sev:critical, user-support-issues
> Fix For: 0.10.0
>
> Test prometheus metrics with hudi (both spark ds and deltastreamer)

>

> exception w/ deltastreamer

> {code:java}
> Exception in thread "main" java.lang.NoSuchMethodError: 'void io.prometheus.client.dropwizard.DropwizardExports.<init>(org.apache.hudi.com.codahale.metrics.MetricRegistry)'
> at org.apache.hudi.metrics.prometheus.PrometheusReporter.<init>(PrometheusReporter.java:49)
> at org.apache.hudi.metrics.MetricsReporterFactory.createReporter(MetricsReporterFactory.java:75)
> at org.apache.hudi.metrics.Metrics.<init>(Metrics.java:50)
> at org.apache.hudi.metrics.Metrics.init(Metrics.java:96)
> at org.apache.hudi.utilities.deltastreamer.HoodieDeltaStreamerMetrics.<init>(HoodieDeltaStreamerMetrics.java:44)
> at org.apache.hudi.utilities.deltastreamer.DeltaSync.<init>(DeltaSync.java:224)
> at org.apache.hudi.utilities.deltastreamer.HoodieDeltaStreamer$DeltaSyncService.<init>(HoodieDeltaStreamer.java:606)
> at org.apache.hudi.utilities.deltastreamer.HoodieDeltaStreamer.<init>(HoodieDeltaStreamer.java:143)
> at org.apache.hudi.utilities.deltastreamer.HoodieDeltaStreamer.<init>(HoodieDeltaStreamer.java:107)
> at org.apache.hudi.integ.testsuite.HoodieDeltaStreamerWrapper.<init>(HoodieDeltaStreamerWrapper.java:39)
> at org.apache.hudi.integ.testsuite.HoodieTestSuiteWriter.<init>(HoodieTestSuiteWriter.java:88)
> at org.apache.hudi.integ.testsuite.dag.WriterContext.initContext(WriterContext.java:70)
> at org.apache.hudi.integ.testsuite.HoodieTestSuiteJob.runTestSuite(HoodieTestSuiteJob.java:188)
> at org.apache.hudi.integ.testsuite.HoodieTestSuiteJob.main(HoodieTestSuiteJob.java:170)
> at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
> at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke(Unknown Source)
> at java.base/jdk.internal.reflect.DelegatingMethodAccessorImpl.invoke(Unknown Source)
> at java.base/java.lang.reflect.Method.invoke(Unknown Source)
> at org.apache.spark.deploy.JavaMainApplication.start(SparkApplication.scala:52)
> at org.apache.spark.deploy.SparkSubmit.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:951)
> at org.apache.spark.deploy.SparkSubmit.doRunMain$1(SparkSubmit.scala:180)
> at org.apache.spark.deploy.SparkSubmit.submit(SparkSubmit.scala:203)
> at org.apache.spark.deploy.SparkSubmit.doSubmit(SparkSubmit.scala:90)
> at org.apache.spark.deploy.SparkSubmit$$anon$2.doSubmit(SparkSubmit.scala:1030)
> at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:1039)
> at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)
> 21/10/01 17:06:01 INFO ShutdownHookManager: Shutdown hook called
> {code}
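A `NoSuchMethodError` on a constructor (`<init>`) usually means the class found at runtime was compiled against a different signature than the caller expects. Here the caller passes Hudi's relocated `org.apache.hudi.com.codahale.metrics.MetricRegistry`. One plausible reading is that the `DropwizardExports` class on the classpath was built against the unrelocated `com.codahale.metrics.MetricRegistry`. The sketch below tests that hypothesis with plain reflection on a given classpath. It is a hypothetical helper named `ConstructorCheck`, not part of Hudi.

```java
import java.lang.reflect.Constructor;

public class ConstructorCheck {

  /**
   * Returns true if className has a public constructor taking exactly one parameter
   * whose runtime type name equals paramClassName.
   */
  static boolean hasSingleArgConstructor(String className, String paramClassName) {
    try {
      Class<?> clazz = Class.forName(className, false, ConstructorCheck.class.getClassLoader());
      for (Constructor<?> c : clazz.getConstructors()) {
        Class<?>[] params = c.getParameterTypes();
        if (params.length == 1 && params[0].getName().equals(paramClassName)) {
          return true;
        }
      }
      return false;
    } catch (ClassNotFoundException | LinkageError e) {
      // The class itself is missing, or one of its dependencies could not be linked
      return false;
    }
  }

  public static void main(String[] args) {
    String target = "io.prometheus.client.dropwizard.DropwizardExports";
    System.out.println("accepts relocated registry: " + hasSingleArgConstructor(
        target, "org.apache.hudi.com.codahale.metrics.MetricRegistry"));
    System.out.println("accepts unrelocated registry: " + hasSingleArgConstructor(
        target, "com.codahale.metrics.MetricRegistry"));
  }
}
```

If the second line prints `true` and the first prints `false`, the Prometheus dropwizard classes were not relocated along with the codahale metrics classes. If both print `false`, the class is probably not on the classpath at all.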


[GitHub] [hudi] hudi-bot edited a comment on pull request #3203: [HUDI-2086] Refactor hive mor_incremental_view

hudi-bot edited a comment on pull request #3203: URL: https://github.com/apache/hudi/pull/3203#issuecomment-872092745 ## CI report: * 0fa6297ce58eb877fd5c4eba59fef20ad9335d26 UNKNOWN * 1a80559bd98829552acffdaf20d3ea0384d1d936 Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2645) * c4af04dab3dab31ef05ba6007000738a2dfb81ce Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2649) Bot commands @hudi-bot supports the following commands: - `@hudi-bot run travis` re-run the last Travis build - `@hudi-bot run azure` re-run the last Azure build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@hudi.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [hudi] hudi-bot edited a comment on pull request #3203: [HUDI-2086] Refactor hive mor_incremental_view

hudi-bot edited a comment on pull request #3203: URL: https://github.com/apache/hudi/pull/3203#issuecomment-872092745 ## CI report: * 0fa6297ce58eb877fd5c4eba59fef20ad9335d26 UNKNOWN * 1a80559bd98829552acffdaf20d3ea0384d1d936 Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2645) * c4af04dab3dab31ef05ba6007000738a2dfb81ce UNKNOWN

[jira] [Resolved] (HUDI-2556) Tweak some default config options for flink

[ https://issues.apache.org/jira/browse/HUDI-2556?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Danny Chen resolved HUDI-2556. -- Resolution: Fixed Fixed via master branch: 2c370cbae084a41162fedbcc0b1e66558629dcbe > Tweak some default config options for flink > --- > > Key: HUDI-2556 > URL: https://issues.apache.org/jira/browse/HUDI-2556 > Project: Apache Hudi > Issue Type: Task >Reporter: Danny Chen >Assignee: Danny Chen >Priority: Major > Labels: pull-request-available > Fix For: 0.10.0 > >

[jira] [Updated] (HUDI-2556) Tweak some default config options for flink

[ https://issues.apache.org/jira/browse/HUDI-2556?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Danny Chen updated HUDI-2556: - Component/s: Flink Integration > Tweak some default config options for flink > --- > > Key: HUDI-2556 > URL: https://issues.apache.org/jira/browse/HUDI-2556 > Project: Apache Hudi > Issue Type: Task > Components: Flink Integration >Reporter: Danny Chen >Assignee: Danny Chen >Priority: Major > Labels: pull-request-available > Fix For: 0.10.0 > >

[hudi] branch master updated (f897e6d -> 2c370cb)

This is an automated email from the ASF dual-hosted git repository. danny0405 pushed a change to branch master in repository https://gitbox.apache.org/repos/asf/hudi.git. from f897e6d [HUDI-2551] Support DefaultHoodieRecordPayload for flink (#3792) add 2c370cb [HUDI-2556] Tweak some default config options for flink (#3800) No new revisions were added by this update. Summary of changes: .../apache/hudi/configuration/FlinkOptions.java| 26 -- .../org/apache/hudi/sink/StreamWriteFunction.java | 4 ++-- .../hudi/sink/StreamWriteOperatorCoordinator.java | 10 +++-- .../apache/hudi/sink/utils/PayloadCreation.java| 2 +- .../apache/hudi/streamer/FlinkStreamerConfig.java | 8 +++ .../org/apache/hudi/table/HoodieTableFactory.java | 19 +++- .../java/org/apache/hudi/util/StreamerUtil.java| 12 +- .../org/apache/hudi/sink/TestWriteCopyOnWrite.java | 4 ++-- .../apache/hudi/table/HoodieDataSourceITCase.java | 4 ++-- .../apache/hudi/table/TestHoodieTableFactory.java | 15 + 10 files changed, 57 insertions(+), 47 deletions(-)

[GitHub] [hudi] danny0405 merged pull request #3800: [HUDI-2556] Tweak some default config options for flink

danny0405 merged pull request #3800: URL: https://github.com/apache/hudi/pull/3800

[GitHub] [hudi] hudi-bot edited a comment on pull request #3800: [HUDI-2556] Tweak some default config options for flink

hudi-bot edited a comment on pull request #3800: URL: https://github.com/apache/hudi/pull/3800#issuecomment-943179326 ## CI report: * b676a7d441b059d4c22918e700a84b8fe51e240b UNKNOWN * 56a96d75ecea14a1f0367ccb339a45f4c8813dfa Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2648)

[GitHub] [hudi] xiarixiaoyao commented on a change in pull request #3203: [HUDI-2086] Refactor hive mor_incremental_view

xiarixiaoyao commented on a change in pull request #3203:

URL: https://github.com/apache/hudi/pull/3203#discussion_r728890684

##

File path:

hudi-hadoop-mr/src/main/java/org/apache/hudi/hadoop/utils/HoodieRealtimeInputFormatUtils.java

##

@@ -161,6 +162,51 @@
     return rtSplits.toArray(new InputSplit[0]);
   }
+  // pick all incremental files and add them to rtSplits then filter out those files.
+  private static Map> filterOutIncrementalSplits(
+      List fileSplitList,
+      List rtSplits,
+      final Option finalHoodieVirtualKeyInfo) {
+    return fileSplitList.stream().filter(s -> {
+      // deal with incremental query.
+      try {
+        if (s instanceof BaseFileWithLogsSplit) {
+          BaseFileWithLogsSplit bs = (BaseFileWithLogsSplit)s;
+          if (bs.getBelongToIncrementalSplit()) {
+            rtSplits.add(new HoodieRealtimeFileSplit(bs, bs.getBasePath(), bs.getDeltaLogPaths(), bs.getMaxCommitTime(), finalHoodieVirtualKeyInfo));
+          }
+        } else if (s instanceof RealtimeBootstrapBaseFileSplit) {
+          rtSplits.add(s);
+        }
+      } catch (IOException e) {
+        throw new HoodieIOException("Error creating hoodie real time split ", e);
+      }
+      // filter the snapshot split.
+      if (s instanceof RealtimeBootstrapBaseFileSplit) {
+        return false;
+      } else if ((s instanceof BaseFileWithLogsSplit) && ((BaseFileWithLogsSplit) s).getBelongToIncrementalSplit()) {

Review comment:

I just want to separate the logic of the incremental query from that of the snapshot query. OK, I will change it, thanks.


[GitHub] [hudi] hudi-bot edited a comment on pull request #3771: [HUDI-2402] Add Kerberos configuration options to Hive Sync

hudi-bot edited a comment on pull request #3771: URL: https://github.com/apache/hudi/pull/3771#issuecomment-939200284 ## CI report: * 9e64e88d819b6b6bf5ccc5811ea5f4714138fc9e UNKNOWN * c2bc8115f70b89dfc31f27645f98cfbff8d79c0f Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2646)

[GitHub] [hudi] hudi-bot edited a comment on pull request #3800: [HUDI-2556] Tweak some default config options for flink

hudi-bot edited a comment on pull request #3800: URL: https://github.com/apache/hudi/pull/3800#issuecomment-943179326 ## CI report: * 75ee4b1f600b8231384fb65986e974c1edf26590 Azure: [CANCELED](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2647) * b676a7d441b059d4c22918e700a84b8fe51e240b UNKNOWN * 56a96d75ecea14a1f0367ccb339a45f4c8813dfa Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2648)

[GitHub] [hudi] hudi-bot edited a comment on pull request #3203: [HUDI-2086] Refactor hive mor_incremental_view

hudi-bot edited a comment on pull request #3203: URL: https://github.com/apache/hudi/pull/3203#issuecomment-872092745 ## CI report: * 0fa6297ce58eb877fd5c4eba59fef20ad9335d26 UNKNOWN * 1a80559bd98829552acffdaf20d3ea0384d1d936 Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2645)

[GitHub] [hudi] hudi-bot edited a comment on pull request #3799: [HUDI-2491] hoodie.datasource.hive_sync.mode=hms mode is supported in…

hudi-bot edited a comment on pull request #3799: URL: https://github.com/apache/hudi/pull/3799#issuecomment-943176004 ## CI report: * f44907a941b5b61e642abb5783f70fe8830fe6a6 Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2644)

[GitHub] [hudi] gaoshihang opened a new issue #3801: [SUPPORT]Does Flink-cdc support Schema-Evolution?

gaoshihang opened a new issue #3801: URL: https://github.com/apache/hudi/issues/3801 @danny0405 Hi, I have a question. I use Kafka (Debezium) as the source table and Hudi as the target table to build a CDC application. How can I do schema evolution with Flink CDC?

[GitHub] [hudi] danny0405 commented on pull request #3798: 3797 java.lang.NoSuchMethodError: io.javalin.core.CachedRequestWrappe…

danny0405 commented on pull request #3798: URL: https://github.com/apache/hudi/pull/3798#issuecomment-943210670 Change the title and commit message to "[HUDI-2557] Shade javax.servlet for flink bundle jar"

[jira] [Created] (HUDI-2557) Shade javax.servlet for flink bundle jar

Danny Chen created HUDI-2557: Summary: Shade javax.servlet for flink bundle jar Key: HUDI-2557 URL: https://issues.apache.org/jira/browse/HUDI-2557 Project: Apache Hudi Issue Type: Test Components: Flink Integration Reporter: Danny Chen Assignee: Danny Chen Fix For: 0.10.0

[GitHub] [hudi] danny0405 commented on a change in pull request #3203: [HUDI-2086] Refactor hive mor_incremental_view

danny0405 commented on a change in pull request #3203:

URL: https://github.com/apache/hudi/pull/3203#discussion_r728830538

##

File path:

hudi-hadoop-mr/src/main/java/org/apache/hudi/hadoop/utils/HoodieRealtimeInputFormatUtils.java

##

@@ -161,6 +162,51 @@
     return rtSplits.toArray(new InputSplit[0]);
   }
+  // pick all incremental files and add them to rtSplits then filter out those files.
+  private static Map> filterOutIncrementalSplits(
+      List fileSplitList,
+      List rtSplits,
+      final Option finalHoodieVirtualKeyInfo) {
+    return fileSplitList.stream().filter(s -> {
+      // deal with incremental query.
+      try {
+        if (s instanceof BaseFileWithLogsSplit) {
+          BaseFileWithLogsSplit bs = (BaseFileWithLogsSplit)s;
+          if (bs.getBelongToIncrementalSplit()) {
+            rtSplits.add(new HoodieRealtimeFileSplit(bs, bs.getBasePath(), bs.getDeltaLogPaths(), bs.getMaxCommitTime(), finalHoodieVirtualKeyInfo));
+          }
+        } else if (s instanceof RealtimeBootstrapBaseFileSplit) {
+          rtSplits.add(s);
+        }
+      } catch (IOException e) {
+        throw new HoodieIOException("Error creating hoodie real time split ", e);
+      }
+      // filter the snapshot split.
+      if (s instanceof RealtimeBootstrapBaseFileSplit) {
+        return false;
+      } else if ((s instanceof BaseFileWithLogsSplit) && ((BaseFileWithLogsSplit) s).getBelongToIncrementalSplit()) {

Review comment:

Why not just return early? I have pasted the code. And why do we need to handle the incremental query first? Can we handle it together with the snapshot query?
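The reviewer's "return early" suggestion can be sketched as a single predicate. It handles each split kind and returns immediately, rather than running a `try` block followed by a second round of `instanceof` checks. The classes below are hypothetical, simplified stand-ins for `BaseFileWithLogsSplit` and `RealtimeBootstrapBaseFileSplit`. The incremental split is added as-is instead of being wrapped in a `HoodieRealtimeFileSplit`, which is also why no `IOException` handling appears. The sketch illustrates the control flow only and is not the code that landed in the PR.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.stream.Collectors;

public class IncrementalSplitFilterSketch {

  // Hypothetical stand-ins for the Hudi split classes
  static class Split {
    final String path;
    Split(String path) { this.path = path; }
  }

  static class BootstrapSplit extends Split {
    BootstrapSplit(String path) { super(path); }
  }

  static class LogsSplit extends Split {
    final boolean incremental;
    LogsSplit(String path, boolean incremental) { super(path); this.incremental = incremental; }
  }

  /** Moves bootstrap and incremental splits into rtSplits and returns only the snapshot splits. */
  static List<Split> filterOutIncrementalSplits(List<Split> splits, List<Split> rtSplits) {
    return splits.stream().filter(s -> {
      if (s instanceof BootstrapSplit) {
        rtSplits.add(s);   // handled here, so drop it from the snapshot list
        return false;
      }
      if (s instanceof LogsSplit && ((LogsSplit) s).incremental) {
        rtSplits.add(s);   // the real code wraps this in a HoodieRealtimeFileSplit
        return false;
      }
      return true;         // everything else stays as a snapshot split
    }).collect(Collectors.toList());
  }

  public static void main(String[] args) {
    List<Split> input = new ArrayList<>();
    input.add(new BootstrapSplit("bootstrap"));
    input.add(new LogsSplit("incremental", true));
    input.add(new LogsSplit("snapshot", false));
    List<Split> rtSplits = new ArrayList<>();
    List<Split> snapshot = filterOutIncrementalSplits(input, rtSplits);
    System.out.println(rtSplits.size() + " realtime, " + snapshot.size() + " snapshot");
  }
}
```

Mutating `rtSplits` inside `filter` works for a sequential stream but breaks silently if the stream is ever made parallel. A plain loop that fills two lists avoids that trap.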


[GitHub] [hudi] hudi-bot edited a comment on pull request #3800: [HUDI-2556] Tweak some default config options for flink

hudi-bot edited a comment on pull request #3800: URL: https://github.com/apache/hudi/pull/3800#issuecomment-943179326 ## CI report: * 75ee4b1f600b8231384fb65986e974c1edf26590 Azure: [CANCELED](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2647) * b676a7d441b059d4c22918e700a84b8fe51e240b UNKNOWN * 56a96d75ecea14a1f0367ccb339a45f4c8813dfa UNKNOWN

[GitHub] [hudi] danny0405 commented on issue #3796: [SUPPORT] Flink write to hudi,after running for a period of time,throw a NoClassDefFoundError

danny0405 commented on issue #3796: URL: https://github.com/apache/hudi/issues/3796#issuecomment-943201590 Yes, the bundle jar does not package the hadoop jar.

[GitHub] [hudi] hudi-bot edited a comment on pull request #3800: [HUDI-2556] Tweak some default config options for flink

hudi-bot edited a comment on pull request #3800: URL: https://github.com/apache/hudi/pull/3800#issuecomment-943179326 ## CI report: * 75ee4b1f600b8231384fb65986e974c1edf26590 Azure: [CANCELED](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2647) * b676a7d441b059d4c22918e700a84b8fe51e240b UNKNOWN

[GitHub] [hudi] hudi-bot edited a comment on pull request #3800: [HUDI-2556] Tweak some default config options for flink

hudi-bot edited a comment on pull request #3800: URL: https://github.com/apache/hudi/pull/3800#issuecomment-943179326 ## CI report: * 75ee4b1f600b8231384fb65986e974c1edf26590 Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2647) * b676a7d441b059d4c22918e700a84b8fe51e240b UNKNOWN

[GitHub] [hudi] hudi-bot edited a comment on pull request #3771: [HUDI-2402] Add Kerberos configuration options to Hive Sync

hudi-bot edited a comment on pull request #3771: URL: https://github.com/apache/hudi/pull/3771#issuecomment-939200284 ## CI report: * b4808aaf973608255c97e1eb1f46ff04d9bb4bee Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2556) * 9e64e88d819b6b6bf5ccc5811ea5f4714138fc9e UNKNOWN * c2bc8115f70b89dfc31f27645f98cfbff8d79c0f Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2646)

[GitHub] [hudi] hudi-bot edited a comment on pull request #3800: [HUDI-2556] Tweak some default config options for flink

hudi-bot edited a comment on pull request #3800: URL: https://github.com/apache/hudi/pull/3800#issuecomment-943179326 ## CI report: * 75ee4b1f600b8231384fb65986e974c1edf26590 Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2647)

[GitHub] [hudi] hudi-bot edited a comment on pull request #3771: [HUDI-2402] Add Kerberos configuration options to Hive Sync

hudi-bot edited a comment on pull request #3771: URL: https://github.com/apache/hudi/pull/3771#issuecomment-939200284 ## CI report: * b4808aaf973608255c97e1eb1f46ff04d9bb4bee Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2556) * 9e64e88d819b6b6bf5ccc5811ea5f4714138fc9e UNKNOWN * c2bc8115f70b89dfc31f27645f98cfbff8d79c0f UNKNOWN

[GitHub] [hudi] hudi-bot edited a comment on pull request #3203: [HUDI-2086] Refactor hive mor_incremental_view

hudi-bot edited a comment on pull request #3203: URL: https://github.com/apache/hudi/pull/3203#issuecomment-872092745 ## CI report: * 0fa6297ce58eb877fd5c4eba59fef20ad9335d26 UNKNOWN * 6d67b68e19a43f8668e5773d27ca9c33a8de0a37 Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2642) * 1a80559bd98829552acffdaf20d3ea0384d1d936 Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2645)

[GitHub] [hudi] test-wangxiaoyu commented on a change in pull request #3771: [HUDI-2402] Add Kerberos configuration options to Hive Sync

test-wangxiaoyu commented on a change in pull request #3771:

URL: https://github.com/apache/hudi/pull/3771#discussion_r728802039

##

File path:

hudi-spark-datasource/hudi-spark-common/src/main/java/org/apache/hudi/DataSourceUtils.java

##

@@ -307,6 +307,10 @@ public static HiveSyncConfig buildHiveSyncConfig(TypedProperties props, String b
         DataSourceWriteOptions.HIVE_SKIP_RO_SUFFIX_FOR_READ_OPTIMIZED_TABLE().defaultValue()));
     hiveSyncConfig.supportTimestamp = Boolean.valueOf(props.getString(DataSourceWriteOptions.HIVE_SUPPORT_TIMESTAMP_TYPE().key(),
         DataSourceWriteOptions.HIVE_SUPPORT_TIMESTAMP_TYPE().defaultValue()));
+    hiveSyncConfig.useKerberos =
+        Boolean.valueOf(props.getString(DataSourceWriteOptions.HIVE_SYNC_USE_KERBEROS().key(),DataSourceWriteOptions.HIVE_SYNC_USE_KERBEROS().defaultValue()));

Review comment:

I added the testHiveSyncOfKerberosEnvironment method to TestHiveSyncTool to test this.

[GitHub] [hudi] hudi-bot commented on pull request #3800: [HUDI-2556] Tweak some default config options for flink

hudi-bot commented on pull request #3800: URL: https://github.com/apache/hudi/pull/3800#issuecomment-943179326 ## CI report: * 75ee4b1f600b8231384fb65986e974c1edf26590 UNKNOWN

[GitHub] [hudi] hudi-bot edited a comment on pull request #3799: [HUDI-2491] hoodie.datasource.hive_sync.mode=hms mode is supported in…

hudi-bot edited a comment on pull request #3799: URL: https://github.com/apache/hudi/pull/3799#issuecomment-943176004 ## CI report: * f44907a941b5b61e642abb5783f70fe8830fe6a6 Azure: [PENDING](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2644)

[GitHub] [hudi] hudi-bot edited a comment on pull request #3771: [HUDI-2402] Add Kerberos configuration options to Hive Sync

hudi-bot edited a comment on pull request #3771: URL: https://github.com/apache/hudi/pull/3771#issuecomment-939200284 ## CI report: * b4808aaf973608255c97e1eb1f46ff04d9bb4bee Azure: [FAILURE](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2556) * 9e64e88d819b6b6bf5ccc5811ea5f4714138fc9e UNKNOWN

[GitHub] [hudi] test-wangxiaoyu commented on a change in pull request #3771: [HUDI-2402] Add Kerberos configuration options to Hive Sync

test-wangxiaoyu commented on a change in pull request #3771:

URL: https://github.com/apache/hudi/pull/3771#discussion_r728800351

##

File path:

hudi-sync/hudi-hive-sync/src/main/java/org/apache/hudi/hive/HiveSyncTool.java

##

@@ -77,6 +77,10 @@ public HiveSyncTool(HiveSyncConfig cfg, HiveConf configuration, FileSystem fs) {
     super(configuration.getAllProperties(), fs);
     try {
+      if (cfg.useKerberos) {
+        configuration.set("hive.metastore.sasl.enabled", "true");
+        configuration.set("hive.metastore.kerberos.principal", cfg.kerberosPrincipal);

Review comment:

Thanks for the review. I set it to its initial value.
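Read together, the two #3771 review threads describe a small flow. `DataSourceUtils` reads a boolean Kerberos flag with a default, and `HiveSyncTool` sets `hive.metastore.sasl.enabled` and `hive.metastore.kerberos.principal` only when that flag is on. The minimal sketch below follows that flow using `java.util.Properties` and a plain `Map` as hypothetical stand-ins for Hudi's `TypedProperties` and `HiveConf`. The property keys are placeholders, since the string values behind `HIVE_SYNC_USE_KERBEROS()` are not shown in the thread. The `false` default and the check for a missing principal are assumptions.

```java
import java.util.Map;
import java.util.Properties;

public class KerberosHiveSyncSketch {

  // Hypothetical placeholder keys; the real ones are defined by DataSourceWriteOptions
  static final String USE_KERBEROS_KEY = "example.hive_sync.use_kerberos";
  static final String PRINCIPAL_KEY = "example.hive_sync.kerberos.principal";

  /** Copies the Kerberos settings into a Hive-style conf map only when the flag is on. */
  static void applyKerberos(Properties props, Map<String, String> hiveConf) {
    // Boolean.parseBoolean returns true only for "true" (ignoring case); anything else is false
    boolean useKerberos = Boolean.parseBoolean(props.getProperty(USE_KERBEROS_KEY, "false"));
    if (!useKerberos) {
      return; // leave the metastore settings untouched
    }
    String principal = props.getProperty(PRINCIPAL_KEY);
    if (principal == null || principal.isEmpty()) {
      throw new IllegalArgumentException("Kerberos is enabled but no principal is configured");
    }
    hiveConf.put("hive.metastore.sasl.enabled", "true");
    hiveConf.put("hive.metastore.kerberos.principal", principal);
  }
}
```

Failing fast on a missing principal is a design choice of this sketch. Otherwise the metastore connection would only fail later with a less specific authentication error.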


[GitHub] [hudi] hudi-bot edited a comment on pull request #3203: [HUDI-2086] Refactor hive mor_incremental_view

hudi-bot edited a comment on pull request #3203: URL: https://github.com/apache/hudi/pull/3203#issuecomment-872092745 ## CI report: * 0fa6297ce58eb877fd5c4eba59fef20ad9335d26 UNKNOWN * 6d67b68e19a43f8668e5773d27ca9c33a8de0a37 Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2642) * 1a80559bd98829552acffdaf20d3ea0384d1d936 UNKNOWN

[GitHub] [hudi] hudi-bot commented on pull request #3799: [HUDI-2491] hoodie.datasource.hive_sync.mode=hms mode is supported in…

hudi-bot commented on pull request #3799: URL: https://github.com/apache/hudi/pull/3799#issuecomment-943176004 ## CI report: * f44907a941b5b61e642abb5783f70fe8830fe6a6 UNKNOWN

[GitHub] [hudi] hudi-bot edited a comment on pull request #3798: 3797 java.lang.NoSuchMethodError: io.javalin.core.CachedRequestWrappe…

hudi-bot edited a comment on pull request #3798: URL: https://github.com/apache/hudi/pull/3798#issuecomment-943124632 ## CI report: * e8b0555ed45956734721eda7529f04a3e739a0d2 Azure: [SUCCESS](https://dev.azure.com/apache-hudi-ci-org/785b6ef4-2f42-4a89-8f0e-5f0d7039a0cc/_build/results?buildId=2643)

[jira] [Updated] (HUDI-2556) Tweak some default config options for flink

[ https://issues.apache.org/jira/browse/HUDI-2556?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] ASF GitHub Bot updated HUDI-2556: - Labels: pull-request-available (was: ) > Tweak some default config options for flink > --- > > Key: HUDI-2556 > URL: https://issues.apache.org/jira/browse/HUDI-2556 > Project: Apache Hudi > Issue Type: Task >Reporter: Danny Chen >Assignee: Danny Chen >Priority: Major > Labels: pull-request-available > Fix For: 0.10.0 > > -- This message was sent by Atlassian Jira (v8.3.4#803005)

[GitHub] [hudi] danny0405 opened a new pull request #3800: [HUDI-2556] Tweak some default config options for flink

danny0405 opened a new pull request #3800: URL: https://github.com/apache/hudi/pull/3800 * rename write.insert.drop.duplicates to write.precombine and set it as true for COW table * set index.global.enabled default as true * set compaction.target_io default as 500GB ## *Tips* - *Thank you very much for contributing to Apache Hudi.* - *Please review https://hudi.apache.org/contribute/how-to-contribute before opening a pull request.* ## What is the purpose of the pull request *(For example: This pull request adds quick-start document.)* ## Brief change log *(for example:)* - *Modify AnnotationLocation checkstyle rule in checkstyle.xml* ## Verify this pull request *(Please pick either of the following options)* This pull request is a trivial rework / code cleanup without any test coverage. *(or)* This pull request is already covered by existing tests, such as *(please describe tests)*. (or) This change added tests and can be verified as follows: *(example:)* - *Added integration tests for end-to-end.* - *Added HoodieClientWriteTest to verify the change.* - *Manually verified the change by running a job locally.* ## Committer checklist - [ ] Has a corresponding JIRA in PR title & commit - [ ] Commit message is descriptive of the change - [ ] CI is green - [ ] Necessary doc changes done or have another open PR - [ ] For large changes, please consider breaking it into sub-tasks under an umbrella JIRA.
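The three default changes listed in this PR can be summarized as key/value pairs. This is a hypothetical sketch, not Hudi's `FlinkOptions` class: only the option keys and intended values come from the PR description, the class and method names are made up, and it assumes `compaction.target_io` is measured in MB (so 500 GB becomes 500 * 1024 = 512000).

```java
import java.util.Collections;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical summary of the defaults proposed in PR #3800.
// Not Hudi's FlinkOptions: only the keys and intended values come from the PR text.
final class FlinkDefaultsSketch {
  static final String OLD_PRECOMBINE_KEY = "write.insert.drop.duplicates";
  static final String NEW_PRECOMBINE_KEY = "write.precombine";

  // Assumption: compaction.target_io is expressed in MB, so 500 GB = 500 * 1024 MB.
  static final long TARGET_IO_MB = 500L * 1024L;

  static Map<String, String> proposedDefaults() {
    Map<String, String> defaults = new LinkedHashMap<>();
    // Renamed option; the PR sets it to true for COW tables (MOR is not stated).
    defaults.put(NEW_PRECOMBINE_KEY, "true");
    defaults.put("index.global.enabled", "true");
    defaults.put("compaction.target_io", String.valueOf(TARGET_IO_MB));
    return Collections.unmodifiableMap(defaults);
  }

  // Flags user configurations that still set the pre-rename key.
  static boolean usesRenamedKey(Map<String, String> userConf) {
    return userConf.containsKey(OLD_PRECOMBINE_KEY);
  }
}
```

A check like `usesRenamedKey` is only an illustration of why a rename matters to existing jobs; whether Hudi keeps the old key as a fallback is not stated in the PR.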

[GitHub] [hudi] xiarixiaoyao commented on pull request #3203: [HUDI-2086] Refactor hive mor_incremental_view

xiarixiaoyao commented on pull request #3203: URL: https://github.com/apache/hudi/pull/3203#issuecomment-943163714 @danny0405 @nsivabalan thank you very much for your patience in reviewing this code. I have rebased the code and addressed all comments.

[GitHub] [hudi] parisni commented on issue #2498: [SUPPORT] Hudi MERGE_ON_READ load to dataframe fails for the versions [0.6.0],[0.7.0] and runs for [0.5.3]

parisni commented on issue #2498: URL: https://github.com/apache/hudi/issues/2498#issuecomment-943156528 @nsivabalan I should also mention that this is OSS Spark 2.4.4 with the metastore overridden by AWS Glue, to connect Spark to Glue (https://github.com/awslabs/aws-glue-libs). This might be related.

[GitHub] [hudi] fuyun2024 commented on pull request #3799: [HUDI-2491] hoodie.datasource.hive_sync.mode=hms mode is supported in…

fuyun2024 commented on pull request #3799: URL: https://github.com/apache/hudi/pull/3799#issuecomment-943152937 @codepe Are you free to take a look at this for me? This is my new commit.

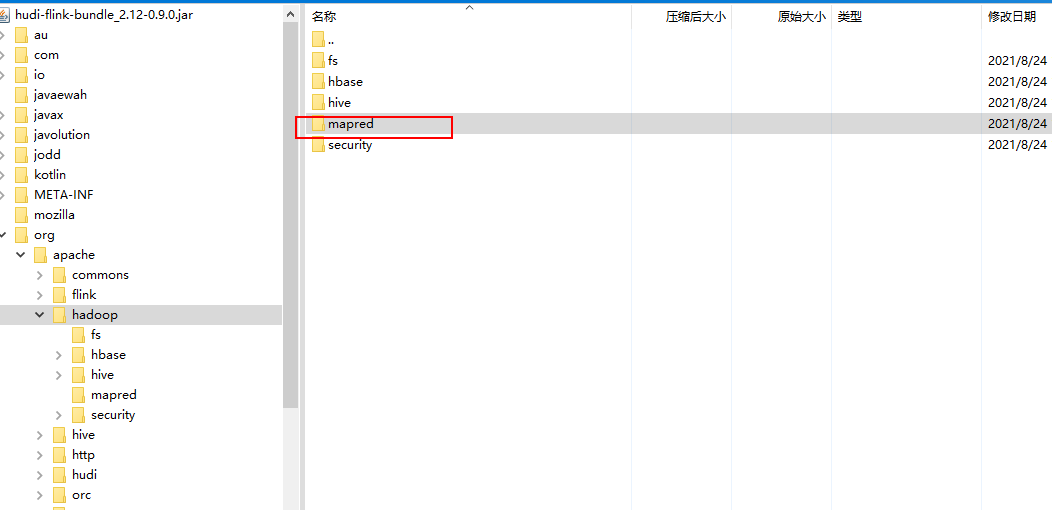

[GitHub] [hudi] t822876884 commented on issue #3796: [SUPPORT] Flink write to hudi,after running for a period of time,throw a NoClassDefFoundError

t822876884 commented on issue #3796: URL: https://github.com/apache/hudi/issues/3796#issuecomment-943149014 The jar hudi-flink-bundle_2.12-0.9.0.jar from mvnrepository.com does not contain any mapreduce classes.
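One way to verify a claim like this is to list the jar's entries and look for anything under a given package path. A minimal sketch using `java.util.jar.JarFile`; the class name is hypothetical, and the prefix `org/apache/hadoop/mapreduce/` is an assumption about which classes "mapreduce" refers to here.

```java
import java.io.IOException;
import java.util.jar.JarFile;

// Hypothetical helper: does a jar contain any entry under a package path?
final class JarPackageCheck {
  // packagePrefix uses '/' separators, e.g. "org/apache/hadoop/mapreduce/".
  static boolean containsPackage(String jarPath, String packagePrefix) throws IOException {
    try (JarFile jar = new JarFile(jarPath)) {
      // JarFile.stream() yields every entry (classes, resources, directories).
      return jar.stream().anyMatch(entry -> entry.getName().startsWith(packagePrefix));
    }
  }
}
```

For example, `JarPackageCheck.containsPackage("hudi-flink-bundle_2.12-0.9.0.jar", "org/apache/hadoop/mapreduce/")` returns `false` if the bundle indeed ships no such classes, in which case they must come from the Hadoop classpath at run time.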

[GitHub] [hudi] fuyun2024 opened a new pull request #3799: [HUDI-2491] hoodie.datasource.hive_sync.mode=hms mode is supported in…

fuyun2024 opened a new pull request #3799: URL: https://github.com/apache/hudi/pull/3799 … spark writer option

[GitHub] [hudi] codope commented on a change in pull request #3771: [HUDI-2402] Add Kerberos configuration options to Hive Sync

codope commented on a change in pull request #3771:
URL: https://github.com/apache/hudi/pull/3771#discussion_r728752802

##
File path:
hudi-sync/hudi-hive-sync/src/main/java/org/apache/hudi/hive/HiveSyncTool.java
##
@@ -77,6 +77,10 @@ public HiveSyncTool(HiveSyncConfig cfg, HiveConf configuration, FileSystem fs) {
     super(configuration.getAllProperties(), fs);
     try {
+      if (cfg.useKerberos) {
+        configuration.set("hive.metastore.sasl.enabled", "true");
+        configuration.set("hive.metastore.kerberos.principal", cfg.kerberosPrincipal);

Review comment:
Let's validate that `cfg.kerberosPrincipal` is not null in this case.
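A minimal sketch of the requested check, assuming the Kerberos branch is pulled into a helper that writes into a plain `Map<String, String>` standing in for `HiveConf`. The class and method names are hypothetical; only the two Hive property keys come from the diff. It also rejects a blank principal, not just a null one.

```java
import java.util.Map;

// Hypothetical stand-in for the Kerberos branch of the HiveSyncTool constructor.
final class KerberosConfigSketch {
  static void applyKerberos(boolean useKerberos, String kerberosPrincipal, Map<String, String> conf) {
    if (!useKerberos) {
      return; // Kerberos disabled: leave the configuration untouched
    }
    // Fail fast with a clear message instead of setting a null or blank principal.
    if (kerberosPrincipal == null || kerberosPrincipal.trim().isEmpty()) {
      throw new IllegalArgumentException(
          "A Kerberos principal must be set when Kerberos is enabled for Hive sync");
    }
    conf.put("hive.metastore.sasl.enabled", "true");
    conf.put("hive.metastore.kerberos.principal", kerberosPrincipal);
  }
}
```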

##
File path:
hudi-spark-datasource/hudi-spark-common/src/main/java/org/apache/hudi/DataSourceUtils.java
##
@@ -307,6 +307,10 @@ public static HiveSyncConfig buildHiveSyncConfig(TypedProperties props, String b
         DataSourceWriteOptions.HIVE_SKIP_RO_SUFFIX_FOR_READ_OPTIMIZED_TABLE().defaultValue()));
     hiveSyncConfig.supportTimestamp =
         Boolean.valueOf(props.getString(DataSourceWriteOptions.HIVE_SUPPORT_TIMESTAMP_TYPE().key(),
         DataSourceWriteOptions.HIVE_SUPPORT_TIMESTAMP_TYPE().defaultValue()));
+    hiveSyncConfig.useKerberos =
+        Boolean.valueOf(props.getString(DataSourceWriteOptions.HIVE_SYNC_USE_KERBEROS().key(),DataSourceWriteOptions.HIVE_SYNC_USE_KERBEROS().defaultValue()));
+    hiveSyncConfig.kerberosPrincipal =
+        props.getString(DataSourceWriteOptions.HIVE_SYNC_KERBEROS_PRINCIPAL().key(), DataSourceWriteOptions.HIVE_SYNC_KERBEROS_PRINCIPAL().defaultValue());

Review comment:
`HIVE_SYNC_KERBEROS_PRINCIPAL` has a null default value, so this might throw a HoodieException. Maybe we can set EMPTY_STRING as the default and, in HiveSyncTool, validate that this config is not null or empty when `HIVE_SYNC_USE_KERBEROS` is true.
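The suggested pattern (an empty-string default when reading, and a non-empty check only when Kerberos is on) can be sketched with `java.util.Properties` in place of Hudi's `TypedProperties`. The property keys below are placeholders, since the diff only shows the constant names `HIVE_SYNC_USE_KERBEROS` and `HIVE_SYNC_KERBEROS_PRINCIPAL`, not their string values; the class name is hypothetical.

```java
import java.util.Properties;

// Hypothetical sketch of reading the two Kerberos options without a null default.
final class KerberosPropsSketch {
  // Placeholder keys: the real keys are defined by DataSourceWriteOptions.
  static final String USE_KERBEROS_KEY = "example.hive_sync.use_kerberos";
  static final String PRINCIPAL_KEY = "example.hive_sync.kerberos.principal";

  static boolean useKerberos(Properties props) {
    // Boolean.parseBoolean is true only for "true", ignoring case.
    return Boolean.parseBoolean(props.getProperty(USE_KERBEROS_KEY, "false"));
  }

  static String kerberosPrincipal(Properties props) {
    // Empty string instead of null as the default, as suggested in the review.
    return props.getProperty(PRINCIPAL_KEY, "");
  }

  // Valid when Kerberos is off, or when it is on and a principal is provided.
  static boolean isValid(Properties props) {
    return !useKerberos(props) || !kerberosPrincipal(props).isEmpty();
  }
}
```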

##
File path:
hudi-spark-datasource/hudi-spark-common/src/main/java/org/apache/hudi/DataSourceUtils.java
##
@@ -307,6 +307,10 @@ public static HiveSyncConfig buildHiveSyncConfig(TypedProperties props, String b
         DataSourceWriteOptions.HIVE_SKIP_RO_SUFFIX_FOR_READ_OPTIMIZED_TABLE().defaultValue()));
     hiveSyncConfig.supportTimestamp =
         Boolean.valueOf(props.getString(DataSourceWriteOptions.HIVE_SUPPORT_TIMESTAMP_TYPE().key(),
         DataSourceWriteOptions.HIVE_SUPPORT_TIMESTAMP_TYPE().defaultValue()));
+    hiveSyncConfig.useKerberos =
+        Boolean.valueOf(props.getString(DataSourceWriteOptions.HIVE_SYNC_USE_KERBEROS().key(),DataSourceWriteOptions.HIVE_SYNC_USE_KERBEROS().defaultValue()));

Review comment:
Can we add a unit test in TestDataSourceUtils or TestHiveSyncTool?
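The cases such a test might cover can be sketched without JUnit so that it runs on a plain JDK. This is not the real TestDataSourceUtils: it re-creates the `Boolean.valueOf(props.getString(key, default))` pattern from the diff with `java.util.Properties` and a hypothetical key. It highlights one behavior worth pinning down: `Boolean.valueOf` accepts "true" in any case, and silently maps every other value (such as "yes") to false.

```java
import java.util.Properties;

// Hypothetical, JUnit-free sketch of cases a test for the useKerberos parsing could cover.
final class UseKerberosParsingCheck {
  static final String KEY = "example.hive_sync.use_kerberos"; // placeholder key

  // Mirrors the diff: Boolean.valueOf(props.getString(key, defaultValue)).
  static boolean parse(Properties props, String defaultValue) {
    return Boolean.valueOf(props.getProperty(KEY, defaultValue));
  }

  static Properties with(String value) {
    Properties p = new Properties();
    if (value != null) {
      p.setProperty(KEY, value);
    }
    return p;
  }

  // Returns the number of failed cases (0 means all passed).
  static int runCases() {
    int failures = 0;
    if (parse(with(null), "false")) failures++;   // unset key falls back to the default
    if (!parse(with("true"), "false")) failures++; // plain "true"
    if (!parse(with("TRUE"), "false")) failures++; // case-insensitive match
    if (parse(with("yes"), "false")) failures++;   // any other value parses as false
    return failures;
  }
}
```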

[GitHub] [hudi] t822876884 commented on issue #3796: [SUPPORT] Flink write to hudi,after running for a period of time,throw a NoClassDefFoundError

t822876884 commented on issue #3796: URL: https://github.com/apache/hudi/issues/3796#issuecomment-943128499 > Seems you do not set up the `HADOOP_CLASSPATH` correctly, how do you submit your job? I submit the job from a machine using the command "/home/flink/flink-1.12.2/bin/flink run -c com.xxx.streaming.bdg.exec.YarnDataExecutorSQL -m yarn-cluster -d -yjm 2048 -ytm 5120 -p 4 -ys 3 -ynm com.xxx.streaming.YarnDataExecutorSQL k-bdg-stream.jar". Looking at the source code, the error is thrown when merging files.
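A `NoClassDefFoundError` means a class the code was compiled against could not be found at run time, which is why the classpath is the first suspect. A minimal diagnostic sketch: it asks the current class loader to load each named class (without running static initializers) and reports the `HADOOP_CLASSPATH` environment variable that Flink's documentation recommends exporting (for example from `hadoop classpath`). The class name is hypothetical, and which classes to probe should come from the actual stack trace, which is not shown here.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical diagnostic: which of the given classes can this JVM's class loader load?
final class ClasspathProbe {
  static Map<String, Boolean> probe(List<String> classNames) {
    Map<String, Boolean> result = new LinkedHashMap<>();
    for (String name : classNames) {
      try {
        // initialize = false: load the class without running its static initializers.
        Class.forName(name, false, ClasspathProbe.class.getClassLoader());
        result.put(name, true);
      } catch (ClassNotFoundException | LinkageError e) {
        result.put(name, false);
      }
    }
    return result;
  }

  // The HADOOP_CLASSPATH value visible to this process, or a marker if unset.
  static String hadoopClasspath() {
    String cp = System.getenv("HADOOP_CLASSPATH");
    return cp == null ? "<not set>" : cp;
  }
}
```

Running the probe inside the job (for example, logging `ClasspathProbe.probe(Arrays.asList("org.apache.hadoop.mapreduce.Job"))` from an operator) shows what the TaskManager JVM sees, which can differ from the client machine that runs `flink run`.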

[GitHub] [hudi] fuyun2024 closed pull request #3722: HUDI-2491 hoodie.datasource.hive_sync.mode=hms mode is supported in s…

fuyun2024 closed pull request #3722: URL: https://github.com/apache/hudi/pull/3722

[GitHub] [hudi] hudi-bot edited a comment on pull request #3798: 3797 java.lang.NoSuchMethodError: io.javalin.core.CachedRequestWrappe…