DeyinZhong opened a new pull request #1855: URL: https://github.com/apache/hudi/pull/1855

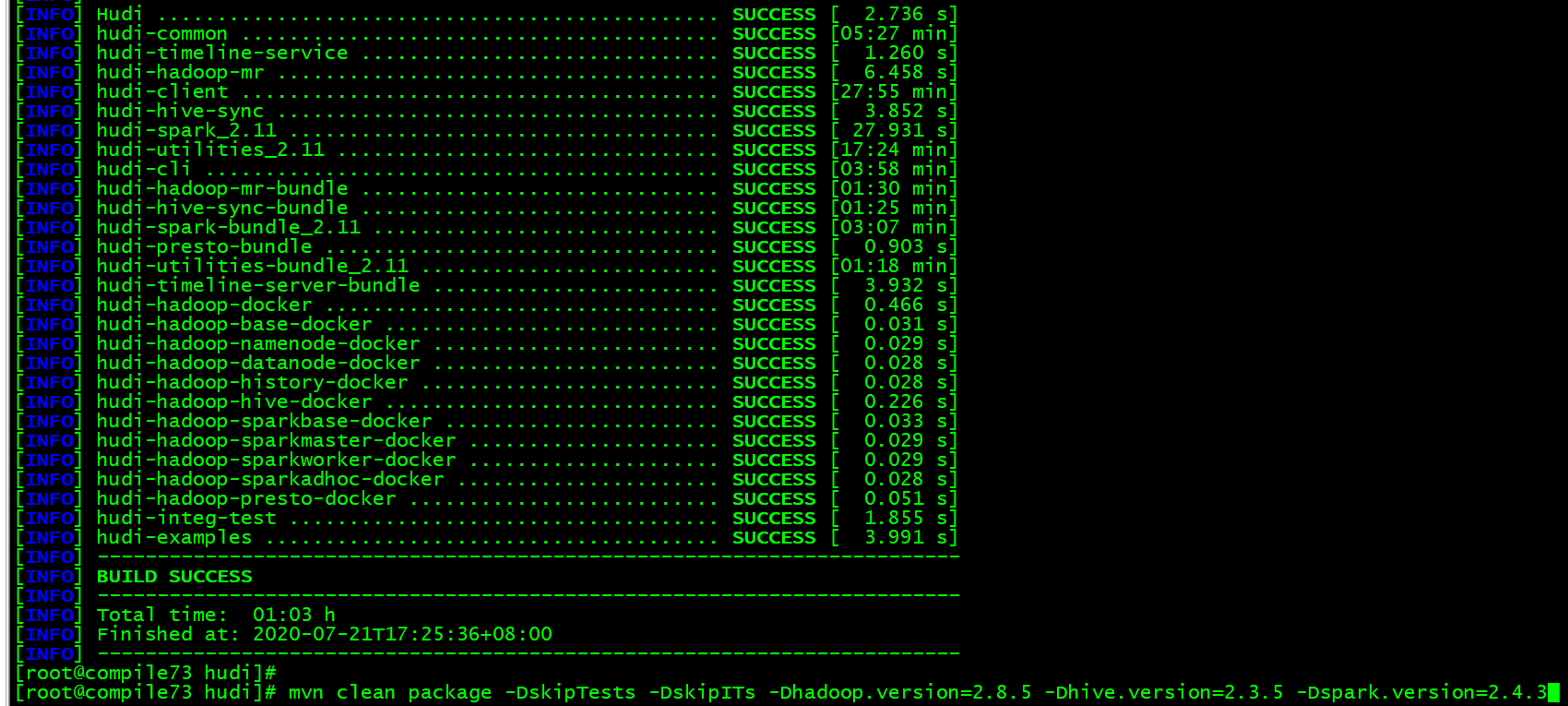

## *Tips*

- *Thank you very much for contributing to Apache Hudi.*
- *Please review https://hudi.apache.org/contributing.html before opening a pull request.*

## What is the purpose of the pull request

- Add Hudi support for Tencent Cloud Object Storage (COS).

## Brief change log

- Add the `cosn` scheme to `StorageSchemes.java`.
- Compile Hudi after modifying the code:

```
mvn clean package -DskipTests -DskipITs -Dhadoop.version=2.8.5 -Dhive.version=2.3.5 -Dspark.version=2.4.3
```

## Verify this pull request

This change added tests and can be verified as follows:

Refer to the Docker demo documentation: http://hudi.apache.org/docs/docker_demo.html

We have also implemented this feature in the Tencent Cloud EMR product; see https://cloud.tencent.com/document/product/589/42955

Environment:

- hadoop: 2.8.5
- hive: 2.3.5
- spark: 2.4.3
- hudi: release-0.5.1-incubating

The general steps for using Hudi with Tencent Cloud Object Storage (COS) are as follows:

- Step 1: Upload the config files to COS.

```
hdfs dfs -mkdir -p cosn://[bucket]/hudi/config
hdfs dfs -copyFromLocal demo/config/* cosn://[bucket]/hudi/config/
```

- Step 2: Incrementally ingest data from Kafka and write it to COS.

```
# Copy-on-write table
spark-submit --class org.apache.hudi.utilities.deltastreamer.HoodieDeltaStreamer --master yarn \
  ./hudi-utilities-bundle_2.11-0.5.1-incubating.jar \
  --table-type COPY_ON_WRITE \
  --source-class org.apache.hudi.utilities.sources.JsonKafkaSource \
  --source-ordering-field ts \
  --target-base-path cosn://[bucket]/usr/hive/warehouse/stock_ticks_cow \
  --target-table stock_ticks_cow \
  --props cosn://[bucket]/hudi/config/kafka-source.properties \
  --schemaprovider-class org.apache.hudi.utilities.schema.FilebasedSchemaProvider

# Merge-on-read table (inline compaction disabled; compaction is run in Step 5)
spark-submit --class org.apache.hudi.utilities.deltastreamer.HoodieDeltaStreamer --master yarn \
  ./hudi-utilities-bundle_2.11-0.5.1-incubating.jar \
  --table-type MERGE_ON_READ \
  --source-class org.apache.hudi.utilities.sources.JsonKafkaSource \
  --source-ordering-field ts \
  --target-base-path cosn://[bucket]/usr/hive/warehouse/stock_ticks_mor \
  --target-table stock_ticks_mor \
  --props cosn://[bucket]/hudi/config/kafka-source.properties \
  --schemaprovider-class org.apache.hudi.utilities.schema.FilebasedSchemaProvider \
  --disable-compaction
```

- Step 3: Sync the tables stored in COS to Hive.

```
# Copy-on-write table
bin/run_sync_tool.sh --jdbc-url jdbc:hive2://[hiveserver2_ip:hiveserver2_port] --user hadoop --pass isd@cloud \
  --partitioned-by dt --base-path cosn://[bucket]/usr/hive/warehouse/stock_ticks_cow \
  --database default --table stock_ticks_cow

# Merge-on-read table
bin/run_sync_tool.sh --jdbc-url jdbc:hive2://[hiveserver2_ip:hiveserver2_port] --user hadoop --pass hive \
  --partitioned-by dt --base-path cosn://[bucket]/usr/hive/warehouse/stock_ticks_mor \
  --database default --table stock_ticks_mor --skip-ro-suffix
```

- Step 4: Query the Hudi tables with the Hive or Spark SQL engine.

Start a client:

```
beeline -u jdbc:hive2://[hiveserver2_ip:hiveserver2_port] -n hadoop \
  --hiveconf hive.input.format=org.apache.hadoop.hive.ql.io.HiveInputFormat \
  --hiveconf hive.stats.autogather=false

spark-sql --master yarn --conf spark.sql.hive.convertMetastoreParquet=false
```

Hive SQL queries:

```
-- Copy-on-write table
select symbol, max(ts) from stock_ticks_cow group by symbol HAVING symbol = 'GOOG';
select `_hoodie_commit_time`, symbol, ts, volume, open, close from stock_ticks_cow where symbol = 'GOOG';

-- Merge-on-read table, read-optimized view (registered without the _ro suffix because of --skip-ro-suffix)
select symbol, max(ts) from stock_ticks_mor group by symbol HAVING symbol = 'GOOG';
select `_hoodie_commit_time`, symbol, ts, volume, open, close from stock_ticks_mor where symbol = 'GOOG';

-- Merge-on-read table, real-time view
select symbol, max(ts) from stock_ticks_mor_rt group by symbol HAVING symbol = 'GOOG';
select `_hoodie_commit_time`, symbol, ts, volume, open, close from stock_ticks_mor_rt where symbol = 'GOOG';
```

- Step 5: Run compaction on the data in COS.

Start the Hudi CLI, then run the remaining commands in its shell, replacing `[requestid]` with the instant time of the scheduled compaction:

```
cli/bin/hudi-cli.sh
connect --path cosn://[bucket]/usr/hive/warehouse/stock_ticks_mor
compactions show all
compaction schedule
compaction run --compactionInstant [requestid] --parallelism 2 --sparkMemory 1G --schemaFilePath cosn://[bucket]/hudi/config/schema.avsc --retry 1
```

## Committer checklist

- [ ] Has a corresponding JIRA in PR title & commit
- [ ] Commit message is descriptive of the change
- [ ] CI is green
- [ ] Necessary doc changes done or have another open PR
- [ ] For large changes, please consider breaking it into sub-tasks under an umbrella JIRA.
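The change-log entry that adds the `cosn` scheme to `StorageSchemes.java` can be illustrated with a minimal, self-contained Java sketch. It is not the Hudi source: `StorageSchemesSketch` is a hypothetical stand-in that assumes a registry of `(scheme, supportsAppend)` pairs, and marking `cosn` as not supporting append is an assumption (object stores generally do not offer appends). The bucket name `my-bucket` is a placeholder, because the literal `[bucket]` is not a valid URI host.

```java
import java.net.URI;
import java.util.Arrays;

// Hypothetical, simplified model of a storage-scheme registry in the style of
// Hudi's StorageSchemes enum; this is NOT the actual Hudi source.
enum StorageSchemesSketch {
  // Local filesystem
  FILE("file", false),
  // Hadoop Distributed File System
  HDFS("hdfs", true),
  // Amazon S3 through the s3a connector
  S3A("s3a", false),
  // Tencent Cloud Object Storage; the append flag is an assumption
  COSN("cosn", false);

  private final String scheme;
  private final boolean supportsAppend;

  StorageSchemesSketch(String scheme, boolean supportsAppend) {
    this.scheme = scheme;
    this.supportsAppend = supportsAppend;
  }

  String getScheme() {
    return scheme;
  }

  boolean supportsAppend() {
    return supportsAppend;
  }

  // True if the URI scheme (e.g. "cosn") is registered in this enum.
  static boolean isSchemeSupported(String scheme) {
    return Arrays.stream(values()).anyMatch(s -> s.scheme.equals(scheme));
  }

  // Rejects unknown schemes, then reports whether the registered entry supports append.
  static boolean isAppendSupported(String scheme) {
    if (!isSchemeSupported(scheme)) {
      throw new IllegalArgumentException("Unsupported scheme: " + scheme);
    }
    return Arrays.stream(values()).anyMatch(s -> s.supportsAppend && s.scheme.equals(scheme));
  }

  public static void main(String[] args) {
    // "my-bucket" is a placeholder host name for a base path like those in the steps above.
    String basePath = "cosn://my-bucket/usr/hive/warehouse/stock_ticks_cow";
    String scheme = URI.create(basePath).getScheme();
    System.out.println(scheme + " supported: " + isSchemeSupported(scheme));
    System.out.println(scheme + " supports append: " + isAppendSupported(scheme));
  }
}
```

Running `main` prints `cosn supported: true` followed by `cosn supports append: false`. Without a registry entry for `cosn`, `isAppendSupported("cosn")` in this sketch would throw `IllegalArgumentException`, which is the kind of failure a missing scheme entry causes.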