[GitHub] [spark] viirya commented on a change in pull request #24626: [SPARK-27747][SQL] add a logical plan link in the physical plan

viirya commented on a change in pull request #24626: [SPARK-27747][SQL] add a

logical plan link in the physical plan

URL: https://github.com/apache/spark/pull/24626#discussion_r284990160

##

File path:

sql/core/src/main/scala/org/apache/spark/sql/execution/SparkStrategies.scala

##

@@ -63,6 +63,17 @@ case class PlanLater(plan: LogicalPlan) extends

LeafExecNode {

abstract class SparkStrategies extends QueryPlanner[SparkPlan] {

self: SparkPlanner =>

+ override def plan(plan: LogicalPlan): Iterator[SparkPlan] = {

+super.plan(plan).map { p =>

+ val logicalPlan = plan match {

+case ReturnAnswer(rootPlan) => rootPlan

+case _ => plan

+ }

+ p.tags += QueryPlan.LOGICAL_PLAN_TAG_NAME -> logicalPlan

Review comment:

Only the most top physical plan has the link to logical plan, right?

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] wenxuanguan commented on a change in pull request #24631: [MINOR][CORE]Avoid hardcoded configs

wenxuanguan commented on a change in pull request #24631: [MINOR][CORE]Avoid hardcoded configs URL: https://github.com/apache/spark/pull/24631#discussion_r284989565 ## File path: docs/structured-streaming-kafka-integration.md ## @@ -441,7 +441,7 @@ Apache Kafka only supports at least once write semantics. Consequently, when wri or Batch Queries---to Kafka, some records may be duplicated; this can happen, for example, if Kafka needs to retry a message that was not acknowledged by a Broker, even though that Broker received and wrote the message record. Structured Streaming cannot prevent such duplicates from occurring due to these Kafka write semantics. However, -if writing the query is successful, then you can assume that the query output was written at least once. A possible +if writing the query is successful, then you can assume that the query output was written exactly once. A possible Review comment: @dongjoon-hyun Thanks, done This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins removed a comment on issue #24626: [SPARK-27747][SQL] add a logical plan link in the physical plan

AmplabJenkins removed a comment on issue #24626: [SPARK-27747][SQL] add a logical plan link in the physical plan URL: https://github.com/apache/spark/pull/24626#issuecomment-49558 Merged build finished. Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins removed a comment on issue #24626: [SPARK-27747][SQL] add a logical plan link in the physical plan

AmplabJenkins removed a comment on issue #24626: [SPARK-27747][SQL] add a logical plan link in the physical plan URL: https://github.com/apache/spark/pull/24626#issuecomment-49571 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/testing-k8s-prb-make-spark-distribution-unified/10743/ Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins commented on issue #24626: [SPARK-27747][SQL] add a logical plan link in the physical plan

AmplabJenkins commented on issue #24626: [SPARK-27747][SQL] add a logical plan link in the physical plan URL: https://github.com/apache/spark/pull/24626#issuecomment-49558 Merged build finished. Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins commented on issue #24626: [SPARK-27747][SQL] add a logical plan link in the physical plan

AmplabJenkins commented on issue #24626: [SPARK-27747][SQL] add a logical plan link in the physical plan URL: https://github.com/apache/spark/pull/24626#issuecomment-49571 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/testing-k8s-prb-make-spark-distribution-unified/10743/ Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] uncleGen commented on a change in pull request #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format.

uncleGen commented on a change in pull request #24609: [SPARK-27715][SQL] SQL

query details in UI dose not show in correct format.

URL: https://github.com/apache/spark/pull/24609#discussion_r284988033

##

File path:

sql/core/src/main/scala/org/apache/spark/sql/execution/ui/AllExecutionsPage.scala

##

@@ -382,13 +382,14 @@ private[ui] class ExecutionPagedTable(

}

private def descriptionCell(execution: SQLExecutionUIData): Seq[Node] = {

+val jobDescription = UIUtils.makeDescription(execution.description,

basePath, plainText = false)

val details = if (execution.details != null && execution.details.nonEmpty)

{

+details

++

-{execution.description}{execution.details}

+{jobDescription}{execution.details}

Review comment:

@shahidki31 Got it, let me confirm this problem.

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins removed a comment on issue #24632: [SPARK-27755][BUILD] Update zstd-jni to 1.4.0-1

AmplabJenkins removed a comment on issue #24632: [SPARK-27755][BUILD] Update zstd-jni to 1.4.0-1 URL: https://github.com/apache/spark/pull/24632#issuecomment-493331877 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/testing-k8s-prb-make-spark-distribution-unified/10742/ Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] uncleGen commented on a change in pull request #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format.

uncleGen commented on a change in pull request #24609: [SPARK-27715][SQL] SQL

query details in UI dose not show in correct format.

URL: https://github.com/apache/spark/pull/24609#discussion_r284987586

##

File path:

sql/core/src/main/scala/org/apache/spark/sql/execution/ui/AllExecutionsPage.scala

##

@@ -382,13 +382,14 @@ private[ui] class ExecutionPagedTable(

}

private def descriptionCell(execution: SQLExecutionUIData): Seq[Node] = {

+val jobDescription = UIUtils.makeDescription(execution.description,

basePath, plainText = false)

val details = if (execution.details != null && execution.details.nonEmpty)

{

+details

++

-{execution.description}{execution.details}

+{jobDescription}{execution.details}

Review comment:

What is the entire sql query?

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins removed a comment on issue #24632: [SPARK-27755][BUILD] Update zstd-jni to 1.4.0-1

AmplabJenkins removed a comment on issue #24632: [SPARK-27755][BUILD] Update zstd-jni to 1.4.0-1 URL: https://github.com/apache/spark/pull/24632#issuecomment-493331875 Merged build finished. Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] uncleGen commented on a change in pull request #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format.

uncleGen commented on a change in pull request #24609: [SPARK-27715][SQL] SQL

query details in UI dose not show in correct format.

URL: https://github.com/apache/spark/pull/24609#discussion_r284987586

##

File path:

sql/core/src/main/scala/org/apache/spark/sql/execution/ui/AllExecutionsPage.scala

##

@@ -382,13 +382,14 @@ private[ui] class ExecutionPagedTable(

}

private def descriptionCell(execution: SQLExecutionUIData): Seq[Node] = {

+val jobDescription = UIUtils.makeDescription(execution.description,

basePath, plainText = false)

val details = if (execution.details != null && execution.details.nonEmpty)

{

+details

++

-{execution.description}{execution.details}

+{jobDescription}{execution.details}

Review comment:

What is the entire sql query?

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] SparkQA commented on issue #24626: [SPARK-27747][SQL] add a logical plan link in the physical plan

SparkQA commented on issue #24626: [SPARK-27747][SQL] add a logical plan link in the physical plan URL: https://github.com/apache/spark/pull/24626#issuecomment-493332329 **[Test build #105482 has started](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/105482/testReport)** for PR 24626 at commit [`9f377d7`](https://github.com/apache/spark/commit/9f377d7a30bbfc6d982321212a585451cc87bb17). This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] SparkQA commented on issue #24632: [SPARK-27755][BUILD] Update zstd-jni to 1.4.0-1

SparkQA commented on issue #24632: [SPARK-27755][BUILD] Update zstd-jni to 1.4.0-1 URL: https://github.com/apache/spark/pull/24632#issuecomment-493332330 **[Test build #105481 has started](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/105481/testReport)** for PR 24632 at commit [`017841c`](https://github.com/apache/spark/commit/017841c2790609bf3d0dae37b345b7327bbf9f88). This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins commented on issue #24632: [SPARK-27755][BUILD] Update zstd-jni to 1.4.0-1

AmplabJenkins commented on issue #24632: [SPARK-27755][BUILD] Update zstd-jni to 1.4.0-1 URL: https://github.com/apache/spark/pull/24632#issuecomment-493331875 Merged build finished. Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins commented on issue #24632: [SPARK-27755][BUILD] Update zstd-jni to 1.4.0-1

AmplabJenkins commented on issue #24632: [SPARK-27755][BUILD] Update zstd-jni to 1.4.0-1 URL: https://github.com/apache/spark/pull/24632#issuecomment-493331877 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/testing-k8s-prb-make-spark-distribution-unified/10742/ Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] wenxuanguan commented on a change in pull request #24631: [MINOR][CORE][DOC]Avoid hardcoded configs and fix kafka sink write semantics in document

wenxuanguan commented on a change in pull request #24631: [MINOR][CORE][DOC]Avoid hardcoded configs and fix kafka sink write semantics in document URL: https://github.com/apache/spark/pull/24631#discussion_r284976140 ## File path: docs/structured-streaming-kafka-integration.md ## @@ -441,7 +441,7 @@ Apache Kafka only supports at least once write semantics. Consequently, when wri or Batch Queries---to Kafka, some records may be duplicated; this can happen, for example, if Kafka needs to retry a message that was not acknowledged by a Broker, even though that Broker received and wrote the message record. Structured Streaming cannot prevent such duplicates from occurring due to these Kafka write semantics. However, -if writing the query is successful, then you can assume that the query output was written at least once. A possible +if writing the query is successful, then you can assume that the query output was written exactly once. A possible Review comment: Thanks for your reply. @dongjoon-hyun I thought this describes the Spark exactly once write semantics if ignore the effect of Kafka. How about change to `So if writing the query is successful, then you can assume that the query output was written at least once`, which will not be confused by `However` This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun opened a new pull request #24632: [SPARK-27755][BUILD] Update zstd-jni to 1.4.0-1

dongjoon-hyun opened a new pull request #24632: [SPARK-27755][BUILD] Update zstd-jni to 1.4.0-1 URL: https://github.com/apache/spark/pull/24632 ## What changes were proposed in this pull request? This PR aims to update `zstd-jni` library to `1.4.0-1` which improves the `level 1 compression speed` performance by 6% in most scenarios. The following is the full release note. - https://github.com/facebook/zstd/releases/tag/v1.4.0 ## How was this patch tested? Pass the Jenkins. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on a change in pull request #24631: [MINOR][CORE][DOC]Avoid hardcoded configs and fix kafka sink write semantics in document

dongjoon-hyun commented on a change in pull request #24631: [MINOR][CORE][DOC]Avoid hardcoded configs and fix kafka sink write semantics in document URL: https://github.com/apache/spark/pull/24631#discussion_r284981733 ## File path: docs/structured-streaming-kafka-integration.md ## @@ -441,7 +441,7 @@ Apache Kafka only supports at least once write semantics. Consequently, when wri or Batch Queries---to Kafka, some records may be duplicated; this can happen, for example, if Kafka needs to retry a message that was not acknowledged by a Broker, even though that Broker received and wrote the message record. Structured Streaming cannot prevent such duplicates from occurring due to these Kafka write semantics. However, -if writing the query is successful, then you can assume that the query output was written at least once. A possible +if writing the query is successful, then you can assume that the query output was written exactly once. A possible Review comment: The existing one looks okay to me. Let's remove this doc change from this PR. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] wenxuanguan commented on a change in pull request #24631: [MINOR][CORE][DOC]Avoid hardcoded configs and fix kafka sink write semantics in document

wenxuanguan commented on a change in pull request #24631: [MINOR][CORE][DOC]Avoid hardcoded configs and fix kafka sink write semantics in document URL: https://github.com/apache/spark/pull/24631#discussion_r284976140 ## File path: docs/structured-streaming-kafka-integration.md ## @@ -441,7 +441,7 @@ Apache Kafka only supports at least once write semantics. Consequently, when wri or Batch Queries---to Kafka, some records may be duplicated; this can happen, for example, if Kafka needs to retry a message that was not acknowledged by a Broker, even though that Broker received and wrote the message record. Structured Streaming cannot prevent such duplicates from occurring due to these Kafka write semantics. However, -if writing the query is successful, then you can assume that the query output was written at least once. A possible +if writing the query is successful, then you can assume that the query output was written exactly once. A possible Review comment: Thanks for your reply. @dongjoon-hyun I thought this describes the situation that query writes to kafka successfully and no records are duplicated. How about change to `So if writing the query is successful, then you can assume that the query output was written at least once`, which will not be confused by `However` This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

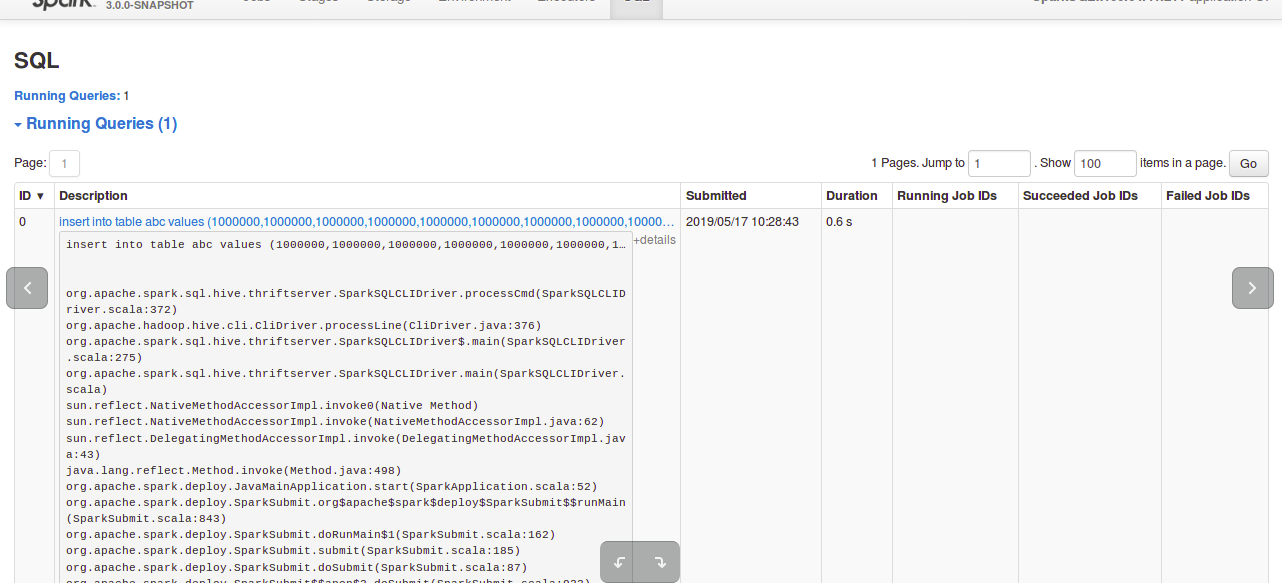

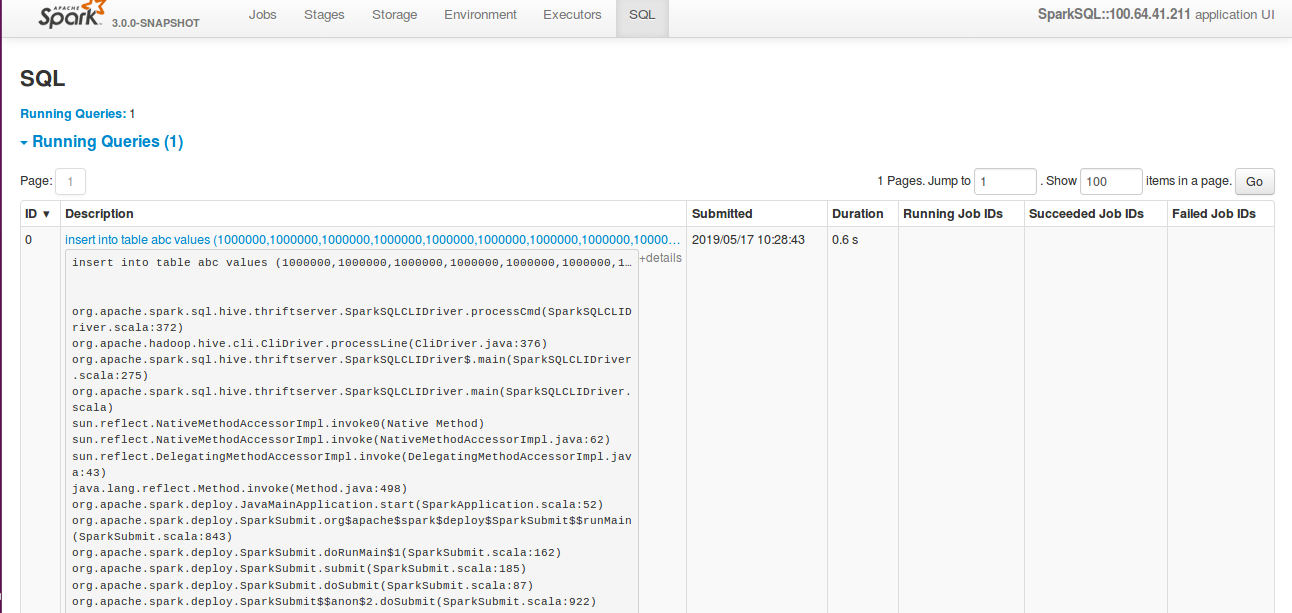

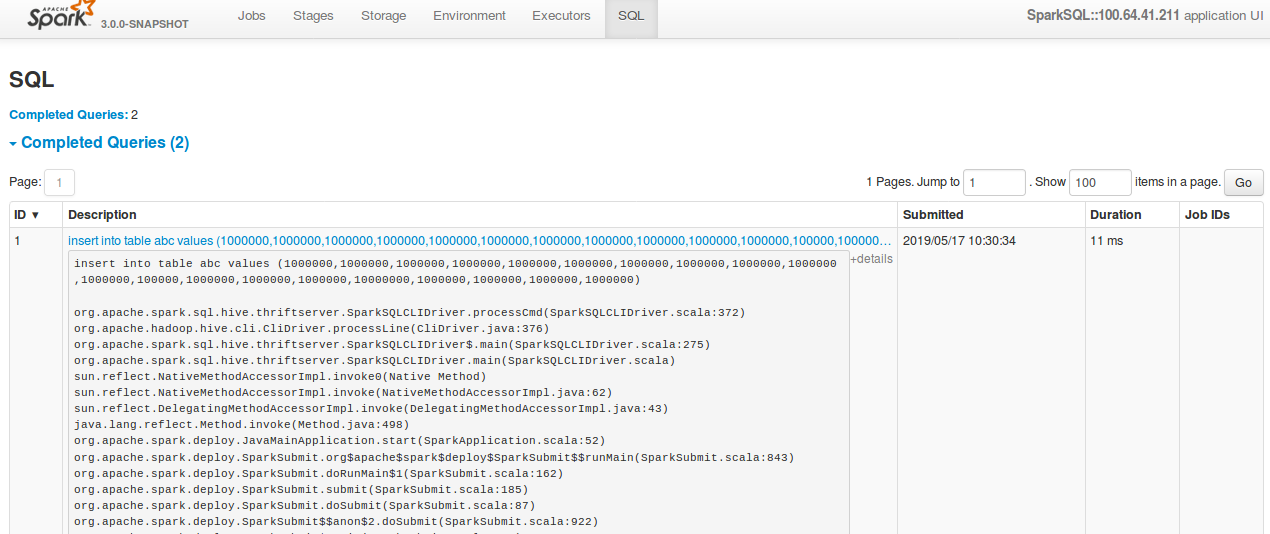

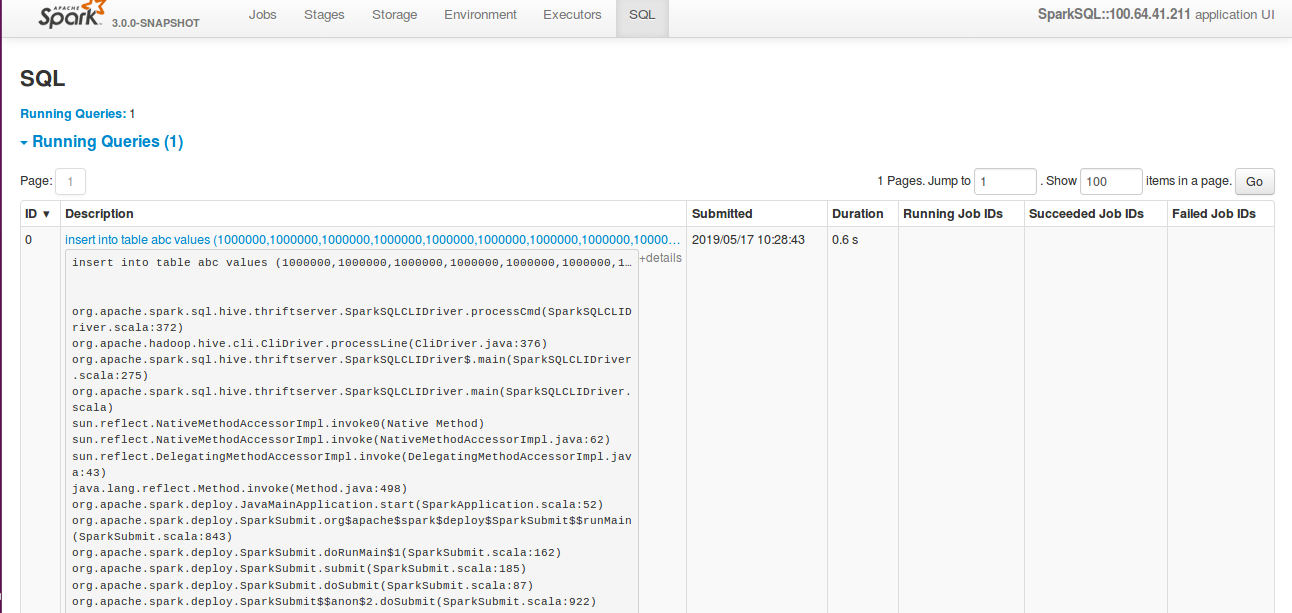

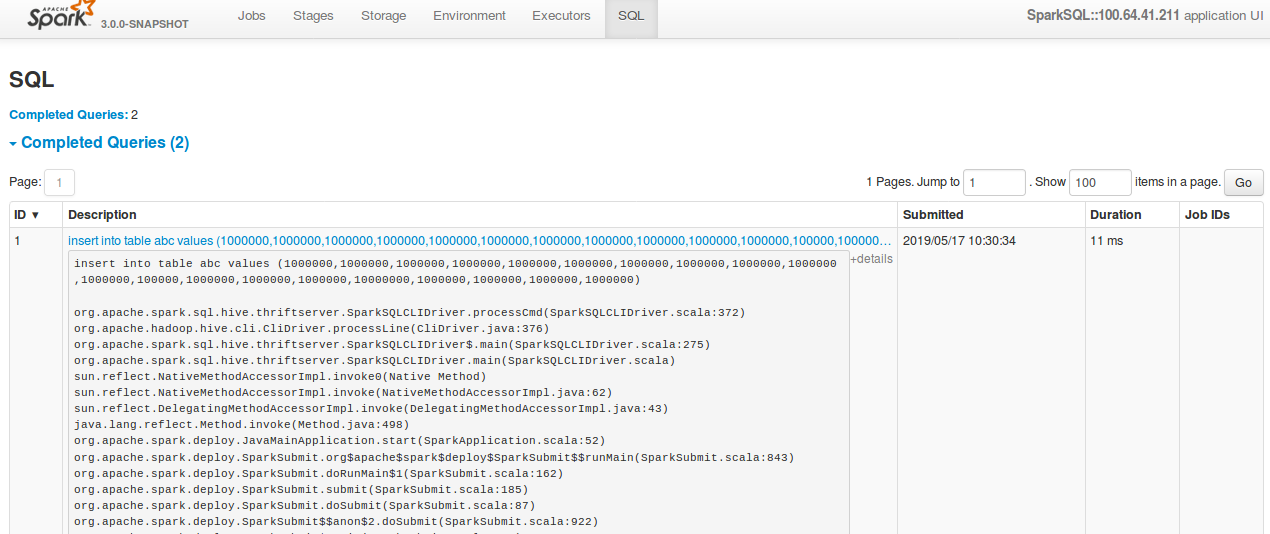

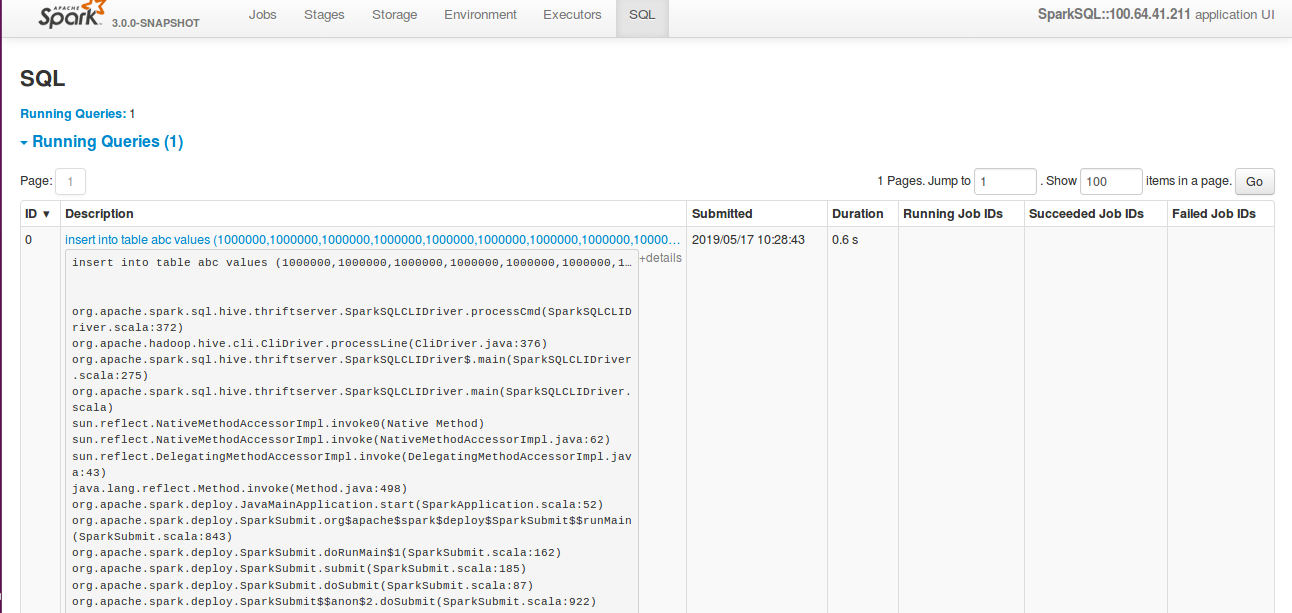

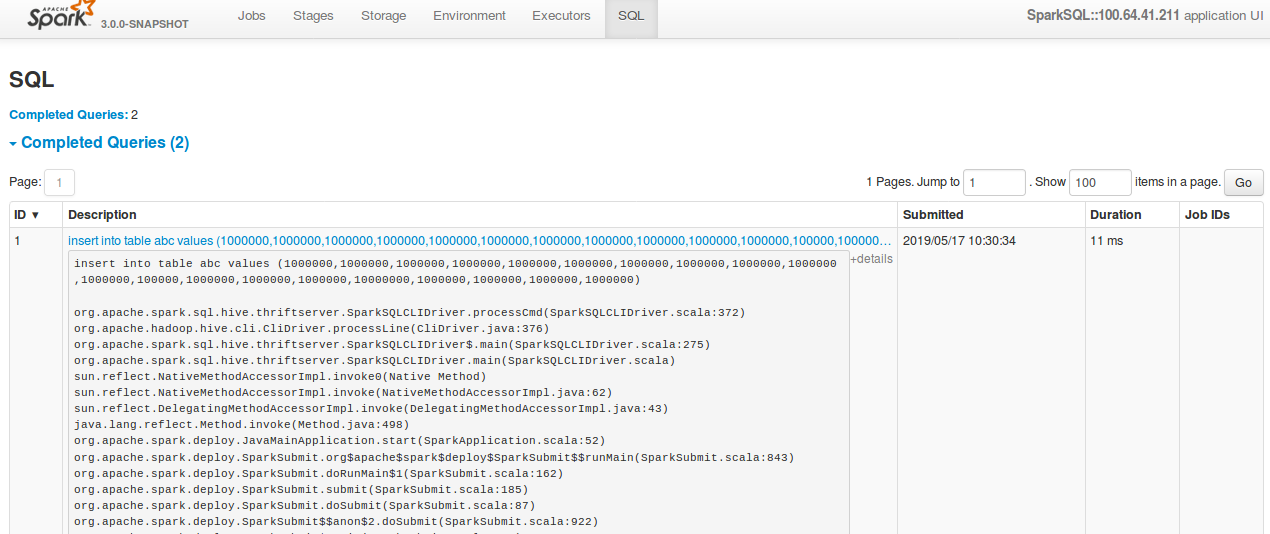

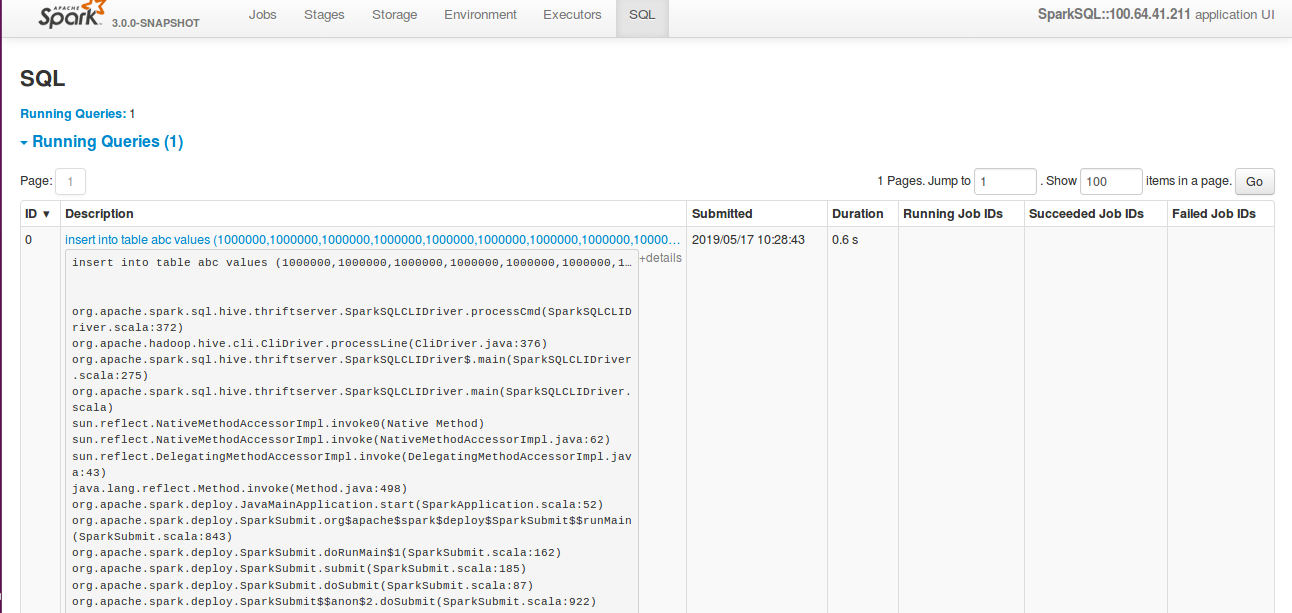

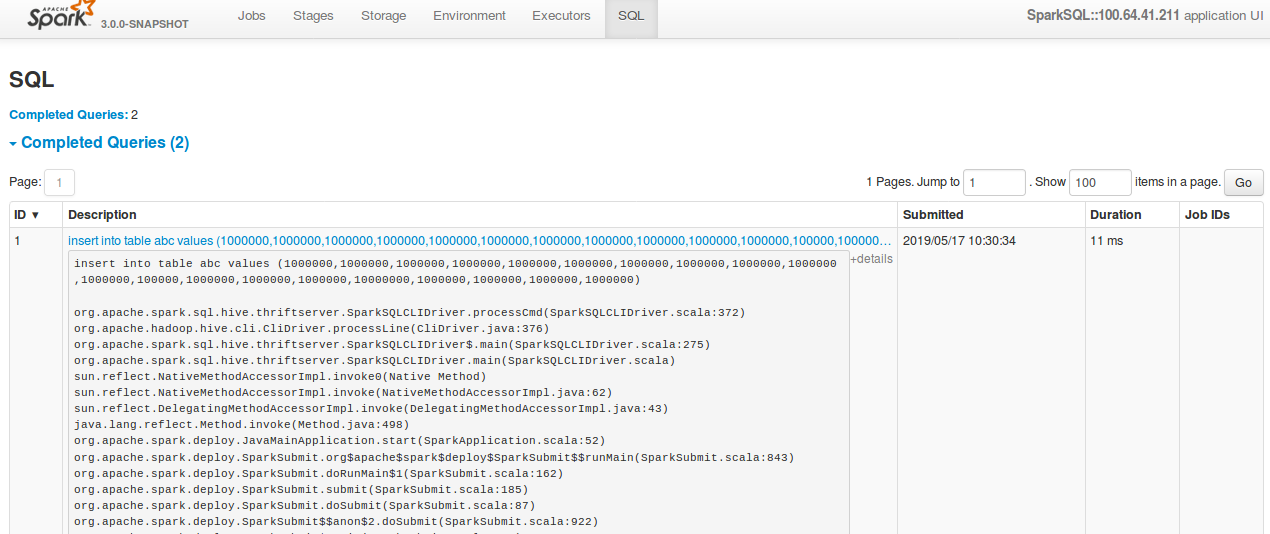

[GitHub] [spark] shahidki31 commented on a change in pull request #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format.

shahidki31 commented on a change in pull request #24609: [SPARK-27715][SQL] SQL

query details in UI dose not show in correct format.

URL: https://github.com/apache/spark/pull/24609#discussion_r284978946

##

File path:

sql/core/src/main/scala/org/apache/spark/sql/execution/ui/AllExecutionsPage.scala

##

@@ -382,13 +382,14 @@ private[ui] class ExecutionPagedTable(

}

private def descriptionCell(execution: SQLExecutionUIData): Seq[Node] = {

+val jobDescription = UIUtils.makeDescription(execution.description,

basePath, plainText = false)

val details = if (execution.details != null && execution.details.nonEmpty)

{

+details

++

-{execution.description}{execution.details}

+{jobDescription}{execution.details}

Review comment:

In the `details`, before this change it used to show entire query if the

query is large. But after the change, it seems not showing the entire sql query.

**After change:**

**Before change:**

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins removed a comment on issue #24565: [SPARK-27665][Core] Split fetch shuffle blocks protocol from OpenBlocks

AmplabJenkins removed a comment on issue #24565: [SPARK-27665][Core] Split fetch shuffle blocks protocol from OpenBlocks URL: https://github.com/apache/spark/pull/24565#issuecomment-493321249 Merged build finished. Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins removed a comment on issue #24565: [SPARK-27665][Core] Split fetch shuffle blocks protocol from OpenBlocks

AmplabJenkins removed a comment on issue #24565: [SPARK-27665][Core] Split fetch shuffle blocks protocol from OpenBlocks URL: https://github.com/apache/spark/pull/24565#issuecomment-493321253 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/SparkPullRequestBuilder/105480/ Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins commented on issue #24565: [SPARK-27665][Core] Split fetch shuffle blocks protocol from OpenBlocks

AmplabJenkins commented on issue #24565: [SPARK-27665][Core] Split fetch shuffle blocks protocol from OpenBlocks URL: https://github.com/apache/spark/pull/24565#issuecomment-493321249 Merged build finished. Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins commented on issue #24565: [SPARK-27665][Core] Split fetch shuffle blocks protocol from OpenBlocks

AmplabJenkins commented on issue #24565: [SPARK-27665][Core] Split fetch shuffle blocks protocol from OpenBlocks URL: https://github.com/apache/spark/pull/24565#issuecomment-493321253 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/SparkPullRequestBuilder/105480/ Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

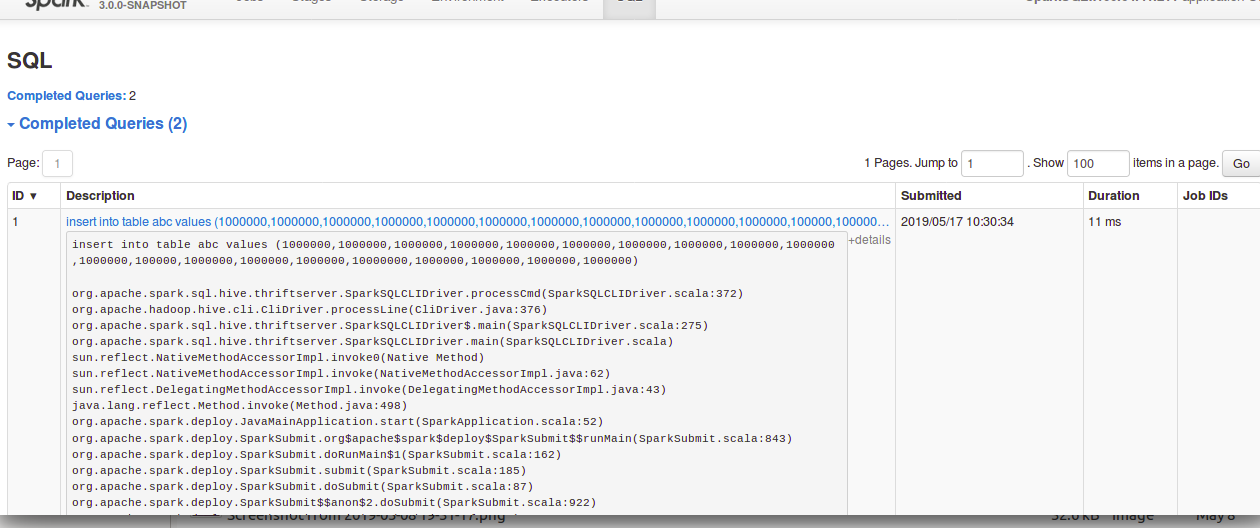

[GitHub] [spark] shahidki31 commented on a change in pull request #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format.

shahidki31 commented on a change in pull request #24609: [SPARK-27715][SQL] SQL

query details in UI dose not show in correct format.

URL: https://github.com/apache/spark/pull/24609#discussion_r284978946

##

File path:

sql/core/src/main/scala/org/apache/spark/sql/execution/ui/AllExecutionsPage.scala

##

@@ -382,13 +382,14 @@ private[ui] class ExecutionPagedTable(

}

private def descriptionCell(execution: SQLExecutionUIData): Seq[Node] = {

+val jobDescription = UIUtils.makeDescription(execution.description,

basePath, plainText = false)

val details = if (execution.details != null && execution.details.nonEmpty)

{

+details

++

-{execution.description}{execution.details}

+{jobDescription}{execution.details}

Review comment:

In the `details`, before this change it used to show entire query if the

query is large. But after the change, it seems not showing the entire sql query.

**Before change:**

**After change:**

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] shahidki31 commented on a change in pull request #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format.

shahidki31 commented on a change in pull request #24609: [SPARK-27715][SQL] SQL

query details in UI dose not show in correct format.

URL: https://github.com/apache/spark/pull/24609#discussion_r284978946

##

File path:

sql/core/src/main/scala/org/apache/spark/sql/execution/ui/AllExecutionsPage.scala

##

@@ -382,13 +382,14 @@ private[ui] class ExecutionPagedTable(

}

private def descriptionCell(execution: SQLExecutionUIData): Seq[Node] = {

+val jobDescription = UIUtils.makeDescription(execution.description,

basePath, plainText = false)

val details = if (execution.details != null && execution.details.nonEmpty)

{

+details

++

-{execution.description}{execution.details}

+{jobDescription}{execution.details}

Review comment:

In the `details`, before this change it used to show entire query if the

query is large. But after the change, it seems not showing the entire sql query.

**After change:**

**Before change:**

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] SparkQA removed a comment on issue #24565: [SPARK-27665][Core] Split fetch shuffle blocks protocol from OpenBlocks

SparkQA removed a comment on issue #24565: [SPARK-27665][Core] Split fetch shuffle blocks protocol from OpenBlocks URL: https://github.com/apache/spark/pull/24565#issuecomment-493301810 **[Test build #105480 has started](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/105480/testReport)** for PR 24565 at commit [`fddcd6c`](https://github.com/apache/spark/commit/fddcd6ce7a6a01c497a0f750c43fd4357fb1a2fd). This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

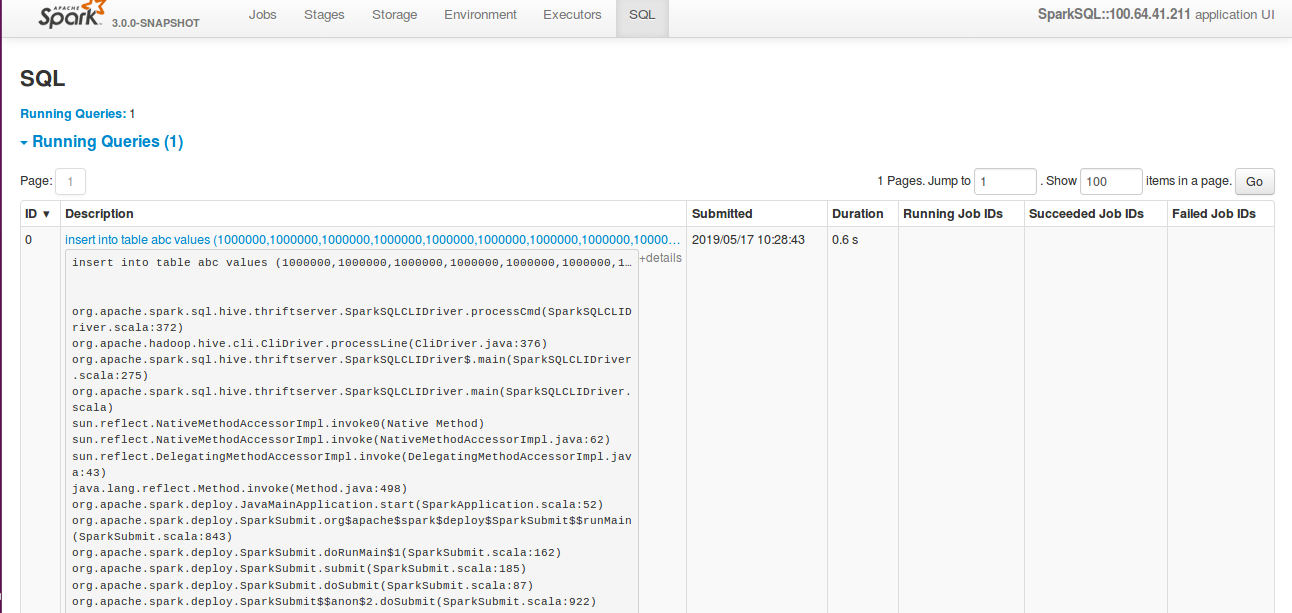

[GitHub] [spark] shahidki31 commented on a change in pull request #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format.

shahidki31 commented on a change in pull request #24609: [SPARK-27715][SQL] SQL

query details in UI dose not show in correct format.

URL: https://github.com/apache/spark/pull/24609#discussion_r284978946

##

File path:

sql/core/src/main/scala/org/apache/spark/sql/execution/ui/AllExecutionsPage.scala

##

@@ -382,13 +382,14 @@ private[ui] class ExecutionPagedTable(

}

private def descriptionCell(execution: SQLExecutionUIData): Seq[Node] = {

+val jobDescription = UIUtils.makeDescription(execution.description,

basePath, plainText = false)

val details = if (execution.details != null && execution.details.nonEmpty)

{

+details

++

-{execution.description}{execution.details}

+{jobDescription}{execution.details}

Review comment:

In the `details`, before this change it used to show entire query if the

query is large. But after the change it seems, it seems not showing the entire

sql query

Before change:

After change:

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] SparkQA commented on issue #24565: [SPARK-27665][Core] Split fetch shuffle blocks protocol from OpenBlocks

SparkQA commented on issue #24565: [SPARK-27665][Core] Split fetch shuffle blocks protocol from OpenBlocks URL: https://github.com/apache/spark/pull/24565#issuecomment-493320966 **[Test build #105480 has finished](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/105480/testReport)** for PR 24565 at commit [`fddcd6c`](https://github.com/apache/spark/commit/fddcd6ce7a6a01c497a0f750c43fd4357fb1a2fd). * This patch passes all tests. * This patch merges cleanly. * This patch adds no public classes. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

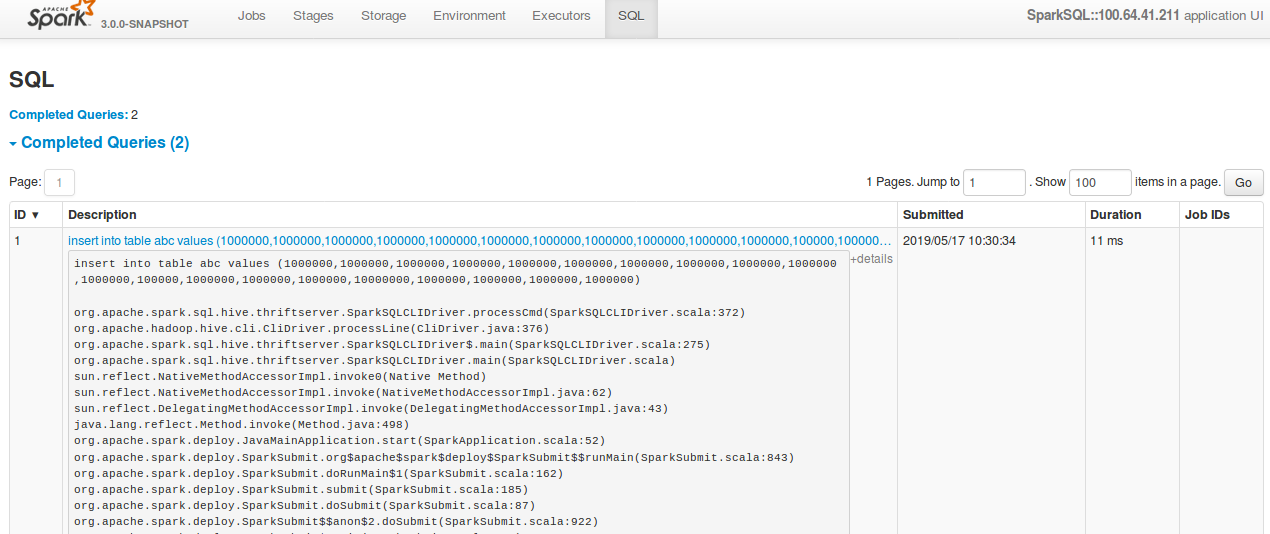

[GitHub] [spark] shahidki31 commented on a change in pull request #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format.

shahidki31 commented on a change in pull request #24609: [SPARK-27715][SQL] SQL

query details in UI dose not show in correct format.

URL: https://github.com/apache/spark/pull/24609#discussion_r284978946

##

File path:

sql/core/src/main/scala/org/apache/spark/sql/execution/ui/AllExecutionsPage.scala

##

@@ -382,13 +382,14 @@ private[ui] class ExecutionPagedTable(

}

private def descriptionCell(execution: SQLExecutionUIData): Seq[Node] = {

+val jobDescription = UIUtils.makeDescription(execution.description,

basePath, plainText = false)

val details = if (execution.details != null && execution.details.nonEmpty)

{

+details

++

-{execution.description}{execution.details}

+{jobDescription}{execution.details}

Review comment:

In the `details`, before this change it used to show entire query if the

query is large. But after the change it seems, it seems not showing the entire

sql query.

**Before change:**

**After change:**

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] shahidki31 commented on a change in pull request #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format.

shahidki31 commented on a change in pull request #24609: [SPARK-27715][SQL] SQL

query details in UI dose not show in correct format.

URL: https://github.com/apache/spark/pull/24609#discussion_r284978946

##

File path:

sql/core/src/main/scala/org/apache/spark/sql/execution/ui/AllExecutionsPage.scala

##

@@ -382,13 +382,14 @@ private[ui] class ExecutionPagedTable(

}

private def descriptionCell(execution: SQLExecutionUIData): Seq[Node] = {

+val jobDescription = UIUtils.makeDescription(execution.description,

basePath, plainText = false)

val details = if (execution.details != null && execution.details.nonEmpty)

{

+details

++

-{execution.description}{execution.details}

+{jobDescription}{execution.details}

Review comment:

It the `details`, before this change it used to show entire query if the

query is large. But after the change it seems, it seems not showing the entire

sql query

Before change:

After change:

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins removed a comment on issue #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format.

AmplabJenkins removed a comment on issue #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format. URL: https://github.com/apache/spark/pull/24609#issuecomment-493319728 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/SparkPullRequestBuilder/105479/ Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins removed a comment on issue #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format.

AmplabJenkins removed a comment on issue #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format. URL: https://github.com/apache/spark/pull/24609#issuecomment-493319721 Merged build finished. Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins commented on issue #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format.

AmplabJenkins commented on issue #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format. URL: https://github.com/apache/spark/pull/24609#issuecomment-493319728 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/SparkPullRequestBuilder/105479/ Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins commented on issue #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format.

AmplabJenkins commented on issue #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format. URL: https://github.com/apache/spark/pull/24609#issuecomment-493319721 Merged build finished. Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] SparkQA removed a comment on issue #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format.

SparkQA removed a comment on issue #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format. URL: https://github.com/apache/spark/pull/24609#issuecomment-493291753 **[Test build #105479 has started](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/105479/testReport)** for PR 24609 at commit [`ca1a1f7`](https://github.com/apache/spark/commit/ca1a1f787ea17aebeb0910e39c24b44268126bb7). This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] SparkQA commented on issue #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format.

SparkQA commented on issue #24609: [SPARK-27715][SQL] SQL query details in UI dose not show in correct format. URL: https://github.com/apache/spark/pull/24609#issuecomment-493319411 **[Test build #105479 has finished](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/105479/testReport)** for PR 24609 at commit [`ca1a1f7`](https://github.com/apache/spark/commit/ca1a1f787ea17aebeb0910e39c24b44268126bb7). * This patch passes all tests. * This patch merges cleanly. * This patch adds no public classes. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] wenxuanguan commented on a change in pull request #24631: [MINOR][CORE][DOC]Avoid hardcoded configs and fix kafka sink write semantics in document

wenxuanguan commented on a change in pull request #24631: [MINOR][CORE][DOC]Avoid hardcoded configs and fix kafka sink write semantics in document URL: https://github.com/apache/spark/pull/24631#discussion_r284976140 ## File path: docs/structured-streaming-kafka-integration.md ## @@ -441,7 +441,7 @@ Apache Kafka only supports at least once write semantics. Consequently, when wri or Batch Queries---to Kafka, some records may be duplicated; this can happen, for example, if Kafka needs to retry a message that was not acknowledged by a Broker, even though that Broker received and wrote the message record. Structured Streaming cannot prevent such duplicates from occurring due to these Kafka write semantics. However, -if writing the query is successful, then you can assume that the query output was written at least once. A possible +if writing the query is successful, then you can assume that the query output was written exactly once. A possible Review comment: Thanks for your reply. I thought this describes the situation that query writes to kafka successfully and no records are duplicated. How about change to `So if writing the query is successful, then you can assume that the query output was written at least once`, which will not be confused by `However` This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on a change in pull request #24631: [MINOR][CORE][DOC]Avoid hardcoded configs and fix kafka sink write semantics in document

dongjoon-hyun commented on a change in pull request #24631: [MINOR][CORE][DOC]Avoid hardcoded configs and fix kafka sink write semantics in document URL: https://github.com/apache/spark/pull/24631#discussion_r284972996 ## File path: docs/structured-streaming-kafka-integration.md ## @@ -441,7 +441,7 @@ Apache Kafka only supports at least once write semantics. Consequently, when wri or Batch Queries---to Kafka, some records may be duplicated; this can happen, for example, if Kafka needs to retry a message that was not acknowledged by a Broker, even though that Broker received and wrote the message record. Structured Streaming cannot prevent such duplicates from occurring due to these Kafka write semantics. However, -if writing the query is successful, then you can assume that the query output was written at least once. A possible +if writing the query is successful, then you can assume that the query output was written exactly once. A possible Review comment: Hi, @wenxuanguan . This looks wrong in this context. Could you explain your thought? This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] gengliangwang commented on a change in pull request #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC

gengliangwang commented on a change in pull request #24598: [SPARK-27699][SQL]

Partially push down disjunctive predicated in Parquet/ORC

URL: https://github.com/apache/spark/pull/24598#discussion_r284972693

##

File path:

sql/core/v1.2.1/src/main/scala/org/apache/spark/sql/execution/datasources/orc/OrcFilters.scala

##

@@ -75,10 +75,42 @@ private[sql] object OrcFilters extends OrcFiltersBase {

schema: StructType,

dataTypeMap: Map[String, DataType],

filters: Seq[Filter]): Seq[Filter] = {

-for {

- filter <- filters

- _ <- buildSearchArgument(dataTypeMap, filter, newBuilder())

-} yield filter

+import org.apache.spark.sql.sources._

+

+def convertibleFiltersHelper(

Review comment:

This is a helper method for converting Filter to Expression recursively. It

can also be `_convertibleFilters` or `convertibleFilters0`...

Here it is following `createFilterHelper` in `ParquetFilters`.

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun closed pull request #24529: [SPARK-27634][Structured Streaming] deleteCheckpointOnStop should be configurable

dongjoon-hyun closed pull request #24529: [SPARK-27634][Structured Streaming] deleteCheckpointOnStop should be configurable URL: https://github.com/apache/spark/pull/24529 This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on issue #24529: [SPARK-27634][Structured Streaming] deleteCheckpointOnStop should be configurable

dongjoon-hyun commented on issue #24529: [SPARK-27634][Structured Streaming] deleteCheckpointOnStop should be configurable URL: https://github.com/apache/spark/pull/24529#issuecomment-493312396 Hi, @gentlewangyu . Thank you for making a PR. But it seems that Apache Spark already implemented the required option. I'll close this PR and Apache JIRA issue as a duplicate of SPARK-26389 . Sorry for closing your PR. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on a change in pull request #24605: [SPARK-27711][CORE] Unset InputFileBlockHolder at the end of tasks

dongjoon-hyun commented on a change in pull request #24605: [SPARK-27711][CORE]

Unset InputFileBlockHolder at the end of tasks

URL: https://github.com/apache/spark/pull/24605#discussion_r284970207

##

File path: python/pyspark/sql/tests/test_functions.py

##

@@ -278,6 +279,22 @@ def test_sort_with_nulls_order(self):

df.select(df.name).orderBy(functions.desc_nulls_last('name')).collect(),

[Row(name=u'Tom'), Row(name=u'Alice'), Row(name=None)])

+def test_input_file_name_reset_for_rdd(self):

+from pyspark.sql.functions import udf, input_file_name

+rdd =

self.sc.textFile('python/test_support/hello/hello.txt').map(lambda x: {'data':

x})

+df = self.spark.createDataFrame(rdd, StructType([StructField('data',

StringType(), True)]))

+df.select(input_file_name().alias('file')).collect()

+

+non_file_df = self.spark.range(0, 100, 1,

100).select(input_file_name().alias('file'))

+

+results = non_file_df.collect()

+self.assertTrue(len(results) == 100)

+

+# [SC-12160]: if everything was properly reset after the last job,

this should return

Review comment:

+1 for @HyukjinKwon 's comment. Is this the internal issue tracker ID?

Could you update the PR, @jose-torres ?

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun closed pull request #24629: [SPARK-27752][Core] Upgrade lz4-java from 1.5.1 to 1.6.0

dongjoon-hyun closed pull request #24629: [SPARK-27752][Core] Upgrade lz4-java from 1.5.1 to 1.6.0 URL: https://github.com/apache/spark/pull/24629 This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] cloud-fan commented on a change in pull request #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC

cloud-fan commented on a change in pull request #24598: [SPARK-27699][SQL]

Partially push down disjunctive predicated in Parquet/ORC

URL: https://github.com/apache/spark/pull/24598#discussion_r284969751

##

File path:

sql/core/v1.2.1/src/main/scala/org/apache/spark/sql/execution/datasources/orc/OrcFilters.scala

##

@@ -75,10 +75,42 @@ private[sql] object OrcFilters extends OrcFiltersBase {

schema: StructType,

dataTypeMap: Map[String, DataType],

filters: Seq[Filter]): Seq[Filter] = {

-for {

- filter <- filters

- _ <- buildSearchArgument(dataTypeMap, filter, newBuilder())

-} yield filter

+import org.apache.spark.sql.sources._

+

+def convertibleFiltersHelper(

Review comment:

it's weird to call a method "helper", what does it do?

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins removed a comment on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC

AmplabJenkins removed a comment on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC URL: https://github.com/apache/spark/pull/24598#issuecomment-493308277 Merged build finished. Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins removed a comment on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC

AmplabJenkins removed a comment on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC URL: https://github.com/apache/spark/pull/24598#issuecomment-493308285 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/SparkPullRequestBuilder/105477/ Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] SparkQA commented on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC

SparkQA commented on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC URL: https://github.com/apache/spark/pull/24598#issuecomment-493308028 **[Test build #105477 has finished](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/105477/testReport)** for PR 24598 at commit [`90b0b69`](https://github.com/apache/spark/commit/90b0b697246251b1e0b8acfe07f53f1153aefe45). * This patch passes all tests. * This patch merges cleanly. * This patch adds no public classes. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] SparkQA removed a comment on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC

SparkQA removed a comment on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC URL: https://github.com/apache/spark/pull/24598#issuecomment-493278220 **[Test build #105477 has started](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/105477/testReport)** for PR 24598 at commit [`90b0b69`](https://github.com/apache/spark/commit/90b0b697246251b1e0b8acfe07f53f1153aefe45). This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins commented on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC

AmplabJenkins commented on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC URL: https://github.com/apache/spark/pull/24598#issuecomment-493308285 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/SparkPullRequestBuilder/105477/ Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins commented on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC

AmplabJenkins commented on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC URL: https://github.com/apache/spark/pull/24598#issuecomment-493308277 Merged build finished. Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on issue #24630: [SPARK-27754][K8S] Introduce config for driver request cores

dongjoon-hyun commented on issue #24630: [SPARK-27754][K8S] Introduce config for driver request cores URL: https://github.com/apache/spark/pull/24630#issuecomment-493307559 BTW, @arunmahadevan . The PR title, `Introduce config for driver request cores`, seems to claim too much. We already have a configuration, `spark.driver.cores`. And, it's intentionally designed like that. This PR should focus on the new benefits. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on a change in pull request #24630: [SPARK-27754][K8S] Introduce config for driver request cores

dongjoon-hyun commented on a change in pull request #24630: [SPARK-27754][K8S]

Introduce config for driver request cores

URL: https://github.com/apache/spark/pull/24630#discussion_r284967932

##

File path:

resource-managers/kubernetes/core/src/test/scala/org/apache/spark/deploy/k8s/features/BasicDriverFeatureStepSuite.scala

##

@@ -117,6 +117,33 @@ class BasicDriverFeatureStepSuite extends SparkFunSuite {

assert(featureStep.getAdditionalPodSystemProperties() ===

expectedSparkConf)

}

+ test("Check driver pod respects kubernetes driver request cores") {

+val sparkConf = new SparkConf()

+ .set(KUBERNETES_DRIVER_POD_NAME, "spark-driver-pod")

+ .set(CONTAINER_IMAGE, "spark-driver:latest")

+

+val basePod = SparkPod.initialPod()

+val requests1 = new

BasicDriverFeatureStep(KubernetesTestConf.createDriverConf(sparkConf))

+ .configurePod(basePod)

+ .container.getResources

+ .getRequests.asScala

+assert(requests1("cpu").getAmount === "1")

Review comment:

Can we avoid this assumption? You had better get the default value of

`DRIVER_CORES` and compare with that.

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on a change in pull request #24630: [SPARK-27754][K8S] Introduce config for driver request cores

dongjoon-hyun commented on a change in pull request #24630: [SPARK-27754][K8S]

Introduce config for driver request cores

URL: https://github.com/apache/spark/pull/24630#discussion_r284968318

##

File path:

resource-managers/kubernetes/core/src/test/scala/org/apache/spark/deploy/k8s/features/BasicDriverFeatureStepSuite.scala

##

@@ -117,6 +117,33 @@ class BasicDriverFeatureStepSuite extends SparkFunSuite {

assert(featureStep.getAdditionalPodSystemProperties() ===

expectedSparkConf)

}

+ test("Check driver pod respects kubernetes driver request cores") {

+val sparkConf = new SparkConf()

+ .set(KUBERNETES_DRIVER_POD_NAME, "spark-driver-pod")

+ .set(CONTAINER_IMAGE, "spark-driver:latest")

+

+val basePod = SparkPod.initialPod()

+val requests1 = new

BasicDriverFeatureStep(KubernetesTestConf.createDriverConf(sparkConf))

+ .configurePod(basePod)

+ .container.getResources

+ .getRequests.asScala

+assert(requests1("cpu").getAmount === "1")

+

+sparkConf.set(KUBERNETES_DRIVER_REQUEST_CORES, "0.1")

+val requests2 = new

BasicDriverFeatureStep(KubernetesTestConf.createDriverConf(sparkConf))

+ .configurePod(basePod)

+ .container.getResources

+ .getRequests.asScala

+assert(requests2("cpu").getAmount === "0.1")

+

+sparkConf.set(KUBERNETES_DRIVER_REQUEST_CORES, "100m")

+val requests3 = new

BasicDriverFeatureStep(KubernetesTestConf.createDriverConf(sparkConf))

+ .configurePod(basePod)

+ .container.getResources

+ .getRequests.asScala

+assert(requests3("cpu").getAmount === "100m")

Review comment:

If you don't mind, could you avoid repetitions like the following?

```scala

Seq("0.1", "100m").foreach { value =>

sparkConf.set(KUBERNETES_DRIVER_REQUEST_CORES, value)

val requests = new

BasicDriverFeatureStep(KubernetesTestConf.createDriverConf(sparkConf))

.configurePod(basePod)

.container.getResources

.getRequests.asScala

assert(requests("cpu").getAmount === value)

}

```

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on a change in pull request #24630: [SPARK-27754][K8S] Introduce config for driver request cores

dongjoon-hyun commented on a change in pull request #24630: [SPARK-27754][K8S]

Introduce config for driver request cores

URL: https://github.com/apache/spark/pull/24630#discussion_r284968318

##

File path:

resource-managers/kubernetes/core/src/test/scala/org/apache/spark/deploy/k8s/features/BasicDriverFeatureStepSuite.scala

##

@@ -117,6 +117,33 @@ class BasicDriverFeatureStepSuite extends SparkFunSuite {

assert(featureStep.getAdditionalPodSystemProperties() ===

expectedSparkConf)

}

+ test("Check driver pod respects kubernetes driver request cores") {

+val sparkConf = new SparkConf()

+ .set(KUBERNETES_DRIVER_POD_NAME, "spark-driver-pod")

+ .set(CONTAINER_IMAGE, "spark-driver:latest")

+

+val basePod = SparkPod.initialPod()

+val requests1 = new

BasicDriverFeatureStep(KubernetesTestConf.createDriverConf(sparkConf))

+ .configurePod(basePod)

+ .container.getResources

+ .getRequests.asScala

+assert(requests1("cpu").getAmount === "1")

+

+sparkConf.set(KUBERNETES_DRIVER_REQUEST_CORES, "0.1")

+val requests2 = new

BasicDriverFeatureStep(KubernetesTestConf.createDriverConf(sparkConf))

+ .configurePod(basePod)

+ .container.getResources

+ .getRequests.asScala

+assert(requests2("cpu").getAmount === "0.1")

+

+sparkConf.set(KUBERNETES_DRIVER_REQUEST_CORES, "100m")

+val requests3 = new

BasicDriverFeatureStep(KubernetesTestConf.createDriverConf(sparkConf))

+ .configurePod(basePod)

+ .container.getResources

+ .getRequests.asScala

+assert(requests3("cpu").getAmount === "100m")

Review comment:

If you don't mind, could you avoid repetitions like the following?

```

Seq("0.1", "100m").foreach { value =>

sparkConf.set(KUBERNETES_DRIVER_REQUEST_CORES, value)

val requests = new

BasicDriverFeatureStep(KubernetesTestConf.createDriverConf(sparkConf))

.configurePod(basePod)

.container.getResources

.getRequests.asScala

assert(requests("cpu").getAmount === value)

}

```

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on a change in pull request #24630: [SPARK-27754][K8S] Introduce config for driver request cores

dongjoon-hyun commented on a change in pull request #24630: [SPARK-27754][K8S]

Introduce config for driver request cores

URL: https://github.com/apache/spark/pull/24630#discussion_r284966698

##

File path:

resource-managers/kubernetes/core/src/main/scala/org/apache/spark/deploy/k8s/features/BasicDriverFeatureStep.scala

##

@@ -44,6 +44,11 @@ private[spark] class BasicDriverFeatureStep(conf:

KubernetesDriverConf)

// CPU settings

private val driverCpuCores = conf.get(DRIVER_CORES.key, "1")

+ private val driverCoresRequest = if

(conf.contains(KUBERNETES_DRIVER_REQUEST_CORES)) {

+conf.get(KUBERNETES_DRIVER_REQUEST_CORES).get

+ } else {

+driverCpuCores

+ }

Review comment:

Thank you for making a PR, @arunmahadevan . Could you rewrite like the

following one-liner?

```scala

- private val driverCoresRequest = if

(conf.contains(KUBERNETES_DRIVER_REQUEST_CORES)) {

-conf.get(KUBERNETES_DRIVER_REQUEST_CORES).get

- } else {

-driverCpuCores

- }

+ private val driverCoresRequest =

conf.get(KUBERNETES_DRIVER_REQUEST_CORES.key, driverCpuCores)

```

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on a change in pull request #24630: [SPARK-27754][K8S] Introduce config for driver request cores

dongjoon-hyun commented on a change in pull request #24630: [SPARK-27754][K8S] Introduce config for driver request cores URL: https://github.com/apache/spark/pull/24630#discussion_r284967598 ## File path: docs/running-on-kubernetes.md ## @@ -793,6 +793,15 @@ See the [configuration page](configuration.html) for information on Spark config Interval between reports of the current Spark job status in cluster mode. + + spark.kubernetes.driver.request.cores + (none) + +Specify the cpu request for the driver pod. Values conform to the Kubernetes https://kubernetes.io/docs/concepts/configuration/manage-compute-resources-container/#meaning-of-cpu";>convention. +Example values include 0.1, 500m, 1.5, 5, etc., with the definition of cpu units documented in https://kubernetes.io/docs/tasks/configure-pod-container/assign-cpu-resource/#cpu-units";>CPU units. +This takes precedence over spark.driver.cores for specifying the driver pod cpu request if set. Review comment: So, is the goal of this PR to support fractional cpu requests (like `0.5`) because `spark.driver.cores` is an integral configuration? This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on a change in pull request #24630: [SPARK-27754][K8S] Introduce config for driver request cores

dongjoon-hyun commented on a change in pull request #24630: [SPARK-27754][K8S] Introduce config for driver request cores URL: https://github.com/apache/spark/pull/24630#discussion_r284967598 ## File path: docs/running-on-kubernetes.md ## @@ -793,6 +793,15 @@ See the [configuration page](configuration.html) for information on Spark config Interval between reports of the current Spark job status in cluster mode. + + spark.kubernetes.driver.request.cores + (none) + +Specify the cpu request for the driver pod. Values conform to the Kubernetes https://kubernetes.io/docs/concepts/configuration/manage-compute-resources-container/#meaning-of-cpu";>convention. +Example values include 0.1, 500m, 1.5, 5, etc., with the definition of cpu units documented in https://kubernetes.io/docs/tasks/configure-pod-container/assign-cpu-resource/#cpu-units";>CPU units. +This takes precedence over spark.driver.cores for specifying the driver pod cpu request if set. Review comment: So, is the goal of this PR to support fractional cpu requests (like `0.5`) and the unit (like `500m`) because `spark.driver.cores` is an integral configuration? This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins removed a comment on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC

AmplabJenkins removed a comment on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC URL: https://github.com/apache/spark/pull/24598#issuecomment-493303740 Merged build finished. Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins removed a comment on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC

AmplabJenkins removed a comment on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC URL: https://github.com/apache/spark/pull/24598#issuecomment-493303746 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/SparkPullRequestBuilder/105476/ Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins commented on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC

AmplabJenkins commented on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC URL: https://github.com/apache/spark/pull/24598#issuecomment-493303740 Merged build finished. Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] AmplabJenkins commented on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC

AmplabJenkins commented on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC URL: https://github.com/apache/spark/pull/24598#issuecomment-493303746 Test PASSed. Refer to this link for build results (access rights to CI server needed): https://amplab.cs.berkeley.edu/jenkins//job/SparkPullRequestBuilder/105476/ Test PASSed. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun closed pull request #24621: [WIP][SPARK-27738][BUILD][test-hadoop3.2] Upgrade the built-in Hive to 2.3.5 for hadoop-3.2

dongjoon-hyun closed pull request #24621: [WIP][SPARK-27738][BUILD][test-hadoop3.2] Upgrade the built-in Hive to 2.3.5 for hadoop-3.2 URL: https://github.com/apache/spark/pull/24621 This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on issue #24621: [WIP][SPARK-27738][BUILD][test-hadoop3.2] Upgrade the built-in Hive to 2.3.5 for hadoop-3.2

dongjoon-hyun commented on issue #24621: [WIP][SPARK-27738][BUILD][test-hadoop3.2] Upgrade the built-in Hive to 2.3.5 for hadoop-3.2 URL: https://github.com/apache/spark/pull/24621#issuecomment-493303507 We should show the correct value if you don't have any reason to disguise this. And let's discuss on #24620 together. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] SparkQA removed a comment on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC

SparkQA removed a comment on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC URL: https://github.com/apache/spark/pull/24598#issuecomment-493271725 **[Test build #105476 has started](https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/105476/testReport)** for PR 24598 at commit [`4d84060`](https://github.com/apache/spark/commit/4d840607490b52ebdf65a103a3502a1442fc2198). This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] SparkQA commented on issue #24598: [SPARK-27699][SQL] Partially push down disjunctive predicated in Parquet/ORC