[GitHub] [flink] XuQianJin-Stars commented on a change in pull request #11186: [FLINK-16200][sql] Support JSON_EXISTS for blink planner

XuQianJin-Stars commented on a change in pull request #11186:

[FLINK-16200][sql] Support JSON_EXISTS for blink planner

URL: https://github.com/apache/flink/pull/11186#discussion_r403428053

##

File path:

flink-table/flink-table-planner-blink/src/test/scala/org/apache/flink/table/planner/expressions/JsonFunctionsTest.scala

##

@@ -125,4 +127,53 @@ class JsonFunctionsTest extends ExpressionTestBase {
     }
   }
+  @Test
+  def testJsonExists(): Unit = {
+    testSqlApi("json_exists('{\"foo\":\"bar\"}', "
+      + "'strict $.foo' false on error)", "true")
+    testSqlApi("json_exists('{\"foo\":\"bar\"}', "
+      + "'strict $.foo' true on error)", "true")
+    testSqlApi("json_exists('{\"foo\":\"bar\"}', "
+      + "'strict $.foo' unknown on error)", "true")
+    testSqlApi("json_exists('{\"foo\":\"bar\"}', "
+      + "'lax $.foo' false on error)", "true")
+    testSqlApi("json_exists('{\"foo\":\"bar\"}', "
+      + "'lax $.foo' true on error)", "true")
+    testSqlApi("json_exists('{\"foo\":\"bar\"}', "
+      + "'lax $.foo' unknown on error)", "true")
+    testSqlApi("json_exists('{}', "
+      + "'invalid $.foo' false on error)", "false")
+    testSqlApi("json_exists('{}', "
+      + "'invalid $.foo' true on error)", "true")
+    testSqlApi("json_exists('{}', "
+      + "'invalid $.foo' unknown on error)", "null")
+    testSqlApi("json_exists(cast('{\"foo\":\"bar\"}' as varchar), "
+      + "'strict $.foo1')", "false")
+
+    // not exists
+    testSqlApi("json_exists('{\"foo\":\"bar\"}', "
+      + "'strict $.foo1' false on error)", "false")
+    testSqlApi("json_exists('{\"foo\":\"bar\"}', "
+      + "'strict $.foo1' true on error)", "true")
+    testSqlApi("json_exists('{\"foo\":\"bar\"}', "
+      + "'strict $.foo1' unknown on error)", "null")
+    testSqlApi("json_exists('{\"foo\":\"bar\"}', "
+      + "'lax $.foo1' true on error)", "false")
+    testSqlApi("json_exists('{\"foo\":\"bar\"}', "
+      + "'lax $.foo1' false on error)", "false")
+    testSqlApi("json_exists('{\"foo\":\"bar\"}', "
+      + "'lax $.foo1' error on error)", "false")
+    testSqlApi("json_exists('{\"foo\":\"bar\"}', "
+      + "'lax $.foo1' unknown on error)", "false")
+
+    // nulls
+    testSqlApi("json_exists(cast(null as varchar), 'lax $' unknown on error)", "null")
+  }
+
+  @Test
+  def testJsonFuncError(): Unit = {
+    expectedException.expect(classOf[CodeGenException])
+    expectedException.expectMessage(startsWith("Unsupported call: JSON_EXISTS"))

Review comment:

> This exception message is still misleading. We already support
> `JSON_EXISTS`, so why does the exception say it is unsupported? I think we
> should improve the exception to give a more understandable message, e.g.
> `the json path 'lax $' is illegal.`

The exception is raised because the call `JSON_EXISTS (INT, CHAR (5) NOT NULL, RAW
('org.apache.calcite.sql.SqlJsonExistsErrorBehavior',?)` is not supported.
An illegal JSON path does not throw this exception; it returns `false`, like
`verifyException`.
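To make the expected results in `testJsonExists` easier to scan, here is a small Scala model of the behavior those assertions encode: in lax mode a missing key simply yields FALSE and the ON ERROR clause never fires, while in strict mode a missing key, or an illegal path mode such as `invalid`, is an error resolved by the ON ERROR clause, with FALSE ON ERROR as the default (as the `'strict $.foo1'` case without a clause shows). This is a hypothetical sketch: `JsonExistsModel`, the `OnError` cases, and reducing a document to its set of top-level keys are assumptions for illustration, not Flink's or Calcite's implementation.

```scala
// Hypothetical model of the JSON_EXISTS results asserted in testJsonExists.
// It does not parse JSON: a document is reduced to its set of top-level keys,
// and only paths of the form "<mode> $.<key>" are considered.
object JsonExistsModel {
  sealed trait OnError
  case object FalseOnError extends OnError
  case object TrueOnError extends OnError
  case object UnknownOnError extends OnError
  case object ErrorOnError extends OnError

  /** Some(true) / Some(false) for TRUE / FALSE, None for UNKNOWN (SQL NULL). */
  def jsonExists(doc: Option[Set[String]], path: String,
                 onError: OnError = FalseOnError): Option[Boolean] =
    doc match {
      case None => None // NULL input yields NULL regardless of the path
      case Some(keys) =>
        val (mode, rest) = path.span(_ != ' ')
        val key = rest.trim.stripPrefix("$.")
        mode match {
          case "lax" => Some(keys.contains(key)) // a missing key is just FALSE
          case "strict" if keys.contains(key) => Some(true)
          case _ => onErrorResult(onError) // strict miss or illegal path mode
        }
    }

  private def onErrorResult(onError: OnError): Option[Boolean] = onError match {
    case FalseOnError   => Some(false)
    case TrueOnError    => Some(true)
    case UnknownOnError => None
    case ErrorOnError   => throw new IllegalArgumentException("JSON_EXISTS: path error")
  }
}
```

Each row of the test maps to one call; for example, `'strict $.foo1' unknown on error` corresponds to `jsonExists(Some(Set("foo")), "strict $.foo1", UnknownOnError)`, which yields `None` (SQL NULL).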

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services


[GitHub] [flink] flinkbot edited a comment on issue #11630: [FLINK-16921][e2e] Describe all resources and show pods logs before cleanup when failed

flinkbot edited a comment on issue #11630: [FLINK-16921][e2e] Describe all resources and show pods logs before cleanup when failed

URL: https://github.com/apache/flink/pull/11630#issuecomment-608974004

## CI report:

* 9a9073f279a1bc2bb44352199134c372825cb769 Travis: [PENDING](https://travis-ci.com/github/flink-ci/flink/builds/158328185) Azure: [PENDING](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7054)

Bot commands

The @flinkbot bot supports the following commands:

- `@flinkbot run travis` re-run the last Travis build
- `@flinkbot run azure` re-run the last Azure build

[GitHub] [flink] flinkbot commented on issue #11630: [FLINK-16921][e2e] Describe all resources and show pods logs before cleanup when failed

flinkbot commented on issue #11630: [FLINK-16921][e2e] Describe all resources and show pods logs before cleanup when failed

URL: https://github.com/apache/flink/pull/11630#issuecomment-608974004

## CI report:

* 9a9073f279a1bc2bb44352199134c372825cb769 UNKNOWN

[GitHub] [flink] flinkbot commented on issue #11630: [FLINK-16921][e2e] Describe all resources and show pods logs before cleanup when failed

flinkbot commented on issue #11630: [FLINK-16921][e2e] Describe all resources and show pods logs before cleanup when failed

URL: https://github.com/apache/flink/pull/11630#issuecomment-608972585

Thanks a lot for your contribution to the Apache Flink project. I'm the @flinkbot. I help the community review your pull request. We will use this comment to track the progress of the review.

## Automated Checks

Last check on commit 9a9073f279a1bc2bb44352199134c372825cb769 (Sat Apr 04 04:38:33 UTC 2020)

**Warnings:**

* No documentation files were touched! Remember to keep the Flink docs up to date!

Mention the bot in a comment to re-run the automated checks.

## Review Progress

* ❓ 1. The [description] looks good.
* ❓ 2. There is [consensus] that the contribution should go into Flink.
* ❓ 3. Needs [attention] from.
* ❓ 4. The change fits into the overall [architecture].
* ❓ 5. Overall code [quality] is good.

Please see the [Pull Request Review Guide](https://flink.apache.org/contributing/reviewing-prs.html) for a full explanation of the review process.

The Bot is tracking the review progress through labels. Labels are applied according to the order of the review items. For consensus, approval by a Flink committer or PMC member is required.

Bot commands

The @flinkbot bot supports the following commands:

- `@flinkbot approve description` to approve one or more aspects (aspects: `description`, `consensus`, `architecture` and `quality`)
- `@flinkbot approve all` to approve all aspects
- `@flinkbot approve-until architecture` to approve everything until `architecture`
- `@flinkbot attention @username1 [@username2 ..]` to require somebody's attention
- `@flinkbot disapprove architecture` to remove an approval you gave earlier

[GitHub] [flink] wangyang0918 opened a new pull request #11630: [FLINK-16921][e2e] Describe all resources and show pods logs before cleanup when failed

wangyang0918 opened a new pull request #11630: [FLINK-16921][e2e] Describe all resources and show pods logs before cleanup when failed

URL: https://github.com/apache/flink/pull/11630

## What is the purpose of the change

Pods may stay pending because of insufficient resources, disk pressure, or other problems, in which case `wait_rest_endpoint_up` times out. Describing all resources helps to debug these problems. Some failures still occur that we cannot reproduce in a local environment (Mac/Linux), so this PR is opened to run the e2e tests more times and find the root cause.

## Brief change log

* Describe all resources so that we can find more information about why the K8s e2e tests failed
* The debug log sometimes does not show up, so move `debug_and_show_logs` before `cleanup`

## Verifying this change

* Run the e2e tests more times; the K8s-related tests should pass

## Does this pull request potentially affect one of the following parts:

- Dependencies (does it add or upgrade a dependency): (yes / **no**)
- The public API, i.e., is any changed class annotated with `@Public(Evolving)`: (yes / **no**)
- The serializers: (yes / **no** / don't know)
- The runtime per-record code paths (performance sensitive): (yes / **no** / don't know)
- Anything that affects deployment or recovery: JobManager (and its components), Checkpointing, Kubernetes/Yarn/Mesos, ZooKeeper: (yes / **no** / don't know)
- The S3 file system connector: (yes / **no** / don't know)

## Documentation

- Does this pull request introduce a new feature? (yes / **no**)
- If yes, how is the feature documented? (**not applicable** / docs / JavaDocs / not documented)

[GitHub] [flink] liuzhixing1006 commented on issue #11455: [FLINK-16098] [chinese-translation, Documentation] Translate "Overvie…

liuzhixing1006 commented on issue #11455: [FLINK-16098] [chinese-translation, Documentation] Translate "Overvie…

URL: https://github.com/apache/flink/pull/11455#issuecomment-608969822

Hi @wuchong @JingsongLi, it seems that this PR now meets the standard. Could you help merge it? Thank you!

[jira] [Commented] (FLINK-16931) Large _metadata file lead to JobManager not responding when restart

[ https://issues.apache.org/jira/browse/FLINK-16931?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17075023#comment-17075023 ]

Lu Niu commented on FLINK-16931:

Thanks in advance! I will share the plan later.

> Large _metadata file lead to JobManager not responding when restart
> ---
>
> Key: FLINK-16931
> URL: https://issues.apache.org/jira/browse/FLINK-16931
> Project: Flink
> Issue Type: Bug
> Components: Runtime / Checkpointing, Runtime / Coordination
> Affects Versions: 1.9.2, 1.10.0, 1.11.0
> Reporter: Lu Niu
> Assignee: Lu Niu
> Priority: Critical
> Fix For: 1.11.0
>

> When the _metadata file is big, the JobManager can never recover from the
> checkpoint. It falls into a loop: fetch checkpoint -> JM timeout -> restart.
> Here is the related log:
> {code:java}
> 2020-04-01 17:08:25,689 INFO org.apache.flink.runtime.checkpoint.ZooKeeperCompletedCheckpointStore - Recovering checkpoints from ZooKeeper.
> 2020-04-01 17:08:25,698 INFO org.apache.flink.runtime.checkpoint.ZooKeeperCompletedCheckpointStore - Found 3 checkpoints in ZooKeeper.
> 2020-04-01 17:08:25,698 INFO org.apache.flink.runtime.checkpoint.ZooKeeperCompletedCheckpointStore - Trying to fetch 3 checkpoints from storage.
> 2020-04-01 17:08:25,698 INFO org.apache.flink.runtime.checkpoint.ZooKeeperCompletedCheckpointStore - Trying to retrieve checkpoint 50.
> 2020-04-01 17:08:48,589 INFO org.apache.flink.runtime.checkpoint.ZooKeeperCompletedCheckpointStore - Trying to retrieve checkpoint 51.
> 2020-04-01 17:09:12,775 INFO org.apache.flink.yarn.YarnResourceManager - The heartbeat of JobManager with id 02500708baf0bb976891c391afd3d7d5 timed out.
> {code}
> Digging into the code, it looks like ExecutionGraph::restart runs in the
> JobMaster main thread and eventually calls
> ZooKeeperCompletedCheckpointStore::retrieveCompletedCheckpoint, which downloads
> the file from DFS. The main thread is blocked for a while because of this. One
> possible solution is to make the downloading part async. More things might need
> to be considered, since the original change tries to make it single-threaded:
> [https://github.com/apache/flink/pull/7568]
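The suggested fix, moving the blocking download off the JobMaster main thread, can be sketched with plain Scala futures. Everything here is hypothetical: `RestoredCheckpoint`, `retrieveBlocking` and `retrieveAll` are illustrative names, not Flink's `CompletedCheckpointStore` API, and the `Thread.sleep` merely stands in for the DFS read of the `_metadata` file.

```scala
import java.util.concurrent.Executors
import scala.concurrent.{Await, ExecutionContext, Future}
import scala.concurrent.duration._

// Hypothetical stand-in for a restored checkpoint; `loadedOn` records which thread did the I/O.
final case class RestoredCheckpoint(id: Long, loadedOn: String)

object AsyncCheckpointRecovery {
  // Stand-in for the blocking DFS read of a checkpoint's _metadata file.
  def retrieveBlocking(id: Long): RestoredCheckpoint = {
    Thread.sleep(20) // simulates slow remote I/O
    RestoredCheckpoint(id, Thread.currentThread().getName)
  }

  // Schedules every download on the IO pool and returns immediately,
  // so the calling (main) thread stays free, e.g. to answer heartbeats.
  def retrieveAll(ids: Seq[Long])(implicit io: ExecutionContext): Future[Seq[RestoredCheckpoint]] =
    Future.traverse(ids)(id => Future(retrieveBlocking(id)))

  // Demo only: blocks to inspect the result. A real main thread would
  // register a callback instead of waiting.
  def demo(ids: Seq[Long]): Seq[RestoredCheckpoint] = {
    val pool = Executors.newFixedThreadPool(2)
    implicit val io: ExecutionContext = ExecutionContext.fromExecutorService(pool)
    try Await.result(retrieveAll(ids), 10.seconds)
    finally pool.shutdown()
  }
}
```

As the issue points out, a real change would also have to respect the JobMaster's single-threaded model, for example by applying the retrieved checkpoints back on the main thread once the future completes.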

--

This message was sent by Atlassian Jira

(v8.3.4#803005)

[jira] [Commented] (FLINK-16724) ListSerializer cannot serialize list which containers null

[ https://issues.apache.org/jira/browse/FLINK-16724?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17074991#comment-17074991 ]

Congxian Qiu(klion26) commented on FLINK-16724:

---

[~nppoly] What do you think about my comment? If you agree with it, could you
please close this ticket?

PS: FLINK-16916 has been fixed, so you can now try {{NullableSerializer}}
in the new master codebase.

> ListSerializer cannot serialize list which containers null
> --
>
> Key: FLINK-16724
> URL: https://issues.apache.org/jira/browse/FLINK-16724
> Project: Flink
> Issue Type: Bug
> Components: Runtime / State Backends
> Reporter: Chongchen Chen
> Priority: Major
> Attachments: list_serializer_err.diff
>

> MapSerializer handles null values correctly, but ListSerializer doesn't. The
> attachment is a modification of the unit test that reproduces the bug.
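One common way to make a collection serializer null-safe is to write a presence flag before each element, so a null element is encoded as a marker with no payload. The sketch below shows that idea for a list of strings using plain `java.io` streams rather than Flink's `TypeSerializer` interface; `NullAwareListCodec` is a hypothetical name, and the flag-per-element format is an assumption, not necessarily what `MapSerializer` or `NullableSerializer` write.

```scala
import java.io.{ByteArrayInputStream, ByteArrayOutputStream, DataInput, DataInputStream, DataOutput, DataOutputStream}
import java.util.{ArrayList => JArrayList, List => JList}

// Hypothetical codec: length prefix, then a presence flag before every element.
object NullAwareListCodec {
  def write(list: JList[String], out: DataOutput): Unit = {
    out.writeInt(list.size())
    for (i <- 0 until list.size()) {
      val element = list.get(i)
      if (element == null) {
        out.writeBoolean(false) // null marker, no payload follows
      } else {
        out.writeBoolean(true)
        out.writeUTF(element)
      }
    }
  }

  def read(in: DataInput): JList[String] = {
    val size = in.readInt()
    val result = new JArrayList[String](size)
    for (_ <- 0 until size) {
      result.add(if (in.readBoolean()) in.readUTF() else null)
    }
    result
  }

  // Serializes and deserializes in memory, to check that nulls survive.
  def roundTrip(list: JList[String]): JList[String] = {
    val bytes = new ByteArrayOutputStream()
    write(list, new DataOutputStream(bytes))
    read(new DataInputStream(new ByteArrayInputStream(bytes.toByteArray)))
  }
}
```

The flag costs one byte per element; that overhead is the usual price of allowing nulls in a collection.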


[GitHub] [flink] flinkbot edited a comment on issue #11564: [FLINK-16864][metrics] Add IdleTime metric for task.

flinkbot edited a comment on issue #11564: [FLINK-16864][metrics] Add IdleTime metric for task.

URL: https://github.com/apache/flink/pull/11564#issuecomment-605967084

## CI report:

* dfc6f9642a2fe6fca383707a11d53ef6ed2ea381 UNKNOWN
* 7da912a7e2bd854fec07c6eb6e7784fba30df765 Travis: [FAILURE](https://travis-ci.com/github/flink-ci/flink/builds/158315901) Azure: [FAILURE](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7053)

[GitHub] [flink] flinkbot edited a comment on issue #11564: [FLINK-16864][metrics] Add IdleTime metric for task.

flinkbot edited a comment on issue #11564: [FLINK-16864][metrics] Add IdleTime metric for task.

URL: https://github.com/apache/flink/pull/11564#issuecomment-605967084

## CI report:

* b709e1952df66cba5a316f9a46902538bf8cf245 Travis: [CANCELED](https://travis-ci.com/github/flink-ci/flink/builds/158128040) Azure: [FAILURE](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7015)
* dfc6f9642a2fe6fca383707a11d53ef6ed2ea381 UNKNOWN
* 7da912a7e2bd854fec07c6eb6e7784fba30df765 UNKNOWN

[GitHub] [flink] flinkbot edited a comment on issue #11460: [FLINK-16655][FLINK-16657] Introduce embedded executor and use it for Web Submission

flinkbot edited a comment on issue #11460: [FLINK-16655][FLINK-16657] Introduce embedded executor and use it for Web Submission

URL: https://github.com/apache/flink/pull/11460#issuecomment-601593052

## CI report:

* 349f5d7bfd68016ba3595a17ff3a1533969581fb UNKNOWN
* 1ef9863ccbeddc51317ec90fa662fd10a797b908 UNKNOWN
* 07040ddd9344e4c6cf8e6a7d095cdf7471ebdf2e Travis: [SUCCESS](https://travis-ci.com/github/flink-ci/flink/builds/158289333) Azure: [FAILURE](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7051)

[GitHub] [flink] flinkbot edited a comment on issue #11460: [FLINK-16655][FLINK-16657] Introduce embedded executor and use it for Web Submission

flinkbot edited a comment on issue #11460: [FLINK-16655][FLINK-16657] Introduce embedded executor and use it for Web Submission

URL: https://github.com/apache/flink/pull/11460#issuecomment-601593052

## CI report:

* 349f5d7bfd68016ba3595a17ff3a1533969581fb UNKNOWN
* 1ef9863ccbeddc51317ec90fa662fd10a797b908 UNKNOWN
* 07040ddd9344e4c6cf8e6a7d095cdf7471ebdf2e Travis: [PENDING](https://travis-ci.com/github/flink-ci/flink/builds/158289333) Azure: [FAILURE](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7051)

[GitHub] [flink] flinkbot edited a comment on issue #11624: [FLINK-16949] Enhance AbstractStreamOperatorTestHarness to use customized TtlTimeProvider

flinkbot edited a comment on issue #11624: [FLINK-16949] Enhance AbstractStreamOperatorTestHarness to use customized TtlTimeProvider

URL: https://github.com/apache/flink/pull/11624#issuecomment-608048566

## CI report:

* c4207aa80f6279d013a51b9104997e840716640e Travis: [SUCCESS](https://travis-ci.com/github/flink-ci/flink/builds/158278462) Azure: [FAILURE](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7049)

[GitHub] [flink] flinkbot edited a comment on issue #11460: [FLINK-16655][FLINK-16657] Introduce embedded executor and use it for Web Submission

flinkbot edited a comment on issue #11460: [FLINK-16655][FLINK-16657] Introduce embedded executor and use it for Web Submission

URL: https://github.com/apache/flink/pull/11460#issuecomment-601593052

## CI report:

* 349f5d7bfd68016ba3595a17ff3a1533969581fb UNKNOWN
* 1ef9863ccbeddc51317ec90fa662fd10a797b908 UNKNOWN
* cb1fc7686309a1ad8a6278d060f51a24d74dbc00 Travis: [SUCCESS](https://travis-ci.com/github/flink-ci/flink/builds/158256732) Azure: [FAILURE](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7047)
* 07040ddd9344e4c6cf8e6a7d095cdf7471ebdf2e Travis: [PENDING](https://travis-ci.com/github/flink-ci/flink/builds/158289333) Azure: [PENDING](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7051)

[GitHub] [flink] flinkbot edited a comment on issue #11624: [FLINK-16949] Enhance AbstractStreamOperatorTestHarness to use customized TtlTimeProvider

flinkbot edited a comment on issue #11624: [FLINK-16949] Enhance AbstractStreamOperatorTestHarness to use customized TtlTimeProvider

URL: https://github.com/apache/flink/pull/11624#issuecomment-608048566

## CI report:

* c4207aa80f6279d013a51b9104997e840716640e Travis: [PENDING](https://travis-ci.com/github/flink-ci/flink/builds/158278462) Azure: [FAILURE](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7049)

[GitHub] [flink] flinkbot edited a comment on issue #11507: [FLINK-16587] Add basic CheckpointBarrierHandler for unaligned checkpoint

flinkbot edited a comment on issue #11507: [FLINK-16587] Add basic CheckpointBarrierHandler for unaligned checkpoint

URL: https://github.com/apache/flink/pull/11507#issuecomment-603776093

## CI report:

* dee2b337e5e72d8f7b1f5098b74f2958d000fb3c UNKNOWN
* 80b7f76f24b5fb6704a4b2292543f8764ec19053 UNKNOWN
* a8335f0def293f04fd74bfc47c1b31fc98a46a23 Travis: [FAILURE](https://travis-ci.com/github/flink-ci/flink/builds/158276096) Azure: [FAILURE](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7048)

[GitHub] [flink] flinkbot edited a comment on issue #11460: [FLINK-16655][FLINK-16657] Introduce embedded executor and use it for Web Submission

flinkbot edited a comment on issue #11460: [FLINK-16655][FLINK-16657] Introduce embedded executor and use it for Web Submission

URL: https://github.com/apache/flink/pull/11460#issuecomment-601593052

## CI report:

* 349f5d7bfd68016ba3595a17ff3a1533969581fb UNKNOWN
* 1ef9863ccbeddc51317ec90fa662fd10a797b908 UNKNOWN
* cb1fc7686309a1ad8a6278d060f51a24d74dbc00 Travis: [SUCCESS](https://travis-ci.com/github/flink-ci/flink/builds/158256732) Azure: [FAILURE](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7047)
* 07040ddd9344e4c6cf8e6a7d095cdf7471ebdf2e UNKNOWN

[jira] [Closed] (FLINK-16710) Log Upload blocks Main Thread in TaskExecutor

[ https://issues.apache.org/jira/browse/FLINK-16710?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Gary Yao closed FLINK-16710.

Resolution: Fixed

master: dbf0c4c5914d11b6c1209f089ed014db8cd733cb

> Log Upload blocks Main Thread in TaskExecutor
> -
>
> Key: FLINK-16710
> URL: https://issues.apache.org/jira/browse/FLINK-16710
> Project: Flink
> Issue Type: Bug
> Components: Runtime / Coordination
> Affects Versions: 1.10.0
> Reporter: Gary Yao
> Assignee: wangsan
> Priority: Critical
> Labels: pull-request-available
> Fix For: 1.11.0
>
> Time Spent: 20m
> Remaining Estimate: 0h

>

> Uploading logs to the BlobServer blocks the TaskExecutor's main thread. We
> should introduce an IO thread pool that carries out file system accesses
> (listing files in a directory, checking whether a file exists, uploading files).
> Affected RPCs:
> * {{TaskExecutor#requestLogList(Time)}}
> * {{TaskExecutor#requestFileUploadByName(String, Time)}}
> * {{TaskExecutor#requestFileUploadByType(FileType, Time)}}
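The proposed IO thread pool can be illustrated with a sketch in which the directory listing runs on a dedicated executor and the caller only receives a `CompletableFuture`. `LogFileService` and its `requestLogList()` method are hypothetical; the real `TaskExecutor#requestLogList(Time)` RPC has a different signature and does more than list file names.

```scala
import java.io.File
import java.util.concurrent.{CompletableFuture, ExecutorService, Executors}
import java.util.function.Supplier

// Hypothetical service: all file-system access happens on `ioExecutor`,
// never on the thread that calls requestLogList().
final class LogFileService(logDir: File, ioExecutor: ExecutorService) {
  def requestLogList(): CompletableFuture[Seq[String]] =
    CompletableFuture.supplyAsync(new Supplier[Seq[String]] {
      override def get(): Seq[String] =
        Option(logDir.listFiles()) // null if logDir is missing or unreadable
          .map(_.toSeq)
          .getOrElse(Seq.empty[File])
          .filter(_.isFile)
          .map(_.getName)
          .sorted
    }, ioExecutor)
}
```

Because `supplyAsync` is given an explicit executor, the listing never runs on the caller's thread; the same pattern would apply to the existence check and the upload itself.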


[GitHub] [flink] GJL closed pull request #11571: [FLINK-16710][runtime] Log Upload blocks Main Thread in TaskExecutor

GJL closed pull request #11571: [FLINK-16710][runtime] Log Upload blocks Main Thread in TaskExecutor

URL: https://github.com/apache/flink/pull/11571

[jira] [Reopened] (FLINK-16921) "kubernetes session test" is unstable

[ https://issues.apache.org/jira/browse/FLINK-16921?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Robert Metzger reopened FLINK-16921:

The test failed again:

https://dev.azure.com/rmetzger/Flink/_build/results?buildId=7046=logs=c88eea3b-64a0-564d-0031-9fdcd7b8abee=1e2bbe5b-4657-50be-1f07-d84bfce5b1f5

> "kubernetes session test" is unstable
> -
>
> Key: FLINK-16921
> URL: https://issues.apache.org/jira/browse/FLINK-16921
> Project: Flink
> Issue Type: Bug
> Components: Deployment / Kubernetes, Tests
> Affects Versions: 1.11.0
> Reporter: Robert Metzger
> Assignee: Yang Wang
> Priority: Major
> Labels: pull-request-available, test-stability
> Fix For: 1.11.0
>
> Time Spent: 20m
> Remaining Estimate: 0h
>

> CI:
> https://dev.azure.com/rmetzger/Flink/_build/results?buildId=6915=logs=c88eea3b-64a0-564d-0031-9fdcd7b8abee=1e2bbe5b-4657-50be-1f07-d84bfce5b1f5
> I assume some services didn't come up?
> {code}
> Caused by: java.util.concurrent.CompletionException: org.apache.flink.shaded.netty4.io.netty.channel.AbstractChannel$AnnotatedConnectException: Connection refused: /10.1.0.4:30095
> {code}

> Full log

> {code}

> 2020-04-01T09:13:59.0673858Z Successfully built ba628fa7af0d

> 2020-04-01T09:13:59.0726818Z Successfully tagged test_kubernetes_session:latest

> 2020-04-01T09:13:59.2547709Z clusterrolebinding.rbac.authorization.k8s.io/flink-role-binding-default created

> 2020-04-01T09:14:00.0586087Z 2020-04-01 09:14:00,055 INFO org.apache.flink.configuration.GlobalConfiguration [] - Loading configuration property: jobmanager.rpc.address, localhost

> 2020-04-01T09:14:00.0608876Z 2020-04-01 09:14:00,060 INFO org.apache.flink.configuration.GlobalConfiguration [] - Loading configuration property: jobmanager.rpc.port, 6123

> 2020-04-01T09:14:00.0611236Z 2020-04-01 09:14:00,060 INFO org.apache.flink.configuration.GlobalConfiguration [] - Loading configuration property: jobmanager.heap.size, 1024m

> 2020-04-01T09:14:00.0613869Z 2020-04-01 09:14:00,061 INFO org.apache.flink.configuration.GlobalConfiguration [] - Loading configuration property: taskmanager.memory.process.size, 1728m

> 2020-04-01T09:14:00.0616344Z 2020-04-01 09:14:00,061 INFO org.apache.flink.configuration.GlobalConfiguration [] - Loading configuration property: taskmanager.numberOfTaskSlots, 1

> 2020-04-01T09:14:00.0619384Z 2020-04-01 09:14:00,061 INFO org.apache.flink.configuration.GlobalConfiguration [] - Loading configuration property: parallelism.default, 1

> 2020-04-01T09:14:00.0624467Z 2020-04-01 09:14:00,062 INFO org.apache.flink.configuration.GlobalConfiguration [] - Loading configuration property: jobmanager.execution.failover-strategy, region

> 2020-04-01T09:14:00.9838038Z 2020-04-01 09:14:00,983 INFO org.apache.flink.runtime.util.config.memory.ProcessMemoryUtils [] - The derived from fraction jvm overhead memory (172.800mb (181193935 bytes)) is less than its min value 192.000mb (201326592 bytes), min value will be used instead

> 2020-04-01T09:14:00.9922554Z 2020-04-01 09:14:00,991 INFO org.apache.flink.kubernetes.utils.KubernetesUtils [] - Kubernetes deployment requires a fixed port. Configuration blob.server.port will be set to 6124

> 2020-04-01T09:14:00.9927409Z 2020-04-01 09:14:00,992 INFO org.apache.flink.kubernetes.utils.KubernetesUtils [] - Kubernetes deployment requires a fixed port. Configuration taskmanager.rpc.port will be set to 6122

> 2020-04-01T09:14:01.0587014Z 2020-04-01 09:14:01,058 WARN org.apache.flink.kubernetes.kubeclient.decorators.HadoopConfMountDecorator [] - Found 0 files in directory null/etc/hadoop, skip to mount the Hadoop Configuration ConfigMap.

> 2020-04-01T09:14:01.0592498Z 2020-04-01 09:14:01,059 WARN org.apache.flink.kubernetes.kubeclient.decorators.HadoopConfMountDecorator [] - Found 0 files in directory null/etc/hadoop, skip to create the Hadoop Configuration ConfigMap.

> 2020-04-01T09:14:01.8684880Z 2020-04-01 09:14:01,868 INFO org.apache.flink.kubernetes.KubernetesClusterDescriptor [] - Create flink session cluster flink-native-k8s-session-1 successfully, JobManager Web Interface: http://10.1.0.4:30095

> 2020-04-01T09:14:03.2952029Z Executing WordCount example with default input data set.

> 2020-04-01T09:14:03.2955365Z Use --input to specify file input.

> 2020-04-01T09:15:31.5606577Z

> 2020-04-01T09:15:31.5610358Z

>

> 2020-04-01T09:15:31.5610913Z The program finished with the following exception:

> 2020-04-01T09:15:31.564Z

> 2020-04-01T09:15:31.5611772Z

>
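The `ProcessMemoryUtils` INFO line in the log above reflects a clamp: the JVM overhead is first derived as a fraction of `taskmanager.memory.process.size` (1728m here), then bounded by a minimum and a maximum. A minimal sketch of that arithmetic, assuming the documented defaults `taskmanager.memory.jvm-overhead.fraction` = 0.1, `.min` = 192mb and `.max` = 1gb; the class and method names are hypothetical and this is not Flink's actual `ProcessMemoryUtils` code:

```java
// Hypothetical sketch of the JVM-overhead clamp; not Flink's ProcessMemoryUtils code.
public class JvmOverheadSketch {
    static final long MB = 1024L * 1024L;

    /** Derives the overhead as a fraction of the total process memory, clamped to [min, max]. */
    static long deriveJvmOverhead(long totalProcessBytes, double fraction, long minBytes, long maxBytes) {
        long derived = (long) (totalProcessBytes * fraction);
        if (derived < minBytes) {
            // The case in the log: about 172.8mb derived, below the 192mb minimum.
            return minBytes;
        }
        return Math.min(derived, maxBytes);
    }

    public static void main(String[] args) {
        long processSize = 1728 * MB; // taskmanager.memory.process.size: 1728m
        long min = 192 * MB;          // assumed default jvm-overhead.min
        long max = 1024 * MB;         // assumed default jvm-overhead.max (1gb)
        long overhead = deriveJvmOverhead(processSize, 0.1, min, max);
        System.out.println(overhead / MB + "mb"); // prints 192mb
    }
}
```

With a larger process size (for example 4096m) the derived 10% would fall inside the bounds and be used unchanged.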

[GitHub] [flink] flinkbot edited a comment on issue #11624: [FLINK-16949] Enhance AbstractStreamOperatorTestHarness to use customized TtlTimeProvider

flinkbot edited a comment on issue #11624: [FLINK-16949] Enhance AbstractStreamOperatorTestHarness to use customized TtlTimeProvider

URL: https://github.com/apache/flink/pull/11624#issuecomment-608048566

## CI report:

* 668797635ab529ea21ef234a1f99747cfb4d898a Travis: [SUCCESS](https://travis-ci.com/github/flink-ci/flink/builds/158015561) Azure: [SUCCESS](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7006)
* c4207aa80f6279d013a51b9104997e840716640e Travis: [PENDING](https://travis-ci.com/github/flink-ci/flink/builds/158278462) Azure: [PENDING](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7049)

Bot commands

The @flinkbot bot supports the following commands:

- `@flinkbot run travis` re-run the last Travis build
- `@flinkbot run azure` re-run the last Azure build

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at: us...@infra.apache.org

With regards,

Apache Git Services

[GitHub] [flink] flinkbot edited a comment on issue #11507: [FLINK-16587] Add basic CheckpointBarrierHandler for unaligned checkpoint

flinkbot edited a comment on issue #11507: [FLINK-16587] Add basic CheckpointBarrierHandler for unaligned checkpoint

URL: https://github.com/apache/flink/pull/11507#issuecomment-603776093

## CI report:

* dee2b337e5e72d8f7b1f5098b74f2958d000fb3c UNKNOWN
* 80b7f76f24b5fb6704a4b2292543f8764ec19053 UNKNOWN
* 49d5d3e1e8e1bf962716241b79db1f2e28e506b4 Travis: [FAILURE](https://travis-ci.com/github/flink-ci/flink/builds/158188400) Azure: [FAILURE](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7040)
* a8335f0def293f04fd74bfc47c1b31fc98a46a23 Travis: [PENDING](https://travis-ci.com/github/flink-ci/flink/builds/158276096) Azure: [PENDING](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7048)

Bot commands

The @flinkbot bot supports the following commands:

- `@flinkbot run travis` re-run the last Travis build
- `@flinkbot run azure` re-run the last Azure build

[GitHub] [flink] flinkbot edited a comment on issue #11624: [FLINK-16949] Enhance AbstractStreamOperatorTestHarness to use customized TtlTimeProvider

flinkbot edited a comment on issue #11624: [FLINK-16949] Enhance AbstractStreamOperatorTestHarness to use customized TtlTimeProvider

URL: https://github.com/apache/flink/pull/11624#issuecomment-608048566

## CI report:

* 668797635ab529ea21ef234a1f99747cfb4d898a Travis: [SUCCESS](https://travis-ci.com/github/flink-ci/flink/builds/158015561) Azure: [SUCCESS](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7006)
* c4207aa80f6279d013a51b9104997e840716640e UNKNOWN

Bot commands

The @flinkbot bot supports the following commands:

- `@flinkbot run travis` re-run the last Travis build
- `@flinkbot run azure` re-run the last Azure build

[GitHub] [flink] flinkbot edited a comment on issue #11507: [FLINK-16587] Add basic CheckpointBarrierHandler for unaligned checkpoint

flinkbot edited a comment on issue #11507: [FLINK-16587] Add basic CheckpointBarrierHandler for unaligned checkpoint

URL: https://github.com/apache/flink/pull/11507#issuecomment-603776093

## CI report:

* dee2b337e5e72d8f7b1f5098b74f2958d000fb3c UNKNOWN
* 80b7f76f24b5fb6704a4b2292543f8764ec19053 UNKNOWN
* 49d5d3e1e8e1bf962716241b79db1f2e28e506b4 Travis: [FAILURE](https://travis-ci.com/github/flink-ci/flink/builds/158188400) Azure: [FAILURE](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7040)
* a8335f0def293f04fd74bfc47c1b31fc98a46a23 UNKNOWN

Bot commands

The @flinkbot bot supports the following commands:

- `@flinkbot run travis` re-run the last Travis build
- `@flinkbot run azure` re-run the last Azure build

[GitHub] [flink-statefun] sjwiesman commented on a change in pull request #95: [FLINK-16977][docs] Add docs for components and distributed architecture

sjwiesman commented on a change in pull request #95: [FLINK-16977][docs] Add docs for components and distributed architecture

URL: https://github.com/apache/flink-statefun/pull/95#discussion_r403225047

##

File path: docs/concepts/distributed_architecture.md

##

@@ -0,0 +1,99 @@

+---

+title: Distributed Architecture

+nav-id: dist-arch

+nav-pos: 2

Review comment:

I think this should come after the logical functions page, what do you think?

```suggestion
nav-pos: 3
```

[GitHub] [flink] flinkbot edited a comment on issue #11460: [FLINK-16655][FLINK-16657] Introduce embedded executor and use it for Web Submission

flinkbot edited a comment on issue #11460: [FLINK-16655][FLINK-16657] Introduce embedded executor and use it for Web Submission

URL: https://github.com/apache/flink/pull/11460#issuecomment-601593052

## CI report:

* 349f5d7bfd68016ba3595a17ff3a1533969581fb UNKNOWN
* 1ef9863ccbeddc51317ec90fa662fd10a797b908 UNKNOWN
* cb1fc7686309a1ad8a6278d060f51a24d74dbc00 Travis: [SUCCESS](https://travis-ci.com/github/flink-ci/flink/builds/158256732) Azure: [FAILURE](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7047)

Bot commands

The @flinkbot bot supports the following commands:

- `@flinkbot run travis` re-run the last Travis build
- `@flinkbot run azure` re-run the last Azure build

[GitHub] [flink] Myasuka commented on a change in pull request #11624: [FLINK-16949] Enhance AbstractStreamOperatorTestHarness to use customized TtlTimeProvider

Myasuka commented on a change in pull request #11624: [FLINK-16949] Enhance

AbstractStreamOperatorTestHarness to use customized TtlTimeProvider

URL: https://github.com/apache/flink/pull/11624#discussion_r403207707

##

File path:

flink-streaming-java/src/main/java/org/apache/flink/streaming/api/operators/StreamTaskStateInitializerImpl.java

##

@@ -258,6 +258,10 @@ protected OperatorStateBackend operatorStateBackend(

}

}

+ protected TtlTimeProvider getTtlTimeProvider() {

Review comment:

There is another reason why we did not change the constructor of `StreamTaskStateInitializerImpl`.

The current `AbstractStreamOperatorTestHarness` is not created from a builder, and once a new `AbstractStreamOperatorTestHarness` is created, its inner `streamTaskStateInitializer` has already been created with the default `TtlTimeProvider`. Even if we set a TTL time provider on the `AbstractStreamOperatorTestHarness` later, the inner `streamTaskStateInitializer` would not notice the changed provider unless we call `AbstractStreamOperatorTestHarness#setup` to re-create the inner `streamTaskStateInitializer`.

However, `AbstractStreamOperatorTestHarness#setup` actually calls the deprecated `SetupableStreamOperator#setup` interface.

In a nutshell, unless we refactor how we build `AbstractStreamOperatorTestHarness`, making a customized TTL time provider take effect requires calling `AbstractStreamOperatorTestHarness#setup` each time, which might already be treated as a deprecated interface.
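The ordering problem described above can be illustrated with a minimal, self-contained sketch. All classes here (`TimeProvider`, `EagerInitializer`, `LazyInitializer`, `Harness`) are hypothetical stand-ins for `TtlTimeProvider`, `StreamTaskStateInitializerImpl` and `AbstractStreamOperatorTestHarness`, not Flink's code. An initializer built eagerly in the harness constructor captures the default provider, so a provider set afterwards is invisible to it; an initializer that asks an overridable getter each time (one possible reading of the protected `getTtlTimeProvider()` added in this diff) sees the current provider without re-running `setup`:

```java
// Hypothetical stand-ins; not Flink's actual classes.
public class TtlProviderSketch {
    // Stand-in for TtlTimeProvider: supplies the "current time".
    interface TimeProvider {
        long currentTimestamp();
    }

    static final TimeProvider DEFAULT = System::currentTimeMillis;

    // Captures the provider once, at construction time.
    static class EagerInitializer {
        private final TimeProvider provider;

        EagerInitializer(TimeProvider provider) {
            this.provider = provider;
        }

        TimeProvider ttlTimeProvider() {
            return provider;
        }
    }

    // Resolves the provider through an overridable getter on every call.
    static class LazyInitializer {
        protected TimeProvider getTtlTimeProvider() {
            return DEFAULT;
        }

        TimeProvider ttlTimeProvider() {
            return getTtlTimeProvider();
        }
    }

    static class Harness {
        private TimeProvider ttlTimeProvider = DEFAULT;
        // Both initializers are created during construction, before any custom provider is set.
        final EagerInitializer eager = new EagerInitializer(ttlTimeProvider);
        final LazyInitializer lazy = new LazyInitializer() {
            @Override
            protected TimeProvider getTtlTimeProvider() {
                return ttlTimeProvider; // reads the harness field at call time
            }
        };

        void setTtlTimeProvider(TimeProvider provider) {
            this.ttlTimeProvider = provider;
        }
    }

    public static void main(String[] args) {
        Harness harness = new Harness();
        TimeProvider fixed = () -> 42L;
        harness.setTtlTimeProvider(fixed);
        System.out.println(harness.eager.ttlTimeProvider() == fixed); // prints false (stale default)
        System.out.println(harness.lazy.ttlTimeProvider() == fixed);  // prints true
    }
}
```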


[GitHub] [flink-statefun] sjwiesman commented on a change in pull request #95: [FLINK-16977][docs] Add docs for components and distributed architecture

sjwiesman commented on a change in pull request #95: [FLINK-16977][docs] Add docs for components and distributed architecture

URL: https://github.com/apache/flink-statefun/pull/95#discussion_r403193426

##

File path: docs/concepts/distributed_architecture.md

##

@@ -0,0 +1,99 @@

+---

+title: Distributed Architecture

+nav-id: dist-arch

+nav-pos: 2

+nav-title: Architecture

+nav-parent_id: concepts

+---

+

+

+A Stateful Functions deployment consists of a few components interacting together. Here we describe these pieces and their

Review comment:

You're missing the second half of this sentence.

[GitHub] [flink-statefun] sjwiesman commented on a change in pull request #95: [FLINK-16977][docs] Add docs for components and distributed architecture

sjwiesman commented on a change in pull request #95: [FLINK-16977][docs] Add

docs for components and distributed architecture

URL: https://github.com/apache/flink-statefun/pull/95#discussion_r403198004

##

File path: docs/concepts/distributed_architecture.md

##

@@ -0,0 +1,99 @@

+---

+title: Distributed Architecture

+nav-id: dist-arch

+nav-pos: 2

+nav-title: Architecture

+nav-parent_id: concepts

+---

+

+

+A Stateful Functions deployment consists of a few components interacting

together. Here we describe these pieces and their

+

+* This will be replaced by the TOC

+{:toc}

+

+## High-level View

+

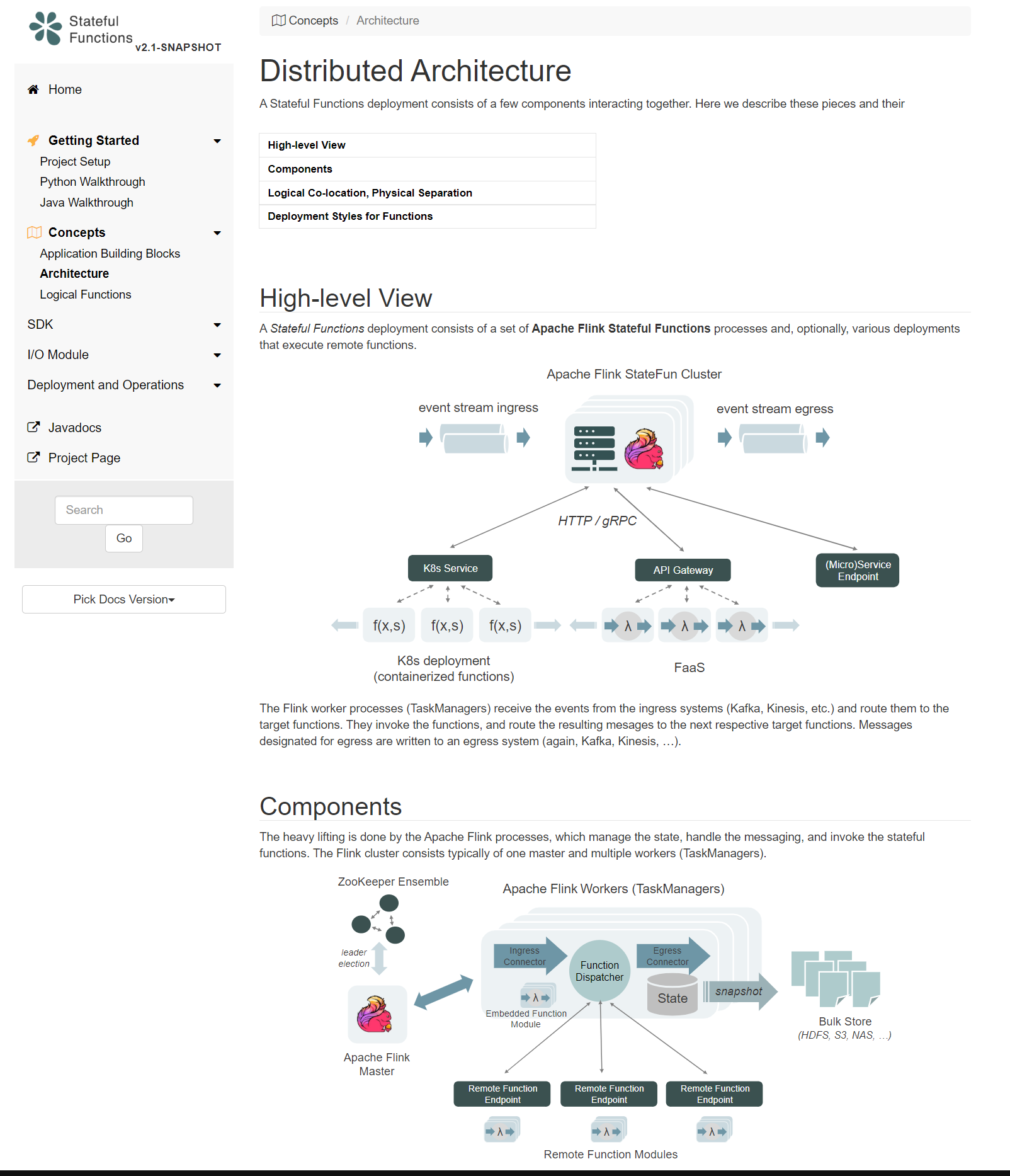

+A *Stateful Functions* deployment consists of a set of **Apache Flink Stateful

Functions** processes and, optionally, various deployments that execute remote

functions.

+

+

+

+

+

+The Flink worker processes (TaskManagers) receive the events from the ingress

systems (Kafka, Kinesis, etc.) and route them to the target functions. They

invoke the functions, and route the resulting mesages to the next respective

target functions. Messages designated for egress are written to an egress

system (again, Kafka, Kinesis, ...).

Review comment:

```suggestion

The Flink worker processes (TaskManagers) receive the events from the

ingress systems (Kafka, Kinesis, etc.) and route them to the target functions.

They invoke the functions and route the resulting messages to the next

respective target functions. Messages designated for egress are written to an

egress system (again, Kafka, Kinesis, ...).

```
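The message flow in the suggested paragraph (ingress events routed to target functions, function results routed to the next functions, egress-bound messages written out) can be pictured as a toy, single-process dispatch loop. This is a hypothetical illustration only: `Message`, the `greeter`/`shouter` functions and the in-memory queue are invented for the example and are not the Stateful Functions API; a real deployment routes messages through Flink's partitioned streams across TaskManagers.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Deque;
import java.util.HashMap;
import java.util.List;
import java.util.Locale;
import java.util.Map;
import java.util.function.BiConsumer;

// Toy ingress -> functions -> egress routing; all names are hypothetical.
public class RoutingSketch {
    static final String EGRESS = "egress";

    // A message addressed either to a function type or to the egress.
    static final class Message {
        final String target;
        final String payload;

        Message(String target, String payload) {
            this.target = target;
            this.payload = payload;
        }
    }

    public static List<String> run(List<String> ingressEvents) {
        Map<String, BiConsumer<String, Deque<Message>>> functions = new HashMap<>();
        // "greeter" forwards its result to "shouter"; "shouter" sends its result to the egress.
        functions.put("greeter", (payload, out) -> out.add(new Message("shouter", "hello " + payload)));
        functions.put("shouter", (payload, out) -> out.add(new Message(EGRESS, payload.toUpperCase(Locale.ROOT))));

        Deque<Message> pending = new ArrayDeque<>();
        List<String> egress = new ArrayList<>();

        // Ingress: route every incoming event to its first target function.
        for (String event : ingressEvents) {
            pending.add(new Message("greeter", event));
        }
        // Invoke the target function for each message, or write it to the egress.
        while (!pending.isEmpty()) {
            Message m = pending.poll();
            if (EGRESS.equals(m.target)) {
                egress.add(m.payload);
            } else {
                functions.get(m.target).accept(m.payload, pending);
            }
        }
        return egress;
    }

    public static void main(String[] args) {
        System.out.println(run(Arrays.asList("alice", "bob"))); // prints [HELLO ALICE, HELLO BOB]
    }
}
```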


[GitHub] [flink-statefun] sjwiesman commented on a change in pull request #95: [FLINK-16977][docs] Add docs for components and distributed architecture

sjwiesman commented on a change in pull request #95: [FLINK-16977][docs] Add

docs for components and distributed architecture

URL: https://github.com/apache/flink-statefun/pull/95#discussion_r403196811

##

File path: docs/concepts/distributed_architecture.md

##

@@ -0,0 +1,99 @@

+---

+title: Distributed Architecture

+nav-id: dist-arch

+nav-pos: 2

+nav-title: Architecture

+nav-parent_id: concepts

+---

+

+

+A Stateful Functions deployment consists of a few components interacting

together. Here we describe these pieces and their

+

+* This will be replaced by the TOC

+{:toc}

+

+## High-level View

+

+A *Stateful Functions* deployment consists of a set of **Apache Flink Stateful

Functions** processes and, optionally, various deployments that execute remote

functions.

+

+

+

+

+

+The Flink worker processes (TaskManagers) receive the events from the ingress

systems (Kafka, Kinesis, etc.) and route them to the target functions. They

invoke the functions, and route the resulting mesages to the next respective

target functions. Messages designated for egress are written to an egress

system (again, Kafka, Kinesis, ...).

+

+## Components

+

+The heavy lifting is done by the Apache Flink processes, which manage the

state, handle the messaging, and invoke the stateful functions.

+The Flink cluster consists typically of one master and multiple workers

(TaskManagers).

+

+

+

+

+

+In addition to the Apache Flink processes, a full deployment requires

[ZooKeeper](https://zookeeper.apache.org/) (for [master

failover](https://ci.apache.org/projects/flink/flink-docs-stable/ops/jobmanager_high_availability.html))

and bulk storage (S3, HDFS, NAS, GCS, Azure Blob Store, etc.) to store Flink's

[checkpoints](https://ci.apache.org/projects/flink/flink-docs-master/concepts/stateful-stream-processing.html#checkpointing).

In turn, the deployment requires no database, and Flink processes do not

require persistent volumes.

+

+## Logical Co-location, Physical Separation

+

+A core principle of many Stream Processors is that application logic and the

application state must be co-located. That approach is the basis for their

out-of-the box consistency. Stateful Function takes a unique approach to that

by *logically co-locating* state and compute, but allowing to *physically

separate* them.

+

+ - *Logical co-location:* Messaging, state access/updates and function

invocations are managed tightly together, in the same way as in Flink's

DataStream API. State is sharded by key, and messages are routed to the state

by key. There is a single writer per key at a time, also scheduling the

function invocations.

+

+ - *Physical separation:* Functions can be executed remotely, with message

and state access provided as part of the invocation request. This way,

functions can be managed independently, like stateless processes.

+

+

+## Deployment Styles for Functions

+

+The stateful functions themselves can be deployed in various ways that trade

off certain properties with each other: loose coupling and independent scaling

on the one hand with performance overhead on the other hand. Each module of

functions can be of a different kind, so some functions can run remote, while

others could run embedded.

+

+ Remote Functions

+

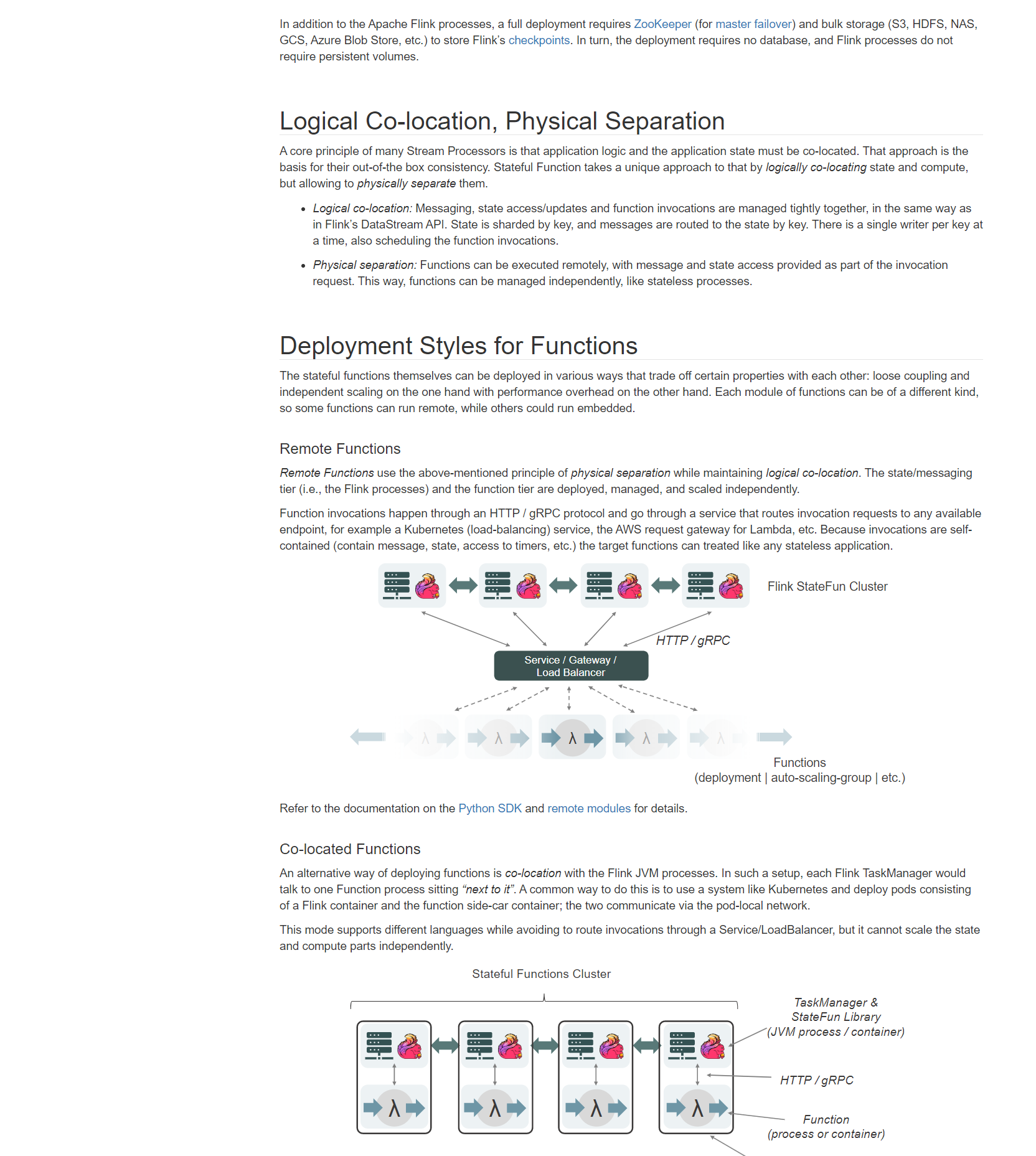

+*Remote Functions* use the above-mentioned principle of *physical separation*

while maintaining *logical co-location*. The state/messaging tier (i.e., the

Flink processes) and the function tier are deployed, managed, and scaled

independently.

+

+Function invocations happen through an HTTP / gRPC protocol and go through a

service that routes invocation requests to any available endpoint, for example

a Kubernetes (load-balancing) service, the AWS request gateway for Lambda, etc.

Because invocations are self-contained (contain message, state, access to

timers, etc.) the target functions can treated like any stateless application.

+

+

+

+

+

+

+Refer to the documentation on the [Python SDK]({{ site.baseurl

}}/sdk/python.html) and [remote modules]({{ site.baseurl

}}/sdk/modules.html#remote-module) for details.

+

+ Co-located Functions

+

+An alternative way of deploying functions is *co-location* with the Flink JVM

processes. In such a setup, each Flink TaskManager would talk to one Function

process sitting *"next to it"*. A common way to do this is to use a system like

Kubernetes and deploy pods consisting of a Flink container and the function

side-car container; the two communicate via the pod-local network.

+

+This mode supports different languages while avoiding to route invocations

through a Service/LoadBalancer, but it cannot scale the state and compute parts

independently.

+

+

+

+

+

+This style of deployment is similar to how Flink's Table API and API Beam's

portability layer and deploy execute non-JVM functions.

Review comment:

```suggestion

This style of deployment is similar to how Flink's Table API and API Beam's

portability

[GitHub] [flink] bowenli86 commented on a change in pull request #11538: [FLINK-16813][jdbc] JDBCInputFormat doesn't correctly map Short

bowenli86 commented on a change in pull request #11538: [FLINK-16813][jdbc]

JDBCInputFormat doesn't correctly map Short

URL: https://github.com/apache/flink/pull/11538#discussion_r403184113

##

File path: flink-core/src/main/java/org/apache/flink/util/StringUtils.java

##

@@ -139,8 +139,16 @@ public static String arrayToString(Object array) {

if (array instanceof long[]) {

return Arrays.toString((long[]) array);

}

- if (array instanceof Object[]) {

- return Arrays.toString((Object[]) array);

+ // for array of byte array

+ if (array instanceof byte[][]) {

Review comment:

Maybe it should be added later; I haven't seen a case for it yet.

We need `byte[][]` because PostgreSQL supports arrays of byte arrays.
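Why a `byte[][]` branch has to come before a generic `Object[]` check: a `byte[][]` is itself an `Object[]`, so `Arrays.toString((Object[]) array)` would print each inner array by identity (something like `[B@` followed by a hash code) instead of its contents. A minimal sketch of that ordering, assuming each inner array should be rendered like `Arrays.toString(byte[])`; the class name is hypothetical and this is not the code in this PR:

```java
import java.util.Arrays;
import java.util.StringJoiner;

// Hypothetical sketch; not Flink's StringUtils implementation.
public class ArrayToStringSketch {
    public static String arrayToString(Object array) {
        if (array == null) {
            throw new NullPointerException("array");
        }
        if (array instanceof byte[]) {
            return Arrays.toString((byte[]) array);
        }
        // Must precede the Object[] branch: byte[][] is also an instance of Object[].
        if (array instanceof byte[][]) {
            StringJoiner joiner = new StringJoiner(", ", "[", "]");
            for (byte[] inner : (byte[][]) array) {
                joiner.add(Arrays.toString(inner));
            }
            return joiner.toString();
        }
        if (array instanceof Object[]) {
            return Arrays.toString((Object[]) array);
        }
        throw new IllegalArgumentException("not an array: " + array.getClass().getName());
    }

    public static void main(String[] args) {
        byte[][] value = {{1, 2}, {3}};
        System.out.println(arrayToString(value)); // prints [[1, 2], [3]]
    }
}
```

For this particular case, `Arrays.deepToString((Object[]) array)` would produce the same output, since it expands nested primitive arrays element by element.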


[GitHub] [flink] flinkbot edited a comment on issue #11460: [FLINK-16655][FLINK-16657] Introduce embedded executor and use it for Web Submission

flinkbot edited a comment on issue #11460: [FLINK-16655][FLINK-16657] Introduce embedded executor and use it for Web Submission

URL: https://github.com/apache/flink/pull/11460#issuecomment-601593052

## CI report:

* 349f5d7bfd68016ba3595a17ff3a1533969581fb UNKNOWN
* 1ef9863ccbeddc51317ec90fa662fd10a797b908 UNKNOWN
* cb1fc7686309a1ad8a6278d060f51a24d74dbc00 Travis: [PENDING](https://travis-ci.com/github/flink-ci/flink/builds/158256732) Azure: [FAILURE](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7047)

Bot commands

The @flinkbot bot supports the following commands:

- `@flinkbot run travis` re-run the last Travis build
- `@flinkbot run azure` re-run the last Azure build

[GitHub] [flink] flinkbot edited a comment on issue #11427: [FLINK-15790][k8s] Make FlinkKubeClient and its implementations asynchronous

flinkbot edited a comment on issue #11427: [FLINK-15790][k8s] Make FlinkKubeClient and its implementations asynchronous

URL: https://github.com/apache/flink/pull/11427#issuecomment-599949839

## CI report:

* e5e11418358bf450d1cca543916bbd7d695375b1 UNKNOWN
* ad481f5d846621032feb21e409690eed5b114191 UNKNOWN
* 2a76c7689c032056a462a615c28f27f1361f1f0e Travis: [SUCCESS](https://travis-ci.com/github/flink-ci/flink/builds/158236401) Azure: [FAILURE](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7045)

Bot commands

The @flinkbot bot supports the following commands:

- `@flinkbot run travis` re-run the last Travis build
- `@flinkbot run azure` re-run the last Azure build

[jira] [Created] (FLINK-16978) Update flink-statefun README.md for Stateful Functions 2.0.

Marta Paes Moreira created FLINK-16978:

--

Summary: Update flink-statefun README.md for Stateful Functions 2.0.
Key: FLINK-16978
URL: https://issues.apache.org/jira/browse/FLINK-16978
Project: Flink
Issue Type: Task
Components: Stateful Functions
Reporter: Marta Paes Moreira
Assignee: Marta Paes Moreira

Updating the README in the repository to fit the changes of Stateful Functions 2.0. Will also update the README in the statefun-python-sdk directory.

--

This message was sent by Atlassian Jira (v8.3.4#803005)

[jira] [Comment Edited] (FLINK-9173) RestClient - Received response is abnormal

[

https://issues.apache.org/jira/browse/FLINK-9173?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17074738#comment-17074738

]

François Lacombe edited comment on FLINK-9173 at 4/3/20, 5:06 PM:

--

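This issue still occurs with Flink 1.10 and Spring Boot 2.2.5.

I had to deal with it recently, and colleagues of mine found this workaround:

The root cause is a wrong classloader selection due to a Spring Boot packaging flaw.

In my pom.xml, I replaced:

{code:xml}
<plugin>
  <groupId>org.springframework.boot</groupId>
  <artifactId>spring-boot-maven-plugin</artifactId>
</plugin>
{code}

with:

{code:xml}
<plugin>
  <groupId>org.apache.maven.plugins</groupId>
  <artifactId>maven-dependency-plugin</artifactId>
  <executions>
    <execution>
      <id>copy-dependencies</id>
      <phase>prepare-package</phase>
      <goals>
        <goal>copy-dependencies</goal>
      </goals>
      <configuration>
        <outputDirectory>${project.build.directory}/libs</outputDirectory>
      </configuration>
    </execution>
  </executions>
</plugin>
<plugin>
  <groupId>org.apache.maven.plugins</groupId>
  <artifactId>maven-jar-plugin</artifactId>
  <configuration>
    <archive>
      <manifest>
        <addClasspath>true</addClasspath>
        <classpathPrefix>libs/</classpathPrefix>
        <mainClass>org.baeldung.executable.ExecutableMavenJar</mainClass>
      </manifest>
    </archive>
  </configuration>
</plugin>
{code}

It produces a jar file with the libraries in a separate directory instead of a fat jar.

I hope this helps, and that it is eventually fixed in a future release.

All the best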

was (Author: flacombe):

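This issue still occurs with Flink 1.10 and Spring Boot 2.2.5.

I had to deal with it recently, and colleagues of mine found this workaround:

The root cause is a wrong classloader selection due to a Spring Boot packaging flaw.

In my pom.xml, I replaced:

{code:xml}
<plugin>
  <groupId>org.springframework.boot</groupId>
  <artifactId>spring-boot-maven-plugin</artifactId>
</plugin>
{code}

with:

{code:xml}
<plugin>
  <groupId>org.apache.maven.plugins</groupId>
  <artifactId>maven-dependency-plugin</artifactId>
  <executions>
    <execution>
      <id>copy-dependencies</id>
      <phase>prepare-package</phase>
      <goals>
        <goal>copy-dependencies</goal>
      </goals>
      <configuration>
        <outputDirectory>${project.build.directory}/libs</outputDirectory>
      </configuration>
    </execution>
  </executions>
</plugin>
<plugin>
  <groupId>org.apache.maven.plugins</groupId>
  <artifactId>maven-jar-plugin</artifactId>
  <configuration>
    <archive>
      <manifest>
        <addClasspath>true</addClasspath>
        <classpathPrefix>libs/</classpathPrefix>
        <mainClass>org.baeldung.executable.ExecutableMavenJar</mainClass>
      </manifest>
    </archive>
  </configuration>
</plugin>
{code}

It produces a jar file with the libraries in a separate directory instead of a fat jar.

I hope this helps, and that it is eventually fixed in a future release.

All the best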

> RestClient - Received response is abnormal

> --

>

> Key: FLINK-9173

> URL: https://issues.apache.org/jira/browse/FLINK-9173

> Project: Flink

> Issue Type: Bug

> Components: Runtime / Web Frontend

>Affects Versions: 1.5.0

> Environment: OS: CentOS 6.8

> JAVA: 1.8.0_161-b12

> maven-plugin: spring-boot-maven-plugin

> Spring-boot: 1.5.10.RELEASE

>Reporter: Bob Lau

>Priority: Major

> Attachments: image-2018-04-17-14-09-33-991.png

>

>

> The system prints the exception log as follows:

>

> {code:java}

> // code placeholder

> 09:07:20.755 tysc_log [Flink-RestClusterClient-IO-thread-4] ERROR

> o.a.flink.runtime.rest.RestClient - Received response was neither of the

> expected type ([simple type, class

> org.apache.flink.runtime.rest.messages.job.JobExecutionResultResponseBody])

> nor an error.

> Response=org.apache.flink.runtime.rest.RestClient$JsonResponse@2ac43968

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.exc.UnrecognizedPropertyException:

> Unrecognized field "status" (class

> org.apache.flink.runtime.rest.messages.ErrorResponseBody), not marked as

> ignorable (one known property: "errors"])

> at [Source: N/A; line: -1, column: -1] (through reference chain:

> org.apache.flink.runtime.rest.messages.ErrorResponseBody["status"])

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.exc.UnrecognizedPropertyException.from(UnrecognizedPropertyException.java:62)

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.DeserializationContext.reportUnknownProperty(DeserializationContext.java:851)

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.deser.std.StdDeserializer.handleUnknownProperty(StdDeserializer.java:1085)

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.deser.BeanDeserializerBase.handleUnknownProperty(BeanDeserializerBase.java:1392)

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.deser.BeanDeserializerBase.handleUnknownProperties(BeanDeserializerBase.java:1346)

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.deser.BeanDeserializer._deserializeUsingPropertyBased(BeanDeserializer.java:455)

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.deser.BeanDeserializerBase.deserializeFromObjectUsingNonDefault(BeanDeserializerBase.java:1127)

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.deser.BeanDeserializer.deserializeFromObject(BeanDeserializer.java:298)

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.deser.BeanDeserializer.deserialize(BeanDeserializer.java:133)

> at

>

[jira] [Commented] (FLINK-9173) RestClient - Received response is abnormal

[

https://issues.apache.org/jira/browse/FLINK-9173?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17074738#comment-17074738

]

François Lacombe commented on FLINK-9173:

-

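This issue still occurs with Flink 1.10 and Spring Boot 2.2.5.

I had to deal with it recently, and colleagues of mine found this workaround:

The root cause is a wrong classloader selection due to a Spring Boot packaging flaw.

In my pom.xml, I replaced:

{code:xml}
<plugin>
  <groupId>org.springframework.boot</groupId>
  <artifactId>spring-boot-maven-plugin</artifactId>
</plugin>
{code}

with:

{code:xml}
<plugin>
  <groupId>org.apache.maven.plugins</groupId>
  <artifactId>maven-dependency-plugin</artifactId>
  <executions>
    <execution>
      <id>copy-dependencies</id>
      <phase>prepare-package</phase>
      <goals>
        <goal>copy-dependencies</goal>
      </goals>
      <configuration>
        <outputDirectory>${project.build.directory}/libs</outputDirectory>
      </configuration>
    </execution>
  </executions>
</plugin>
<plugin>
  <groupId>org.apache.maven.plugins</groupId>
  <artifactId>maven-jar-plugin</artifactId>
  <configuration>
    <archive>
      <manifest>
        <addClasspath>true</addClasspath>
        <classpathPrefix>libs/</classpathPrefix>
        <mainClass>org.baeldung.executable.ExecutableMavenJar</mainClass>
      </manifest>
    </archive>
  </configuration>
</plugin>
{code}

It produces a jar file with the libraries in a separate directory instead of a fat jar.

I hope this helps, and that it is eventually fixed in a future release.

All the best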

> RestClient - Received response is abnormal

> --

>

> Key: FLINK-9173

> URL: https://issues.apache.org/jira/browse/FLINK-9173

> Project: Flink

> Issue Type: Bug

> Components: Runtime / Web Frontend

>Affects Versions: 1.5.0

> Environment: OS: CentOS 6.8

> JAVA: 1.8.0_161-b12

> maven-plugin: spring-boot-maven-plugin

> Spring-boot: 1.5.10.RELEASE

>Reporter: Bob Lau

>Priority: Major

> Attachments: image-2018-04-17-14-09-33-991.png

>

>

> The system prints the exception log as follows:

>

> {code:java}

> // code placeholder

> 09:07:20.755 tysc_log [Flink-RestClusterClient-IO-thread-4] ERROR

> o.a.flink.runtime.rest.RestClient - Received response was neither of the

> expected type ([simple type, class

> org.apache.flink.runtime.rest.messages.job.JobExecutionResultResponseBody])

> nor an error.

> Response=org.apache.flink.runtime.rest.RestClient$JsonResponse@2ac43968

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.exc.UnrecognizedPropertyException:

> Unrecognized field "status" (class

> org.apache.flink.runtime.rest.messages.ErrorResponseBody), not marked as

> ignorable (one known property: "errors"])

> at [Source: N/A; line: -1, column: -1] (through reference chain:

> org.apache.flink.runtime.rest.messages.ErrorResponseBody["status"])

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.exc.UnrecognizedPropertyException.from(UnrecognizedPropertyException.java:62)

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.DeserializationContext.reportUnknownProperty(DeserializationContext.java:851)

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.deser.std.StdDeserializer.handleUnknownProperty(StdDeserializer.java:1085)

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.deser.BeanDeserializerBase.handleUnknownProperty(BeanDeserializerBase.java:1392)

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.deser.BeanDeserializerBase.handleUnknownProperties(BeanDeserializerBase.java:1346)

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.deser.BeanDeserializer._deserializeUsingPropertyBased(BeanDeserializer.java:455)

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.deser.BeanDeserializerBase.deserializeFromObjectUsingNonDefault(BeanDeserializerBase.java:1127)

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.deser.BeanDeserializer.deserializeFromObject(BeanDeserializer.java:298)

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.deser.BeanDeserializer.deserialize(BeanDeserializer.java:133)

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.ObjectMapper._readValue(ObjectMapper.java:3779)

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.ObjectMapper.readValue(ObjectMapper.java:2050)

> at

> org.apache.flink.shaded.jackson2.com.fasterxml.jackson.databind.ObjectMapper.treeToValue(ObjectMapper.java:2547)

> at org.apache.flink.runtime.rest.RestClient.parseResponse(RestClient.java:225)

> at

> org.apache.flink.runtime.rest.RestClient.lambda$submitRequest$3(RestClient.java:210)

> at

> java.util.concurrent.CompletableFuture.uniCompose(CompletableFuture.java:952)

> at

> java.util.concurrent.CompletableFuture$UniCompose.tryFire(CompletableFuture.java:926)

> at

> java.util.concurrent.CompletableFuture$Completion.run(CompletableFuture.java:442)

> at

> java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

> at

> java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

> at java.lang.Thread.run(Thread.java:748)

> {code}

>

> In the development

[GitHub] [flink-statefun] StephanEwen opened a new pull request #95: [FLINK-16977][docs] Add docs for components and distributed architecture

StephanEwen opened a new pull request #95: [FLINK-16977][docs] Add docs for components and distributed architecture

URL: https://github.com/apache/flink-statefun/pull/95

This adds documentation for the components of a distributed deployment.

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at: us...@infra.apache.org

With regards,

Apache Git Services

[jira] [Updated] (FLINK-16977) Add Architecture docs for Stateful Functions

[ https://issues.apache.org/jira/browse/FLINK-16977?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

ASF GitHub Bot updated FLINK-16977:

---

Labels: pull-request-available (was: )

> Add Architecture docs for Stateful Functions
>
> Key: FLINK-16977
> URL: https://issues.apache.org/jira/browse/FLINK-16977
> Project: Flink
> Issue Type: Improvement
> Components: Stateful Functions
> Reporter: Stephan Ewen
> Assignee: Stephan Ewen
> Priority: Major
> Labels: pull-request-available
> Fix For: 2.0.0, 2.1.0
>
> We should add a section to the documentation describing the distributed setup
> and architecture of a Stateful Functions application.

--

This message was sent by Atlassian Jira (v8.3.4#803005)

[jira] [Created] (FLINK-16977) Add Architecture docs for Stateful Functions

Stephan Ewen created FLINK-16977:

Summary: Add Architecture docs for Stateful Functions
Key: FLINK-16977
URL: https://issues.apache.org/jira/browse/FLINK-16977
Project: Flink
Issue Type: Improvement
Components: Stateful Functions
Reporter: Stephan Ewen
Assignee: Stephan Ewen
Fix For: 2.1.0, 2.0.0

We should add a section to the documentation describing the distributed setup and architecture of a Stateful Functions application.

[GitHub] [flink] flinkbot edited a comment on issue #11460: [FLINK-16655][FLINK-16657] Introduce embedded executor and use it for Web Submission

flinkbot edited a comment on issue #11460: [FLINK-16655][FLINK-16657] Introduce embedded executor and use it for Web Submission

URL: https://github.com/apache/flink/pull/11460#issuecomment-601593052

## CI report:

* 349f5d7bfd68016ba3595a17ff3a1533969581fb UNKNOWN
* 1ef9863ccbeddc51317ec90fa662fd10a797b908 UNKNOWN
* f128770ed2a5816a1f460734f736fd07b273e896 Travis: [SUCCESS](https://travis-ci.com/github/flink-ci/flink/builds/158227253) Azure: [FAILURE](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7043)
* cb1fc7686309a1ad8a6278d060f51a24d74dbc00 Travis: [PENDING](https://travis-ci.com/github/flink-ci/flink/builds/158256732) Azure: [PENDING](https://dev.azure.com/rmetzger/5bd3ef0a-4359-41af-abca-811b04098d2e/_build/results?buildId=7047)

Bot commands

The @flinkbot bot supports the following commands:

- `@flinkbot run travis` re-run the last Travis build
- `@flinkbot run azure` re-run the last Azure build

[GitHub] [flink] tillrohrmann commented on a change in pull request #11353: [FLINK-16438][yarn] Make YarnResourceManager starts workers using WorkerResourceSpec requested by SlotManager

tillrohrmann commented on a change in pull request #11353: [FLINK-16438][yarn] Make YarnResourceManager starts workers using WorkerResourceSpec requested by SlotManager

URL: https://github.com/apache/flink/pull/11353#discussion_r403089669

##

File path:

flink-kubernetes/src/main/java/org/apache/flink/kubernetes/KubernetesResourceManager.java

##

@@ -232,57 +229,75 @@ private void recoverWorkerNodesFromPreviousAttempts() throws ResourceManagerExce

++currentMaxAttemptId);

}

- private void requestKubernetesPod() {

- numPendingPodRequests++;

+ private void requestKubernetesPod(WorkerResourceSpec workerResourceSpec) {

+ final KubernetesTaskManagerParameters parameters =

+ createKubernetesTaskManagerParameters(workerResourceSpec);

+

+ podWorkerResources.put(parameters.getPodName(), workerResourceSpec);

+ final int pendingWorkerNum = pendingWorkerCounter.increaseAndGet(workerResourceSpec);

log.info("Requesting new TaskManager pod with <{},{}>. Number pending requests {}.",

- defaultMemoryMB,

- defaultCpus,

- numPendingPodRequests);

+ parameters.getTaskManagerMemoryMB(),

+ parameters.getTaskManagerCPU(),

+ pendingWorkerNum);

+ log.info("TaskManager {} will be started with {}.", parameters.getPodName(), workerResourceSpec);

+

+ final KubernetesPod taskManagerPod =

+ KubernetesTaskManagerFactory.createTaskManagerComponent(parameters);

+ kubeClient.createTaskManagerPod(taskManagerPod);

+ }

+

+ private KubernetesTaskManagerParameters createKubernetesTaskManagerParameters(WorkerResourceSpec workerResourceSpec) {

+ // TODO: need to unset process/flink memory size from configuration if dynamic worker resource is activated

+ final TaskExecutorProcessSpec taskExecutorProcessSpec =

+ TaskExecutorProcessUtils.processSpecFromWorkerResourceSpec(flinkConfig, workerResourceSpec);

final String podName = String.format(

TASK_MANAGER_POD_FORMAT,

clusterId,

currentMaxAttemptId,

++currentMaxPodId);

+ final ContaineredTaskManagerParameters taskManagerParameters =

+ ContaineredTaskManagerParameters.create(flinkConfig, taskExecutorProcessSpec);

+

final String dynamicProperties =

BootstrapTools.getDynamicPropertiesAsString(flinkClientConfig, flinkConfig);

- final KubernetesTaskManagerParameters kubernetesTaskManagerParameters = new KubernetesTaskManagerParameters(

+ return new KubernetesTaskManagerParameters(

flinkConfig,

podName,

dynamicProperties,

taskManagerParameters);

-

- final KubernetesPod taskManagerPod =

- KubernetesTaskManagerFactory.createTaskManagerComponent(kubernetesTaskManagerParameters);

-

- log.info("TaskManager {} will be started with {}.", podName, taskExecutorProcessSpec);

- kubeClient.createTaskManagerPod(taskManagerPod);

}

/**

* Request new pod if pending pods cannot satisfy pending slot requests.

*/

- private void requestKubernetesPodIfRequired() {

- final int requiredTaskManagers = getNumberRequiredTaskManagers();

+ private void requestKubernetesPodIfRequired(WorkerResourceSpec workerResourceSpec) {

+ final int requiredTaskManagers = getPendingWorkerNums().get(workerResourceSpec);

+ final int pendingWorkerNum = pendingWorkerCounter.getNum(workerResourceSpec);

Review comment:

I think I would hide `pendingWorkerCounter` behind some methods which the base class provides.
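A minimal sketch of the suggestion, assuming a per-spec counter along the lines of the `pendingWorkerCounter` used in the diff. Every name here (`PendingWorkerCounter`, `ResourceManagerSketch`, `notifyNewWorkerRequested`, `notifyNewWorkerAllocated`, `getNumPendingWorkersFor`) is hypothetical and not Flink's API. The spec type is a generic parameter `K` standing in for `WorkerResourceSpec`, so the example compiles on its own.

```java
import java.util.HashMap;
import java.util.Map;

/** Hypothetical per-spec counter of pending workers (not Flink's actual class). */
final class PendingWorkerCounter<K> {
    private final Map<K, Integer> pendingWorkerNums = new HashMap<>();

    int getNum(K spec) {
        return pendingWorkerNums.getOrDefault(spec, 0);
    }

    int increaseAndGet(K spec) {
        // merge() inserts 1 for a new key, otherwise adds 1, and returns the new value.
        return pendingWorkerNums.merge(spec, 1, Integer::sum);
    }

    int decreaseAndGet(K spec) {
        Integer current = pendingWorkerNums.get(spec);
        if (current == null || current <= 0) {
            throw new IllegalStateException("No pending worker for " + spec);
        }
        int updated = current - 1;
        if (updated == 0) {
            pendingWorkerNums.remove(spec); // keep the map free of zero entries
        } else {
            pendingWorkerNums.put(spec, updated);
        }
        return updated;
    }
}

/**
 * Hypothetical base class that owns the counter; subclasses (e.g. a Kubernetes or
 * Yarn resource manager) only see intent-revealing methods, never the counter itself.
 */
abstract class ResourceManagerSketch<K> {
    private final PendingWorkerCounter<K> pendingWorkerCounter = new PendingWorkerCounter<>();

    protected final int notifyNewWorkerRequested(K spec) {
        return pendingWorkerCounter.increaseAndGet(spec);
    }

    protected final int notifyNewWorkerAllocated(K spec) {
        return pendingWorkerCounter.decreaseAndGet(spec);
    }

    protected final int getNumPendingWorkersFor(K spec) {
        return pendingWorkerCounter.getNum(spec);
    }
}
```

With this split, `requestKubernetesPod` would call something like `notifyNewWorkerRequested(workerResourceSpec)` instead of `pendingWorkerCounter.increaseAndGet(...)`, and the bookkeeping would live in one place for all resource managers.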


[GitHub] [flink] tillrohrmann commented on a change in pull request #11353: [FLINK-16438][yarn] Make YarnResourceManager starts workers using WorkerResourceSpec requested by SlotManager

tillrohrmann commented on a change in pull request #11353: [FLINK-16438][yarn] Make YarnResourceManager starts workers using WorkerResourceSpec requested by SlotManager

URL: https://github.com/apache/flink/pull/11353#discussion_r403128803

##

File path:

flink-yarn/src/main/java/org/apache/flink/yarn/YarnResourceManager.java

##

@@ -540,39 +571,41 @@ private FinalApplicationStatus getYarnStatus(ApplicationStatus status) {

/**

* Request new container if pending containers cannot satisfy pending slot requests.

*/

- private void requestYarnContainerIfRequired() {

- int requiredTaskManagers = getNumberRequiredTaskManagers();

-

- if (requiredTaskManagers > numPendingContainerRequests) {

- requestYarnContainer();

- }

+ private void requestYarnContainerIfRequired(Resource containerResource) {

+ getPendingWorkerNums().entrySet().stream()

+ .filter(entry ->

+ getContainerResource(entry.getKey()).equals(containerResource) &&

+ entry.getValue() > pendingWorkerCounter.getNum(entry.getKey()))

+ .findAny()

Review comment:

Shouldn't we do this for all instead of any?
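A sketch of what handling all matching specs (rather than stopping at `findAny()`) could look like. It collects every spec whose container resource matches and that still has more required workers than pending ones, so the caller could request a container for each. The helper `specsNeedingContainer` and its functional parameters are hypothetical stand-ins for `getPendingWorkerNums()`, `pendingWorkerCounter.getNum(...)` and `getContainerResource(...)` from the diff; whether to request one container per spec or the full shortfall is left open here.

```java
import java.util.List;
import java.util.Map;
import java.util.function.Function;
import java.util.function.ToIntFunction;
import java.util.stream.Collectors;

final class ContainerRequestSketch {

    private ContainerRequestSketch() {}

    /**
     * Returns every spec whose container resource equals {@code containerResource} and
     * that still needs more workers than are pending, instead of only the first match.
     */
    static <S, R> List<S> specsNeedingContainer(
            Map<S, Integer> requiredWorkers,    // stand-in for getPendingWorkerNums()
            ToIntFunction<S> pendingWorkers,    // stand-in for pendingWorkerCounter::getNum
            Function<S, R> containerResourceOf, // stand-in for getContainerResource
            R containerResource) {
        return requiredWorkers.entrySet().stream()
                .filter(entry -> containerResourceOf.apply(entry.getKey()).equals(containerResource)
                        && entry.getValue() > pendingWorkers.applyAsInt(entry.getKey()))
                .map(Map.Entry::getKey)
                .collect(Collectors.toList());
    }
}
```

Since several `WorkerResourceSpec`s can map to the same Yarn `Resource`, a single completed container could then trigger requests for every spec that still falls short, which appears to be what the question is getting at.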


[GitHub] [flink] tillrohrmann commented on a change in pull request #11353: [FLINK-16438][yarn] Make YarnResourceManager starts workers using WorkerResourceSpec requested by SlotManager

tillrohrmann commented on a change in pull request #11353: [FLINK-16438][yarn] Make YarnResourceManager starts workers using WorkerResourceSpec requested by SlotManager

URL: https://github.com/apache/flink/pull/11353#discussion_r403115740

##

File path:

flink-yarn/src/test/java/org/apache/flink/yarn/TestingContainerStatus.java

##

@@ -0,0 +1,86 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one

+ * or more contributor license agreements. See the NOTICE file

+ * distributed with this work for additional information

+ * regarding copyright ownership. The ASF licenses this file

+ * to you under the Apache License, Version 2.0 (the

+ * "License"); you may not use this file except in compliance

+ * with the License. You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+

+package org.apache.flink.yarn;

+

+import org.apache.hadoop.yarn.api.records.ContainerId;

+import org.apache.hadoop.yarn.api.records.ContainerState;

+import org.apache.hadoop.yarn.api.records.ContainerStatus;

+

+/**

+ * A {@link ContainerStatus} implementation for testing.

+ */

+class TestingContainerStatus extends ContainerStatus {

+

+ private final ContainerId containerId;

+ private final ContainerState containerState;

+ private final String diagnostics;

+ private final int exitStatus;

+